How Community Feedback Shapes User Behavior


Authors: Justin Cheng, Cristian Danescu-Niculescu-Mizil, Jure Leskovec

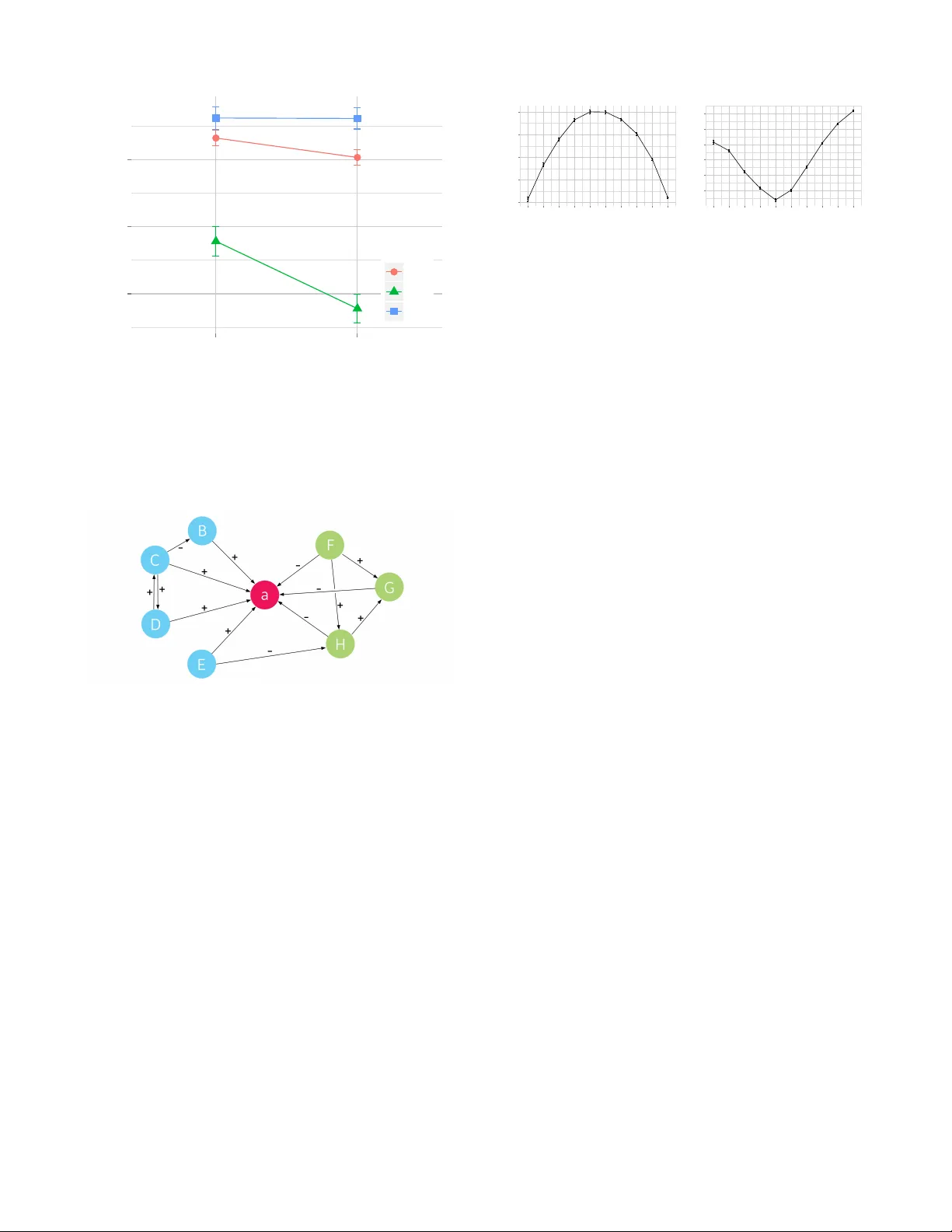

Justin Cheng∗, Cristian Danescu-Niculescu-Mizil†, Jure Leskovec∗
∗Stanford University, †Max Planck Institute SWS
jcccf|jure@cs.stanford.edu, cristian@mpi-sws.org

Abstract

Social media systems rely on user feedback and rating mechanisms for personalization, ranking, and content filtering. However, when users evaluate content contributed by fellow users (e.g., by liking a post or voting on a comment), these evaluations create complex social feedback effects. This paper investigates how ratings on a piece of content affect its author's future behavior. By studying four large comment-based news communities, we find that negative feedback leads to significant behavioral changes that are detrimental to the community. Not only do authors of negatively evaluated content contribute more, but their future posts are also of lower quality, and are perceived by the community as such. Moreover, these authors are more likely to subsequently evaluate their fellow users negatively, percolating these effects through the community. In contrast, positive feedback does not carry similar effects: it neither encourages rewarded authors to write more nor improves the quality of their posts. Interestingly, the authors that receive no feedback are most likely to leave a community. Furthermore, a structural analysis of the voter network reveals that evaluations polarize the community the most when positive and negative votes are equally split.

Introduction

The ability of users to rate content and provide feedback is a defining characteristic of today's social media systems. These ratings enable the discovery of high-quality and trending content, as well as personalized content ranking, filtering, and recommendation.
However, when these ratings apply to content generated by fellow users (helpfulness ratings of product reviews, likes on Facebook posts, or up-votes on news comments or forum posts), evaluations also become a means of social interaction. This can create social feedback loops that affect the behavior of the author whose content was evaluated, as well as the entire community.

Online rating and evaluation systems have been extensively researched in the past. The focus has primarily been on predicting user-generated ratings (Kim et al. 2006; Ghose and Ipeirotis 2007; Otterbacher 2009; Anderson et al. 2012b) and on understanding their effects at the community level (Danescu-Niculescu-Mizil et al. 2009; Anderson et al. 2012a; Muchnik, Aral, and Taylor 2013; Sipos, Ghosh, and Joachims 2014). However, little attention has been dedicated to studying the effects that ratings have on the behavior of the author whose content is being evaluated.

Ideally, feedback would lead users to behave in ways that benefit the community. Indeed, if positive ratings act as "reward" stimuli and negative ratings act as "punishment" stimuli, the operant conditioning framework from behavioral psychology (Skinner 1938) predicts that community feedback should guide authors to generate better content in the future, and that punished authors will contribute less than rewarded authors. However, despite being one of the fundamental frameworks in behavioral psychology, there is limited empirical evidence of operant conditioning effects on humans¹ (Baron, Perone, and Galizio 1991). Moreover, it remains unclear whether community feedback in complex online social systems brings the intuitive beneficial effects predicted by this theory.

Copyright © 2014, Association for the Advancement of Artificial Intelligence (www.aaai.org). All rights reserved.
In this work we develop a methodology for quantifying and comparing the effects of rewards and punishments on multiple facets of the author's future behavior in the community, and relate these effects to the broader theoretical framework of operant conditioning. In particular, we seek to understand whether community feedback regulates the quality and quantity of a user's future contributions in a way that benefits the community.

We applied our methodology to four large online news communities for which we have complete article commenting and comment voting data (about 140 million votes on 42 million comments). We find that community feedback does not appear to push user behavior in the community-beneficial direction that the operant conditioning framework predicts. Instead, community feedback is likely to perpetuate undesired behavior. In particular, punished authors actually write worse² in subsequent posts, while rewarded authors do not improve significantly.

¹ The framework was developed and tested mainly through experiments on animal behavior (e.g., rats and pigeons); the lack of human experimentation can be attributed to methodological and ethical issues, especially with regard to punishment stimuli (e.g., electric shocks).

² One important subtlety here is that the observed quality of a post (i.e., the proportion of up-votes) is not entirely a direct consequence of the actual textual quality of the post, but is also affected by community bias effects. We account for this through experiments specifically designed to disentangle these two factors.

In spite of this detrimental effect on content quality, it is conceivable that community feedback still helps regulate quantity by selectively discouraging contributions from punished authors and encouraging rewarded authors to contribute more. Surprisingly, we find that negative feedback actually leads to more (and more frequent) future contributions than positive feedback does.³ Taken together, our findings suggest that the content evaluation mechanisms currently implemented in social media systems have effects contrary to the interest of the community.

³ We note that these observations cannot simply be attributed to flame wars, as they spread over a much larger time scale.

To further understand differences in the social mechanisms causing these behavior changes, we conducted a structural analysis of the voter network around popular posts. We discover two things. First, positive and negative feedback each tend to come from communities of users. Second, the voting network is most polarized when votes are split equally between up- and down-votes.

These observations underscore the asymmetry between the effects of positive and negative feedback: the detrimental impact of punishments is much more noticeable than the beneficial impact of rewards. This asymmetry echoes the negativity effect studied extensively in the social psychology literature: negative events have a greater impact on individuals than positive events of the same intensity (Kanouse and Hanson 1972; Baumeister et al. 2001).

To summarize our contributions, in this paper we

- validate through a crowdsourcing experiment that the proportion of up-votes is a robust metric for measuring and aggregating community feedback,
- introduce a framework based on propensity score matching for quantifying the effects of community feedback on a user's post quality,
- discover that the effects of community evaluations are generally detrimental to the community, contradicting the intuition brought up by operant conditioning theory, and
- reveal an important asymmetry between the mechanisms underlying negative and positive feedback.

Our results lead to a better understanding of how users react to peer evaluations, and point to ways in which online rating mechanisms can be improved to better serve individuals, as well as entire communities.

Further Related Work

Our contributions come in the context of an extensive literature examining social media voting systems. One major research direction is concerned with predicting the helpfulness ratings of product reviews from textual and social factors (Ghose and Ipeirotis 2007; Liu et al. 2007; Otterbacher 2009; Tsur and Rappoport 2009; Lu et al. 2010; Mudambi and Schuff 2010). A related direction seeks to understand the underlying social dynamics (Chen, Dhanasobhon, and Smith 2008; Danescu-Niculescu-Mizil et al. 2009; Wu and Huberman 2010; Sipos, Ghosh, and Joachims 2014). The mechanisms driving user voting behavior and the related community effects have also been studied in other contexts:

- Q&A sites (Anderson et al. 2012a),
- Wikipedia (Burke and Kraut 2008; Leskovec, Huttenlocher, and Kleinberg 2010; Anderson et al. 2012b),
- YouTube (Siersdorfer et al. 2010),
- social news aggregation sites (Lampe and Resnick 2004; Lampe and Johnston 2005; Muchnik, Aral, and Taylor 2013), and
- online multiplayer games (Shores et al. 2014).

Our work adds an important dimension to this general line of research by providing a framework for analyzing the effects votes have on the author of the evaluated content.

The setting considered in this paper, that of comments on news sites and blogs, has also been used to study other social phenomena such as controversy (Chen and Berger 2013), political polarization (Park et al. 2011; Balasubramanyan et al. 2012), and community formation (Gómez, Kaltenbrunner, and López 2008; Gonzalez-Bailon, Kaltenbrunner, and Banchs 2010).
News commenting systems have also been analyzed from a community design perspective (Mishne and Glance 2006; Gilbert, Bergstrom, and Karahalios 2009; Diakopoulos and Naaman 2011). Part of this work focuses on which types of articles are likely to attract a large volume of user comments (Tsagkias, Weerkamp, and de Rijke 2009; Yano and Smith 2010). In contrast, our analysis focuses on the effects of voting on the behavior of the author whose content is being evaluated.

Our findings here reveal that negative feedback does not lead to a decrease in undesired user behavior, but rather exacerbates it. Moderating undesired user behavior is difficult, and anti-social behavior in social media systems is a growing concern (Heymann, Koutrika, and Garcia-Molina 2007). Related work has examined:

- review spamming (Lim et al. 2010; Mukherjee, Liu, and Glance 2012; Ott, Cardie, and Hancock 2012),
- trolling (Shachaf and Hara 2010),
- social deviance (Shores et al. 2014), and
- online harassment (Yin et al. 2009).

Measuring Community Feedback

We aim to develop a methodology for studying the subtle effects of community-provided feedback on the behavior of content authors in realistic large-scale settings. To this end, we start by describing a longitudinal dataset where millions of users explicitly evaluate each other's content. Following that, we discuss a crowdsourcing experiment that helps establish a robust aggregate measure of community feedback.

Dataset description

We investigate four online news communities: CNN.com (general news), Breitbart.com (political news), IGN.com (computer gaming), and Allkpop.com (Korean entertainment). We selected them for their diversity and their large size. On all four sites, community members post comments on (news) articles, and other users can up-vote or down-vote each comment.
We refer to a comment as a post, and to all posts relating to the same article as a thread.

| Community | # Threads | # Posts | # Votes (Prop. Up-votes) | # Registered Users | Prop. Up-votes $Q_1$ | Prop. Up-votes $Q_3$ |
|---|---|---|---|---|---|---|
| CNN | 200,576 | 26,552,104 | 58,088,478 (0.82) | 1,111,755 | 0.73 | 1.00 |
| IGN | 682,870 | 7,967,414 | 40,302,961 (0.84) | 289,576 | 0.69 | 1.00 |
| Breitbart | 376,526 | 4,376,369 | 18,559,688 (0.94) | 214,129 | 0.96 | 1.00 |
| allkpop | 35,620 | 3,901,487 | 20,306,076 (0.95) | 198,922 | 0.84 | 1.00 |

Table 1: Summary statistics of the four communities analyzed in this study. The lower ($Q_1$) and upper ($Q_3$) quartiles for the proportion of up-votes only take into account posts with at least ten votes.

From the commenting service provider, we obtained a complete timestamped trace of user activity from March 2012 to August 2013.⁴ We restrict our analysis to users who joined a given community after March 2012, so that we can track users' behavior from their "birth" onwards. As shown in Table 1, the data includes 1.2 million threads with 42 million comments, and 140 million votes from 1.8 million different users. In all communities around 50% of posts receive at least one vote, and 10% receive at least 10 votes.

Measures of Community Feedback

Given a post with some number of up- and down-votes, we next require a measure that aggregates the post's votes into a single number. This number should correspond to the magnitude of reward or punishment received by the author of the post. However, it is not a priori clear how to design such a measure. For example, consider a post that received $P$ up-votes and $N$ down-votes. How can we combine $P$ and $N$ into a single number that best reflects the overall evaluation of the community? There are several natural candidates for such a measure:

- the total number of up-votes received by a post ($P$),
- the proportion of up-votes ($P/(P+N)$), or
- the difference between the numbers of up- and down-votes ($P-N$).
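The three candidates can be computed directly from a post's up-vote count $P$ and down-vote count $N$. Below is a minimal Python sketch. The function name `candidate_measures` is hypothetical. Returning `None` for a post with no votes is also an assumption, since the proportion is undefined there. The example pairs are the ones used in the discussion of drawbacks that follows.

```python
def candidate_measures(P: int, N: int) -> dict:
    """Aggregate P up-votes and N down-votes into the three candidate measures."""
    total = P + N
    return {
        "P": P,                                   # total number of up-votes
        "P-N": P - N,                             # difference of up- and down-votes
        "P/(P+N)": P / total if total else None,  # proportion of up-votes; undefined without votes
    }


# Each pair ties on one measure while differing on the others.
for first, second in [((10, 0), (10, 20)), ((4, 1), (40, 10)), ((5, 0), (50, 45))]:
    print(first, candidate_measures(*first), second, candidate_measures(*second))
```

Each measure collapses the two counts differently. That is why every one of them can rank two quite different posts as equal.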
However, each of these measures has a particular drawback:

- $P$ does not consider the number of down-votes (e.g., 10+/0- vs. 10+/20-);
- $P/(P+N)$ does not differentiate between absolute numbers of votes received (e.g., 4+/1- vs. 40+/10-); and
- $P-N$ does not consider the difference relative to the total number of votes (e.g., 5+/0- vs. 50+/45-).

To understand what a person's "utility function" for votes is, we conducted an Amazon Mechanical Turk experiment that asked users how they would perceive receiving a given number of up- and down-votes. On a seven-point Likert scale, workers rated how they would feel about receiving a certain number of up- and down-votes on a comment that they made. The numbers of up- and down-votes were varied between 0 and 20, and each worker responded to 10 randomly selected pairs of up- and down-vote counts. We then took the average response as the mean rating for each pair. In total, 66 workers labeled 4,302 pairs, with each pair obtaining at least 9 independent evaluations.

⁴ This is prior to an interface change that hides down-votes.

Figure 1: People perceive votes received as proportions, rather than as absolute numbers. Higher ratings correspond to more positive perceptions. (Heatmap of mean rating, color scale 1 to 6, over # positive votes and # negative votes, each from 0 to 20.)

We find that the proportion of up-votes ($P/(P+N)$) is a very good measure of how positively a user perceives a certain number of up- and down-votes. In Figure 1, we notice a strong "diagonal" effect. This suggests that increasing the total number of votes received, while keeping the proportion of up-votes constant, does not significantly alter a user's perception. In Table 2 we evaluate how different measures correlate with human ratings. $P/(P+N)$ explains almost all the variance and achieves the highest $R^2$, at 0.92.
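The comparison behind Table 2 amounts to fitting the mean crowd rating against one measure at a time and reading off $R^2$. The sketch below assumes an ordinary least-squares line with a single predictor, for which $R^2$ equals the squared Pearson correlation. `r_squared` is a hypothetical helper. The ratings are synthetic values built to depend only on the proportion of up-votes; they are not the paper's crowdsourced data.

```python
from statistics import mean


def r_squared(x, y):
    """R^2 of the least-squares line y ~ a + b*x (single predictor)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy * sxy / (sxx * syy)


# Synthetic (P, N) grid with 0..20 votes each, skipping the empty post,
# and a made-up rating that depends only on the proportion of up-votes.
pairs = [(P, N) for P in range(21) for N in range(21) if P + N > 0]
ratings = [1 + 5 * P / (P + N) for P, N in pairs]

measures = {
    "P": [P for P, N in pairs],
    "P-N": [P - N for P, N in pairs],
    "P/(P+N)": [P / (P + N) for P, N in pairs],
}
scores = {name: r_squared(x, ratings) for name, x in measures.items()}
print(scores)
```

With these synthetic ratings, $P/(P+N)$ reaches $R^2 \approx 1$ by construction, while $P$ and $P-N$ fall short. On real ratings, the gaps are the ones reported in Table 2.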
Other, more complex measures could yield a slightly higher $R^2$. We nevertheless use $p = P/(P+N)$ because it is both intuitive and fairly robust.

Thus, for the rest of the paper we use the proportion of up-votes (denoted as $p$) as the measure of the overall feedback of the community. We consider a post to be positively evaluated if its proportion of up-votes is in the upper quartile $Q_3$ (75th percentile) of all posts. We consider it negatively evaluated if the proportion is in the lower quartile $Q_1$ (25th percentile). This lets us account for differences in community voting norms: in some communities (e.g., Breitbart), a post may be perceived as bad even with a high fraction of up-votes. As the proportion of up-votes is skewed in most communities, at the 75th percentile all votes already tend to be up-votes (i.e., feedback is 100% positive). Further, to obtain sufficient precision of community feedback, we require that these posts have at least ten votes.

| Measure | $R^2$ | F-statistic | p-value |
|---|---|---|---|
| $P$ | 0.410 | $F(439) = 306.1$ | $< 10^{-16}$ |
| $P-N$ | 0.879 | $F(438) = 1603$ | $< 10^{-16}$ |
| $P/(P+N)$ | 0.920 | $F(438) = 5012$ | $< 10^{-16}$ |

Table 2: The proportion of up-votes $p = P/(P+N)$ best captures a person's perception of up-voting and down-voting, according to a crowdsourcing experiment.

Unless specified otherwise, all reported observations are consistent across all four communities we studied. For brevity, the figures that follow are reported only for CNN, with error bars indicating 95% confidence intervals.

Post Quality

The operant conditioning framework posits that an individual's behavior is guided by the consequences of their past behavior (Skinner 1938). In our setting, this would predict that community feedback leads users to produce better content. Specifically, we expect that users punished via negative feedback would either improve the quality of their posts or contribute less.
Similarly, users rewarded with positive feedback would write higher-quality posts and contribute more often.

In this section, we focus on understanding the effects of positive and negative feedback on the quality of one's posts. We start by simply measuring post quality as the proportion of up-votes a given post received, denoted by $p$.

Figure 2 plots the proportion of up-votes $p$ as a function of time for users who received a positive, negative or neutral evaluation. We compare the proportion of up-votes of posts written before receiving the positive/negative evaluation with that of posts written after the evaluation. Interestingly, there is no significant difference for positively evaluated users (i.e., there is no significant difference between $p$ before and after the evaluation event).

In the case of a negative evaluation, however, punishment leads to worse community feedback in the future. More precisely, the difference in the proportion of up-votes received by a user before and after the feedback event is statistically significant at $p < 0.05$. This means that negative feedback seems to have exactly the opposite effect from the one predicted by the operant conditioning framework (Skinner 1938). Rather than feedback leading to better posts, Figure 2 suggests that punished users actually get worse, not better, after receiving a negative evaluation.

Textual vs. Community Effects

One important subtlety is that the observed proportion of up-votes is not entirely a direct consequence of the actual textual quality of the post. It could also be due to a community's biased perception of a user.
In particular, the drop in the proportion of up-votes after negative evaluations (Figure 2) could be explained by two non-mutually exclusive phenomena:

1. after negative evaluations, the user writes posts that are of lower quality than before (textual quality), or
2. users known to produce low-quality posts automatically receive lower evaluations in the future, regardless of the actual textual quality of the post (community bias).

We disentangle these two effects through a methodology inspired by propensity matching, a statistical technique used to support causality claims in observational studies (Rosenbaum and Rubin 1983).

Figure 2: Proportion of up-votes before/after a user receives a positive ("Pos"), negative ("Neg") or neutral ("Avg") evaluation. After a positive evaluation, future evaluations of an author's posts do not differ significantly from before. However, after a negative evaluation, an author receives worse evaluations than before. (Plot: proportion of up-votes, 0.5 to 0.8, before vs. after the evaluation, one line per group.)

First, we build a model that predicts a post's quality ($q$) by training a binomial regression model using only textual features extracted from the post's content. That is, $q$ is the predicted proportion of a post's up-votes. This way we are able to model the relationship between the content of a post and the post's quality. This model was trained on half the posts in the community, and used to predict $q$ for the other half (mean $R = 0.22$).

We validate this model using human-labeled text quality scores obtained for a sample of posts ($n = 171$). Using CrowdFlower, a crowdsourcing platform, workers were asked to label posts as either "good" or "bad". A "good" post was defined as something that a user would want to read, or that contributes to the discussion; a "bad" post is the opposite.
Workers were only shown the text of individual posts, with no information about the post's author. Ten workers independently labeled each post, and these labels were aggregated into a "quality score" $q'$, the proportion of "good" labels. The correlation of $q'$ with $q$ ($R^2 = 0.25$) is more than double its correlation with $p$ ($R^2 = 0.12$). This suggests that $q$ is a reasonable approximation of text quality. The low correlation of $q'$ with $p$ also suggests that a community effect influences the value of $p$.

The model was trained to predict a post's proportion of up-votes $p$, but it only encodes text features (bigrams). The predicted proportion of up-votes $q$ therefore corresponds to the quality of the post's text. In other words, changes in $q$ can be attributed to changes in the text, rather than to how a community perceives the user.⁵ Thus, this model lets us assess the textual quality $q$ of a post. The difference between the true and predicted proportion of up-votes ($p - q$) lets us quantify community bias.

⁵ Even though the predicted proportion of up-votes $q$ can be biased by user and community effects, this bias affects all posts equally (since the model is only trained on textual features). In fact, we find the model error, $p - q$, to be uniformly distributed across all values of $p$.

Figure 3: To measure the effects of positive and negative evaluations on post quality, we match pairs of posts of similar textual quality $q(a_0) \approx q(b_0)$ written by two users $A$ and $B$ with similar post histories. $A$'s post $a_0$ received a positive evaluation, and $B$'s post $b_0$ received a negative evaluation. We then compute the change in quality in the subsequent three posts: $q(a_{[1,3]}) - q(a_0)$ and $q(b_{[1,3]}) - q(b_0)$.
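The text-only quality model can be sketched as a binomial (logistic) regression on bigram counts. It is fitted so that the predicted probability $q$ matches each post's observed up-vote fraction, with each post weighted by its number of votes. This is a minimal, dependency-free sketch under stated assumptions. The paper does not specify its tokenizer, regularization, or optimizer, so the following are all hypothetical choices:

- the whitespace tokenizer,
- the L2 penalty,
- the learning rate, and
- the plain gradient-ascent loop.

The sketch also omits the half/half train and predict split described above. The final line prints the residual $p - q$ used to quantify community bias.

```python
import math


def bigrams(text):
    """Count lowercase whitespace-token bigrams (a hypothetical tokenizer)."""
    tokens = text.lower().split()
    counts = {}
    for pair in zip(tokens, tokens[1:]):
        counts[pair] = counts.get(pair, 0) + 1
    return counts


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def logit_q(model, feats):
    weights, bias = model
    return bias + sum(weights.get(k, 0.0) * v for k, v in feats.items())


def fit_text_model(posts, epochs=500, lr=0.5, l2=1e-3):
    """posts: list of (text, up, down). Gradient ascent on the L2-penalized
    binomial log-likelihood sum(up*log q + down*log(1 - q)), where
    logit(q) = bias + weights . bigrams(text)."""
    feats = [bigrams(text) for text, _, _ in posts]
    total_votes = sum(up + down for _, up, down in posts)
    weights, bias = {}, 0.0
    for _ in range(epochs):
        grad_w, grad_b = {}, 0.0
        for x, (_, up, down) in zip(feats, posts):
            q = sigmoid(logit_q((weights, bias), x))
            err = up - (up + down) * q  # derivative of the log-likelihood w.r.t. the logit
            grad_b += err
            for k, v in x.items():
                grad_w[k] = grad_w.get(k, 0.0) + err * v
        bias += lr * grad_b / total_votes
        for k, g in grad_w.items():
            w = weights.get(k, 0.0)
            weights[k] = w + lr * (g / total_votes - l2 * w)
    return weights, bias


def predict_q(model, text):
    """Predicted proportion of up-votes from the text alone."""
    return sigmoid(logit_q(model, bigrams(text)))


# Toy training data (made up): (text, up-votes, down-votes).
train = [
    ("thanks for this great point", 18, 2),
    ("great point well said", 15, 1),
    ("you are an idiot", 2, 12),
    ("what an idiot thing to say", 3, 15),
]
model = fit_text_model(train)
q = predict_q(model, "such a great point")
p = 0.4  # hypothetical observed proportion of up-votes for that post
print(f"q = {q:.2f}, community-bias residual p - q = {p - q:+.2f}")
```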
Using the textual regression model, we match pairs of users $(A, B)$ that contributed posts of similar quality but received very different evaluations: $A$'s post was positively evaluated, while $B$'s post was negatively evaluated. This experimental design can be interpreted as selecting pairs of users that appear indistinguishable before the "treatment" (i.e., evaluation) event, but where one was punished while the other was rewarded. The goal then is to measure the effect of the treatment on the users' future behavior. As the two users "looked the same" before the treatment, any change in their future behavior can be attributed to the effect of the treatment (i.e., the act of receiving a positive or a negative evaluation).

Figure 3 summarizes our experimental setting. Here, $A$'s post $a_0$ received a positive evaluation and $B$'s post $b_0$ received a negative evaluation, and we ensure that these posts are of the same textual quality, $|q(a_0) - q(b_0)| \le 10^{-4}$. We further control for the number of words written by the user, as well as for the user's past behavior (Table 3). Past behavior covers both the number of posts written before the evaluation was received and the mean proportion of up-votes received on past posts. To establish the effect of reward and punishment, we then examine the next three posts of $A$ ($a_{[1,3]}$) and the next three posts of $B$ ($b_{[1,3]}$).

It is reasonable to assume that when a user contributes the first post $a_1$ after being punished or rewarded, the feedback on her previous post $a_0$ has already been received. Depending on the community, in roughly 70 to 80% of the cases the feedback on post $a_0$ at the time $a_1$ is posted is within 1% of $a_0$'s final feedback $p(a_0)$.

How feedback affects a user's post quality. To understand whether evaluations result in a change of text quality, we compare the post quality for users $A$ and $B$ before and after they receive a punishment or a reward.
Importantly, we do not compare the actual fraction $p$ of up-votes received by the posts, but rather the fraction $q$ predicted by the text-only regression model. By design, both $A$ and $B$ write posts of similar quality $q(a_0) \approx q(b_0)$ at time $t = 0$. We then compute the quality of the three posts following $t = 0$ as the average predicted fraction of up-votes $q(a_{[1,3]})$ of posts $a_1, a_2, a_3$. Finally, we compare the post quality before and after the treatment event by computing the difference $\Delta_a = q(a_{[1,3]}) - q(a_0)$ for the rewarded user $A$. Similarly, we compute $\Delta_b = q(b_{[1,3]}) - q(b_0)$ for the punished user $B$.

If the positive (respectively negative) feedback has no effect and post quality does not change, the difference $\Delta_a$ (respectively $\Delta_b$) should be close to zero. If subsequent post quality changes, this quantity should differ from zero. Moreover, the sign of $\Delta_a$ (respectively $\Delta_b$) gives the direction of change. A positive value means that the post quality of positively (respectively negatively) evaluated users improves. A negative value means that post quality drops after the evaluation.

Using a Mann-Whitney U test, we find that across all communities, the quality of text significantly changes after the evaluation. In particular, post quality significantly drops after a negative evaluation ($\Delta_b < 0$ at significance level $p < 0.05$ and effect size $r > 0.06$). This effect is similar both within and across threads (average $r = 0.19$ and $0.18$, respectively). While the effect of negative feedback is consistent across all communities, the effect of positive feedback is inconsistent and not significant.

These results are interesting because they establish the effect of reward and punishment on the quality of a user's future posts.
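The before/after comparison and its significance test take only a few lines. This is a minimal sketch:

- `delta` follows the definition of $\Delta$ above;
- `mann_whitney_u` is a textbook two-sided Mann-Whitney U test using the normal approximation, with average ranks for ties and no tie correction in the variance;
- the effect size is $r = |z|/\sqrt{n_1 + n_2}$, a common convention.

Both function names are hypothetical. Which samples the paper feeds into the test is also an assumption here. One natural use is to compare a list of $\Delta_a$ values against a list of $\Delta_b$ values.

```python
import math
from statistics import mean


def delta(q_evaluated, q_following):
    """Delta = mean predicted quality of the next three posts minus the
    predicted quality of the evaluated post, e.g. q(a_[1,3]) - q(a_0)."""
    return mean(q_following[:3]) - q_evaluated


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation).
    Returns (U for x, z, p-value, effect size r = |z| / sqrt(n1 + n2))."""
    n1, n2 = len(x), len(y)
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):  # assign the average rank to each run of tied values
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1
        i = j + 1
    rank_sum_x = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u = rank_sum_x - n1 * (n1 + 1) / 2
    z = (u - n1 * n2 / 2) / math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    p = math.erfc(abs(z) / math.sqrt(2))
    return u, z, p, abs(z) / math.sqrt(n1 + n2)
```

For real analyses, an established implementation such as SciPy's `mannwhitneyu` handles tie correction and exact small-sample p-values. The hand-rolled version only shows what the statistic measures.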
Surprisingly, our findings are in a sense exactly the opposite of what we would expect under the operant conditioning framework. Rather than evaluations improving the user's post quality and steering the community towards higher-quality discussions, we find that negative evaluations actually decrease post quality. There is no clear trend for positive evaluations having an effect either way.

How feedback affects community perception. We also aim to quantify whether evaluations change the community's perception of the evaluated user (community bias). That is, do users that generally contribute good posts "undeservedly" receive more up-votes for posts that may actually not be that good? And similarly, do users that tend to contribute bad posts receive more down-votes even for posts that are in fact good?

To measure the community perception effect we use the experimental setup already illustrated in Figure 3. As before, we first match users $(A, B)$ on the predicted fraction of up-votes $q$. We then measure the residual difference between the true and the predicted fraction of up-votes, $p(a_{[1,3]}) - q(a_{[1,3]})$, after user $A$'s treatment (analogously for user $B$). Systematic non-zero residual differences are suggestive of community bias effects: posts get evaluated differently from how they should be based solely on their textual quality. Specifically, if the community evaluates a user's posts higher than expected, the residual difference is positive. If a user's posts are evaluated lower than expected, the residual difference is negative.
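The matched-pair design of Figure 3 and the residual used to measure community perception can be sketched as follows. This is greedy one-to-one caliper matching directly on the covariates listed above, nearest in $q$; it is not an estimated propensity score. The $10^{-4}$ tolerance on $q$ comes from the text. The calipers on the control variables, the class `EvaluatedPost`, and all function names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

Q_TOLERANCE = 1e-4  # |q(a_0) - q(b_0)| <= 1e-4, as stated in the text
# Hypothetical calipers for the control variables; the text does not give them.
CALIPERS = {"n_words": 5, "n_past_posts": 50, "past_p": 0.02}


@dataclass
class EvaluatedPost:
    user: str
    q0: float          # predicted textual quality of the evaluated post
    n_words: int       # length of the evaluated post
    n_past_posts: int  # posts written before the evaluation
    past_p: float      # mean proportion of up-votes on the user's past posts


def comparable(a, b):
    """True if two evaluated posts fall within all matching tolerances."""
    return abs(a.q0 - b.q0) <= Q_TOLERANCE and all(
        abs(getattr(a, field) - getattr(b, field)) <= caliper
        for field, caliper in CALIPERS.items()
    )


def match_pairs(positive, negative):
    """Greedy 1:1 matching: each positively evaluated post is paired with the
    unused, comparable, negatively evaluated post closest in q0."""
    used, pairs = set(), []
    for a in positive:
        candidates = [i for i, b in enumerate(negative) if i not in used and comparable(a, b)]
        if candidates:
            best = min(candidates, key=lambda i: abs(a.q0 - negative[i].q0))
            used.add(best)
            pairs.append((a, negative[best]))
    return pairs


def community_bias(p_following, q_following):
    """Mean residual p - q over the next three posts: > 0 means the community
    rates the author above what the text alone predicts, < 0 below."""
    return mean(p - q for p, q in zip(p_following[:3], q_following[:3]))
```

Greedy matching is order-dependent and discards unmatched users. This is consistent with the shrinking sample sizes in Table 3, though the paper's exact procedure may differ.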
Across all communities, posts written by a user after receiving negative evaluations are perceived as worse than the text-only model predicts. This discrepancy is much larger than the one observed after positive evaluations ($p < 10^{-16}$, $r > 0.03$). The effect is also stronger within threads (average $r = 0.57$) than across threads (average $r = 0.13$). For instance, after a negative evaluation on CNN, posts written by the punished author in the same thread are evaluated on average 48 percentage points lower than expected from their text alone.

Note that the true magnitude of these effects could be smaller than reported: a different set of textual features could yield a more accurate classifier, and hence smaller residuals. Nevertheless, the experiment on post quality presented earlier does not suffer from these potential classifier deficiencies.

| Statistic | Before matching: Positive ($A$) | Before matching: Negative ($B$) | After matching: Positive ($A$) | After matching: Negative ($B$) |
|---|---|---|---|---|
| $n$ | 72,463 | 39,788 | 35,640 | 35,640 |
| Textual quality $q(a_0)$ / $q(b_0)$ | 0.885 | 0.810 | 0.828 | 0.828 |
| Number of words | 42.1 | 34.5 | 29.8 | 30.0 |
| Number of past posts | 507 | 735 | 596 | 607 |
| Prop. positive votes on past posts | 0.833 | 0.650 | 0.669 | 0.668 |

Table 3: To obtain pairs of positively and negatively evaluated users that were as similar as possible, we matched these user pairs on post quality and the user's past behavior. On the CNN dataset, the mean values of these statistics were significantly closer after matching. Similar results were also obtained for other communities.

Figure 4: The effect of evaluations on user behavior. We observe that both community perception and text quality are significantly worse after a negative evaluation than after a positive evaluation (in spite of the initial post and user matching). Significant differences are indicated with stars, and the scale of the effects has been adjusted for visibility.

Summary.
Figure 4 summarizes our observations regarding the effects of community feedback on the textual and perceived quality of the author's future posts. We plot the textual quality and proportion of up-votes before and after the evaluation event ("treatment"). Before the evaluation, the textual quality of the posts of the two users $A$ and $B$ is indistinguishable, i.e., $q(a_0) \approx q(b_0)$. After the evaluation event, the textual quality of the positively evaluated user $A$'s posts remains at the same level, i.e., $q(a_0) \approx q(a_{[1,3]})$. In contrast, the quality of the negatively evaluated user $B$'s posts drops significantly, i.e., $q(b_0) > q(b_{[1,3]})$. We conclude that community feedback does not improve the quality of discussions, contrary to the prediction of operant conditioning theory. Instead, punished authors actually write worse in subsequent posts, while rewarded authors do not improve significantly.

We also find suggestive evidence of community bias effects, creating a discrepancy between the perceived quality of a user's posts and their textual quality. This perception bias appears to mostly affect negatively evaluated users: the perceived quality of their subsequent posts $p(b_{[1,3]})$ is much lower than their textual quality $q(b_{[1,3]})$, as illustrated in Figure 4. Perhaps surprisingly, we find that community perception is an important factor in determining the proportion of up-votes a post receives.

Overall, we notice an important asymmetry between the effects of positive and negative feedback: the detrimental effects of punishments are much more noticeable than the beneficial effects of rewards. This asymmetry echoes the negativity effect studied extensively in the social psychology literature (Kanouse and Hanson 1972; Baumeister et al. 2001).
User Activity
Despite the detrimental effect of community feedback on an author's content quality, community feedback could still have a beneficial effect by selectively regulating quantity, i.e., discouraging contributions from punished authors and encouraging rewarded authors to contribute more. To establish whether this is indeed the case, we again use a methodology based on propensity score matching, where our variable of interest is now posting frequency. As before, we pair users that wrote posts of the same textual quality (according to the textual regression model), ensuring that one post was positively evaluated and the other negatively evaluated. We further control for the variable of interest by considering matching pairs of users that had the same posting frequency before the evaluation. This methodology allows us to compare the effect of positive and negative feedback on the author's future posting frequency.

Figure 5 plots the ratio between inverse posting frequency after the treatment and inverse posting frequency before the treatment, where inverse frequency is measured as the average time between posts in a window of three posts after/before the treatment. Contrary to what operant conditioning would predict, we find that negative evaluations encourage users to post more frequently. Comparing the change in frequency of the punished users with that of the rewarded users, we also see that negative evaluations have a greater effect than positive evaluations ($p < 10^{-15}$, $r > 0.18$).

Figure 5: Negative evaluations increase posting frequency more than positive evaluations; in contrast, users that received no feedback slow down. (Values below 100 correspond to an increase in posting frequency after the evaluation; a lower value corresponds to a larger increase.) [Plot: ratio of time between posts after/before the evaluation, for users with no feedback, positive feedback, and negative feedback.]
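The pairing step and the after/before ratio plotted in Figure 5 can be sketched as below. This is a simplified illustration under stated assumptions, not the authors' pipeline: instead of estimating a propensity score, it performs a greedy 1:1 caliper match directly on the two covariates named above (textual quality of the evaluated post and prior posting frequency), and it reads "a window of three posts" as the three inter-post gaps ending at, and starting from, the evaluated post. The `User` class, the tolerances `q_tol` and `gap_tol`, and the timestamps are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class User:
    name: str
    q: float          # textual quality of the evaluated post (text-only model)
    times: tuple      # chronologically sorted post timestamps (hypothetical units: hours)
    event_idx: int    # position of the evaluated post in `times`


def mean_gap(times):
    """Average time between consecutive posts."""
    if len(times) < 2:
        raise ValueError("need at least two timestamps")
    return mean(b - a for a, b in zip(times, times[1:]))


def gap_before(user, k=3):
    """Mean of the k inter-post gaps ending at the evaluated post."""
    i = user.event_idx
    if i < k:
        raise ValueError("not enough posts before the evaluation")
    return mean_gap(user.times[i - k:i + 1])


def gap_ratio(user, k=3):
    """100 * (mean gap after) / (mean gap before); below 100 means posting sped up."""
    i = user.event_idx
    if i + k >= len(user.times):
        raise ValueError("not enough posts after the evaluation")
    return 100 * mean_gap(user.times[i:i + k + 1]) / gap_before(user, k)


def match_pairs(positive, negative, q_tol=0.02, gap_tol=0.1):
    """Greedy 1:1 caliper matching on textual quality and prior posting frequency."""
    pairs, unused = [], list(negative)
    for a in positive:
        candidates = [
            b for b in unused
            if abs(a.q - b.q) <= q_tol
            and abs(gap_before(a) - gap_before(b)) <= gap_tol * gap_before(a)
        ]
        if candidates:
            b = min(candidates, key=lambda c: abs(a.q - c.q))
            unused.remove(b)
            pairs.append((a, b))
    return pairs


# Hypothetical users; each has three gaps before and after the evaluated post (index 3).
positive = [User("A", 0.83, (0, 10, 20, 30, 40, 48, 56), 3)]
negative = [
    User("B", 0.82, (0, 10, 20, 30, 36, 42, 48), 3),
    User("C", 0.60, (0, 5, 10, 15, 20, 25, 30), 3),  # too different from A to be matched
]

for a, b in match_pairs(positive, negative):
    print(a.name, round(gap_ratio(a), 1), "vs", b.name, round(gap_ratio(b), 1))
```

The caliper trades match quality against the number of retained pairs. A full propensity score approach (Rosenbaum and Rubin 1983) would instead model the probability of receiving a negative evaluation from the covariates and match on that single score.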
When we examine the users who received no feedback on their posts, we find that they actually slow down. In particular, users who received no feedback write about 15% less frequently, while those who received positive feedback write 20% more frequently than before, and those who received negative feedback write 30% more frequently than before. These effects are also statistically significant, and consistent across all four communities.

The same general trend is true when considering the impact of evaluations on user retention (Figure 6): punished users ("Neg") are more likely than rewarded users ("Pos") to stay in the community and contribute more posts ($\chi^2 > 6.8$, $p < 0.01$); also, both types of users are less likely to leave the community than the control group ("Avg"). Note, however, that the nature of this experiment does not allow one to control for the value of interest (retention rate) before the evaluation.

The fact that both types of evaluations encourage users to post more frequently suggests that providing negative feedback to "bad" users might not be a good strategy for combating undesired behavior in a community. Given that users who receive no feedback post less frequently, a potentially effective strategy could be to ignore undesired behavior and provide no feedback at all.

Figure 6: Rewarded users ("Pos") are likely to leave the community sooner than punished users ("Neg"). Average users ("Avg") are most likely to leave. For a given number of subsequent posts x, the retention rate is calculated as the fraction of users that posted at least x more posts. [Plot: retention rate vs. number of subsequent posts, for Avg, Neg, and Pos users.]

Voting Behavior
Our findings so far suggest that negative feedback worsens the quality of future interactions in the community as punished users post more frequently. As we will discuss next, these detrimental effects are exacerbated by the changes in the voting behavior of evaluated users.

Tit-for-tat. As users receive feedback, both their posting and voting behavior are affected. When comparing the fraction of up-votes received by a user with the fraction of up-votes given by a user, we find a strong linear correlation (Figure 7). This suggests that user behavior is largely "tit-for-tat": if a user is negatively/positively evaluated, she in turn will negatively/positively evaluate others. However, we also note an interesting deviation from the general trend. In particular, very negatively evaluated people actually respond in a positive direction: the proportion of up-votes they give is higher than the proportion of up-votes they receive. On the other hand, users receiving many up-votes appear to be more "critical", as they evaluate others more negatively. For example, people receiving a fraction of up-votes of 75% tend to give up-votes only 67% of the time.

Figure 7: Users seem to engage in "tit-for-tat": the more up-votes a user receives, the more likely she is to give up-votes to content written by others. [Plot: proportion of up-votes received from others vs. proportion of up-votes given to others.]

Nevertheless, this overall perspective does not directly distinguish between the effects of positive and negative evaluations on voting behavior. To achieve that, Figure 8 compares the change in voting behavior following a positive or negative evaluation. We find that negatively-evaluated users are more likely to down-vote others in the week following an evaluation than in the week before it ($p < 10^{-13}$, $r > 0.23$). In contrast, we observe no significant effect for the positively evaluated users.

Overall, punished users not only change their posting behavior, but also their voting behavior by becoming more likely to evaluate their fellow users negatively. Such behavior can percolate the detrimental effects of negative feedback through the community.

Figure 8: If a user is evaluated negatively, then she also tends to vote on others more negatively in the week following the evaluation than in the week before the evaluation. However, we observe no statistically significant effect on users who receive a positive evaluation. [Plot: proportion of up-votes given, before vs. after the evaluation, for Avg, Neg, and Pos users.]

Figure 9: An example voting network G around a post a. Users B to H up-vote (+) or down-vote (-) a, and may also have voted on each other. The graph induced on the four users who up-voted a (B, C, D, E) forms $G^+$ (blue nodes), and that induced on the three users who down-voted a (F, G, H) forms $G^-$ (green nodes).

Figure 10: (a) The difference between the observed fraction of balanced triangles and that obtained when edge signs are shuffled at random. The peak at 50% suggests that when votes are split evenly, these voters belong to different groups. (b) The difference between the observed number of edges between the up-voters and negative voters and the number obtained under random rewiring. The lowest point occurs when the votes are split evenly. [Panels: (a) Balance; (b) Edges across the camps. Both plot the difference from random against the proportion of positive votes.]

Organization of Voting Networks
Having observed the effects community feedback has on user behavior, we now turn our attention to structural signatures of positive and negative feedback.
In particular, we aim to study the structure of the social network around a post and to understand (1) when evaluations most polarize this social network, and (2) whether positive/negative feedback comes from independent people or from tight groups.

Experimental setup. We define a social network around each post, a voting network, as illustrated in Figure 9. For a given post a, we generate a graph $G = (V, E)$, with V being the set of users who voted on a. An edge (B, C) exists between voters B and C if B voted on C in the 30 days prior to when the post a was created. Edges are signed: positive for up-votes, negative for down-votes. We examine voting networks for posts which obtained at least ten votes, and have at least one up-vote and one down-vote.

When is the voting network most polarized? And, to what degree do coalitions or factions form in a post's voting network? As our networks are signed, we apply structural balance theory (Cartwright and Harary 1956), and examine the fraction of balanced triads in our network. A triangle is balanced if it contains three positive edges (a set of three "friends"), or two negative edges and a positive edge (a pair of "friends" with a common "enemy"). The more balanced the network, the stronger the separation of the network into coalitions: nodes inside a coalition up-vote each other, and down-vote the rest of the network.

Figure 10a plots the fraction of balanced triangles in a post's voting network as a function of the proportion of up-votes that post receives (normalized by randomly shuffling the edge signs in the original voting network). When votes on a post are split about evenly between up- and down-votes, the network is most balanced. This means that when votes are split evenly, the coalitions in the network are most pronounced, and thus the network is most polarized. This observation holds in all four studied communities.
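The experimental setup and the balance measure of Figure 10a can be sketched as follows. This is a minimal illustration under assumptions, not the authors' code: voter-on-voter votes are treated as undirected edges when counting triads, if two voters voted on each other several times within the window the latest vote sets the edge sign (the paper does not say how repeated votes are combined), times are measured in days, and the baseline is obtained by shuffling edge signs as described above. All function names and the toy data are hypothetical.

```python
import itertools
import random
from collections import defaultdict
from statistics import mean


def eligible(post_vote_signs, min_votes=10):
    """Keep posts with at least `min_votes` votes, including at least one up- and one down-vote."""
    return len(post_vote_signs) >= min_votes and 1 in post_vote_signs and -1 in post_vote_signs


def build_voting_network(voters, user_votes, post_time, window_days=30):
    """Signed, undirected edges among the voters of a post.

    user_votes: (voter, author, sign, day) tuples, sign = +1 (up) or -1 (down).
    An edge exists if one voter voted on the other within `window_days` before the post.
    Assumption: if a pair has several votes in the window, the latest one sets the sign.
    """
    edges = {}
    for voter, author, sign, day in sorted(user_votes, key=lambda v: v[3]):
        if (voter in voters and author in voters and voter != author
                and post_time - window_days <= day < post_time):
            edges[frozenset((voter, author))] = sign
    return edges


def balanced_fraction(edges):
    """Fraction of triangles with a positive sign product (+++ or +--)."""
    adj = defaultdict(dict)
    for pair, sign in edges.items():
        u, v = tuple(pair)
        adj[u][v] = adj[v][u] = sign
    balanced = total = 0
    for u, v, w in itertools.combinations(sorted(adj), 3):
        if v in adj[u] and w in adj[u] and w in adj[v]:
            total += 1
            balanced += adj[u][v] * adj[u][w] * adj[v][w] > 0
    return balanced / total if total else None


def balance_vs_shuffled(edges, trials=200, seed=0):
    """Observed balanced fraction minus its mean when edge signs are shuffled at random."""
    observed = balanced_fraction(edges)
    if observed is None:
        return None
    rng = random.Random(seed)
    pairs, signs = list(edges), list(edges.values())
    null = []
    for _ in range(trials):
        rng.shuffle(signs)
        null.append(balanced_fraction(dict(zip(pairs, signs))))
    return observed - mean(null)


# Hypothetical toy post: two camps that up-vote inside and down-vote across.
up_camp, down_camp = ["B", "C", "D", "E"], ["F", "G", "H"]
votes = [
    (u, v, 1 if (u in up_camp) == (v in up_camp) else -1, 10.0)
    for u, v in itertools.combinations(up_camp + down_camp, 2)
]
edges = build_voting_network(set(up_camp + down_camp), votes, post_time=20.0)
print(balanced_fraction(edges), balance_vs_shuffled(edges) > 0)
```

The toy post has only seven votes, so `eligible` would filter it out; it is used here purely to show a perfectly split network, where every triangle is balanced and the shuffled baseline falls below the observed value.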
We also compare the number of edges between the up-voters and down-voters (i.e., edges crossing between $G^+$ and $G^-$) to that of a randomly rewired network (a random network with the same degree distribution as the original). Figure 10b plots the normalized number of edges between the two camps as a function of the proportion of up-votes the post received. We make two interesting observations. First, the number of edges crossing the positive and negative camps is lowest when votes are split about evenly. Thus, when votes are split evenly, not only is the network most balanced, but also the number of edges crossing the camps is smallest. Second, the number of edges is always below what occurs in randomly rewired networks (i.e., the Z-scores are negative). This suggests that the camp of up-voters and the camp of down-voters are generally not voting on each other. These effects are qualitatively similar in all four communities.

Where does feedback come from? Having observed the formation of coalitions, we are next interested in their relative size. Is feedback generally given by isolated individuals, or by tight groups of like-minded users? We find interesting differences between communities. In general-interest news sites like CNN, up-votes on positively-evaluated posts are likely to come from multiple groups: the size of the largest connected component decreases as the proportion of up-votes increases. In other words, negative voters on a post are likely to have voted on each other. However, on special-interest web sites like Breitbart, IGN, and Allkpop, the size of the largest connected component also peaks when votes are almost all positive. Thus, up-voters in these communities are also likely to have voted on each other, suggesting that they come from tight groups.
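The comparison in Figure 10b and the largest-connected-component measure can be sketched as follows. This is a minimal illustration under assumptions, not the authors' code: edges are treated as unsigned and undirected; the degree-preserving null model is built with double-edge swaps, which is one common way to rewire a network while keeping its degree distribution (the paper does not specify its rewiring procedure); and the Z-score is computed against the mean and standard deviation of the rewired counts. All function names and the toy network are hypothetical.

```python
import random
from statistics import mean, pstdev


def crossing_edges(edges, up_voters):
    """Number of edges with one endpoint in G+ (up-voters) and the other in G- (down-voters)."""
    return sum(1 for u, v in edges if (u in up_voters) != (v in up_voters))


def rewire(edges, n_swaps, rng):
    """Degree-preserving rewiring with double-edge swaps (a,b),(c,d) -> (a,d),(c,b).

    Swaps that would create a self-loop or a duplicate edge are skipped.
    """
    edge_list = [tuple(e) for e in edges]
    if len(edge_list) < 2:
        return edge_list
    present = {frozenset(e) for e in edge_list}
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(edge_list)), 2)
        (a, b), (c, d) = edge_list[i], edge_list[j]
        if rng.random() < 0.5:
            c, d = d, c  # choose one of the two possible swaps at random
        if len({a, b, c, d}) < 4 or {frozenset((a, d)), frozenset((c, b))} & present:
            continue
        present -= {frozenset((a, b)), frozenset((c, d))}
        present |= {frozenset((a, d)), frozenset((c, b))}
        edge_list[i], edge_list[j] = (a, d), (c, b)
    return edge_list


def crossing_z_score(edges, up_voters, trials=500, seed=0):
    """Z-score of the observed crossing-edge count against degree-preserving rewirings."""
    rng = random.Random(seed)
    observed = crossing_edges(edges, up_voters)
    null = [crossing_edges(rewire(edges, 10 * len(edges), rng), up_voters)
            for _ in range(trials)]
    sd = pstdev(null)
    return (observed - mean(null)) / sd if sd > 0 else 0.0


def largest_component_fraction(edges, group):
    """Largest connected component of the subgraph induced on `group`, as a fraction of it."""
    adj = {u: set() for u in group}
    for u, v in edges:
        if u in adj and v in adj:
            adj[u].add(v)
            adj[v].add(u)
    seen, best = set(), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            node = stack.pop()
            size += 1
            for nb in adj[node] - seen:
                seen.add(nb)
                stack.append(nb)
        best = max(best, size)
    return best / len(group) if group else 0.0


# Hypothetical toy network shaped like Figure 9: two camps joined by a single edge.
up, down = {"B", "C", "D", "E"}, {"F", "G", "H"}
edges = [("B", "C"), ("C", "D"), ("D", "E"), ("E", "B"),
         ("F", "G"), ("G", "H"), ("E", "F")]
print(crossing_edges(edges, up), crossing_z_score(edges, up) < 0,
      largest_component_fraction(edges, up), largest_component_fraction(edges, down))
```

A negative Z-score here corresponds to the observation that the two camps rarely vote on each other, and a largest-component fraction near 1 indicates that a camp's voters form a tight group.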
Discussion
Rating, voting and other feedback mechanisms are heavily used in today's social media systems, allowing users to express opinions about the content they are consuming. In this paper, we contribute to the understanding of how feedback mechanisms are used in online systems, and how they affect the underlying communities. We start from the observation that when users evaluate content contributed by a fellow user (e.g., by liking a post or voting on a comment) they also implicitly evaluate the author of that content, and that this can lead to complex social effects.

In contrast to previous work, we analyze effects of feedback at the user level, and validate our results on four large, diverse comment-based news communities. We find that negative feedback leads to significant changes in the author's behavior, which are much more salient than the effects of positive feedback. These effects are detrimental to the community: authors of negatively evaluated content are encouraged to post more, and their future posts are also of lower quality. Moreover, these punished authors are more likely to later evaluate their fellow users negatively, percolating these undesired effects through the community.

We relate our empirical findings to the operant conditioning theory from behavioral psychology, which explains the underlying mechanisms behind reinforcement learning, and find that the observed behaviors deviate significantly from what the theory predicts. There are several potential factors that could explain this deviation. Feedback in online settings is potentially very different from that in controlled laboratory settings. For example, receiving down-votes is likely a much less severe punishment than receiving electric shocks.
Also, feedback effects might be stronger if a user trusts the authority providing feedback; e.g., site administrators down-voting an author's posts could have a greater influence on the author's behavior than peer users doing the same.

Crucial to the arguments made in this paper is the ability of the machine learning regression model to estimate the textual quality of a post. Estimating text quality is a very hard machine learning problem, and although we validate our model of text quality by comparing its output with human labels, the goodness of fit we obtain can be further improved. Improving the model could allow for finer-grained analysis and reveal even subtler relations between community feedback and post quality.

Localized back-and-forth arguments between people (i.e., flame wars) could also potentially affect our results. However, we rule these out as the sole explanation, since the observed behavioral changes carry on across different threads, in contrast to flame wars, which are usually contained within threads. Moreover, we find that the set of users providing feedback changes drastically across different posts of the same user. This suggests that users do not usually create "enemies" that continue to follow them across threads and down-vote any posts they write. Future work is needed to understand the scale and effects of such behavior.

There are many interesting directions for future research. While we focused only on one type of feedback (votes coming from peer users), there are several other types that would be interesting to consider, such as feedback provided through textual comments. Comparing voting communities that support both up- and down-votes with those that only allow for up-votes (or likes) may also reveal subtle differences in user behavior. Another open question is how the relative authority of the feedback provider affects the author's response and change in behavior.
Further, building machine learning models that could identify which types of users improve after receiving feedback and which types worsen could allow for targeted intervention. Also, we have mostly ignored the content of the discussion, as well as the context in which the post appears. Performing deeper linguistic analysis and understanding the role of the context may reveal more complex interactions that occur in online communities.

More broadly, online feedback also relates to the key sociological issues of norm enforcement and socialization (Parsons 1951; Cialdini and Trost 1998, inter alia), i.e., what role peer feedback plays in directing users towards the behavior that the community expects, and how a user's reaction to feedback can be interpreted as her desire to conform to (or depart from) such community norms. For example, our results suggest that negative feedback could be a potential trigger of deviant behavior (i.e., behavior that goes against established norms, such as trolling in online communities). Here, surveys and controlled experiments can complement our existing data-driven methodology and shed light on these issues.

Acknowledgments
We thank Disqus for the data used in our experiments and our anonymous reviewers for their helpful comments. This work has been supported in part by a Stanford Graduate Fellowship, an Alfred P. Sloan Fellowship, a Microsoft Faculty Fellowship and NSF IIS-1159679.

References
Anderson, A.; Huttenlocher, D.; Kleinberg, J.; and Leskovec, J. 2012a. Discovering Value from Community Activity on Focused Question Answering Sites: A Case Study of Stack Overflow. In Proc. KDD.
Anderson, A.; Huttenlocher, D.; Kleinberg, J.; and Leskovec, J. 2012b. Effects of user similarity in social media. In Proc. WSDM.
Balasubramanyan, R.; Cohen, W. W.; Pierce, D.; and Redlawsk, D. P. 2012. Modeling Polarizing Topics: When Do Different Political Communities Respond Differently to the Same News? In Proc. ICWSM.
Baron, A.; Perone, M.; and Galizio, M. 1991. Analyzing the reinforcement process at the human level: can application and behavioristic interpretation replace laboratory research? Behavioral Analysis.
Baumeister, R. F.; Bratslavsky, E.; Finkenauer, C.; and Vohs, K. D. 2001. Bad is stronger than good. Rev. of General Psychology.
Burke, M., and Kraut, R. 2008. Mopping up: modeling wikipedia promotion decisions. In Proc. CSCW.
Cartwright, D., and Harary, F. 1956. Structural balance: a generalization of heider's theory. Psychological Rev.
Chen, Z., and Berger, J. 2013. When, Why, and How Controversy Causes Conversation. J. of Consumer Research.
Chen, P.-Y.; Dhanasobhon, S.; and Smith, M. D. 2008. All Reviews are Not Created Equal: The Disaggregate Impact of Reviews and Reviewers at Amazon.Com. SSRN eLibrary.
Cialdini, R. B., and Trost, M. R. 1998. Social influence: Social norms, conformity and compliance. McGraw-Hill.
Danescu-Niculescu-Mizil, C.; Kossinets, G.; Kleinberg, J.; and Lee, L. 2009. How opinions are received by online communities: A case study on Amazon.com helpfulness votes. In Proc. WWW.
Diakopoulos, N., and Naaman, M. 2011. Towards quality discourse in online news comments. In Proc. CSCW.
Ghose, A., and Ipeirotis, P. G. 2007. Designing Novel Review Ranking Systems: Predicting the Usefulness and Impact of Reviews. Proc. ACM EC.
Gilbert, E.; Bergstrom, T.; and Karahalios, K. 2009. Blogs are Echo Chambers: Blogs are Echo Chambers. In Proc. HICSS.
Gómez, V.; Kaltenbrunner, A.; and López, V. 2008. Statistical analysis of the social network and discussion threads in Slashdot. In Proc. WWW.
Gonzalez-Bailon, S.; Kaltenbrunner, A.; and Banchs, R. E. 2010. The structure of political discussion networks: A model for the analysis of online deliberation. J. of Information Technology.
Heymann, P.; Koutrika, G.; and Garcia-Molina, H. 2007. Fighting Spam on Social Web Sites: A Survey of Approaches and Future Challenges. IEEE Internet Computing.
Kanouse, D. E., and Hanson, L. R. 1972. Negativity in Evaluations. General Learning Press.
Kim, S.-M.; Pantel, P.; Chklovski, T.; and Pennacchiotti, M. 2006. Automatically assessing review helpfulness. Proc. EMNLP.
Lampe, C., and Johnston, E. 2005. Follow the (Slash) Dot: Effects of Feedback on New Members in an Online Community. In Proc. ACM SIGGROUP.
Lampe, C., and Resnick, P. 2004. Slash(dot) and burn: Distributed moderation in a large online conversation space. Proc. CHI.
Leskovec, J.; Huttenlocher, D.; and Kleinberg, J. 2010. Governance in Social Media: A case study of the Wikipedia promotion process. In Proc. ICWSM.
Lim, E.-P.; Nguyen, V.-A.; Jindal, N.; Liu, B.; and Lauw, H. W. 2010. Detecting product review spammers using rating behaviors. Proc. CIKM.
Liu, J.; Cao, Y.; Lin, C.-Y.; Huang, Y.; and Zhou, M. 2007. Low-quality product review detection in opinion summarization. Proc. EMNLP-CoNLL.
Lu, Y.; Tsaparas, P.; Ntoulas, A.; and Polanyi, L. 2010. Exploiting social context for review quality prediction. In Proc. WWW.
Mishne, G., and Glance, N. 2006. Leave a reply: An analysis of weblog comments. In WWE.
Muchnik, L.; Aral, S.; and Taylor, S. 2013. Social Influence Bias: A Randomized Experiment. Science 341.
Mudambi, S. M., and Schuff, D. 2010. What makes a helpful online review? A study of consumer reviews on Amazon.com. MIS Quarterly.
Mukherjee, A.; Liu, B.; and Glance, N. 2012. Spotting Fake Reviewer Groups in Consumer Reviews. In Proc. WWW.
Ott, M.; Cardie, C.; and Hancock, J. 2012. Estimating the Prevalence of Deception in Online Review Communities. In Proc. WWW.
Otterbacher, J. 2009. 'Helpfulness' in online communities: a measure of message quality. Proc. CHI.
Park, S.; Ko, M.; Kim, J.; Liu, Y.; and Song, J. 2011. The politics of comments: predicting political orientation of news stories with commenters' sentiment patterns. In Proc. CSCW.
Parsons, T. 1951. The social system. London: Routledge.
Rosenbaum, P. R., and Rubin, D. B. 1983. The central role of the propensity score in observational studies for causal effects. Biometrika.
Shachaf, P., and Hara, N. 2010. Beyond vandalism: Wikipedia trolls. J. of Information Science.
Shores, K.; He, Y.; Swanenburg, K. L.; Kraut, R.; and Riedl, J. 2014. The Identification of Deviance and its Impact on Retention in a Multiplayer Game. In Proc. CSCW.
Siersdorfer, S.; Chelaru, S.; Nejdl, W.; and San Pedro, J. 2010. How useful are your comments?: Analyzing and predicting YouTube comments and comment ratings. In Proc. WWW.
Sipos, R.; Ghosh, A.; and Joachims, T. 2014. Was This Review Helpful to You? It Depends! Context and Voting Patterns in Online Content. In Proc. WWW.
Skinner, B. F. 1938. The behavior of organisms: an experimental analysis. Appleton-Century.
Tsagkias, M.; Weerkamp, W.; and de Rijke, M. 2009. Predicting the volume of comments on online news stories. In Proc. CIKM.
Tsur, O., and Rappoport, A. 2009. Revrank: A fully unsupervised algorithm for selecting the most helpful book reviews. Proc. ICWSM.
Wu, F., and Huberman, B. A. 2010. Opinion formation under costly expression. ACM Trans. on Intelligent Systems and Technology.
Yano, T., and Smith, N. A. 2010. What's worthy of comment? Content and comment volume in political blogs. In Proc. ICWSM.
Yin, D.; Xue, Z.; Hong, L.; Davison, B. D.; Kontostathis, A.; and Edwards, L. 2009. Detection of harassment on Web 2.0. In Proc. CAW2.0 Workshop at WWW.
