Exploiting Agent and Type Independence in Collaborative Graphical Bayesian Games

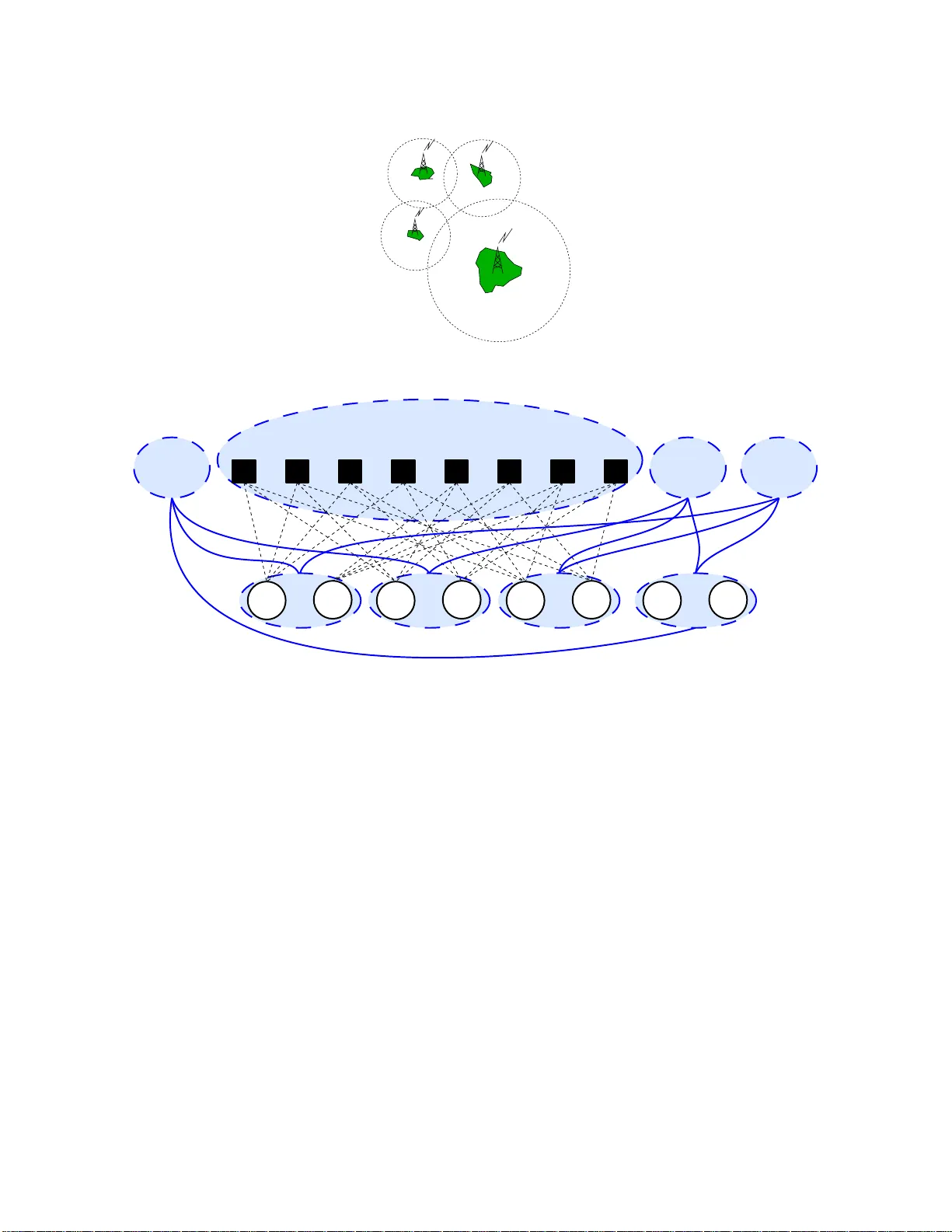

Efficient collaborative decision making is an important challenge for multiagent systems. Finding optimal joint actions is especially challenging when each agent has only imperfect information about the state of its environment. Such problems can be …

Authors: Frans A. Oliehoek, Shimon Whiteson, Matthijs T.J. Spaan