Tolerating Silent Data Corruption in Opaque Preconditioners

We demonstrate algorithm-based fault tolerance for silent, transient data corruption in “black-box” preconditioners. We consider both additive Schwarz domain decomposition with an ILU(k) subdomain solver, and algebraic multigrid, both implemented in the Trilinos library. We evaluate faults that corrupt preconditioner results in both single and multiple MPI ranks. We then analyze how our approach behaves when the application is scaled. Our technique is based on a Selective Reliability approach that performs most operations in an unreliable mode, with only a few operations performed reliably. We also investigate two responses to faults and discuss the performance overheads imposed by each. For a non-symmetric problem solved using GMRES and ILU, we show that at scale our fault tolerance approach incurs only 22% overhead for the worst case. With detection techniques, we are able to reduce this overhead to 1.8% in the worst case.

💡 Research Summary

This paper addresses the growing concern of silent data corruption (SDC) in large‑scale parallel systems, where energy constraints and shrinking device geometries make soft faults increasingly likely. Unlike hard faults that cause process loss and are typically handled by global checkpoint/restart, SDC can silently corrupt data without being detected, potentially leading to incorrect scientific results. The authors propose an algorithm‑based fault‑tolerance approach that treats preconditioners as opaque black‑boxes, avoiding any modification of their complex code bases.

The core idea is a Selective Reliability (SR) programming model: the preconditioner is executed in an “unreliable” mode, while the outer iterative linear solver runs in a “reliable” mode. Errors produced by the preconditioner therefore appear as a changing preconditioner to the outer solver, which is chosen to be robust against such variations. This mirrors the FT‑GMRES concept of an outer‑inner solver pair, but the authors extend it to both GMRES (for nonsymmetric systems) and CG (for symmetric positive‑definite systems).
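
The reliable-outer, unreliable-inner split can be illustrated with a toy sketch. It is not the FT-GMRES scheme from the paper: to stay short it swaps in a plain preconditioned Richardson iteration as the outer loop and a Jacobi sweep as the stand-in "black-box" preconditioner. The matrix, the function names, the fault iteration, and the scaling factor are all assumptions made for illustration. The point it shows is that, because the residual is recomputed reliably every iteration, a transient fault in one preconditioner application only costs extra iterations.

```python
def matvec(A, x):
    """Dense matrix-vector product (assumed to run reliably)."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def jacobi(A, r):
    """Stand-in black-box preconditioner: z = D^{-1} r."""
    return [ri / A[i][i] for i, ri in enumerate(r)]

def faulty_jacobi(A, r, k, fault_iter=3, factor=1e3):
    """Same preconditioner, but one application is silently corrupted."""
    z = jacobi(A, r)
    if k == fault_iter:
        z[0] *= factor  # hypothetical transient scaling fault
    return z

def solve(A, b, apply_prec, tol=1e-10, max_iter=500):
    """Outer loop: x <- x + M^{-1}(b - A x), with a reliably computed residual."""
    x = [0.0] * len(b)
    for k in range(1, max_iter + 1):
        r = [bi - ai for bi, ai in zip(b, matvec(A, x))]  # reliable
        if max(abs(ri) for ri in r) < tol:
            return x, k - 1
        z = apply_prec(A, r, k)                           # unreliable
        x = [xi + zi for xi, zi in zip(x, z)]
    return x, max_iter

# Small diagonally dominant test system (illustrative only).
A = [[4.0, 1.0, 0.0], [1.0, 4.0, 1.0], [0.0, 1.0, 4.0]]
b = [1.0, 2.0, 3.0]

x_clean, it_clean = solve(A, b, lambda A, r, k: jacobi(A, r))
x_fault, it_fault = solve(A, b, faulty_jacobi)
print("iterations without fault:", it_clean)
print("iterations with one fault:", it_fault)
```

Both runs reach the same solution; the difference in iteration counts plays the role of the "extra preconditioner applications" metric used in the experiments.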

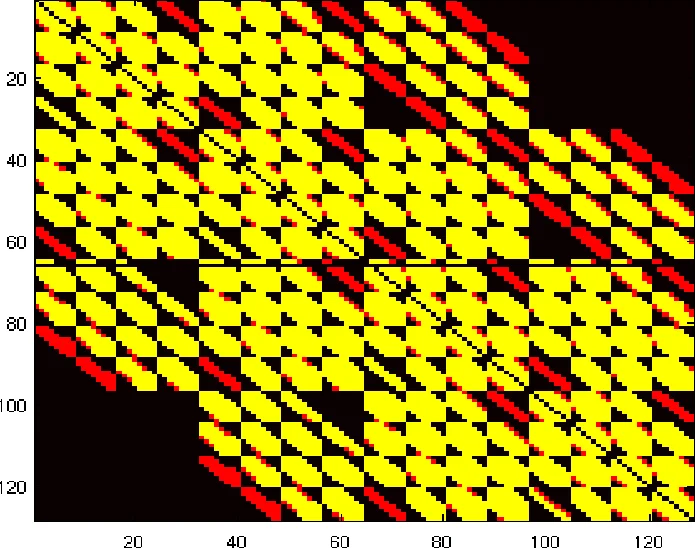

Two representative preconditioners are examined: (1) additive Schwarz domain decomposition with an ILU(k) subdomain solver, and (2) Algebraic Multigrid (AMG) implemented via Trilinos’ MueLu package. The former has no overlap between subdomains, so a fault on one MPI rank does not affect others; the latter has a multilevel hierarchy, allowing a fault at a coarse level to propagate across many ranks.

The fault model abstracts SDC as a numerical error in the preconditioner output vector $\tilde{z} = M^{-1} w$. Two classes of “bad” errors are defined: (a) permutations of a subdomain’s portion of the global vector, which preserve the L2 norm, and (b) scaling of that portion, which changes the L2 norm. Faults are injected at the MPI-process level, either on a single rank or on multiple ranks simultaneously.
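
The two fault classes can be sketched in a few lines. This is a minimal illustration, not the paper's injection harness: the helper names, the use of a contiguous index range to represent one rank's portion of the vector, and the example values are all assumptions.

```python
import math
import random

def l2_norm(v):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in v))

def permute_block(z, start, stop, seed=0):
    """Fault class (a): shuffle the entries z[start:stop]; the L2 norm is preserved."""
    out = list(z)
    block = out[start:stop]
    random.Random(seed).shuffle(block)
    out[start:stop] = block
    return out

def scale_block(z, start, stop, factor):
    """Fault class (b): multiply the entries z[start:stop] by factor; the L2 norm changes."""
    out = list(z)
    for i in range(start, stop):
        out[i] *= factor
    return out

z = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]      # pretend indices 2..4 belong to one rank
z_perm = permute_block(z, 2, 5, seed=1)
z_scal = scale_block(z, 2, 5, 10.0)

print(l2_norm(z), l2_norm(z_perm), l2_norm(z_scal))
```

The permuted vector has the same norm as the original up to rounding, which is why a purely norm-based check cannot see this class of fault, whereas the scaled vector is visibly different in norm.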

Experiments are conducted using Trilinos’ Tpetra, Belos, Ifpack2, and MueLu libraries. The primary metric is the number of extra preconditioner applications required to recover convergence after a fault, which directly translates into runtime overhead. Results show:

- GMRES with ILU(k) incurs at most a 22% overhead (that is, 1.22× the fault-free number of preconditioner calls) even in the worst case where all ranks are corrupted.

- CG with additive Schwarz experiences virtually no extra calls because the error remains local and CG’s residual‑based correction quickly neutralizes it.

- GMRES with AMG suffers larger overhead (up to 1.5× extra calls) due to error propagation through the multilevel hierarchy. However, when a lightweight detection mechanism (monitoring L2 norm changes or sudden residual spikes) is combined with a rollback to the last known good preconditioner result, the overhead drops dramatically to 1.8 % in the worst case.

The detection/rollback strategy adds negligible communication cost because the rollback simply reuses a previously stored local vector. Scaling studies up to thousands of MPI processes demonstrate that the SR approach’s overhead grows sub‑linearly; the additive Schwarz case remains essentially cost‑free, while AMG’s overhead is bounded by the depth of the V‑cycle and the frequency of detected faults.
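
A norm-based detect-and-rollback wrapper might look like the sketch below. It is one possible reading of the strategy described here, not the authors' implementation: the class name, the `max_jump` threshold, and the choice to compare the ratio of output norm to input norm against the previous accepted application are all assumptions.

```python
import math

def l2_norm(v):
    return math.sqrt(sum(x * x for x in v))

class GuardedPreconditioner:
    """Wraps a black-box apply_prec(w) -> z with a cheap norm check."""

    def __init__(self, apply_prec, max_jump=10.0):
        self.apply_prec = apply_prec
        self.max_jump = max_jump   # hypothetical tolerance on the ratio change
        self.last_ratio = None     # ||z|| / ||w|| of the last accepted result
        self.last_good = None      # last accepted output vector
        self.rejected = 0          # number of results discarded so far

    def __call__(self, w):
        z = self.apply_prec(w)
        nw, nz = l2_norm(w), l2_norm(z)
        ratio = nz / nw if nw > 0 else 0.0
        suspicious = not math.isfinite(nz)
        if not suspicious and self.last_ratio:
            jump = ratio / self.last_ratio
            suspicious = jump > self.max_jump or jump < 1.0 / self.max_jump
        if suspicious:
            self.rejected += 1
            # Roll back: reuse the stored local vector, or fall back to the
            # unpreconditioned input if nothing has been accepted yet.
            return list(self.last_good) if self.last_good is not None else list(w)
        self.last_ratio, self.last_good = ratio, list(z)
        return z
```

Because the check only needs norms and a stored local copy, it adds little cost per application. It catches scaling faults and non-finite values but, by construction, not norm-preserving permutations, which are left to the outer solver to absorb.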

In summary, the authors provide a practical pathway to tolerate SDC in preconditioned Krylov solvers without altering the preconditioner code. By isolating the preconditioner in an unreliable sandbox and relying on the outer solver’s inherent robustness, they achieve fault tolerance with modest performance penalties. The combination of SR with simple norm‑based detection and rollback yields overheads as low as 1.8 % even under aggressive fault injection, suggesting that future exascale systems can maintain scientific reliability while operating in energy‑efficient, potentially less‑reliable hardware regimes.
