Piecewise Constant Sequential Importance Sampling for Fast Particle Filtering

Particle filters are key algorithms for object tracking under non-linear, non-Gaussian dynamics. The high computational cost of particle filters, however, hampers their applicability in cases where the likelihood model is costly to evaluate, or where large numbers of particles are required to represent the posterior. We introduce the approximate sequential importance sampling/resampling (ASIR) algorithm, which aims at reducing the cost of traditional particle filters by approximating the likelihood with a mixture of uniform distributions over pre-defined cells or bins. The particles in each bin are represented by a dummy particle at the center of mass of the original particle distribution and with a state vector that is the average of the states of all particles in the same bin. The likelihood is only evaluated for the dummy particles, and the resulting weight is identically assigned to all particles in the bin. We derive upper bounds on the approximation error of the so-obtained piecewise constant function representation, and analyze how bin size affects tracking accuracy and runtime. Further, we show numerically that the ASIR approximation error converges to that of sequential importance sampling/resampling (SIR) as the bin size is decreased. We present a set of numerical experiments from the field of biological image processing and tracking that demonstrate ASIR’s capabilities. Overall, we consider ASIR a promising candidate for simple, fast particle filtering in generic applications.

💡 Research Summary

The paper introduces the piecewise-constant sequential importance sampling/resampling (pcSIR) algorithm as a computationally efficient alternative to the classical sequential importance sampling/resampling (SIR) particle filter. In many tracking applications, especially those involving image data, evaluating the likelihood p(z_k|x_k) dominates the runtime because it must be computed separately for every particle. pcSIR reduces this cost by partitioning the n-dimensional state space into a regular grid of non-overlapping bins (cells). All particles that fall into the same bin are represented by a single dummy particle, located at the centre of mass of those particles and carrying their mean state vector. The likelihood is evaluated only for this dummy particle, and the resulting weight is copied to every particle in the bin. Consequently, the number of expensive likelihood evaluations drops from the total particle count N to the number of occupied bins B, which can be orders of magnitude smaller.
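The binning step described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `pcsir_weights`, the `grid_dims` parameter (how many leading state dimensions are gridded), and the use of a single square `cell_size` are all hypothetical choices made for the example.

```python
import numpy as np

def pcsir_weights(particles, likelihood, cell_size, grid_dims=None):
    """Weight particles with one likelihood evaluation per occupied bin.

    particles  : (N, d) array of particle states
    likelihood : callable mapping a (d,) state to a non-negative float
    cell_size  : bin side length (assumed equal in every gridded dimension)
    grid_dims  : number of leading state dimensions to grid (None = all)
    """
    # Integer bin coordinates of every particle on the regular grid.
    idx = np.floor(particles[:, :grid_dims] / cell_size).astype(np.int64)

    # Label each distinct occupied bin 0..B-1; labels[i] is particle i's bin.
    _, labels = np.unique(idx, axis=0, return_inverse=True)
    labels = labels.reshape(-1)
    n_bins = int(labels.max()) + 1

    # Dummy particle of a bin = mean state of the particles inside it.
    counts = np.bincount(labels, minlength=n_bins)
    sums = np.zeros((n_bins, particles.shape[1]))
    np.add.at(sums, labels, particles)
    dummies = sums / counts[:, None]

    # B likelihood evaluations instead of N.
    bin_weights = np.array([likelihood(d) for d in dummies], dtype=float)

    # Copy each bin's weight to all of its particles, then normalise.
    weights = bin_weights[labels]
    total = weights.sum()
    if total <= 0.0:
        raise ValueError("all bin likelihoods are zero")
    return weights / total, n_bins
```

Calling `pcsir_weights(particles, likelihood, cell_size=1.0)` returns the normalised weights together with the number of occupied bins B, which is also the number of likelihood calls made.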

The authors provide a rigorous error analysis based on multivariate Taylor expansion and midpoint Riemann‑sum bounds. For a bin with side lengths l_x and l_y, the approximation error E_pcSIR(l_x,l_y) is shown to be O(l_x² + l_y²). As the bin size shrinks, this error converges to the SIR error E_SIR(N). Since the dominant source of error in particle filters is the Monte‑Carlo sampling error O(N^{-1/2}), a first‑order (piecewise‑constant) approximation is sufficient for most practical purposes. The paper also discusses extensions to higher‑order multipole expansions that could store additional moments of the particle distribution within each bin.
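The quadratic scaling of a midpoint Riemann sum can be checked numerically. The sketch below is not one of the paper's experiments: the test function exp(-(x² + y²)) on the unit square and the function name `midpoint_error` are assumptions chosen only because the exact integral is available in closed form via the error function. If the error is O(l²), halving the cell side should reduce it by roughly a factor of four.

```python
import math
import numpy as np

def midpoint_error(l):
    """Absolute error of the midpoint Riemann sum of exp(-(x^2 + y^2))
    over [0, 1]^2 with square cells of side l (l is assumed to divide 1)."""
    n = round(1.0 / l)
    centres = (np.arange(n) + 0.5) * l           # cell midpoints along one axis
    X, Y = np.meshgrid(centres, centres)
    approx = np.exp(-(X**2 + Y**2)).sum() * l * l
    # The integrand is separable, so the exact value is (1D integral)^2.
    exact = (0.5 * math.sqrt(math.pi) * math.erf(1.0)) ** 2
    return abs(approx - exact)

previous = None
for l in (0.25, 0.125, 0.0625):
    err = midpoint_error(l)
    ratio = "" if previous is None else f"  ratio to previous: {previous / err:.2f}"
    print(f"l = {l:<7} error = {err:.3e}{ratio}")
    previous = err
```

The printed ratios give a quick sanity check of the second-order behaviour for this particular smooth integrand; they say nothing about integrands that are not twice differentiable.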

Related work includes histogram filters and the Box Particle Filter (BPF). While histogram filters also use piecewise‑constant approximations, they store a single value per box and lack a formal convergence proof. BPF employs interval analysis and box‑shaped particles, which makes it unsuitable for narrow posterior distributions. pcSIR, by contrast, retains point particles and therefore can accurately represent sharply peaked posteriors.

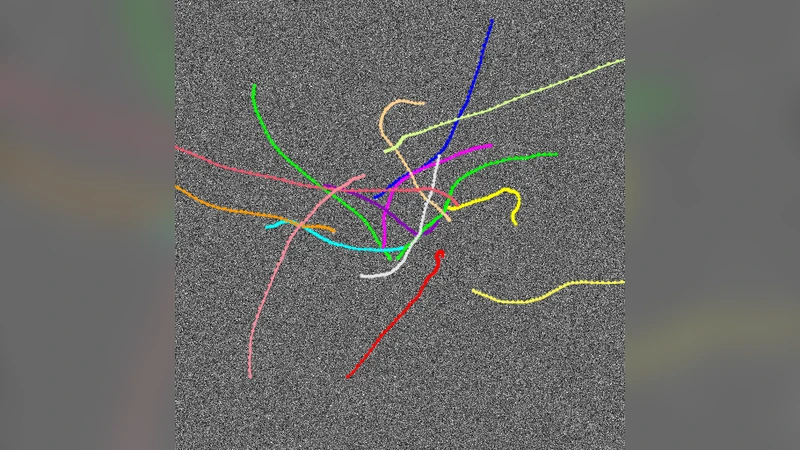

Experimental validation is performed on fluorescence microscopy sequences tracking sub‑cellular structures such as endosomes and mitochondria. A nearly‑constant‑velocity dynamics model (position, velocity, intensity) is used, and two likelihood models (Gaussian and uniform) are tested. The authors compare three configurations: standard SIR, pcSIR with one‑pixel cells (pcSIR‑1x1), and pcSIR with 2×2 sub‑pixel cells (pcSIR‑2x2). Results show that pcSIR achieves virtually identical tracking accuracy (root‑mean‑square error) to SIR while reducing runtime by a factor of 8–12 on average; in cases where the likelihood involves a full image‑formation simulation, speed‑ups exceed 20×. Moreover, a systematic study of cell size demonstrates the predicted convergence of the approximation error as the grid is refined.
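For readers unfamiliar with nearly-constant-velocity models, the prediction step might look like the following sketch. The state layout `[x, y, vx, vy, intensity]`, the random-acceleration noise form, and the parameters `sigma_acc` and `sigma_int` are assumptions made for illustration; the summary does not specify the noise model or parameter values used in the paper.

```python
import numpy as np

def propagate_ncv(particles, dt, sigma_acc, sigma_int, rng):
    """One prediction step of a nearly-constant-velocity model.

    particles : (N, 5) array with columns x, y, vx, vy, intensity (assumed layout)
    dt        : time between frames
    sigma_acc : std. dev. of the random acceleration (hypothetical parameter)
    sigma_int : std. dev. of the intensity random walk (hypothetical parameter)
    rng       : numpy.random.Generator
    """
    n = particles.shape[0]
    out = particles.copy()
    # Random acceleration, held constant over the time step.
    acc = rng.normal(0.0, sigma_acc, size=(n, 2))
    # Position: move with the current velocity plus the acceleration term.
    out[:, 0:2] += dt * particles[:, 2:4] + 0.5 * dt**2 * acc
    # Velocity: constant up to the random acceleration.
    out[:, 2:4] += dt * acc
    # Intensity: simple Gaussian random walk.
    out[:, 4] += rng.normal(0.0, sigma_int, size=n)
    return out
```

With both noise levels set to zero the step reduces to straight-line motion at constant velocity and intensity, which is a convenient way to test an implementation.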

In summary, pcSIR offers a simple, drop‑in replacement for SIR that dramatically lowers computational cost in scenarios with expensive likelihood evaluations, without sacrificing estimation quality. The method requires only minor modifications to existing SIR implementations, making it attractive for real‑time applications. Future directions suggested include adaptive bin sizing, incorporation of higher‑order moments, and extension to non‑Cartesian state spaces.
