Community characterization of heterogeneous complex systems

We introduce an analytical statistical method to characterize the communities detected in heterogeneous complex systems. By posing a suitable null hypothesis, our method makes use of the hypergeometric distribution to assess the probability that a given property is over-expressed in the elements of a community with respect to all the elements of the investigated set. We apply our method to two specific complex networks, namely a network of world movies and a network of physics preprints. The characterization of the elements and of the communities is done in terms of languages and countries for the movie network and of journals and subject categories for papers. We find that our method is able to characterize the identified communities clearly. Moreover, our method works well for both large and small communities.

💡 Research Summary

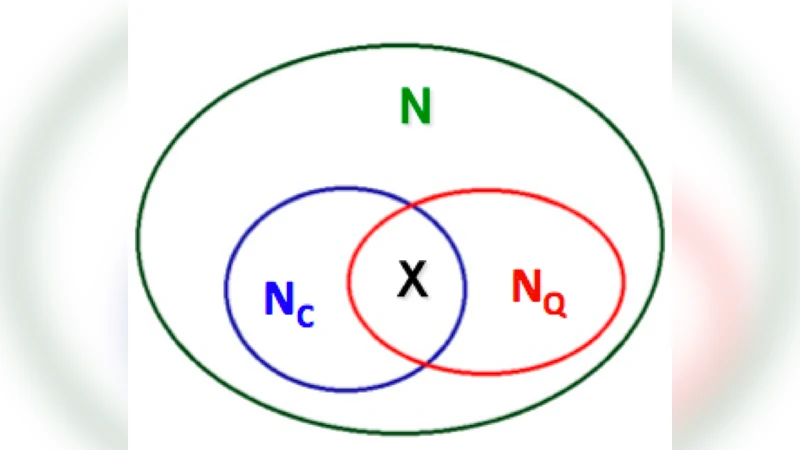

The paper introduces a statistical framework designed to characterize the semantic content of communities detected in heterogeneous complex systems. The authors begin by highlighting a common shortcoming in network science: while community detection algorithms can partition a graph into densely connected substructures, there is often no systematic way to interpret what those substructures represent in terms of external attributes (metadata). Existing approaches typically rely on ad‑hoc visual inspection or on pre‑defined labels, which lack statistical rigor and are difficult to apply uniformly across domains.

To address this gap, the authors formulate a null hypothesis that any given attribute (for example, a language, a country, a journal, or a subject category) is distributed uniformly at random among all nodes of the network. For each community, they then count how many nodes possess the attribute of interest and compare this observed count to the distribution expected under the null hypothesis. The comparison is performed using the hypergeometric distribution, which models the probability of drawing a certain number of “successes” (nodes with the attribute) without replacement from a finite population. The resulting p‑value is the probability, under the null hypothesis, of finding at least as many attribute‑bearing nodes in the community as were actually observed; a small p‑value indicates that the enrichment is unlikely to have arisen by chance. Because the hypergeometric test explicitly incorporates both the size of the community and the overall prevalence of the attribute, it remains valid for very small communities where traditional chi‑square or G‑tests would be unreliable.
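The over‑expression test described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `enrichment_p_value` and the symbols `N` (nodes in the network), `K` (nodes carrying the attribute), `n` (community size), and `k` (community nodes carrying the attribute) are assumptions introduced here.

```python
from math import comb


def enrichment_p_value(N: int, K: int, n: int, k: int) -> float:
    """Upper-tail hypergeometric probability P(X >= k).

    X counts attribute-bearing nodes in a community of size n drawn
    without replacement from N nodes, K of which carry the attribute.
    All parameter names are illustrative assumptions.
    """
    upper = min(K, n)          # X cannot exceed either K or n
    total = comb(N, n)         # number of equally likely communities of size n
    # Sum the probability mass for every outcome at least as extreme as k.
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / total


# Toy numbers (hypothetical): 10 nodes, 5 with the attribute,
# a community of 5 nodes that all carry it.
p = enrichment_p_value(N=10, K=5, n=5, k=5)
```

With these toy numbers only one of the 252 possible communities of size five consists entirely of attribute‑bearing nodes, so the p‑value is 1/252. Exact integer arithmetic is used deliberately, so no large‑sample approximation is involved even for tiny communities.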

To control for the multiple testing problem inherent in evaluating many attributes across many communities, the authors apply a false discovery rate (FDR) correction. This yields a set of attributes that are statistically over‑expressed (or “enriched”) in each community with a controlled expected proportion of false positives. The method is deliberately generic: it works with any binary attribute matrix, can be extended to multi‑label or weighted attributes, and does not depend on the specific community detection algorithm used.
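The summary does not say which FDR procedure is used. As one possible illustration, the sketch below implements the Benjamini–Hochberg step‑up procedure, a common choice; the function name `benjamini_hochberg` and the default `alpha` are assumptions, not details taken from the paper.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return one boolean per test: True if rejected at FDR level alpha.

    Benjamini-Hochberg step-up procedure (assumed here for illustration;
    the source only states that an FDR correction is applied).
    """
    m = len(p_values)
    # Indices of the tests, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])

    # Find the largest 1-based rank r with p_(r) <= alpha * r / m.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            cutoff_rank = rank

    # Reject every test whose rank is at or below the cutoff.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for four (community, attribute) tests.
flags = benjamini_hochberg([0.001, 0.04, 0.03, 0.8], alpha=0.05)
```

In this toy example only the first test survives the correction, even though two others fall below 0.05 individually, which is the kind of filtering the multiple‑testing step is meant to provide.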

The framework is demonstrated on two distinct real‑world networks. The first is a bipartite graph of movies and actors derived from a global film database. Each movie is annotated with two categorical metadata fields: production language and country of origin. After applying a standard modularity‑maximization algorithm (Louvain) to obtain roughly thirty communities, the authors compute enrichment scores for each language–country pair. The results show clear, statistically significant clusters such as “Japanese language – Japan”, “French language – France”, and “Spanish language – Spain”. Notably, even communities containing as few as ten movies display strong enrichment, confirming the method’s sensitivity to small groups.
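A rough sketch of how such per‑community scores might be tabulated is given below. It assumes, for simplicity, that each element carries exactly one value of the attribute and belongs to exactly one community; the function names, the dictionaries, and the six‑movie toy data are hypothetical and are not drawn from the paper's dataset.

```python
from collections import Counter
from math import comb


def tail_p(N, K, n, k):
    """Upper-tail hypergeometric probability P(X >= k)."""
    return sum(comb(K, i) * comb(N - K, n - i)
               for i in range(k, min(K, n) + 1)) / comb(N, n)


def community_enrichment(community_of, attribute_of):
    """Map each observed (community, attribute) pair to its p-value."""
    N = len(community_of)
    attr_totals = Counter(attribute_of.values())    # K for each attribute
    comm_sizes = Counter(community_of.values())     # n for each community
    joint = Counter((community_of[x], attribute_of[x]) for x in community_of)
    return {
        (c, a): tail_p(N, attr_totals[a], comm_sizes[c], k)
        for (c, a), k in joint.items()
    }


# Hypothetical toy data: six movies, two communities, one language each.
community_of = {"m1": "A", "m2": "A", "m3": "A",
                "m4": "B", "m5": "B", "m6": "B"}
language_of = {"m1": "Japanese", "m2": "Japanese", "m3": "Japanese",
               "m4": "French", "m5": "French", "m6": "Japanese"}

scores = community_enrichment(community_of, language_of)
```

The resulting dictionary holds one p‑value per (community, attribute) pair that actually occurs; in a full analysis these values would then be passed through a multiple‑testing correction before any pair is declared over‑expressed.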

The second case study involves a co‑authorship network of physics preprints from the arXiv repository. Here, each paper carries metadata about the journal in which it was later published (e.g., Physical Review Letters, Journal of High Energy Physics) and its subject category (e.g., quantum mechanics, condensed matter, astrophysics). After community detection, the authors identify over‑expressed journal–category combinations such as “Physical Review Letters – Quantum Mechanics” and “Journal of High Energy Physics – Particle Physics”. Again, the enrichment holds for both large communities (hundreds of papers) and very small ones (fewer than ten papers).

The authors discuss several strengths of their approach. First, the hypergeometric test provides an exact probability model that does not rely on asymptotic approximations, making it suitable for sparse or unevenly sized groups. Second, the method can incorporate multiple metadata dimensions simultaneously, enabling a richer, multidimensional characterization of each community. Third, because the statistical test is decoupled from the community detection step, the framework can be integrated into any existing pipeline, facilitating automated, reproducible analyses across disciplines.

Limitations are also acknowledged. The technique assumes that metadata are accurate, complete, and binary; missing or noisy labels can inflate false negatives or produce spurious enrichments. Moreover, the null hypothesis of uniform random allocation ignores the underlying network topology, which may itself bias attribute distribution (e.g., homophily). The authors suggest future extensions such as Bayesian hierarchical models that embed prior knowledge about attribute prevalence, or null models that preserve degree sequences or community‑level mixing patterns. They also propose adapting the test to continuous attributes (e.g., citation counts, ratings) by employing appropriate statistical distributions.

In conclusion, the paper delivers a robust, domain‑agnostic statistical tool for linking detected network communities to external categorical information. By rigorously quantifying over‑expression, the method transforms community detection from a purely structural exercise into a meaningful interpretive process, opening avenues for deeper insight in fields ranging from cultural analytics (film, music) to scientometrics (publications, patents) and beyond.
