Distributed Edge Partitioning for Graph Processing

The availability of ever-larger graph datasets, growing exponentially over the years, has created several new algorithmic challenges. Sequential approaches have become infeasible, while interest in parallel and distributed algorithms has greatly increased. Appropriately partitioning the graph as a preprocessing step can improve the degree of parallelism of its analysis. A number of heuristic algorithms have been developed to solve this problem, but many of them subdivide the graph on its vertex set, thus obtaining a vertex-partitioned graph. The aim of this paper is to explore a completely different approach based on edge partitioning, in which edges, rather than vertices, are partitioned into disjoint subsets. The contribution of this paper is twofold. First, we introduce a graph processing framework based on edge partitioning that is flexible enough to be applied to several different graph problems. Second, we show the feasibility of these ideas by presenting a distributed edge partitioning algorithm called d-fep. Our framework is thoroughly evaluated, using both simulations and a Hadoop implementation running on the Amazon EC2 cloud. The experiments show that d-fep is efficient, scalable and obtains consistently good partitions. The resulting edge-partitioned graph can be exploited to obtain more efficient implementations of graph analysis algorithms.

💡 Research Summary

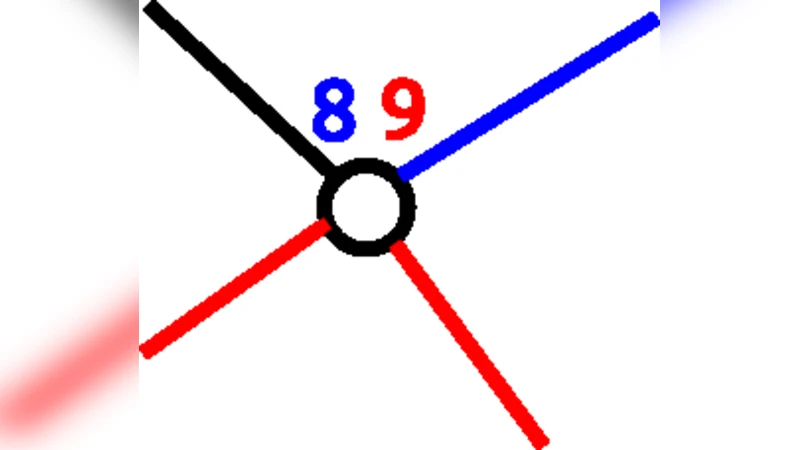

The paper addresses the growing challenge of processing massive graph datasets that no longer fit into the memory of a single machine. Traditional preprocessing techniques rely on vertex‑based partitioning, which aims to balance the number of vertices across partitions. However, because vertex degrees can be highly skewed, such partitions often differ dramatically in the number of edges they contain, leading to unbalanced memory usage and excessive inter‑partition communication. To overcome these limitations, the authors propose a fundamentally different strategy: edge‑based partitioning, where each edge belongs to exactly one partition while vertices may appear in multiple partitions as “frontier” vertices.

The first major contribution is ETSCH, a distributed graph‑processing framework built around edge partitions. ETSCH operates in three logical phases. In the initialization phase, each worker receives its assigned subgraph (the edges of a partition together with the incident vertices) and creates local state for both vertices and edges. The local computation phase then runs a sequential algorithm independently on each subgraph, updating the local state without any cross‑partition communication. Finally, the aggregation phase collects the states of all replicas of each frontier vertex, computes a consolidated value (e.g., the minimum distance or the smallest component identifier), and synchronizes this value back to every replica. By iterating the local‑computation and aggregation steps, ETSCH can implement classic graph algorithms such as single‑source shortest paths, connected components, and even Luby’s maximal independent set, all while keeping communication limited to frontier vertices.
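The three phases can be illustrated with a minimal single-process sketch of connected components. This is not the authors' implementation: the function name `etsch_connected_components`, the representation of partitions as plain edge lists, and the use of the minimum vertex id as component label are all assumptions made for illustration.

```python
def etsch_connected_components(partitions):
    """Label connected components over an edge-partitioned graph.

    `partitions` is a list of edge lists; each edge (u, v) is assumed to
    belong to exactly one partition. Vertices that appear in more than
    one partition play the role of frontier vertices.
    """
    # Initialization: each partition creates local state for its vertices.
    # Every replica starts with its own vertex id as component label.
    states = [{v: v for edge in edges for v in edge} for edges in partitions]

    best = {}
    changed = True
    while changed:
        changed = False

        # Local computation: each partition independently propagates the
        # smallest label along its own edges until nothing changes.
        for edges, state in zip(partitions, states):
            local_changed = True
            while local_changed:
                local_changed = False
                for u, v in edges:
                    smallest = min(state[u], state[v])
                    if state[u] != smallest or state[v] != smallest:
                        state[u] = state[v] = smallest
                        local_changed = True

        # Aggregation: collect the labels of all replicas of each vertex,
        # keep the smallest, and write it back to every replica.
        best = {}
        for state in states:
            for v, label in state.items():
                best[v] = min(label, best.get(v, label))
        for state in states:
            for v in state:
                if state[v] != best[v]:
                    state[v] = best[v]
                    changed = True

    return best
```

In this sketch only vertices shared between partitions can change during aggregation, which mirrors the idea that communication is limited to frontier vertices. A real deployment would run the local phase on separate workers rather than in one loop.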

The second contribution is d‑FEP (Distributed Funding‑based Edge Partitioning), a novel distributed algorithm that produces the edge partitions required by ETSCH. d‑FEP introduces a “funding” metaphor: each partition starts with the same amount of virtual currency and a randomly selected seed vertex. The algorithm proceeds in rounds. In each round, a partition distributes its current funds equally among all incident edges that are either unclaimed or already owned by that partition. An unclaimed edge is then sold to the partition that has committed the most funds to it, which pays one unit of funding for the purchase. After the edge is assigned, the remaining funds are split between the two incident vertices. At the end of the round, a central coordinator examines the current sizes of the partitions and injects additional funds inversely proportional to the number of edges already owned: smaller partitions receive more funds to accelerate their growth, while larger partitions receive only a modest amount. This feedback loop naturally drives the system toward balanced partitions while also limiting the number of frontier vertices and preserving connectivity within each partition.
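The funding rounds described above can be approximated by the following single-process simulation. It is a simplified sketch, not the published algorithm: the name `dfep_sketch`, the parameters `initial_funds`, `max_grant`, and `max_rounds`, the exact grant formula, the rule that losing bids return to the vertex they came from, and the tie-breaking are all assumptions. It also assumes a simple graph (no self-loops) with at least `k` vertices.

```python
import random
from collections import defaultdict


def dfep_sketch(edges, k, initial_funds=4.0, max_grant=10.0,
                max_rounds=1000, seed=0):
    """Assign each edge to one of k partitions via funding rounds."""
    rng = random.Random(seed)
    incident = defaultdict(list)            # vertex -> incident edge ids
    for eid, (u, v) in enumerate(edges):
        incident[u].append(eid)
        incident[v].append(eid)

    owner = [None] * len(edges)             # edge id -> partition or None
    sizes = [0] * k
    ideal = len(edges) / k

    # funds[p][v] is the funding partition p currently holds at vertex v.
    funds = [defaultdict(float) for _ in range(k)]
    for p, start in enumerate(rng.sample(list(incident), k)):
        funds[p][start] = initial_funds     # one random seed vertex each

    for _ in range(max_rounds):
        if all(o is not None for o in owner):
            break

        # Step 1: each vertex splits a partition's funds equally among
        # incident edges that are unclaimed or already owned by it.
        bids = defaultdict(lambda: defaultdict(dict))  # edge -> p -> v -> amt
        for p in range(k):
            for v, amount in list(funds[p].items()):
                eligible = [e for e in incident[v] if owner[e] in (None, p)]
                if amount <= 0 or not eligible:
                    continue
                funds[p][v] = 0.0
                for e in eligible:
                    bids[e][p][v] = amount / len(eligible)

        # Step 2: an unclaimed edge is sold to the highest bidder for one
        # unit; the owner's remaining funds are split between endpoints.
        for e, by_part in bids.items():
            bought = False
            if owner[e] is None:
                winner = max(by_part, key=lambda q: sum(by_part[q].values()))
                if sum(by_part[winner].values()) >= 1.0:
                    owner[e] = winner
                    sizes[winner] += 1
                    bought = True
            for p, by_vertex in by_part.items():
                if owner[e] == p:
                    rest = sum(by_vertex.values()) - (1.0 if bought else 0.0)
                    u, v = edges[e]
                    funds[p][u] += rest / 2
                    funds[p][v] += rest / 2
                else:
                    # Assumption: losing bids go back to their source vertex.
                    for v, amount in by_vertex.items():
                        funds[p][v] += amount

        # Step 3: the coordinator grants funds inversely proportional to
        # partition size (capped), spread over the partition's vertices.
        for p in range(k):
            grant = min(max_grant, max_grant * ideal / max(sizes[p], 1))
            for v in funds[p]:
                funds[p][v] += grant / len(funds[p])

    return owner
```

Because a partition can only bid from vertices where it already holds funds, it grows outward from its seed, which is how the sketch tries to keep each partition connected. On a disconnected graph some edges may stay unassigned (`None`) when no seed lands in their component.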

The authors evaluate both the framework and the partitioning algorithm using simulations and a real Hadoop implementation on Amazon EC2. Experiments on a variety of real‑world graphs (social networks, web graphs, biological networks) and synthetic benchmarks show that d‑FEP consistently achieves partition size variance below 5 % of the ideal average |E|/K. Moreover, the total number of frontier vertices is reduced by more than 30 % compared with state‑of‑the‑art vertex‑partitioning tools such as METIS. When ETSCH runs shortest‑path and connected‑components algorithms on the same datasets, it outperforms vertex‑partitioning baselines by a factor of 1.8–2.5 in runtime, thanks to reduced communication and better memory balance. Scalability tests demonstrate near‑linear growth of runtime with the number of partitions, confirming that the algorithm remains efficient even when the cluster size is doubled.
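The two quality measures mentioned here, size balance relative to the ideal |E|/K and the number of frontier vertices, can be computed for any edge assignment as sketched below. The function name `partition_metrics` and the exact definition of imbalance (largest relative deviation from |E|/K) are assumptions for illustration; the paper's own metric definitions may differ.

```python
from collections import defaultdict


def partition_metrics(edges, owner, k):
    """Return (sizes, max relative deviation from |E|/K, frontier count).

    `owner[i]` is the partition index of `edges[i]`.
    """
    sizes = [0] * k
    parts_of = defaultdict(set)     # vertex -> partitions it appears in
    for (u, v), p in zip(edges, owner):
        sizes[p] += 1
        parts_of[u].add(p)
        parts_of[v].add(p)

    ideal = len(edges) / k
    # Largest deviation of any partition size from the ideal, as a fraction.
    deviation = max(abs(s - ideal) for s in sizes) / ideal
    # A frontier vertex is one replicated in more than one partition.
    frontier = sum(1 for parts in parts_of.values() if len(parts) > 1)
    return sizes, deviation, frontier
```

For a four-cycle split into two partitions of two consecutive edges each, the sizes are equal, the deviation is zero, and the two vertices where the partitions meet are the frontier.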

In the related‑work discussion, the paper contrasts edge‑partitioning with hypergraph partitioning and other recent edge‑cut methods, emphasizing that d‑FEP’s funding mechanism simultaneously addresses balance, communication efficiency, connectedness, and path‑compression—properties that are often treated separately in prior work.

In conclusion, the study provides strong empirical evidence that edge‑based partitioning, coupled with the ETSCH processing model, constitutes a viable and often superior alternative to traditional vertex‑centric approaches for large‑scale graph analytics. The proposed techniques are ready for deployment in cloud and distributed environments, opening new avenues for scalable graph‑based data science.
