Neuronal Synchrony in Complex-Valued Deep Networks


Authors: David P. Reichert, Thomas Serre

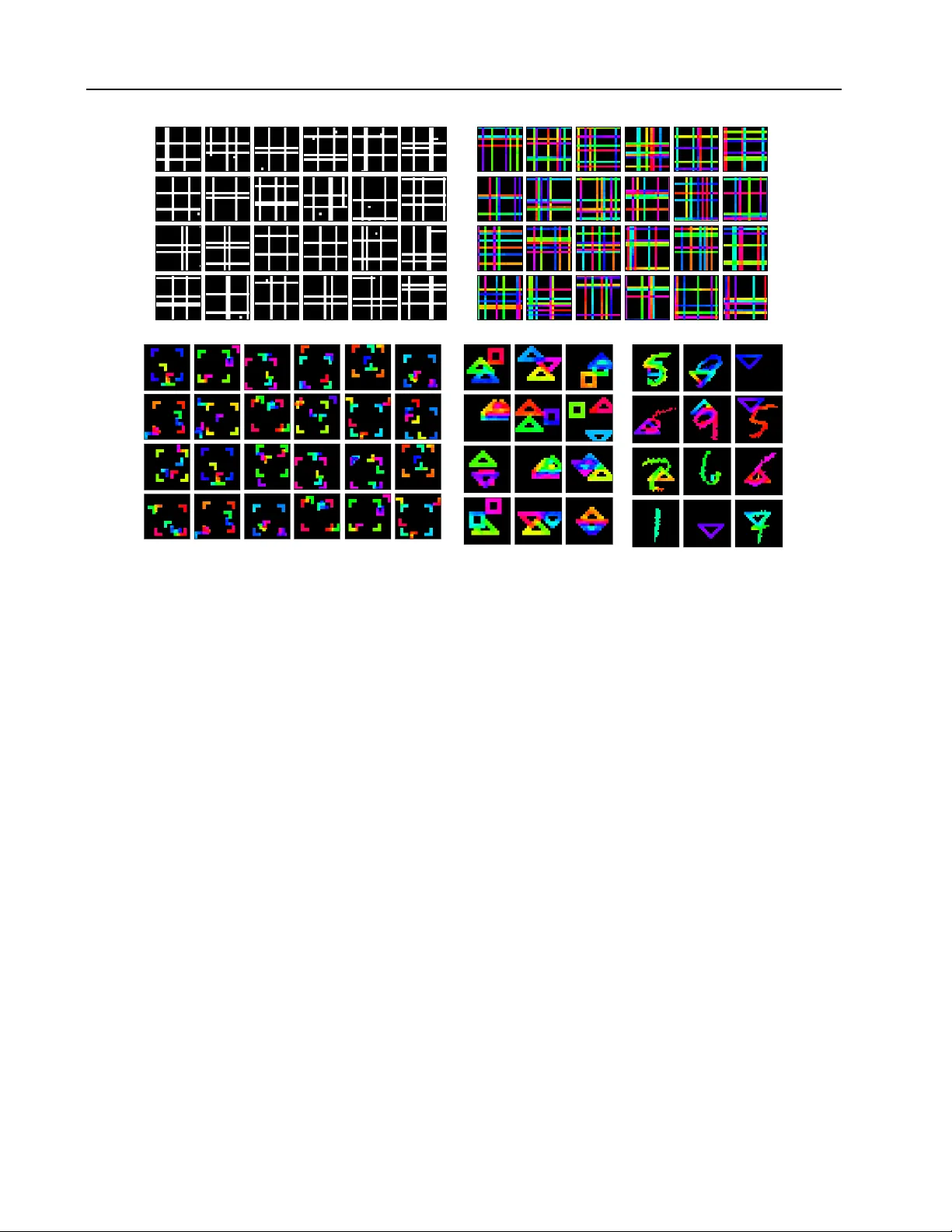

David P. Reichert (david_reichert@brown.edu), Thomas Serre (thomas_serre@brown.edu)
Department of Cognitive, Linguistic & Psychological Sciences, Brown University
Appearing in the proceedings of the 2nd International Conference on Learning Representations (ICLR 2014).

Abstract

Deep learning has recently led to great successes in tasks such as image recognition (e.g. Krizhevsky et al., 2012). However, deep networks are still outmatched by the power and versatility of the brain, perhaps in part due to the richer neuronal computations available to cortical circuits. The challenge is to identify which neuronal mechanisms are relevant, and to find suitable abstractions to model them. Here, we show how aspects of spike timing, long hypothesized to play a crucial role in cortical information processing, could be incorporated into deep networks to build richer, versatile representations. We introduce a neural network formulation based on complex-valued neuronal units that is not only biologically meaningful but also amenable to a variety of deep learning frameworks. Here, each unit is assigned both a firing rate and a phase, the latter indicating properties of spike timing. We show how this formulation qualitatively captures several aspects thought to be related to neuronal synchrony, including gating of information processing and dynamic binding of distributed object representations. Focusing on the latter, we demonstrate the potential of the approach in several simple experiments. Thus, neuronal synchrony could be a flexible mechanism that fulfills multiple functional roles in deep networks.

1. Introduction

Deep learning approaches have proven successful in various applications, from machine vision to language processing (Bengio et al., 2012).
Deep networks are often taken to be inspired by the brain as idealized neural networks that learn representations through several stages of non-linear processing, perhaps akin to how the mammalian cortex adapts to represent the sensory world. These approaches are thus also relevant to computational neuroscience (Cadieu et al., 2013): for example, convolutional networks (LeCun et al., 1989) possibly capture aspects of the organization of the visual cortex and are indeed closely related to biological models like HMAX (Serre et al., 2007), while deep Boltzmann machines (Salakhutdinov & Hinton, 2009) have been applied as models of generative cortical processing (Reichert et al., 2013).

The most impressive recent deep learning results have been achieved in classification tasks, in a processing mode akin to rapid feed-forward recognition in humans (Serre et al., 2007), and required supervised training with large amounts of labeled data. It is perhaps less clear whether current deep networks truly support neuronal representations and processes that naturally allow for flexible, rich reasoning about, e.g., objects and their relations in visual scenes, and what machinery is necessary to learn such representations from data in a mostly unsupervised way. At the implementational level, there is a host of cortical computations not captured by the simplified mechanisms used in deep networks, from the complex laminar organization of the cortex to dendritic computations or neuronal spikes and their timing. Such mechanisms might be key to realizing richer representations, but the challenge is to identify which of these mechanisms are functionally relevant and which can be discarded as mere implementation details.

One candidate mechanism is temporal coordination of neuronal output, in particular synchronization of neuronal firing.
Various theories posit that synchrony is a key element of how the cortex processes sensory information (e.g. von der Malsburg, 1981; Crick, 1984; Singer & Gray, 1995; Fries, 2005; Uhlhaas et al., 2009; Stanley, 2013), though these theories are also contested (e.g. Shadlen & Movshon, 1999; Ray & Maunsell, 2010). Because the degree of synchrony of neuronal spikes affects the output of downstream neurons, synchrony has been postulated to allow for gating of information transmission between neurons or whole cortical areas (Fries, 2005; Benchenane et al., 2011). Moreover, the relative timing of neuronal spikes may carry information about the sensory input and the dynamic network state (e.g. Geman, 2006; Stanley, 2013), beyond or in addition to what is conveyed by firing rates. In particular, neuronal subpopulations could dynamically form synchronous groups to bind distributed representations (Singer, 2007), signaling that the perceptual content represented by each group forms a coherent entity such as a visual object in a scene.

Here, we aim to demonstrate the potential functional role of neuronal synchrony in a framework that is amenable to deep learning. Rather than dealing with more realistic but elaborate spiking neuron models, we seek a mathematical idealization that naturally extends current deep networks while still being interpretable in the context of biological models. To this end, we use complex-valued units, such that each neuron's output is described by both a firing rate and a phase variable. Phase variables across neurons represent the relative timing of activity.

In Section 2, we briefly describe the effect of synchrony on neuronal information processing. We present the framework based on complex-valued networks, and show what functional roles synchrony could play within this framework.
Thanks to the specific formulation employed, we had some success with converting deep nets trained without synchrony to incorporate synchrony. Using this approach, in Section 3 we underpin our argument with several simple experiments, focusing on binding by synchrony. Exploiting the presented approach further will require learning with synchrony. We discuss principled ways to do so and challenges to overcome in Section 4.

It should be noted that complex-valued neural networks are not new (e.g. Zemel et al., 1995; Kim & Adalı, 2003; Nitta, 2004; Fiori, 2005; Aizenberg & Moraga, 2007; Savitha et al., 2011; Hirose, 2011). However, they do not seem to have attracted much attention within the deep learning community, perhaps because their benefits still need to be explored further.[^1] There are a few cases where such networks were employed with the interpretation of neuronal synchrony, including the work of Rao et al. (2008) and Rao & Cecchi (2010; 2011), which is similar to ours. These prior approaches will be discussed in Section 4.

[^1]: Except, possibly, for applications where the data itself is naturally represented in terms of complex numbers.

2. Neuronal synchrony

Cortical neurons communicate with electric action potentials (so-called spikes). There is a long-standing debate in neuroscience on whether various features of spike timing matter to neuronal information processing, rather than just average firing rates (e.g. Stanley, 2013). In common deep neural networks (convolutional networks, Boltzmann machines, etc.), the output of a neuronal unit is characterized by a single (real-valued) scalar; the state of a network and how it relates to an interpretation of sensory input is fully determined by the joint scalar outputs across all units. This suggests an interpretation in terms of average, static firing rates, lacking any notion of relative timing. Here, we consider how to incorporate such notions into deep networks.
Consider Figure 1a for an example of how a more dynamic code could be transmitted between neurons (simulated with the Brian simulator, Goodman & Brette, 2009). This example is based on the hypothesis that neuronal rhythms, ubiquitous throughout the brain, play a functional role in information processing (e.g. Singer & Gray, 1995; Fries, 2005; Uhlhaas et al., 2009; Benchenane et al., 2011). A neuron receives spike train inputs modulated by oscillatory firing rates. This results in rhythmic output activity, with an average firing rate that depends on both the amplitudes and the relative phase of the inputs (Figure 1b). Such interactions are difficult to represent with static firing rates alone.

2.1. Modeling neuronal synchrony with complex-valued units

In deep networks, a neuronal unit receives inputs from other neurons with state vector $\mathbf{x}$ via a synaptic weight vector $\mathbf{w}$. We denote the total 'postsynaptic' input as $\chi := \mathbf{w}\cdot\mathbf{x}$. The output is computed with an activation function $f$ as $f(\chi)$ (or, in the case of Gibbs sampling in Boltzmann machines, $f(\chi)$ is a conditional probability from which the output state is sampled).[^2] We can now model aspects of spike timing by replacing the real-valued states $\mathbf{x}$ with complex states $\mathbf{z}$. For unit state $z_i = r_i e^{i\phi_i}$, the magnitude $r_i = |z_i|$ can be interpreted as the average firing rate, analogously to the real-valued case. The phase $\phi_i$ could correspond to the phase of a neuronal rhythm as in Figure 1a or, more generally, to the timing of maximal activity in some temporal interval (Figure 1c). Because neuronal messages are now added in the complex plane (keeping the weights $\mathbf{w}$ real-valued, for now), a neuron's total input $\zeta := \mathbf{w}\cdot\mathbf{z}$ no longer depends only on the firing rates of the input units and the strength of the synapses, but also on their *relative timing*. This naturally accounts for the earlier spiking neuron example: input states that are synchronous, i.e. have similar phases, result in a stronger total input, whereas less synchronous inputs result in a weaker total input (Figure 1d).

A straightforward way to define a neuron's output state $z_i = r_i e^{i\phi_i}$ from the (complex-valued) total input $\zeta$ is to apply an activation function $f:\mathbb{R}^+\to\mathbb{R}^+$ to the input's magnitude $|\zeta|$ to compute the output magnitude, and to set the output phase to the phase of the total input:

$$\phi_i = \arg(\zeta), \qquad r_i = f(|\zeta|), \qquad \text{where } \zeta = \mathbf{w}\cdot\mathbf{z}. \tag{1}$$

[^2]: Operations such as max-pooling require separate treatment. Also, bias parameters $b$ can be added to the inputs to control the intrinsic excitability of the neurons. We omit them for brevity.

[Figure 1 plots: (a) rate (s⁻¹) vs. time (s) for the two inputs, and voltage (mV) vs. time (s) for the output; (b) normalized output rate vs. Δφ (rad); (c) phase color wheel, 0 to 2π; (e) normalized output magnitude vs. Δφ (rad).]

Figure 1. Transmission of rhythmical activity, and corresponding model using complex-valued units.
(a) A Hodgkin–Huxley model neuron receives two rhythmic spike trains as input, plus background activity. The inputs are modeled as inhomogeneous Poisson processes modulated by sinusoidal rate functions (left; shown are rates and generated spikes), with identical frequencies but differing phases. The output of the neuron is itself rhythmical (right; plotted is the membrane potential).
(b) The neuron's output rate is modulated by the phase difference between the two inputs (rate averaged over 15 s runs).
(c) We represent the timing of maximal activity of a neuron as the phase of a complex number, corresponding to a direction in the complex plane. The firing rate is the magnitude of that complex number. Also shown is the color coding used to indicate phase throughout this paper (thus, figures should be viewed in color).
(d) The outputs of the input neurons are scaled by synaptic weights (numbers next to edges) and added in the complex plane. The phase of the resulting complex input determines the phase of the output neuron. The activation function $f$ is applied to the magnitude of the input to compute the output magnitude. Together, this models the influence of synchronous neuronal firing on a postsynaptic neuron.
(e) Output magnitude as a function of the phase difference of two inputs. With a second term added to a neuron's input, out-of-phase excitation never cancels out completely (see main text for details; curves are for $w_1 = w_2 > 0$, $|z_1| = |z_2|$). Compare to 1b.

Again, this is intuitive as a biological model, as the total strength and timing of the input determine the 'firing rate' and timing of the output, respectively.

There are, however, issues with this simple approach to modeling neuronal synchrony, which are problematic for the biological model but also, possibly, for the functional capabilities of a network. In analogy to the spiking neuron example, consider two inputs to a neuron that are excitatory (i.e., $w_1, w_2 > 0$) and furthermore of equal magnitude, $|w_1 z_1| = |w_2 z_2|$. While it is desirable that the net total input decreases if the two inputs are out of phase, in the complex-valued formulation the net input can actually be zero if the difference in input phases is $\pi$, no matter how strong the individual inputs are (Figure 1e, lower curve). Biologically, it seems unrealistic that strong excitatory input, even if not synchronized, would not excite a neuron.[^3]

[^3]: Arguably, refractory periods or network motifs such as disynaptic feedforward inhibition (Gabernet et al., 2005; Stanley, 2013) could indeed result in destructive interference of out-of-phase excitation.

Moreover, in the above formulation, the role of inhibition (i.e., connections with $w < 0$) has changed: inputs with negative weights are equivalent to excitatory inputs of the *opposite phase*, due to $-1 = e^{i\pi}$. Again, this is a desirable property that leads to desynchronization between neuronal groups, in line with biological models, as we will show below. However, it also means that inputs from connections with negative weights can, on their own, strongly drive a neuron; in that sense, there is no longer actual inhibition that always has a suppressive effect on neuronal outputs. Additionally, we found that the phase shifting caused by inhibition could result in instability in networks with dominant negative weights, leading to fast switching of the phase variables.

We introduce a simple fix for these issues, modifying how the output magnitude of a neuron is computed as follows:

$$r_i = f(|\zeta|) \;\longrightarrow\; r_i = f\!\left(\tfrac{1}{2}|\zeta| + \tfrac{1}{2}\chi\right), \qquad \text{where } \zeta = \mathbf{w}\cdot\mathbf{z}, \quad \chi := \mathbf{w}\cdot|\mathbf{z}|. \tag{2}$$

The first term, which we refer to as the *synchrony term*, is the same as before. The second, *classic term*, applies the weights to the magnitudes of the input units and thus does not depend on their phases; a network using only the classic terms reduces to its real-valued counterpart (we thus reuse the variable $\chi$, earlier denoting postsynaptic input in a real-valued network). The presence of the classic term implies that excitatory input always has a net excitatory component, even if the input neurons are out of phase such that the synchrony term is zero (thus matching the spiking neuron example,[^4] compare Figures 1b and 1e). Similarly, input from negative connections alone is never greater than zero. Lastly, this formulation also makes it possible to give different weightings to the synchrony and classic terms, thus controlling how much impact synchrony has on the network; we do not explore this possibility here.

[^4]: Real neuronal networks and realistic simulations have many degrees of freedom, hence we make no claim that our formulation is a general or quantitative model of neuronal interactions.

2.2. The functional relevance of synchrony

The advantage of using complex-valued neuronal units rather than, say, spiking neuron models is that it is natural to consider how to apply deep learning techniques and extend existing deep neural networks in this framework. Indeed, our experiments presented later are based on pretraining standard, real-valued nets (deep Boltzmann machines in this case) and converting them to complex-valued nets after training. In this section, we briefly describe how our framework lends itself to realizing two functional roles of synchrony as postulated by biological theories.

GROUPING (BINDING) BY SYNCHRONY

The activation of a real-valued unit in an artificial neural network can often be understood as signaling the presence of a feature or combination of features in the data. The phase of a complex-valued unit could provide additional information about the feature. *Binding by synchrony* theories (Singer, 2007) postulate that neurons in the brain dynamically form synchronous assemblies to signal which parts of a distributed representation together correspond to coherent sensory entities. For example, different objects in a visual scene would correspond to different synchronous assemblies in visual cortex. In our formulation, phases can analogously signal a soft assignment to different assemblies.

Importantly, communication with complex-valued messages also naturally leads to different synchronous groups emerging: for excitatory connections, messages that 'agree' (Zemel et al., 1995) in their phases prevail over those that do not; inhibitory messages, on the other hand, equate to excitatory messages of opposite phases, and thus encourage desynchronization between neurons. For comparison, consider the more realistic spiking model of visual cortex of Miconi & VanRullen (2010), where synchronous groups arise from a similar interaction between excitation and inhibition. That these interactions can indeed lead to meaningful groupings of neuronal representations in deep networks will be shown empirically in Section 3.

Figure 2. Gating of interactions. Out-of-phase input, when combined with a stronger input, is weakened. In this example, with $\Delta\phi = \pi$ and as long as $|\mathbf{w}_1\cdot\mathbf{z}_1| > |w_2 z_2|$, the effective input from the neuron to the right is zero, for any input strength (the classic and synchrony term contributions cancel, bottom panel). Hence, neuronal groups with different phases (gradually) decouple.

DYNAMIC GATING OF INTERACTIONS AND INFORMATION FLOW

Because synchrony affects which neuronal messages are transmitted preferentially, it has also been postulated that synchrony may gate information flow dynamically depending on the sensory input, the current network state and top-down control (Stanley, 2013), as well as modulate the effective interactions between cortical areas depending on their level of coherence (Fries, 2005; Benchenane et al., 2011). A similar modulation of interactions can be reproduced in our framework. Consider an example scenario (Figure 2) where a neuron is a member of a synchronous assembly, receiving excitatory inputs $\mathbf{w}_1\cdot\mathbf{z}_1$ from neurons that all have similar phases. Now consider the effect of adding another neuron that also provides excitatory input, $w_2 z_2$, but of a different phase, and assume that $|\mathbf{w}_1\cdot\mathbf{z}_1| > |w_2 z_2|$ (i.e. the input of the first group dominates). The net effect of this additional input again depends on the phase difference. In particular, if the phase difference is maximal ($\pi$), the net contribution from the second neuron turns out to be *zero*.
The output magnitude is computed as in Eq. 2, taking both synchrony and classic terms into account. In the complex plane, $w_2 z_2$ is antiparallel to $\mathbf{w}_1\cdot\mathbf{z}_1$, thus the synchrony term is reduced by $|w_2 z_2|$. However, this reduction is exactly canceled out by the classic term contribution from the second input (Figure 2, lower panel). There is also no effect on the output phase, as the phase of the total input remains equal to the phase of $\mathbf{w}_1\cdot\mathbf{z}_1$. Analogous reasoning applies to inhibitory connections. Thus, the effective connectivity between neuronal units is modulated by the units' phases, which themselves are a result of network interactions. In particular, if inference results in neurons being segregated into different assemblies (ideally because they represent independent causes in the sensory input, or independent regions in an image), existing connections between groups are weakened.

3. Experiments: the case of binding by synchrony

In this section, we support our reasoning with several simple experiments, and further elucidate the possible roles of synchrony. We focus on the binding aspect.

All experiments were based on pretraining networks as normal, real-valued deep Boltzmann machines (DBMs, Salakhutdinov & Hinton, 2009). DBMs are multi-layer networks that are framed as probabilistic (undirected graphical) models. The visible units make up the first layer and are set according to data, e.g. images. Several hidden layers learn internal representations of the data, from which they can generate the latter by sampling the visible units. By definition, in a DBM there are only (symmetric) connections between adjacent layers and no connections within a layer. Given the inputs from adjacent layers, a unit's state is updated stochastically with a probability given by a sigmoid (logistic) activation function (implementing Gibbs sampling).
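For reference, the real-valued update that the pretrained networks start from can be sketched in a few lines. The Python below is a minimal illustration, not the Pylearn2 code used for the experiments: it assumes dense nested-list weights, a per-unit bias and hypothetical function names (`layer_probabilities`, `gibbs_update`). It computes the logistic conditional probability for a middle DBM layer given the layers below and above (connections are symmetric, so the top-down input reuses the weights that connect this layer to the layer above) and then samples binary states.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def layer_probabilities(below, W_below, above, W_above, bias):
    """P(h_j = 1 | adjacent layers) for a middle DBM layer.

    W_below[i][j] connects unit i of the layer below to unit j of this layer.
    W_above[j][k] connects unit j of this layer to unit k of the layer above,
    so the top-down input to unit j is sum_k W_above[j][k] * above[k].
    """
    probs = []
    for j in range(len(bias)):
        bottom_up = sum(below[i] * W_below[i][j] for i in range(len(below)))
        top_down = sum(W_above[j][k] * above[k] for k in range(len(above)))
        probs.append(sigmoid(bottom_up + top_down + bias[j]))
    return probs


def gibbs_update(probs, rng):
    """Sample binary states; mean-field inference would keep probs instead."""
    return [1 if rng.random() < p else 0 for p in probs]


# Toy example: 3 visible units, 2 units in hidden layer 1, 3 in hidden layer 2.
rng = random.Random(0)
v = [1, 0, 1]
W1 = [[0.5, -0.2], [0.1, 0.3], [-0.4, 0.8]]
h2 = [1, 0, 1]
W2 = [[0.2, -0.1, 0.0], [0.3, 0.4, -0.5]]
b = [0.0, -0.1]
p = layer_probabilities(v, W1, h2, W2, b)
h1 = gibbs_update(p, rng)
```

Both units in this toy example receive a net input of 0.3, so each is switched on with probability of roughly 0.57; the complex-valued conversion described next replaces this scalar input with the synchrony and classic terms of Eq. 2.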
Training was carried out layer-wise with standard methods including contrastive divergence (for model and training details, see Appendix B). Training and experiments were implemented within the Pylearn2 framework of Goodfellow et al. (2013b).

We emphasize, however, that our framework is not specific to DBMs, but can in principle be adapted to various deep learning approaches (we are currently experimenting with networks trained as autoencoders or convolutional networks). The learning and inference procedures of a DBM derive from its definition as a probabilistic model, but for our purpose here it is more appropriate to simply think of a DBM as a multi-layer recurrent neural network (cf. Goodfellow et al., 2013a) with a logistic activation function;[^5] we can demonstrate how our framework works by taking the pretrained network, introducing complex-valued unit states and applying the activation function to magnitudes as described in Section 2.1.[^6] However, developing a principled probabilistic model based on Boltzmann machines to use with our framework is possible as well (Section 4). This conversion procedure applied to real-valued networks offers a simple method of exploring aspects of synchrony, but there is no guarantee that it will work (for additional discussion, see Appendix B). We use it here to show what the functional roles of synchrony could be in principle; learning with synchrony will be required to move beyond simple experiments (Section 4).

[^5]: The activation function is stochastic in the case of Gibbs sampling, deterministic in the case of mean-field inference.

Throughout the experiments, we clamped the magnitudes of the visible units according to (binary) input images, which were not seen during training, and let the network infer the hidden states over multiple iterations. The phases of the visible layer were initialized randomly and then determined by the input from the hidden layer above.
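The conversion and inference loop described above can be sketched for the one-hidden-layer case. This is a hedged illustration under our own assumptions, not the authors' implementation: real weights `W` from a pretrained RBM are reused unchanged, hidden units follow Eq. 2 with a logistic $f$ applied deterministically (mean-field style), the hidden bias is added to the argument of $f$ (our choice of placement), visible magnitudes stay clamped to a binary image, and visible phases start random and are then taken from the top-down input. The names `complex_unit` and `run_converted_rbm` are hypothetical.

```python
import cmath
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def complex_unit(weights, states, bias=0.0):
    """Eq. 2 with f = logistic sigmoid; adding the bias inside f is an assumption."""
    zeta = sum(w * z for w, z in zip(weights, states))
    chi = sum(w * abs(z) for w, z in zip(weights, states))
    return cmath.rect(sigmoid(0.5 * abs(zeta) + 0.5 * chi + bias), cmath.phase(zeta))


def run_converted_rbm(W, hid_bias, image, n_iter=100, seed=0):
    """Complex-valued inference in an RBM pretrained with real weights W.

    W[i][j] connects visible unit i to hidden unit j. Visible magnitudes stay
    clamped to the binary image; visible phases start random and are then
    taken from the top-down input arg(sum_j W[i][j] * h[j]).
    """
    rng = random.Random(seed)
    vis = [cmath.rect(px, rng.uniform(0.0, 2.0 * math.pi)) for px in image]
    hid = []
    for _ in range(n_iter):
        hid = [complex_unit([row[j] for row in W], vis, hid_bias[j])
               for j in range(len(hid_bias))]
        vis = [cmath.rect(px, cmath.phase(sum(w * h for w, h in zip(W[i], hid))))
               for i, px in enumerate(image)]
    return vis, hid


# Toy net: hidden unit 0 sees pixels 0-1, hidden unit 1 sees pixels 2-3.
W = [[2.0, 0.0], [2.0, 0.0], [0.0, 2.0], [0.0, 2.0]]
vis, hid = run_converted_rbm(W, [0.0, 0.0], [1, 1, 1, 1], n_iter=10)
```

In this toy network each pair of pixels ends up sharing the phase of the hidden unit that covers it, which is binding in its simplest form; the phases of the two pairs are set by the random initialization, since nothing couples them.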
Because the visible phases were initialized randomly, any synchronization observed was spontaneous.

[^6]: Our results were qualitatively similar whether we computed the output magnitudes stochastically or deterministically.

3.1. Dynamic binding of independent components in distributed representations

In this first experiment, we trained a DBM with one hidden layer (a restricted Boltzmann machine, Smolensky, 1986) on a version of the classic 'bars problem' (Földiák, 1990), where binary images are created by randomly drawing horizontal and vertical bars (Figure 3a). This dataset has classically been used to test whether unsupervised learning algorithms can find the independent components that constitute the image, by learning to represent the individual bars (though simple, the bars problem is still occasionally employed, e.g. Lücke & Sahani, 2008; Spratling, 2011). We chose this dataset specifically to elucidate the role of synchrony in the context of distributed representations.

We hard-coded the receptive field sizes (regions with non-zero weights to the input) of the hidden units to be restricted to regions smaller than the entire image (but together tiling the whole image). By necessity, this implies that no individual unit can fully represent a full-length bar, in the sense that the unit's weights correspond to the bar, or that one can read out the presence of the full bar from this unit's state alone. However, this does not imply that the full network cannot learn that the images are constituted by bars (as long as receptive fields overlap). For example, we found that when sampling from the model (activating hidden and visible units freely), the resulting images contained full-length bars most of the time (see supplementary figure S1a and supplementary videos, Appendix A); similarly, the network would fill in the remainder of a bar when the visible units were clamped to a part of it.

After conversion to a complex-valued network, the model was run on input images for 100 iterations each. Results are plotted in Figure 3b, depicting both visible and hidden states for one input image (further examples in Figure S1b). We found that visible neurons along a bar would often synchronize to the same phase (except where bars crossed), whereas different bars tended to have different phase values. Figure 3c shows a histogram of phase values in the visible layer for this example image, with clear peaks corresponding to the phases of the bars. Such synchronization was also found in the hidden layer units (Figure 3b).

Figure 3. Binding by synchrony in shallow, distributed representations.
(a) Each image of our version of the bars problem contained 6 vertical and 6 horizontal bars at random positions.
(b) A restricted Boltzmann machine was trained on bars images and then converted to a complex-valued network. The magnitudes of the visible units were clamped according to the input image (bottom left), whereas the hidden units and phases of the visible units were activated freely. After 100 iterations, units representing the various bars were found to have synchronized (right; the phases are color-coded for units that are active; black means a unit is off). The neurons synchronized even though receptive fields of the hidden units were constrained to be smaller than the bars. Thus, binding by synchrony could make the 'independent components' of sensory data explicit in distributed representations, in particular when no single neuron can possibly represent a component (a full-length bar) on its own.
(c) Histogram of the unit phases in the visible layer for the example shown in (b).

Based on these results, we make three points. First, the results show that our formulation indeed allows neurons to dynamically organize into meaningful synchronous assemblies, even without supervision towards what neurons should synchronize to, e.g. by providing phase targets in training; here, synchrony was not used in training at all. That the conversion from a real-valued network can work suggests that an unsupervised or semi-supervised approach to learning with synchrony could be successful, as long as synchrony benefits the task at hand.

Second, synchronization of visible and hidden units, which together represent individual bars, can occur for neurons several synapses apart. At the same time, not all bars synchronized to different phases. The number of distinct, stable phase groups that can be formed is likely to be limited. Notably, it has been argued that this aspect of synchrony coding explains certain capacity limits in cognition (Jensen & Lisman, 2005; Fell & Axmacher, 2011).

The third point relates to the nature of distributed representations. For the bars problem, whether a neural net (or probabilistic model) discovers the bars is usually evaluated by examining whether individual units correspond to individual bars, as can be seen by inspecting the weights or by probing the response properties of individual neurons (e.g. Lücke & Sahani, 2008; Spratling, 2011). A similar 'localist' approach was taken in recent attempts to make sense of the somewhat opaque hidden representations learned by deep networks (for example, the neurons that discovered the 'concept' of a cat through unsupervised learning, Le et al., 2011, or the analysis of convolutional networks by Zeiler & Fergus, 2013). In our experiment, it is not possible, by construction, to map individual neurons to the image constituents; bars could only be represented in a distributed fashion. Synchrony could make explicit which neurons together represent a sensory entity, e.g. for a readout (more on that below), as well as offer a mechanism that establishes the grouping in the first place.

3.2. Binding in deep networks

To examine the effects of synchrony in deeper networks, we trained a DBM with three hidden layers on another dataset, consisting of binary images that contained both four 'corners' arranged in a square shape (centered at random positions) and four independently drawn corners (Figure 4a). Receptive field sizes in the first hidden layer were chosen such that the fields would only cover individual corners, not the whole square arrangements, making it impossible for the first hidden layer to discover the latter during training.[^7] Receptive field sizes were larger in higher layers, with the topmost hidden layer being fully connected.

[^7]: Note that there was only layer-wise pretraining, no training of the full DBM, thus first-layer representations were not influenced by higher layers during training either.

After converting the net to complex values, we found that the four corners arranged as a square would often synchronize to one phase, whereas the other, independent corners would assume one or multiple phases different from the phase of the square (Figure 4b; more examples in Figure S1c). Synchronization was also clear in the hidden layers.

Figure 4. Binding by synchrony in a deep network.
(a) Each image contained four corners arranged in a square shape, and four randomly positioned corners.
(b) The four corners arranged in a square were usually found to synchronize. The synchronization of the corresponding hidden units is also clearly visible in the hidden layers. The receptive field sizes in the first hidden layer were too small for a hidden unit to 'see' more than individual corners. Hence, the synchronization of the neurons representing the square in the first hidden and visible layers was due to feedback from higher layers (the topmost hidden layer had global connectivity).

Again we make several observations.
First, because of the restricted receptive fields, the synchronization of the units representing parts of the square in the visible layer and first hidden layer was necessarily due to top-down input from the higher layers. Whether or not a corner represented by a first-layer neuron was part of a larger whole was made explicit in the synchronous state. Second, this example also demonstrates that neurons need not synchronize through connected image regions, as was the case in the bars experiment. Lastly, note that, with or without synchrony, restricted receptive fields and topographic arrangement of hidden units in intermediate hidden layers make it possible to roughly identify which units participate in representing the same image content, by virtue of their position in the layers. This is no longer possible with the topmost, globally connected layer. By identifying hidden units in the topmost layer with visible units of similar phase, however, it becomes possible to establish a connection between the hidden units and what they are activated by in image space.

3.3. Reading out object representations via phase

With a final set of experiments, we demonstrate that individual synchrony assemblies can be selected on the basis of their phase, and their representational content accessed one group at a time. We trained on two additional datasets containing multiple simple objects: one with images of geometric toy shapes (triangles or squares, Figure 5a), with three randomly chosen instances per image, and a dataset where we combined handwritten digits from the commonly used MNIST dataset with the geometric shapes (Figure 5c).

As before, we found a tendency in the complex-valued network to synchronize individual objects in the image to distinct phases (Figure 5b, d; Figure S1d, e). After a network was run for a fixed number of steps, the units in each layer were clustered according to their activity vectors in the complex plane.
For clustering, we assumed for simplicity that the number of objects was known in advance and used k-means, with k, the number of clusters, set to the number of objects plus one for a general background. In this fashion, each neuron was assigned to a cluster, and the assignments could be used to define masks to read out one representational component at a time.⁸ For the visible layer, we thus obtained segmented images as shown in Figures 5b, d. Especially for the modified MNIST images, the segmentations are often noisy. However, it is noteworthy that segmentations can be obtained at all, given that the network training involved no notion of segmentation. Moreover, binding by synchrony is more general than segmentation (in the sense of assigning labels to pixels), as it applies to all neurons in the network and, in principle, to arbitrarily abstract and non-visual forms of representation. Thus, units can also be selected in the hidden layers according to phase. The phase-masked representations could, for instance, be used for classification, one object at a time. We can also decode what these representations correspond to in image space. To this end, we took the masked states for each cluster (treating the other states as being zero⁹) and used a simple decoding procedure as described by Reichert et al. (2010) and Reichert (2012), performing a single deterministic top-down pass in the network (with doubled weights) to obtain a reconstructed image. See Figure 6 for an example. Though the images decoded in this fashion are somewhat noisy, it is apparent that the higher-layer units do indeed represent the same individual objects as the visible-layer units that have assumed the same phase (in cases where objects are separated well).
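The clustering, masking and decoding steps just described can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes each layer's state is a flat list of complex numbers, runs plain k-means in the complex plane with k = number of objects + 1 as in the text (the farthest-point initialisation is our own choice), and builds one masked copy of the layer per cluster with all other units set to zero. The helper `decode_top_down` is hypothetical as well: it propagates unit magnitudes through one deterministic sigmoid top-down pass with every weight doubled, which is only one possible reading of the decoding procedure cited above, and its weight layout is an assumption.

```python
import cmath
import math
import random


def kmeans_complex(states, k, n_iter=100, seed=0):
    """k-means on complex unit states, i.e. on points in the complex plane.

    Returns (labels, centroids). Units that are off (magnitude near zero)
    tend to gather around the origin, forming the 'background' cluster.
    """
    rng = random.Random(seed)
    # Farthest-point initialisation from a randomly chosen (seeded) unit.
    centroids = [states[rng.randrange(len(states))]]
    while len(centroids) < k:
        centroids.append(max(states, key=lambda z: min(abs(z - c) for c in centroids)))
    labels = []
    for _ in range(n_iter):
        # Assignment step: nearest centroid by distance in the complex plane.
        labels = [min(range(k), key=lambda j: abs(z - centroids[j])) for z in states]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for j in range(k):
            members = [z for z, lab in zip(states, labels) if lab == j]
            new_centroids.append(sum(members) / len(members) if members else centroids[j])
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return labels, centroids


def phase_masks(states, labels, k):
    """One masked copy of the layer per cluster; all other units are set to zero."""
    return [[z if lab == j else 0j for z, lab in zip(states, labels)] for j in range(k)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def decode_top_down(top_magnitudes, weights, biases):
    """Single deterministic top-down pass with doubled weights (hypothetical layout).

    weights[l][i][j] connects unit i of layer l to unit j of layer l + 1
    (layer 0 = visible); biases[l] holds the biases of layer l.
    Only magnitudes are propagated; phases are discarded.
    """
    activity = list(top_magnitudes)
    for W, b in zip(reversed(weights), reversed(biases[:-1])):
        activity = [sigmoid(b_i + 2.0 * sum(w * a for w, a in zip(row, activity)))
                    for row, b_i in zip(W, b)]
    return activity


# Toy layer: two units for each of three objects at distinct phases, plus two off units.
phases = [0.0, 2 * math.pi / 3, 4 * math.pi / 3]
states = [cmath.rect(1.0, p) for p in phases for _ in range(2)] + [0j, 0j]
k = len(phases) + 1                       # objects + background
labels, _ = kmeans_complex(states, k)
masks = phase_masks(states, labels, k)    # masks[j]: the layer restricted to cluster j
```

To decode from hidden layer l rather than from the top, one would pass the magnitudes of that layer's mask together with `weights[:l]` and `biases[:l + 1]`. Footnote 8 mentions an alternative to k-means (peaks in the phase histogram) that would allow overlapping clusters; the sketch does not cover it.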
⁸ Alternatively, peaks could be selected in the phase histogram of a layer and units masked according to their distance to the peaks, allowing for overlapping clusters.

⁹ This only works in a network where units signal the presence of image content by being on and not by being off, so that setting other units to zero has the effect of removing image content. This can be achieved with inductive biases such as sparsity being applied during training; see the discussion of Reichert et al. (2011).

Figure 5. Simple segmentation from phases. (a) The 3-shapes set consisted of binary images each containing three simple geometric shapes (square, triangle, rotated triangle). (b) Visible states after synchronization (left), and segmented images (right). (c) For each image in the MNIST+shape dataset, an MNIST digit and a shape were each drawn with probability 0.8. (d) Analogous to (b).

Figure 6. Using phase to access and decode internal object representations. (Panel labels: input, phase mask, decode; phase axis from 0 to 2π.) By selecting subsets of neurons according to their phase (e.g. through clustering), representations of each object can be read out one by one (right-hand side). For the hidden layers, the plots show images decoded from each of the synchronous subpopulations, using a simple decoding procedure (see main text).

Earlier, we discussed gating by synchrony as it arises from the effect that synchrony has directly on network interactions. Explicitly selecting individual synchrony assemblies for further processing, as done here, is another potential form of gating by synchrony. In the brain, some cortical regions, such as prefrontal cortex, are highly interconnected with the rest of the cortex and implement functions such as executive control and working memory that demand flexible usage of capacity-limited resources according to context and task demands.
Coherence of cortical activity and synchrony have been suggested to play a causal role in establishing dynamic routing between these areas (e.g. Benchenane et al., 2011; Miller & Buschman, 2013). Similarly, attentional processing has been hypothesized to emerge from a dynamically changing, globally coherent state across the cortex (e.g. Duncan et al., 1997; Miller & Buschman, 2013). It is possible that there are dedicated structures in the brain, such as the pulvinar in the thalamus, that coordinate cross-cortical processing and cortical rhythms (e.g. Shipp, 2003; Saalmann et al., 2012). In our model, one could interpret selecting synchrony assemblies as prefrontal areas reading out subsets of neuronal populations as demanded by the task. Through binding by synchrony, such subsets could be defined dynamically across many different cortical areas (or, in our model, at least across several layers of a feature hierarchy).

4. Discussion

We argue that extending neural networks beyond real-valued units could allow for richer representations of sensory input. Such an extension could be motivated by the fact that the brain supports such richer coding, at least in principle. More specifically, we explored the notion of neuronal synchrony in deep networks. We motivated the hypothetical functional roles of synchrony from biological theories, introduced a formulation based on complex-valued units, showed how the formulation relates to the biological phenomenon, and examined its potential in simple experiments. Neuronal synchrony could be a versatile mechanism that supports various functions, from gating or modulating neuronal interactions to establishing and signaling semantic grouping of neuronal representations.
In the latter case, synchrony realizes a form of soft constraint on neuronal representations, imposing that the sensory world should be organized according to distinct perceptual entities such as objects. Unfortunately, this melding of various functional roles might make it more difficult to treat the synchrony mechanism in principled theoretical terms.

The formulation we introduced is motivated in part by its being interpretable in a biological model. It can be understood as a description of neurons that fire rhythmically, and/or in relation to a global network rhythm. Other aspects of spike timing could be functionally relevant,¹⁰ but the complex-valued formulation can be seen as a step beyond current, 'static firing rate' neural networks. The formulation also has the advantage of making it possible to explore synchrony in converted pretrained real-valued networks (without the addition of the classic term in Eq. 2, the qualitative change of excitation and inhibition is detrimental to this approach). However, for machine learning applications, various alternative formulations would be worth exploring (different weights in synchrony and classic terms, complex-valued weights, etc.).

We presented our simulation results in terms of representative examples. We did not provide a quantitative analysis, simply because we do not claim that the current, simple approach would, at this point, compete with, for example, a dedicated segmentation algorithm. In particular, we found that the conversion from arbitrary real-valued nets did not consistently lead to favorable results (we provide additional comments in Appendix B). Our aim with this paper is to demonstrate to the deep learning community the synchrony concept, how it could be implemented, and what functions it could fulfill in principle.
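The contrast between synchrony and classic terms can be illustrated with a small sketch. Since Eq. 2 is not reproduced in this section, the following is an assumption for illustration only: a unit's drive is taken to be an equally weighted sum of a synchrony term |Σ_j w_j z_j| and a classic term Σ_j w_j |z_j|; its output magnitude is a sigmoid f of that drive, either used directly or sampled as a binary value (the two procedures described in Appendix C); and its output phase is the phase of the total complex input Σ_j w_j z_j. The function names and the 0.5/0.5 weighting are hypothetical. The final lines show one way the synchrony term alone changes the qualitative roles of excitation and inhibition: an inhibitory input arriving in antiphase with an excitatory one adds to the drive instead of cancelling it.

```python
import cmath
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def input_terms(weights, states):
    """Return (synchrony term, classic term, phase of the total input).

    weights: real weights w_j (positive = excitatory, negative = inhibitory)
    states:  complex presynaptic states z_j = r_j * exp(i * phi_j)
    """
    total = sum(w * z for w, z in zip(weights, states))        # sum_j w_j z_j
    synchrony = abs(total)                                      # phase-sensitive
    classic = sum(w * abs(z) for w, z in zip(weights, states))  # phase-blind
    return synchrony, classic, cmath.phase(total)


def update_unit(weights, states, bias=0.0, w_sync=0.5, w_classic=0.5,
                stochastic=False, rng=random):
    """One hypothetical complex-valued unit update.

    Magnitude: f(drive) = sigmoid(drive), used directly (deterministic) or as
    the probability of a binary 'on' state (stochastic).
    Phase: the phase of the total postsynaptic input.
    """
    synchrony, classic, phase = input_terms(weights, states)
    p_on = sigmoid(w_sync * synchrony + w_classic * classic + bias)
    if stochastic:
        magnitude = 1.0 if rng.random() < p_on else 0.0
    else:
        magnitude = p_on
    return cmath.rect(magnitude, phase)


in_phase = [cmath.rect(1.0, 0.0), cmath.rect(1.0, 0.0)]
anti_phase = [cmath.rect(1.0, 0.0), cmath.rect(1.0, math.pi)]
# Two excitatory inputs: in phase they add in both terms ...
print(input_terms([1.0, 1.0], in_phase)[:2])     # approx (2.0, 2.0)
# ... in antiphase they cancel in the synchrony term only.
print(input_terms([1.0, 1.0], anti_phase)[:2])   # approx (0.0, 2.0)
# Excitatory plus inhibitory input in antiphase: the classic term sees net
# zero, while the synchrony term alone would treat the pair as strong drive.
print(input_terms([1.0, -1.0], anti_phase)[:2])  # approx (2.0, 0.0)
```

Keeping the classic term in the drive, as in this sketch, means inhibitory weights learned in the real-valued network still reduce a unit's activity regardless of phase, which is one way to read the remark about converting pretrained networks.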
To find out whether synchrony is useful in real applications, it is necessary to develop appropriate learning algorithms. We address learning in the context of related work in the following.

¹⁰ Consider, for instance, the tempotron neuron model (Gütig & Sompolinsky, 2006), which learns to recognize spike patterns.

¹¹ Also of interest is the work of Cadieu & Olshausen (2011), who use a complex-valued formulation to separate out motion and form in a generative model of natural movies. However, they make no connection to neuronal synchrony.

We are aware of a few examples of prior work employing complex-valued neural networks with the interpretation of neuronal synchrony.¹¹ Zemel et al. (1995) introduced the 'directional unit Boltzmann machine' (DUBM), an extension of Boltzmann machines to complex weights and states (on the unit circle). A related approach is used by Mozer et al. (1992) to model binding by synchrony in vision, performing phase-based segmentation of simple geometric contours, and by Behrmann et al. (1998) to model aspects of object-based attention. The DUBM is a principled probabilistic framework, within which a complex-valued extension of Hebbian learning can be derived, with potentially interesting functional implications and biological interpretations in terms of Spike Timing Dependent Plasticity (Sjöström & Gerstner, 2010). For our purposes, the DUBM energy function could be extended to include synchrony and classic terms (Eq. 2), if desired, and to allow the units to switch off (rather than being constrained to the unit circle), e.g. with a 'spike and slab' formulation (Courville et al., 2011; Kivinen & Williams, 2011). Performing mean-field inference in the resulting model should be qualitatively similar to running the networks used in our work here. The original DUBM applications were limited to simple data, shallow architectures, and supervised training (input phases were provided).
It would be worthwhile to reexamine the approach in the context of recent deep learning developments; however, training Boltzmann machines successfully is not straightforward, and it is not clear whether approximate training methods such as contrastive divergence (Hinton, 2002; 2010) can be translated successfully to the complex-valued case.

Weber & Wermter (2005) briefly describe a complex-valued neural network for image segmentation, modeling synchrony mediated by lateral interactions in primary visual cortex, though the results and analysis presented are perhaps too limited to conclude much about their approach.

A model of binding by synchrony in a multi-layer network is proposed by Rao et al. (2008) and Rao & Cecchi (2010; 2011). There, both neuronal dynamics and weight learning are derived from optimizing an objective function (as in sparse coding; Olshausen & Field, 1997). The resulting formulation is actually similar to ours in several respects, as are the underlying motivation and analysis. We became aware of this work after having developed our approach. Our work is complementary in several regards: our goal is to provide a broader perspective on how synchrony could be used in neural networks, rather than to propose one particular model; we performed a different set of experiments and conceptual analyses (for example, Rao and colleagues do not address the gating aspect of synchrony); Rao et al.'s approach relied on the model seeing only individual objects during training, which we showed to be unnecessary; and lastly, even though they applied synchrony during learning, the dataset they used for their experiments is, arguably, even simpler than our datasets. Thus, it remains to be tested whether their particular model formulation is ideal.

Finally, we are currently also exploring training synchrony networks with backpropagation.
Even a feed-forward network could potentially benefit from synchrony, as the latter could carry information about sensory input and network state (Geman, 2006), though complex-valued weights may be necessary for detecting synchrony patterns. Alternatively, to allow for dynamic binding by synchrony, a network could be trained as a recurrent network with backpropagation through time (Rumelhart et al., 1985), given appropriate input data and cost functions. In our experiments, the number of iterations required was on the order of tens or hundreds, making such training challenging. Again, complex-valued weights could be beneficial in establishing synchrony assemblies more rapidly.

Note: ICLR has an open review format and allows for papers to be updated. We address some issues raised by the reviewers in Appendix C.

Acknowledgements

We thank Nicolas Heess, Christopher K.I. Williams, Michael J. Frank, David A. Mély, and Ian J. Goodfellow for helpful feedback on earlier versions of this work. We would also like to thank Elie Bienenstock and Stuart Geman for insightful discussions which motivated this project. This work was supported by ONR (N000141110743) and an NSF early career award (IIS-1252951). DPR was supported by a fellowship within the Postdoc-Programme of the German Academic Exchange Service (DAAD). Additional support was provided by the Robert J. and Nancy D. Carney Fund for Scientific Innovation, the Brown Institute for Brain Sciences (BIBS), the Center for Vision Research (CVR) and the Center for Computation and Visualization (CCV).

Appendix

A. Supplementary figures and videos

Additional outcome examples from the various experiments are shown in Figure S1 (as referenced in the main text).
We also provide the following supplementary videos on arXiv: a sample being generated from a model trained on the bars problem (bars_sample_movie.mp4), an example of the synchronization process in the visible and hidden layers on a bars image (bars_synch_movie.mp4), and several examples of visible-layer synchronization for the 3-shapes and MNIST+shape datasets (3shapes_synch_movie.mp4 and MNIST_1_shape_synch_movie.mp4, respectively).

B. Model and simulation parameters

Training was implemented within the Pylearn2 framework of Goodfellow et al. (2013b). All networks were trained as real-valued deep Boltzmann machines, using layer-wise training. Layers were trained with 60 epochs of 1-step contrastive divergence (Hinton, 2002; learning rate 0.1, momentum 0.5, weight decay 10⁻⁴; see Hinton, 2010, for an explanation of these training aspects), with the exception of the model trained on MNIST+shape, where 5-step persistent contrastive divergence (Tieleman, 2008) was used instead (learning rate 0.005, with an exponential decay factor of 1 + 1.5 × 10⁻⁵). All datasets had 60,000 training images, and were divided into mini-batches of size 100. Biases were initialized to −4 to encourage sparse representations (for reasons discussed by Reichert et al., 2011). Initial weights were drawn randomly from a uniform distribution with support [−0.05, 0.05].

The number of hidden layers, number of hidden units, and sizes of the receptive fields were varied from experiment to experiment to demonstrate various properties of neuronal synchronization in the networks (after conversion to complex values). The specific numbers were chosen mostly to be in line with earlier work and are not of importance.
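For readers unfamiliar with these settings, the following is a minimal pure-Python sketch of CD-1 training for one binary RBM layer with the hyperparameters listed above (learning rate 0.1, momentum 0.5, weight decay 10⁻⁴, biases initialized to −4, weights uniform in [−0.05, 0.05]), together with the learning-rate schedule of the MNIST+shape model. It is an illustration, not the Pylearn2 code that was actually used, and all names are hypothetical. Assumptions: the layer is fully connected rather than restricted to local receptive fields; the −4 initialization is applied to visible as well as hidden biases; the reconstruction uses visible probabilities; and the decay schedule divides the rate by 1 + 1.5 × 10⁻⁵ after every minibatch update.

```python
import math
import random

LEARNING_RATE = 0.1
MOMENTUM = 0.5
WEIGHT_DECAY = 1e-4
INIT_BIAS = -4.0     # encourages sparse hidden activity
INIT_RANGE = 0.05    # weights ~ Uniform(-0.05, 0.05)


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class RBM:
    """Fully connected binary RBM trained with CD-1, momentum and L2 weight decay."""

    def __init__(self, n_vis, n_hid, seed=0):
        self.rng = random.Random(seed)
        self.W = [[self.rng.uniform(-INIT_RANGE, INIT_RANGE) for _ in range(n_hid)]
                  for _ in range(n_vis)]
        self.b = [INIT_BIAS] * n_vis  # visible biases (-4 here is an assumption)
        self.c = [INIT_BIAS] * n_hid  # hidden biases
        # Momentum buffers: the previous increment of each parameter.
        self.dW = [[0.0] * n_hid for _ in range(n_vis)]
        self.db = [0.0] * n_vis
        self.dc = [0.0] * n_hid

    def p_h(self, v):
        return [sigmoid(self.c[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.c))]

    def p_v(self, h):
        return [sigmoid(self.b[i] + sum(h[j] * self.W[i][j] for j in range(len(h))))
                for i in range(len(self.b))]

    def cd1_update(self, batch):
        n_vis, n_hid, m = len(self.b), len(self.c), len(batch)
        gW = [[0.0] * n_hid for _ in range(n_vis)]
        gb, gc = [0.0] * n_vis, [0.0] * n_hid
        for v0 in batch:
            ph0 = self.p_h(v0)                                         # positive phase
            h0 = [1.0 if self.rng.random() < p else 0.0 for p in ph0]  # sample hiddens
            v1 = self.p_v(h0)                                          # reconstruction
            ph1 = self.p_h(v1)                                         # negative phase
            for i in range(n_vis):
                gb[i] += v0[i] - v1[i]
                for j in range(n_hid):
                    gW[i][j] += v0[i] * ph0[j] - v1[i] * ph1[j]
            for j in range(n_hid):
                gc[j] += ph0[j] - ph1[j]
        for i in range(n_vis):
            for j in range(n_hid):
                grad = gW[i][j] / m - WEIGHT_DECAY * self.W[i][j]  # decay on weights only
                self.dW[i][j] = MOMENTUM * self.dW[i][j] + LEARNING_RATE * grad
                self.W[i][j] += self.dW[i][j]
            self.db[i] = MOMENTUM * self.db[i] + LEARNING_RATE * gb[i] / m
            self.b[i] += self.db[i]
        for j in range(n_hid):
            self.dc[j] = MOMENTUM * self.dc[j] + LEARNING_RATE * gc[j] / m
            self.c[j] += self.dc[j]


def decayed_lr(step, lr0=0.005, factor=1 + 1.5e-5):
    """Learning rate after `step` minibatch updates (MNIST+shape PCD model)."""
    return lr0 / factor ** step


# 60,000 images in minibatches of 100 give 600 updates per epoch.
print(decayed_lr(60 * 600))
```

Under the per-update assumption, and if the MNIST+shape model also ran for 60 epochs (36,000 updates), its learning rate would have decayed from 0.005 to roughly 0.0029 by the end of training.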
In detail, the model architectures were as follows. For the bars problem (Section 3.1), input images were 20 × 20, and the restricted Boltzmann machine had one hidden layer with 14 × 14 × 3 units (14 height, 14 width, 3 units per location) and 7 × 7 receptive fields. For the corners dataset (Section 3.2), input images were 28 × 28, the three hidden layers had 22 × 22 × 2, 13 × 13 × 4, and 676 units, respectively, and receptive fields were 7 × 7, 10 × 10, and 13 × 13 (i.e., global in the last layer). For the 3-shapes dataset (Section 3.3), input images were 20 × 20, hidden layer dimensions 14 × 14 × 3, 8 × 8 × 10, and 676, and receptive fields 7 × 7, 7 × 7, and 8 × 8 (global). For the MNIST+shape data (also Section 3.3), input images were 28 × 28, hidden layer dimensions 22 × 22 × 2, 13 × 13 × 4, and 676, and receptive fields 7 × 7, 10 × 10, and 13 × 13 (global). For the synchronization figures, the number of steps to run was chosen so that synchronization was fairly stable at that point (100 steps was generally found to be sufficient for all models but the one trained on MNIST+shape images, where we chose 1000 steps).

Lastly, as mentioned in the main text, we note that the conversion of pretrained real-valued DBMs did not always lead to models exhibiting successful synchronization. Here, successful refers to the ability of the model to separately synchronize different objects in the input images. Unsuccessful setups resulted in either all visible units synchronizing to a single phase, or objects not synchronizing fully, across most of the images in a dataset. We found that whether or not a setup worked depended both on the dataset and on the training procedures used. The presented results are representative of well-performing networks. Proper synchronization is an outcome of the right balance of excitatory and inhibitory connectivity patterns.
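The layer sizes listed in the architecture paragraph above are consistent with 'valid' local connectivity at stride 1, where each layer's map shrinks by the receptive field size minus one; the same arithmetic gives each layer's receptive field measured in input pixels. The short sketch below makes both calculations explicit. It assumes square fields, stride 1 and no padding (none of which is stated explicitly), and its function names are hypothetical. For the corners model it shows that first-layer units see only 7 × 7 pixels, while the topmost layer covers the full 28 × 28 image.

```python
def map_sizes(input_size, fields):
    """Spatial size of each layer's map under 'valid' local connectivity, stride 1."""
    sizes, size = [], input_size
    for f in fields:
        size = size - f + 1
        sizes.append(size)
    return sizes


def input_fields(fields):
    """Receptive field of each layer measured in input pixels (stride 1)."""
    extents, extent = [], 1
    for f in fields:
        extent += f - 1
        extents.append(extent)
    return extents


# (input size, receptive field size of each hidden layer), as listed above.
ARCHITECTURES = {
    "bars": (20, [7]),
    "corners": (28, [7, 10, 13]),
    "3-shapes": (20, [7, 7, 8]),
    "MNIST+shape": (28, [7, 10, 13]),
}

for name, (size, fields) in ARCHITECTURES.items():
    print(name, map_sizes(size, fields), input_fields(fields))
# corners: maps [22, 13, 1], input fields [7, 16, 28]
```

A map size of 1 in the last layer corresponds to the globally connected topmost layer, whose 676 units all share a single location; the 22 × 22, 13 × 13, 14 × 14 and 8 × 8 maps match the dimensions listed above.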
Further analysis of how network parameters affect synchronization is the subject of ongoing work, as is incorporating synchronization during learning to achieve the desired synchronization behavior.

Supplementary figure S1. Additional results. (a) Samples generated from a restricted Boltzmann machine trained on the bars problem. The generated images consist mostly of full-length bars. The individual receptive fields in the hidden layer were constrained to image regions of smaller extent than the bars. Thus, bars were necessarily represented in a distributed fashion. (b)–(e) Additional examples of synchronized visible units for the various datasets. The magnitudes of the visible units were set according to the binary input images (not used in training); the phases were determined by input from the hidden units. See also the supplementary videos (http://arxiv.org/abs/1312.6115), and the main text for details.

C. Addressing issues raised by the reviewers

In the following, we summarize parts of the discussion of the ICLR review period, paraphrasing the comments of the ICLR reviewers. We expand several points that were only briefly covered in the main text.

1. In the bars experiment, some bars appear to share the same phase. Wouldn't a readout be confused and judge multiple bars to be the same object?

This is a very important issue that we are still considering. It is perhaps an issue more generally with the underlying biological theories rather than just with our specific approach. As we noted in the main text, some theories posit that a limit on how many discrete objects can be represented in an oscillation cycle, without interference, explains certain capacity limits in cognition.
The references we cited (Jensen & Lisman, 2005; Fell & Axmacher, 2011) refer to working memory as an example (often 4–7 items; note the number of peaks in Figure 3c, although this obviously needs more quantitative analysis). We would posit that, more generally, analysis of visual scenes requiring the concurrent separation of multiple objects is limited accordingly (one might call this a prediction, or a 'postdiction', of our model). The question is then: how does the brain cope with this limitation? As usual in the face of perceptual capacity limits, the solution would likely involve attentional mechanisms. Such mechanisms might dynamically change the grouping of sensory inputs depending on task and context, such as whether questions are asked about individual parts and fine detail, or about object groups and larger patterns. In the bars example, one might perceive the bars as a single group or texture, or focus on individual bars as capacity allows, perhaps relegating the rest of the image to a general background. Dynamically changing phase assignments according to context, through top-down attentional input, should, in principle, be possible within the proposed framework: this is similar to grouping according to parts or wholes with top-down input, as in the experiment of Section 3.2.

2. What about the overlaps of the bars? These areas seem to be mis- or ambiguously labeled.

This is more of a problem with the task itself being ill-defined on binary images, where an overlapping pixel cannot really be meaningfully said to belong to either object alone (as there is no occlusion as such). We plan to use (representations of) real-valued images in the future.

3. What are the contributions of this paper compared to the work of Rao et al.?

As we have acknowledged, the work of Rao et al. is similar in several points (we arrived at our framework and results independently).
We make additional contributions. First of all, to clarify the issue of training on multiple objects: in Rao et al.'s work, the training data consisted of a small number of fixed 8 × 8 pixel images (16 or fewer images in total for a dataset), containing simple patterns (one example has 4 small images with two faces instead). To demonstrate binding by synchrony, two of these patterns are superimposed at test time. We believe that going beyond this extremely constrained task, in particular showing that the binding can work when trained and tested on multiple objects, on multiple datasets including MNIST containing thousands of (if simple) images, is a valid contribution from our side. Our results also provide some insights into the nature of representations in a DBM trained on multiple objects.

Similarly, as far as we can see, Rao et al. do not discuss the gating aspect at all (Section 2.2), nor the specific issues with excitation and inhibition (Section 2.1) that we pointed out as motivation for using both classic and synchrony terms. Lastly, the following issues are addressed in our experiments only: network behavior on more than two objects; synchronization for objects that are not contiguous in the input images, as well as part vs. whole effects (Section 3.2); and decoding distributed hidden representations according to phase (Section 3.3). In particular, it seems to be the case that Rao et al.'s networks had a localist (single object ↔ single unit) representation in the top hidden layer in the majority of cases.

4. The introduction of phase is done in an ad-hoc way, without real justification from probabilistic goals.

We agree that framing our approach as a proper probabilistic model would be helpful (e.g. using an extension of the DUBM of Zemel et al., 1995, as discussed).
At the same time, there is value in presenting the heuristic as is, based on a specific neuronal activation function, to emphasize that this idea could find application in neural networks more generally, not only in those with a probabilistic interpretation or in Boltzmann machines (that our approach is divorced from any one particular model is another difference when compared to Rao et al.'s work). In particular, we have performed exploratory experiments with networks trained with backpropagation (pretrained as real-valued nets or trained as complex-valued nets), including (convolutional) feed-forward neural networks, autoencoders, and recurrent networks, as well as a biological model of lateral interactions in V1. A more rigorous mathematical and quantitative analysis is needed in any case.

5. How does running the complex-valued network relate to inference in the DBM?

We essentially use the normal DBM training as a form of pretraining for the final, complex-valued architecture. The resulting neural network should likely not be interpreted exactly as a probabilistic model. However, if such an interpretation is desired, our understanding is that running the network could be seen as an approximation of inference in a suitably extended DUBM (by adding an off state and a classic term; refer to Zemel et al., 1995, for comparison). For our experiments, we used two procedures (with similar outcomes) in analogy to inference in a DBM: either sampling a binary output magnitude from f(), or letting f() determine the output magnitude deterministically; the output phase was always set to the phase of the total postsynaptic input. The first procedure is similar to inference in such an extended DUBM, but, rather than sampling from a circular normal distribution on the unit circle when the unit is on, we simply take the mode of that distribution.
The second procedure should qualitatively correspond to mean-field inference in an extended DUBM (see Eqs. 9 and 10 in the DUBM paper), using a slightly different output function.

6. Do phases assigned to the input change when running for more iterations than what is shown?

Phase assignments appear to be stable (see the supplementary movies), though we did not analyze this in detail. It should also be noted that the overall network is invariant to absolute phase, so only the relative phases matter.

References

Aizenberg, I. & Moraga, C. (2007). Multilayer feedforward neural network based on multi-valued neurons (MLMVN) and a backpropagation learning algorithm. Soft Computing, 11(2):169–183.
Behrmann, M., Zemel, R.S., & Mozer, M.C. (1998). Object-based attention and occlusion: evidence from normal participants and a computational model. Journal of experimental psychology. Human perception and performance, 24(4):1011–1036. PMID: 9706708.
Benchenane, K., Tiesinga, P.H., & Battaglia, F.P. (2011). Oscillations in the prefrontal cortex: a gateway to memory and attention. Current Opinion in Neurobiology, 21(3):475–485.
Bengio, Y., Courville, A., & Vincent, P. (2012). Representation learning: A review and new perspectives. [cs].
Cadieu, C.F., Hong, H., Yamins, D., Pinto, N., Majaj, N.J., & DiCarlo, J.J. (2013). The neural representation benchmark and its evaluation on brain and machine.
Cadieu, C.F. & Olshausen, B.A. (2011). Learning intermediate-level representations of form and motion from natural movies. Neural Computation, 24(4):827–866.
Courville, A.C., Bergstra, J., & Bengio, Y. (2011). A spike and slab restricted Boltzmann machine. In International Conference on Artificial Intelligence and Statistics, pp. 233–241.
Crick, F. (1984). Function of the thalamic reticular complex: the searchlight hypothesis. Proceedings of the National Academy of Sciences, 81(14):4586–4590. PMID: 6589612.
Duncan, J., Humphreys, G., & Ward, R. (1997). Competitive brain activity in visual attention. Current Opinion in Neurobiology, 7(2):255–261.
Fell, J. & Axmacher, N. (2011). The role of phase synchronization in memory processes. Nature Reviews Neuroscience, 12(2):105–118.
Fiori, S. (2005). Nonlinear complex-valued extensions of Hebbian learning: An essay. Neural Computation, 17(4):779–838.
Fries, P. (2005). A mechanism for cognitive dynamics: neuronal communication through neuronal coherence. Trends in cognitive sciences, 9(10):474–480. PMID: 16150631.
Földiák, P. (1990). Forming sparse representations by local anti-Hebbian learning. Biological Cybernetics, 64(2):165–170.
Gabernet, L., Jadhav, S.P., Feldman, D.E., Carandini, M., & Scanziani, M. (2005). Somatosensory integration controlled by dynamic thalamocortical feed-forward inhibition. Neuron, 48(2):315–327.
Geman, S. (2006). Invariance and selectivity in the ventral visual pathway. Journal of Physiology-Paris, 100(4):212–224.
Goodfellow, I.J., Courville, A., & Bengio, Y. (2013a). Joint training deep Boltzmann machines for classification. arXiv:1301.3568.
Goodfellow, I.J., Warde-Farley, D., Lamblin, P., Dumoulin, V., Mirza, M., Pascanu, R., Bergstra, J., Bastien, F., & Bengio, Y. (2013b). Pylearn2: a machine learning research library. arXiv e-print 1308.4214.
Goodman, D.F.M. & Brette, R. (2009). The brian simulator. Frontiers in Neuroscience, 3(2):192–197. PMID: 20011141, PMCID: PMC2751620.
Gütig, R. & Sompolinsky, H. (2006). The tempotron: a neuron that learns spike timing–based decisions. Nature Neuroscience, 9(3):420–428.
Hinton, G.E. (2002). Training products of experts by minimizing contrastive divergence. Neural Computation, 14(8):1771–1800.
Hinton, G.E. (2010). A practical guide to training restricted Boltzmann machines. Technical report UTML TR 2010-003, Department of Computer Science, Machine Learning Group, University of Toronto.
Hirose, A. (2011). Nature of complex number and complex-valued neural networks. Frontiers of Electrical and Electronic Engineering in China, 6(1):171–180.
Jensen, O. & Lisman, J.E. (2005). Hippocampal sequence-encoding driven by a cortical multi-item working memory buffer. Trends in Neurosciences, 28(2):67–72.
Kim, T. & Adalı, T. (2003). Approximation by fully complex multilayer perceptrons. Neural Computation, 15(7):1641–1666.
Kivinen, J. & Williams, C. (2011). Transformation equivariant Boltzmann machines. In T. Honkela, W. Duch, M. Girolami, & S. Kaski, eds., Artificial Neural Networks and Machine Learning – ICANN 2011, vol. 6791 of Lecture Notes in Computer Science, pp. 1–9. Springer Berlin / Heidelberg.
Krizhevsky, A., Sutskever, I., & Hinton, G. (2012). ImageNet classification with deep convolutional neural networks. In P. Bartlett, F.C.N. Pereira, C.J.C. Burges, L. Bottou, & K.Q. Weinberger, eds., Advances in Neural Information Processing Systems 25, pp. 1106–1114.
Le, Q.V., Ranzato, M., Monga, R., Devin, M., Chen, K., Corrado, G.S., Dean, J., & Ng, A.Y. (2011). Building high-level features using large scale unsupervised learning. arXiv:1112.6209 [cs].
LeCun, Y., Boser, B., Denker, J.S., Henderson, D., Howard, R.E., Hubbard, W., & Jackel, L.D. (1989). Backpropagation applied to handwritten zip code recognition. Neural Computation, 1(4):541–551.
Lücke, J. & Sahani, M. (2008). Maximal causes for non-linear component extraction. The Journal of Machine Learning Research, 9:1227–1267.
Miconi, T. & VanRullen, R. (2010). The gamma slideshow: Object-based perceptual cycles in a model of the visual cortex. Frontiers in Human Neuroscience, 4. PMID: 21120147, PMCID: PMC2992033.
Miller, E.K. & Buschman, T.J. (2013). Cortical circuits for the control of attention. Current Opinion in Neurobiology, 23(2):216–222.
Mozer, M.C., Zemel, R.S., Behrmann, M., & Williams, C.K. (1992). Learning to segment images using dynamic feature binding. Neural Computation, 4(5):650–665.
Nitta, T. (2004). Orthogonality of decision boundaries in complex-valued neural networks. Neural Computation, 16(1):73–97.
Olshausen, B.A. & Field, D.J. (1997). Sparse coding with an overcomplete basis set: A strategy employed by V1? Vision Research, 37(23):3311–3325.
Rao, A.R. & Cecchi, G.A. (2010). An objective function utilizing complex sparsity for efficient segmentation in multi-layer oscillatory networks. International Journal of Intelligent Computing and Cybernetics, 3(2):173–206.
Rao, A. & Cecchi, G. (2011). The effects of feedback and lateral connections on perceptual processing: A study using oscillatory networks. In IJCNN 2011, pp. 1177–1184.
Rao, A., Cecchi, G., Peck, C., & Kozloski, J. (2008). Unsupervised segmentation with dynamical units. IEEE Transactions on Neural Networks, 19(1):168–182.
Ray, S. & Maunsell, J.H.R. (2010). Differences in gamma frequencies across visual cortex restrict their possible use in computation. Neuron, 67(5):885–896.
Reichert, D., Seriès, P., & Storkey, A. (2010). Hallucinations in Charles Bonnet syndrome induced by homeostasis: a deep Boltzmann machine model. In J. Lafferty, C.K.I. Williams, J. Shawe-Taylor, R.S. Zemel, & A. Culotta, eds., Advances in Neural Information Processing Systems 23, pp. 2020–2028.
Reichert, D.P. (2012). Deep Boltzmann machines as hierarchical generative models of perceptual inference in the cortex. PhD thesis, University of Edinburgh, Edinburgh, UK.
Reichert, D.P., Seriès, P., & Storkey, A.J. (2011). A hierarchical generative model of recurrent object-based attention in the visual cortex. In T. Honkela, W. Duch, M. Girolami, & S. Kaski, eds., Artificial Neural Networks and Machine Learning – ICANN 2011, vol. 6791, pp. 18–25. Springer Berlin Heidelberg, Berlin, Heidelberg.
Reichert, D.P., Seriès, P., & Storkey, A.J. (2013). Charles Bonnet syndrome: Evidence for a generative model in the cortex? PLoS Comput Biol, 9(7):e1003134.
Rumelhart, D.E., Hinton, G.E., & Williams, R.J. (1985). Learning internal representations by error propagation. Tech. rep.
Saalmann, Y.B., Pinsk, M.A., Wang, L., Li, X., & Kastner, S. (2012). The pulvinar regulates information transmission between cortical areas based on attention demands. Science, 337(6095):753–756.
Salakhutdinov, R. & Hinton, G. (2009). Deep Boltzmann machines. In Proceedings of the 12th International Conference on Artificial Intelligence and Statistics (AISTATS), vol. 5, pp. 448–455.
Savitha, R., Suresh, S., & Sundararajan, N. (2011). Metacognitive learning in a fully complex-valued radial basis function neural network. Neural Computation, 24(5):1297–1328.
Serre, T., Oliva, A., & Poggio, T. (2007). A feedforward architecture accounts for rapid categorization. Proceedings of the National Academy of Sciences, 104(15):6424–6429.
Shadlen, M.N. & Movshon, J.A. (1999). Synchrony unbound: a critical evaluation of the temporal binding hypothesis. Neuron, 24(1):67–77.
Shipp, S. (2003). The functional logic of cortico–pulvinar connections. Philosophical Transactions of the Royal Society of London. Series B: Biological Sciences, 358(1438):1605–1624. PMID: 14561322.
Singer, W. & Gray, C.M. (1995). Visual feature integration and the temporal correlation hypothesis. Annual review of neuroscience, 18:555–586. PMID: 7605074.
Singer, W. (2007). Binding by synchrony. Scholarpedia, 2(12):1657.
Sjöström, J. & Gerstner, W. (2010). Spike-timing dependent plasticity. Scholarpedia, 5(2):1362.
Smolensky, P. (1986). Information processing in dynamical systems: foundations of harmony theory. In Parallel distributed processing: explorations in the microstructure of cognition. Vol. 1. Foundations, pp. 194–281. MIT Press, Cambridge, MA.
Spratling, M.W. (2011). Unsupervised learning of generative and discriminative weights encoding elementary image components in a predictive coding model of cortical function. Neural Computation, 24(1):60–103.
Stanley, G.B. (2013). Reading and writing the neural code. Nature Neuroscience, 16(3):259–263.
Tieleman, T. (2008). Training restricted Boltzmann machines using approximations to the likelihood gradient. In Proceedings of the 25th Annual International Conference on Machine Learning, pp. 1064–1071. Helsinki, Finland.
Uhlhaas, P.J., Pipa, G., Lima, B., Melloni, L., Neuenschwander, S., Nikolić, D., & Singer, W. (2009). Neural synchrony in cortical networks: history, concept and current status. Frontiers in Integrative Neuroscience, 3:17.
von der Malsburg, C. (1981). The correlation theory of brain function. Tech. Rep. S1-2, Department of Neurobiology, MPI for Biophysical Chemistry, Goettingen, W.-Germany.
Weber, C. & Wermter, S. (2005). Image segmentation by complex-valued units. In W. Duch, J. Kacprzyk, E. Oja, & S. Zadrożny, eds., Artificial Neural Networks: Biological Inspirations – ICANN 2005, no. 3696 in Lecture Notes in Computer Science, pp. 519–524. Springer Berlin Heidelberg.
Zeiler, M.D. & Fergus, R. (2013). Visualizing and understanding convolutional networks. arXiv:1311.2901 [cs].
Zemel, R.S., Williams, C.K., & Mozer, M.C. (1995). Lending direction to neural networks. Neural Networks, 8(4):503–512.