Computer Vision Accelerators for Mobile Systems based on OpenCL GPGPU Co-Processing

In this paper, we present an OpenCL-based heterogeneous implementation of a computer vision algorithm, an image inpainting-based object removal algorithm, on mobile devices. To take advantage of the computation power of the mobile processor, the algorithm workflow is partitioned between the CPU and the GPU based on profiling results on mobile devices, so that the computationally intensive kernels are accelerated by the mobile GPGPU (general-purpose computing on graphics processing units). By exploring the implementation trade-offs and applying the proposed optimization strategies at different levels, including algorithm optimization, parallelism optimization, and memory access optimization, we significantly speed up the algorithm with the CPU-GPU heterogeneous implementation while preserving the quality of the output images. Experimental results show that heterogeneous computing based on GPGPU co-processing can significantly speed up computer vision algorithms and make them practical on real-world mobile devices.

💡 Research Summary

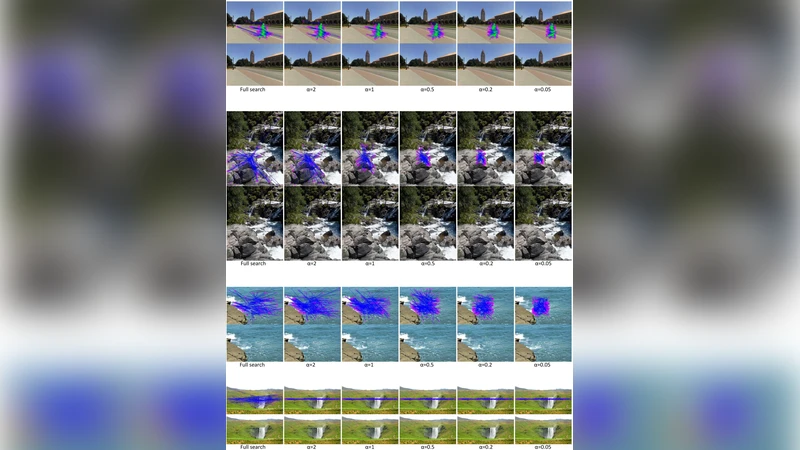

This paper presents a comprehensive study on accelerating a classic exemplar‑based image inpainting algorithm—commonly used for object removal—on modern mobile systems by leveraging OpenCL‑based heterogeneous computing. The authors target a Snapdragon 8974 platform that integrates a quad‑core Krait CPU and an Adreno 330 GPU sharing a unified system memory. Recognizing that the inpainting process is dominated by the “find‑best‑patch” step, which has a computational complexity of O(M N P²) for an M × N image with P × P patches, the work focuses on partitioning the algorithm between CPU and GPU and applying multi‑level optimizations to this kernel.
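To make the O(M N P²) cost concrete, the following is a minimal CPU reference sketch of an exhaustive best-patch search. It is not the authors' OpenCL kernel. It assumes a single-channel image stored as nested lists and a boolean `known` mask; the names `find_best_patch`, `known`, and `pixel_ops` are hypothetical and introduced only for illustration.

```python
def find_best_patch(image, known, tx, ty, p):
    """Exhaustively search for the source patch closest to the target patch.

    image : 2-D list of grayscale values (rows of pixels).
    known : 2-D list of booleans, False where pixels still need filling.
    tx, ty: top-left corner of the p x p target patch on the fill front.
    Returns ((x, y), ssd, pixel_ops), where pixel_ops counts the
    per-pixel work bound of the scan (candidate positions * p * p).
    """
    rows, cols = len(image), len(image[0])
    best_pos, best_cost, pixel_ops = None, None, 0
    for y in range(rows - p + 1):
        for x in range(cols - p + 1):
            pixel_ops += p * p
            # A candidate must lie entirely inside the known (source) region.
            if not all(known[y + dy][x + dx]
                       for dy in range(p) for dx in range(p)):
                continue
            # Sum of squared differences over the known target pixels only.
            cost = 0
            for dy in range(p):
                for dx in range(p):
                    if known[ty + dy][tx + dx]:
                        d = image[ty + dy][tx + dx] - image[y + dy][x + dx]
                        cost += d * d
            if best_cost is None or cost < best_cost:
                best_pos, best_cost = (x, y), cost
    return best_pos, best_cost, pixel_ops
```

Every candidate position costs up to P² pixel comparisons and there are roughly M × N positions, which is where the O(M N P²) bound comes from. Because each candidate is evaluated independently, the two outer loops are the natural place to map work onto GPU work-items.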

The paper first outlines the mobile SoC architecture and the OpenCL programming model, emphasizing the hierarchical memory (global, local, private) and the need to minimize costly global memory accesses on mobile GPUs. The exemplar‑based algorithm is broken down into three phases—initialization, iterative computation, and finalization—and eight OpenCL kernels are derived from the core functions. Profiling on the target device shows that the find‑best‑patch kernel consumes 97 % of total execution time, making it the primary optimization target.
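The 97 % figure also bounds what accelerating this one kernel can achieve. The sketch below applies Amdahl's law, assuming the 97 % is a fraction of the baseline runtime and that the remaining 3 % is not accelerated at all; the function names and the sample kernel speed-up of 10× are illustrative assumptions, not measurements from the paper.

```python
def overall_speedup(kernel_fraction, kernel_speedup):
    """Amdahl's law: whole-program speed-up when only one part is accelerated.

    kernel_fraction: share of baseline runtime spent in the kernel (0..1).
    kernel_speedup : factor by which that kernel alone gets faster.
    """
    serial_part = 1.0 - kernel_fraction
    return 1.0 / (serial_part + kernel_fraction / kernel_speedup)


def speedup_ceiling(kernel_fraction):
    """Limit of overall_speedup as the kernel speed-up grows without bound."""
    return 1.0 / (1.0 - kernel_fraction)


if __name__ == "__main__":
    # Hypothetical: the dominant kernel alone becomes 10x faster.
    print(round(overall_speedup(0.97, 10.0), 2))
    # Upper bound if the kernel cost dropped to zero.
    print(round(speedup_ceiling(0.97), 1))
```

Under these assumptions, no amount of tuning of the dominant kernel can push the whole pipeline past roughly 33×, because the remaining 3 % of the work still runs at its original speed.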

Three categories of optimizations are explored:

- Algorithmic optimizations – The search space is reduced by limiting candidate patches to the current fill front (δΩ) and by using the confidence map C(p) to prioritize patches. The SSD distance metric is computed in a SIMD‑friendly way, processing all three color channels simultaneously, and early‑exit heuristics prune unnecessary comparisons.

- Parallelism optimizations – Work‑groups are sized as two‑dimensional blocks (e.g., 16 × 16) to preserve spatial locality. Within each group, the current patch and its neighboring pixels are loaded into fast local memory, eliminating repeated global reads. The kernel is fully data‑parallel: each work‑item evaluates a distinct candidate patch, removing inter‑item dependencies and allowing the GPU to exploit its massive parallelism.

- Memory‑access optimizations – Global memory accesses are coalesced by arranging image data in a contiguous layout and using the OpenCL vector type `uchar4` to fetch four channels (RGBA) in a single transaction. Buffers are declared with appropriate read‑only or write‑only flags to enable hardware caching. Local memory usage respects the 8 KB per compute unit limit, and intermediate results are kept in registers when possible.
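Two of the ideas above can be sketched on the CPU. The first function shows one plausible form of early exit: abandon an SSD computation once the running sum can no longer beat the best candidate found so far. The second estimates the local-memory footprint of a work-group tile against the 8 KB limit. Both are illustrative assumptions rather than the authors' kernels: the function names, the RGBA-tuple patch layout, and the 9 × 9 patch size are hypothetical.

```python
def ssd_early_exit(patch_a, patch_b, best_so_far):
    """SSD over the R, G, B channels of two equally sized RGBA patches.

    patch_a, patch_b: flat lists of (r, g, b, a) tuples.
    Returns the SSD, or None as soon as the running total reaches
    best_so_far (the candidate cannot improve on the current best).
    """
    total = 0
    for (r1, g1, b1, _), (r2, g2, b2, _) in zip(patch_a, patch_b):
        # All three color channels are handled per pixel, mirroring
        # what a vectorized uchar4 load would provide on the GPU.
        total += (r1 - r2) ** 2 + (g1 - g2) ** 2 + (b1 - b2) ** 2
        if total >= best_so_far:
            return None
    return total


def tile_bytes(group_w, group_h, patch, bytes_per_pixel=4):
    """Bytes needed to cache a work-group tile plus a (patch - 1) halo.

    Each work-item anchors one patch, so the group touches
    (group_w + patch - 1) x (group_h + patch - 1) pixels in total.
    """
    return (group_w + patch - 1) * (group_h + patch - 1) * bytes_per_pixel


if __name__ == "__main__":
    a = [(10, 10, 10, 255)] * 4
    b = [(12, 10, 10, 255)] * 4
    print(ssd_early_exit(a, b, float("inf")))   # full SSD
    print(ssd_early_exit(a, b, 8))              # pruned early
    print(tile_bytes(16, 16, 9) <= 8 * 1024)    # fits in 8 KB?
```

With a 16 × 16 work-group and the assumed 9 × 9 patch, the RGBA tile needs 24 × 24 × 4 = 2304 bytes, which fits within the 8 KB budget. A larger patch or work-group would need the same check before being adopted.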

Experimental evaluation is performed on Android 4.2.2 with a 1280 × 720 test image and several mask configurations. Compared with a single‑core CPU implementation, the heterogeneous solution achieves an average speed‑up of 8.5×, reaching up to 10× for certain cases, while reducing power consumption by roughly 30 %. Image quality metrics (PSNR, SSIM) remain virtually unchanged, confirming that the acceleration does not compromise visual fidelity.

The authors conclude that OpenCL‑based CPU‑GPU co‑processing is a viable path to bring computationally intensive vision tasks to real‑world mobile devices. The optimization techniques—search‑space reduction, work‑group design, and memory hierarchy exploitation—are generic enough to be applied to other pixel‑level algorithms such as SIFT feature extraction, optical flow, and texture synthesis. Future work is suggested in dynamic workload scheduling, energy‑aware modeling, and comparison with newer compute APIs such as Vulkan Compute.
