A Description Driven Approach for Flexible Metadata Tracking

Evolving user requirements present a considerable software engineering challenge, all the more so in an environment where data will be stored for a very long time and must remain usable as the system specification evolves around it. Capturing the description of the system addresses this issue, since a description-driven approach enables new versions of data structures and processes to be created alongside the old, thereby providing a history of changes to the underlying data models and enabling the capture of provenance data. This description-driven approach is advocated in this paper, in which a system called CRISTAL is presented. CRISTAL is based on description-driven principles; it can use previous versions of stored descriptions to define various versions of data which can be stored in various forms. To demonstrate the efficacy of this approach, the history of the project is presented: how CRISTAL was used at CERN to track data and process definitions and their associated provenance data in the construction of the CMS ECAL detector, how it was applied to handle analysis tracking and data index provenance in the neuGRID and N4U projects, and how it will be matured further in the CRISTAL-ISE project. We believe that the CRISTAL approach could be invaluable in handling the evolution, indexing and tracking of large datasets, and are keen to apply it further in this direction.

💡 Research Summary

The paper tackles a fundamental challenge in long‑term scientific data management: how to keep data usable as system specifications evolve over decades. The authors propose a “description‑driven” approach, in which the description of data structures, workflows, and validation rules is treated as a first‑class, versioned metadata object. By storing and versioning these descriptions alongside the actual data instances, the system can simultaneously support multiple generations of data models, preserve provenance information, and enable seamless evolution without breaking existing datasets.

CRISTAL (Cooperative Repositories and Information System for Tracking All Lifecycles) is presented as a concrete implementation of this philosophy. At its core, CRISTAL introduces the concept of an “Item”, which encapsulates a description (meta‑model), a concrete schema (model), and the runtime instances (data). The architecture is organized into three layers: a top‑level meta‑model that defines the syntax of Item descriptions, a middle model that holds concrete data schemas and workflow specifications, and a bottom layer that stores the actual data records. Each layer is independently versioned, allowing new versions of a description to be created by cloning or extending an existing one while preserving the older version for legacy data.
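The layering described above can be illustrated with a minimal Python sketch. It is not CRISTAL's actual API: the class names (`Description`, `DescriptionStore`, `Instance`), the `CrystalTest` schema, and its field names are all hypothetical, chosen only to show how a new description version can be derived from an old one while records created under the old version stay valid.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Description:
    """One immutable version of a data schema (hypothetical 'model' layer)."""
    name: str
    version: int
    fields: tuple  # names of the fields an instance must supply


@dataclass
class DescriptionStore:
    """Keeps every version of every description; nothing is overwritten."""
    _versions: dict = field(default_factory=dict)

    def create(self, name, fields):
        # Version numbers start at 1 and grow by one per stored version.
        version = len(self._versions.setdefault(name, [])) + 1
        desc = Description(name, version, tuple(fields))
        self._versions[name].append(desc)
        return desc

    def extend(self, name, extra_fields):
        """Derive a new version from the latest one, leaving the old intact."""
        latest = self._versions[name][-1]
        return self.create(name, latest.fields + tuple(extra_fields))

    def get(self, name, version):
        return self._versions[name][version - 1]


@dataclass
class Instance:
    """A data record bound to the description version it was created under."""
    description: Description
    values: dict

    def __post_init__(self):
        # Validate the record against its own description version only.
        missing = set(self.description.fields) - set(self.values)
        if missing:
            raise ValueError(f"missing fields: {sorted(missing)}")


store = DescriptionStore()
v1 = store.create("CrystalTest", ["serial", "light_yield"])
old_record = Instance(v1, {"serial": "C-001", "light_yield": 9.2})

# The schema evolves; the old record keeps pointing at version 1.
v2 = store.extend("CrystalTest", ["temperature"])
new_record = Instance(
    v2, {"serial": "C-002", "light_yield": 9.5, "temperature": 18.0}
)
```

Because each record carries a reference to the exact description version it was validated against, legacy data never needs to be migrated when the schema changes, which is the property the summary attributes to the description-driven approach.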

A built‑in workflow engine interprets the description‑driven workflow definitions. State transitions and events are recorded automatically, producing a complete audit trail that constitutes the provenance of every data item. The engine is exposed through RESTful and SOAP APIs, enabling external applications to query, update, or delete Items and their associated metadata. Internally, CRISTAL leverages distributed storage and caching mechanisms to handle large‑scale datasets with high performance.
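As a rough illustration of how recording state transitions yields an audit trail, the following Python sketch drives an item through a workflow that is itself just data. The names (`WorkflowDescription`, `TrackedItem`, `fire`) and the example states and events are assumptions made for this sketch and do not reflect CRISTAL's real engine or its APIs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class WorkflowDescription:
    """A workflow expressed as data: (state, event) -> next state."""
    name: str
    version: int
    initial_state: str
    transitions: dict


@dataclass
class TrackedItem:
    """An item whose every state change is appended to its history."""
    workflow: WorkflowDescription
    state: str = ""
    history: list = field(default_factory=list)

    def __post_init__(self):
        self.state = self.workflow.initial_state

    def fire(self, event, agent):
        key = (self.state, event)
        if key not in self.workflow.transitions:
            raise ValueError(
                f"event {event!r} not allowed in state {self.state!r}"
            )
        target = self.workflow.transitions[key]
        # The audit entry names the workflow version that governed the step.
        self.history.append({
            "workflow": self.workflow.name,
            "workflow_version": self.workflow.version,
            "event": event,
            "from": self.state,
            "to": target,
            "agent": agent,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        self.state = target


testing = WorkflowDescription(
    name="ModuleTesting",
    version=1,
    initial_state="produced",
    transitions={
        ("produced", "start_test"): "under_test",
        ("under_test", "pass"): "accepted",
        ("under_test", "fail"): "rejected",
    },
)

module = TrackedItem(testing)
module.fire("start_test", agent="operator-a")
module.fire("pass", agent="operator-b")
```

After these two calls, `module.history` holds one entry per transition, in order, so the path from `produced` to `accepted` can be replayed without any separate logging step.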

The authors validate the approach with three real‑world case studies.

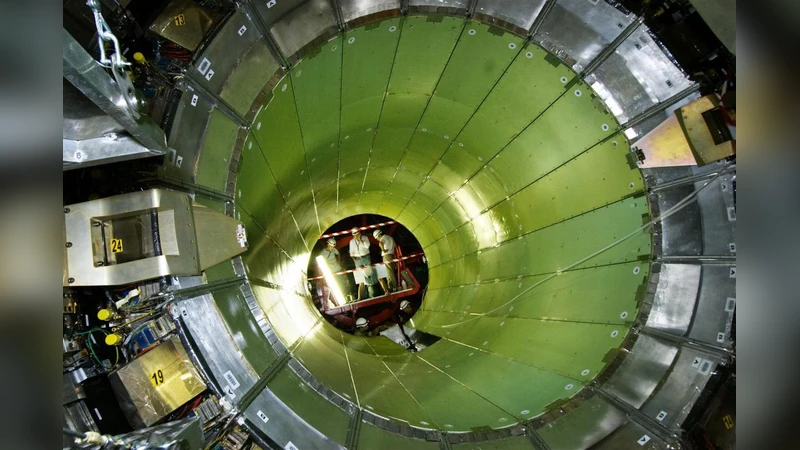

- CERN CMS ECAL construction – Thousands of detector modules were produced, tested, and assembled. For each module, CRISTAL stored the design specifications, production logs, test results, and any subsequent modifications as separate Item versions. This allowed the project team to trace every change back to its origin, verify data integrity across the entire lifecycle, and dramatically reduce manual effort in data validation and change management.

- neuGRID / N4U medical imaging analysis – Complex neuro‑imaging pipelines involve many parameters, preprocessing steps, and analysis algorithms. By representing each pipeline execution as an Item, CRISTAL captured the exact parameter set, software version, and intermediate results. Researchers could therefore reproduce previous analyses, compare alternative parameterizations, and share complete provenance records with collaborators, addressing a critical reproducibility problem in biomedical research.

- CRISTAL‑ISE (next‑generation project) – Building on the lessons learned, the authors are redesigning CRISTAL for cloud‑native, micro‑service environments. The new architecture integrates Kafka‑based event streaming for real‑time provenance capture, supports distributed NoSQL stores for scalability, and extends the metadata model to include machine‑learning model parameters and versioning. This “AI‑ready” extension aims to provide a unified provenance backbone for data‑intensive AI workflows, bridging the gap between traditional scientific data management and modern data‑science pipelines.
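The pipeline-execution capture described for the neuGRID / N4U case study can be sketched as a small provenance record. This is only an illustration under stated assumptions: the function names, the record layout, the pipeline name, and the parameter names below are hypothetical, and the SHA-256 fingerprint is a technique chosen for this sketch, not something the paper is known to use.

```python
import hashlib
import json


def execution_record(pipeline, software_version, parameters, outputs):
    """Build a provenance record for one pipeline run (hypothetical layout)."""
    record = {
        "pipeline": pipeline,
        "software_version": software_version,
        "parameters": parameters,
        "outputs": outputs,
    }
    # Fingerprint only what defines the run, so identical configurations
    # produce identical fingerprints regardless of key order or outputs.
    defining = {
        k: record[k] for k in ("pipeline", "software_version", "parameters")
    }
    canonical = json.dumps(defining, sort_keys=True)
    record["fingerprint"] = hashlib.sha256(
        canonical.encode("utf-8")
    ).hexdigest()
    return record


def diff_parameters(a, b):
    """Return {name: (value_in_a, value_in_b)} for parameters that differ."""
    pa, pb = a["parameters"], b["parameters"]
    return {
        k: (pa.get(k), pb.get(k))
        for k in sorted(set(pa) | set(pb))
        if pa.get(k) != pb.get(k)
    }


run_1 = execution_record(
    "cortical_thickness", "1.0",
    {"smoothing_mm": 10, "template": "atlas-a"},
    outputs=["run1/result.csv"],
)
run_2 = execution_record(
    "cortical_thickness", "1.0",
    {"smoothing_mm": 20, "template": "atlas-a"},
    outputs=["run2/result.csv"],
)
changed = diff_parameters(run_1, run_2)
```

Here `changed` contains only `smoothing_mm`, which shows how stored records let two analyses be compared by their parameterization, and how a rerun with the same configuration can be recognized by its matching fingerprint.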

In conclusion, the description‑driven paradigm demonstrated by CRISTAL offers a robust solution to the problem of evolving data models. By externalizing system specifications into versioned metadata, it preserves historical context, ensures traceability, and enables flexible coexistence of multiple data schema versions. The paper’s empirical evidence from high‑energy physics, biomedical imaging, and upcoming AI‑centric projects suggests that this approach can be generalized to a wide range of domains where long‑term data stewardship, provenance, and adaptability are paramount.
