Intrinsically Motivated Learning of Visual Motion Perception and Smooth Pursuit

We extend the framework of efficient coding, which has been used to model the development of sensory processing in isolation, to model the development of the perception/action cycle. Our extension combines sparse coding and reinforcement learning so that sensory processing and behavior co-develop to optimize a shared intrinsic motivational signal: the fidelity of the neural encoding of the sensory input under resource constraints. Applying this framework to a model system consisting of an active eye behaving in a time-varying environment, we find that this generic principle leads to the simultaneous development of both smooth pursuit behavior and model neurons whose properties are similar to those of primary visual cortical neurons selective for different directions of visual motion. We suggest that this general principle may form the basis for a unified and integrated explanation of many perception/action loops.

💡 Research Summary

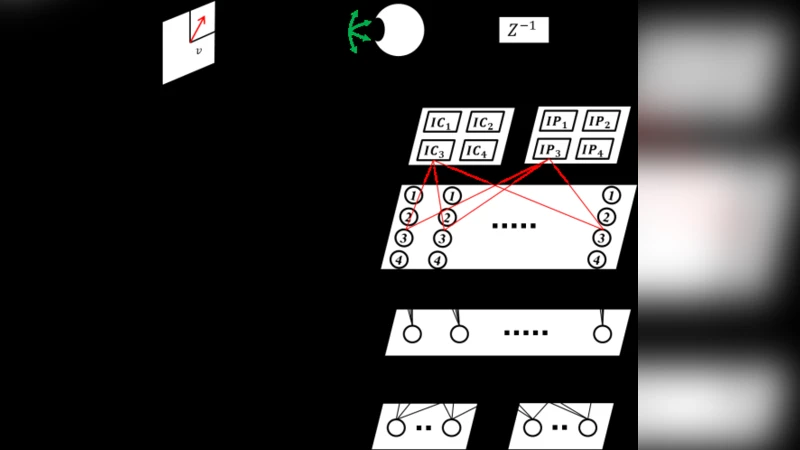

The paper presents a unified computational framework that extends the efficient coding hypothesis to the entire perception-action loop. Traditional efficient coding models have focused on optimizing sensory representations in isolation, assuming a static downstream read-out. In contrast, the authors argue that sensory processing and motor behavior co-develop to maximize a shared intrinsic motivation: the fidelity of neural encoding under strict resource constraints (a limited number of active neurons).

To instantiate this idea they combine two learning mechanisms. First, a sparse coding network learns a dictionary of basis functions that reconstructs the incoming visual stream with minimal activity. The reconstruction error and the total activity serve as a joint cost that the network minimizes, thereby enforcing an efficient representation. Second, a reinforcement learning agent controls the eye’s rotational velocity. The agent’s state consists of the current visual input and recent motor commands, and its action is the chosen eye velocity. Crucially, the reward signal is derived from the sparse coding stage: it grows as the reconstruction error falls and is penalized by the number of active units. Thus, actions that make the visual input more predictable (e.g., by keeping the target on the fovea) improve coding efficiency and receive higher reward, shaping the policy toward smooth pursuit.
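The coupling between the sparse coding stage and the reward can be illustrated with a minimal sketch. It is not the authors' implementation: the greedy matching-pursuit encoder, the function names `matching_pursuit` and `intrinsic_reward`, the `sparsity_weight` parameter, and the use of the negative (rather than the reciprocal) of the cost as the reward are all assumptions made for illustration.

```python
import numpy as np

def matching_pursuit(x, D, n_active):
    """Greedily encode x using n_active selection steps over the columns of D.

    Assumes the columns of D (the dictionary) have unit norm.
    Returns the coefficient vector and the final residual.
    """
    residual = np.asarray(x, dtype=float).copy()
    coeffs = np.zeros(D.shape[1])
    for _ in range(n_active):
        proj = D.T @ residual                 # correlation with each basis function
        k = int(np.argmax(np.abs(proj)))      # best-matching basis function
        coeffs[k] += proj[k]
        residual -= proj[k] * D[:, k]         # remove the explained component
    return coeffs, residual

def intrinsic_reward(x, D, n_active, sparsity_weight=0.0):
    """Hypothetical reward: negative of (squared error + activity penalty)."""
    coeffs, residual = matching_pursuit(x, D, n_active)
    error = float(residual @ residual)
    n_used = int(np.count_nonzero(coeffs))
    return -(error + sparsity_weight * n_used)

# Toy usage: an identity dictionary and a 4-pixel "image".
D = np.eye(4)
x = np.array([3.0, 0.0, 0.0, 1.0])
r_one = intrinsic_reward(x, D, n_active=1)   # one unit leaves the last pixel unexplained
r_two = intrinsic_reward(x, D, n_active=2)   # two units reconstruct x exactly
```

In a full model this scalar would be fed to the reinforcement learner as its reward, so that eye movements yielding inputs that are easier to encode are reinforced.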

The authors test the model in a simulated environment where a point target moves across a 2‑D visual field. Initially the eye moves randomly and the dictionary is unstructured. Over training episodes the system spontaneously develops two hallmark features of the primate visual‑motor system. (1) The eye learns a smooth‑pursuit behavior that keeps the moving target near the fovea, reducing retinal slip and consequently lowering the reconstruction error. (2) The dictionary converges to a set of Gabor‑like filters selective for specific motion directions, speeds, and spatial frequencies, mirroring the tuning properties of primary visual cortex (V1) neurons. Quantitative analyses show that the emergent direction‑selective units exhibit cosine‑tuned response profiles and that the pursuit gain matches experimentally observed values.
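The two behavioral quantities mentioned above, retinal slip and pursuit gain, can be made concrete with a short sketch. The definitions used here are common conventions rather than anything stated in the summary: slip is taken as target velocity minus eye velocity, and gain as the least-squares slope of eye velocity against target velocity through the origin. The function names and the sample velocities are hypothetical.

```python
import numpy as np

def retinal_slip(target_velocity, eye_velocity):
    """Velocity of the target image on the retina (target minus eye), per sample."""
    t = np.asarray(target_velocity, dtype=float)
    e = np.asarray(eye_velocity, dtype=float)
    return t - e

def pursuit_gain(target_velocity, eye_velocity):
    """Least-squares slope of eye velocity on target velocity (no intercept).

    A gain of 1.0 means the eye matches the target speed exactly.
    """
    t = np.asarray(target_velocity, dtype=float)
    e = np.asarray(eye_velocity, dtype=float)
    return float(np.dot(e, t) / np.dot(t, t))

# Illustrative velocities in deg/s (made-up numbers, not data from the paper).
target = [10.0, 10.0, -10.0]
eye = [9.0, 9.0, -9.0]
slip = retinal_slip(target, eye)
gain = pursuit_gain(target, eye)
```

Under these definitions, a policy that drives the slip toward zero drives the gain toward one.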

The study demonstrates that a single, biologically plausible objective—maximizing coding fidelity while minimizing neural activity—can simultaneously drive the development of both perceptual representations and motor policies. This provides a compelling mechanistic account of how perception and action may be jointly shaped during development without requiring external supervision or hand‑crafted loss functions. The authors suggest that the same principle could be extended to more complex perception‑action loops, such as eye‑hand coordination, navigation, or language‑grounded sensorimotor learning. By showing that intrinsic motivation alone can give rise to sophisticated sensorimotor behavior and cortical‑like representations, the work bridges the gap between computational neuroscience theories of efficient coding and reinforcement‑learning models of behavior, offering a promising unified framework for future research on embodied intelligence.
