Detecting Opinions in Tweets

With the rise of Web 2.0, the number of documents on the web expressing people's opinions keeps growing, and anyone can now give an opinion on any product online. In this paper, we examine the opinions expressed in tweets and classify them as positive, negative, or neutral, using emoticons for the Bayesian method and adjectives and adverbs for Turney’s method.

💡 Research Summary

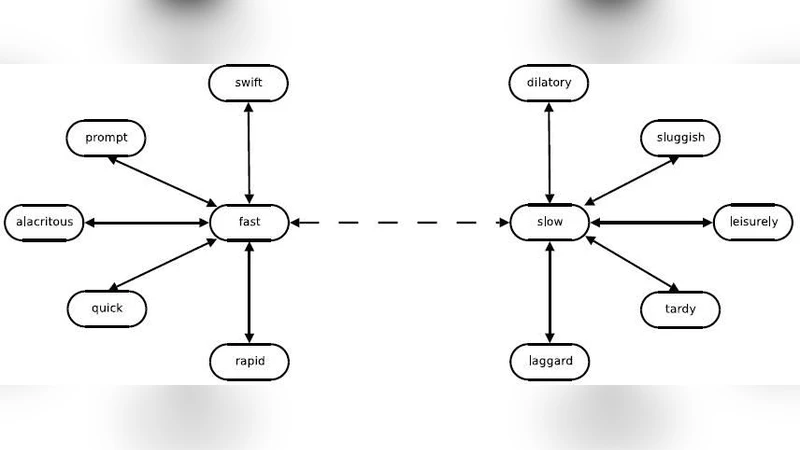

The paper addresses the problem of automatically detecting and classifying opinions expressed in Twitter messages, a task that has become increasingly important as the volume of user‑generated content on the web continues to grow. The authors propose a hybrid approach that combines two complementary techniques: a Naïve Bayes classifier trained on tweets that contain emoticons, and a Turney‑style Pointwise Mutual Information (PMI) method that relies on adjectives and adverbs. Emoticons such as “:-)” and “:-(” are treated as explicit sentiment cues; tweets containing them are automatically labeled as positive, negative, or neutral and used to train the Bayesian model. For tweets without emoticons, the Turney method extracts sentiment‑bearing adjectives and adverbs, queries a web search engine to obtain co‑occurrence counts with prototypical positive and negative words, and computes a PMI‑based sentiment score. The two scores are then merged by a weighted average that gives higher weight to the Bayesian output when an emoticon is present and higher weight to the PMI score otherwise.
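The pipeline described above can be illustrated with a minimal Python sketch. It is not the authors' implementation. The emoticon lists, the whitespace tokenizer, the 0.8 weight, the `tanh` squashing of the PMI score, and the neutral band are all assumptions made for illustration. The semantic-orientation formula follows Turney's 2002 formulation, which uses "excellent" and "poor" as reference words and adds 0.01 to the hit counts; whether this paper uses the same reference words is also an assumption. Search-engine hit counts are passed in as plain numbers, so no real search API is called.

```python
import math
from collections import Counter, defaultdict

# Assumed emoticon lists; the paper's actual lists are not given in this summary.
POSITIVE_EMOTICONS = {":-)", ":)", ":D"}
NEGATIVE_EMOTICONS = {":-(", ":("}
ALL_EMOTICONS = POSITIVE_EMOTICONS | NEGATIVE_EMOTICONS


def emoticon_label(tweet):
    """Return 'positive' or 'negative' from emoticons, or None if absent or conflicting."""
    tokens = set(tweet.split())
    has_pos = bool(tokens & POSITIVE_EMOTICONS)
    has_neg = bool(tokens & NEGATIVE_EMOTICONS)
    if has_pos == has_neg:
        return None
    return "positive" if has_pos else "negative"


def tokenize(tweet):
    """Lowercased whitespace tokens with emoticons removed, so the label does not leak."""
    return [t.lower() for t in tweet.split() if t not in ALL_EMOTICONS]


class NaiveBayes:
    """Multinomial Naive Bayes with add-one smoothing, trained on emoticon labels."""

    def __init__(self):
        self.class_counts = Counter()
        self.word_counts = defaultdict(Counter)
        self.vocab = set()

    def train(self, tweets):
        for tweet in tweets:
            label = emoticon_label(tweet)
            if label is None:
                continue  # tweets without a clear emoticon cue are not used for training
            self.class_counts[label] += 1
            for word in tokenize(tweet):
                self.word_counts[label][word] += 1
                self.vocab.add(word)

    def positive_probability(self, tweet):
        total = sum(self.class_counts.values())
        log_scores = {}
        for label in ("positive", "negative"):
            n_words = sum(self.word_counts[label].values())
            score = math.log((self.class_counts[label] + 1) / (total + 2))
            for word in tokenize(tweet):
                count = self.word_counts[label][word]
                score += math.log((count + 1) / (n_words + len(self.vocab) + 1))
            log_scores[label] = score
        top = max(log_scores.values())
        exp = {k: math.exp(v - top) for k, v in log_scores.items()}
        return exp["positive"] / (exp["positive"] + exp["negative"])


def so_pmi(hits_near_pos, hits_near_neg, hits_pos, hits_neg, smoothing=0.01):
    """Turney-style semantic orientation computed from search-engine hit counts."""
    numerator = (hits_near_pos + smoothing) * hits_neg
    denominator = (hits_near_neg + smoothing) * hits_pos
    return math.log2(numerator / denominator)


def hybrid_score(bayes_prob_pos, so, has_emoticon, w_emoticon=0.8):
    """Weighted average in [-1, 1]; the weight and the tanh squashing are assumptions."""
    bayes_score = 2.0 * bayes_prob_pos - 1.0
    pmi_score = math.tanh(so)
    w = w_emoticon if has_emoticon else 1.0 - w_emoticon
    return w * bayes_score + (1.0 - w) * pmi_score


def classify(score, neutral_band=0.1):
    """Map a combined score to a label; the neutral band width is an assumption."""
    if score > neutral_band:
        return "positive"
    if score < -neutral_band:
        return "negative"
    return "neutral"
```

In this sketch, a tweet with an emoticon is scored mostly by `NaiveBayes.positive_probability`, while a tweet without one is scored mostly by `so_pmi` over its adjectives and adverbs (part-of-speech tagging is left out here). Squashing both scores onto the same range before averaging is one simple way to make them comparable; the summary does not say how the paper normalizes them.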

The authors collected a corpus of 100,000 English‑language tweets from the first half of 2010, of which 10,000 contained emoticons. A manually annotated test set of 2,000 tweets (balanced across the three sentiment classes) was created by three domain experts. Experiments show that the Bayesian classifier alone achieves 78 % overall accuracy (85 % on emoticon‑rich tweets), while the Turney method reaches 71 % accuracy. The hybrid system improves accuracy to 85 %, with a notable reduction in neutral‑class misclassifications (error rate drops from 12 % to 5 %). Precision, recall, and F1 scores follow the same trend, confirming that the two methods complement each other: the Bayesian component provides reliable labels when explicit cues are available, and the PMI component supplies robust estimates when such cues are absent.
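For reference, the reported metrics can be computed from gold and predicted labels as in the following sketch. The function name `evaluate` and the label strings are hypothetical, and the sketch does not reproduce any figures from the paper.

```python
def evaluate(gold, predicted, classes=("positive", "negative", "neutral")):
    """Return overall accuracy and per-class precision, recall, and F1."""
    if len(gold) != len(predicted) or not gold:
        raise ValueError("gold and predicted must be non-empty and of equal length")
    pairs = list(zip(gold, predicted))
    accuracy = sum(g == p for g, p in pairs) / len(pairs)
    per_class = {}
    for c in classes:
        tp = sum(g == c and p == c for g, p in pairs)  # true positives for class c
        fp = sum(g != c and p == c for g, p in pairs)  # predicted c, gold is another class
        fn = sum(g == c and p != c for g, p in pairs)  # gold c, predicted another class
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        per_class[c] = {"precision": precision, "recall": recall, "f1": f1}
    return accuracy, per_class
```

On a balanced three-class test set such as the one described, per-class recall on the neutral class is the figure most directly tied to the neutral-class misclassifications mentioned above.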

The paper discusses practical implications, including the low cost of generating training labels via emoticons, the computational overhead of web‑based PMI queries, and the potential for real‑time deployment. Limitations are acknowledged: dependence on a search engine can introduce latency and variability, and the approach is evaluated only on English tweets. The authors suggest future work on multilingual extensions, automatic sentiment‑lexicon construction, integration with deep‑learning embeddings, and multimodal analysis that incorporates images or videos attached to tweets. Overall, the study demonstrates that a simple, interpretable hybrid model can achieve state‑of‑the‑art performance on short, noisy social‑media texts while keeping annotation effort minimal.