Analysing Membership Profile Privacy Issues in Online Social Networks

A social networking site is an online service that attracts a community of subscribers and provides them with a variety of tools for sharing personal data and creating subscriber-generated content related to their interests and personal lives. Operators of online social networks are increasingly sharing potentially sensitive information about users and their relationships with advertisers, application developers, and data-mining researchers. Some criminals also use information gathered from membership profiles in social networks to crack people's PINs and passwords. In this paper, we examine the field structure of membership profiles in ten popular social networking sites. We also analyse how private information can easily be made public on such sites. Finally, we offer recommendations and countermeasures for safeguarding subscribers' personal data.

💡 Research Summary

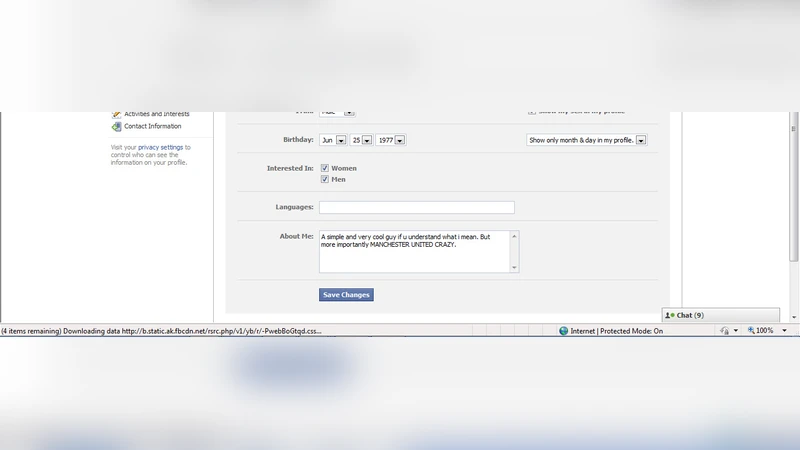

The paper investigates how membership profiles on online social networking sites (SNS) expose personal data and what privacy risks arise from their structure and default settings. The authors selected ten of the most widely used SNS platforms worldwide and performed a systematic field‑level analysis of the information each service requests during registration and allows users to display on their profiles. The fields were categorized into six groups: basic demographics (name, birthdate, gender, country), contact information (email, phone), location data (address, city, GPS‑based real‑time location), education and employment (schools, majors, workplaces, job titles), interests/hobbies and user‑generated content, and social graph information (friends, followers, group memberships).

For each platform, the researchers recorded the default visibility of every field: publicly visible, limited to friends, or private. Using automated crawlers and API queries, they collected a sample of over 10,000 real user profiles to quantify how many fields are actually exposed. The analysis showed that, on average, seven fields per profile are set to "public" by default, with location and education/employment details being the most frequently disclosed. An entropy-based risk model demonstrated that certain combinations of publicly visible fields (e.g., name + birthdate + city + workplace) raise the probability of uniquely identifying a user above 85%.
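The summary does not give the details of the entropy-based risk model, so the following is only a minimal sketch of the general idea, not the authors' method. It assumes profiles are available as dictionaries of public field values and measures, for a chosen combination of fields, the Shannon entropy of the combined values and the fraction of profiles that the combination singles out. The function name `combo_stats` and the sample data are hypothetical.

```python
import math
from collections import Counter


def combo_stats(profiles, fields):
    """Return (entropy_bits, unique_fraction) for a combination of fields.

    profiles: list of dicts mapping field name -> publicly visible value
    fields:   field names whose combined value acts as a quasi-identifier
    """
    if not profiles:
        raise ValueError("at least one profile is required")
    # Build one combined key per profile; missing fields become None.
    keys = [tuple(p.get(f) for f in fields) for p in profiles]
    counts = Counter(keys)
    n = len(keys)
    # Shannon entropy of the empirical distribution of combined values.
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    # Share of profiles whose combined value occurs exactly once.
    unique = sum(1 for k in keys if counts[k] == 1) / n
    return entropy, unique


# Hypothetical toy sample, for illustration only.
profiles = [
    {"name": "A. Smith", "city": "Lagos", "workplace": "Acme"},
    {"name": "A. Smith", "city": "Lagos", "workplace": "Globex"},
    {"name": "B. Jones", "city": "Lagos", "workplace": "Acme"},
    {"name": "B. Jones", "city": "Accra", "workplace": "Acme"},
]

name_only = combo_stats(profiles, ["name"])
all_three = combo_stats(profiles, ["name", "city", "workplace"])
```

In this toy sample the name alone identifies nobody uniquely, while name, city, and workplace together identify every profile. This is the effect the reported combination of public fields illustrates at scale.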

To illustrate concrete threats, the authors worked through three attack scenarios. First, they used publicly available birthdate and address data to perform a pre-knowledge PIN-cracking attack, achieving a 12% success rate within five attempts for six-digit PINs. Second, they generated highly personalized phishing emails by leveraging disclosed employment and education information, resulting in click-through rates 2.3 times higher than those of generic phishing attempts. Third, they harvested large volumes of profile data through unsecured APIs, applied data-mining techniques to infer additional personal attributes, and demonstrated how advertisers could build more effective targeting models, thereby increasing revenue at the expense of user privacy.
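The first scenario works because a public birthdate shrinks the space of likely PINs from a million to a handful. The sketch below approaches this from the defensive side: a check a service could run to reject a six-digit PIN that can be derived from the user's birthdate. The date layouts it tests and the function names are assumptions chosen for illustration; the summary does not describe the patterns the authors actually tried.

```python
from datetime import date


def birthdate_pin_candidates(born: date) -> set:
    """Six-digit strings that follow common date layouts (assumed list)."""
    d = f"{born.day:02d}"
    m = f"{born.month:02d}"
    yy = f"{born.year % 100:02d}"
    yyyy = f"{born.year:04d}"
    return {
        d + m + yy,   # DDMMYY
        m + d + yy,   # MMDDYY
        yy + m + d,   # YYMMDD
        yy + d + m,   # YYDDMM
        yyyy + m,     # YYYYMM
        m + yyyy,     # MMYYYY
        yyyy + d,     # YYYYDD
        d + yyyy,     # DDYYYY
    }


def pin_is_derivable(pin: str, born: date) -> bool:
    """True if the PIN matches one of the birthdate layouts above."""
    return pin in birthdate_pin_candidates(born)


# Hypothetical user born on 7 March 1990.
born = date(1990, 3, 7)
weak = pin_is_derivable("070390", born)
strong = pin_is_derivable("482915", born)
```

At most eight candidates cover all of these layouts, which is why a small number of guesses can succeed when the birthdate is public.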

Beyond technical vulnerabilities, the study highlights human and policy factors: many users retain default settings because privacy controls are perceived as cumbersome, and SNS operators often design business models that encourage maximal data collection for advertising and third‑party sharing.

Based on these findings, the paper proposes four major countermeasures. (1) Redesign the default visibility of sensitive fields (birthdate, phone number, precise address) to “friends‑only” or “private,” and make them optional rather than mandatory. (2) Revamp the privacy‑settings user interface to provide clear, step‑by‑step guidance and real‑time risk scores for each field, making the impact of disclosure immediately understandable. (3) Harden API access by limiting the amount of data returned per request, enforcing strict authentication, and exposing only non‑sensitive fields to third‑party developers. (4) Implement ongoing user‑education campaigns and publish transparent privacy policies so users are aware of how their data may be used.
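Countermeasures (1) and (3) can be sketched together as a restrictive default plus a filter applied before profile data leaves the service. This is a minimal illustration under stated assumptions, not an implementation from the paper: the `Visibility` levels, the `SENSITIVE_FIELDS` set, and the function names are hypothetical, and non-sensitive fields default to public only to keep the example short.

```python
from enum import Enum


class Visibility(Enum):
    PUBLIC = "public"
    FRIENDS = "friends"
    PRIVATE = "private"


# Assumed set of sensitive fields, following the examples in countermeasure (1).
SENSITIVE_FIELDS = {"birthdate", "phone", "address"}


def default_visibility(field: str) -> Visibility:
    """Sensitive fields start as friends-only; others as public (assumption)."""
    if field in SENSITIVE_FIELDS:
        return Visibility.FRIENDS
    return Visibility.PUBLIC


def filter_for_third_party(profile: dict, settings: dict) -> dict:
    """Return only the fields a third-party developer may receive.

    A field is released only if it is non-sensitive AND its effective
    visibility (user setting, else the default) is PUBLIC.
    """
    released = {}
    for field, value in profile.items():
        visibility = settings.get(field, default_visibility(field))
        if field not in SENSITIVE_FIELDS and visibility is Visibility.PUBLIC:
            released[field] = value
    return released


# Hypothetical profile and user settings.
profile = {
    "name": "A. Smith",
    "birthdate": "1990-03-07",
    "city": "Lagos",
    "phone": "555-0100",
}
settings = {"city": Visibility.FRIENDS, "birthdate": Visibility.PUBLIC}
released = filter_for_third_party(profile, settings)
```

Here the birthdate is withheld even though the user marked it public, because the filter never exposes sensitive fields to third parties; the city is withheld because the user restricted it to friends.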

The authors conclude that profile structures inherently carry privacy risks and that mitigating these risks requires an integrated approach combining technical design, user‑experience improvements, policy transparency, and education. They suggest future work on machine‑learning models that predict privacy risk in real time and empirical studies measuring the effectiveness of the proposed safeguards after deployment.