Survey on Sparse Coded Features for Content Based Face Image Retrieval

Content-based image retrieval is a technique that uses the visual contents of images to search large-scale image databases according to users’ interests. This paper provides a comprehensive survey of recent techniques used in content-based face image retrieval. As digital devices and photo-sharing sites grow in popularity, large collections of human face photos are becoming available in databases. Multiple types of facial features are used to provide discriminability in large-scale human facial image databases. Searching and mining facial images are challenging problems and important research issues. Sparse representation of features significantly improves the indexing of images related to a query image.

💡 Research Summary

The paper presents a comprehensive survey of recent advances in content‑based face image retrieval, with a particular focus on sparse‑coded feature representations. As digital cameras, smartphones, and social‑media platforms generate massive collections of facial photographs, the need for efficient retrieval mechanisms that operate on visual content rather than textual metadata has become critical. The authors first outline the traditional retrieval pipeline—feature extraction, feature compression/representation, indexing, and search—and discuss the most widely used low‑level descriptors (LBP, Gabor filters, HOG) alongside modern deep‑learning embeddings such as FaceNet, VGG‑Face, and ArcFace. While handcrafted descriptors are computationally light, they are sensitive to illumination, pose, and expression variations; deep embeddings provide superior discriminative power but suffer from high dimensionality, making direct indexing impractical for large‑scale databases.
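To make the handcrafted side of this pipeline concrete, here is a minimal sketch of a grid-based LBP face descriptor. It assumes a grayscale face crop stored as a NumPy array. The function names `lbp_codes` and `lbp_descriptor`, the basic 8-neighbour radius-1 operator, and the 4×4 grid are illustrative choices, not details taken from the survey. The sketch also shows how quickly handcrafted descriptors grow: 16 cells of 256 bins already give a 4096-dimensional vector.

```python
import numpy as np


def lbp_codes(gray: np.ndarray) -> np.ndarray:
    """Basic LBP: 8 neighbours at radius 1, one bit per neighbour.

    Returns an (h-2, w-2) array of codes in [0, 255]; border pixels are skipped.
    """
    g = np.asarray(gray, dtype=np.int32)  # signed ints avoid uint8 wrap-around
    h, w = g.shape
    center = g[1:-1, 1:-1]
    # (dy, dx) offsets, clockwise from the top-left neighbour.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    codes = np.zeros_like(center)
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        codes |= (neighbour >= center).astype(np.int32) << bit
    return codes


def lbp_descriptor(gray: np.ndarray, grid: tuple[int, int] = (4, 4)) -> np.ndarray:
    """Concatenate L1-normalized 256-bin LBP histograms over a grid of cells."""
    codes = lbp_codes(gray)
    feats = []
    for band in np.array_split(codes, grid[0], axis=0):
        for cell in np.array_split(band, grid[1], axis=1):
            hist = np.bincount(cell.ravel(), minlength=256).astype(np.float64)
            feats.append(hist / max(hist.sum(), 1.0))
    return np.concatenate(feats)
```

The per-cell histograms keep coarse spatial layout, which matters for faces. They are also the kind of high-dimensional, mostly redundant vector that the sparse coding step described next is meant to compress.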

Sparse coding is introduced as a unifying solution to the dimensionality problem. By learning an over‑complete dictionary (via K‑means, K‑SVD, or online dictionary learning) and representing each feature vector as a linear combination of a few dictionary atoms, the original high‑dimensional data can be compressed into highly sparse vectors. This compression retains essential structural information while drastically reducing storage and computational demands. The survey details the trade‑offs involved in dictionary construction: larger dictionaries improve reconstruction fidelity but reduce sparsity, whereas smaller dictionaries increase sparsity at the cost of expressive power. The authors emphasize that online dictionary learning is especially suitable for dynamic environments where new images continuously arrive.
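The encoding step can be illustrated with Orthogonal Matching Pursuit (OMP), one common greedy sparse coder. The survey does not prescribe a particular coder, so treat this as a sketch. It assumes a dictionary `D` with unit-norm columns, standing in for one learned by K-means, K-SVD, or online learning, and a hypothetical sparsity budget `k`.

```python
import numpy as np


def omp(D: np.ndarray, x: np.ndarray, k: int) -> np.ndarray:
    """Approximate x with at most k atoms (columns) of D.

    D has shape (d, n_atoms) with unit-norm columns; returns a length-n_atoms code.
    """
    x = np.asarray(x, dtype=np.float64)
    residual = x.copy()
    support: list[int] = []
    coef = np.zeros(0)
    for _ in range(k):
        # Greedy step: pick the atom most correlated with the current residual.
        idx = int(np.argmax(np.abs(D.T @ residual)))
        if idx in support:
            break
        support.append(idx)
        # "Orthogonal" step: re-fit all selected coefficients by least squares.
        sub = D[:, support]
        coef, *_ = np.linalg.lstsq(sub, x, rcond=None)
        residual = x - sub @ coef
        if np.linalg.norm(residual) < 1e-10:
            break
    code = np.zeros(D.shape[1])
    code[support] = coef
    return code
```

For example, with a random over-complete dictionary of 256 unit-norm atoms in 64 dimensions, `omp(D, x, k=8)` returns a 256-entry vector with at most 8 non-zeros. Only those (index, value) pairs need to be stored, which is the storage saving the paragraph above describes.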

Once features are sparsely encoded, indexing becomes far more efficient. Sparse vectors contain many zero entries, so inverted-index structures or hash tables can store only the non-zero components, and product quantization can compress the stored codes further. Consequently, memory consumption drops, and distance calculations during search can ignore zero dimensions, leading to substantial speed-ups. The paper compares several similarity metrics (Euclidean distance, cosine similarity, and χ² distance) and finds that cosine similarity often yields the best discrimination for sparse representations. Moreover, it advocates a multi-level (coarse-to-fine) indexing strategy: an initial coarse retrieval quickly narrows the candidate set, and a subsequent fine-grained re-ranking refines the results, achieving both low latency and high accuracy.
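Here is a minimal sketch of the indexing idea. It assumes sparse codes are stored as Python dicts mapping atom index to coefficient. The hypothetical `SparseIndex` class keeps one posting list per dictionary atom, so a query touches only the lists of its own non-zero atoms, and scores are normalized to cosine similarity. The `coarse_to_fine` helper (also hypothetical) then re-ranks a shortlist with dense features, mirroring the two-stage strategy described above.

```python
import math
from collections import defaultdict


class SparseIndex:
    """Inverted index over sparse codes given as {atom_index: coefficient} dicts."""

    def __init__(self) -> None:
        self.postings: dict[int, list[tuple[str, float]]] = defaultdict(list)
        self.norms: dict[str, float] = {}

    def add(self, doc_id: str, code: dict[int, float]) -> None:
        for atom, c in code.items():
            if c != 0.0:  # only non-zero coefficients are stored
                self.postings[atom].append((doc_id, c))
        self.norms[doc_id] = math.sqrt(sum(c * c for c in code.values()))

    def search(self, query: dict[int, float], top_k: int = 10) -> list[tuple[str, float]]:
        q_norm = math.sqrt(sum(c * c for c in query.values()))
        if q_norm == 0.0:
            return []
        dots: dict[str, float] = defaultdict(float)
        # Walk only the posting lists of the query's non-zero atoms.
        for atom, qc in query.items():
            for doc_id, c in self.postings.get(atom, []):
                dots[doc_id] += qc * c
        # Convert accumulated dot products to cosine similarities.
        ranked = [(d, s / (q_norm * self.norms[d])) for d, s in dots.items()]
        ranked.sort(key=lambda item: item[1], reverse=True)
        return ranked[:top_k]


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def coarse_to_fine(index, query_code, query_dense, dense_db, shortlist=100, top_k=10):
    """Coarse stage: sparse inverted-index search; fine stage: dense cosine re-ranking."""
    candidates = [d for d, _ in index.search(query_code, top_k=shortlist)]
    candidates.sort(key=lambda d: cosine(query_dense, dense_db[d]), reverse=True)
    return candidates[:top_k]
```

Images that share no atom with the query never receive a score, which is where the speed-up comes from. The price is that such images cannot be recovered in the coarse stage, so `shortlist` trades recall against re-ranking cost.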

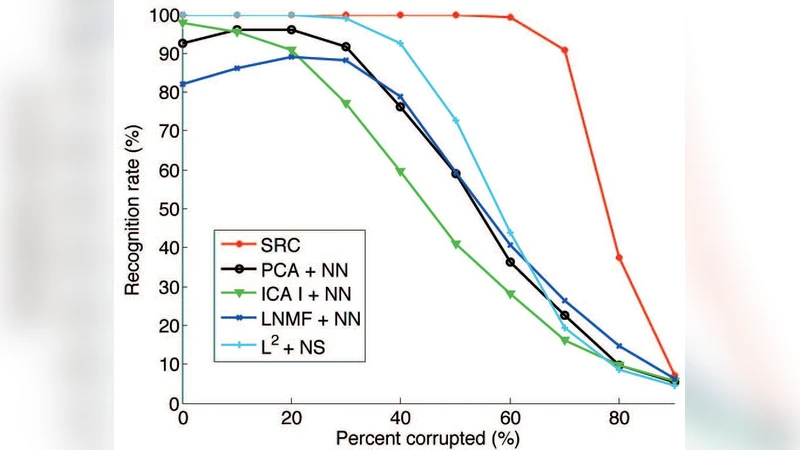

A significant portion of the survey is devoted to hybrid feature approaches. The authors describe methods that independently apply sparse coding to both traditional texture/shape descriptors and deep‑learning embeddings, then concatenate or fuse the resulting sparse vectors. This hybridization exploits complementary information: handcrafted features capture fine‑grained local patterns, while deep embeddings encode high‑level semantic cues. Experiments reported in the literature show that such combinations improve robustness against challenging conditions such as extreme lighting, occlusion (e.g., masks), and low resolution.
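One simple way to fuse per-descriptor sparse codes is early fusion by concatenation. The sketch uses a hypothetical `fuse_sparse_codes` function with optional per-modality `weights`; the weighting is an assumption, not a scheme from the survey. It L2-normalizes each block before concatenating so that neither the handcrafted nor the deep code dominates by scale alone.

```python
from typing import Optional, Sequence

import numpy as np


def fuse_sparse_codes(codes: Sequence[np.ndarray],
                      weights: Optional[Sequence[float]] = None) -> np.ndarray:
    """Early fusion: L2-normalize each sparse code, scale by its weight, concatenate."""
    if weights is None:
        weights = [1.0] * len(codes)
    if len(weights) != len(codes):
        raise ValueError("need one weight per code")
    blocks = []
    for code, w in zip(codes, weights):
        code = np.asarray(code, dtype=np.float64)
        norm = np.linalg.norm(code)
        # Per-block normalization: a block's scale no longer depends on its descriptor.
        blocks.append(w * code / norm if norm > 0 else code)
    fused = np.concatenate(blocks)
    total = np.linalg.norm(fused)
    # Unit-normalize the result so cosine similarity reduces to a dot product.
    return fused / total if total > 0 else fused
```

When all blocks are non-zero and the weights are equal, the cosine similarity of two fused vectors equals the mean of the per-block cosine similarities. This makes the effect of the weights easy to reason about. Zero entries are preserved, so the fused vector remains sparse and can go into the same kind of inverted index.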

The paper also candidly discusses current limitations. Sparse‑coded retrieval still relies on large, labeled datasets for dictionary training; updating dictionaries in real time can be computationally expensive; and existing dictionaries may not generalize well to novel variations like face masks or severe compression artifacts. To address these challenges, the authors outline future research directions: (1) unsupervised or weakly‑supervised dictionary learning using generative models or contrastive learning; (2) dynamic dictionary adaptation mechanisms that incrementally incorporate new samples without full retraining; (3) integration of multimodal cues (audio, textual metadata) to enrich retrieval contexts; and (4) hardware‑aware implementations (e.g., FPGA or edge‑AI accelerators) that enable real‑time sparse coding and search on resource‑constrained devices.
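Direction (2), incremental dictionary adaptation, can be sketched with the block-coordinate update from Mairal et al.'s online dictionary learning. Sufficient statistics `A` and `B` are accumulated from each new batch, and atoms are refreshed without revisiting old images. The class name `OnlineDictionary`, the embedded minimal OMP coder, and the single update pass per batch are assumptions made for illustration; the survey does not specify an algorithm.

```python
import numpy as np


class OnlineDictionary:
    """Incremental dictionary update in the style of Mairal et al. (2009)."""

    def __init__(self, D0: np.ndarray, k: int = 5) -> None:
        self.D = D0 / np.linalg.norm(D0, axis=0, keepdims=True)  # unit-norm atoms
        d, n_atoms = self.D.shape
        self.A = np.zeros((n_atoms, n_atoms))  # running sum of alpha @ alpha.T
        self.B = np.zeros((d, n_atoms))        # running sum of x @ alpha.T
        self.k = k                             # sparsity budget per sample

    def encode(self, x: np.ndarray) -> np.ndarray:
        """Minimal Orthogonal Matching Pursuit with at most k atoms."""
        x = np.asarray(x, dtype=np.float64)
        residual, support, coef = x.copy(), [], np.zeros(0)
        for _ in range(self.k):
            idx = int(np.argmax(np.abs(self.D.T @ residual)))
            if idx in support:
                break
            support.append(idx)
            coef, *_ = np.linalg.lstsq(self.D[:, support], x, rcond=None)
            residual = x - self.D[:, support] @ coef
        alpha = np.zeros(self.D.shape[1])
        alpha[support] = coef
        return alpha

    def partial_fit(self, X: np.ndarray) -> "OnlineDictionary":
        """Absorb a batch X of shape (d, n_new) without revisiting earlier samples."""
        for x in np.asarray(X, dtype=np.float64).T:
            alpha = self.encode(x)
            self.A += np.outer(alpha, alpha)
            self.B += np.outer(x, alpha)
        # One block-coordinate-descent pass over atoms, warm-started from current D.
        for j in range(self.D.shape[1]):
            if self.A[j, j] < 1e-12:
                continue  # atom not used yet: nothing to update it from
            u = (self.B[:, j] - self.D @ self.A[:, j]) / self.A[j, j] + self.D[:, j]
            self.D[:, j] = u / max(np.linalg.norm(u), 1.0)  # project onto ||d_j|| <= 1
        return self
```

Only `A` (n_atoms × n_atoms) and `B` (d × n_atoms) are kept between batches, so memory does not grow with the number of images absorbed. This is what makes the approach a candidate for the "without full retraining" requirement. Codes already stored in the index were computed against the old atoms and may need periodic re-encoding.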

In conclusion, the survey positions sparse coding as a pivotal technology that simultaneously achieves dimensionality reduction, storage efficiency, and fast similarity search for face image retrieval. When combined with robust feature extraction, hybrid descriptor fusion, and sophisticated indexing schemes, sparse‑coded systems can deliver high retrieval accuracy and low response times even on databases containing millions of faces. The authors argue that this integrated perspective not only advances face retrieval but also offers valuable insights for broader visual and multimodal search applications, setting a clear agenda for future research in scalable, content‑driven image retrieval.
