A novel sparsity and clustering regularization

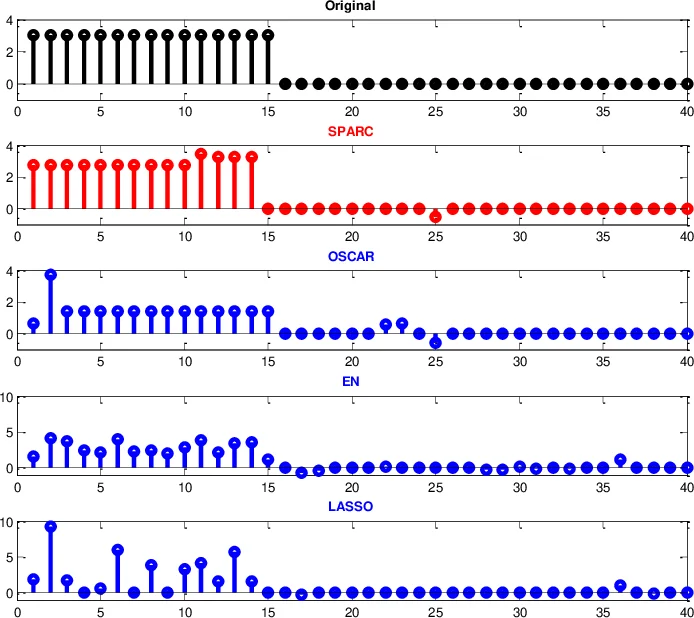

We propose a novel SPARsity and Clustering (SPARC) regularizer, a modified version of the previous octagonal shrinkage and clustering algorithm for regression (OSCAR). The proposed regularizer consists of a $K$-sparse constraint and a pairwise $\ell_{\infty}$ norm restricted to the $K$ largest components in magnitude. It can separately enforce $K$-sparsity and encourage the non-zeros to be equal in magnitude. Moreover, it can accurately group the features without shrinking their magnitude. Because SPARC is closely related to OSCAR, the proximity operator of the former can be efficiently computed from that of the latter, which allows proximal splitting algorithms to be used for problems with SPARC regularization. Experiments on synthetic data and on benchmark breast cancer data show that SPARC is a competitive group-sparsity-inducing regularizer for regression and classification.
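The practical point of the abstract is that SPARC can be plugged into proximal splitting methods because its proximity operator is obtained from OSCAR's. As background only, the sketch below computes OSCAR's proximity operator with a standard approach (not necessarily the one used in the paper). The pairwise term equals a weighted sum of sorted magnitudes with weights $w_i = \lambda_1 + \lambda_2 (p - i)$, so the prox reduces to: sort $|v|$ in decreasing order, subtract $w$, fit a non-increasing sequence with the pool-adjacent-violators algorithm (PAVA), clip at zero, then restore the original order and signs. The function names and the parameters `lam1`, `lam2` are illustrative. How the paper derives SPARC's operator from this one is not reproduced here.

```python
import math


def _pava_nonincreasing(y):
    """Least-squares fit of a non-increasing sequence to y (pool adjacent violators)."""
    sums, counts = [], []
    for val in y:
        sums.append(val)
        counts.append(1)
        # Merge blocks while the previous block's mean is below the current one's,
        # i.e. while the non-increasing order is violated.
        while len(sums) > 1 and sums[-2] / counts[-2] < sums[-1] / counts[-1]:
            s, c = sums.pop(), counts.pop()
            sums[-1] += s
            counts[-1] += c
    fitted = []
    for s, c in zip(sums, counts):
        fitted.extend([s / c] * c)
    return fitted


def prox_oscar(v, lam1, lam2):
    """Proximity operator of lam1*||x||_1 + lam2*sum_{i<j} max(|x_i|, |x_j|) at v."""
    p = len(v)
    # The entry with the r-th largest magnitude (0-indexed r) is the maximum
    # in exactly p - 1 - r pairs, which gives its weight.
    weights = [lam1 + lam2 * (p - 1 - r) for r in range(p)]
    order = sorted(range(p), key=lambda i: abs(v[i]), reverse=True)
    shifted = [abs(v[i]) - w for i, w in zip(order, weights)]
    magnitudes = [max(z, 0.0) for z in _pava_nonincreasing(shifted)]
    # Undo the sort and restore the signs of v.
    x = [0.0] * p
    for r, i in enumerate(order):
        x[i] = math.copysign(magnitudes[r], v[i])
    return x
```

For example, `prox_oscar([3.0, 2.8, 0.1], 0.0, 0.5)` returns approximately `[2.15, 2.15, 0.1]`: the two large entries are fused to a common magnitude, which is the clustering effect, while with `lam2 = 0` the operator reduces to ordinary soft thresholding.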

💡 Research Summary

The paper introduces a new regularizer called SPARC (SPARsity and Clustering) that builds on the strengths of the previously proposed OSCAR regularizer while addressing its key shortcomings. OSCAR combines an ℓ₁ norm with a pairwise ℓ∞ penalty to promote both sparsity and equal‑magnitude grouping of coefficients. However, both terms act on all variables: small coefficients that should be zero still enter the pairwise ℓ∞ term, which can hinder accurate clustering, and large coefficients are unnecessarily shrunk.
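To make OSCAR's penalty concrete, the following minimal sketch evaluates it two ways: directly over all pairs, and via the identity that the entry with the $i$-th largest magnitude is the maximum in exactly $p - i$ pairs, so $\sum_{i<j} \max\{|x_i|, |x_j|\} = \sum_{i=1}^{p} (p - i)\,|x|_{[i]}$. The parameter names `lam1` (ℓ₁ weight) and `lam2` (pairwise weight) are our own. Note that every entry, however small, contributes to both terms.

```python
from itertools import combinations


def oscar_penalty_pairwise(x, lam1, lam2):
    """OSCAR: lam1*||x||_1 + lam2*sum_{i<j} max(|x_i|, |x_j|), by brute force over pairs."""
    l1 = sum(abs(xi) for xi in x)
    pairwise = sum(max(abs(a), abs(b)) for a, b in combinations(x, 2))
    return lam1 * l1 + lam2 * pairwise


def oscar_penalty_sorted(x, lam1, lam2):
    """Same value in O(p log p): the r-th largest magnitude (0-indexed r)
    is the max of exactly p - 1 - r pairs, so OSCAR is a weighted sum of sorted magnitudes."""
    p = len(x)
    mags = sorted((abs(xi) for xi in x), reverse=True)
    return sum((lam1 + lam2 * (p - 1 - r)) * m for r, m in enumerate(mags))
```

For `x = [1.0, -2.0, 0.5]`, `lam1 = 0.1`, `lam2 = 1.0`, both functions return approximately 5.35. The sorted form is what lets OSCAR's proximity operator be computed after a single sort instead of by enumerating all pairs.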

SPARC replaces the global ℓ₁ penalty with an explicit K‑sparsity constraint: the solution is forced to have at most K non‑zero entries. The pairwise ℓ∞ penalty is then applied only to the K components with the largest absolute values, denoted Ω_K(x). Formally,
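Reading the description above literally, the SPARC penalty can be sketched as $\Omega(x) = \iota_{\{\|x\|_0 \le K\}}(x) + \lambda \sum_{1 \le i < j \le K} \max\{|x|_{[i]}, |x|_{[j]}\}$, where $|x|_{[i]}$ is the $i$-th largest magnitude, $\iota$ is the indicator function (0 on the set, $+\infty$ outside), and $\lambda > 0$ is a weight. This notation is an assumption reconstructed from the prose, not copied from the paper, and the paper's exact form may differ. The sketch also includes the Euclidean projection onto the $K$-sparse set, which is hard thresholding (keep the $K$ largest-magnitude entries). The function names are hypothetical.

```python
import math


def project_k_sparse(x, k):
    """Euclidean projection onto {x : ||x||_0 <= k}: keep the k largest-magnitude entries."""
    keep = set(sorted(range(len(x)), key=lambda i: abs(x[i]), reverse=True)[:k])
    return [xi if i in keep else 0.0 for i, xi in enumerate(x)]


def sparc_penalty(x, k, lam):
    """Hypothetical reading of SPARC: +inf if x has more than k non-zeros, otherwise
    lam * sum over pairs among the k largest |x_i| of max(|x_i|, |x_j|)."""
    if sum(1 for xi in x if xi != 0) > k:
        return math.inf
    top = sorted((abs(xi) for xi in x), reverse=True)[:k]
    m = len(top)
    # Within the top-k set, the r-th largest (0-indexed) is the max of m - 1 - r pairs.
    return lam * sum((m - 1 - r) * a for r, a in enumerate(top))
```

For `x = [0.5, -3.0, 2.0, 0.1]` and `k = 2`, the projection gives `[0.0, -3.0, 2.0, 0.0]`, whose penalty with `lam = 1.0` is 3.0, while `x` itself (four non-zeros) receives an infinite penalty.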
