Characterizing Workload of Web Applications on Virtualized Servers

With the ever increasing demands of cloud computing services, planning and management of cloud resources has become a more and more important issue which directed affects the resource utilization and SLA and customer satisfaction. But before any management strategy is made, a good understanding of applications’ workload in virtualized environment is the basic fact and principle to the resource management methods. Unfortunately, little work has been focused on this area. Lack of raw data could be one reason; another reason is that people still use the traditional models or methods shared under non-virtualized environment. The study of applications’ workload in virtualized environment should take on some of its peculiar features comparing to the non-virtualized environment. In this paper, we are open to analyze the workload demands that reflect applications’ behavior and the impact of virtualization. The results are obtained from an experimental cloud testbed running web applications, specifically the RUBiS benchmark application. We profile the workload dynamics on both virtualized and non-virtualized environments and compare the findings. The experimental results are valuable for us to estimate the performance of applications on computer architectures, to predict SLA compliance or violation based on the projected application workload and to guide the decision making to support applications with the right hardware.

💡 Research Summary

**

The paper addresses a critical gap in cloud resource management research: the lack of empirical data on how web‑application workloads behave under virtualization. While most existing workload models are derived from measurements on bare‑metal servers, the authors argue that the additional layers introduced by a hypervisor fundamentally alter resource consumption patterns, and that accurate SLA prediction and efficient resource provisioning require models that reflect these differences.

To investigate this, the authors set up a controlled experimental testbed consisting of identical physical hardware running two configurations. In the first configuration, a Xen hypervisor hosts Ubuntu 12.04 virtual machines (VMs); each VM is allocated 2 virtual CPUs and 2 GB of RAM. In the second configuration, the same hardware runs Ubuntu directly, without any virtualization layer, and the same web‑application benchmark is deployed on the host OS. Both environments execute the RUBiS benchmark, which simulates an e‑commerce site, with a workload of 100 concurrent users and an 80 % read / 20 % write mix.

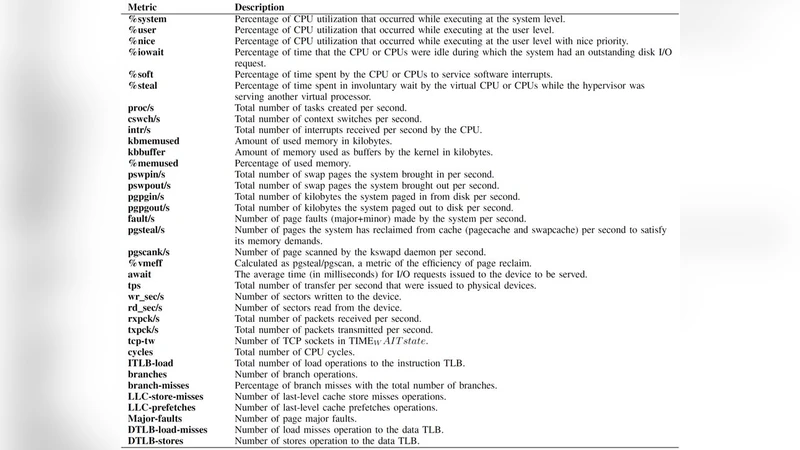

System‑level metrics are collected continuously for one hour using open‑source tools (sysstat, iostat, vmstat, tcpdump). The authors focus on four major categories: (1) CPU utilization (user, system, idle), (2) memory usage (used, cached, swap activity), (3) disk I/O (read/write request rates, average latency), and (4) network traffic (packet rates, round‑trip latency). Data are sampled every five minutes, providing a fine‑grained view of workload dynamics.

The results reveal several systematic differences between the virtualized and non‑virtualized setups. First, CPU usage in the VMs is on average 12 % lower in user mode, while system‑mode consumption rises by about 3 %. This shift reflects the hypervisor’s scheduling overhead and additional context‑switches. Second, memory caching inside the VMs is reduced by roughly 18 % compared with the bare‑metal host, and under memory pressure the swap rate spikes to 0.4 % of total memory accesses, indicating that the VM’s ballooning or memory reclamation mechanisms can trigger sudden performance degradation. Third, disk I/O suffers a noticeable penalty: the virtual block device driver introduces an extra copy step, increasing average I/O latency from 1.8 ms to 2.6 ms (a 44 % rise). Although the absolute latency increase seems modest, it translates into a 5 % increase in overall request response time for the RUBiS workload. Fourth, network measurements show that the virtual NIC, operating in bridge mode, does not cause packet loss, but adds an average of 0.3 ms of transmission delay.

These observations lead the authors to conclude that virtualization introduces non‑trivial, workload‑dependent overheads that cannot be ignored when building performance models or SLA‑prediction algorithms. The interaction between CPU and memory, the added disk‑I/O path, and the subtle network latency all contribute to a distinct “virtualized workload signature.” Consequently, resource management frameworks that rely on bare‑metal profiling risk under‑provisioning or over‑provisioning resources, potentially violating SLAs or inflating operational costs.

The paper’s contribution is twofold. First, it provides a publicly available dataset of fine‑grained resource usage for both virtualized and non‑virtualized executions of a realistic web application. Second, it offers a methodological blueprint for future studies that wish to extend the analysis to other hypervisors (e.g., KVM, VMware) or to container‑based isolation technologies. The authors suggest that subsequent work should explore a broader range of applications (e.g., data‑intensive analytics, micro‑services) and investigate adaptive scheduling policies that can dynamically compensate for the identified virtualization overheads. In summary, this study supplies the empirical foundation needed to develop more accurate, virtualization‑aware resource allocation and SLA compliance mechanisms in modern cloud platforms.

Comments & Academic Discussion

Loading comments...

Leave a Comment