The Spatial Sensitivity Function of a Light Sensor

The Spatial Sensitivity Function (SSF) is used to quantify a detector’s sensitivity to a spatially distributed input signal. By weighting the incoming signal with the SSF and integrating, the overall scalar response of the detector can be estimated. This project focuses on estimating the SSF of a light intensity sensor consisting of a photodiode. This light sensor has been used previously in the Knuth Cyberphysics Laboratory on a robotic arm that performs its own experiments to locate a white circle in a dark field (Knuth et al., 2007). To use the light sensor to learn about its surroundings, the robot’s inference software must be able to model and predict the light sensor’s response to a hypothesized stimulus. Previous models of the light sensor treated it as a point sensor and ignored its spatial characteristics. Here we propose a parametric approach where the SSF is described by a mixture of Gaussians (MOG). By performing controlled calibration experiments with known stimulus inputs, we used nested sampling to estimate the SSF of the light sensor using an MOG model with the number of Gaussians ranging from one to five. By comparing the evidence computed for each MOG model, we found that one Gaussian is sufficient to describe the SSF to the accuracy we require. Future work will involve incorporating this more accurate SSF into the Bayesian machine learning software for the robotic system and studying how this detailed information about the properties of the light sensor will improve the robot’s ability to learn.

💡 Research Summary

The paper addresses a fundamental shortcoming in the way a photodiode‑based light sensor has been modeled for robotic perception. In earlier work the sensor was treated as a point detector, ignoring the fact that its physical area and optical characteristics cause it to weight incoming light non‑uniformly across its surface. To capture this spatial weighting, the authors introduce the Spatial Sensitivity Function (SSF), defined as a two‑dimensional function that assigns a weight to each point on the sensor’s face. The overall sensor output is then the integral of the product of the SSF and the incident light intensity distribution.
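The weighted-integral definition can be illustrated with a short numerical sketch. This is not the authors' code: the function names, the grid spacing, the 3 mm width, and the choice to normalize by the integral of the SSF (so that a uniformly white field gives a response of 1) are all assumptions made for illustration.

```python
import math

def gaussian_ssf(x, y, sigma=3.0):
    """Hypothetical isotropic, unnormalized Gaussian SSF centered on the sensor (mm)."""
    return math.exp(-(x * x + y * y) / (2.0 * sigma * sigma))

def white_circle(x, y, cx=0.0, cy=0.0, radius=5.0):
    """Stimulus intensity: 1 inside a white circle, 0 on the dark background."""
    return 1.0 if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2 else 0.0

def sensor_response(ssf, stimulus, half_width=20.0, step=0.5):
    """Midpoint-rule estimate of the SSF-weighted integral of the stimulus.

    Assumption: the result is divided by the integral of the SSF over the
    same grid, so the response lies between 0 (all dark) and 1 (all white).
    """
    n = int(round(2.0 * half_width / step))
    weighted = 0.0
    total = 0.0
    for i in range(n):
        x = -half_width + (i + 0.5) * step
        for j in range(n):
            y = -half_width + (j + 0.5) * step
            w = ssf(x, y)
            weighted += w * stimulus(x, y)
            total += w
    return weighted / total

if __name__ == "__main__":
    print(sensor_response(gaussian_ssf, white_circle))
```

A point-sensor model would return either 0 or 1 for this stimulus, whereas the spatially weighted response changes smoothly as the circle moves across the field of view.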

To obtain a practical, parametric representation of the SSF, the authors adopt a Mixture of Gaussians (MOG) model. Each Gaussian component is described by a mean vector, a covariance matrix, and an amplitude weight, allowing the model to approximate a wide variety of shapes while remaining analytically tractable. The number of components N is treated as a model hyper‑parameter, and the authors explore models with N ranging from one to five.
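As a rough sketch of what evaluating such a mixture might look like, the following code sums amplitude-weighted 2-D Gaussians. The parameterization (a full symmetric 2×2 covariance per component, unnormalized bumps, and the tuple layout of `components`) is an assumption for illustration; the paper's exact parameterization may differ.

```python
import math

def gaussian_2d(x, y, mean, cov):
    """Unnormalized 2-D Gaussian with mean (mx, my) and covariance ((sxx, sxy), (sxy, syy))."""
    (sxx, sxy), (_, syy) = cov
    det = sxx * syy - sxy * sxy
    if sxx <= 0.0 or det <= 0.0:
        raise ValueError("covariance must be positive definite")
    dx, dy = x - mean[0], y - mean[1]
    # Quadratic form d^T cov^{-1} d, using the closed-form inverse of a 2x2 matrix.
    q = (syy * dx * dx - 2.0 * sxy * dx * dy + sxx * dy * dy) / det
    return math.exp(-0.5 * q)

def mog_ssf(x, y, components):
    """Evaluate a mixture of Gaussians; components is a list of (amplitude, mean, cov)."""
    return sum(a * gaussian_2d(x, y, m, c) for a, m, c in components)

if __name__ == "__main__":
    # Hypothetical two-component mixture, for illustration only.
    demo = [
        (1.0, (0.0, 0.0), ((9.0, 0.0), (0.0, 9.0))),
        (0.3, (2.0, -1.0), ((4.0, 1.0), (1.0, 4.0))),
    ]
    print(mog_ssf(0.0, 0.0, demo))
```

Under this parameterization each component carries six free parameters (two for the mean, three for the symmetric covariance, one for the amplitude), so the parameter count grows linearly with N.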

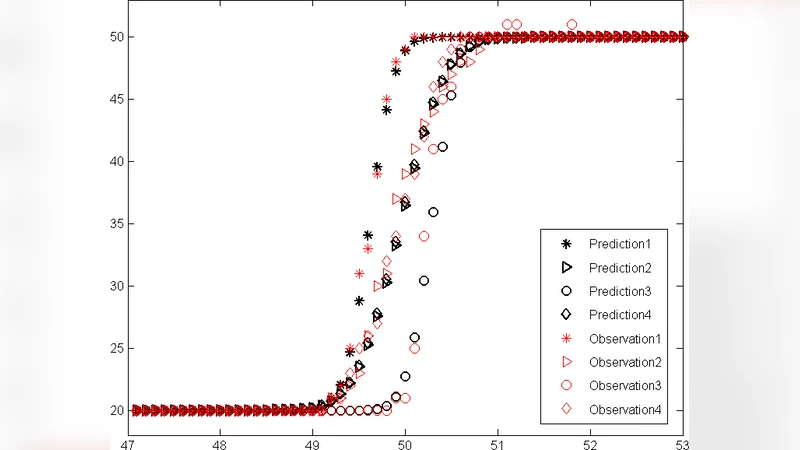

Calibration experiments are carefully designed to provide ground‑truth illumination patterns. The sensor is presented with a series of known stimuli (white circles, squares, and linear edges) against a dark background. For each stimulus the sensor’s voltage is recorded multiple times to estimate the measurement noise, which is assumed to be Gaussian. Together with this noise model, the recorded data define the likelihood function used in the Bayesian inference.
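Under the Gaussian noise assumption, the log-likelihood of a set of recordings given model predictions takes the standard form sketched below. The function name and the use of a single shared noise level `sigma` for all measurements are illustrative assumptions.

```python
import math

def gaussian_log_likelihood(measured, predicted, sigma):
    """Log-likelihood of measurements under independent Gaussian noise.

    measured, predicted: equal-length sequences of sensor readings and
    model predictions; sigma: noise standard deviation (assumed shared).
    """
    if len(measured) != len(predicted):
        raise ValueError("measured and predicted must have the same length")
    if sigma <= 0.0:
        raise ValueError("sigma must be positive")
    n = len(measured)
    chi2 = sum((m - p) ** 2 for m, p in zip(measured, predicted)) / sigma ** 2
    return -0.5 * chi2 - n * math.log(sigma) - 0.5 * n * math.log(2.0 * math.pi)
```

Here `predicted` would come from integrating a candidate SSF against each known calibration stimulus, so the likelihood ties the SSF parameters to the recorded voltages.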

Parameter estimation and model comparison are performed using Nested Sampling, an algorithm that simultaneously draws posterior samples and computes the Bayesian evidence Z for each model. The evidence automatically penalizes model complexity, making it an ideal criterion for deciding how many Gaussian components are justified by the data. The computed log‑evidence values show a clear maximum for the one‑Gaussian model; adding more components does not increase evidence and, in some cases, reduces it, indicating over‑parameterization.
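Once a log-evidence has been computed for each candidate model, the comparison reduces to normalizing the evidences into posterior model probabilities. The sketch below assumes equal prior probability for every model unless told otherwise; the function name is hypothetical and the numbers in the usage example are placeholders, not results from the paper.

```python
import math

def model_posteriors(log_evidences, log_priors=None):
    """Convert log-evidences (dict: model name -> log Z) into posterior probabilities.

    Assumes equal model priors when log_priors is None. Uses the
    log-sum-exp trick so very negative log-evidences do not underflow.
    """
    names = list(log_evidences)
    if log_priors is None:
        log_priors = {name: 0.0 for name in names}
    scores = {name: log_evidences[name] + log_priors[name] for name in names}
    peak = max(scores.values())
    log_norm = peak + math.log(sum(math.exp(s - peak) for s in scores.values()))
    return {name: math.exp(scores[name] - log_norm) for name in names}

if __name__ == "__main__":
    # Placeholder log-evidences for illustration only (not taken from the paper).
    example = {"1 Gaussian": -1000.0, "2 Gaussians": -1002.0}
    print(model_posteriors(example))
```

With equal priors, a difference of 2 in log-evidence corresponds to odds of about e² ≈ 7.4 to 1 in favor of the model with the higher evidence.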

Beyond evidence, the authors examine residuals between model predictions and measured outputs, and they conduct 5‑fold cross‑validation. The single‑Gaussian SSF yields residuals within 2–3 % of the measurement noise across the entire field, and it demonstrates the most stable predictive performance across folds. Multi‑Gaussian models exhibit localized over‑fitting, reducing error in some regions while inflating it elsewhere.
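A k‑fold split and a residual summary of the kind described above could be sketched as follows. The helper names and the use of contiguous, unshuffled folds are assumptions for illustration; in practice the calibration records would normally be shuffled before splitting.

```python
import math

def k_fold_indices(n_samples, k=5):
    """Split range(n_samples) into k contiguous folds.

    Returns a list of (train_indices, test_indices) pairs, one per fold.
    """
    if not 1 < k <= n_samples:
        raise ValueError("need 1 < k <= n_samples")
    base, extra = divmod(n_samples, k)
    folds = []
    start = 0
    for f in range(k):
        size = base + (1 if f < extra else 0)  # first `extra` folds get one more
        folds.append(list(range(start, start + size)))
        start += size
    splits = []
    for f in range(k):
        train = [i for g in range(k) if g != f for i in folds[g]]
        splits.append((train, folds[f]))
    return splits

def rms_residual(measured, predicted):
    """Root-mean-square difference between measurements and predictions."""
    if len(measured) != len(predicted) or not measured:
        raise ValueError("need two non-empty sequences of equal length")
    return math.sqrt(sum((m - p) ** 2 for m, p in zip(measured, predicted)) / len(measured))
```

For each split, the SSF parameters would be fitted on the training indices and `rms_residual` evaluated on the held-out indices, giving one out-of-sample error per fold.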

Consequently, the paper concludes that a simple isotropic Gaussian centered near the physical center of the photodiode adequately describes the sensor’s spatial response for the required accuracy. The estimated parameters correspond to a standard deviation of roughly 3 mm, consistent with the sensor’s 10 mm × 10 mm active area.

The final section outlines future work. The immediate goal is to embed the calibrated SSF into the robot’s Bayesian learning software, allowing the inference engine to convolve hypothesized illumination fields with the SSF and generate more accurate likelihoods. The authors plan to quantify the impact of this refined sensor model on task performance, specifically measuring reductions in search time and increases in detection reliability when locating a white circle in a dark field. Longer‑term objectives include applying the same calibration pipeline to other optical sensors (e.g., color or fiber‑optic detectors) and building a library of SSFs to support a broader class of robotic perception tasks.

In summary, the study demonstrates a rigorous, data‑driven approach to characterizing a light sensor’s spatial sensitivity, validates the use of Bayesian evidence for model selection, and provides a clear pathway for leveraging the resulting SSF to improve autonomous robotic learning and perception.
