Anomaly Detection Based on Access Behavior and Document Rank Algorithm

Distributed denial-of-service (DDoS) attacks are an ongoing and dangerous threat to the Internet. Commonly, DDoS attacks are carried out at the network layer, e.g., SYN flooding, ICMP flooding, and UDP flooding; these are called network-layer DDoS attacks. The intention of these attacks is to exhaust network bandwidth and deny service to authorized users of the victim systems. Derived from the lower layers, new application-layer DDoS attacks that use legitimate HTTP requests to overload victim resources are harder to detect. The situation is especially serious when such attacks take place during crowd events on a popular website. State-of-the-art approaches cannot handle the situation where there is no considerable deviation between normal and attacker activity. Page-rank and proximity-graph representations of online web accesses take considerable time in practice, so a detector should have lower computational complexity than proximity-graph search. We therefore propose a Web Access Table mechanism that holds data such as "who accessed what, how many times, and with what average rank" to find anomalous web access behavior. The system has low computational complexity and may considerably reduce processing time.

💡 Research Summary

The paper addresses the growing threat of application‑layer Distributed Denial‑of‑Service (DDoS) attacks that masquerade as legitimate HTTP requests. Traditional network‑layer defenses (e.g., SYN, ICMP, UDP flooding detection) are ineffective against such attacks because the malicious traffic follows normal protocol semantics and often blends with legitimate user activity, especially during high‑traffic events on popular websites. Existing application‑layer detection techniques that rely on PageRank or proximity‑graph representations of web accesses can theoretically capture the relational structure of pages and users, but they suffer from prohibitive computational and memory costs. Constructing a full graph of all users and pages, then performing similarity or ranking queries, typically requires O(N²) time and large storage, making real‑time deployment impractical for large‑scale services.

To overcome these limitations, the authors propose a lightweight “Web Access Table” (WAT) mechanism. WAT records three fundamental attributes for each request: (1) the identifier of the client (IP address, session cookie, or authentication token), (2) the URL of the accessed document, and (3) the number of times that client has accessed that document together with an average “rank” value. The rank is inspired by PageRank but is computed on‑the‑fly from access frequencies rather than from a pre‑computed graph, thus avoiding the expensive graph construction phase.
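
To make the table layout concrete, the following is a minimal sketch of a WAT-like structure, not the authors' implementation. The class name `WebAccessTable`, its methods, and in particular the rank formula (a document's share of all recorded accesses) are assumptions chosen for illustration; the summary does not specify how the rank is actually computed.

```python
from collections import Counter, defaultdict


class WebAccessTable:
    """Hypothetical in-memory Web Access Table: who accessed what, how often."""

    def __init__(self):
        self.counts = defaultdict(Counter)  # client id -> Counter(url -> hits)
        self.doc_hits = Counter()           # url -> hits across all clients
        self.total = 0                      # all recorded requests

    def record(self, client, url):
        """Register one request; a constant number of dictionary updates."""
        self.counts[client][url] += 1
        self.doc_hits[url] += 1
        self.total += 1

    def rank(self, url):
        # ASSUMPTION: rank is the document's share of all accesses so far.
        # The paper's exact rank formula is not given in the summary.
        return self.doc_hits[url] / self.total if self.total else 0.0

    def average_rank(self, client):
        """Access-weighted mean rank of the documents this client requested."""
        docs = self.counts.get(client)
        if not docs:
            return 0.0
        hits = sum(docs.values())
        return sum(n * self.rank(url) for url, n in docs.items()) / hits

    def rows(self, client):
        """Table rows for one client: (client, url, access count, rank)."""
        docs = self.counts.get(client, {})
        return [(client, url, n, self.rank(url)) for url, n in sorted(docs.items())]
```

Keying the counts by client first keeps per-client queries such as `average_rank` cheap; a flat dictionary keyed by `(client, url)` would work equally well if only per-pair lookups are needed.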

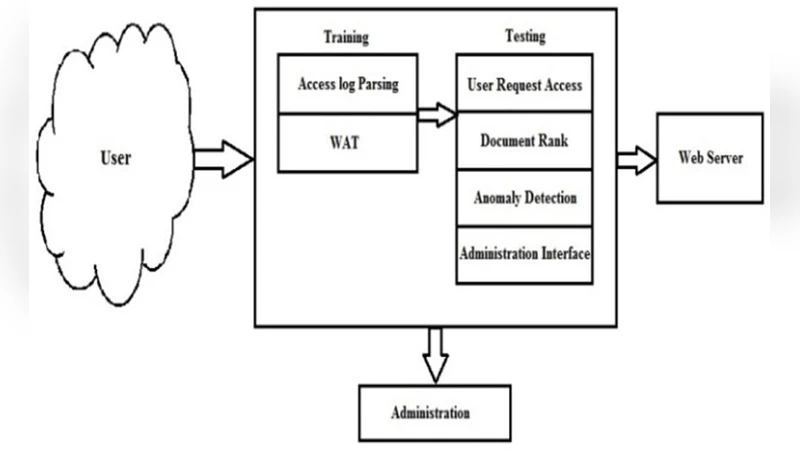

The system operates in two phases. In the training phase, normal traffic is collected over a representative period. For each (client, document) pair the algorithm computes the mean access count, the standard deviation, and the average rank. These statistics define a normal behavior envelope, typically expressed as mean ± k·σ (k = 2 in the authors’ experiments). In the testing phase, incoming logs are streamed and matched against the stored statistics. If a client’s current access count for a document exceeds the envelope (i.e., lies more than k standard deviations above the mean) or if the rank of a document suddenly spikes relative to its historical average, the session is flagged as anomalous. The flagged events can trigger alerts, rate‑limiting, or more aggressive mitigation actions.
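
The two phases can be sketched as follows. This is an illustrative reading of the description above, not the authors' code: the function names `train` and `is_anomalous`, the idea of splitting the training log into fixed time windows, and the policy for unseen (client, document) pairs are all assumptions. Only the access-count check is shown; a rank-spike check would follow the same pattern.

```python
from statistics import mean, pstdev


def train(windows):
    """Build per-pair statistics from normal traffic.

    `windows` is a list of dicts, one per training time window, each mapping
    (client, url) -> access count in that window (assumed log format).
    Returns {(client, url): (mean, standard deviation)}.
    """
    keys = set().union(*windows)
    stats = {}
    for key in keys:
        samples = [w.get(key, 0) for w in windows]  # absent pair = 0 accesses
        stats[key] = (mean(samples), pstdev(samples))
    return stats


def is_anomalous(stats, key, count, k=2.0):
    """Flag a count lying above the envelope mean + k * sigma."""
    if key not in stats:
        # ASSUMPTION: pairs never seen in training are not flagged here;
        # a real deployment would need an explicit policy for them.
        return False
    mu, sigma = stats[key]
    return count > mu + k * sigma
```

For example, training counts of 4, 6, and 5 for one pair give a mean of 5 and a population standard deviation of about 0.82, so with k = 2 a test-window count of 6 passes while a count of 7 is flagged.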

Key advantages of the WAT approach are:

- Reduced Complexity – No explicit graph is built; the data structure is a simple three‑column table, leading to O(N) storage and O(1) per‑record update/comparison cost.

- Real‑Time Feasibility – Because each request requires only a lookup and a few arithmetic operations, the system can handle thousands of requests per second on commodity hardware.

- Dual‑Dimension Detection – By monitoring both raw access frequency and the derived rank, the method can detect both volume‑based floods and “low‑and‑slow” attacks that subtly shift the popularity distribution of pages.
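
One way to obtain the constant per-record update cost claimed above is to maintain the mean and variance incrementally instead of storing every sample. The sketch below uses Welford's online algorithm for this; that choice is an assumption made for illustration, since the summary does not say how the authors update their statistics, and the class name `RunningStats` is hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Running mean and variance via Welford's online algorithm."""

    n: int = 0         # number of samples seen
    mean: float = 0.0  # running mean
    m2: float = 0.0    # running sum of squared deviations from the mean

    def update(self, x):
        """Fold one new sample in using O(1) time and memory."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def std(self):
        """Population standard deviation of the samples seen so far."""
        return math.sqrt(self.m2 / self.n) if self.n else 0.0
```

One `RunningStats` instance per (client, document) entry would keep storage linear in the number of entries while each incoming record touches only three numbers.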

The paper also acknowledges several limitations. First, the reliance on static statistical thresholds makes the system vulnerable to concept drift: legitimate traffic patterns often change with time of day, marketing campaigns, or seasonal events, potentially causing false positives or missed attacks. Second, an adaptive adversary could learn the normal distribution and deliberately mimic it, thereby evading detection. Third, the client identifier is assumed to be an IP address; in NAT, CDN, or proxy environments many users share the same IP, reducing granularity. The authors suggest augmenting WAT with additional identifiers (cookies, tokens) and incorporating temporal smoothing to mitigate these issues.

Future work outlined includes: (a) dynamic threshold adaptation using exponential moving averages or reinforcement learning; (b) integration of machine‑learning classifiers that combine WAT features with server‑side metrics such as response latency, CPU load, and error rates; (c) a distributed architecture where multiple front‑end servers share a synchronized WAT via a low‑latency key‑value store, enabling coordinated mitigation across a data center.
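
Item (a) could look roughly like the sketch below, which tracks an exponentially weighted mean and variance so that the threshold follows gradual traffic drift. It is a hypothetical illustration of the idea, not something the paper implements: the class name `EmaThreshold`, the smoothing factor `alpha`, the `warmup` length, and the decision to let every sample (even a flagged one) update the baseline are all assumptions.

```python
import math


class EmaThreshold:
    """Adaptive threshold from an exponentially weighted mean and variance."""

    def __init__(self, alpha=0.1, k=2.0, warmup=5):
        self.alpha = alpha    # smoothing factor; higher adapts faster
        self.k = k            # envelope width in standard deviations
        self.warmup = warmup  # samples to observe before flagging anything
        self.mean = None
        self.var = 0.0
        self.seen = 0

    def observe(self, x):
        """Return True if x exceeds the current envelope, then update it."""
        if self.mean is None:
            self.mean = float(x)
            self.seen = 1
            return False
        flagged = (
            self.seen >= self.warmup
            and x > self.mean + self.k * math.sqrt(self.var)
        )
        # Standard exponentially weighted mean/variance recurrences.
        diff = x - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
        self.seen += 1
        return flagged
```

Letting flagged samples update the baseline keeps the code short but allows a sustained attack to pull the threshold upward over time; skipping the update for flagged samples is the obvious alternative, at the risk of never adapting to a legitimate traffic increase.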

Experimental evaluation (as reported in the paper) uses synthetic traffic mixes that combine normal user behavior with injected application‑layer DDoS patterns. Compared to a baseline PageRank‑based detector, the WAT method achieves comparable detection accuracy (≈ 92 % true positive rate) while reducing processing time by a factor of 5–10, confirming the claimed computational efficiency.

In conclusion, the authors present a pragmatic, low‑overhead solution for detecting subtle application‑layer DDoS attacks. By replacing heavyweight graph analytics with a concise access‑frequency table and a simple rank approximation, the system can be deployed on high‑traffic web services without sacrificing real‑time responsiveness. While further refinement is needed to handle evolving traffic dynamics and sophisticated adversaries, the work constitutes a valuable contribution toward scalable, behavior‑based DDoS mitigation.