An EMUSIM Technique and its Components in Cloud Computing: A Review

Recent efforts to design and develop Cloud technologies focus on defining novel methods, policies and mechanisms for efficiently managing Cloud infrastructures. One key challenge that potential Cloud customers face before renting resources is knowing how their services will behave on a given set of resources, and what costs are involved in growing and shrinking their resource pool. Most studies in this area rely on simulation-based experiments, which use simplified models of the applications and the computing environment. To better predict a service's behavior on Cloud platforms, an integrated architecture based on both simulation and emulation has been proposed. This architecture, named EMUSIM, automatically extracts information about application behavior via emulation and then uses this information to generate the corresponding simulation model. This paper presents a brief overview of the EMUSIM technique and its components. It focuses on the architecture and operational details of the Automated Emulation Framework (AEF) and QAppDeployer, and proposes a CloudSim application for the simulation stage.

💡 Research Summary

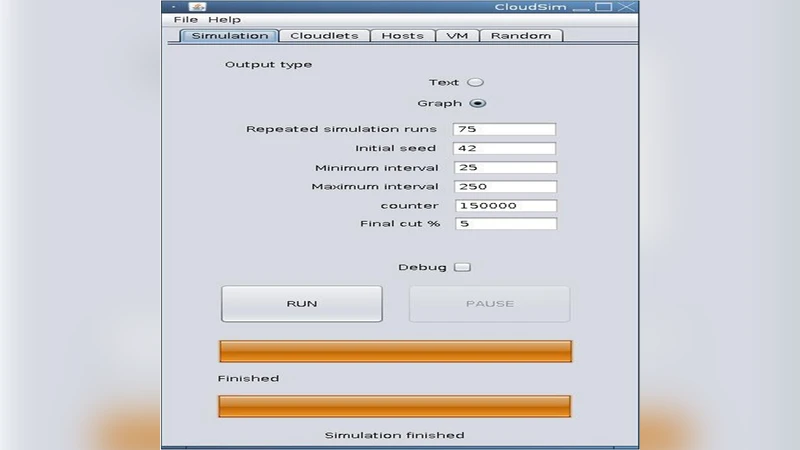

The paper presents EMUSIM, a hybrid framework that combines emulation and simulation to improve the accuracy of performance and cost predictions for cloud‑based applications. The authors identify a key limitation of existing research: most studies rely solely on simulation, which requires abstract models of applications and infrastructure and therefore often yields inaccurate results when estimating real‑world behavior. To address this, EMUSIM first runs the target application in an emulated environment using the Automated Emulation Framework (AEF). Users describe the desired test‑bed topology, virtual‑machine images, network characteristics, and workload parameters in a simple XML/JSON file. AEF automatically provisions the virtual machines, configures virtual switches, distributes the necessary software, and launches the application. The QAppDeployer component then schedules the application instances across the VMs, monitors execution, and collects fine‑grained metrics such as CPU cycles, memory consumption, disk I/O, and network traffic. These measurements constitute a detailed performance profile of the application under realistic resource constraints.
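
The step that turns raw measurements into a "performance profile" can be pictured with a small sketch. It assumes the monitoring data arrive as one record per task; the record fields (`TaskSample`, `Profile`, `build_profile`) are hypothetical names chosen for illustration and do not describe the actual QAppDeployer output format. The profile is reduced to means, a 95th-percentile runtime and peak memory, which is one plausible summary rather than the one EMUSIM necessarily uses.

```python
from dataclasses import dataclass
from statistics import mean, quantiles


# Hypothetical per-task measurement record; the field names are illustrative
# and are not the actual QAppDeployer output format.
@dataclass
class TaskSample:
    task_id: str
    runtime_s: float   # wall-clock execution time of the task, seconds
    cpu_util: float    # average CPU utilisation during the task, 0..1
    mem_mb: float      # peak resident memory, MB
    disk_io_mb: float  # data read + written, MB
    net_mb: float      # data sent + received, MB


@dataclass
class Profile:
    n_tasks: int
    mean_runtime_s: float
    p95_runtime_s: float
    mean_cpu_util: float
    peak_mem_mb: float
    mean_disk_io_mb: float
    mean_net_mb: float


def build_profile(samples: list[TaskSample]) -> Profile:
    """Summarise raw emulation measurements into a compact performance profile."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a percentile")
    runtimes = [s.runtime_s for s in samples]
    # With n=20 there are 19 cut points; the last one is the 95th percentile.
    # "inclusive" keeps the estimate within the observed min..max range.
    p95 = quantiles(runtimes, n=20, method="inclusive")[-1]
    return Profile(
        n_tasks=len(samples),
        mean_runtime_s=mean(runtimes),
        p95_runtime_s=p95,
        mean_cpu_util=mean(s.cpu_util for s in samples),
        peak_mem_mb=max(s.mem_mb for s in samples),
        mean_disk_io_mb=mean(s.disk_io_mb for s in samples),
        mean_net_mb=mean(s.net_mb for s in samples),
    )


if __name__ == "__main__":
    demo = [
        TaskSample("t1", 10.0, 0.80, 512.0, 20.0, 5.0),
        TaskSample("t2", 12.0, 0.90, 600.0, 22.0, 6.0),
    ]
    print(build_profile(demo))
```

Keeping a tail percentile alongside the mean matters because a profile built from averages alone would hide the slow tasks that usually drive SLA violations.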

In the second phase, the collected profile is fed into a CloudSim‑based simulation model. The model automatically translates the empirical resource‑usage patterns into CloudSim parameters (e.g., per‑task execution time distributions, CPU‑memory demand vectors, bandwidth requirements). Users can then explore a wide range of “what‑if” scenarios—different VM types, scaling policies, workload arrival rates, and pricing schemes—without needing to run costly real experiments. The simulation produces estimates of response time, throughput, total cost, and SLA violation probability for each scenario.
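
As a rough illustration of the translation and the "what-if" sweep, the sketch below relies on the usual CloudSim convention that a task (Cloudlet) is described by its length in million instructions (MI) and a VM by its MIPS rating, so that execution time is approximately length divided by MIPS. It does not call CloudSim itself (a Java library); it is a back-of-envelope Python model. The VM catalogue, prices, per-started-hour billing and the one-task-per-core execution model are all assumptions made for illustration, and a single mean runtime stands in for the execution-time distributions the summary mentions.

```python
import math
from dataclasses import dataclass


@dataclass
class VmType:
    name: str
    mips: float            # per-core MIPS rating (illustrative value)
    price_per_hour: float  # on-demand price per VM-hour (illustrative value)


def cloudlet_length_mi(runtime_s: float, emulated_vm_mips: float) -> float:
    """Convert a runtime measured on the emulated VM into a Cloudlet-style
    length in million instructions (MI), assuming the task is CPU-bound."""
    return runtime_s * emulated_vm_mips


def what_if(n_tasks: int, length_mi: float, vm: VmType, n_vms: int) -> tuple[float, float]:
    """Back-of-envelope makespan (s) and cost for n_tasks identical tasks on
    n_vms single-core VMs, one task per VM at a time, billed per started hour."""
    if n_tasks < 1 or n_vms < 1:
        raise ValueError("n_tasks and n_vms must be positive")
    per_task_s = length_mi / vm.mips          # execution time = length / MIPS
    waves = math.ceil(n_tasks / n_vms)        # tasks run in back-to-back batches
    makespan_s = waves * per_task_s
    billed_hours = math.ceil(makespan_s / 3600)
    cost = billed_hours * vm.price_per_hour * n_vms
    return makespan_s, cost


if __name__ == "__main__":
    # Hypothetical profile value: mean task runtime of 11 s on a 1000-MIPS emulated VM.
    length = cloudlet_length_mi(11.0, 1000.0)
    catalogue = [VmType("small", 1000.0, 0.05), VmType("large", 2000.0, 0.10)]
    for vm in catalogue:
        for n_vms in (5, 10, 20):
            makespan, cost = what_if(100, length, vm, n_vms)
            print(f"{vm.name:>5} x{n_vms:<2}: makespan {makespan:7.1f} s, cost {cost:.2f}")
```

The point of the sweep is that once the length in MI comes from measurement rather than a guess, every scenario in the loop inherits that grounding; a full CloudSim model would add VM scheduling, network and storage effects that this sketch ignores.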

The paper details the internal architecture of AEF and QAppDeployer. AEF’s core functions include (1) parsing the user‑provided topology description, (2) orchestrating the creation of virtual networks and switches, (3) transferring VM images and initialization scripts, and (4) launching the emulated environment on either a local cluster or a public cloud. QAppDeployer adds a lightweight job scheduler that distributes tasks evenly, handles data staging, and implements a retry mechanism for failed tasks, thereby ensuring robustness of the emulation run. The authors emphasize that the entire pipeline—from topology definition to simulation output—is fully automated, eliminating manual model‑building errors and dramatically reducing experiment turnaround time.
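
The scheduling behaviour attributed to QAppDeployer (even distribution plus retries for failed tasks) can be sketched as a round-robin dispatcher with a bounded retry queue. This is an assumed design for illustration, not QAppDeployer's actual algorithm; `run_task` is a hypothetical callback standing in for whatever launches a task on a VM and reports success.

```python
from collections import deque
from typing import Callable


def schedule_with_retry(
    tasks: list[str],
    vms: list[str],
    run_task: Callable[[str, str], bool],
    max_retries: int = 2,
) -> dict[str, str]:
    """Dispatch tasks to VMs in round-robin order; a failed task is re-queued
    and retried (on whichever VM is next in the rotation) up to max_retries
    times. Returns a mapping task -> final status."""
    if not vms:
        raise ValueError("no VMs available")
    queue = deque((t, 0) for t in tasks)   # (task, retries used so far)
    status: dict[str, str] = {}
    next_vm = 0
    while queue:
        task, retries = queue.popleft()
        vm = vms[next_vm % len(vms)]
        next_vm += 1                       # rotation spreads tasks evenly
        if run_task(task, vm):
            status[task] = f"done on {vm}"
        elif retries < max_retries:
            queue.append((task, retries + 1))  # try again later
        else:
            status[task] = "failed"
    return status


if __name__ == "__main__":
    # Hypothetical run_task: pretend every task scheduled on vm1 fails.
    def flaky(task: str, vm: str) -> bool:
        return vm != "vm1"

    print(schedule_with_retry(["a", "b", "c"], ["vm0", "vm1"], flaky))
```

Putting a failed task at the back of the queue, rather than retrying it immediately, lets the rotation move it to a different VM, which is what makes the retry useful when the failure is tied to one host.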

Experimental validation is performed with two representative workloads: a multi‑tier web application and a data‑analytics batch job. For each workload, the authors first obtain an empirical profile via AEF/QAppDeployer, then generate the corresponding CloudSim model, and finally evaluate several provisioning strategies (static VM pool, reactive auto‑scaling, predictive scaling). Compared with a baseline simulation that uses naïve, hand‑crafted resource estimates, EMUSIM reduces average response‑time prediction error by more than 12 % and improves cost‑prediction accuracy by roughly 15 %. Moreover, the more accurate models enable the identification of provisioning configurations that meet SLA targets while minimizing over‑provisioning, demonstrating practical value for cloud operators.
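
The summary does not say which error metric underlies the reported improvements, nor whether "12 %" means percentage points or a relative reduction. The sketch below assumes mean absolute percentage error (MAPE) and prints both readings. All numbers in it are made up for illustration and are not results from the paper.

```python
def mape(predicted: list[float], observed: list[float]) -> float:
    """Mean absolute percentage error between predictions and observations, in %."""
    if not observed or len(predicted) != len(observed):
        raise ValueError("need two non-empty sequences of equal length")
    if any(o == 0 for o in observed):
        raise ValueError("observed values must be non-zero")
    total = sum(abs(p - o) / abs(o) for p, o in zip(predicted, observed))
    return 100.0 * total / len(observed)


if __name__ == "__main__":
    # Made-up numbers for illustration only; not results from the paper.
    observed_ms = [100.0, 200.0]    # response times measured on the real system
    baseline_ms = [130.0, 150.0]    # hand-crafted simulation estimates
    profiled_ms = [110.0, 190.0]    # profile-driven simulation estimates
    base_err = mape(baseline_ms, observed_ms)
    prof_err = mape(profiled_ms, observed_ms)
    print(f"baseline MAPE {base_err:.1f}%, profiled MAPE {prof_err:.1f}%")
    print(f"absolute reduction {base_err - prof_err:.1f} points, "
          f"relative reduction {100 * (base_err - prof_err) / base_err:.1f}%")
```

The two readings can differ greatly: in this toy case the error drops by 20 percentage points, which is a relative reduction of about 73 %, so a claimed improvement is only comparable across studies when the metric and its baseline are stated.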

The discussion acknowledges current limitations. The emulation phase still requires a physical cluster of sufficient size; if the available hardware is too small, the collected profile may not capture behavior under high load. The present implementation focuses on IaaS‑style virtual machines, so extending EMUSIM to container‑orchestrated (Kubernetes) or serverless (FaaS) environments will require additional abstraction layers. Potential overheads introduced by metric collection and model translation are also noted, and the authors suggest future work on distributed emulation farms and tighter integration with major cloud provider APIs. Security and privacy considerations for the data exchanged between emulation and simulation components are highlighted as open issues.

In summary, EMUSIM offers a practical, automated methodology that bridges the gap between high‑fidelity emulation and scalable simulation. By extracting realistic performance profiles from a controlled emulated run and reusing them in a flexible simulation environment, EMUSIM enables cloud service designers and researchers to explore large‑scale provisioning and pricing scenarios with substantially higher confidence. This approach promises significant cost savings, better SLA compliance, and a solid foundation for future research on adaptive cloud resource management.