A Two-Level Variant of Additive Schwarz Preconditioning for Use in Reservoir Simulation

The computation time for reservoir simulation is dominated by the linear solver. The sets of linear equations which arise in reservoir simulation have two distinctive features: the problems are usually highly anisotropic, with a dominant vertical flow direction, and the commonly used fully implicit method requires a simultaneous solution for pressure and saturation or molar concentration variables. These variables behave quite differently, with the pressure feeling long-range effects while the saturations vary locally. In this paper we review preconditioned iterative methods used for solving the linear system equations in reservoir simulation and their parallelisation. We then propose a variant of the classical additive Schwarz preconditioner designed to achieve better results on a large number of processors and discuss some directions for future research.

💡 Research Summary


The paper addresses the computational bottleneck in reservoir simulation, namely the solution of large, sparse, non‑symmetric linear systems that arise from fully implicit discretizations of multiphase flow. These systems contain two fundamentally different types of unknowns: pressure, which exhibits long‑range, elliptic behavior, and saturation (or molar concentration), which varies sharply and locally. Traditional preconditioners such as incomplete LU (ILU), nested factorisation, or classical additive Schwarz (AS) either struggle with strong anisotropy, suffer from poor parallel scalability, or smear the local saturation fronts when coarse‑grid corrections are applied.

The authors first review the state‑of‑the‑art iterative solvers used in reservoir simulators (GMRES, BiCGStab, ORTHOMIN) and their common preconditioners (ILU(0), nested factorisation, algebraic multigrid). They point out that while multigrid efficiently captures the pressure’s low‑frequency modes, it inevitably smooths saturation variables, leading to loss of physical fidelity. Classical AS methods improve parallelism by assigning each processor a sub‑domain plus an overlap, but they do not adequately eliminate the small eigenvalues associated with pressure, causing iteration counts to rise as the processor count grows.

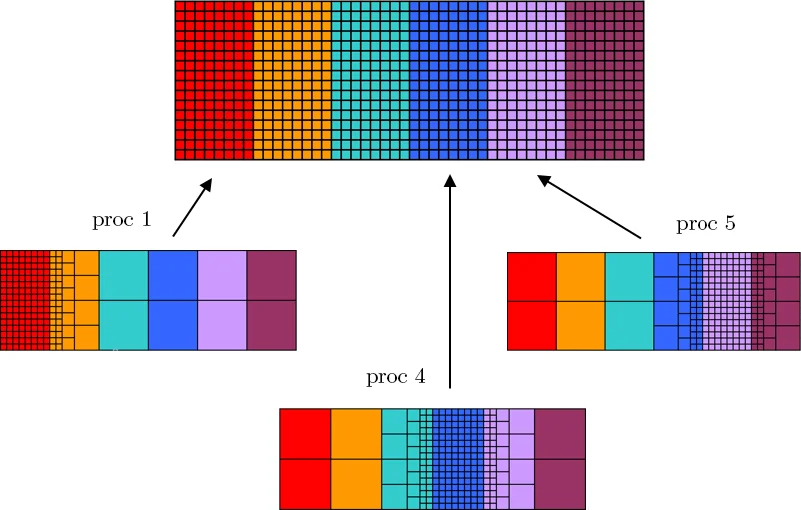

To overcome these limitations, the paper proposes a two‑level additive Schwarz preconditioner specially tailored for multiphase flow. The key idea is to replace the usual restriction operator $R_i$ (which simply truncates the matrix outside a processor’s sub‑domain) with a coarsening operator. Each processor builds a local coarse‑grid representation of the parts of the global matrix that lie outside its own sub‑domain. This is done by simple element‑wise summation, which guarantees that the sum of residuals over each coarsened region is zero, thereby preserving mass balance in a coarse sense.
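The summation-based coarsening can be illustrated with a small NumPy sketch. It is not the authors' code: the tridiagonal test matrix, the group sizes, and the helper name `coarsening_operator` are assumptions chosen for illustration. The operator keeps one identity row per owned unknown and one row of ones per lumped group of outside unknowns, so a coarse residual is simply the sum of the fine residuals in its group.

```python
import numpy as np

def coarsening_operator(n, own, groups):
    """Hypothetical helper: identity rows for owned unknowns, followed by
    one summation row (all ones) per group of outside unknowns."""
    R = np.zeros((len(own) + len(groups), n))
    for row, j in enumerate(own):
        R[row, j] = 1.0
    for k, group in enumerate(groups):
        R[len(own) + k, group] = 1.0
    return R

# Assumed 1D test matrix: tridiagonal, diagonally dominant, non-symmetric.
n = 12
A = 2.5 * np.eye(n) - 1.2 * np.eye(n, k=1) - 0.8 * np.eye(n, k=-1)

own = [0, 1, 2, 3]                       # unknowns owned by this processor
groups = [[4, 5, 6, 7], [8, 9, 10, 11]]  # outside unknowns, lumped in fours
R = coarsening_operator(n, own, groups)

A_i = R @ A @ R.T                        # owned block kept, the rest summed
r = np.arange(1.0, n + 1.0)              # an arbitrary fine-grid residual
dx = R.T @ np.linalg.solve(A_i, R @ r)   # local correction, prolonged back
r_new = r - A @ dx                       # residual after the correction

# R @ r_new vanishes: the owned residuals are zero and the residuals
# in each coarsened group sum to zero.
coarse_check = R @ r_new
```

The vanishing of `R @ r_new` follows algebraically from the Galerkin form `A_i = R A R^T`, which is the coarse-sense balance property the paragraph above describes.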

Two kinds of coarse representations are introduced:

- Near‑field coarse operators – constructed for each processor’s immediate neighbours. They are fine enough to capture local saturation variations while still providing a boundary condition for the processor’s high‑resolution sub‑problem.

- Far‑field coarse operator – a global coarse grid shared by all processors, designed to capture the long‑range pressure effects.
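One way to picture the two resolutions is the following sketch for a 1D domain split into equal contiguous blocks. The function `near_far_groups`, its parameters, and the choice of one far-field group per remote block are assumptions made for illustration; the paper's actual coarsening levels may differ.

```python
def near_far_groups(n, nproc, i, near_size):
    """Hypothetical helper: split the unknowns outside processor i's block.

    Neighbouring blocks are cut into groups of `near_size` unknowns
    (near field); every other block becomes a single group (far field).
    """
    block = n // nproc
    own = list(range(i * block, (i + 1) * block))
    groups = []
    for p in range(nproc):
        if p == i:
            continue
        cells = list(range(p * block, (p + 1) * block))
        size = near_size if abs(p - i) == 1 else block
        groups += [cells[s:s + size] for s in range(0, block, size)]
    return own, groups

# Processor 1 of 4 on 32 unknowns: its two neighbours are lumped in pairs,
# and the remaining remote block is lumped into one far-field group.
own, groups = near_far_groups(n=32, nproc=4, i=1, near_size=2)
```

Each group would correspond to one summation row of the coarsening operator, so the near field contributes many small rows and the far field a few large ones.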

The algorithm proceeds as follows:

- For each processor $i$, form the restricted matrix $A_i = R_i A R_i^T$, where $R_i$ now includes the coarsened blocks.

- Solve the local system $A_i \Delta x_i = -\Delta r_i$ exactly (in the prototype implementation by Gaussian elimination) to obtain a local preconditioner $B_i^{-1} = R_i^T A_i^{-1} R_i$.

- Assemble the global search direction by concatenating the rows corresponding to each processor: $\Delta x = \sum_i P_i \Delta x_i$, where $P_i$ projects onto the processor’s variables.

- At the start of each nonlinear (Newton) iteration, recompute the coarse matrices; during linear iterations only the coarsened residuals need to be communicated.

The authors implemented a prototype in Python and tested it on several benchmark matrices: the public ORSREG1 matrix and four variants of the SPE1 model (different well configurations, both IMPES and fully implicit formulations). The matrices have roughly 2,000 to 10,000 unknowns, with condition numbers up to 6 × 10⁵. Three configurations were compared: (a) near‑field only (standard AS), (b) far‑field only (global coarse correction), and (c) the combined two‑level approach.

Results show that the combined approach consistently reduces the number of Krylov iterations by 30‑45 % relative to the baseline AS and outperforms ILU(0) and nested factorisation, especially as the number of processors increases. The iteration count remains nearly constant when scaling from 8 to 128 processors, indicating good parallel scalability. Moreover, the method preserves sharp saturation fronts because the coarse correction is applied only to the pressure‑related components; saturation variables are solved on the fine sub‑domains without smearing.

The paper acknowledges that the current implementation solves each sub‑domain exactly, which is not feasible for industrial‑scale problems. Future work includes integrating high‑performance sparse direct or iterative solvers for the local problems, automating the selection of near‑ and far‑field coarsening levels, extending the approach to unstructured grids and highly heterogeneous reservoirs, and coupling the preconditioner more tightly with the nonlinear Newton solver.

In summary, the authors present a novel two‑level additive Schwarz preconditioner that respects the distinct physical natures of pressure and saturation, achieves superior convergence and parallel efficiency, and offers a practical pathway toward scalable reservoir simulation on modern high‑core‑count architectures.
