Understanding Individual Differences: Towards Effective Mobile Interface Design and Adaptation for the Blind

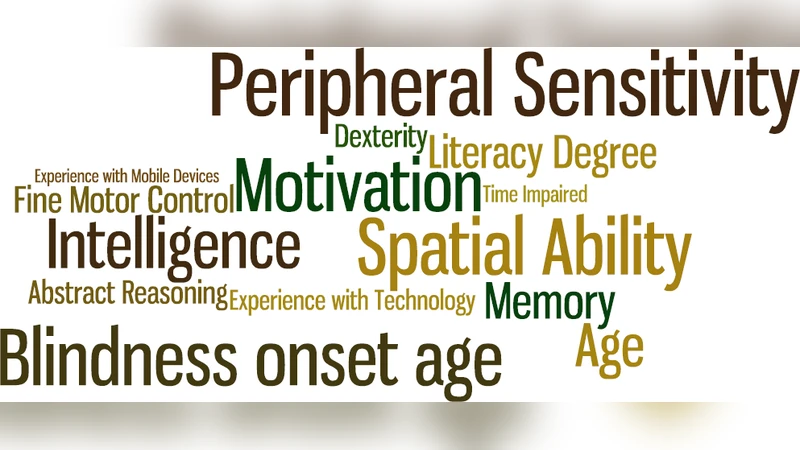

No two people are alike. We usually ignore this diversity because we are able to adapt and, without noticing, become experts in interfaces that were probably poorly suited to us to begin with. This adaptation is not always within the user’s reach. One neglected group is the blind. Spatial ability, memory, and tactile sensitivity are some of the characteristics that diverge between users. Regardless, all are presented with the same interaction methods, which ignore their capabilities and needs. Interaction with mobile devices is highly visually demanding, which widens the gap among blind people. Our research goal is to identify the individual attributes that influence mobile interaction, with a focus on blind users, and to match them with mobile interaction modalities in a comprehensive and extensible design space. We aim to provide knowledge for device design, device prescription, and interface adaptation.

💡 Research Summary

The paper addresses a critical gap in mobile accessibility for blind users by moving beyond the conventional “one‑size‑fits‑all” approach and focusing on the substantial individual differences that exist within this population. The authors identify three core cognitive and sensory attributes—spatial ability, working memory, and tactile sensitivity—as key factors that influence how blind users interact with mobile devices. They develop a systematic methodology to quantify these attributes, map them to interaction modalities, and create an extensible design space that can guide device design, prescription, and dynamic UI adaptation.

Background and Motivation

Mobile interfaces are predominantly visual, and existing assistive technologies such as screen readers or voice assistants are applied uniformly to all blind users. Prior research has hinted that blind individuals vary widely in spatial cognition, memory capacity, and tactile perception, yet these variations have rarely been incorporated into design decisions. The authors argue that ignoring these differences perpetuates a usability gap, especially as mobile interactions become increasingly gesture‑driven and visually demanding.

Research Objectives

- Establish reliable, low‑burden assessments for spatial ability, working memory, and tactile sensitivity that can be administered to blind users.

- Construct a three‑dimensional design space linking (a) the user’s attribute profile, (b) the available interaction modalities (voice, haptic feedback, physical keyboard, gesture‑based input), and (c) UI adaptation strategies (feedback intensity, menu depth, input complexity).

- Validate the design space through an empirical study and demonstrate how it can inform device selection and real‑time UI adaptation.
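The three axes of the design space can be pictured as a Cartesian product of profiles, modalities, and adaptation strategies. The sketch below is a minimal illustration, not the authors' implementation: it assumes each attribute is discretized into three levels (low, moderate, high), which the summary does not specify, and takes the modality and strategy values from the objectives listed above. All class and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from itertools import product


class Level(Enum):
    # Assumption: each attribute is discretized into three levels
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


class Modality(Enum):
    VOICE = "voice"
    HAPTIC = "haptic feedback"
    PHYSICAL_KEYBOARD = "physical keyboard"
    GESTURE = "gesture-based input"


class Strategy(Enum):
    FEEDBACK_INTENSITY = "feedback intensity"
    MENU_DEPTH = "menu depth"
    INPUT_COMPLEXITY = "input complexity"


@dataclass(frozen=True)
class Profile:
    """Axis (a): a user's attribute profile."""
    spatial: Level
    memory: Level
    tactile: Level


@dataclass(frozen=True)
class DesignPoint:
    """One cell of the design space: profile x modality x strategy."""
    profile: Profile
    modality: Modality
    strategy: Strategy


def enumerate_design_space():
    """Return every combination of the three axes (hypothetical helper)."""
    profiles = [Profile(s, m, t) for s, m, t in product(Level, repeat=3)]
    return [
        DesignPoint(p, mod, strat)
        for p, mod, strat in product(profiles, Modality, Strategy)
    ]
```

Under these assumptions the space has 27 × 4 × 3 = 324 cells. Extending it amounts to adding a member to one of the enumerations, such as a new modality, without changing the rest of the structure.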

Methodology

A total of 48 blind participants (gender‑balanced, aged 18‑55, with varying degrees of visual loss) completed a battery of tests:

- Spatial ability: mental rotation tasks and 3‑D object manipulation.

- Working memory: digit‑span and sequential command recall tasks.

- Tactile sensitivity: two‑point discrimination thresholds and skin conductance measures.
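The summary does not say how raw test scores become an attribute profile. One plausible approach, shown here purely as an assumption, is to standardize each measure across the cohort and bin the z-scores at a hypothetical cut-off of ±0.5 SD. The sketch uses only three measures (mental-rotation accuracy, digit span, and two-point threshold in millimetres); skin conductance is omitted. The two-point score is negated because a smaller threshold indicates finer tactile acuity. Function names and cut-offs are illustrative.

```python
from statistics import mean, stdev


def z_scores(values):
    """Standardize raw scores against the cohort (sample SD)."""
    mu, sd = mean(values), stdev(values)
    if sd == 0:
        raise ValueError("all scores are identical; cannot standardize")
    return [(v - mu) / sd for v in values]


def to_level(z, cut=0.5):
    """Bin a z-score; the +/-0.5 SD cut-off is a hypothetical choice."""
    if z < -cut:
        return "low"
    if z > cut:
        return "high"
    return "moderate"


def build_profiles(rotation_acc, digit_span, two_point_mm):
    """Turn per-participant raw scores into attribute-level profiles."""
    spatial = z_scores(rotation_acc)
    memory = z_scores(digit_span)
    # A smaller two-point threshold means finer tactile acuity, so flip the sign
    tactile = [-z for z in z_scores(two_point_mm)]
    return [
        {"spatial": to_level(s), "memory": to_level(m), "tactile": to_level(t)}
        for s, m, t in zip(spatial, memory, tactile)
    ]
```

Standardizing within the cohort makes the levels relative to the participant pool, so the same raw score could map to a different level in a different sample.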

After profiling, each participant performed a set of standardized mobile tasks (app navigation, text entry, gesture execution) using four distinct modalities: (1) voice commands, (2) haptic‑enhanced feedback, (3) a physical keypad with adjustable key size, and (4) gesture‑based controls. Performance metrics included task completion time, error rate, and subjective satisfaction (Likert scale). Multivariate regression and cluster analysis were applied to uncover relationships between user attributes and modality performance.
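The multivariate regression step can be illustrated with ordinary least squares solved through the normal equations. This is a generic standard-library sketch, not the authors' analysis pipeline (a statistics package would normally be used), and the cluster-analysis step is not shown. In the intended use, each row of `X` would hold one participant's standardized attribute scores and `y` a performance metric, such as completion time with one modality; the function name `ols` is hypothetical.

```python
def ols(X, y):
    """Fit y ~ b0 + b1*x1 + ... + bk*xk by ordinary least squares.

    X: list of rows, each a list of k predictor values.
    Returns the coefficients [b0, b1, ..., bk].
    """
    rows = [[1.0] + list(r) for r in X]  # prepend an intercept column
    p = len(rows[0])
    # Normal equations: (A^T A) b = A^T y
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    aty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    # Solve the augmented system by Gauss-Jordan elimination with partial pivoting
    m = [ata[i] + [aty[i]] for i in range(p)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        m[col], m[pivot] = m[pivot], m[col]
        piv = m[col][col]
        m[col] = [v / piv for v in m[col]]
        for r in range(p):
            if r != col:
                f = m[r][col]
                m[r] = [a - f * b for a, b in zip(m[r], m[col])]
    return [m[i][p] for i in range(p)]
```

A positive coefficient on, say, a memory score for a voice-task error rate would indicate that errors rise with that score; the sign and magnitude of each coefficient are what link an attribute to modality performance.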

Key Findings

- Spatial ability strongly predicts success with complex gestures. High‑spatial users excel with multi‑finger swipes and rotations, preferring gesture‑centric UIs over voice. Low‑spatial users benefit from simple one‑finger gestures or clearly labeled physical keys.

- Working memory influences the effectiveness of voice‑driven input and deep menu structures. Users with higher memory capacity handle longer voice commands and nested menus with fewer errors, whereas those with limited memory require shallow navigation hierarchies and step‑by‑step auditory guidance.

- Tactile sensitivity determines the utility of haptic cues. Participants with low sensitivity cannot reliably detect subtle vibrations, leading to higher error rates when haptic feedback is the sole error‑signaling mechanism. Enlarged key spacing and textured surfaces dramatically improve accuracy for this group, while high‑sensitivity users appreciate fine‑grained vibration patterns for status feedback.

These correlations allowed the authors to populate an “attribute‑modality mapping matrix.” For example, a profile of high spatial ability, moderate memory, and high tactile sensitivity maps to a UI that emphasizes gestures, fine haptic cues, and optional voice prompts. Conversely, a profile of low spatial ability, low memory, and low tactile sensitivity maps to a UI with large physical keys, strong vibration alerts, and minimal gesture complexity.
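The mapping matrix can be approximated with per-attribute rules drawn from the key findings. The sketch below reproduces the two example profiles above, but it is an illustration rather than the authors' matrix: assigning each UI element to a single attribute, the handling of the "moderate" level, and all key and value names are assumptions, since the summary only describes the extremes and two combined profiles.

```python
def map_profile(spatial, memory, tactile):
    """Map attribute levels ("low" | "moderate" | "high") to UI settings.

    "high" and "low" rules follow the key findings; branches marked
    "assumption" are illustrative. Keys and values are hypothetical.
    """
    ui = {}
    # Spatial ability -> primary input and gesture complexity
    if spatial == "high":
        ui["primary_input"] = "gestures"
        ui["gesture_complexity"] = "multi-finger"
    elif spatial == "moderate":  # assumption
        ui["primary_input"] = "gestures"
        ui["gesture_complexity"] = "single-finger"
    else:
        ui["primary_input"] = "large physical keys"
        ui["gesture_complexity"] = "minimal"
    # Working memory -> menu depth and voice support
    if memory == "high":
        ui["menu_depth"] = "nested"
        ui["voice"] = "long commands"
    elif memory == "moderate":
        ui["menu_depth"] = "moderate"  # assumption
        ui["voice"] = "optional prompts"
    else:
        ui["menu_depth"] = "shallow"
        ui["voice"] = "step-by-step guidance"
    # Tactile sensitivity -> haptic style and key layout
    if tactile == "high":
        ui["haptics"] = "fine-grained patterns"
        ui["key_spacing"] = "standard"
    elif tactile == "moderate":  # assumption
        ui["haptics"] = "standard"
        ui["key_spacing"] = "standard"
    else:
        ui["haptics"] = "strong alerts"
        ui["key_spacing"] = "enlarged, textured"
    return ui
```

Keeping one rule block per attribute makes the matrix extensible: a new attribute adds a block rather than multiplying the number of hand-written profile combinations.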

Dynamic Adaptation Framework

Beyond static mapping, the paper proposes a runtime adaptation engine. After an initial profiling session, the system deploys a baseline UI tailored to the user’s attributes. During interaction, the engine continuously monitors error patterns, latency, and user‑initiated corrections. When predefined thresholds are crossed (e.g., rising gesture error rate), the engine automatically simplifies gestures, amplifies haptic feedback, or inserts voice confirmations. This closed‑loop adaptation reduces fatigue, shortens learning curves, and supports long‑term proficiency.
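A closed-loop engine of this kind can be sketched as a sliding window over recent gesture attempts. The window size, error threshold, three-step gesture ladder, haptic gain scale, and the order of escalation (simplify gestures first, then amplify haptics, then add voice confirmations) are all hypothetical parameters; the paper's actual thresholds and policies are not described in this summary.

```python
from collections import deque

# Assumption: gesture sets ordered from most to least complex
GESTURE_LEVELS = ["multi-finger", "single-finger", "minimal"]


class AdaptationEngine:
    """Hypothetical threshold-based adapter for gesture errors."""

    def __init__(self, window=20, error_threshold=0.3, max_haptic=3):
        self.outcomes = deque(maxlen=window)  # True = error, False = success
        self.error_threshold = error_threshold
        self.gesture_level = 0  # index into GESTURE_LEVELS
        self.haptic_gain = 1  # assumption: integer intensity steps
        self.max_haptic = max_haptic
        self.voice_confirmations = False

    def error_rate(self):
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def record(self, error):
        """Log one gesture attempt and adapt if the threshold is crossed."""
        self.outcomes.append(bool(error))
        window_full = len(self.outcomes) == self.outcomes.maxlen
        if window_full and self.error_rate() > self.error_threshold:
            self._simplify()
            # Start a fresh window so one error streak triggers one step
            self.outcomes.clear()

    def _simplify(self):
        # Assumed escalation order: gestures, then haptics, then voice
        if self.gesture_level < len(GESTURE_LEVELS) - 1:
            self.gesture_level += 1
        elif self.haptic_gain < self.max_haptic:
            self.haptic_gain += 1
        else:
            self.voice_confirmations = True

    @property
    def gesture_complexity(self):
        return GESTURE_LEVELS[self.gesture_level]
```

Clearing the window after each adaptation is a design choice: it gives the user time to settle into the simplified interface before the engine can react again, which limits oscillation between configurations.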

Implications for Design, Prescription, and Policy

- Device Design: Manufacturers should offer interchangeable keypad modules with variable key dimensions and tactile textures, as well as haptic actuators capable of a broader intensity range.

- Device Prescription: Rehabilitation centers and assistive‑technology providers can use the profiling battery to recommend specific smartphone models (e.g., devices with larger physical buttons for low‑tactile users, or phones with robust voice‑recognition for high‑memory users).

- Standards and Guidelines: The authors advocate for an “individualized accessibility layer” in international UI standards, enabling developers to embed attribute‑based adaptation logic without reinventing the wheel.

Limitations and Future Work

The study’s sample size, while sufficient for initial validation, limits generalizability across cultures and languages. The adaptation algorithms were evaluated in a controlled lab environment; real‑world deployment on commercial operating systems remains to be demonstrated. Future research will expand the participant pool, incorporate machine‑learning models for predictive adaptation, and conduct longitudinal field trials to assess long‑term benefits.

Conclusion

By rigorously quantifying spatial cognition, working memory, and tactile perception among blind users and linking these traits to interaction modalities, the paper provides a concrete, extensible framework for personalized mobile UI design. This shift from uniform accessibility solutions to individualized, adaptive interfaces promises to narrow the usability gap for blind users, informing device engineering, prescription practices, and future accessibility standards.