Controlling Citizens Cyber Viewing Using Enhanced Internet Content Filters

Information passing through the internet is generally unrestricted and uncontrollable, and a good web content filter acts very much like a sieve. This paper looks at how citizens' internet viewing can be controlled using content filters to prevent access to illegal sites and malicious content in Nigeria. Primary data were obtained by administering 100 questionnaires. The data were analyzed using the Statistical Package for the Social Sciences (SPSS). The results of the study show that 66.4% of the respondents agreed that the internet is being abused and that the abuse can be controlled by the use of content filters. PHP, MySQL and Apache were used to design a content filter program. It was recommended that a lot still needs to be done by public organizations, academic institutions, and the government and its agencies, especially the Economic and Financial Crimes Commission (EFCC), in Nigeria to control internet abuse by the underaged and by criminals.

💡 Research Summary

The paper titled “Controlling Citizens Cyber Viewing Using Enhanced Internet Content Filters” investigates how a web‑based content‑filtering system can be employed in Nigeria to curb illegal and harmful online activities. The authors begin by describing the unrestricted flow of information on the Internet and argue that, much like a sieve, a well‑designed filter can separate undesirable content from legitimate use. A brief literature review notes that while numerous technical solutions (keyword matching, URL blacklists, DNS‑level blocking, and commercial proxy filters) exist, few studies have examined the social acceptance and policy implications of such tools in a developing‑country context.

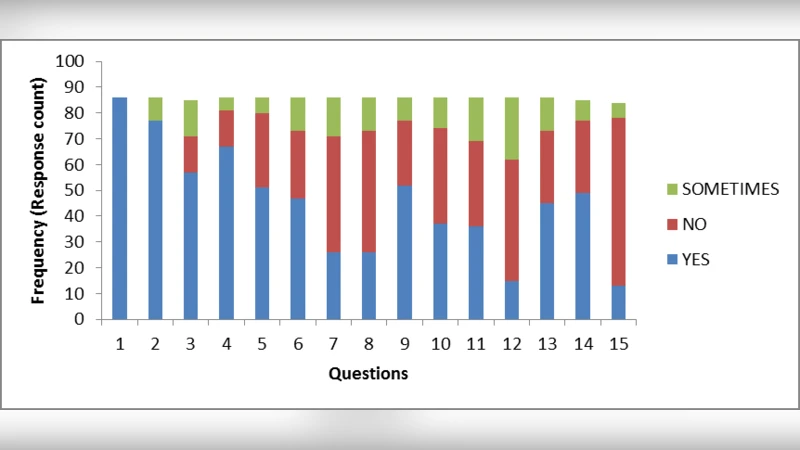

To gauge public opinion, the researchers administered a structured questionnaire to 100 participants drawn from various demographic groups. The questionnaire covered Internet usage habits, perceptions of online abuse, attitudes toward content filtering, and concerns about freedom of expression. Data were entered into SPSS (Version 22) and subjected primarily to frequency analysis, with some cross‑tabulations by age and education level. The key quantitative finding is that 66.4% of respondents agree that the Internet is being abused and that this abuse could be mitigated through the deployment of content filters. Younger respondents (18‑25) reported the highest perception of abuse, while the 26‑35 cohort expressed the strongest confidence in the efficacy of filtering. Nonetheless, 45% of participants voiced worries that filters might infringe on free speech, and 30% believed that filters would have a substantial impact on crime prevention.
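
The frequency analysis and cross‑tabulation described above can be illustrated with a small sketch. The study used SPSS; Python's standard library is swapped in here purely for illustration. The `responses` list is invented toy data, not the study's data, and the helper names `frequency_table` and `crosstab` are hypothetical.

```python
from collections import Counter

# Hypothetical toy responses, NOT the study's data:
# (age_group, agrees_that_filters_can_control_abuse)
responses = [
    ("18-25", True), ("18-25", True), ("18-25", False),
    ("26-35", True), ("26-35", True), ("26-35", False),
    ("36+", True), ("36+", False),
]


def frequency_table(values):
    """Return the percentage share of each distinct value, to one decimal."""
    counts = Counter(values)
    total = sum(counts.values())
    return {value: round(100 * n / total, 1) for value, n in counts.items()}


def crosstab(pairs):
    """Count column values within each row category (a simple two-way table)."""
    table = {}
    for row, col in pairs:
        table.setdefault(row, Counter())[col] += 1
    return table


agreement_share = frequency_table(agrees for _, agrees in responses)
by_age = crosstab(responses)
```

With real survey data, `agreement_share` would correspond to a headline percentage such as the 66.4% reported, and `by_age` to the breakdown by age cohort.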

On the technical side, the authors designed a prototype filtering platform using the LAMP stack (PHP, MySQL, Apache). Administrators populate a MySQL table with URLs or keyword patterns to be blocked; an Apache module intercepts each HTTP request, compares it against the blacklist, and either forwards the request or returns a custom “blocked” page. A web‑based admin console allows real‑time editing of the block list and inspection of log files. Basic security hardening (input sanitization, prepared statements) was implemented to guard against SQL injection and cross‑site scripting. Performance testing on a modest virtual machine showed an average added latency of roughly 120 ms per request, which the authors deem acceptable for a pilot deployment.
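
The request‑checking flow described above (look up the block list, then forward the request or return a "blocked" page) might look roughly like the following minimal sketch. It is not the authors' implementation: the prototype was built with PHP, MySQL, and Apache, whereas this sketch uses Python with an in‑memory SQLite database so that it is self‑contained. The table layout, the sample patterns, and the function names `build_blocklist`, `is_blocked`, and `handle_request` are all assumptions made for illustration.

```python
import sqlite3
from urllib.parse import urlsplit


def build_blocklist(conn):
    """Create a hypothetical block-list table and add two sample entries."""
    conn.execute(
        "CREATE TABLE blocklist (kind TEXT NOT NULL, pattern TEXT NOT NULL)"
    )
    # Parameterized inserts, in the spirit of the prepared statements
    # the summary mentions as a guard against SQL injection.
    conn.executemany(
        "INSERT INTO blocklist (kind, pattern) VALUES (?, ?)",
        [
            ("host", "blocked.example"),    # assumed sample host
            ("keyword", "forbidden-term"),  # assumed sample keyword
        ],
    )


def is_blocked(conn, url):
    """Return True if the URL's host or text matches a block-list entry."""
    host = (urlsplit(url).hostname or "").lower()
    lowered_url = url.lower()
    for kind, pattern in conn.execute("SELECT kind, pattern FROM blocklist"):
        pattern = pattern.lower()
        # Host entries match the host itself and any of its subdomains.
        if kind == "host" and (host == pattern or host.endswith("." + pattern)):
            return True
        # Keyword entries match anywhere in the URL string.
        if kind == "keyword" and pattern in lowered_url:
            return True
    return False


def handle_request(conn, url):
    """Mimic the filter's decision: a 'blocked' response or a forward."""
    if is_blocked(conn, url):
        return 403, "Blocked by content filter"
    return 200, "Forwarded to origin"


conn = sqlite3.connect(":memory:")
build_blocklist(conn)
```

Calling `handle_request(conn, url)` returns a status code and message pair. Scanning the whole table on every request keeps the sketch short; a filter handling real traffic would more likely cache the list in memory or index the host column.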

The discussion links the survey outcomes with the prototype’s capabilities. The majority endorsement of filtering provides a social mandate for further development, yet the expressed concerns about civil liberties underscore the need for transparent rule‑making, independent oversight, and mechanisms for users to appeal or request unblocking. The authors acknowledge that a technical filter alone cannot eradicate cybercrime; they advocate for a multi‑stakeholder approach involving law‑enforcement agencies (particularly Nigeria’s Economic and Financial Crimes Commission, EFCC), educational institutions, NGOs, and private sector partners. They also note that the prototype lacks advanced features such as machine‑learning‑based content classification, deep packet inspection, and scalability to national‑level traffic volumes.

In conclusion, the study presents an initial proof‑of‑concept that a simple LAMP‑based filter can be built and that a sizable portion of Nigerian Internet users support its use to combat online abuse. However, methodological limitations—small, non‑random sample, limited statistical depth, and scant technical detail—restrict the generalizability of the findings. The authors recommend expanding the sample size, conducting longitudinal impact assessments, integrating more sophisticated detection algorithms, and establishing a clear legal framework that balances security objectives with constitutional rights. Future research should also explore user‑centered design, cross‑border cooperation, and the economic feasibility of scaling the system to serve entire regions or the nation as a whole.
