Comparison of Different Parallel Implementations of the 2+1-Dimensional KPZ Model and the 3-Dimensional KMC Model

We show that the Kardar-Parisi-Zhang (KPZ) interface growth model in 2+1 dimensions and the three-dimensional Kinetic Monte Carlo (KMC) model of thermally activated diffusion can be simulated efficiently both on GPUs and on modern CPUs. In this article we present results of different implementations on GPUs using CUDA and OpenCL, and on CPUs using OpenCL and MPI. We investigate the runtime and scaling behavior on different architectures to identify the best approaches for current simulation problems in statistical physics and materials science.

💡 Research Summary

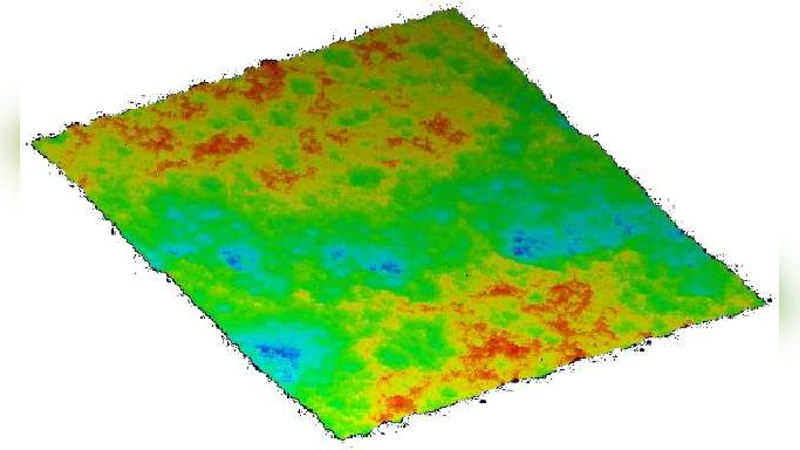

The paper presents a systematic comparison of parallel implementations of two cornerstone models in non‑equilibrium statistical physics: the 2+1‑dimensional Kardar‑Parisi‑Zhang (KPZ) interface growth model and the three‑dimensional Kinetic Monte Carlo (KMC) model of thermally activated diffusion. The authors develop four distinct code bases: a CUDA implementation targeting NVIDIA GPUs, an OpenCL version that runs on both AMD and NVIDIA GPUs, an OpenCL implementation for modern multi‑core CPUs, and an MPI‑based distributed version for large CPU clusters. For the KPZ model, in which lattice heights evolve stochastically and whose continuum description is the nonlinear KPZ stochastic partial differential equation, the CUDA code maps thread blocks to sub‑domains of the lattice and caches boundary data in shared memory, reducing global‑memory traffic. The OpenCL GPU version follows the same algorithmic structure but adapts work‑group sizes and local‑memory usage to the underlying device; it performs within 10–15 % of the CUDA baseline while offering cross‑vendor portability.
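To illustrate the sub‑domain idea, here is a minimal CPU‑side sketch in Python. Purely for illustration, it assumes an explicit Euler–Maruyama discretization of the continuum KPZ equation ∂h/∂t = ν∇²h + (λ/2)(∇h)² + η on a periodic square lattice, with noise strength D; the paper's actual lattice update rule may differ. All names and parameters (`kpz_drift`, `step_global`, `step_tiled`, `nu`, `lam`, `D`, `tile`) are hypothetical. `step_tiled` mimics a CUDA thread block: it copies its tile plus a one‑site halo into a local buffer, the role shared memory plays on the GPU, and reads neighbor heights only from that buffer, so it must reproduce `step_global` exactly.

```python
import math
import random


def kpz_drift(get, i, j, nu, lam):
    """Deterministic KPZ term nu*lap(h) + (lam/2)*|grad h|^2 at site (i, j).

    `get(i, j)` returns the height at global site (i, j); central differences,
    lattice spacing 1.
    """
    c = get(i, j)
    up, down, left, right = get(i - 1, j), get(i + 1, j), get(i, j - 1), get(i, j + 1)
    lap = up + down + left + right - 4.0 * c
    gx = 0.5 * (right - left)
    gy = 0.5 * (down - up)
    return nu * lap + 0.5 * lam * (gx * gx + gy * gy)


def step_global(h, noise, dt, nu, lam, D):
    """One Euler-Maruyama step reading neighbors straight from the global array."""
    L = len(h)
    amp = math.sqrt(2.0 * D * dt)
    get = lambda i, j: h[i % L][j % L]  # periodic boundaries
    return [[h[i][j] + dt * kpz_drift(get, i, j, nu, lam) + amp * noise[i][j]
             for j in range(L)] for i in range(L)]


def step_tiled(h, noise, dt, nu, lam, D, tile):
    """Same step, but each tile first copies itself plus a 1-site halo into a
    local buffer (shared memory on a GPU) and reads neighbors only from it."""
    L = len(h)
    if L % tile:
        raise ValueError("lattice size must be a multiple of the tile size")
    amp = math.sqrt(2.0 * D * dt)
    out = [[0.0] * L for _ in range(L)]
    for bi in range(0, L, tile):
        for bj in range(0, L, tile):
            # (tile + 2) x (tile + 2) buffer: the tile and its periodic halo
            local = [[h[(bi + a - 1) % L][(bj + b - 1) % L] for b in range(tile + 2)]
                     for a in range(tile + 2)]

            def get(i, j, local=local, bi=bi, bj=bj):
                return local[i - bi + 1][j - bj + 1]

            for i in range(bi, bi + tile):
                for j in range(bj, bj + tile):
                    out[i][j] = (get(i, j) + dt * kpz_drift(get, i, j, nu, lam)
                                 + amp * noise[i][j])
    return out


if __name__ == "__main__":
    rng = random.Random(1)
    L = 16
    h = [[0.0] * L for _ in range(L)]
    for _ in range(100):
        noise = [[rng.gauss(0.0, 1.0) for _ in range(L)] for _ in range(L)]
        h = step_tiled(h, noise, dt=0.05, nu=1.0, lam=1.0, D=1.0, tile=8)
    mean = sum(map(sum, h)) / L**2
    width = math.sqrt(sum((x - mean) ** 2 for row in h for x in row) / L**2)
    print(f"mean height {mean:.3f}, interface width {width:.3f}")
```

On a GPU, each iteration of the two innermost loops would be one thread, and the halo copy would be a cooperative load followed by `__syncthreads()` before any thread reads its neighbors.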

The KMC model requires evaluating site‑specific transition rates, building a cumulative probability table from them, and atomically selecting events from that table, which introduces global dependencies that are harder to parallelize. On GPUs the authors use a register‑based queue and atomic flags to avoid race conditions, so that many events can be processed concurrently. The CPU OpenCL version distributes work across cores and optimizes memory‑access patterns, whereas the MPI version partitions the lattice among processes, performs asynchronous halo exchanges, and compresses boundary data to minimize network overhead.
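The rate‑table and event‑selection steps can be sketched serially as a rejection‑free (BKL, or n‑fold way) KMC step. This is a standard scheme assumed here for illustration, not a transcription of the authors' GPU kernels, which the summary describes as processing many events concurrently. Rates take the Arrhenius form ν₀·exp(−E_b/(k_B T)); the helper names, the attempt frequency, and the barrier values in the usage section are hypothetical. Choosing event k with probability r_k/R is a binary search in the cumulative table, and the time increment is drawn from an exponential distribution with mean 1/R.

```python
import bisect
import math
import random

K_B_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_rate(nu0, barrier_ev, temperature_k):
    """Rate of a thermally activated hop: nu0 * exp(-E_b / (k_B * T))."""
    return nu0 * math.exp(-barrier_ev / (K_B_EV * temperature_k))


def build_cumulative(rates):
    """Cumulative table cum[k] = r_0 + ... + r_k; cum[-1] is the total rate R."""
    cum, total = [], 0.0
    for r in rates:
        total += r
        cum.append(total)
    return cum


def select_event(cum, u):
    """Return event k with probability r_k / R for uniform u in [0, 1).

    Binary search for the first entry strictly greater than u * R; zero-rate
    events occupy empty intervals and are skipped by the search.
    """
    k = bisect.bisect_right(cum, u * cum[-1])
    return min(k, len(cum) - 1)  # guard against u * R rounding up to R


def kmc_step(rates, rng):
    """One rejection-free step: returns (event index, time increment)."""
    cum = build_cumulative(rates)
    k = select_event(cum, rng.random())
    dt = -math.log(1.0 - rng.random()) / cum[-1]  # exponential, mean 1/R
    return k, dt


if __name__ == "__main__":
    rng = random.Random(42)
    barriers = [0.5, 0.5, 0.7, 0.9]  # hypothetical hop barriers in eV
    rates = [arrhenius_rate(1e13, eb, 600.0) for eb in barriers]
    counts, t = [0] * len(rates), 0.0
    for _ in range(10_000):
        # Rates are kept fixed for brevity; a real simulation would update the
        # entries affected by each executed hop before the next selection.
        k, dt = kmc_step(rates, rng)
        counts[k] += 1
        t += dt
    print(counts, t)
```

The single cumulative table is the global dependency mentioned above: every executed event can change rates elsewhere and hence the running sums, which is why concurrent versions need extra machinery such as queues and atomic flags.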

Benchmarks use identical physical parameters and lattice sizes across implementations (e.g., 1024 × 1024 for KPZ, 256³ for KMC). Among single‑device runs, the CUDA implementation delivers the shortest wall‑clock times, reaching over 90 % of the theoretical GPU throughput. OpenCL on GPUs is slightly slower but retains the advantage of a single code base that runs on heterogeneous hardware. The CPU OpenCL version, run on contemporary many‑core CPUs, is 3–4 times slower than the GPU versions, yet it remains competitive for problems that exceed GPU memory limits. The MPI implementation scales almost linearly with the number of nodes; on a 64‑node cluster the total runtime drops to less than half that of the single‑GPU CUDA run, demonstrating the viability of distributed CPU resources for very large simulations.
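"Scales almost linearly" is usually quantified by the strong‑scaling speedup and parallel efficiency at fixed problem size. The hypothetical helper below computes both from measured wall‑clock times; it reproduces no timings from the paper, and taking the smallest measured node count as the baseline is an assumption.

```python
def scaling_table(runtimes):
    """Speedup and parallel efficiency relative to the smallest run.

    `runtimes` maps a node count p to a measured wall-clock time T(p). With the
    smallest node count p0 as baseline:
        S(p) = T(p0) / T(p),   E(p) = S(p) * p0 / p.
    E(p) = 1 is ideal (linear) strong scaling; E(p) < 1 reflects overhead such
    as halo-exchange communication or load imbalance.
    """
    p0 = min(runtimes)
    t0 = runtimes[p0]
    table = {}
    for p in sorted(runtimes):
        s = t0 / runtimes[p]
        table[p] = (s, s * p0 / p)
    return table
```

Calling `scaling_table({1: t1, 8: t8, 64: t64})` with your own measured times returns `(speedup, efficiency)` per node count; an efficiency that stays close to 1 as nodes are added is what near‑linear scaling means in practice.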

Beyond raw performance, the authors provide practical guidelines: for CUDA, a 32 × 32 thread‑block size with shared‑memory usage below 48 KB per block is optimal; for OpenCL, work‑group sizes are best left for the runtime to choose, and transfers between global and local memory should be minimized; for MPI, non‑blocking halo exchanges and compressed communication buffers are essential to hide latency. These recommendations are intended to help researchers quickly adapt the presented techniques to their own simulation pipelines.
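The CUDA guideline can be checked with a little arithmetic. The Python sketch below uses a hypothetical helper `launch_config` and assumes a 2D stencil kernel whose blocks cache their tile plus a one‑site halo of 4‑byte values; it computes the grid dimensions and the per‑block shared‑memory footprint. A 32 × 32 block is exactly 1024 threads, the per‑block maximum on current NVIDIA GPUs, and 48 KB is the default static shared‑memory limit per block.

```python
SHARED_MEM_LIMIT_BYTES = 48 * 1024  # default static shared memory per block
MAX_THREADS_PER_BLOCK = 1024        # CUDA per-block thread limit


def launch_config(nx, ny, block_x=32, block_y=32, halo=1, bytes_per_site=4):
    """Grid size and shared-memory footprint for a tiled 2D stencil kernel.

    Each block caches its block_x x block_y tile plus a `halo`-wide border.
    """
    threads = block_x * block_y
    if threads > MAX_THREADS_PER_BLOCK:
        raise ValueError("a CUDA block may hold at most 1024 threads")
    # Integer ceiling division so partial tiles at the edges are covered.
    grid = ((nx + block_x - 1) // block_x, (ny + block_y - 1) // block_y)
    smem = (block_x + 2 * halo) * (block_y + 2 * halo) * bytes_per_site
    return {"grid": grid, "threads_per_block": threads,
            "shared_bytes": smem, "fits": smem <= SHARED_MEM_LIMIT_BYTES}


if __name__ == "__main__":
    print(launch_config(1024, 1024))                    # 4-byte heights
    print(launch_config(1024, 1024, bytes_per_site=8))  # 8-byte heights
```

For a 1024 × 1024 lattice this gives a 32 × 32 grid and 34 × 34 × 4 = 4,624 bytes of shared memory per block, far below 48 KB, so under these assumptions shared memory is not the limiting resource.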

In conclusion, the study shows that CUDA on a single high‑end GPU offers the highest per‑device speed for both KPZ and KMC, OpenCL delivers valuable portability across GPU vendors, and MPI‑based CPU clusters provide excellent scalability for massive problem sizes. These findings give scientists in statistical physics and materials science a clear framework for choosing the most appropriate parallel strategy for large‑scale, non‑equilibrium simulations.