From Participatory Sensing to Mobile Crowd Sensing

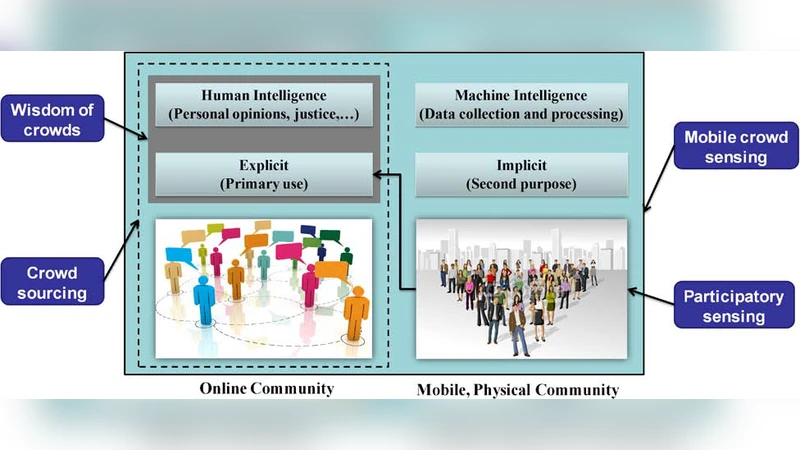

Research on combining human and machine intelligence has a long history. With the development of mobile sensing and mobile Internet techniques, a new sensing paradigm called Mobile Crowd Sensing (MCS), which leverages the power of citizens for large-scale sensing, has become popular in recent years. As an evolution of participatory sensing, MCS has two unique features: (1) it involves both implicit and explicit participation; (2) it collects data from two user-participant data sources: mobile social networks and mobile sensing. This paper traces the history of MCS in the literature and presents its unique issues. A reference framework for MCS systems is also proposed. We further clarify the potential fusion of human and machine intelligence in MCS. Finally, we discuss future research trends as well as our own efforts in MCS.

💡 Research Summary

The paper traces the evolution of sensing systems that combine human and machine intelligence, culminating in the emergence of Mobile Crowd Sensing (MCS) as a distinct paradigm enabled by the widespread adoption of smartphones and mobile Internet. While participatory sensing—where users explicitly contribute sensor readings—has been the dominant model for over a decade, the authors argue that it suffers from limited data sources, high participation cost, and scalability constraints. MCS expands this model in two fundamental ways. First, it embraces both explicit participation (users actively submit sensor data or answer questionnaires through dedicated apps) and implicit participation (data harvested automatically from users’ activities on mobile social networks, such as check‑ins, posts, and metadata). This dual‑mode approach dramatically increases the volume and diversity of information available for analysis. Second, MCS draws from two complementary data streams: (a) mobile social network data that reflect social behavior, sentiment, and trends, and (b) mobile sensing data collected by built‑in smartphone sensors (GPS, accelerometer, microphone, etc.) that capture physical environmental conditions. By fusing these streams, MCS can address complex urban problems—traffic congestion, air quality, disaster response—through a richer, multi‑dimensional lens.

To structure the design of MCS applications, the authors propose a reference framework composed of five layers: (1) Data Collection, (2) Pre‑processing and Cleaning, (3) Human‑Machine Intelligence Fusion, (4) Service Delivery, and (5) Feedback‑Driven Learning. Each layer is defined with clear interfaces to promote modularity and scalability. The most novel component is the Human‑Machine Intelligence Fusion layer, where large‑scale sensor streams are first processed by machine‑learning models (deep learning, reinforcement learning) for rapid pattern detection, and then refined by human experts who provide labeling, validation, or contextual interpretation. This “Human‑in‑the‑Loop” approach seeks to combine the speed and pattern‑recognition power of algorithms with the domain knowledge and judgment of people, thereby improving both accuracy and explainability.
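The fusion layer described above can be pictured as a routing step. The following is a minimal sketch, not the authors' implementation: it assumes the model returns a label plus a confidence score, and that anything below a threshold is handed to a person. The names `Reading`, `fuse`, `toy_model`, `toy_human`, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Reading:
    sensor_id: str
    value: float  # assumed here to be a noise level in dB


def fuse(
    readings: List[Reading],
    model: Callable[[Reading], Tuple[str, float]],
    ask_human: Callable[[Reading], str],
    threshold: float = 0.8,  # hypothetical cut-off, not from the paper
) -> List[Tuple[Reading, str, str]]:
    """Accept confident machine labels; send uncertain readings to a person."""
    results = []
    for reading in readings:
        label, confidence = model(reading)
        if confidence >= threshold:
            results.append((reading, label, "machine"))
        else:
            results.append((reading, ask_human(reading), "human"))
    return results


def toy_model(reading: Reading) -> Tuple[str, float]:
    """Stand-in classifier: confidence grows with distance from 70 dB."""
    label = "loud" if reading.value > 70 else "quiet"
    confidence = min(1.0, abs(reading.value - 70) / 20)
    return label, confidence


def toy_human(reading: Reading) -> str:
    """Stand-in for an expert's judgment on a borderline reading."""
    return "quiet"


readings = [Reading("a", 95.0), Reading("b", 72.0), Reading("c", 40.0)]
fused = fuse(readings, toy_model, toy_human)
sources = [source for _, _, source in fused]
```

In this toy run only the borderline 72 dB reading reaches the human reviewer, which is the point of the design: expert attention is spent where the model is least sure.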

The paper enumerates several critical challenges that must be solved for MCS to mature. Incentive design is paramount: users must be motivated through monetary rewards, social recognition, or personalized services, and emerging mechanisms such as gamification and blockchain‑based transparency are discussed. Data quality management is another concern; sensor noise, user input errors, and malicious data injection require robust outlier detection, credibility scoring, and redundancy strategies. Privacy and security are especially acute because MCS aggregates location and social data that can easily identify individuals. Techniques such as differential privacy, homomorphic encryption, and anonymization are surveyed as potential safeguards. Energy efficiency is addressed through adaptive sampling and edge‑computing strategies that reduce battery drain on mobile devices. Finally, handling massive, real‑time data streams demands hybrid cloud‑edge architectures capable of low‑latency processing and elastic scaling.
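As an illustration of the outlier detection and redundancy strategies mentioned above, the sketch below filters redundant reports of the same quantity using the median absolute deviation (MAD). This is one common robust technique chosen for illustration; the summary does not say which method the paper uses. The function name `filter_outliers` and the default `k = 3.0` are assumptions.

```python
from statistics import median
from typing import List


def filter_outliers(values: List[float], k: float = 3.0) -> List[float]:
    """Keep values within k scaled MADs of the median.

    The factor 1.4826 makes the MAD comparable to a standard
    deviation when the data are roughly normal.
    """
    if not values:
        return []
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # More than half the reports agree exactly; no spread to judge by,
        # so this sketch conservatively keeps everything.
        return list(values)
    limit = k * 1.4826 * mad
    return [v for v in values if abs(v - med) <= limit]


# Six phones report a pollutant level for the same street; one is far off.
reports = [41.0, 43.0, 42.0, 40.0, 44.0, 120.0]
cleaned = filter_outliers(reports)
```

Because the median and MAD are barely moved by a single extreme value, one faulty or malicious report cannot shift the acceptance window the way it would shift a mean and standard deviation.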

Looking ahead, the authors outline four research directions. Multi‑modal data fusion will integrate text, images, audio, and sensor signals to generate richer insights. Context‑aware adaptive sensing will dynamically adjust sampling rates based on the user’s current activity (e.g., walking vs. stationary) to balance data fidelity with resource consumption. Direct integration of MCS outputs into policy‑making, urban planning, and emergency management is advocated, requiring standardized data formats and cross‑sector collaboration. Lastly, the authors call for international standardization efforts to harmonize protocols, privacy regulations, and quality metrics across MCS deployments.
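Context-aware adaptive sensing, as described above, amounts to choosing a sampling interval from the user's current activity. The sketch below is a simplified illustration under assumed values: the activity names, the intervals in `BASE_INTERVAL_S`, and the low-battery rule are all hypothetical and do not come from the paper.

```python
# Hypothetical base intervals in seconds: sample less often when little changes.
BASE_INTERVAL_S = {
    "stationary": 60.0,
    "walking": 15.0,
    "driving": 5.0,
}
DEFAULT_INTERVAL_S = 30.0  # used for unrecognized activities
LOW_BATTERY = 0.2          # assumed threshold, as a fraction of full charge


def sampling_interval(activity: str, battery_level: float) -> float:
    """Return seconds between samples for the given activity and battery level."""
    interval = BASE_INTERVAL_S.get(activity, DEFAULT_INTERVAL_S)
    if battery_level < LOW_BATTERY:
        interval *= 2  # trade data fidelity for battery life
    return interval


walking_full = sampling_interval("walking", 0.9)
walking_low = sampling_interval("walking", 0.1)
```

A real deployment would infer the activity from accelerometer or GPS data and would likely tune these values per sensor; the lookup table only shows the shape of the trade-off between data fidelity and resource consumption.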

The paper concludes with a description of the authors’ ongoing work: they are building a city‑scale MCS platform that combines real‑time traffic monitoring, air‑quality sensing, and citizen feedback. Early trials demonstrate that the Human‑Machine fusion pipeline can improve anomaly detection rates while maintaining user privacy through differential‑privacy mechanisms. These results support the authors’ claim that MCS is poised to become a cornerstone technology for smart cities, enabling large‑scale, participatory data collection and intelligent decision‑making that leverages the complementary strengths of humans and machines.