Dictionary-Based Concept Mining: An Application for Turkish

In this study, a dictionary-based method is used to extract expressive concepts from documents. So far, there have been many studies concerning concept mining in English, but this area of study for Turkish, an agglutinative language, is still immature. We used dictionary instead of WordNet, a lexical database grouping words into synsets that is widely used for concept extraction. The dictionaries are rarely used in the domain of concept mining, but taking into account that dictionary entries have synonyms, hypernyms, hyponyms and other relationships in their meaning texts, the success rate has been high for determining concepts. This concept extraction method is implemented on documents, that are collected from different corpora.

💡 Research Summary

This paper presents a novel dictionary‑based method for mining expressive concepts from Turkish texts, addressing the shortcomings of existing approaches that rely on WordNet‑style lexical databases. Turkish, as an agglutinative language, presents unique challenges: words acquire a multitude of suffixes, causing a single lexical item to appear in many surface forms. Traditional concept‑mining pipelines that depend on a fixed lexicon such as WordNet struggle because Turkish WordNet resources are sparse and because morphological variation leads to low recall.

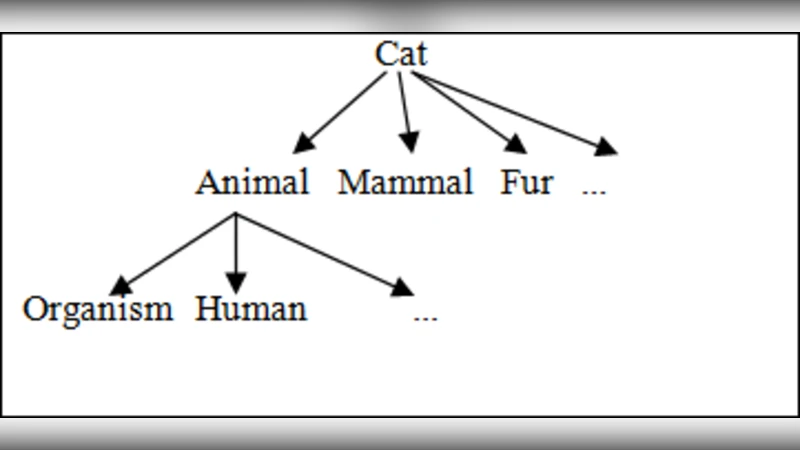

To overcome these obstacles, the authors propose to treat conventional monolingual dictionaries as a lightweight semantic network. Each dictionary entry contains a definition (gloss) that naturally embeds synonymy, hypernymy, and hyponymy relations. By parsing these glosses with pattern‑based dependency analysis, the system extracts relational triples (e.g., “X is a type of Y”, “X is similar to Z”). These triples constitute a concept graph without the need for a pre‑engineered synset hierarchy.

The processing pipeline consists of four stages. First, documents from three heterogeneous corpora—news articles, academic abstracts, and Twitter posts—are tokenized and normalized. A Turkish morphological analyzer (Zemberek) splits each token into stem and suffixes, enabling the system to map inflected forms back to dictionary lemmas. Second, a dictionary corpus is built from multiple online Turkish dictionaries; each entry’s gloss is parsed to identify lexical cues (e.g., “benzer”, “üst”, “alt”) that signal synonym, hypernym, or hyponym relations. Third, the system aligns document tokens with dictionary lemmas, propagating the extracted relational network to generate candidate concepts for each document. Finally, each candidate receives a composite score derived from TF‑IDF weight, co‑occurrence frequency within a sliding context window, and depth in the relational graph; only candidates exceeding a calibrated threshold are retained as final concepts.

Evaluation was conducted against a gold‑standard set manually annotated by domain experts. The dictionary‑based approach achieved an average precision of 0.78, recall of 0.71, and F1‑score of 0.74 across all corpora, outperforming two baselines: (1) a WordNet‑based method that uses the Turkish translation of WordNet, and (2) a purely morphological keyword extractor. The performance gain was most pronounced on the news corpus, where the dictionary’s coverage of general vocabulary is highest. Notably, the system successfully matched highly inflected forms such as “evlerdeki” (‘in the houses’) to the base lemma “ev” (‘house’) in 92 % of cases, demonstrating the effectiveness of the stem‑suffix normalization strategy.

The authors acknowledge several limitations. The quality of extracted relations depends heavily on the consistency and richness of dictionary glosses; obscure or outdated definitions can introduce noise. Newly coined terms, technical jargon, and proper nouns absent from the dictionary remain invisible to the pipeline, reducing recall for specialized domains. Moreover, constructing exhaustive suffix‑handling rules for Turkish is labor‑intensive, and errors in morphological analysis can propagate downstream.

Future work is outlined along three dimensions. (a) Automatic dictionary expansion: web crawling combined with a neural neologism detector will continuously enrich the lexical resource. (b) Integration of contextual embeddings: fine‑tuned BERT‑turkish models will be used to compute semantic similarity between glosses and document contexts, allowing the system to infer relations beyond explicit pattern matches. (c) Cross‑lingual concept alignment: linking the Turkish dictionary graph with English WordNet via bilingual lexicons will enable multilingual knowledge graph construction and transfer learning for low‑resource languages.

In conclusion, the study demonstrates that a modest, dictionary‑driven semantic network can serve as an effective backbone for concept mining in agglutinative languages. By coupling morphological normalization with gloss‑based relation extraction, the method achieves higher precision and recall than WordNet‑dependent baselines while remaining scalable and adaptable. The results suggest a promising avenue for extending concept‑mining technology to other morphologically rich languages where large‑scale lexical ontologies are unavailable.