STIMONT: A core ontology for multimedia stimuli description

Affective multimedia documents such as images, sounds or videos elicit emotional responses in exposed human subjects. These stimuli are stored in affective multimedia databases and successfully used for a wide variety of research in psychology and neuroscience in areas related to attention and emotion processing. Although important, all affective multimedia databases have numerous deficiencies which impair their applicability. These problems, which are brought forward in the paper, result in low recall and precision of multimedia stimuli retrieval, which makes creating emotion elicitation procedures difficult and labor-intensive. To address these issues, a new core ontology, STIMONT, is introduced. STIMONT is written in OWL-DL formalism and extends the W3C EmotionML format with an expressive and formal representation of affective concepts, high-level semantics, stimuli document metadata and the elicited physiology. The advantages of using an ontology to describe affective multimedia stimuli are demonstrated in a document retrieval experiment and compared against contemporary keyword-based querying methods. Finally, a software tool, the Intelligent Stimulus Generator, is presented for retrieving affective multimedia and constructing stimulus sequences.

💡 Research Summary

The paper addresses a long‑standing bottleneck in affective multimedia research: the difficulty of reliably retrieving and assembling stimuli that elicit specific emotional and physiological responses. Existing affective multimedia databases rely heavily on ad‑hoc keyword tagging, suffer from inconsistent emotion labeling, lack standardized metadata, and rarely capture participants’ physiological reactions. Consequently, researchers spend extensive time and resources manually curating stimulus sets, leading to low recall and precision in retrieval and hampering experimental reproducibility.

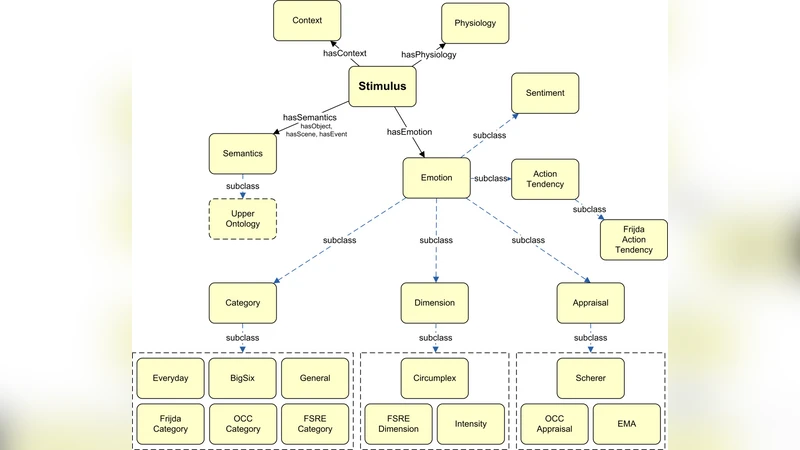

To overcome these limitations, the authors introduce STIMONT, a core ontology for multimedia stimulus description. STIMONT is implemented in OWL-DL, extending the W3C EmotionML schema with a richer, formally defined model of affective concepts, high-level semantics, stimulus metadata, and elicited physiology. The ontology is organized around four principal classes:

1. Emotion, which encodes affective states using both the Valence-Arousal-Dominance (VAD) three-dimensional space and a hierarchy of basic emotions (e.g., happiness, anger);
2. Concept, representing contextual semantics such as social interaction, natural scenes, or cultural background;
3. Stimulus, which captures media-type-specific metadata (format, resolution, copyright, location, etc.) for images, audio, and video; and
4. Physiology, linking recorded physiological signals (heart rate, skin conductance, EEG, etc.) to the elicited emotional label.

By grounding all these elements in a Description Logic framework, STIMONT enables expressive SPARQL queries that can simultaneously constrain emotional dimensions, contextual concepts, media attributes, and physiological outcomes.
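The multi-facet retrieval described above can be illustrated with a small, self-contained sketch. It does not use STIMONT itself or a SPARQL engine. Instead, it mimics a conjunctive graph pattern with filters over a handful of in-memory triples, where each constraint plays the role of a triple pattern plus a FILTER. The prefixes `stim:` and `concept:`, the property names (`stim:valence`, `stim:heartRateDelta`, etc.), and all values are hypothetical placeholders, not STIMONT's actual vocabulary or any database's normative ratings. In STIMONT proper, the same query would be a SPARQL SELECT over OWL individuals.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical (subject, predicate, object) triples; names and values are placeholders,
# not STIMONT's real IRIs or any database's normative ratings.
TRIPLES: list[tuple[str, str, object]] = [
    ("stim:img001", "stim:mediaType", "image"),
    ("stim:img001", "stim:valence", 5.2),
    ("stim:img001", "stim:arousal", 7.1),
    ("stim:img001", "stim:depicts", "concept:IndoorScene"),
    ("stim:img001", "stim:heartRateDelta", 4.0),
    ("stim:snd002", "stim:mediaType", "sound"),
    ("stim:snd002", "stim:valence", 5.0),
    ("stim:snd002", "stim:arousal", 7.5),
    ("stim:snd002", "stim:depicts", "concept:NaturalScene"),
    ("stim:snd002", "stim:heartRateDelta", 3.1),
    ("stim:img003", "stim:mediaType", "image"),
    ("stim:img003", "stim:valence", 2.1),
    ("stim:img003", "stim:arousal", 6.8),
    ("stim:img003", "stim:depicts", "concept:IndoorScene"),
    ("stim:img003", "stim:heartRateDelta", -0.5),
]

# A constraint pairs a predicate with a test on its object, like a triple pattern plus a FILTER.
Constraint = tuple[str, Callable[[object], bool]]


def select(triples: list[tuple[str, str, object]], constraints: list[Constraint]) -> list[str]:
    """Return subjects that satisfy every constraint (a conjunctive, multi-facet query)."""
    index: dict[str, dict[str, list[object]]] = defaultdict(lambda: defaultdict(list))
    for s, p, o in triples:
        index[s][p].append(o)
    return sorted(
        s for s, props in index.items()
        if all(any(test(o) for o in props.get(p, [])) for p, test in constraints)
    )


# "High arousal, moderate valence, indoor scene, increased heart rate."
CONSTRAINTS: list[Constraint] = [
    ("stim:arousal", lambda v: v >= 6.0),
    ("stim:valence", lambda v: 4.0 <= v <= 6.0),
    ("stim:depicts", lambda c: c == "concept:IndoorScene"),
    ("stim:heartRateDelta", lambda d: d > 0),
]

print(select(TRIPLES, CONSTRAINTS))  # ['stim:img001']
```

Querying only on `stim:depicts` returns both `stim:img001` and `stim:img003`; it is the valence and heart-rate facets that exclude the second one. A single keyword match over free-text tags has no way to express that kind of numeric, cross-facet restriction.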

The authors evaluate STIMONT in two complementary experiments. In the first, they construct a benchmark of 30 complex queries (e.g., “high arousal, moderate valence, indoor scene, with increased heart rate”) and compare retrieval performance against a conventional keyword-based system. Using standard information-retrieval metrics, STIMONT achieves an average recall of 0.68 and precision of 0.71, outperforming the keyword baseline (recall 0.51, precision 0.55), a relative improvement of roughly 33 % in recall and 29 % in precision. The second experiment centers on the Intelligent Stimulus Generator (ISG), a software tool that provides a graphical interface for building SPARQL queries, visualizing results, and automatically assembling stimulus sequences into experimental protocols. User testing with ten affective-research labs shows that ISG reduces stimulus selection time from an average of 45 minutes to 12 minutes and receives a usability rating of 4.6/5.
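The retrieval comparison rests on two metrics: precision (the share of retrieved stimuli that are relevant) and recall (the share of relevant stimuli that are retrieved). The sketch below computes both for a single query, averages them across queries, and expresses an improvement relative to a baseline. The stimulus identifiers are made up. Macro-averaging (taking the mean of per-query scores) is an assumption, since the summary does not say how its averages were formed.

```python
from statistics import mean


def precision_recall(retrieved: set[str], relevant: set[str]) -> tuple[float, float]:
    """Precision and recall of one query's result set against its relevance judgments."""
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def macro_average(per_query: list[tuple[float, float]]) -> tuple[float, float]:
    """Mean precision and mean recall over queries (macro-averaging, assumed here)."""
    precisions = [p for p, _ in per_query]
    recalls = [r for _, r in per_query]
    return mean(precisions), mean(recalls)


def relative_gain(new: float, baseline: float) -> float:
    """Improvement of `new` over `baseline`, as a fraction of the baseline."""
    return (new - baseline) / baseline


# Toy relevance judgments for two queries (hypothetical stimulus IDs).
q1 = precision_recall({"s1", "s2", "s5"}, {"s1", "s2", "s3", "s4"})  # precision 2/3, recall 1/2
q2 = precision_recall({"s7", "s8"}, {"s7", "s8"})                    # precision 1.0, recall 1.0
print(macro_average([q1, q2]))

# Relative gains computed from the averages reported in the summary.
print(round(relative_gain(0.68, 0.51) * 100))  # recall: 33
print(round(relative_gain(0.71, 0.55) * 100))  # precision: 29
```

Relative gain is reported here because an absolute gain of 0.17 in recall means more when the baseline is 0.51 than when it is 0.90. Quoting absolute differences (0.17 and 0.16) alongside the relative figures avoids ambiguity.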

Compatibility with existing standards is a key design principle. STIMONT reuses the EmotionML vocabulary, stores data as RDF triples, and supplies migration scripts to convert legacy databases into the ontology format, ensuring that prior investments are preserved. The paper also outlines future work: integrating real‑time physiological streams for adaptive stimulus delivery, extending the ontology with multilingual cultural affect models, and coupling STIMONT with machine‑learning pipelines for automated emotion annotation at scale.
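The migration step mentioned above can be sketched as follows. A legacy table of normative ratings is read row by row, and each row becomes a set of RDF triples in N-Triples syntax. Everything specific here is hypothetical: the `http://example.org/stimont#` namespace, the property names (`mediaType`, `hasValence`, `keyword`, ...), the CSV column layout, and the toy ratings. The source does not describe the actual migration scripts, and a real conversion would map onto STIMONT and EmotionML terms rather than these placeholders.

```python
import csv
import io
from decimal import Decimal
from urllib.parse import quote

NS = "http://example.org/stimont#"  # hypothetical namespace, not STIMONT's real IRI
XSD_DECIMAL = "http://www.w3.org/2001/XMLSchema#decimal"

# Toy legacy export: one row per stimulus, keywords separated by ';' (assumed layout).
LEGACY_CSV = """id,media,valence,arousal,dominance,keywords
S001,image,3.40,6.90,3.20,snake;animal
S002,sound,7.10,4.25,6.00,laughter
"""


def literal(text: str) -> str:
    """Plain N-Triples string literal with backslash, quote and newline escaped."""
    escaped = text.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
    return f'"{escaped}"'


def decimal_literal(raw: str) -> str:
    """Typed xsd:decimal literal; Decimal() rejects non-numeric input."""
    value = Decimal(raw.strip())
    if not value.is_finite():
        raise ValueError(f"non-finite rating: {raw!r}")
    return f'"{value:f}"^^<{XSD_DECIMAL}>'


def row_to_ntriples(row: dict[str, str]) -> list[str]:
    """Map one legacy row to N-Triples lines about a single stimulus resource."""
    subject = f"<{NS}stimulus_{quote(row['id'])}>"
    lines = [f"{subject} <{NS}mediaType> {literal(row['media'])} ."]
    for dim in ("valence", "arousal", "dominance"):
        lines.append(f"{subject} <{NS}has{dim.capitalize()}> {decimal_literal(row[dim])} .")
    for kw in filter(None, (k.strip() for k in row["keywords"].split(";"))):
        lines.append(f"{subject} <{NS}keyword> {literal(kw)} .")
    return lines


def migrate(csv_text: str) -> list[str]:
    """Convert every row of the legacy CSV into N-Triples lines."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [triple for row in reader for triple in row_to_ntriples(row)]


for line in migrate(LEGACY_CSV):
    print(line)
```

Each resulting line could be written to a `.nt` file and loaded into any RDF store that accepts N-Triples. Keeping the ratings as typed `xsd:decimal` literals lets range filters compare them numerically rather than as strings.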

In conclusion, STIMONT demonstrates that a formally grounded, OWL‑DL based ontology can substantially improve the precision, recall, and reproducibility of affective stimulus retrieval. By unifying emotion semantics, contextual metadata, and physiological responses, it provides a robust backbone for designing emotion‑elicitation experiments. The accompanying Intelligent Stimulus Generator translates this theoretical advantage into a practical workflow, allowing researchers to construct complex, theory‑driven stimulus sequences with minimal manual effort. STIMONT thus represents a significant step toward standardized, scalable, and interoperable affective multimedia resources, with potential impact across psychology, neuroscience, human‑computer interaction, affective advertising, and educational technology.