Feature Selection Using Classifier in High Dimensional Data

Feature selection is frequently used as a pre-processing step in machine learning. It is the process of choosing a subset of the original features so that the feature space is optimally reduced according to a certain evaluation criterion. The central objective of this paper is to reduce the dimension of the data by finding a small set of important features that gives good classification performance. We apply a filter approach and a wrapper approach with the classifiers QDA and LDA, respectively. The filter is a method widely used for bioinformatics data: a univariate criterion is applied separately to each feature, assuming that there is no interaction between features. We then apply the Sequential Feature Selection method. Experimental results show that the filter approach gives better performance with respect to Misclassification Error Rate.

💡 Research Summary

The paper addresses the pervasive problem of high‑dimensional data in bioinformatics, where thousands of gene‑expression measurements are available for a relatively small number of samples. In such settings, the “curse of dimensionality” leads to over‑fitting, inflated computational cost, and unstable statistical estimates. The authors therefore investigate two classic feature‑selection paradigms—filter methods and wrapper methods—and evaluate their impact on classification performance when coupled with two discriminant analysis classifiers: Quadratic Discriminant Analysis (QDA) and Linear Discriminant Analysis (LDA).

Methodology

The filter approach employs a univariate statistical test (e.g., t‑test or ANOVA) applied independently to each feature. Features are ranked by their ability to separate the class labels, and the top N features are retained. This selection ignores any interaction among features, which makes it computationally cheap and easy to integrate into a preprocessing pipeline. The selected subset is then fed directly into a QDA classifier, which models each class with its own covariance matrix and thus can capture non‑linear decision boundaries.
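The filter step described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: it assumes scikit-learn, uses the ANOVA F-test (`f_classif`) as the univariate criterion, and runs on a synthetic matrix from `make_classification` standing in for microarray data. The value of `TOP_N` and the `reg_param` setting are illustrative choices, not values taken from the paper.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import QuadraticDiscriminantAnalysis
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.pipeline import make_pipeline

# Synthetic stand-in for a gene-expression matrix (assumption: not the paper's data).
X, y = make_classification(
    n_samples=100, n_features=500, n_informative=10,
    n_redundant=0, random_state=0,
)

TOP_N = 10  # hypothetical number of top-ranked features to keep

# Score each feature independently with an ANOVA F-test, keep the TOP_N
# highest-scoring ones, then fit QDA on that subset only.
filter_qda = make_pipeline(
    SelectKBest(score_func=f_classif, k=TOP_N),
    QuadraticDiscriminantAnalysis(reg_param=0.1),  # mild regularization, illustrative
)
filter_qda.fit(X, y)

selected = filter_qda.named_steps["selectkbest"].get_support(indices=True)
train_error = 1.0 - filter_qda.score(X, y)  # error on the training data, optimistic
print("selected feature indices:", selected)
print("training misclassification rate:", train_error)
```

Because every feature is scored on its own, the ranking needs a single pass over the columns, which is why this step stays cheap even with thousands of features.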

Conversely, the wrapper approach uses Sequential Feature Selection (SFS). Starting from an empty set, SFS iteratively adds the feature that yields the greatest improvement in classification accuracy for an LDA model. Because the wrapper evaluates the actual classifier during the search, it implicitly accounts for feature interactions and the specific assumptions of LDA (shared covariance across classes). However, every step refits the classifier once per remaining candidate feature, and the space of possible subsets grows combinatorially, so the computational demands are substantially higher.
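A greedy forward search of this kind might look as follows. The sketch assumes scikit-learn's `SequentialFeatureSelector` as the SFS implementation, which may differ from the tool the authors used. The data are synthetic, and the feature count, target subset size, and inner 5-fold scoring are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.feature_selection import SequentialFeatureSelector

# Small synthetic problem so the search finishes quickly (assumption: not the paper's data).
X, y = make_classification(
    n_samples=120, n_features=30, n_informative=5,
    n_redundant=0, random_state=0,
)

# Forward selection: start from the empty set and, at each step, add the single
# feature whose inclusion gives the best cross-validated LDA accuracy.
sfs = SequentialFeatureSelector(
    LinearDiscriminantAnalysis(),
    n_features_to_select=5,   # hypothetical target size
    direction="forward",
    scoring="accuracy",
    cv=5,                     # inner folds used to score each candidate
)
sfs.fit(X, y)

chosen = sfs.get_support(indices=True)
X_reduced = sfs.transform(X)
print("features chosen by SFS:", chosen)
```

With 30 candidate features and 5 to select, the search already evaluates 30 + 29 + 28 + 27 + 26 = 140 candidate subsets, each fitted on 5 folds. The same loop over thousands of genes is what makes the wrapper expensive.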

Experimental Design

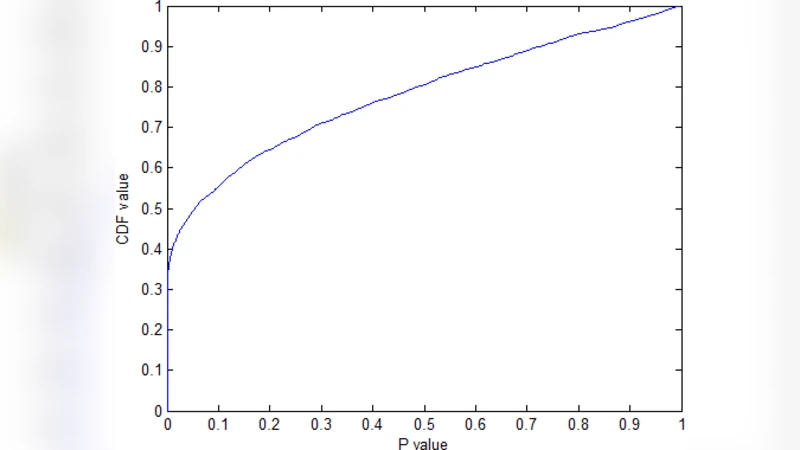

The authors conduct experiments on a publicly available microarray dataset commonly used in cancer‑type classification studies. The data are split using 10‑fold cross‑validation to obtain unbiased estimates of generalization error. The primary performance metric is Misclassification Error Rate (MER); secondary metrics include ROC‑AUC, precision, and recall. Feature set sizes of 5, 10, 20, 50, and 100 are examined to understand how the number of retained features influences both accuracy and runtime.
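The evaluation protocol can be illustrated with a short sketch, assuming scikit-learn and synthetic data. It estimates MER by 10-fold cross-validation for each of the feature set sizes listed above. Putting the selector inside the pipeline, so that features are re-selected within every training fold, is a design choice of this sketch and is not confirmed by the summary. The sample count is chosen so that QDA's per-class covariance remains estimable at 100 features.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import QuadraticDiscriminantAnalysis
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

# Synthetic data (assumption: not the paper's microarray dataset).
X, y = make_classification(
    n_samples=400, n_features=1000, n_informative=15,
    n_redundant=0, random_state=0,
)

cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)

mer_by_size = {}
for k in (5, 10, 20, 50, 100):
    # Selection happens inside the pipeline, so held-out folds never
    # influence which features are kept.
    model = make_pipeline(
        SelectKBest(score_func=f_classif, k=k),
        QuadraticDiscriminantAnalysis(reg_param=0.1),  # illustrative setting
    )
    accuracy = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
    mer_by_size[k] = 1.0 - accuracy.mean()  # MER = 1 - mean accuracy

for k, mer in mer_by_size.items():
    print(f"{k:>3} features: MER = {mer:.3f}")
```

Selecting features once on the full dataset and cross-validating afterwards would let test-fold labels leak into the ranking and tends to understate the error.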

Results

The filter‑QDA pipeline consistently achieves low MER values even with very few features. With as few as ten genes, MER remains below 0.12, and the runtime is negligible because the univariate ranking is linear in the number of features. Adding more features yields only marginal MER improvements, indicating that the most discriminative information is already captured early in the ranking.

The wrapper‑LDA pipeline shows a more pronounced MER reduction as the feature set grows, reaching a minimum MER of about 0.08 when 50–100 features are selected. However, the computational cost escalates dramatically; the SFS process can be an order of magnitude slower than the filter method, and memory consumption rises due to repeated model fitting. Moreover, because SFS directly optimizes the classification accuracy of the LDA model, the selected feature combinations sometimes over‑fit the training folds, leading to variability in performance across cross‑validation splits.

Overall, the filter approach outperforms the wrapper in terms of the trade‑off between accuracy and efficiency. The authors argue that, for high‑dimensional biological data where rapid analysis is often required (e.g., clinical decision support), the filter‑QDA combination offers a pragmatic solution.

Discussion and Insights

- Model‑Selection Compatibility: QDA’s flexibility in modeling class‑specific covariance structures makes it well‑suited to the feature subsets produced by univariate filters, which tend to retain features with strong individual discriminative power. LDA, by contrast, assumes a common covariance matrix; when the wrapper forces feature combinations that violate this assumption, the classifier’s advantage diminishes.

- Feature Interaction vs. Cost: While the wrapper can theoretically capture synergistic effects among genes, the empirical gains are modest relative to the steep increase in computational burden. This suggests that, at least for the dataset examined, most predictive information is already present in strong marginal effects rather than complex multivariate interactions.

- Evaluation Beyond MER: The authors acknowledge that MER alone does not capture the full picture of classifier utility. Incorporating ROC‑AUC, F1‑score, and calibration metrics would provide a more nuanced assessment, especially in imbalanced biomedical datasets where false‑negative rates are critical.

- Future Directions: The paper proposes several avenues for extending the work: (a) integrating multivariate filter techniques such as mutual information or ReliefF to capture weak but coordinated signals; (b) employing meta‑heuristic search strategies (genetic algorithms, particle swarm optimization) within the wrapper to reduce search cost; (c) exploring hybrid pipelines that first apply a fast filter to prune the space and then run a lightweight wrapper on the reduced set; and (d) testing the approach on other high‑dimensional domains (image patches, text n‑grams) to assess generalizability.
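Direction (c) above, a fast filter followed by a lightweight wrapper, could be prototyped along these lines. This is a hypothetical sketch assuming scikit-learn and synthetic data. The step names `prefilter`, `wrapper`, and `clf`, as well as the sizes 30 and 5, are illustrative and do not come from the paper.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.feature_selection import (
    SelectKBest,
    SequentialFeatureSelector,
    f_classif,
)
from sklearn.pipeline import Pipeline

# Synthetic data (assumption: not the paper's dataset).
X, y = make_classification(
    n_samples=150, n_features=500, n_informative=8,
    n_redundant=0, random_state=0,
)

hybrid = Pipeline([
    # Stage 1: cheap univariate filter prunes 500 features down to 30.
    ("prefilter", SelectKBest(score_func=f_classif, k=30)),
    # Stage 2: forward SFS searches only among the 30 survivors.
    ("wrapper", SequentialFeatureSelector(
        LinearDiscriminantAnalysis(),
        n_features_to_select=5, direction="forward", cv=5,
    )),
    # Stage 3: final classifier on the 5 selected features.
    ("clf", LinearDiscriminantAnalysis()),
])
hybrid.fit(X, y)

# Map the wrapper's choices back to column indices of the original matrix.
kept_after_filter = hybrid.named_steps["prefilter"].get_support(indices=True)
wrapper_mask = hybrid.named_steps["wrapper"].get_support(indices=True)
kept_after_wrapper = kept_after_filter[wrapper_mask]
print("original column indices of final features:", kept_after_wrapper)
```

The wrapper here scores candidates drawn from 30 features instead of 500, which cuts the number of classifier fits per step by more than an order of magnitude while still letting the search consider feature combinations.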

Conclusion

The study demonstrates that a simple, univariate filter combined with a QDA classifier can achieve competitive classification performance on high‑dimensional bioinformatics data while maintaining computational tractability. Although wrapper methods can marginally improve accuracy by exploiting feature interactions, their prohibitive runtime and susceptibility to over‑fitting limit their practicality in many real‑world scenarios. Consequently, for practitioners needing rapid, reliable feature reduction in genomics or similar fields, the filter‑QDA strategy represents an effective baseline, and future research should focus on hybrid or meta‑heuristic enhancements that preserve its efficiency while capturing more subtle multivariate patterns.
