Missing Value Imputation With Unsupervised Backpropagation

Many data mining and data analysis techniques operate on dense matrices or complete tables of data. Real-world data sets, however, often contain unknown values. Even many classification algorithms that are designed to operate with missing values stil…

Authors: Michael S. Gashler, Michael R. Smith, Richard Morris

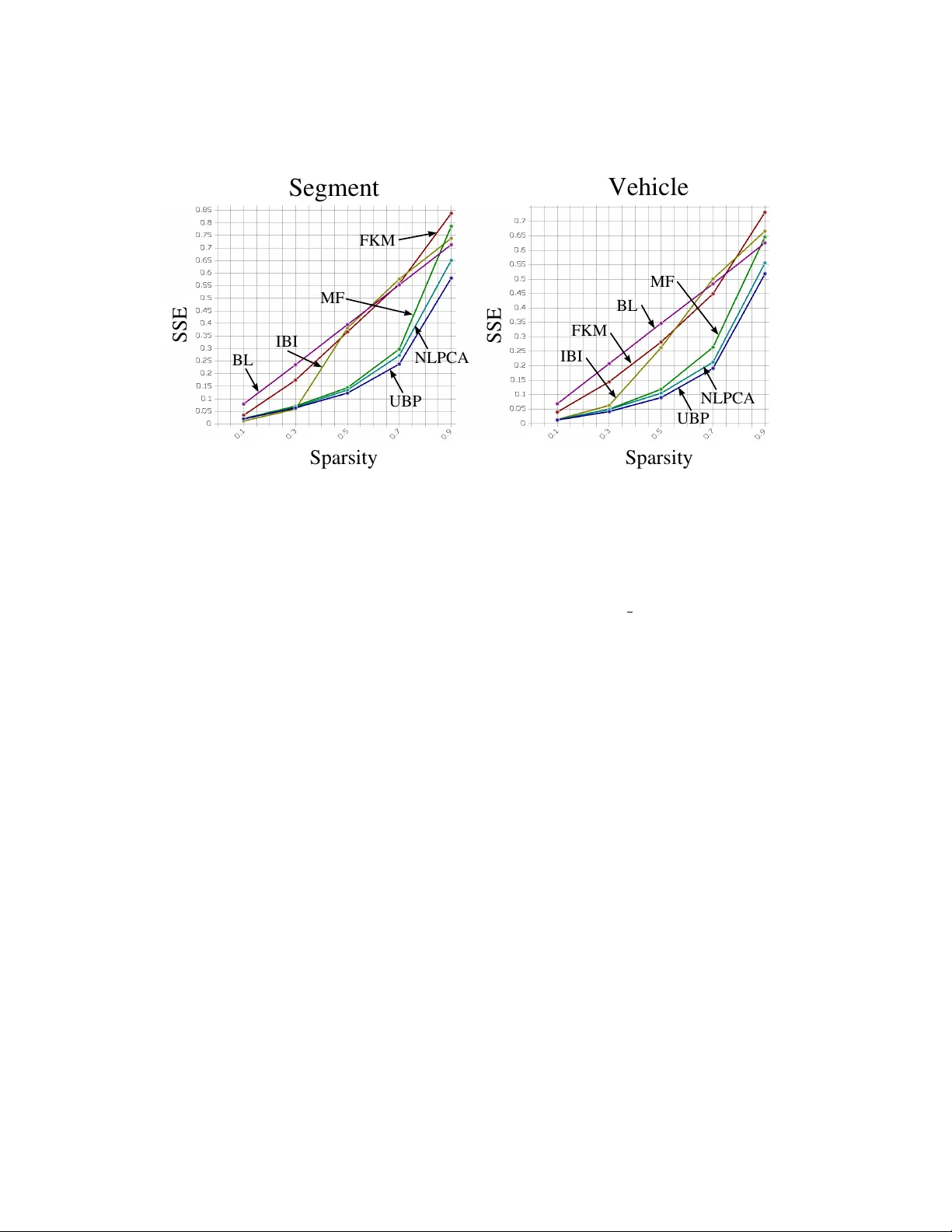

Missing V alue Imputation With Unsuper vised Backpropagation M I C H A E L S . G A S H L E R mgashler@uark.edu Department of Computer Science and Computer Engineering, University of Arkansas, F ayetteville, Arkansas, USA. M I C H A E L R . S M I T H , R I C H A R D M O R R I S , T O N Y M A RT I N E Z msmith@axon.cs.byu.edu, rmorris@axon.cs.byu.edu, martinez@cs.byu.edu Department of Computer Science, Brigham Y oung University , Pr o vo, Utah, USA. Many data mining and data analysis techniques operate on dense matrices or complete tables of data. Real- world data sets, howe ver , often contain unknown values. Even many classification algorithms that are designed to operate with missing values still exhibit deteriorated accuracy . One approach to handling missing v alues is to fill in (impute) the missing values. In this paper, we present a technique for unsupervised learning called Unsupervised Backpr opa gation (UBP), which trains a multi-layer perceptron to fit to the manifold sampled by a set of observ ed point-vectors. W e ev aluate UBP with the task of imputing missing v alues in datasets, and show that UBP is able to predict missing values with significantly lo wer sum-squared error than other collaborati ve filtering and imputation techniques. W e also demonstrate with 24 datasets and 9 supervised learning algorithms that classification accuracy is usually higher when randomly-withheld values are imputed using UBP , rather than with other methods. K ey words: Imputation; Manifold learning; Missing values; Neural networks; Unsupervised learning. 1. INTR ODUCTION Many effecti v e machine learning techniques are designed to operate on dense matrices or complete tables of data. Unfortunately , real-world datasets often include only samples of observed v alues mix ed with many missing or unkno wn elements. Missing v alues may occur due to human impatience, human error during data entry , data loss, faulty sensory equipment, changes in data collection methods, inability to decipher handwriting, pri vac y issues, legal requirements, and a variety of other practical factors. Thus, impro vements to methods for imputing missing v alues can ha ve far -reaching impact on improving the ef fectiv eness of existing learning algorithms for operating on real-world data. W e present a method for imputation called Unsupervised Backpr opagation (UBP), which trains a multi- layer perceptron (MLP) to fit to the manifold represented by the kno wn features in a dataset. W e demonstrate this algorithm with the task of imputing missing v alues, and we show that it is significantly more ef fectiv e than other methods for imputation. Backpropagation has long been a popular method for training neural networks (Rumel- hart et al., 1986; W erbos, 1990). A typical supervised approach trains the weights, W , of a multilayer perceptron (MLP) to fit to a set of training examples, consisting of a set of n fea- ture vectors X = h x 1 , x 2 , ..., x n i , and n corresponding label vectors Y = h y 1 , y 2 , ..., y n i . W ith many interesting problems, howe v er , training data is not av ailable in this form. In this paper , we consider the significantly different problem of training an MLP to estimate, or impute, the missing attribute v alues of X . Here X is represented as an n × d matrix where each of the d attributes may be continuous or categorical. Because the missing elements in X must be predicted, X becomes the output of the MLP , rather than the input. A new set of latent vectors, V = h v 1 , v 2 , ..., v n i , will be fed as inputs into the MLP . Howe v er , no examples from V are gi ven in the training data. Thus, both V and W must be trained using 2 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N only the known elements in X . After training, each v i may be fed into the MLP to predict all of the elements in x i . T raining in this manner causes the MLP to fit a surface to the (typically non-linear) manifold sampled by X . After training, V may be considered as a reduced-dimensional representation of X . That is, V will be an n × t matrix, where t is typically much smaller than d , and the MLP maps V 7→ X . UBP accomplishes the task of training an MLP using only the kno wn attrib ute v alues in X with on-line backpropagation. For each presentation of a known v alue of the c th attribute from the r th instance ( x r,c ∈ X ), UBP simultaneously computes a gradient vector g to update the weights W , and a gradient vector h to update the input vector v r . ( x r,c is the element in ro w r , column c of X .) In this paper , we demonstrate UBP as a method for imputing missing values, and sho w that it outperforms other approaches at this task. W e compare UBP against 5 other imputation methods on a set of 24 data sets. 10% to 90% of the values are remov ed from the data sets completely at random. W e show that UBP predicts the missing values with signficantly lo wer error (as measured by sum-squared dif ference with normalized values) than other approaches. W e also ev aluated 9 learning algorithms to compare classification accuracy using imputed data sets. Learning algorithms using imputed data from UBP usually achiev e higher classification accuracy than with any of the other methods. The increase is most significant when 30% to 70% of the data is missing. The remainder of this paper is organized as follows. Section 2 re views related work to UBP and missing value imputation. UBP is described in Section 3. Section 4 presents the results of comparing UBP with other imputation methods. W e provide conclusions and a discussion of future directions for UBP in Section 5. 2. RELA TED W ORK As an algorithm, UBP falls at the intersection of sev eral dif ferent paradigms: neural networks, collaborativ e filtering, data imputation, and manifold learning. In neural networks, UBP is an extension of generativ e backpropagation (Hinton, 1988). Generati ve backpropa- gation adjusts the inputs in a neural network while holding the weights constant. UBP , by contrast, computes both the weights and the input v alues simultaneously . Related approaches hav e been used to generate labels for images (Coheh and Shawe-T aylor, 1990), and for natural language (Bengio et al., 2006). Although these techniques have been used for labeling images and documents, to our knowledge, they ha ve not been used for the application of imputing missing v alues. UBP dif fers from generati ve backpropagation in that it trains the weights simultaneously with the inputs, instead of training them as a pre-processing step. UBP may also be classified as a manifold learning algorithm. Like common non-linear dimensionality reduction (NLDR) algorithms, such as Isomap (T enenbaum et al., 2000), MLLE (Zhang and W ang, 2007), or Manifold Sculpting (Gashler et al., 2008), it reduces a set of high-dimensional vectors, X , to a corresponding set of lo w-dimensional vectors, V . Unlike these algorithms, howe ver , UBP also learns a model of the manifold. Also unlike these algorithms, UBP is designed to operate with incomplete observ ations. In collaborati ve filtering, UBP may be viewed as a non-linear generalization of matrix factorization (MF). MF is a linear dimensionality reduction technique that can be effecti ve for collaborativ e filtering (Adomavicius and T uzhilin, 2005) as well as imputation. This method has become a popular technique, in part due to its effecti veness with the data used in the NetFlix competition (Koren et al., 2009). MF inv olv es factoring the data matrix into two much-smaller matrices. These smaller matrices can then be combined to predict all of the missing values in the original dataset. It is equi v alent to using linear regression to project M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N 3 the data onto its first few principal components. Unfortunately , MF is not well-suited for data that exhibits non-linearities. It was previously sho wn that matrix factorization could be represented with a neural network model in v olving one hidden layer and linear activ ation functions (T ak ´ acs et al., 2009). In comparison with this approach, UBP uses a standard MLP with an arbitrary number of hidden layers and non-linear acti vation functions, instead of the network structure previously proposed for matrix factorization. MF produces very good results at the task of imputation, but we demonstrate that UBP does better . As an imputation technique, UBP is a refinement of Nonlinear PCA (Scholz et al., 2005) (NLPCA), which has been shown to be effecti v e for imputation. This approach also uses gradient descent to train an MLP to map from lo w to high-dimensional space. After training, the weights of the MLP can be used to represent non-linear components within the data. If these components are extracted one-at-a-time from the data, then they are the principal components, and NLPCA becomes a non-linear generalization of PCA. T ypically , ho wev er , these components are all learned together , so it would more properly be termed a non-linear generalization of MF . NLPCA was ev aluated with the task of missing value imputation (Scholz et al., 2005), but its relationship to MF was not yet recognized at the time, so it was not compared against MF . One of the contributions of this paper is that we show NLPCA to be a significant improvement over MF at the task of imputation. W e also demonstrate that UBP achie v es e ven better results than NLPCA at the same task, and is the best algorithm for imputation of which we are a ware. The primary dif ference between NLPCA and UBP is that UBP utilizes a three-phase training approach (described in Section 3) which makes it more robust ag ainst falling into a local optimum during training. UBP is comparable with the latter-half of an autoencoder (Hinton and Salakhutdinov, 2006). Autoencoders create a low dimensional representation of a training set by using the training examples as input features as well as the target values. The first half of the encoder reduces the input features into lo w dimensional space by only hav e n nodes in the middle layer where n is less than the number of input features. The latter-half of the autoencoder then maps the lo w dimensional representation of the training set back to the original input features. Howe ver , to capture non-linear dependencies in the data, autoencoders require deep architectures to allow for layers between the inputs and low dimensional representation of the data and between the lo w dimensional representation of the data and the output. The deep architecture makes training an autoencoder dif ficult and computationally expensi ve, generally requiring unsupervised layer-wise training (Bengio et al., 2007; Erhan et al., 2009). Because UBP trains a network with half the depth of a corresponding autoencoder , UBP is practical for many problems for which autoencoders are too computationally e xpensi ve. Since we demonstrate UBP with the application of imputing missing v alues in data, it is also rele v ant to consider other approaches that are classically used for this task. Simple methods, such as dropping patterns that contain missing values or randomly drawing v alues to replace the missing values, are often used based on simplicity for implementation. These methods, ho we ver , have significant obvious disadvantages when data is scarce. Another com- mon approach is to treat missing elements as ha ving a unique v alue. This approach, ho wev er has been shown to bias the parameter estimates for multiple linear regression models (Jones, 1996) and to cause problems for inference with many models (Shafer, 1997). W e take it for granted that better accuracy is desirable, so these methods should generally not be used, as better methods do exist. A simple improv ement ov er BL is to compute a separate centroid for each output class. The disadvantages of this method are that it is not suitable for regression problems, and it cannot generalize to unlabeled data since it depends on labels to impute. Methods based on maximum likelihood (Little and Rubin, 2002) hav e long been studied in statistics, but these also depend on pattern labels. Since it is common to have more unlabeled data than labeled 4 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N data, we restrict our analysis to unsupervised methods that do not rely on labels to impute missing v alues. Another well-studied approach in v olves training a supervised learning algorithm to pre- dict missing v alues using the non-missing v alues as inputs (Quinlan, 1989; Lakshminarayan et al., 1996; Alireza Farhangf ar, 2008). Unfortunately , the case where multiple values are missing in one pattern present a difficulty for these approaches. Either a learning algorithm must be used that implicitly handles missing v alues in some manner, or an e xponential number of models must be trained to handle each combination of missing values. Further , it has also been sho wn that results with these methods tend to be poor when there are high percentages (more than about 15%) of missing v alues (Acu ˜ na and Rodriguez, 2004). One very effecti ve collaborative filtering method for imputation is to cluster the data, and then make predictions according to the centroid of the cluster in which each point falls (Adomavicius and T uzhilin, 2005). Luengo compared sev eral imputation methods by e valuating their ef fect on classification accuracy (Luengo, 2011). He found cluster -based imputation with Fuzzy k -Means (FKM) (Li et al., 2004) using Manhattan distance to out- perform other methods, including those in volving state of the art machine learning methods and other methods traditionally used for imputation. Our analysis, howe ver , finds that most of the methods we compared outperform FKM. A related imputation method called instance-based imputation (IBI) is to combine the non-missing values of the k -nearest neighbors of a point to replace its missing v alues. T o e valuate the similarity between points, cosine correlation is often used because it tends to be ef fectiv e in the presence of missing v alues (Adomavicius and T uzhilin, 2005; Li et al., 2008; Sarwar et al., 2001). UBP , as well as the aforementioned imputation techniques, are considered single impu- tation techniques because only one imputation for each missing v alue is made. Single im- putation has the disadvantage of introducing large amounts of bias since the imputed v alues do not reflect the added uncertainty from the fact that values are missing. T o ov ercome this, Rubin (1987) proposed multiple imputation that estimates the added variance by combining the outcomes of I imputed data sets. Similarly , ensemble techniques hav e also been shown to be effecti ve for imputing missing v alues (Schafer and Graham, 2002). In this paper, we do not compare against ensemble methods because UBP in volves a single model, and it may be included in an ensemble as well as any other imputation method. 3. UNSUPER VISED B A CKPROP A GA TION In order to formally describe the UBP algorithm, we define the following terms. The relationships between these terms are illustrated graphically in Figure 1. • Let X be a giv en n × d matrix, which may ha v e many missing elements. W e seek to impute v alues for these elements. n is the number of instances. d is the number of attributes. • Let V be a latent n × t matrix, where t < d . • If x r,c is the element at row r , column c in X , then ˆ x r,c is the v alue predicted by the MLP for this element when v r ∈ V is fed forward into the MLP . • Let w ij be the weight that feeds from unit i to unit j in the MLP . • For each network unit i on hidden layer j , let β j,i be the net input into the unit, let α j,i be the output or acti v ation value of the unit, and let δ j,i be an error term associated with the unit. • Let l be the number of hidden layers in the MLP . M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N 5 X W V h g B a ckpr opa gat i on Low dim e nsi onal H i gh dim e nsi onal ^ e x x r,c r,c = F I G U R E 1 . UBP trains an MLP to fit to high-dimensional observ ations, X . For each kno wn x r,c ∈ X , UBP uses backpropagation to compute the gradient vectors g and h , which are used to update the weights, W , and the input vector v r . • Let g be a v ector representing the gradient with respect to the weights of an MLP , such that g i,j is the component of the gradient that is used to refine w i,j . • Let h be a vector representing the gradient with respect to the inputs of an MLP , such that h i is the component of the gradient that is used to refine v r,i ∈ v r . Using backpropagation to compute g , the gradient with respect to the weights, is a com- mon operation for training MLPs (Rumelhart et al., 1986; W erbos, 1990). Using backpropa- gation to compute h , the gradient with respect to the inputs, howe v er , is much less common, so we provide a deriv ation of it here. In this deri viation, we compute each h i ∈ h from the presentation of a single element x r,c ∈ X . It could also be deriv ed from the presentation of a full row (which is typically called “on-line training”), or from the presentation of all of X (“batch training”), but since we assume that X is high-dimensional and is missing many v alues, it is significantly more ef ficient to train with the presentation of each kno wn element indi vidually . W e begin by defining an error signal, E = ( x r,c − ˆ x r,c ) 2 , and then express the gradient as the partial deri vati v e of this error signal with respect to the inputs: h i = ∂ E ∂ v r,i . (1) The intrinsic input v r,i af fects the value of E through the net value of a unit β i and further through the output of a unit α i . Using the chain rule, Equation 1 becomes: h i = ∂ E ∂ α 0 ,c ∂ α 0 ,c ∂ β 0 ,c ∂ β 0 ,c ∂ v r,i . (2) The backpropagation algorithm calculates ∂ E ∂ α 0 ,c ∂ α 0 ,c ∂ β 0 ,c (which is ∂ E ∂ β j,i for a network unit) as the error term δ j,i associated with a network unit. Thus, to calculate h i the only additional calculation that needs to be made is ∂ β j ∂ v r,i . For a netw ork with 0 hidden layers: ∂ β 0 ,c ∂ v r,i = ∂ ∂ v r,i X t w t,c v r,t , which is non-zero only when t equals i and is equal to w i,c . When there are no hidden layers ( l = 0 ): h i = − w i,c δ c . (3) 6 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N If there is at least one hidden layer ( l > 0 ), then, ∂ β 0 ,c ∂ v r,i = ∂ β 0 ,c ∂ α 1 ∂ α 1 ∂ β 1 . . . ∂ α l ∂ β l ∂ β l v r,i , where the α k and β k represent the output v alues and the net values for the units in the k th hidden layer . As part of the error term for the units in the l th layer , backpropagation calculates ∂ β 0 ,c ∂ α 1 ∂ α 1 ∂ β 1 . . . ∂ α l ∂ β l as the error term associated with each network unit. Thus, the only additional calculation for h i is: ∂ β l ∂ v r,i = ∂ ∂ v r,i X j X t w j,t v r,t . As before, ∂ β l ∂ v r,i is non-zero only when t equals i . For network with at least one hidden layer: h i = − X j w i,j δ j . (4) Equation 4 is a strict generalization of Equation 3. Equation 3 only considers the one output unit, c , for which a kno wn target value is being presented, whereas Equation 4 sums ov er each unit, j , into which the intrinsic value v r,i feeds. 3.1. 3-phase T raining UBP trains V and W in three phases: 1) the first phase computes an initial estimate for the intrinsic vectors, V , 2) the second phase computes an initial estimate for the network weights, W , and 3) the third phase refines them both together . All three phases train using stochastic gradient descent, which we deriv e from the classic backpropagation algorithm. W e no w briefly give an intuiti ve justification for this approach. In our initial experimentation, we used the simpler approach of training in a single phase. W ith sev eral problems, we observed that early during training, the intrinsic point vectors, v i ∈ V , tended to separate into clusters. The points in each cluster appeared to be unrelated, as if the y were arbitrarily assigned to one of the clusters by their random initialization. As training continued, the MLP ef fectiv ely created a separate mapping for each cluster in the intrinsic representation to the corresponding v alues in X . This ef fecti vely places an unnecessary burden on the MLP , because it must learn a separate mapping from each cluster that forms in V to the expected target values. In phase 1, we give the intrinsic vectors a chance to self-organize while there are no hidden layers to form nonlinear separations among them. Like wise, phase 2 gi ves the weights a chance to organize without having to train against moving inputs. These two preprocessing phases initialize the system (consisting of both intrinsic vectors and weights) to a good initial starting point, such that gradient descent is more likely to find a local optimum of higher quality . Our empirical results v alidate this theory by showing that UBP produces more accurate imputation results than NLPCA, which only refines V and W together . Pseudo-code for the UBP algorithm, which trains V and W in three phases, is given in Algorithm 1. This algorithm calls Algorithm 2, which performs a single epoch of training. A detailed description of Algorithm 1 follo ws. A matrix containing the known data values, X , is passed in to UBP (See Algorithm 1). UBP returns V and W . V is a matrix such that each ro w , v i , is a lo w-dimensional represen- tation of the corresponding row , x i . W is a set or ragged matrix containing weight v alues for an MLP that maps from each v i to an approximation of x i ∈ X . v i may be forward- propagated into this MLP to estimate v alues for any missing elements in x i . Lines 1-9 perform the first phase of training, which computes an initial estimate for V . Line 1 of Algorithm 1 initializes the elements in V with small random v alues. Our M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N 7 Algorithm 1 UBP( X ) 1: Initialize each element in V with small random v alues. 2: Let T be the weights of a single-layer perceptron 3: Initialize each element in T with small random values. 4: η 0 ← 0 . 01 ; η 00 ← 0 . 0001 ; γ ← 0 . 00001 ; λ ← 0 . 0001 5: η ← η 0 ; s 0 ← ∞ 6: while η > η 00 7: s ← train epoch( X , T , λ, true , 0 ) 8: if 1 − s/s 0 < γ then η ← η / 2 9: s 0 ← s 10: Let W be the weights of a multi-layer perceptron with l hidden layers, l > 0 11: Initialize each element in W with small random v alues. 12: η ← η 0 ; s 0 ← ∞ 13: while η > η 00 14: s ← train epoch( X , W , λ, false , l ) 15: if 1 − s/s 0 < γ then η ← η / 2 16: s 0 ← s 17: η ← η 0 ; s 0 ← ∞ 18: while η > η 00 19: s ← train epoch( X , W , 0 , true , l ) 20: if 1 − s/s 0 < γ then η ← η / 2 21: s 0 ← s 22: retur n { V , W } implementation dra ws v alues from a Normal distrib ution with a mean of 0 and a de viation of 0.01. Lines 2-3 initialize the weights, T , of a single-layer perceptron using the same mecha- nism. This single-layer perceptron is a temporary model that is only used in phase 1 to assist the initial training of V . Line 4 sets some heuristic values that we used to detect con vergence. W e note that man y other techniques could be used to detect con ver gence. Our implementation, used the simple approach of dividing half of the training data for a validation set. W e decay the learning rate whenev er predictions fail to impro ve by a sufficient amount on the validation data. This simple approch always stops, and it yielded better empirical results than a few other v ariations that we tried. η 0 specifies an initial learning rate. Con ver gence is detected when the learning rate falls belo w η 00 . γ specifies the amount of improvement that is expected after each epoch, or else the learning rate is decayed. λ is a regularization term that is used during the first two phases to ensure that the weights do not become excessi vely saturated before the final phase of training. No regularization is used in the final phase of training because we want the MLP to ultimately fit the data as closely as possible. (Ov erfit can still be mitigated by limiting the number of hidden units used in the MLP .) W e used the default heuristic values specified on this line in all of our experiments because tuning them seemed to ha v e little impact on the final results. W e belie ve that these v alues are well-suited for most problems, but could possibly be tuned if necessary . Line 5 sets the learning rate, η , to the initial value. The value s 0 is used to store the pre vious error score. As no error has yet been measured, it is initialized to ∞ . Lines 6-9 train V and T until con ver gence is detected. T may then be discarded. Lines 10-16 perform the second phase of training. This phase dif fers from the first phase in two ways: 1) an MLP is used instead of a temporary single-layer perceptron, and 2) V is held constant during this phase. 8 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N Algorithm 2 train epoch( X , W , λ, p, l ) 1: f or each known x r,c ∈ X in random order 2: Compute α c by forward-propagating v r into an MLP with weights W . 3: δ c ← ( x r,c − α c ) f 0 ( β c ) 4: f or each hidden unit i feeding into output unit c 5: δ i ← w i,c δ c f 0 ( β i ) 6: f or each hidden unit j in an earlier hidden layer (in backward order) 7: δ j ← P k w j,k δ k f 0 ( β j ) 8: f or each w i,j ∈ W 9: g i,j ← − δ j α i 10: W ← W − η ( g + λ W ) 11: if p = true then 12: f or i from 0 to t − 1 13: if l = 0 then h i ← − w i,c δ c else h i ← − P j w i,j δ j 14: v r ← v r − η ( h + λ v r ) 15: s ← measure RMSE with X 16: retur n s Lines 17-21 perform the third phase of training. In this phase, the same MLP that is used in phase 2 is used again, but V and W are both refined together . Also, no regularization is used in the third phase. 3.2. Stochastic gradient descent Next, we describe Algorithm 2, which performs a single epoch of training by stochastic gradient descent. This algorithm is v ery similar to an epoch of traditional backpropagation, except that it presents each element individually , instead of presenting each vector , and it conditionally refines the intrinsic vectors, V , as well as the weights, W . Line 1 presents each kno wn element x r,c ∈ X in random order . Line 2 computes a predicted v alue for the presented element giv en the current v r . Note that ef ficient implementations of line 2 should only propagate v alues into output unit c . Lines 3-7 compute an error term for output unit c , and each hidden unit in the network. The acti vation of the other output units is not computed, so the error on those units is 0. Lines 8-10 refine W by gradient descent. Line 11 specifies that V should only be refined during phases 1 and 3. Lines 12-14 refine V by gradient descent. Line 15 computes the root-mean-squared-error of the MLP for each kno wn element in X . In order to enable UBP to process nominal (cate gorical) attributes, we con vert such v alues to a vector representing a membership weight in each category . For e xample, a given v alue of “cat” from the value set { “mouse”,“cat”,“dog” } is represented with the vector in h 0 , 1 , 0 i . Unkno wn values in this attrib ute are con verted to 3 unkno wn real v alues, requiring the algorithm to make 3 predictions. After missing values are imputed, we con vert the data back to its original form by finding the mode of each categorical distribution. For example, the predicted vector h 0 . 4 , 0 . 25 , 0 . 35 i w ould be con verted to a prediction of “mouse”. 3.3. Complexity Because UBP uses a heuristic technique to detect conv er gence, a full analysis of the computational comple xity of UBP is not possible. Howe v er , it is suf ficiently similar to other M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N 9 well-kno wn techniques that comparisons can be made. Matrix factorization is generally considered to be a very efficient imputation technique (K oren et al., 2009). Nonlinear PCA is a nonlinear generalization of matrix factorization. If a linear activ ation function is used, then it is equiv alent to matrix factorization, with the additional (v ery small) computational ov erhead of computing the acti vation function. When hidden layers are added, computational complexity is increased, but remains proportional to the number of weights in the network. UBP adds two additional phases of training to Nonlinear PCA. Thus, in the worst case, UBP is 3 times slower than Nonlinear PCA. In practice, howe ver , the first two phases tend to be very fast (because they optimize fewer v alues), and these preprocessing phases may ev en cause the third phase to be much faster (by initializing the weights and intrinsic vectors to start at a position much closer to a local optimum). 4. EMPIRICAL V ALID A TION Because running time was not a significant issue with UBP , our empirical v alidation focuses on imputation accuracy . W e compared UBP with 5 other imputation algorithms. The imputation methods that we examined as well as their algorithmic parameter values (including UBP) are: • Mean/Mode or Baseline (BL). T o establish a “baseline” for comparison, we compare with the method of replacing missing values in continuous attributes with the mean of the non-missing v alues in that attribute, and replacing missing values in nominal (or categorical) attributes with the most common value in the non-missing values of that attribute. There are no parameters for BL. It is expected that any reasonable algorithm should outperform this baseline algorithm with most problems. • Fuzzy K-Means (FKM). W e varied k (the number of clusters) ov er the set { 4 , 8 , 16 } , we varied the L P -norm value for computing distance ov er the set { 1 , 1 . 5 , 2 } (Manhattan distance to Euclidean distance), and the fuzzification factor over the set { 1 . 3 , 1 . 5 } which were reported to be the most ef fectiv e v alues (Li et al., 2004). • Instance-Based Imputation (IBI). W e used cosine correlation to ev aluate similarity , and we varied k (the number of neighbors) ov er the set { 1 , 5 , 21 } . These values were selected because they were all odd, and spanned the range of intuiti v ely suitable values. • Matrix Factorization (MF). W e v aried the number of intrinsic v alues ov er the set { 2 , 8 , 16 } , and the regularization term over the set { 0 . 001 , 0 . 01 , 0 . 1 } . Again, these values were se- lected to span the range of intuiti vely suitable v alues. • Nonlinear PCA (NLPCA). W e varied the number of hidden units ov er the set { 0 , 8 , 16 } , and the number of intrinsic values o ver the set { 2 , 8 , 16 , 32 } . In the case of 0 hidden units, only a single layer of sigmoid units was used. • Unuspervised Backpropagation (UBP). The parameters were varied o ver the same ranges as those of NLPCA. For NLPCA and UBP , we used the logistic function as the activ ation function and summed squared error as the objecti ve function. Thus, we imputed missing v alues in a total of 66000 dataset scenarios. For each algorithm, we found the set of parameters that yielded the best results, and we compared only these best results for each algorithm a veraged o ver the ten runs of dif fering random seeds. T o facilitate reproduction of our results, and to assist with related research ef forts, we hav e integrated our implementation of UBP and all of the competitor algorithms into the W affles machine learning toolkit (Gashler, 2011) In order to ev aluate the effecti v eness of UBP and related imputation techniques, we gath- ered a set of 24 datasets from the UCI repository (Frank and Asuncion, 2010), the Promise 10 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P ERV I S E D B A C K PR O P AG A T I O N S e gme nt V e hi cl e U B P S SE S SE U B P MF B L I B I F KM B L MF I B I F KM S pa r si ty S pa r si ty N LP C A N LP C A F I G U R E 2 . A comparison of the average sum-squared error in each pattern by 5 imputation techniques ov er a range of sparsity values with tw o representati v e datasets. (Lower is better .) repository (Sayyad Shirabad and Menzies, 2005), and mldata.or g: { abalone, arrhythmia, bupa, colic, credit-g, desharnais, diabetes, ecoli, eucalyptus, glass, h ypothyroid, ionosphere, iris, nursery , ozone, pasture, sonar , spambase, spectrometer, teaching assistant, vote, vo wel, wa veform-500, and yeast } . T o ensure an objecti ve e valuation, this collection was determined before e valuation was performed, and was not modified to emphasize f av orable results. T o ensure that our results would be applicable for tasks that require generalization, we remov ed the class labels from each dataset so that only the input features could be used for imputing missing values. W e normalized all real-valued attributes to fall within a range from 0 to 1 so that every attribute would carry approximately equal weight in our ev aluation. W e then remov ed completely at random 1 u % of the values from each dataset, where u ∈ { 10 , 30 , 50 , 70 , 90 } . For each dataset, and for each u , we generated 10 datasets with missing values, each using a different random number seed, to make a total of 1200 tasks for ev aluation. The task for each imputation algorithm was to restore these missing v alues. W e measured error by comparing each predicted (imputed) v alue with the corresponding original normalized v alue, summed ov er all attrib utes in the dataset. For nominal (categorical) v alues, we used Hamming distance, and for real values, we used the squared dif ference between the original and predicted values. The av erage error w as computed over all of the patterns in each dataset. Figure 2 shows two representati ve comparisons of the error scores obtained by each algorithm at varying lev els of sparsity . Comparisons with other datasets generally exhibited similar trends. MF , NLPCA, and UBP did much better than other algorithms when 50% or 70% of the v alues were missing. No algorithm was best in e very case, b ut UBP achie ved the best score in more cases than any other algorithm. T able 1 summarizes the results of these comparisons. UBP achie ved lo wer error than the other algorithm in 20 out of 25 pair-wise comparisons, each comparing imputation scores with 24 datasets averaged over 10 runs with dif ferent random seeds. In 15 pair-wise comparisons, UBP did better with a suf ficient number 1 Other categories of “missingness”, besides missing completely at random (MCAR), have been studied (Little and Rubin, 2002), but we restrict our analysis to the imputation of MCAR v alues. M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N 11 of datasets to establish statistical significance according to the W ilcoxon Signed Ranks test. These cases are indicated with a “ √ ” symbol. T A B L E 1 . A high-lev el summary of comparisons between UBP and five other imputation techniques. Results are shown for each of the 5 sparsity v alues. Each row in this table summarizes a comparison between UBP and a competitor imputation algorithm for predicting missing values. S PA R SI T Y A L G O R I T H M U B P C O M P A R A T I V E P - V A L U E W I N S , T I E S , L O S S E S 0 . 1 B L 20 , 0 , 4 0 . 0 0 1 √ F K M 19 , 0 , 5 0 . 0 0 4 √ I B I 15 , 0 , 9 0 . 2 5 0 M F 1 2 , 0 , 1 2 0 . 3 4 8 N L P C A 11 , 7 , 6 0 . 1 7 6 0 . 3 B L 20 , 0 , 4 0 . 0 0 1 √ F K M 22 , 0 , 2 0 . 0 0 0 √ I B I 19 , 0 , 5 0 . 0 2 2 √ M F 1 1 , 0 , 1 3 0 . 4 3 7 N L P C A 10 , 1 1 , 3 0 . 0 3 6 √ 0 . 5 B L 20 , 0 , 4 0 . 0 0 3 √ F K M 20 , 0 , 4 0 . 0 0 0 √ I B I 17 , 0 , 7 0 . 0 1 8 √ M F 1 2 , 0 , 1 2 0 . 3 9 4 N L P C A 10 , 1 1 , 3 0 . 0 4 6 √ 0 . 7 B L 16 , 0 , 8 0 . 0 2 2 √ F K M 17 , 0 , 7 0 . 0 0 4 √ I B I 15 , 0 , 9 0 . 0 4 0 √ M F 13 , 0 , 1 1 0 . 3 1 2 N L P C A 7 , 1 4 , 3 0 . 0 3 8 √ 0 . 9 B L 7 , 0 , 17 0 . 4 3 7 F K M 17 , 0 , 7 0 . 0 0 6 √ I B I 13 , 0 , 1 1 0 . 1 4 7 M F 16 , 0 , 8 0 . 1 2 3 N L P C A 2 , 1 0 , 1 2 0 . 4 9 5 T o help us further understand how UBP might lead to better classification results, we also performed experiments in volving classification with the imputed datasets. W e restored class labels to the datasets in which we had imputed feature values. W e then used 5 repetitions of 10-fold cross validation with each of 9 learning algorithms from the WEKA (W itten and Frank, 2005) machine learning toolkit: backpropagation (BP), C4.5 (Quinlan, 1993), 5- nearest neighbor (IB5), locally weighted learning (L WL) (Atkeson et al., 1997), na ¨ ıve Bayes (NB), nearest neighbor with generalization (NNGE) (Martin, 1995), Random Forest (RandF) (Breiman, 2001), RIpple DOwn Rule Learner (RIDOR) (Gaines and Compton, 1995), and RIPPER (Repeated Incremental Pruning to Produce Error Reduction) (Cohen, 1995). The learning algorithms were chosen with the intent of being div erse from one another , where di versity is determined using unsupervised meta-learning (Lee and Giraud-Carrier, 2011). 12 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N T A B L E 2 . Results of classification tests at a sparsity lev el of 0.1., (meaning 10% of data values were remov ed and then imputed prior to classification). The 3 upper numbers in each cell indicate the wins, ties, and losses for UBP in a pair -wise comparison. The lower number in each cell indicates the W ilcoxon signed ranks test P-value ev aluated over the 24 datasets. (When the value is smaller than 0.05, indicated with a “ √ ” symbol, the performance of UBP was better with statistical significance.) B L F K M I B I M F N L P C A B P 16 , 1 , 7 14 , 0 , 1 0 1 2 , 0 , 1 2 9 , 0 , 15 6 , 7 , 11 0 . 0 1 3 √ 0 . 0 8 0 √ 0 . 8 3 5 0 . 8 7 4 0 . 9 1 5 C 4 . 5 16 , 0 , 8 18 , 0 , 6 1 2 , 0 , 1 2 14 , 1 , 9 9 , 7 , 8 0 . 0 3 9 √ 0 . 0 1 1 √ 0 . 7 2 7 0 . 2 1 9 0 . 7 1 5 I B 5 17 , 1 , 6 17 , 0 , 7 15 , 0 , 9 1 1 , 0 , 13 9 , 8 , 7 0 . 0 1 1 √ 0 . 0 0 5 √ 0 . 0 3 9 √ 0 . 6 1 6 0 . 6 8 8 LW L 16 , 2 , 6 12 , 5 , 7 7 , 4 , 13 9 , 2 , 13 7 , 9 , 8 0 . 0 1 5 √ 0 . 1 3 4 0 . 7 3 1 0 . 6 5 2 0 . 6 0 1 N B 1 2 , 0 , 1 2 13 , 0 , 1 1 1 2 , 0 , 1 2 13 , 2 , 9 9 , 7 , 8 0 . 3 7 4 0 . 3 0 2 0 . 5 9 4 0 . 4 8 7 0 . 5 3 8 N N G E 17 , 1 , 6 19 , 1 , 4 17 , 1 , 6 16 , 0 , 8 10 , 7 , 7 0 . 0 0 1 √ 0 . 0 0 0 √ 0 . 1 2 1 0 . 1 0 9 0 . 3 5 3 R A N D F 17 , 0 , 7 16 , 1 , 7 14 , 0 , 1 0 16 , 0 , 8 8 , 8 , 8 0 . 0 0 3 √ 0 . 0 0 6 √ 0 . 1 5 1 0 . 0 5 4 0 . 5 7 2 R I D O R 20 , 0 , 4 20 , 1 , 3 1 2 , 0 , 1 2 15 , 0 , 9 6 , 8 , 10 0 . 0 0 0 √ 0 . 0 0 0 √ 0 . 2 5 4 0 . 2 2 0 0 . 7 5 7 R I P P E R 16 , 1 , 7 12 , 1 , 1 1 1 2 , 0 , 1 2 15 , 0 , 9 10 , 7 , 7 0 . 0 0 9 √ 0 . 1 3 4 0 . 5 1 7 0 . 0 5 7 0 . 3 8 8 W e ev aluated each learning algorithm at each of the previously mentioned sparsity levels using each imputation algorithm with the parameters that resulted in the lowest SSE error score for imputation. The results from this experiment are summarized for each of the 5 sparsity lev els in T ables 2 through 6. Each cell in these tables summarizes a set of ex- periments with an imputation algorithm and classification algorithm pair . The three upper numbers in each cell indicate the number of times that UBP lead to higher , equal, and lower classification accuracy . When the left-most of these three numbers is biggest, this indicates that classification accuracy was higher in more cases when UBP was used as the imputation algorithm. The lower number in each cell indicates the P-value obtained from the W ilcoxon signed ranks test by performing a pair -wise comparison between UBP and the imputation algorithm of that column. When this value is below 0.05, UBP did better in a sufficient number of pair-wise comparisons to demonstrate that it is better with statistical significance. These cases are indicated with a “ √ ” symbol. Some classification algorithms were more responsiv e to the improved imputation accu- racy that UBP of fered than others. For example, UBP appears to ha ve a more beneficial ef fect on classification results when used with Random F orest than with na ¨ ıve Bayes. Overall, UBP was demonstrated to be more beneficial as an imputation algorithm than any of the other imputation algorithms. As expected, NLPCA was the closest competitor . UBP did better than NLPCA in a larger number of cases, b ut we were not able to demonstrate that it was M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N 13 T A B L E 3 . Results of classification tests at a sparsity level of 0.3. B L F K M I B I M F N L P C A B P 18 , 0 , 6 18 , 0 , 6 15 , 0 , 9 1 2 , 0 , 1 2 6 , 1 0 , 8 0 . 0 0 8 √ 0 . 0 0 2 √ 0 . 0 4 8 √ 0 . 5 5 0 0 . 8 8 4 C 4 . 5 15 , 0 , 9 15 , 1 , 8 15 , 0 , 9 13 , 0 , 1 1 7 , 1 0 , 7 0 . 0 2 4 √ 0 . 0 1 7 √ 0 . 0 2 5 √ 0 . 4 6 1 0 . 7 3 5 I B 5 16 , 0 , 8 18 , 0 , 6 1 0 , 0 , 14 1 0 , 0 , 14 4 , 1 0 , 10 0 . 0 1 6 √ 0 . 0 0 4 √ 0 . 5 3 9 0 . 6 5 8 0 . 9 7 0 LW L 17 , 1 , 6 16 , 2 , 6 15 , 2 , 7 12 , 2 , 1 0 7 , 1 2 , 5 0 . 0 0 5 √ 0 . 0 0 8 √ 0 . 1 0 9 0 . 2 1 8 0 . 4 8 4 N B 1 1 , 0 , 13 13 , 0 , 1 1 1 2 , 0 , 1 2 13 , 0 , 1 1 9 , 1 0 , 5 0 . 6 8 9 0 . 3 4 2 0 . 3 7 4 0 . 5 6 1 0 . 2 2 6 N N G E 14 , 0 , 1 0 16 , 0 , 8 15 , 0 , 9 14 , 0 , 1 0 7 , 1 0 , 7 0 . 1 3 2 0 . 0 2 0 √ 0 . 1 3 8 0 . 2 4 5 0 . 7 7 4 R A N D F 14 , 0 , 1 0 17 , 0 , 7 15 , 0 , 9 13 , 1 , 1 0 6 , 1 0 , 8 0 . 1 3 2 0 . 0 2 1 √ 0 . 0 8 4 0 . 0 8 3 0 . 8 4 2 R I D O R 16 , 1 , 7 17 , 1 , 6 16 , 0 , 8 10 , 0 , 1 4 4 , 1 0 , 10 0 . 0 0 4 √ 0 . 0 0 1 √ 0 . 0 1 8 √ 0 . 5 8 3 0 . 9 4 2 R I P P E R 14 , 0 , 1 0 16 , 0 , 8 17 , 0 , 7 9 , 0 , 15 7 , 1 0 , 7 0 . 0 5 1 0 . 0 4 2 √ 0 . 0 2 6 √ 0 . 8 6 8 0 . 5 0 0 better with statistical significance until sparsity lev el 0.7. It appears from these results that the impro vements of UBP ov er NLPCA generally hav e a bigger impact when there are more missing v alues in the data. This is significant because the difficulty of effecti ve imputation increases with the sparsity of the data. T o demonstrate the dif ference between UBP and NLPCA (and, hence, the adv antage of 3-phase training), we briefly examine UBP as a manifold learning (or non-linear dimension- ality reduction) technique. W e trained both NLPCA and UBP using data from the MNIST dataset of handwritten digits. W e used a multilayer perceptron with a 4 → 8 → 256 → 1 topology . 2 of the 4 inputs were treated as latent v alues, and the other 2 inputs we used to specify ( x, y ) coordinates in each image. The one output was trained to predict the grayscale pixel value at the specified coordinates. W e trained a separate network for each of the 10 digits in the dataset. After training these multilayer perceptrons in this manner , we uniformly sampled the tw o-dimensional latent inputs in order to visualize ho w each multilayer percep- tron org anized the digits that it had learned. Matrix factorization was not suitable for this application because it is linear, so it would predict only a linear gradient instead of an image. The other imputation algorithms are not suitable for this task because they are not designed to be used in a generati ve manner . Figure 4 sho ws a sample of ’4’ s generated by NLPCA and UBP . (Note that the images sho wn are not part of the original training data. Rather , each was generated by the multilayer perceptron after it w as trained. Because the multilayer perceptron is a continuous function, these digits could be generated with arbitrary resolution, independent of the original training data.) 14 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N T A B L E 4 . Results of classification tests at a sparsity level of 0.5. B L F K M I B I MF N L P C A B P 13 , 1 , 1 0 14 , 1 , 9 20 , 1 , 3 14 , 0 , 1 0 6 , 1 1 , 7 0 . 0 5 5 0 . 0 3 3 √ 0 . 0 0 2 √ 0 . 1 9 5 0 . 4 7 2 C 4 . 5 14 , 0 , 1 0 14 , 0 , 1 0 14 , 0 , 1 0 15 , 0 , 9 9 , 1 1 , 4 0 . 2 4 5 0 . 0 7 6 0 . 0 8 0 0 . 1 1 5 0 . 1 0 4 I B 5 15 , 0 , 9 17 , 0 , 7 16 , 1 , 7 13 , 1 , 1 0 8 , 1 1 , 5 0 . 0 1 5 √ 0 . 0 0 1 √ 0 . 0 0 2 √ 0 . 0 9 3 0 . 3 3 8 LW L 14 , 1 , 9 14 , 2 , 8 13 , 2 , 9 14 , 1 , 9 4 , 1 3 , 7 0 . 0 1 6 √ 0 . 0 0 9 √ 0 . 0 7 7 0 . 1 9 3 0 . 8 2 5 N B 13 , 0 , 1 1 13 , 0 , 1 1 12 , 1 , 1 1 15 , 0 , 9 8 , 1 1 , 5 0 . 3 0 2 0 . 3 2 2 0 . 4 5 8 0 . 3 6 3 0 . 1 3 2 N N G E 13 , 0 , 1 1 15 , 0 , 9 18 , 0 , 6 15 , 0 , 9 7 , 1 1 , 6 0 . 2 4 5 0 . 0 4 8 √ 0 . 0 2 1 √ 0 . 0 8 4 0 . 3 3 8 R A N D F 14 , 0 , 1 0 17 , 0 , 7 19 , 0 , 5 14 , 1 , 9 7 , 1 0 , 7 0 . 1 0 9 0 . 0 1 5 √ 0 . 0 0 2 √ 0 . 2 0 2 0 . 4 0 1 R I D O R 15 , 1 , 8 16 , 0 , 8 19 , 0 , 5 13 , 0 , 1 1 6 , 1 1 , 7 0 . 0 2 2 √ 0 . 0 0 5 √ 0 . 0 0 0 √ 0 . 3 9 5 0 . 7 5 8 R I P P E R 15 , 0 , 9 16 , 0 , 8 19 , 0 , 5 14 , 0 , 1 0 7 , 1 1 , 6 0 . 0 3 0 √ 0 . 0 2 8 √ 0 . 0 0 1 √ 0 . 3 8 4 0 . 3 3 8 Both algorithms did approximately equally well at generating digits that appear natural ov er a range of styles. The primary difference between these results is ho w they ultimately formed order in the intrinsic values that represent the various styles. In the case of UBP , some what better intrinsic organization is formed. Three distinct styles can be observed in three horizontal stripes in Figure 4b: boxe y digits in the top two rows, slanted digits in the middle ro ws, and digits with a closed top in the bottom two ro ws. The height of the horizontal bar in the digit varies clearly from left-to-right. Along the left, the horizontal bar crosses at a lo w point, and along the right, the horizontal bar crosses at a high point. In the case of NLPCA, similar styles were or ganized into clusters, b ut they do not e xhibit the same degree of org anization that is apparent with UBP . For example, it does not appear to be able to v ary the height of the horizontal bar in each of the three main styles of this digit. This occurs because NLPCA does not hav e an extra step designed explicitly to promote organization in the intrinsic v alues. This demonstration also serv es to show that techniques for imputation and techniques for manifold learning are merging. In the case of these handwritten digits, the multilayer perceptron was flexible enough to generate visibly appealing digits, ev en when the intrinsic org anization was poor . Howe ver , better generalization can be expected when the mapping is simplest, which occurs when the intrinsic v alues are better organized. Hence, as imputation and manifold learning are applied to increasingly comple x problems, the importance of finding good organization in the intrinsic values will increase. Future work in this area will explore other techniques for promoting or ganization within the intrinsic values, and contrast them with the simple approach proposed by UBP . M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N 15 T A B L E 5 . Results of classification tests at a sparsity level of 0.7. B L F K M I B I M F N L P C A B P 13 , 0 , 1 1 17 , 0 , 7 17 , 1 , 6 16 , 0 , 8 8 , 1 4 , 2 0 . 2 6 4 0 . 0 0 8 √ 0 . 0 0 1 √ 0 . 0 5 1 0 . 0 2 6 √ C 4 . 5 9 , 0 , 15 9 , 2 , 13 16 , 0 , 8 13 , 0 , 1 1 5 , 1 4 , 5 0 . 7 6 3 0 . 4 7 4 0 . 0 6 4 √ 0 . 3 3 2 0 . 6 5 8 I B 5 14 , 0 , 1 0 17 , 0 , 7 15 , 0 , 9 17 , 0 , 7 8 , 1 4 , 2 0 . 1 6 5 0 . 0 0 5 √ 0 . 0 3 2 √ 0 . 0 0 8 √ 0 . 0 4 2 √ LW L 14 , 1 , 9 16 , 1 , 7 18 , 2 , 4 14 , 1 , 9 6 , 1 6 , 2 0 . 0 1 3 √ 0 . 0 0 2 √ 0 . 0 0 0 √ 0 . 1 2 1 0 . 1 4 7 N B 16 , 0 , 8 15 , 0 , 9 14 , 0 , 1 0 1 2 , 0 , 1 2 6 , 1 4 , 4 0 . 1 0 4 0 . 2 0 3 0 . 5 3 9 0 . 5 0 6 0 . 3 0 5 N N G E 12 , 1 , 1 1 15 , 0 , 9 14 , 0 , 1 0 14 , 0 , 1 0 8 , 1 4 , 2 0 . 1 6 9 0 . 0 1 1 √ 0 . 0 6 8 √ 0 . 0 1 8 √ 0 . 0 1 0 √ R A N D F 16 , 0 , 8 18 , 0 , 6 18 , 0 , 6 19 , 0 , 5 10 , 1 2 , 2 0 . 0 1 7 √ 0 . 0 0 1 √ 0 . 0 0 1 √ 0 . 0 0 2 √ 0 . 0 0 3 √ R I D O R 13 , 1 , 1 0 16 , 0 , 8 19 , 0 , 5 16 , 0 , 8 4 , 1 5 , 5 0 . 0 5 2 0 . 0 1 7 √ 0 . 0 0 0 √ 0 . 0 4 2 √ 0 . 4 5 3 R I P P E R 13 , 0 , 1 1 15 , 0 , 9 18 , 0 , 6 14 , 0 , 1 0 8 , 1 5 , 1 0 . 2 1 1 0 . 0 6 0 0 . 0 1 5 √ 0 . 1 9 5 0 . 0 3 8 √ to generate high-quality digits, UBP was able to organize the various styles more effec- ti vely . Note that the height of the horizontal bar varies from left-to-right. The results from NLPCA do not provide the ability to vary the height of this bar in each of the possible styles of the digit. 5. CONCLUSIONS Since the NetFlix competition, matrix factorization and related linear techniques have generally been considered to be the state-of-the-art in imputation. Unfortunately , the focus on imputing with a fe w large datasets has caused the community to lar gely ov erlook the v alue of nonlinear imputation techniques. One of the contrib utions of this paper is the observ ation that Nonlinear PCA, which was presented almost a decade ago, consistently outperforms matrix factorization across a di versity of datasets. Perhaps this has not yet been recognized because a thorough comparison between the two techniques across a diversity of datasets was not pre viously performed. The primary contribution of this paper , howe ver , is an improv ement to Nonlinear PCA, which improves its accuracy ev en more. This contribution consists of a 3-phase training process that initializes both weights and intrinsic vectors to better starting positions. W e theorize that this leads to better results because it bypasses many local optima in which the network could otherwise settle, and places it closer to the global optimum. W e empirically compared results from UBP with 5 other imputation techniques, includ- ing baseline, fuzzy k -means, instance-based imputation, matrix factorization, and Nonlinear 16 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N T A B L E 6 . Results of classification tests at a sparsity level of 0.9. B L F K M I B I M F N L P C A B P 1 2 , 0 , 1 2 14 , 0 , 1 0 16 , 3 , 5 16 , 0 , 8 8 , 1 0 , 6 0 . 6 5 8 0 . 1 8 0 0 . 0 3 3 √ 0 . 0 5 7 0 . 2 6 5 C 4 . 5 8 , 0 , 16 1 0 , 0 , 14 13 , 0 , 1 1 15 , 0 , 9 6 , 1 0 , 8 0 . 9 3 6 0 . 6 8 9 0 . 2 8 2 0 . 1 5 1 0 . 8 2 7 I B 5 1 0 , 0 , 14 1 1 , 0 , 13 1 1 , 0 , 13 17 , 0 , 7 5 , 1 1 , 8 0 . 8 8 0 0 . 6 3 7 0 . 6 5 8 0 . 0 3 2 √ 0 . 7 1 2 LW L 12 , 1 , 1 1 14 , 1 , 9 15 , 1 , 8 14 , 1 , 9 4 , 1 0 , 10 0 . 4 5 8 0 . 0 7 0 0 . 1 4 0 0 . 1 0 9 0 . 9 4 9 N B 21 , 0 , 3 18 , 0 , 6 14 , 0 , 1 0 13 , 0 , 1 1 10 , 1 0 , 4 0 . 0 0 0 √ 0 . 0 0 1 √ 0 . 1 7 2 0 . 2 2 8 0 . 0 1 2 √ N N G E 14 , 0 , 1 0 17 , 0 , 7 14 , 0 , 1 0 18 , 0 , 6 8 , 1 0 , 6 0 . 1 8 0 0 . 0 0 4 √ 0 . 0 1 0 √ 0 . 0 0 8 √ 0 . 2 6 5 R A N D F 1 2 , 0 , 1 2 15 , 0 , 9 17 , 0 , 7 20 , 0 , 4 10 , 1 0 , 4 0 . 4 3 9 0 . 1 4 5 0 . 0 0 8 √ 0 . 0 0 1 √ 0 . 0 7 4 R I D O R 7 , 0 , 17 14 , 0 , 1 0 15 , 0 , 9 20 , 0 , 4 7 , 1 0 , 7 0 . 8 8 0 0 . 1 8 7 0 . 0 8 4 0 . 0 0 2 √ 0 . 5 9 9 R I P P E R 1 0 , 1 , 13 1 2 , 0 , 1 2 18 , 0 , 6 19 , 0 , 5 7 , 1 0 , 7 0 . 8 1 1 0 . 3 6 3 0 . 0 0 4 √ 0 . 0 1 0 √ 0 . 4 5 0 PCA, with 24 datasets across a range of parameters for each algorithm. UBP predicts missing v alues with lower error than any of these other methods in the majority of cases. W e also demonstrated that using UBP to impute missing v alues leads to better classification accuracy than any of the other imputation techniques ov er all, especially at higher le vels of sparsity , which is the most important case for imputation. W e demonstrated that UBP is also better- suited for manifold learning than NLPCA with a problem in volving handwritten digits. Ongoing research seeks to demonstrate that better or ganization in the intrinsic v ariables natu- rally leads to better generalization. This has significant potential application in unsupervised learning tasks such as automation. REFERENCES A C U ˜ N A , E D G AR , and C A RO L I NE R O D R I G U E Z . 2004. The treatment of missing values and its ef fect on classifier accuracy . In Classification, Clustering, and Data Mining Applications. Springer Berlin / Heidelberg, pp. 639–647. A D O M A V I C I U S , G ., and A . T U ZH I L I N . 2005. T ow ard the ne xt generation of recommender systems: A surv ey of the state-of-the-art and possible extensions. IEEE transactions on knowledge and data engineer- ing, 17 (6):734–749. ISSN 1041-4347. A L I R EZ A F A R HA N G FA R , L U K A S Z K U RG A N J E N N IF E R D Y . 2008. Impact of imputation of missing values on classification error for discrete data. Pattern Recognition, 41 :3692–3705. A T K E S O N , C H R I S TO P H E R G ., A N D R E W W . M O O R E , and S T E FAN S C H A A L . 1997. Locally weighted learning. Artificial Intelligence Revie w , 11 :11–73. ISSN 0269-2821. B E N G IO , Y O S HU A , P A S C A L L A MB L I N , D A N P O P OV I C I , and H U GO L A R OC H E L L E . 2007. Greedy layer-wise M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N 17 a b F I G U R E 3 . An MLP trained with NLPCA (a) and UBP(b) on digits from the MNIST dataset of handwritten digits. After training, the digits shown here were generated by uniformly sampling ov er the space of intrinsic v alues, and generating an image at each sampled intrinsic point.Although both NLPCA and UBP were able to generate high-quality digits, UBP was able to organize the various styles more effecti vely . Note that the height of the horizontal bar varies from left-to-right. The results from NLPCA do not provide the ability to vary the height of this bar in each of the possible styles of the digit. training of deep networks. In NIPS, pp. 153–160. B E N G IO , Y . , H . S C H W EN K , J . S . S E N ´ E C A L , F . M O R I N , and J . L . G A UV A I N . 2006. Neural probabilistic language models. In Innov ations in Machine Learning. Springer, pp. 137–186. B R E I MA N , L . 2001. Random forests. Machine Learning, 45 (1):5–32. C O H E H , D . , and J . S H AWE - T A Y L O R . 1990. Daugman’ s g abor transform as a simple generati ve back propagation network. Electronics Letters, 26 (16):1241–1243. C O H E N , W I L L I A M W . 1995. Fast ef fectiv e rule induction. In In Proceedings of the T welfth International Conference on Machine Learning, Morgan Kaufmann, pp. 115–123. E R H A N , D U M I T RU , P IE R R E - A N TO I N E M A N ZA G O L , Y OS H U A B E N G I O , S A M Y B E N G I O , and P A SC A L V I N - C E N T . 2009. The difficulty of training deep architectures and the effect of unsupervised pre-training. Journal of Machine Learning Research - Proceedings T rack, 5 :153–160. F R A N K , A . , and A . A S U N C IO N . 2010. UCI machine learning repository . http://archive.ics.uci. edu/ml . G A I N ES , B R I A N R . , and P AU L C O M PT O N . 1995. Induction of ripple-down rules applied to modeling large databases. Journal of Intelligent Information Systems, 5 :211–228. ISSN 0925-9902. G A S H LE R , M I C H A EL , D A N V E N T U RA , and T O N Y M A RT I N EZ . 2008. Iterativ e non-linear dimensionality reduction with manifold sculpting. In Adv ances in Neural Information Processing Systems 20. MIT Press, pp. 513–520. G A S H LE R , M IC H A E L S . 2011. W affles: A machine learning toolkit. Journal of Machine Learning Re- search, MLOSS 12 :2383–2387. H I N TO N , G . E . 1988. Generati ve back-propagation. In Abstracts 1st INNS. H I N TO N , G . E ., and R . R . S A L A K HU T D I N OV . 2006. Reducing the dimensionality of data with neural netw orks. Science, 28 (313 (5786)):504–507. J O N E S , M I CH A E L P . 1996. Indicator and stratificaion methods for missing explanatory variables in multiple linear regression. Journal of the American Statistical Association, 91 :222–230. K O R E N , Y ., R . B E LL , and C . V O L I N S K Y . 2009. Matrix factorization techniques for recommender systems. Computer , 42 (8):30–37. ISSN 0018-9162. L A K S HM I N A RAY A N , K . , S. A . H A R P , R . G O L D M A N , T. S A M A D , and O T H ER S . 1996. Imputation of missing data using machine learning techniques. In Proceedings of the Second International Conference on 18 M I S S IN G V A L U E I M P U T A T I O N W I T H U N S U P E RV IS E D B AC K P RO PAG A T I O N Knowledge Disco very and Data Mining, pp. 140–145. L E E , J U N , and C H RI S T O P H E G I R AU D - C AR R I E R . 2011. A metric for unsupervised metalearning. Intelligent Data Analysis, 15 (6):827–841. L I , D A N , J I T E N D E R D E O G U N , W I L L I AM S P AU L D I N G , and B I L L S H U AR T . 2004. T owards missing data imputation: A study of fuzzy k-means clustering method. In Rough Sets and Current T rends in Computing, V olume 3066 of Lecture Notes in Computer Science . Springer Berlin / Heidelberg, pp. 573–579. L I , Q . , Y . P . C H E N , and Z . L I N . 2008. Filtering T echniques For Selection of Contents and Products. In Per- sonalization of Interactiv e Multimedia Services: A Research and de velopment Perspecti ve, Nov a Science Publishers. ISBN 978-1-60456-680-2. L I T T LE , R . J .A . , and D . B . R U B IN . 2002. Statistical analysis with missing data. W iley series in probability and mathematical statistics. Probability and mathematical statistics. W iley . ISBN 9780471183860. L U E N GO , J . 2011. Soft Computing based learning and Data Analysis: Missi ng V alues and Data Complexity . Ph. D. thesis, Department of Computer Science and Artificial Intelligence, Univ ersity of Granada. M A RT I N , B R E N T . 1995. Instance-based learning: nearest neighbour with generalisation. T echnical Report 95/18, Univ ersity of W aikato, Department of Computer Science. Q U I NL A N , J . R . 1989. Unkno wn attrib ute values in induction. In Proceedings of the sixth international workshop on Machine learning, pp. 164–168. Q U I NL A N , J . R O S S . 1993. C4.5: Programs for Machine Learning. Morgan Kaufmann, San Mateo, CA, USA. R U B I N , D . B. 1987. Multiple Imputation for Nonresponse in Surveys. W iley . R U M E L H A RT , D . E ., G . E . H I N T O N , and R . J . W IL L I A M S . 1986. Learning representations by back-propagating errors. Nature, 323 :9. S A RW A R , B . , G . K A RY P I S , J . K O N S T A N , and J . R E ID L . 2001. Item-based collaborative filtering recommen- dation algorithms. In Proceedings of the 10th international conference on W orld W ide W eb, ACM. ISBN 1581133480. pp. 285–295. S A Y Y A D S HI R A B AD , J ., and T. J. M E N Z I ES . 2005. The PR OMISE Repository of Software Engineering Databases. School of Information T echnology and Engineering, Uni versity of Ottaw a, Canada. http: //promise.site.uottawa.ca/SERepository . S C H A FE R , J . L ., and J . W . G R A H A M . 2002. Missing data: Our view of the state of the art. Psychological methods, 7 (2):147. ISSN 1939-1463. S C H O LZ , M . , F. K A P L A N , C . L . G U Y , J . K O P KA , and J . S E L B I G . 2005. Non-linear pca: a missing data approach. Bioinformatics, 21 (20):3887–3895. S H A F ER , J . L . 1997. Analysis of Incomplete Multiv ariate Data. Chapman and Hall, London. T A K ´ A C S , G ., I . P I L ´ A S Z Y , B . N ´ E M E T H , and D . T I K K . 2009. Scalable collaborative filtering approaches for large recommender systems. The Journal of Machine Learning Research, 10 :623–656. T E N E NB AU M , J O S H UA B . , V I N D E S I LV A , and J O HN C . L A N G F OR D . 2000. A global geometric frame work for nonlinear dimensionality reduction. Science, 290 :2319–2323. W E R B OS , P . J . 1990. Backpropag ation through time: What it does and how to do it. Proceedings of the IEEE, 78 (10):1550–1560. ISSN 0018-9219. W I T T EN , I A N H ., and E I B E F R A N K . 2005. Data Mining: Practical machine learning tools and techniques (2nd ed.). Morgan Kaufmann, San Fransisco. Z H A N G , Z ., and J . W A N G . 2007. MLLE: Modified locally linear embedding using multiple weights. Adv ances in Neural Information Processing Systems, 19 :1593. ISSN 1049-5258.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment