Deep and Wide Multiscale Recursive Networks for Robust Image Labeling

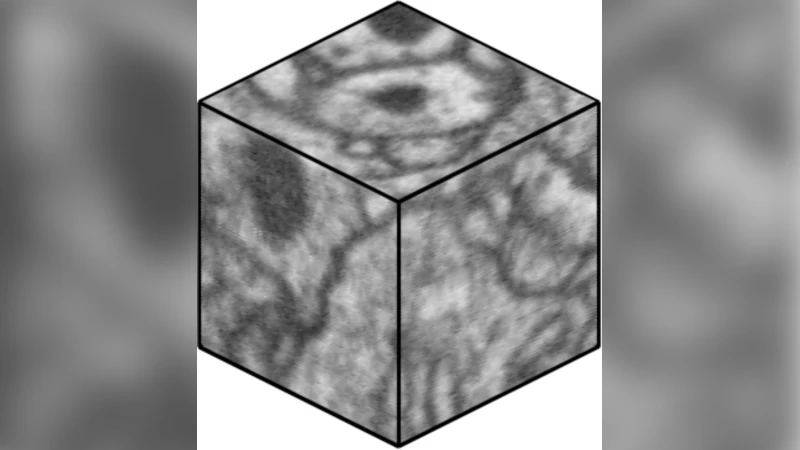

Feedforward multilayer networks trained by supervised learning have recently demonstrated state-of-the-art performance on image labeling problems such as boundary prediction and scene parsing. As even very low error rates can limit practical usage of such systems, methods that perform closer to human accuracy remain desirable. In this work, we propose a new type of network with the following properties that address what we hypothesize to be limiting aspects of existing methods: (1) a “wide” structure with thousands of features, (2) a large field of view, (3) recursive iterations that exploit statistical dependencies in label space, and (4) a parallelizable architecture that can be trained in a fraction of the time compared to benchmark multilayer convolutional networks. For the specific image labeling problem of boundary prediction, we also introduce a novel example weighting algorithm that improves segmentation accuracy. Experiments in the challenging domain of connectomic reconstruction of neural circuitry from 3D electron microscopy data show that these “Deep And Wide Multiscale Recursive” (DAWMR) networks lead to new levels of image labeling performance. The highest performing architecture has twelve layers, interwoven supervised and unsupervised stages, and uses an input field of view of 157,464 voxels ($54^3$) to make a prediction at each image location. We present an associated open source software package that enables the simple and flexible creation of DAWMR networks.

💡 Research Summary

The paper introduces Deep and Wide Multiscale Recursive (DAWMR) networks, a novel architecture designed to push image‑labeling performance, particularly for 3‑D electron‑microscopy (EM) boundary prediction, closer to human‑level accuracy while keeping training time practical. The authors identify four perceived shortcomings of existing multilayer convolutional networks: (1) narrow feature representations (few filters per layer), (2) limited field‑of‑view (FOV), (3) lack of explicit modeling of statistical dependencies among neighboring labels, and (4) prohibitively long training times when scaling up. DAWMR addresses each point directly.

First, the “wide” aspect is realized by learning thousands of features per layer using unsupervised vector‑quantization (VQ). A dictionary is learned with a greedy orthogonal matching pursuit (OMP‑1) algorithm, and inputs are then encoded against that dictionary with soft thresholding to produce high‑dimensional encodings. Because the dictionary can contain many atoms, the network’s capacity to capture diverse image patterns is dramatically increased compared with prior 3‑D CNNs that typically use 12–48 filters per layer.
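The dictionary learning and encoding steps can be sketched in NumPy as follows. This is a minimal illustration, not the authors' implementation: the function names, the random initialization, the re-seeding of unused atoms, the one-sided threshold, and the value of `alpha` are all assumptions made for the example.

```python
import numpy as np

def learn_dictionary_omp1(X, n_atoms, n_iters=10, seed=0):
    """Learn unit-norm atoms with OMP-1 style alternating updates.

    X: (n_samples, dim) array of flattened patches.
    Returns D: (n_atoms, dim). All hyperparameters are illustrative.
    """
    rng = np.random.default_rng(seed)
    D = rng.standard_normal((n_atoms, X.shape[1]))
    D /= np.linalg.norm(D, axis=1, keepdims=True)
    for _ in range(n_iters):
        proj = X @ D.T                            # (n_samples, n_atoms)
        best = np.argmax(np.abs(proj), axis=1)    # one active atom per sample
        coef = proj[np.arange(len(X)), best]      # its coefficient
        D_new = np.zeros_like(D)
        np.add.at(D_new, best, coef[:, None] * X) # accumulate per-atom updates
        unused = np.linalg.norm(D_new, axis=1) < 1e-12
        # Assumption: atoms that won no samples are re-seeded randomly.
        D_new[unused] = rng.standard_normal((unused.sum(), X.shape[1]))
        D = D_new / np.linalg.norm(D_new, axis=1, keepdims=True)
    return D

def soft_threshold_encode(X, D, alpha=0.5):
    """Encode patches as max(0, <x, d_k> - alpha) for every atom d_k."""
    return np.maximum(0.0, X @ D.T - alpha)
```

With thousands of atoms, each patch maps to a vector with thousands of non-negative entries, most of them zero, which is the sense in which the representation is "wide".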

Second, the architecture is multiscale: the raw 3‑D volume and several down‑sampled versions (simple averaging) are processed in parallel. Each scale passes through its own VQ, pooling, and whitening stages, after which the resulting feature maps are concatenated. This yields an effective receptive field of 54³ voxels (≈157 k voxels) for a single prediction, allowing the network to resolve local ambiguities using global context.
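As a rough illustration of the multiscale input and the field-of-view arithmetic, the sketch below builds down-sampled copies of a volume by block averaging. The scale factors `(1, 2, 4)` and the function names are assumptions for the example; the summary does not state which factors the authors use.

```python
import numpy as np

def downsample_by_averaging(volume, factor):
    """Average non-overlapping factor^3 blocks, cropping any remainder."""
    z, y, x = (s // factor * factor for s in volume.shape)
    v = volume[:z, :y, :x]
    v = v.reshape(z // factor, factor, y // factor, factor, x // factor, factor)
    return v.mean(axis=(1, 3, 5))

def multiscale_pyramid(volume, factors=(1, 2, 4)):
    """Return one volume per scale; the factors here are hypothetical."""
    return [downsample_by_averaging(volume, f) for f in factors]

# Field of view quoted in the text: a cube with 54 voxels per side.
fov_side = 54
fov_voxels = fov_side ** 3  # 157464 voxels
```

Each level would then go through its own feature-extraction stages before the per-scale feature maps are concatenated.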

Third, DAWMR introduces recursion. A core network (feature extraction + classifier) is applied repeatedly. The output label map from the first instance N₁ is fed, together with the original image, into a second instance N₂, and so on up to Nₖ. Each iteration expands the effective FOV and, crucially, lets the model learn statistical regularities in label space (e.g., continuity of boundaries, co‑occurrence of neighboring classes) that are otherwise only implicit in standard CNNs. Empirically, two to three recursive passes reduced boundary‑prediction error by more than 30% relative to a single‑pass baseline.
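The control flow of the recursion can be sketched as below. The helper `run_recursive` and the stand-in `toy_network` are hypothetical; a real instance would be a trained feature-extraction and classifier stage, and how the image and label map are combined (here, stacked as channels) is an assumption.

```python
import numpy as np

def run_recursive(image, networks):
    """Apply N_1..N_k in sequence.

    N_1 sees only the image; every later instance sees the image
    stacked with the previous instance's label map (channels first).
    """
    labels = None
    history = []
    for net in networks:
        channels = [image] if labels is None else [image, labels]
        labels = net(np.stack(channels, axis=0))
        history.append(labels)
    return labels, history

def toy_network(stacked):
    """Hypothetical stand-in for one feature-extraction + classifier stage."""
    return 1.0 / (1.0 + np.exp(-stacked.mean(axis=0)))
```

Because each instance is a separate network, the instances can be trained one after another, with each one fitted to the outputs of its predecessor.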

The classifier component is a shallow multilayer perceptron (MLP) with one hidden layer trained by mini‑batch stochastic gradient descent. The authors found the MLP outperformed linear SVMs, especially when predicting multiple labels per voxel (three‑dimensional connectivity vectors).
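A one-hidden-layer MLP with three sigmoid outputs per example, trained by mini-batch SGD, might look like the following. This is a sketch under assumed choices (tanh hidden units, cross-entropy loss, the learning rate, and the layer sizes are not taken from the paper).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_mlp(X, Y, n_hidden=32, lr=0.1, epochs=50, batch=32, seed=0):
    """Mini-batch SGD on mean cross-entropy; Y holds binary targets."""
    rng = np.random.default_rng(seed)
    W1 = rng.standard_normal((X.shape[1], n_hidden)) * 0.1
    b1 = np.zeros(n_hidden)
    W2 = rng.standard_normal((n_hidden, Y.shape[1])) * 0.1
    b2 = np.zeros(Y.shape[1])
    for _ in range(epochs):
        order = rng.permutation(len(X))
        for start in range(0, len(X), batch):
            idx = order[start:start + batch]
            xb, yb = X[idx], Y[idx]
            h = np.tanh(xb @ W1 + b1)             # hidden activations
            p = sigmoid(h @ W2 + b2)              # one probability per edge
            g_out = (p - yb) / len(idx)           # gradient w.r.t. output logits
            g_h = (g_out @ W2.T) * (1.0 - h ** 2) # backprop through tanh
            W2 -= lr * h.T @ g_out
            b2 -= lr * g_out.sum(axis=0)
            W1 -= lr * xb.T @ g_h
            b1 -= lr * g_h.sum(axis=0)
    return W1, b1, W2, b2

def predict(params, X):
    W1, b1, W2, b2 = params
    return sigmoid(np.tanh(X @ W1 + b1) @ W2 + b2)
```

Sharing one hidden layer across the three outputs lets the edge predictions for a voxel draw on common features, which is one plausible reason a multi-output MLP could do better than independent linear classifiers.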

A further contribution, separate from the four architectural properties, is a task‑specific example‑weighting scheme called Local Error Density (LED) weighting. In boundary prediction, errors tend to concentrate on thin membranes or junctions. LED assigns higher loss weights to voxels that have historically high error density, forcing the optimizer to focus learning on these difficult regions. Experiments show that LED improves both Rand error and Variation of Information metrics, translating into more reliable downstream segmentation and agglomeration steps in connectomics pipelines.
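The summary does not give the exact LED formula, so the following is only one plausible reading of "weight by local error density": threshold the current prediction, mark misclassified voxels, average that error map over a small neighbourhood, and turn the result into per-voxel loss weights. The window radius, the `gain` factor, and the normalization to mean 1 are all assumptions.

```python
import numpy as np

def local_error_density(errors, radius=1):
    """Mean of a binary error map over a (2r+1)^3 window (edge-padded)."""
    padded = np.pad(errors.astype(float), radius, mode="edge")
    size = 2 * radius + 1
    nz, ny, nx = errors.shape
    density = np.zeros(errors.shape, dtype=float)
    for dz in range(size):
        for dy in range(size):
            for dx in range(size):
                density += padded[dz:dz + nz, dy:dy + ny, dx:dx + nx]
    return density / size ** 3

def led_weights(pred, truth, radius=1, gain=4.0, threshold=0.5):
    """Hypothetical weighting: 1 + gain * density, rescaled to mean 1."""
    errors = (pred > threshold) != (truth > threshold)
    w = 1.0 + gain * local_error_density(errors, radius)
    return w / w.mean()
```

The resulting weights would multiply the per-voxel loss in the next round of classifier training, so regions with clustered mistakes contribute more to the gradient.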

Training efficiency is achieved through a hybrid parallel implementation. Feature extraction (VQ encoding) runs on multi‑core CPU clusters, exploiting data parallelism across voxels, while the MLP classifier is executed on GPUs using highly optimized matrix‑multiply kernels. This division allows the full DAWMR model (including up to three recursive iterations) to be trained in roughly one day, compared with the two weeks required for a comparable 3‑D CNN on the same hardware.

The authors validate the approach on a large FIB‑SEM dataset of Drosophila melanogaster brain tissue (isotropic 8 nm voxels, volume ≈ 1000³ voxels). Ground‑truth connectivity labels encode three binary edges per voxel. Using a standard train/validation/test split, they demonstrate that a single‑iteration DAWMR already surpasses a strong 3‑D CNN baseline by 2–3 % in pixel‑wise accuracy. Adding recursive iterations yields further gains, while LED weighting adds another measurable improvement.
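To make "three binary edges per voxel" concrete, the sketch below derives nearest-neighbour connectivity labels from an integer segmentation: an edge is 1 when a voxel and its predecessor along an axis belong to the same non-background segment. The edge direction convention and the use of 0 as background are assumptions, not details confirmed by the summary.

```python
import numpy as np

def segmentation_to_affinities(seg):
    """seg: (Z, Y, X) integer labels, 0 = background.

    Returns a (3, Z, Y, X) uint8 array with one binary edge per axis,
    linking each voxel to its predecessor along z, y, and x.
    """
    aff = np.zeros((3,) + seg.shape, dtype=np.uint8)
    aff[0, 1:] = (seg[1:] == seg[:-1]) & (seg[1:] > 0)
    aff[1, :, 1:] = (seg[:, 1:] == seg[:, :-1]) & (seg[:, 1:] > 0)
    aff[2, :, :, 1:] = (seg[:, :, 1:] == seg[:, :, :-1]) & (seg[:, :, 1:] > 0)
    return aff
```

A classifier trained on such targets outputs a three-component vector per voxel, and a segmentation can be recovered by grouping voxels connected through high-probability edges.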

In addition to the algorithmic contributions, the paper releases an open‑source software package that automates dictionary learning, multiscale preprocessing, recursive network construction, and distributed training. The package abstracts away the low‑level parallelism, making it straightforward for other researchers to experiment with different depths, widths, or loss functions.

Overall, DAWMR represents a comprehensive answer to the four identified limitations: it scales feature capacity (wide), captures long‑range context (large FOV, multiscale), explicitly models label dependencies (recursion), and remains computationally tractable (parallel hybrid training). The work is especially relevant for connectomics, where even a single pixel error can propagate into large reconstruction mistakes, and where rapid retraining is valuable for iterative annotation workflows. Future directions suggested include deeper recursion, application to multi‑class semantic segmentation, and integration with end‑to‑end differentiable agglomeration modules.
