Testing the isotropy of high energy cosmic rays using spherical needlets

For many decades, ultrahigh energy charged particles of unknown origin that can be observed from the ground have been a puzzle for particle physicists and astrophysicists. As an attempt to discriminate among several possible production scenarios, astrophysicists try to test the statistical isotropy of the directions of arrival of these cosmic rays. At the highest energies, they are supposed to point toward their sources with good accuracy. However, the observations are so rare that testing the distribution of such samples of directional data on the sphere is nontrivial. In this paper, we choose a nonparametric framework that makes weak hypotheses on the alternative distributions and, in turn, allows the detection of various and possibly unexpected forms of anisotropy. We explore two particular procedures. Both are derived from fitting the empirical distribution with wavelet expansions of densities. We use the wavelet frame introduced by [SIAM J. Math. Anal. 38 (2006b) 574-594 (electronic)], the so-called needlets. The expansions are truncated at scale indices no larger than some ${J^{\star}}$, and the $L^p$ distances between those estimates and the null density are computed. One family of tests (called Multiple) is based on the idea of testing the distance from the null for each choice of $J=1,\ldots,{J^{\star}}$, whereas the so-called PlugIn approach is based on the single full ${J^{\star}}$ expansion, but with thresholded wavelet coefficients. We describe the practical implementation of these two procedures and compare them to other methods in the literature. As alternatives to isotropy, we consider both very simple toy models and more realistic nonisotropic models based on physics-inspired simulations. The Monte Carlo study shows good performance of the Multiple test, even at moderate sample size, for a wide range of alternative hypotheses and for different choices of the parameter ${J^{\star}}$.
On the 69 most energetic events published by the Pierre Auger Collaboration, the needlet-based procedures suggest statistical evidence for anisotropy. Using several values for the parameters of the methods, our procedures yield $p$-values below 1%, but with uncontrolled multiplicity issues. The flexibility of this method and the possibility to modify it to take into account a large variety of extensions of the problem make it an interesting option for future investigation of the origin of ultrahigh energy cosmic rays.

💡 Research Summary

The paper addresses the fundamental astrophysical problem of determining whether the arrival directions of ultra‑high‑energy cosmic rays (UHECRs) are isotropically distributed on the celestial sphere. Because the number of observed events at the highest energies is extremely limited (tens of events), classical statistical tests that rely on large samples are inadequate. The authors therefore develop a fully non‑parametric testing framework that makes minimal assumptions about the alternative distribution and is capable of detecting a wide variety of unexpected anisotropies.

The core methodological tool is the spherical needlet system, a localized wavelet frame on the sphere introduced by Narcowich, Petrushev and Ward (2006). Needlets provide a multiscale decomposition with excellent spatial localization and near‑orthogonality, which makes them well suited for density estimation on the sphere. Given a sample of directions $\{X_i\}_{i=1}^n$, the empirical distribution is projected onto the needlet frame, yielding estimated coefficients $\hat\beta_{jk}$. Truncating the expansion at a maximal scale $J^\star$ produces an estimator $\hat f_{J^\star}$ of the underlying density. The null hypothesis $H_0$ corresponds to the uniform density $f_0$. The test statistics are $L^p$ distances (primarily $L^2$) between $\hat f_{J}$ (or a thresholded version) and $f_0$.
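For orientation, the quantities described above can be written out as follows. This is a sketch in the notation commonly used for needlet density estimation, not a transcription of the paper: the index ranges, the normalization, and the assumption that the needlets $\psi_{jk}$ have zero mean (so that the constant term of the expansion equals $f_0 = 1/(4\pi)$) are assumptions made here.

$$
\hat\beta_{jk} = \frac{1}{n}\sum_{i=1}^{n}\psi_{jk}(X_i), \qquad
\hat f_J(x) = f_0(x) + \sum_{j \le J}\sum_{k}\hat\beta_{jk}\,\psi_{jk}(x), \qquad
D_J = \|\hat f_J - f_0\|_p = \Big(\int_{S^2} \big|\hat f_J(x)-f_0(x)\big|^p\,dx\Big)^{1/p}.
$$

Under these assumptions each $\hat\beta_{jk}$ is an unbiased estimate of the corresponding coefficient of the true density, and $D_J$ vanishes in expectation only through sampling noise when $H_0$ holds.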

Two families of tests are proposed:

- Multiple Test: For each scale $J=1,\dots,J^\star$ the distance $D_J=\|\hat f_J-f_0\|_p$ is computed and compared to its null distribution obtained via Monte‑Carlo simulation or bootstrap. The collection of p‑values is then combined using a multiplicity correction (Bonferroni, Holm, etc.). This approach exploits information across scales and is particularly powerful when anisotropy manifests at an intermediate resolution.

- Plug‑In Test: The full expansion up to $J^\star$ is used, but coefficients whose absolute value falls below a data‑driven threshold $\tau_j$ are set to zero (hard or soft thresholding). The resulting sparse estimator $\tilde f_{J^\star}$ yields a single distance statistic $D_{\text{plug}}$. This method reduces variance caused by noisy high‑frequency coefficients and is computationally simpler, though it can be less sensitive to very subtle departures from isotropy.
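The logic of the Multiple test can be illustrated with a minimal Python sketch. It is not the authors' implementation: as a stand-in for the needlet expansion it uses a plain band-limited (spherical-harmonic) projection estimate with multipoles $l \le 2^J$, for which the squared $L^2$ distance to the uniform density has a closed form in terms of Legendre polynomials of the pairwise cosines. The function names, the band limit `base**J`, the uniform null (no detector exposure) and the Bonferroni combination are all assumptions made for illustration.

```python
import numpy as np
from numpy.polynomial.legendre import legval


def sample_isotropic(n, rng):
    """Draw n directions uniformly on the unit sphere (normalized Gaussians)."""
    v = rng.standard_normal((n, 3))
    return v / np.linalg.norm(v, axis=1, keepdims=True)


def squared_l2_distances(x, j_star, base=2):
    """Return D_J^2 = ||f_hat_J - f_0||_2^2 for J = 1..j_star.

    f_hat_J is the projection estimate band-limited to l <= base**J
    (an assumed stand-in for the needlet expansion) and f_0 = 1/(4*pi).
    """
    gram = np.clip(x @ x.T, -1.0, 1.0)  # cosines of all pairwise angles
    out = []
    for j in range(1, j_star + 1):
        l_max = base ** j
        coef = (2 * np.arange(l_max + 1) + 1) / (4 * np.pi)
        coef[0] = 0.0  # the l = 0 term is exactly the uniform density f_0
        # Mean over the n x n matrix is (1/n^2) * sum over all pairs (i, i').
        out.append(legval(gram, coef).mean())
    return np.array(out)


def multiple_test(x, j_star=3, n_sim=199, alpha=0.05, seed=0):
    """Monte Carlo p-value per scale, combined with a Bonferroni correction."""
    rng = np.random.default_rng(seed)
    observed = squared_l2_distances(x, j_star)
    null = np.array([
        squared_l2_distances(sample_isotropic(len(x), rng), j_star)
        for _ in range(n_sim)
    ])
    p_values = (1 + (null >= observed).sum(axis=0)) / (n_sim + 1)
    reject = bool(p_values.min() <= alpha / j_star)
    return p_values, reject


# Usage: 40 directions tightly clustered around the north pole.
rng = np.random.default_rng(1)
cluster = 0.1 * sample_isotropic(40, rng) + np.array([0.0, 0.0, 1.0])
cluster /= np.linalg.norm(cluster, axis=1, keepdims=True)
p_values, reject = multiple_test(cluster)
```

A Plug‑In variant would replace the per-scale statistics with a single distance computed after thresholding the coefficients; that step needs the needlet coefficients themselves and is not covered by this simplified sketch.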

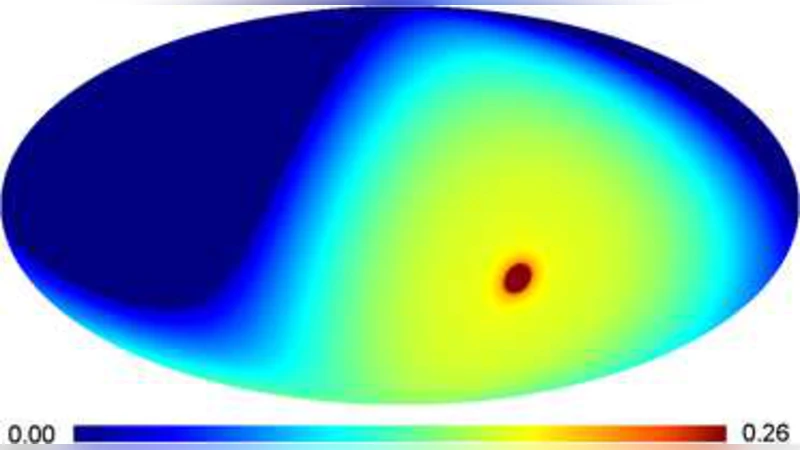

The authors construct a suite of realistic alternative models to evaluate performance. The baseline null model assumes sources uniformly distributed over the sphere, while alternatives include a small number of point‑like sources uniformly placed within a 70 Mpc sphere, clustered source distributions, and models incorporating magnetic‑field deflections that smear source directions. Energy spectra follow a power law $E^{-\alpha}$ and exposure is modeled after the Pierre Auger Observatory’s sky coverage.

Extensive Monte‑Carlo experiments (thousands of replicates for each scenario) reveal that the Multiple test attains high power (often >80 %) even with sample sizes as low as 30–50 events, especially when the anisotropy is localized at a scale corresponding to $J\approx 5$ to $7$. The Plug‑In test performs comparably for stronger signals but loses power for weak, diffuse anisotropies. Both methods outperform traditional techniques such as two‑point angular correlation functions, K‑functions, and spherical harmonic analyses under the same low‑sample conditions.

Application to the real data set of 69 highest‑energy events published by the Pierre Auger Collaboration demonstrates the practical relevance of the approach. Across a range of parameter choices (different $J^\star$ and thresholds) the needlet‑based tests consistently yield p‑values below 1 %, suggesting a statistically significant deviation from isotropy. The authors acknowledge that the multiplicity arising from trying several parameter values is not controlled, and they caution that the reported p‑values may be optimistic without a formal correction.

In conclusion, the paper provides a flexible, theoretically sound, and computationally efficient framework for testing isotropy on the sphere with very limited data. Its strengths lie in the multiscale nature of needlets, the ability to capture both localized and diffuse anisotropies, and the straightforward implementation using fast spherical harmonic transforms. Future work suggested includes data‑driven selection of the maximal scale and thresholds (e.g., via Bayesian model selection), incorporation of measurement uncertainties and exposure variability directly into the testing procedure, and development of false‑discovery‑rate controls tailored to the multiscale testing setting. This methodology opens new avenues for probing the origins of UHECRs and can be adapted to other astrophysical problems involving sparse directional data.
