Bidirectional Recursive Neural Networks for Token-Level Labeling with Structure

Authors: Ozan İrsoy, Claire Cardie

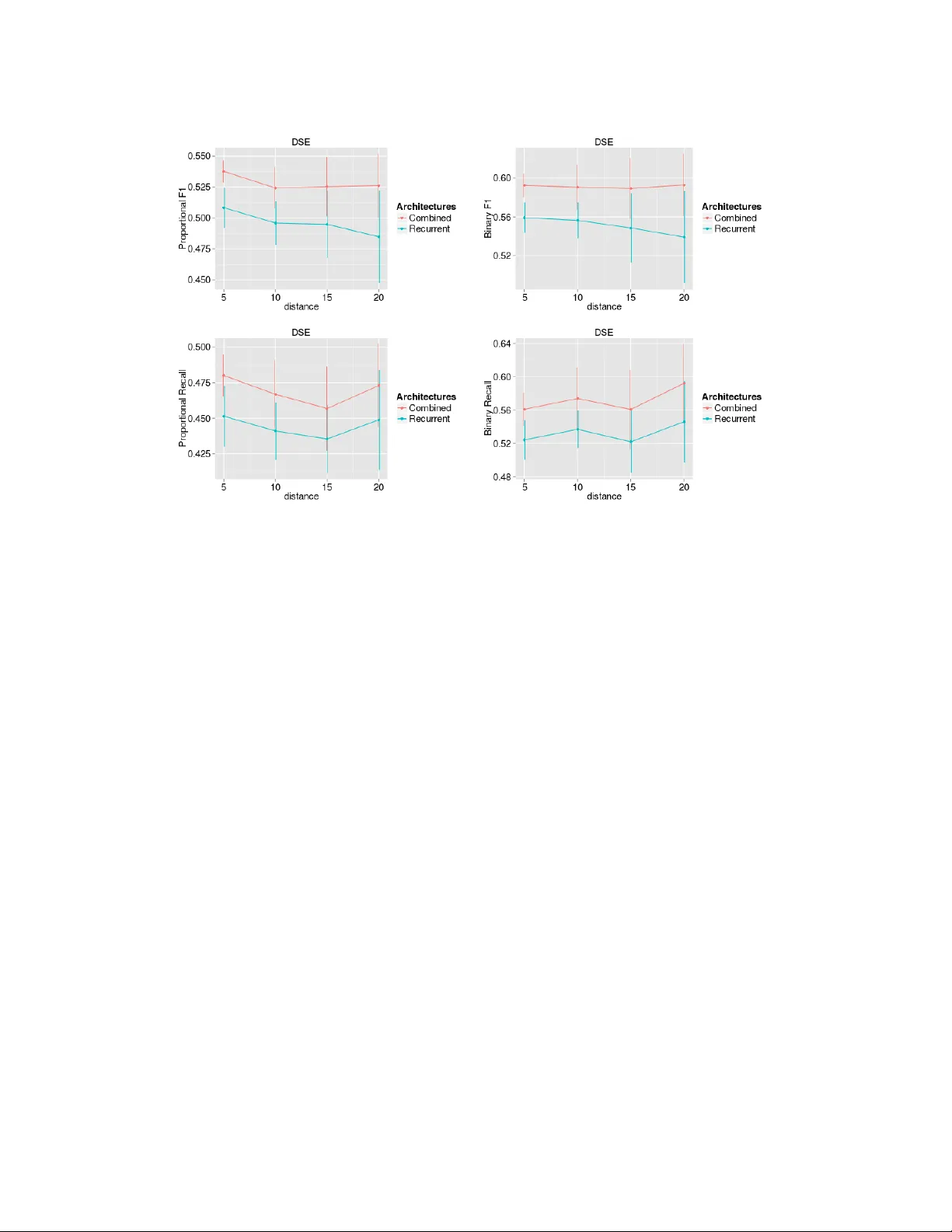

Ozan İrsoy, Department of Computer Science, Cornell University, Ithaca, NY 14853, oirsoy@cs.cornell.edu
Claire Cardie, Department of Computer Science, Cornell University, Ithaca, NY 14853, cardie@cs.cornell.edu

Abstract

Recently, deep architectures such as recurrent and recursive neural networks have been successfully applied to various natural language processing tasks. Inspired by bidirectional recurrent neural networks, which use representations that summarize the past and future around an instance, we propose a novel architecture that aims to capture the structural information around an input and use it to label instances. We apply our method to the task of opinion expression extraction, where we employ the binary parse tree of a sentence as the structure and word vector representations as the initial representation of a single token. We conduct preliminary experiments to investigate its performance and compare it to the sequential approach.

1 Introduction

Deep connectionist architectures involve many layers of nonlinear information processing. This allows them to incorporate meaning representations such that each succeeding layer potentially has a more abstract meaning. Recent advancements in efficiently training deep neural networks have enabled their application to many problems, including those in natural language processing (NLP). A key advance for application to NLP tasks was the invention of word embeddings, which represent a single word as a dense, low-dimensional vector in a meaning space [1] and from which numerous problems have benefited [2, 3]. In this work, we are interested in deep learning approaches for NLP sequence tagging tasks, in which the goal is to label each token of the given input sentence.

Recurrent neural networks constitute one important class of naturally deep architecture that has been applied to many sequential prediction tasks.
In the context of NLP, recurrent neural networks view a sentence as a sequence of tokens. With this view, they have been successfully applied to tasks such as language modeling [4] and spoken language understanding [5]. Since classical recurrent neural networks only incorporate information from the past (i.e. preceding tokens), bidirectional variants have been proposed to incorporate information from both the past and the future (i.e. following tokens) [6]. Bidirectionality is especially useful for NLP tasks, since information provided by the following tokens is usually helpful when making a decision on the current token.

Even though bidirectional recurrent neural networks rely on information from both preceding and following words, capturing long-term dependencies might be difficult due to the vanishing gradient problem [7]: relevant information that is distant from the token under investigation might be lost. On the other hand, depending on the task, a relevant token might be structurally close to the token under investigation even though it is far away in the sequence. For example, a verb and its corresponding object might be far apart in terms of tokens if there are many adjectives before the object, but they would be very close in the parse tree. In addition to this distance-based argument, structure also provides a different way of computing representations: it allows for compositionality, i.e. the meaning of a phrase is determined via a composition of the meanings of the words that comprise it. As a result, we believe that many NLP tasks might benefit from explicitly incorporating the structural information associated with a token.

Table 1: An example sentence with labels

| The | Committee | , | as | usual | , | and | as |
|---|---|---|---|---|---|---|---|
| B_HOLDER | I_HOLDER | O | B_ESE | I_ESE | O | O | O |

| expected | by | a | group | of | activists | and | blog | authors | , |
|---|---|---|---|---|---|---|---|---|---|
| O | O | O | O | O | O | O | O | O | O |

| has | refused | to | make | any | statements | . |
|---|---|---|---|---|---|---|
| B_DSE | I_DSE | I_DSE | I_DSE | I_DSE | I_DSE | O |
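Table 1 marks each token with a BIO tag (the scheme is described in Section 2.1), while the evaluation in Section 4 scores whole expressions. The following is a minimal sketch of how such a tag sequence can be decoded into labeled spans. The function name `bio_to_spans` and the `B_`/`I_` string format are illustrative assumptions, not part of the paper. The sketch treats an `I_` tag that does not continue a span of the same label as the start of a new span, which is one common convention among several.

```python
from typing import List, Tuple


def bio_to_spans(tags: List[str]) -> List[Tuple[str, int, int]]:
    """Decode BIO tags ('B_DSE', 'I_DSE', 'O', ...) into (label, start, end) spans.

    `end` is exclusive. An I_ tag that does not continue an open span of the
    same label starts a new span (an assumed convention, for illustration).
    """
    spans = []
    label, start = None, 0
    for i, tag in enumerate(tags):
        if tag == "O":
            if label is not None:          # close the currently open span
                spans.append((label, start, i))
            label = None
            continue
        prefix, cur = tag.split("_", 1)
        if prefix == "B" or cur != label:  # a new span begins here
            if label is not None:
                spans.append((label, start, i))
            label, start = cur, i
    if label is not None:                  # span running to the end of the sentence
        spans.append((label, start, len(tags)))
    return spans


# The example sentence and labels from Table 1.
tokens = ("The Committee , as usual , and as expected by a group of activists "
          "and blog authors , has refused to make any statements .").split()
tags = (["B_HOLDER", "I_HOLDER", "O", "B_ESE", "I_ESE"]
        + ["O"] * 13
        + ["B_DSE"] + ["I_DSE"] * 5 + ["O"])
assert len(tags) == len(tokens)

for label, s, e in bio_to_spans(tags):
    print(label, " ".join(tokens[s:e]))
# HOLDER The Committee
# ESE as usual
# DSE has refused to make any statements
```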
Recursive neural networks constitute another class of architecture, one that operates on structured inputs. They have been applied to parsing [8], sentence-level sentiment analysis [9], and paraphrase detection [10]. Given the structural representation of a sentence, e.g. a parse tree, they recursively generate parent representations in a bottom-up fashion, combining tokens to produce representations for phrases and eventually for the whole sentence. The sentence-level representation (or, alternatively, the representations of its phrases) is then used to make a final classification for a given input sentence, e.g. whether it conveys a positive or a negative sentiment. Since recursive neural networks generate representations only for the internal nodes in the structured representation, they are not directly applicable to the token-level labeling tasks in which we are interested.

To this end, we propose and explore an architecture that aims to represent the structural information associated with a single token. In particular, we extend the traditional recursive neural network framework so that it not only generates representations for subtrees (i.e. phrases) and the whole sentence upward, but also propagates representations downward toward the leaves, carrying information about the structural environment of each word. Our method is naturally applicable to any type of labeling task at the word level; however, we limit ourselves to an opinion expression extraction task in this work. In addition, although the method is applicable to any type of positional directed acyclic graph structure (e.g. the dependency parse of a sentence), we limit our attention to binary parse trees [8].

2 Preliminaries

2.1 Task description

Opinion expression identification aims to detect subjective expressions in a given text, along with their characterizations, such as the intensity and sentiment of an opinion, the opinion holder and target, or the topic [11].
This is important for tasks that require a fine-grained opinion analysis, such as opinion-oriented question answering and opinion summarization. In this work, we focus on the detection of direct subjective expressions (DSEs), expressive subjective expressions (ESEs), and opinion holders and targets, as defined in [11]. DSEs consist of explicit mentions of private states or speech events expressing private states, and ESEs consist of expressions that indicate sentiment, emotion, etc., without explicitly conveying them. An example sentence with the appropriate labels is given in Table 1, in which the DSE "has refused to make any statements" explicitly expresses the attitude of the opinion holder ("The Committee"), and the ESE "as usual" indirectly expresses the attitude of the writer.

Previously, opinion extraction has been tackled as a sequence labeling problem. This approach views a sentence as a sequence of tokens labeled using the conventional BIO tagging scheme: B indicates the beginning of an opinion-related expression, I is used for tokens inside the opinion expression, and O indicates tokens outside any opinion-related class. Variants of conditional random field (CRF)-based approaches have been successfully applied with this view [12–14]. Similar to CRF-based methods, recurrent neural networks can be applied to the problem of opinion expression extraction with a sequential interpretation. However, this approach ignores the structural information that is present in a sentence. Therefore, we explore an architecture that incorporates structural information into the final decision.

Figure 1: Binary parse tree of the example sentence

2.2 Word vector representations

Neural network-based approaches require a vector representation for each input token.
In natural language processing, a common way of representing a single token as a vector is to use a "one-hot" vector per token, whose dimensionality equals the vocabulary size, such that the entry corresponding to the token is 1 and all others are 0. This results in a very high-dimensional, sparse representation. Alternatively, a distributed representation maps a token to a real-valued dense vector of smaller size (usually on the order of 100 dimensions). Generally, these representations are learned in an unsupervised manner from a large corpus, e.g. Wikipedia. Various architectures have been explored to learn these embeddings [1, 2, 15, 16], which might have different generalization capabilities depending on the task [17]. The geometry of the induced word vector space might have interesting semantic properties [16]. In this work, we employ such word vector representations.

2.3 Recurrent neural networks

A recurrent neural network is a class of neural network that has recurrent connections, which allow a form of memory. This makes them applicable to sequential prediction tasks with arbitrary spatio-temporal dimensions. This description fits many natural language processing tasks when a single sentence is viewed as a sequence of tokens. In this work, we focus only on Elman-type networks [18].

In the Elman-type network, the hidden layer $h_t$ at time step $t$ is computed from a nonlinear transformation of the current input layer $x_t$ and the previous hidden layer $h_{t-1}$. Then, the final output $y_t$ is computed using the hidden layer $h_t$. One can interpret $h_t$ as an intermediate representation summarizing the past, which is used to make a final decision on the current input.
More formally,

$$h_t = f(W x_t + V h_{t-1} + b) \tag{1}$$

$$y_t = g(W_o h_t + b_o) \tag{2}$$

where $f$ is a nonlinearity such as the sigmoid function, $g$ is the output nonlinearity such as the softmax function, $W$ and $V$ are the weight matrices between the input and hidden layer and among the hidden units themselves (connecting the previous intermediate representation to the current one), respectively, $W_o$ is the output weight matrix, and $b$ and $b_o$ are the bias vectors connected to the hidden and output units, respectively.

Observe that with this definition, we have information only about the past when making a decision on $x_t$. This is limiting for most tasks. A simple way to work around this problem is to include a fixed-size future context around a single input vector. However, this approach requires tuning the context size and ignores future information from outside the context window. Another way to incorporate information about the future is to add bidirectionality to the architecture [6]:

$$h^{\rightarrow}_t = f(W^{\rightarrow} x_t + V^{\rightarrow} h^{\rightarrow}_{t-1} + b^{\rightarrow}) \tag{3}$$

$$h^{\leftarrow}_t = f(W^{\leftarrow} x_t + V^{\leftarrow} h^{\leftarrow}_{t+1} + b^{\leftarrow}) \tag{4}$$

$$y_t = g(W_o^{\rightarrow} h^{\rightarrow}_t + W_o^{\leftarrow} h^{\leftarrow}_t + W_o x_t + b_o) \tag{5}$$

where $W^{\rightarrow}$ and $V^{\rightarrow}$ are the forward weight matrices as before, $W^{\leftarrow}$ and $V^{\leftarrow}$ are their backward counterparts, $W_o^{\rightarrow}$, $W_o^{\leftarrow}$ and $W_o$ are the output matrices, and $b^{\rightarrow}$, $b^{\leftarrow}$ and $b_o$ are biases. In this setting, $h^{\rightarrow}_t$ and $h^{\leftarrow}_t$ can be interpreted as summaries of the past and the future, respectively, around time step $t$. When we make a decision on an input vector, we employ both the initial representation $x_t$ and the two intermediate representations $h^{\rightarrow}_t$ and $h^{\leftarrow}_t$ of the past and the future. Therefore, in the bidirectional case we have perfect information about the sequence (ignoring the practical difficulties of capturing long-term dependencies caused by vanishing gradients), whereas the classical Elman-type network uses only partial information.
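Equations (3) to (5) amount to two independent Elman passes over the sentence, one left to right and one right to left, that meet only at the output layer. The NumPy sketch below illustrates this under stated assumptions. The zero vectors used as the state before the first token and after the last token, the random initialization, and the dictionary keys (`W_f`, `V_b`, `Wo_f`, ...) are hypothetical choices. $f$ is taken to be the rectifier and $g$ the softmax, as in Section 4. The sizes mirror the (50, 75, 75) topology reported in the experiments.

```python
import numpy as np


def relu(z):
    return np.maximum(0.0, z)


def softmax(z):
    e = np.exp(z - z.max())  # shift by the max for numerical stability
    return e / e.sum()


def birnn_forward(X, p, f=relu, g=softmax):
    """Bidirectional Elman network, eqs. (3)-(5).

    X: array of shape (T, d_in), one row per token.
    Returns per-token output distributions (T, n_classes) and both hidden sequences.
    """
    T = X.shape[0]
    h_fwd = np.zeros((T, p["V_f"].shape[0]))
    h_bwd = np.zeros((T, p["V_b"].shape[0]))
    prev = np.zeros(p["V_f"].shape[0])       # assumed zero state before the first token
    for t in range(T):                        # eq. (3): left to right
        prev = f(p["W_f"] @ X[t] + p["V_f"] @ prev + p["b_f"])
        h_fwd[t] = prev
    nxt = np.zeros(p["V_b"].shape[0])        # assumed zero state after the last token
    for t in reversed(range(T)):              # eq. (4): right to left
        nxt = f(p["W_b"] @ X[t] + p["V_b"] @ nxt + p["b_b"])
        h_bwd[t] = nxt
    Y = np.stack([                            # eq. (5): combine past, future and input
        g(p["Wo_f"] @ h_fwd[t] + p["Wo_b"] @ h_bwd[t] + p["Wo"] @ X[t] + p["b_o"])
        for t in range(T)
    ])
    return Y, h_fwd, h_bwd


def init_params(d_in, n_fwd, n_bwd, n_classes, seed=0):
    """Hypothetical small random initialization, for illustration only."""
    rng = np.random.default_rng(seed)

    def w(*shape):
        return rng.normal(0.0, 0.1, size=shape)

    return {
        "W_f": w(n_fwd, d_in), "V_f": w(n_fwd, n_fwd), "b_f": np.zeros(n_fwd),
        "W_b": w(n_bwd, d_in), "V_b": w(n_bwd, n_bwd), "b_b": np.zeros(n_bwd),
        "Wo_f": w(n_classes, n_fwd), "Wo_b": w(n_classes, n_bwd),
        "Wo": w(n_classes, d_in), "b_o": np.zeros(n_classes),
    }


# Topology (50, 75, 75) with 3 output classes (B, I, O) on a 6-token toy input.
params = init_params(50, 75, 75, 3)
X = np.random.default_rng(1).normal(size=(6, 50))
Y, h_fwd, h_bwd = birnn_forward(X, params)
print(Y.shape)  # (6, 3)
```

The forward loop never reads a later token. Perturbing the last token therefore leaves every earlier forward state unchanged, and only the backward states and the outputs can see the change.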
Note that the forward and backward parts of the network are independent of each other until the output layer. This means that during training, after the error terms are backpropagated from the output layer to the hidden layer, the two parts can be thought of as separate and trained with classical backpropagation through time [19].

2.4 Recursive neural networks

Recursive neural networks comprise an architecture in which the same set of weights is recursively applied in a structural setting: given a positional directed acyclic graph, the network visits the nodes in topological order and recursively applies transformations to generate further representations from the previously computed representations of the children. In fact, a recurrent neural network is simply a recursive neural network with a particular structure. Even though they can be applied to any positional directed acyclic graph, we limit our attention to recursive neural networks over positional binary trees, as in [8].

Given a binary tree structure whose leaves have the initial representations, e.g. a parse tree with word vector representations at the leaves, a recursive neural network computes the representation at an internal node $\eta$ as follows:

$$x_\eta = f(W_L x_{l(\eta)} + W_R x_{r(\eta)} + b) \tag{6}$$

where $l(\eta)$ and $r(\eta)$ are the left and right children of $\eta$, $W_L$ and $W_R$ are the weight matrices that connect the left and right children to the parent, and $b$ is a bias vector. Because $W_L$ and $W_R$ are square matrices, and the definition does not distinguish whether $l(\eta)$ and $r(\eta)$ are leaf or internal nodes, this definition has an interesting interpretation: the initial representations at the leaves and the intermediate representations at the nonterminals lie in the same space. In the parse tree example, the recursive neural network combines the representations of two subphrases to generate a representation for the larger phrase in the same meaning space [8].
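Equation (6) applies the same two matrices at every internal node, bottom-up. Below is a minimal recursive sketch. The nested-tuple tree encoding is an illustrative assumption, in which an `int` leaf indexes a row of the word-vector matrix and a pair is an internal node. The random parameters and the choice of the rectifier for $f$ are also assumptions. Because $W_L$ and $W_R$ are square, every node's vector has the same size as a word vector, which matches the shared-space interpretation given above.

```python
import numpy as np


def relu(z):
    return np.maximum(0.0, z)


def compose(tree, X, W_L, W_R, b, f=relu):
    """Representation of `tree` per eq. (6).

    A leaf is an int indexing a row of the word-vector matrix X (its initial
    representation); an internal node is a (left, right) tuple.
    """
    if isinstance(tree, int):
        return X[tree]
    left, right = tree
    x_l = compose(left, X, W_L, W_R, b, f)
    x_r = compose(right, X, W_L, W_R, b, f)
    return f(W_L @ x_l + W_R @ x_r + b)


d = 50
rng = np.random.default_rng(0)
X = rng.normal(size=(4, d))                  # 4 hypothetical word vectors
W_L, W_R = rng.normal(0.0, 0.1, (2, d, d))   # square matrices keep outputs in R^d
b = np.zeros(d)

# ((w0 w1) (w2 w3)): two phrases composed into a sentence representation
root = compose(((0, 1), (2, 3)), X, W_L, W_R, b)
print(root.shape)  # (50,)
```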
Depending on the task, we have a final output layer at the root $\rho$:

$$y = g(W_o x_\rho + b_o) \tag{7}$$

where $W_o$ is the output weight matrix and $b_o$ is the bias vector of the output layer. In a supervised task, supervision occurs at this layer. Thus, during learning, the initial error is incurred on $y$ and backpropagated from the root toward the leaves [20].

3 Methodology

3.1 Bidirectional recursive neural networks

We extend the aforementioned definition of recursive neural networks so that it propagates information about the rest of the tree to every leaf node through the structure. This allows us to make decisions at the leaf nodes with a summary of the surrounding structure.

First, we modify the notation in equation (6) so that it represents an upward layer through the tree:

$$x^{\uparrow}_\eta = f(W^{\uparrow}_L x^{\uparrow}_{l(\eta)} + W^{\uparrow}_R x^{\uparrow}_{r(\eta)} + b^{\uparrow}) \tag{8}$$

Note that $x^{\uparrow}_\eta$ is simply the initial representation $x_\eta$ if $\eta$ is a leaf, as in equation (6). Next, we add a downward layer on top of this upward layer:

$$x^{\downarrow}_\eta = \begin{cases} f(W^{\downarrow}_L x^{\downarrow}_{p(\eta)} + V^{\downarrow} x^{\uparrow}_\eta + b^{\downarrow}) & \text{if } \eta \text{ is a left child} \\ f(W^{\downarrow}_R x^{\downarrow}_{p(\eta)} + V^{\downarrow} x^{\uparrow}_\eta + b^{\downarrow}) & \text{if } \eta \text{ is a right child} \\ f(V^{\downarrow} x^{\uparrow}_\eta + b^{\downarrow}) & \text{if } \eta \text{ is the root } (\eta = \rho) \end{cases} \tag{9}$$

where $p(\eta)$ is the parent of $\eta$, $W^{\downarrow}_L$ and $W^{\downarrow}_R$ are the weight matrices that connect the downward representation of the parent to those of its left and right children, respectively, $V^{\downarrow}$ is the weight matrix that connects the upward representation to the downward representation of any node, and $b^{\downarrow}$ is the bias vector of the downward layer. Intuitively, for any node, $x^{\uparrow}_\eta$ contains information about the subtree rooted at $\eta$, and $x^{\downarrow}_\eta$ contains information about the rest of the tree, since every node in the tree contributes to the computation of $x^{\downarrow}_\eta$. Therefore, $x^{\uparrow}_\eta$ and $x^{\downarrow}_\eta$ together can be thought of as a complete summary of the structure around $\eta$.
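The two passes of equations (8) and (9) can be sketched as a post-order upward traversal followed by a pre-order downward traversal, so that a parent's downward vector is always available before its children need it. This is a minimal illustration under assumptions of our own, not the authors' implementation. The assumptions are the nested-tuple tree encoding, the parameter names (`WuL`, `WdL`, `Vd`, ...), the random initialization, and the rectifier for $f$ (as in Section 4). For each leaf, left to right, the sketch collects the upward and downward vectors that the leaf output layer consumes.

```python
import numpy as np


def relu(z):
    return np.maximum(0.0, z)


def upward(tree, X, P):
    """Eq. (8), bottom-up. Returns a dict node holding its upward vector.

    A leaf (int) uses its word vector from X as its upward representation.
    """
    if isinstance(tree, int):
        return {"leaf": tree, "up": X[tree]}
    left, right = upward(tree[0], X, P), upward(tree[1], X, P)
    up = relu(P["WuL"] @ left["up"] + P["WuR"] @ right["up"] + P["bu"])
    return {"left": left, "right": right, "up": up}


def downward(node, P, parent_down=None, side=None, leaves=None):
    """Eq. (9), top-down. Stores node['down'] and collects (leaf, up, down) triples."""
    if leaves is None:
        leaves = []
    pre = P["Vd"] @ node["up"] + P["bd"]          # root case: no parent term
    if side == "left":
        pre = pre + P["WdL"] @ parent_down
    elif side == "right":
        pre = pre + P["WdR"] @ parent_down
    node["down"] = relu(pre)
    if "leaf" in node:
        leaves.append((node["leaf"], node["up"], node["down"]))
    else:                                          # children need this node's down vector
        downward(node["left"], P, node["down"], "left", leaves)
        downward(node["right"], P, node["down"], "right", leaves)
    return leaves


d, D = 50, 150                                     # topology (50, 150)
rng = np.random.default_rng(0)


def w(*shape):
    return rng.normal(0.0, 0.1, size=shape)


P = {"WuL": w(d, d), "WuR": w(d, d), "bu": np.zeros(d),
     "WdL": w(D, D), "WdR": w(D, D), "Vd": w(D, d), "bd": np.zeros(D)}
X = rng.normal(size=(4, d))                        # 4 hypothetical word vectors

root = upward(((0, 1), (2, 3)), X, P)
leaves = downward(root, P)
print([i for i, _, _ in leaves])                   # [0, 1, 2, 3]
```

The root's downward vector is computed from the root's upward vector, which summarizes the whole sentence. Every leaf's downward vector is therefore a function of every word, which reflects the intuition stated above.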
At the leaves, we use an output layer to make a final decision:

$$y_\eta = g(W_o^{\downarrow} x^{\downarrow}_\eta + W_o^{\uparrow} x^{\uparrow}_\eta + b_o) \tag{10}$$

where $W_o^{\downarrow}$ and $W_o^{\uparrow}$ are the output weight matrices and $b_o$ is the output bias vector. In a supervised task, supervision occurs at the output layer. Then, during training, the error backpropagates upward through the downward layer, and then downward through the upward layer, employing the backpropagation through structure method [20]. If desired, the backpropagated errors can be used to update the initial representations $x$, which in our setting allows fine-tuning of the word vector representations.

Note that this definition is structurally similar to the unfolding recursive autoencoder [10]. However, the goals of the two architectures are different. The unfolding recursive autoencoder also propagates representations downward, but its intention is to reconstruct the initial representations. In contrast, we want the downward representations $x^{\downarrow}$ to be as different as possible from the upward representations $x^{\uparrow}$, since our aim is to capture information about the rest of the tree rather than the particular subtree under investigation. Thus, the unfolding recursive autoencoder does not use $x^{\uparrow}$ when computing $x^{\downarrow}$ (except at the root), whereas the bidirectional recursive neural network does.

3.2 Incorporating sequential context

Depending on the task, one might want to employ the sequential context around each input vector as well, if the task has a sequential view in addition to the structure. To this end, we can combine the bidirectional recurrent neural network with the bidirectional recursive neural network.
This allows us to use both the sequential information (past and future) and the structural information around a token to produce a final decision:

$$y_\eta = g(W_o^{\rightarrow} h^{\rightarrow}_\eta + W_o^{\leftarrow} h^{\leftarrow}_\eta + W_o^{\downarrow} x^{\downarrow}_\eta + W_o^{\uparrow} x^{\uparrow}_\eta + b_o) \tag{11}$$

This architecture can be seen as an extension of both the recurrent and the recursive neural network. During training, after the error term backpropagates through the output layer, the errors for each of the combined architectures can be handled separately, which allows us to use the previously described training method for each architecture.

4 Experiments

We cast the problem of detecting DSEs and ESEs as two separate 3-class classification problems. We also experiment with joint detection of DSEs, opinion holders, and opinion targets as a 7-class classification problem, with one Outside class plus a Beginning and an Inside class for each of DSEs, opinion holders, and opinion targets. We compare the bidirectional recurrent neural network described in Section 2.3 (BI-RECURRENT), the bidirectional recursive network described in Section 3.1 (BI-RECURSIVE), and the combined architecture described in Section 3.2 (COMBINED). We use the Stanford PCFG parser to extract binary parse trees of sentences [21].

Table 2: Experimental results for DSE detection

| Architecture | Topology | Prop. Prec. | Prop. Recall | Prop. F1 | Bin. Prec. | Bin. Recall | Bin. F1 |
|---|---|---|---|---|---|---|---|
| Bi-Recurrent | (50, 75, 75) | 56.59* | 56.60* | 56.60* | 58.84 | 62.23 | 60.49 |
| Bi-Recursive | (50, 150) | 53.93 | 55.05 | 54.48 | 58.21 | 62.29 | 60.23 |
| Combined | (50, 50, 50, 50) | 54.22 | 53.25 | 53.73 | 58.59 | 62.72 | 60.59 |

We use precision, recall and F-measure for performance evaluation.
Since the boundaries of expressions are hard to define even for human annotators [11], we use two soft notions of the measures: Binary Overlap counts every overlapping match between a predicted and a true expression as correct [13, 14], and Proportional Overlap assigns each match a partial correctness proportional to the amount of overlap [14, 22]. We use the manually annotated MPQA corpus [11], which has 14492 sentences in total. For DSE and ESE detection, we separate 4492 sentences as a test set and run 10-fold cross-validation. For joint detection of opinion holders, DSEs and targets, we have 9471 manually annotated sentences, of which we separate 2471 as a test set, and we run 10-fold cross-validation. A validation set is used to pick the best regularization parameter, i.e. the coefficient of an L2-norm penalty. We use standard stochastic gradient descent, updating the weights after minibatches of 80 sentences, and train for 200 epochs. Furthermore, we fix the learning rate for every architecture instead of tuning it with cross-validation, since initial experiments showed that in this setting every architecture converges successfully without any oscillatory behavior.

As initial representations of tokens, we use pre-trained Collobert-Weston embeddings [2]. Initial experiments with fine-tuning the word vector representations showed severe overfitting; hence, we keep the word vectors fixed in the experiments.

We employ the standard softmax activation for the output layer: $g(x)_i = e^{x_i} / \sum_j e^{x_j}$. For the hidden layers, we use the rectified linear (rectifier) activation: $f(x) = \max\{0, x\}$. In our experiments, the rectifier activation gave better performance, faster convergence, and sparse representations. Note that in the recursive network, we apply the same transformation to both the leaf nodes and the internal nodes, with the interpretation that they belong to the same meaning space.
Employing rectifier units at the upward layer causes the upward representations at the internal nodes to be always nonnegative and sparse, whereas the initial representations are dense and might have negative values, which causes a conflict. To test the impact of this, we experimented with the sigmoid activation at the upward layer and the rectifier activation at the downward layer, which degraded performance. Therefore, at the cost of interpretability, we use the rectifier activation at both layers in our experiments. The hidden layer sizes of each architecture are chosen so that every architecture to be compared has the same number of hidden units connected to the output layer, as well as the same input dimensionality.

4.1 Results

Experimental results for DSE and ESE detection are given in Tables 2 and 3. For the recurrent network, the topology $(a, b, c)$ means that it has input dimensionality $a$, forward hidden layer dimensionality $b$, and backward hidden layer dimensionality $c$. For the recursive network, $(a, b)$ means that it has input and upward layer dimensionality $a$ and downward layer dimensionality $b$. For the combined network, $(a, b, c, d)$ means input and upward layer dimensionality $a$, downward layer dimensionality $b$, and forward and backward layer dimensionalities $c$ and $d$. An asterisk indicates that the performance is statistically significantly better than that of the others in the group, according to a two-sided paired t-test with $\alpha = 0.05$.

We observe that the bidirectional recurrent neural network (BI-RECURRENT) performs better than both the bidirectional recursive (BI-RECURSIVE) and the COMBINED architectures on the task of DSE detection with respect to the proportional overlap metrics (56.60 F-measure, compared to 54.48 and 53.73). We do not observe a significant difference with respect to the binary overlap metrics.
This might be explained by the fact that DSEs tend to be shorter (often even a single word, such as "criticized" or "agrees"). Furthermore, since DSEs exhibit explicit subjectivity, they do not necessarily require a contextual investigation around the phrase. Most of the time, a DSE can be detected just by looking at the particular phrase.

Table 3: Experimental results for ESE detection

| Architecture | Topology | Prop. Prec. | Prop. Recall | Prop. F1 | Bin. Prec. | Bin. Recall | Bin. F1 |
|---|---|---|---|---|---|---|---|
| Bi-Recurrent | (50, 75, 75) | 45.69 | 53.72 | 49.38 | 52.13 | 65.43 | 58.03 |
| Bi-Recursive | (50, 150) | 42.64 | 53.49 | 47.45 | 47.15 | 71.19* | 56.73 |
| Combined | (50, 50, 50, 50) | 46.16* | 53.33 | 49.49 | 51.95 | 67.49 | 58.71* |

On the task of ESE detection, the COMBINED network has a significantly better binary F-measure than the others (58.71, compared to 58.03 and 56.73). Furthermore, the COMBINED network has significantly better proportional precision than the two other architectures, at an insignificant loss in proportional recall. In terms of binary measures, the BI-RECURSIVE network has lower precision and higher recall than the BI-RECURRENT network, which might suggest complementary behavior between the two architectures. ESEs tend to be longer than DSEs, which might explain the results. Additionally, unlike DSEs, ESEs more often require contextual information for their interpretation. For instance, in the example in Table 1, it is not clear that "as usual" should be labeled as an ESE unless one looks at the context presented in the sentence.

Experimental results for joint detection of opinion holders, DSEs and targets are given in Table 4 (not to be compared with Table 2, since the datasets are different).

Table 4: Experimental results for joint holder+DSE+target detection (F1)

| Architecture | Topology | DSE Prop. | DSE Bin. | Holder Prop. | Holder Bin. | Target Prop. | Target Bin. |
|---|---|---|---|---|---|---|---|
| Bi-Recurrent | (50, 75, 75) | 49.73 | 54.49 | 48.19 | 51.36 | 39.32* | 50.53 |
| Combined | (50, 50, 50, 50) | 50.04 | 54.88 | 49.06* | 52.20* | 38.58 | 49.77 |
Here, the COMBINED architecture has insignificantly better performance in detecting DSEs (50.04 and 54.88 proportional and binary F-measures, compared to 49.73 and 54.49) and significantly better performance in detecting opinion holders (49.06 and 52.20 proportional and binary F-measures, compared to 48.19 and 51.36), whereas the BI-RECURRENT network is better at detecting targets (39.32 and 50.53 proportional and binary F-measures, compared to 38.58 and 49.77). Again, a possible explanation might be a better utilization of contextual information: to decide whether a named entity is an opinion holder or not, one must link (or fail to link) the entity to an opinion expression. Therefore, it is not possible to decide just by looking at the particular named entity.

For the joint detection task, we also investigate the performance on a subset of sentences in which each sentence has at least one DSE and one opinion holder, separated by some distance. This is an attempt to explore the impact of the token-level sequential distance between an opinion holder and an opinion expression. The results are given in Figure 2. As the separation distance increases, the DSE detection performance of the COMBINED network remains, on average, steadier than that of the BI-RECURRENT network. This might suggest that structural information helps to better capture the cues between opinion holders and expressions. Note that the distance-based subsets shrink as the required separation grows, since fewer sentences conform to the constraints, which increases the variance.

5 Conclusion and Discussion

We have proposed an extension to the recursive neural network to carry out labeling tasks at the token level. We investigated its performance on the opinion expression extraction task.
Experiments showed that, depending on the task, employing the structural information around a token might contribute to the performance.

Figure 2: Experimental results for joint detection over sentences with separation

In the bidirectional recursive neural network, the downward layer is built on top of the upward layer, whereas in the bidirectional recurrent neural network, the forward and backward layers are separate. This causes supervision to occur at a higher level in the recursive network than in the recurrent network, which makes training relatively more difficult. To alleviate this difficulty, an unsupervised pre-training of the upward layer, or a similar semi-supervised training as in [9], might be employed as a future research direction. Fine-tuning the word vector representations during this pre-training might have a positive impact on the performance of the recursive network, since the learned representations for phrases might be structurally more meaningful than the representations learned by sequential or context-window-based approaches. Future work will address these observations, investigate more effective training of the bidirectional recursive network, and explore the impact of different word vector representations on the architecture.

Acknowledgments

This work was supported in part by DARPA DEFT Grant FA8750-13-2-0015 and a gift from Boeing. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of DARPA or the U.S. Government.

References

[1] Yoshua Bengio, Réjean Ducharme, and Pascal Vincent. A neural probabilistic language model. In NIPS, 2001.
[2] Ronan Collobert and Jason Weston. A unified architecture for natural language processing: Deep neural networks with multitask learning. In Proceedings of the 25th International Conference on Machine Learning, pages 160–167. ACM, 2008.
[3] Ronan Collobert, Jason Weston, Léon Bottou, Michael Karlen, Koray Kavukcuoglu, and Pavel Kuksa. Natural language processing (almost) from scratch. J. Mach. Learn. Res., 12:2493–2537, November 2011.
[4] Tomas Mikolov, Stefan Kombrink, Lukas Burget, JH Cernocky, and Sanjeev Khudanpur. Extensions of recurrent neural network language model. In Acoustics, Speech and Signal Processing (ICASSP), 2011 IEEE International Conference on, pages 5528–5531. IEEE, 2011.
[5] Grégoire Mesnil, Xiaodong He, Li Deng, and Yoshua Bengio. Investigation of recurrent-neural-network architectures and learning methods for spoken language understanding. In Interspeech, 2013.
[6] Mike Schuster and Kuldip K Paliwal. Bidirectional recurrent neural networks. Signal Processing, IEEE Transactions on, 45(11):2673–2681, 1997.
[7] Yoshua Bengio, Patrice Simard, and Paolo Frasconi. Learning long-term dependencies with gradient descent is difficult. Neural Networks, IEEE Transactions on, 5(2):157–166, 1994.
[8] Richard Socher, Cliff C Lin, Andrew Ng, and Chris Manning. Parsing natural scenes and natural language with recursive neural networks. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), pages 129–136, 2011.
[9] Richard Socher, Jeffrey Pennington, Eric H Huang, Andrew Y Ng, and Christopher D Manning. Semi-supervised recursive autoencoders for predicting sentiment distributions. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, pages 151–161. Association for Computational Linguistics, 2011.
[10] Richard Socher, Eric H Huang, Jeffrey Pennington, Christopher D Manning, and Andrew Ng. Dynamic pooling and unfolding recursive autoencoders for paraphrase detection. In Advances in Neural Information Processing Systems, pages 801–809, 2011.
[11] Janyce Wiebe, Theresa Wilson, and Claire Cardie. Annotating expressions of opinions and emotions in language. Language Resources and Evaluation, 39(2-3):165–210, 2005.
[12] Yejin Choi, Claire Cardie, Ellen Riloff, and Siddharth Patwardhan. Identifying sources of opinions with conditional random fields and extraction patterns. In Proceedings of the Conference on Human Language Technology and Empirical Methods in Natural Language Processing, pages 355–362. Association for Computational Linguistics, 2005.
[13] Eric Breck, Yejin Choi, and Claire Cardie. Identifying expressions of opinion in context. In IJCAI, pages 2683–2688, 2007.
[14] Bishan Yang and Claire Cardie. Extracting opinion expressions with semi-Markov conditional random fields. In Proceedings of the 2012 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning, pages 1335–1345. Association for Computational Linguistics, 2012.
[15] Andriy Mnih and Geoffrey Hinton. Three new graphical models for statistical language modelling. In Proceedings of the 24th International Conference on Machine Learning, pages 641–648. ACM, 2007.
[16] Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. Efficient estimation of word representations in vector space. arXiv preprint arXiv:1301.3781, 2013.
[17] Joseph Turian, Lev Ratinov, and Yoshua Bengio. Word representations: a simple and general method for semi-supervised learning. In Proceedings of the 48th Annual Meeting of the Association for Computational Linguistics, pages 384–394. Association for Computational Linguistics, 2010.
[18] Jeffrey L Elman. Finding structure in time. Cognitive Science, 14(2):179–211, 1990.
[19] Paul J Werbos. Backpropagation through time: what it does and how to do it. Proceedings of the IEEE, 78(10):1550–1560, 1990.
[20] Christoph Goller and Andreas Kuchler. Learning task-dependent distributed representations by backpropagation through structure. In Neural Networks, 1996., IEEE International Conference on, volume 1, pages 347–352. IEEE, 1996.
[21] Dan Klein and Christopher D Manning. Accurate unlexicalized parsing. In Proceedings of the 41st Annual Meeting on Association for Computational Linguistics, Volume 1, pages 423–430. Association for Computational Linguistics, 2003.
[22] Richard Johansson and Alessandro Moschitti. Syntactic and semantic structure for opinion expression detection. In Proceedings of the Fourteenth Conference on Computational Natural Language Learning, pages 67–76. Association for Computational Linguistics, 2010.
