Parallel Coordinate Descent Methods for Big Data Optimization

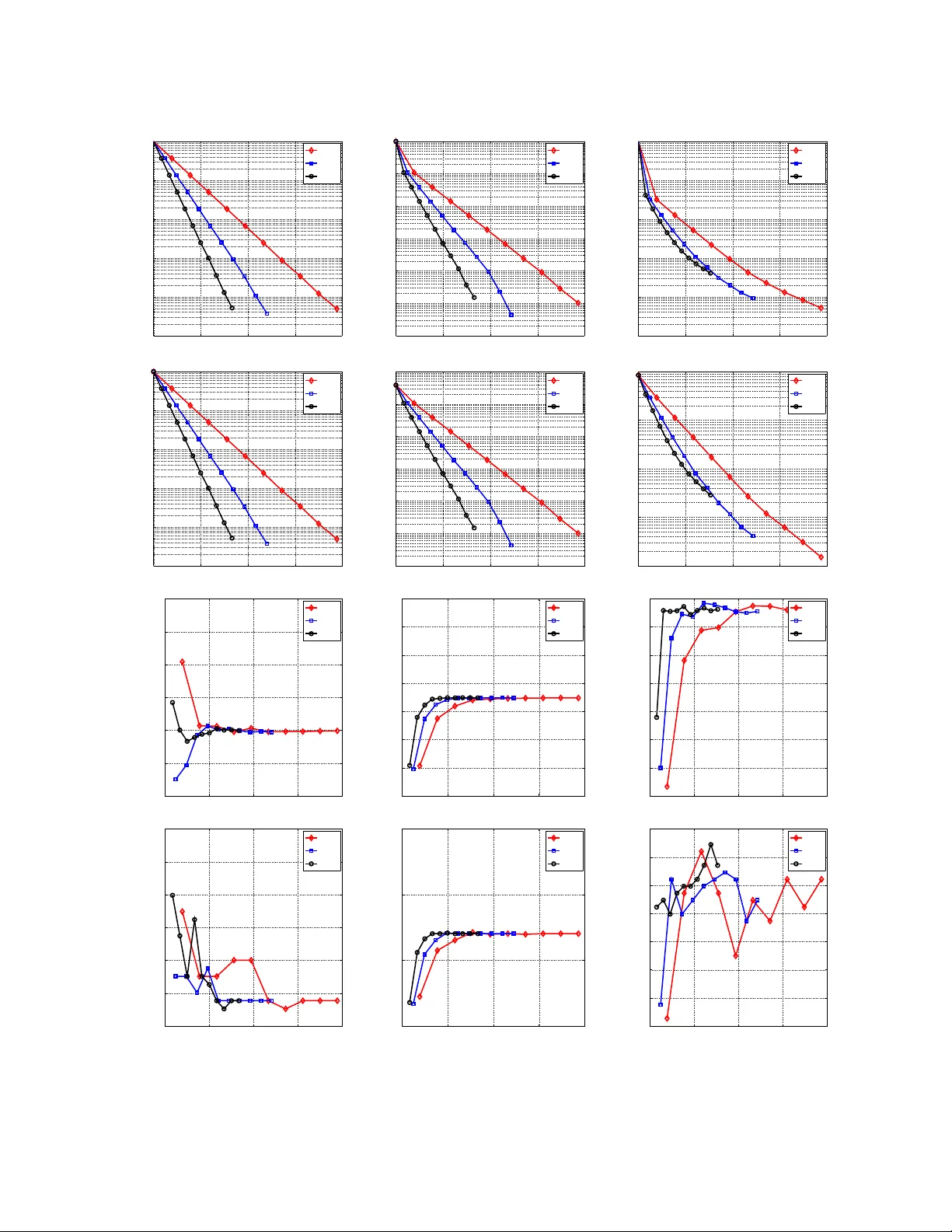

In this work we show that randomized (block) coordinate descent methods can be accelerated by parallelization when applied to the problem of minimizing the sum of a partially separable smooth convex function and a simple separable convex function. Th…

Authors: Peter Richtárik, Martin Takáč
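
To make the setting in the abstract concrete, here is a minimal sketch of a parallel randomized coordinate descent step on one instance of the problem class: L1-regularized least squares, where the smooth term is partially separable when the data matrix is sparse and the L1 term is separable. It is an illustration under stated assumptions, not the authors' implementation. The names `parallel_cd_lasso`, `tau`, `omega`, and `beta` are hypothetical, and the damping factor `beta = min(omega, tau)` is a conservative choice made for this sketch rather than a step size quoted from the abstract. The `tau` coordinate updates are all computed from the same iterate, which is what would let them run on separate processors; here they are simply vectorized with NumPy.

```python
import numpy as np


def soft_threshold(z, t):
    """Proximal operator of t * |.|, applied elementwise."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def objective(A, b, x, lam):
    """F(x) = 0.5 * ||Ax - b||^2 + lam * ||x||_1."""
    r = A @ x - b
    return 0.5 * (r @ r) + lam * np.abs(x).sum()


def parallel_cd_lasso(A, b, lam, tau, iters, seed=0):
    """Update `tau` randomly chosen coordinates per iteration.

    Assumes every column of A has at least one nonzero entry.
    """
    rng = np.random.default_rng(seed)
    n = A.shape[1]
    L = (A ** 2).sum(axis=0)                  # coordinate-wise Lipschitz constants
    omega = int((A != 0).sum(axis=1).max())   # max nonzeros in any row of A
    beta = float(min(omega, tau))             # conservative damping (assumption)
    x = np.zeros(n)
    r = A @ x - b                             # residual, kept up to date
    history = [objective(A, b, x, lam)]
    for _ in range(iters):
        S = rng.choice(n, size=tau, replace=False)  # uniform subset of size tau
        g = A[:, S].T @ r                     # partial derivatives at the same x
        step = 1.0 / (beta * L[S])
        x_new = soft_threshold(x[S] - step * g, lam * step)
        r += A[:, S] @ (x_new - x[S])         # apply all tau updates together
        x[S] = x_new
        history.append(objective(A, b, x, lam))
    return x, history


# Small synthetic demo: a sparse 40 x 20 matrix with a nonzero in every column.
rng = np.random.default_rng(1)
m, n = 40, 20
A = rng.standard_normal((m, n)) * (rng.random((m, n)) < 0.2)
A[np.arange(n), np.arange(n)] = 1.0
b = A @ rng.standard_normal(n) + 0.01 * rng.standard_normal(m)
x, history = parallel_cd_lasso(A, b, lam=0.1, tau=4, iters=300)
print(history[0], history[-1])
```

With this damping, the objective cannot increase from one iteration to the next, because the quadratic term of the simultaneous update is bounded by `beta` times the sum of the per-coordinate quadratic terms. A sparser matrix gives a smaller `omega`, so the same `tau` can be used with less damping, which is the intuition behind parallel speedup for partially separable problems.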