Models and Simulations in Material Science: Two Cases Without Error Bars

We discuss two research projects in material science in which the results cannot be stated with an estimation of the error: a spectroscopic ellipsometry study aimed at determining the orientation of DNA molecules on diamond and a scanning tunneling microscopy study of platinum-induced nanowires on germanium. To investigate the reliability of the results, we apply ideas from the philosophy of models in science. Even if the studies had reported an error value, the trustworthiness of the result would not depend on that value alone.

💡 Research Summary

The paper tackles a recurring problem in material‑science research: results are sometimes presented without any quantitative estimate of uncertainty. Using two concrete case studies, a spectroscopic ellipsometry investigation of DNA orientation on diamond surfaces and a scanning tunneling microscopy (STM) study of platinum‑induced nanowires on germanium, the authors examine how the trustworthiness of findings reported without error bars can be assessed through the philosophy of scientific models.

First, the authors review contemporary model philosophy, drawing especially on Frigg and Hartmann (2006). They distinguish between (a) models that directly represent a part of the world (material or “iconic” models) and (b) models that represent a part of a theory (ideal or mathematical models). Three epistemic phases are highlighted: denotation (linking model symbols to real‑world entities), demonstration (deriving predictions or explanations within the model), and interpretation (translating model results back to statements about the world). The authors also stress the ontological claim that all models are approximations—no model perfectly mirrors its target system—so every model is “wrong” to some degree, but can still be useful if the approximations are irrelevant to the question at hand.

In the first case study, the researchers built a three‑layer optical model (diamond bulk, organic DNA layer, vacuum) and used the complex refractive index N = n + ik to fit ellipsometric spectra over a range of wavelengths, especially the 261 nm DNA absorption band. From the fitted in‑plane and out‑of‑plane contributions they derived an average tilt angle α (45°, 49°, 52° for different DNA lengths). No formal error bars were reported because the fitting involves many interdependent parameters and non‑linear optimization. The authors argue that the result’s reliability can be judged by (i) the explicit denotation of α as the average backbone angle, (ii) the demonstration phase where simulated spectra are overlaid on experimental data and visual agreement is confirmed, and (iii) the interpretation that the angle meaningfully reflects DNA orientation for biosensor performance. Because the model captures the essential optical physics and the qualitative fit is satisfactory, the lack of numerical uncertainties does not automatically invalidate the conclusion.
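To make the layered optical model concrete, here is a minimal sketch of how an ambient/film/substrate stack yields the ellipsometric quantities Ψ and Δ through the ratio ρ = r_p / r_s = tan(Ψ)·e^{iΔ}. It uses the standard Fresnel and single-film (Airy) formulas with the N = n + ik convention. It is not the authors' fitting code: the film is treated as isotropic (the study separates in‑plane and out‑of‑plane contributions), and every numerical value below (film index, thickness, angle of incidence, diamond index) is an assumed placeholder rather than a figure from the paper.

```python
import numpy as np

def cos_in_layer(n_amb, theta0, n_layer):
    """Cosine of the (complex) propagation angle in a layer, from Snell's law."""
    s = n_amb * np.sin(theta0) / n_layer
    return np.sqrt(1 - s**2 + 0j)

def fresnel(n_i, n_j, c_i, c_j):
    """Fresnel reflection coefficients (s, p) for the interface i -> j."""
    r_s = (n_i * c_i - n_j * c_j) / (n_i * c_i + n_j * c_j)
    r_p = (n_j * c_i - n_i * c_j) / (n_j * c_i + n_i * c_j)
    return r_s, r_p

def ellipsometric_rho(n_amb, n_film, n_sub, d_nm, wavelength_nm, theta0_deg):
    """Complex ratio rho = r_p / r_s for a single isotropic film on a substrate."""
    theta0 = np.deg2rad(theta0_deg)
    c0 = np.cos(theta0)
    c1 = cos_in_layer(n_amb, theta0, n_film)
    c2 = cos_in_layer(n_amb, theta0, n_sub)
    r01_s, r01_p = fresnel(n_amb, n_film, c0, c1)
    r12_s, r12_p = fresnel(n_film, n_sub, c1, c2)
    # Phase thickness of the film; absorption enters through Im(n_film) > 0.
    beta = 2 * np.pi * d_nm * n_film * c1 / wavelength_nm
    phase = np.exp(2j * beta)
    r_s = (r01_s + r12_s * phase) / (1 + r01_s * r12_s * phase)
    r_p = (r01_p + r12_p * phase) / (1 + r01_p * r12_p * phase)
    return r_p / r_s

def psi_delta(*args):
    """Psi and Delta in degrees, from rho = tan(Psi) * exp(i * Delta)."""
    rho = ellipsometric_rho(*args)
    return np.degrees(np.arctan(np.abs(rho))), np.degrees(np.angle(rho))

# Assumed placeholder values: vacuum / absorbing organic film / diamond-like substrate.
psi, delta = psi_delta(1.0, 1.5 + 0.1j, 2.4, 5.0, 261.0, 70.0)
print(f"Psi = {psi:.2f} deg, Delta = {delta:.2f} deg")
```

A fit of the kind described above would vary the film parameters until simulated Ψ and Δ spectra match the measured ones across wavelengths. Because thickness and the real and imaginary parts of the index all enter through the same phase term, the parameters are strongly coupled, which is one way to see why a single error bar on the derived angle is hard to state.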

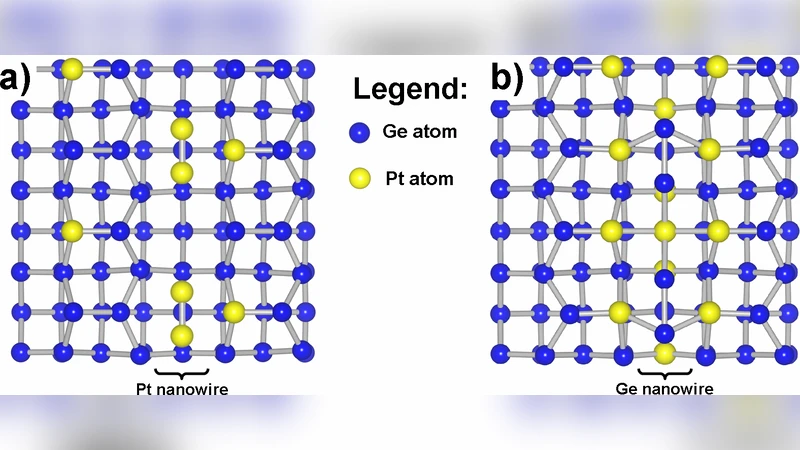

The second case study concerns STM imaging of single‑atom‑wide nanowires that appear after platinum deposition and high‑temperature annealing on Ge(001). STM provides topographic maps with atomic resolution but cannot identify chemical species. The researchers therefore combined a physical model of tunneling current (relating tip‑sample distance to measured height) with chemical reasoning based on prior adsorption experiments (e.g., CO) and literature on Pt‑induced reconstructions. They refer to the structures as “platinum nanowires” while acknowledging the provisional nature of this label. Again, no error estimates are given for the wire dimensions or composition. The authors demonstrate that, by cross‑checking STM morphology with independent chemical probes and by ensuring internal consistency of the physical tunneling model, the claim that Pt induces nanowires can be considered reliable despite the absence of formal uncertainties.
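The link between tunneling current and measured height can be illustrated with the textbook one‑dimensional barrier approximation, I ∝ ρ·exp(−2κd) with κ = √(2mφ)/ħ. This is a sketch under that assumption, not the model used in the study; the barrier height of 4.5 eV and the function names are illustrative choices, and ρ stands for a generic local density‑of‑states prefactor.

```python
import math

HBAR = 1.054571817e-34         # reduced Planck constant, J*s
M_E = 9.1093837015e-31         # electron mass, kg
EV = 1.602176634e-19           # joules per electronvolt

def decay_constant(barrier_ev):
    """Inverse decay length kappa (1/m) for a rectangular barrier of given height."""
    return math.sqrt(2 * M_E * barrier_ev * EV) / HBAR

def current_ratio(delta_d_m, barrier_ev):
    """Factor by which the current changes when the gap widens by delta_d_m."""
    return math.exp(-2 * decay_constant(barrier_ev) * delta_d_m)

def apparent_height_shift(ldos_ratio, barrier_ev):
    """Height change (m) recorded in constant-current mode when only the
    local density of states changes by ldos_ratio (flat geometry assumed)."""
    return math.log(ldos_ratio) / (2 * decay_constant(barrier_ev))

phi = 4.5  # assumed barrier height in eV, a typical metal work-function scale
print(current_ratio(1e-10, phi))               # current change per 1 angstrom
print(apparent_height_shift(2.0, phi) * 1e10)  # shift in angstrom for doubled LDOS
```

With these assumed values the current drops to roughly 0.11 of its value for each additional ångström of gap, and a doubling of the local density of states alone appears as a height change of about 0.3 Å. The second number shows why an STM height map mixes geometry with electronic structure, so assigning a chemical identity to a feature needs the independent evidence discussed above.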

The comparative discussion extracts three general lessons. First, a model’s epistemic value lies in how clearly its denotation, demonstration, and interpretation steps are articulated; transparency can compensate for missing error bars. Second, material‑science research often employs hybrid models that blend empirical, physical, and idealized components; recognizing each component’s role clarifies the scope and limits of the conclusions. Third, because all models are approximations, critical scrutiny of the selected features and the assumptions that link model parameters to real phenomena is essential.

In conclusion, the paper argues that the presence or absence of error bars is not the decisive factor for scientific credibility. By applying a philosophically informed analysis of the models underlying experimental work, researchers can assess the robustness of results even when quantitative uncertainties are not reported. This framework is applicable beyond the two illustrated examples, offering a systematic way to evaluate findings reported without error bars across experimental and computational disciplines.
