Ants: Mobile Finite State Machines

Consider the Ants Nearby Treasure Search (ANTS) problem introduced by Feinerman, Korman, Lotker, and Sereni (PODC 2012), where $n$ mobile agents, initially placed at the origin of an infinite grid, collaboratively search for an adversarially hidden treasure. In this paper, the model of Feinerman et al. is adapted such that the agents are controlled by a (randomized) finite state machine: they possess a constant-size memory and are able to communicate with each other through constant-size messages. Despite the restriction to constant-size memory, we show that their collaborative performance remains the same by presenting a distributed algorithm that matches a lower bound established by Feinerman et al. on the run-time of any ANTS algorithm.

💡 Research Summary

The paper revisits the Ants Nearby Treasure Search (ANTS) problem—originally introduced by Feinerman, Korman, Lotker, and Sereni (PODC 2012)—under a dramatically weaker computational model. Instead of agents modeled as Turing machines with unbounded memory, the authors restrict each agent to a randomized finite‑state machine (FSM) with a constant number of states. Communication is also limited: agents can only sense, at a given grid cell, whether at least one other agent in that cell currently occupies each possible state. No global identifiers, no knowledge of the total number of agents n, and no knowledge of the treasure distance D are available to any individual agent.
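The sensing rule described above can be illustrated with a minimal sketch. The state names are taken from the summary; the `State` enum and the `sense` helper are hypothetical names introduced here for illustration, not the authors' notation. The point is that an agent learns only which states are present among the other agents in its cell, never how many agents hold each state or who they are.

```python
from enum import Enum, auto


class State(Enum):
    # Role names as they appear in the summary; the enum itself is illustrative.
    NEW_GUIDE = auto()
    NEW_EXPLORER = auto()
    GUIDE = auto()
    MOVING_GUIDE = auto()
    EXPLORER = auto()
    MOVING_EXPLORER = auto()


def sense(own_index, states_in_cell):
    """Return the set of states held by at least one OTHER agent in the cell.

    states_in_cell lists the state of every agent in the cell, and own_index
    is the sensing agent's position in that list. The result carries presence
    information only: counts and identities are deliberately discarded.
    """
    return frozenset(
        state for i, state in enumerate(states_in_cell) if i != own_index
    )


cell = [State.GUIDE, State.EXPLORER, State.EXPLORER]
seen_by_guide = sense(0, cell)      # the Guide cannot tell there are two Explorers
seen_by_explorer = sense(1, cell)   # sees the Guide and the other Explorer
```

Because the result is a set over a constant number of states, the information an agent receives in each round has constant size, which is what keeps the model within finite-state limits.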

Despite these severe constraints, the authors present a distributed algorithm—named RectSearch—that matches the optimal runtime previously proved for the unrestricted model: with high probability (w.h.p.) the treasure is found in O(D + D²/n) time, where D is the Manhattan distance from the origin to the treasure. The algorithm proceeds in two intertwined layers:

-

Emission Scheme – Agents are emitted from the origin in teams of five. The emission function fₙ(t) determines how many teams have been released by round t. In the ideal analysis the authors assume fₙ(t)=t (one team per round); a concrete near‑optimal scheme achieving fₙ(t)=Ω(t−log n) is described later.

-

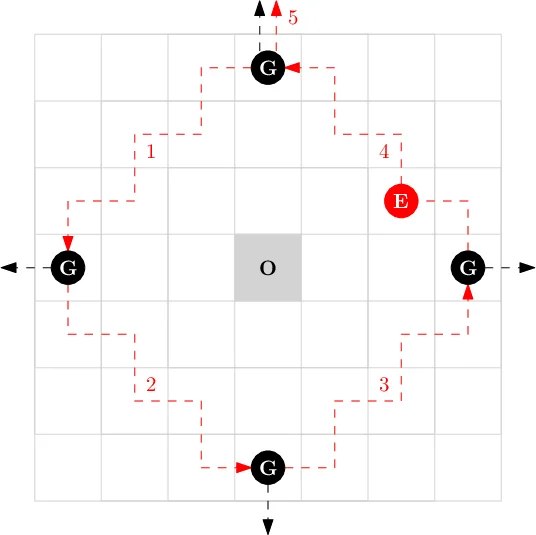

Team Structure and Roles – Within each team, four agents become NewGuides (one for each cardinal direction N, S, E, W) and one becomes a NewExplorer. Guides walk outward until they encounter the first cell not already occupied by another Guide, then become stationary Guides waiting for an Explorer. The Explorer follows the north Guide, and when it reaches the first empty cell at distance d from the origin it steps one cell west and becomes an Explorer.

The core of the algorithm is the rectangle search performed by an Explorer at a given distance d (called level d). Starting from the north Guide at (0,d), the Explorer traverses the perimeter of the L₁‑ball of radius d by moving diagonally southwest, southeast, northeast, and finally northwest, switching direction each time it meets a Guide. This deterministic pattern guarantees that the Explorer visits every cell at distance d (and almost all cells at distance d+1) in exactly 8·d rounds (Observation 1). After completing the rectangle, the Explorer and the north Guide move together one step north, thereby initiating the search on level d+1.
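The traversal can be checked with a short simulation. This is a sketch under stated assumptions: the summary does not say how a diagonal move is realized on the grid, so the code assumes each diagonal move is emulated by two axis-parallel steps, with the first step of each pair leading outward to distance d+1. The function names `rect_search_path` and `l1_sphere` are hypothetical.

```python
def rect_search_path(d):
    """Cells visited by an Explorer on level d, one cell per round.

    Assumption: a diagonal move is two axis-parallel steps, and the order of
    the two steps within each leg is chosen here for illustration only.
    """
    legs = [
        ((-1, 0), (0, -1)),  # southwest leg: west, then south
        ((0, -1), (1, 0)),   # southeast leg: south, then east
        ((1, 0), (0, 1)),    # northeast leg: east, then north
        ((0, 1), (-1, 0)),   # northwest leg: north, then west
    ]
    x, y = 0, d  # start at the north Guide
    path = []
    for first, second in legs:
        for _ in range(d):  # d diagonal moves take the Explorer to the next Guide
            for dx, dy in (first, second):
                x, y = x + dx, y + dy
                path.append((x, y))
    return path


def l1_sphere(r):
    """All grid cells at Manhattan distance exactly r from the origin (r >= 1)."""
    return {(x, s * (r - abs(x))) for x in range(-r, r + 1) for s in (1, -1)}


d = 5
path = rect_search_path(d)
rounds = len(path)                            # 8 * d rounds in total
covers_level = l1_sphere(d) <= set(path)      # every cell at distance d is visited
outer_visited = len(set(path) & l1_sphere(d + 1))  # 4 * d of the 4 * (d + 1) cells
```

Under this step order the four cells at distance d+1 that are missed are the axis cells (0, ±(d+1)) and (±(d+1), 0), which is consistent with the "almost all cells at distance d+1" claim.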

Key correctness lemmas are proved:

- Lemma 3 shows that, except at the origin, no two agents of the same type ever occupy the same cell simultaneously. This follows from the fact that NewGuides/NewExplorers are emitted in distinct rounds and that Guides/MovingGuides/Explorers obey strict movement rules.
- Lemma 4 establishes that when an Explorer finishes level d and becomes a MovingExplorer, every other MovingExplorer is at distance at least d + 8 from the origin. Consequently, the spacing between MovingExplorers prevents interference across levels.

With an ideal emission schedule, the d‑th Explorer starts level d at time 2d (unless a previous Explorer already covered that level), so cells at distance d ≤ n are explored in O(d) time. For levels d > n, the supply of teams is exhausted (n agents form at most n/5 teams of five), so each new level must wait for the existing teams to advance outward. Using Lemma 4 and the fact that each level requires 8·d rounds, the authors bound the waiting time for level d by O(d²/n). Hence the total runtime to reach distance D is O(D + D²/n) w.h.p., exactly matching the lower bound shown by Feinerman et al. for the unrestricted model.
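The shape of the bound can be recovered with a back-of-the-envelope work count. This is a heuristic sketch and not the authors' proof: it assumes the $k = \Theta(n)$ Explorers share the levels with perfect load balance, which the actual argument has to establish through Lemma 4. Each level $d$ costs $8d$ rounds of Explorer work, so covering levels $1, \dots, D$ costs

$$
\sum_{d=1}^{D} 8d = 4D(D+1) \text{ rounds in total}, \qquad \frac{4D(D+1)}{k} = O\!\left(\frac{D^{2}}{n}\right) \text{ rounds per Explorer}.
$$

Any agent also needs at least $D$ rounds just to walk from the origin to distance $D$, which contributes the additive term:

$$
T(D) = O(D) + O\!\left(\frac{D^{2}}{n}\right) = O\!\left(D + \frac{D^{2}}{n}\right).
$$

For $D \le n$ the first term dominates and the search is linear in $D$; for $D > n$ the quadratic term takes over.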

The paper also situates its contribution within related work. Prior ANTS studies assumed agents could store visited cells, perform spiral searches, and communicate only at the nest. By contrast, this work adopts the communication model of Emek and Wattenhofer (PODC 2013), where agents only detect the presence of particular states in their cell. This is a special case of their one‑two‑many communication scheme with bounding parameter b = 1. The authors also reference population protocols, finite‑state graph exploration, and classic cow‑path problems to highlight the novelty of achieving optimal search time with such limited agents.

In conclusion, the authors demonstrate that finite‑state, constant‑memory agents with only local state‑presence communication can collectively solve the ANTS problem as efficiently as agents with unbounded computational power. The algorithm’s reliance on simple, repeatable team dynamics and deterministic rectangle traversals makes it robust to the lack of global knowledge. The paper suggests several avenues for future research, including asynchronous rounds, obstacles in the grid, dynamic agent populations, and practical implementations in swarm robotics or nanoscale systems.
