Mixed Human-Robot Team Surveillance

We study the design of mixed human-robot teams in a system-theoretic setting, using a surveillance mission as the context. The three key coupled components of a mixed team design are (i) policies for the human operator, (ii) policies to account for erroneous human decisions, and (iii) policies to control the automaton. In this paper, we survey elements of human decision-making, including evidence aggregation, situational awareness, fatigue, and memory effects. We bring together the models for these elements to develop a single coherent model of human decision-making in a two-alternative choice task. We use the developed model to design efficient attention allocation policies for the human operator. We propose an anomaly detection algorithm that uses potentially erroneous decisions by the operator to identify an anomalous region among the set of regions surveilled. Finally, we propose a stochastic vehicle routing policy that surveils an anomalous region with high probability. Our mixed team design relies on the certainty-equivalent receding-horizon control framework.

💡 Research Summary

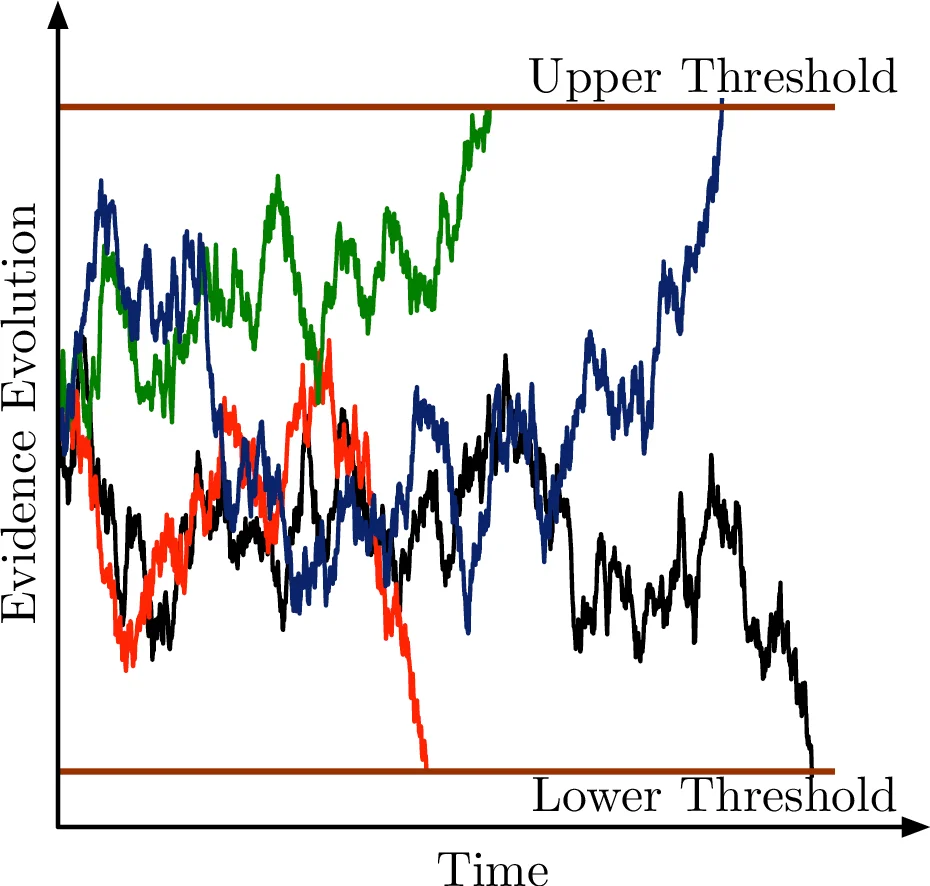

The paper presents a systems-theoretic framework for designing mixed human-robot teams tasked with persistent surveillance. A single unmanned aerial vehicle (UAV) repeatedly visits a set of spatial regions, collects sensor evidence, and forwards it to a human operator who must decide, for each region, whether an anomaly is present (a two-alternative choice). Human decision-making is modeled using the drift-diffusion model (DDM) under an interrogation paradigm: evidence evolves as the stochastic process $dx(t) = \mu\,dt + \sigma\,dW(t)$, and a decision is rendered at a fixed deadline $T$ by comparing the accumulated evidence $x(T)$ to a threshold $\nu$. The probability of a correct decision is expressed as a sigmoid-shaped performance function $f(t, \pi)$ that depends on the allocated decision time $t$ and the prior anomaly probability $\pi$.
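As a rough illustration of the interrogation paradigm described above, the sketch below evaluates the probability of a correct decision in closed form, using the fact that $x(t)$ is Gaussian with mean $x_0 + \mu t$ and variance $\sigma^2 t$. It assumes, for illustration only, that the drift is $+\mu$ when an anomaly is present and $-\mu$ when it is absent; the function names and parameter values are hypothetical and not taken from the paper.

```python
import math


def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_decide_anomaly(t, mu, sigma, x0=0.0, nu=0.0):
    """P(x(t) > nu) for dx = mu dt + sigma dW with x(0) = x0.

    Under interrogation, x(t) ~ Normal(x0 + mu * t, sigma**2 * t).
    """
    if t <= 0 or sigma <= 0:
        raise ValueError("t and sigma must be positive")
    z = (nu - x0 - mu * t) / (sigma * math.sqrt(t))
    return 1.0 - normal_cdf(z)


def performance(t, pi, mu, sigma, x0=0.0, nu=0.0):
    """Probability of a correct decision after t time units.

    Assumption (not from the paper): drift is +mu if an anomaly is
    present (prior probability pi) and -mu if it is absent.
    """
    p_hit = prob_decide_anomaly(t, +mu, sigma, x0, nu)
    p_correct_reject = 1.0 - prob_decide_anomaly(t, -mu, sigma, x0, nu)
    return pi * p_hit + (1.0 - pi) * p_correct_reject


if __name__ == "__main__":
    for t in (0.5, 1.0, 2.0, 4.0):
        print(t, round(performance(t, pi=0.5, mu=1.0, sigma=1.0), 4))
```

Under these assumptions, allocating more time raises the probability of a correct decision, which is the trade-off the attention-allocation policy has to weigh against latency.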

Beyond the basic DDM, the authors incorporate exogenous cognitive factors (fatigue, situational awareness, memory decay, and boredom) by mapping them onto DDM parameters; for example, fatigue reduces the drift $\mu$, and memory influences the initial evidence $x_0$. This yields a unified performance model that predicts the expected reward for a correct decision and the latency penalty for any task-time allocation.

The surveillance workflow is organized as a queue of decision-making tasks. Each task arriving from region $R_k$ is characterized by a triple $(f_k, w_k, T^{\text{ddln}}_k)$, where $f_k$ is the region-specific performance function, $w_k$ is a weight reflecting importance, and $T^{\text{ddln}}_k$ is a soft deadline. Tasks are processed in first-come-first-served order, while the UAV's routing policy determines the stochastic arrival process, namely the probability $q^{\ell}_k$ that the $\ell$-th arriving task originates from region $R_k$.

A central contribution is the use of Certainty‑Equivalent Receding‑Horizon Control (CE‑RHC) to jointly optimize three coupled components: (i) the decision‑support system that suggests optimal time allocations to the human operator, (ii) an anomaly detection module that treats each human binary decision as a random variable, and (iii) a stochastic vehicle‑routing algorithm that steers the UAV toward regions most likely to contain anomalies. CE‑RHC approximates the stochastic optimal control problem by replacing future uncertainties with their expected values, enabling real‑time computation of near‑optimal control actions over a moving horizon.
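The certainty-equivalent idea can be sketched in a few lines. The example below is a simplified illustration, not the authors' formulation: it assumes a hypothetical sigmoid performance function, a linear latency penalty on waiting tasks, and a queue whose random arrivals are replaced by their expected number `arrival_rate * t`. It searches a coarse grid of time allocations over a short horizon and returns only the first allocation, which is the receding-horizon step.

```python
import math
from itertools import product


def sigmoid_performance(t, inflection=3.0, slope=1.5):
    """Hypothetical sigmoid performance function: P(correct) after t time units."""
    return 1.0 / (1.0 + math.exp(-slope * (t - inflection)))


def ce_rhc_allocation(queue_len, arrival_rate, horizon=3, latency_cost=0.05,
                      grid=(0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0)):
    """Return (time for the current task, best horizon value).

    Certainty equivalence: the random number of arrivals during a task of
    duration t is replaced by its expected value, arrival_rate * t.
    All cost terms here are illustrative assumptions.
    """
    best_value, best_plan = -math.inf, None
    for plan in product(grid, repeat=horizon):
        n = float(queue_len)  # tasks in the queue, including the one in service
        value = 0.0
        for t in plan:
            # Reward for the processed task minus a penalty for tasks kept waiting.
            value += sigmoid_performance(t) - latency_cost * n * t
            # Deterministic (expected-value) queue update.
            n = max(0.0, n - 1.0 + arrival_rate * t)
        if value > best_value:
            best_value, best_plan = value, plan
    # Receding horizon: apply only the first allocation, then re-plan.
    return best_plan[0], best_value


if __name__ == "__main__":
    for queue_len in (0, 2, 6):
        t_now, _ = ce_rhc_allocation(queue_len, arrival_rate=0.3)
        print(queue_len, t_now)
```

After the operator finishes the current task, the planner would be called again with the updated queue length, so the plan adapts as the actual arrivals deviate from their expectation.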

For anomaly detection the authors adopt a non‑Bayesian quickest‑change‑detection scheme: parallel CUSUM (Cumulative Sum) detectors—one per region—monitor the stream of human decisions. The CUSUM statistic accumulates the log‑likelihood ratio of “anomaly present” versus “absent” hypotheses; when it exceeds a preset threshold, an anomaly is declared. Crucially, the probability that a human decision is correct (derived from the DDM‑based performance function) is fed into the CUSUM update, thereby adapting detection sensitivity to the operator’s current cognitive state.
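A minimal sketch of such an ensemble of CUSUM detectors over binary operator decisions follows. It assumes, for illustration, a symmetric error model: the operator reports the true state with probability `p_correct` (which would come from the performance model) regardless of whether an anomaly is present. The function names, the observation format, and the threshold are hypothetical.

```python
import math


def log_likelihood_ratio(decision, p_correct, eps=1e-6):
    """LLR of 'anomaly present' vs 'absent' for one binary decision.

    Assumption: the operator is correct with probability p_correct under
    both hypotheses (symmetric errors).
    """
    p = min(max(p_correct, eps), 1.0 - eps)  # keep the logarithm finite
    if decision == 1:  # operator reports an anomaly
        return math.log(p / (1.0 - p))
    return math.log((1.0 - p) / p)


def cusum_update(stat, decision, p_correct):
    """One CUSUM step: accumulate the LLR and clip at zero."""
    return max(0.0, stat + log_likelihood_ratio(decision, p_correct))


def run_ensemble(observations, n_regions, threshold):
    """Run one CUSUM per region over (region, decision, p_correct) tuples.

    Returns (first region whose statistic reaches the threshold or None,
    list of final statistics).
    """
    stats = [0.0] * n_regions
    for region, decision, p_correct in observations:
        stats[region] = cusum_update(stats[region], decision, p_correct)
        if stats[region] >= threshold:
            return region, stats
    return None, stats


if __name__ == "__main__":
    obs = [(0, 1, 0.9), (1, 0, 0.9), (0, 1, 0.6), (0, 1, 0.9), (0, 1, 0.9)]
    print(run_ensemble(obs, n_regions=2, threshold=5.0))
```

Note how a decision made with low `p_correct` (for example, under time pressure) moves the statistic less than a confident one, which is how the detector discounts unreliable operator input.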

The routing problem is cast as a Markov Decision Process (MDP) in which moving between regions $R_i$ and $R_j$ takes travel time $d_{ij}$ and collecting evidence at region $R_k$ takes dwell time $T_k$. The reward structure combines (a) the likelihood of an anomaly at each region, as indicated by the ensemble CUSUM statistics, and (b) the cost of travel. The resulting stochastic policy selects the next region with probability proportional to its anomaly likelihood, while respecting travel-time constraints.
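The sampling step of such a policy might look like the sketch below. The summary does not specify how travel time enters the selection rule, so the discount factor `1 / (1 + travel_weight * d)` used here is purely an illustrative assumption, as are the function names and the example scores.

```python
import random


def routing_distribution(anomaly_scores, travel_times, current, travel_weight=0.1):
    """Probability of visiting each region next.

    Weights are proportional to a region's anomaly score, discounted by the
    travel time from the current region (the discount is an assumption).
    """
    weights = [
        max(score, 0.0) / (1.0 + travel_weight * travel_times[current][k])
        for k, score in enumerate(anomaly_scores)
    ]
    total = sum(weights)
    if total == 0.0:
        # No evidence anywhere: fall back to a uniform visit distribution.
        return [1.0 / len(weights)] * len(weights)
    return [w / total for w in weights]


def next_region(probabilities, rng=random):
    """Sample the index of the next region from the visit distribution."""
    return rng.choices(range(len(probabilities)), weights=probabilities, k=1)[0]


if __name__ == "__main__":
    scores = [0.2, 3.1, 0.7]            # e.g. per-region CUSUM statistics
    d = [[0, 4, 2], [4, 0, 3], [2, 3, 0]]  # travel times d_ij
    probs = routing_distribution(scores, d, current=0)
    print(probs, next_region(probs))
```

Keeping the policy randomized means every region with a nonzero score is still visited occasionally, while regions that look anomalous are visited more often.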

The overall architecture, termed the Cognition‑and‑Autonomy Management System (CAMS), integrates three subsystems: (1) the autonomous system (UAV and its low‑level motion planner), (2) the cognitive system (human operator with the unified DDM‑based performance model), and (3) CAMS itself, which houses the decision‑support module, the ensemble CUSUM detector, and the routing optimizer. By closing the loop—human performance influencing detection, detection influencing routing, and routing influencing the queue of tasks presented to the human—the framework achieves a synergistic balance between human cognitive limitations and robotic sensing capabilities.

The paper’s four main contributions are: (1) a unified human cognitive model that captures decision‑making, fatigue, situational awareness, and memory; (2) the application of CE‑RHC to compute real‑time attention‑allocation and routing policies; (3) the integration of human‑error‑aware CUSUM change detection into the surveillance loop; and (4) the extension of existing persistent‑surveillance algorithms to mixed human‑robot scenarios. The authors argue that this integrated approach can be adapted to a wide range of domains—disaster response, military reconnaissance, environmental monitoring—where human judgment remains essential but must be efficiently supported by autonomous agents.
