Quality Assessment of Pixel-Level Image Fusion Using Fuzzy Logic

Image fusion combines relevant information from two or more images of a scene into a single composite image, reducing uncertainty and minimizing redundancy in the output. The composite is more informative and better suited to visual perception or to processing tasks in areas such as medical imaging, remote sensing, concealed weapon detection, weather forecasting, and biometrics. Image fusion combines registered images to produce a high-quality fused image with spatial and spectral information, and the richer fused image improves the performance of the image analysis algorithms used in these applications. In this paper, we propose a fuzzy logic method to fuse images from different sensors in order to enhance quality. We compare the proposed method with two other methods: image fusion using the wavelet transform, and weighted-average discrete wavelet transform based image fusion using a genetic algorithm (GA). The comparison uses the quality evaluation parameters image quality index (IQI), mutual information measure (MIM), root mean square error (RMSE), peak signal-to-noise ratio (PSNR), fusion factor (FF), fusion symmetry (FS), fusion index (FI), and entropy. The results show that the proposed fuzzy-based image fusion approach improves the quality of the fused image compared with both earlier reported methods.

💡 Research Summary

The paper presents a pixel‑level image fusion technique that leverages fuzzy logic to combine information from multiple sensors. The authors argue that traditional fusion methods—most notably wavelet‑transform based approaches and weighted‑average discrete wavelet transform (DWT) optimized with a genetic algorithm (GA)—operate in the transform domain and rely on coefficient selection or weighting schemes that may not fully preserve the complementary spatial and spectral details of the source images. In contrast, the proposed fuzzy‑logic based fusion (FL) works directly on the gray‑level values of the registered input images, converting each pixel into a fuzzy set, applying a rule‑based inference system, and then defuzzifying the result to obtain the fused image.

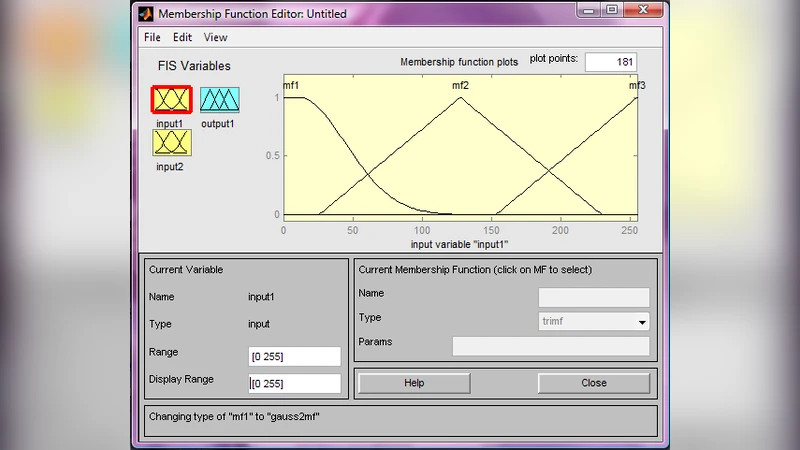

The methodology is described in a step‑by‑step algorithm. After ensuring that the two input images have identical dimensions (or cropping them to a common region), each pixel value (0‑255) is fuzzified using three membership functions (mf1, mf2, mf3). A rule base consisting of six IF‑THEN statements combines the two antecedent memberships into a consequent membership for the output pixel. The rules are simple logical combinations (e.g., “if input1 is mf1 and input2 is mf1 then output is mf1”). The fuzzy inference engine evaluates the rules for every pixel, producing a fuzzy output that is subsequently defuzzified (typically by centroid or weighted average) back to an 8‑bit intensity value. The entire pipeline is implemented in MATLAB 7.0.
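The fuzzify, infer, and defuzzify steps described above can be sketched in a few lines of Python with NumPy. This is only an illustration under stated assumptions, not the authors' MATLAB implementation: the triangular shapes and breakpoints of mf1, mf2, and mf3, the six-entry `RULES` table (one rule per unordered pair of input classes, with the brighter class winning), the use of `min` for AND, and the weighted-average defuzzification over the `CENTERS` values are all hypothetical choices, since the summary does not list the actual membership functions or rules.

```python
import numpy as np

def tri(x, a, b, c):
    """Triangular membership with feet at a and c and peak at b."""
    left = (x - a) / (b - a)
    right = (c - x) / (c - b)
    return np.clip(np.minimum(left, right), 0.0, 1.0)

def fuzzify(img):
    """Memberships of each pixel in mf1 (dark), mf2 (mid), mf3 (bright)."""
    x = img.astype(np.float64)
    return np.stack([
        tri(x, -127.5, 0.0, 127.5),    # mf1 (assumed shape)
        tri(x, 0.0, 127.5, 255.0),     # mf2 (assumed shape)
        tri(x, 127.5, 255.0, 382.5),   # mf3 (assumed shape)
    ])

# Hypothetical rule base: (class of one input, class of the other) -> output class.
# Six rules, applied symmetrically to both orderings of the inputs.
RULES = {(0, 0): 0, (0, 1): 1, (0, 2): 2, (1, 1): 1, (1, 2): 2, (2, 2): 2}

# Representative gray level of each output membership function (assumed).
CENTERS = np.array([0.0, 127.5, 255.0])

def fuse(img1, img2):
    """Fuse two registered 8-bit grayscale images pixel by pixel."""
    if img1.shape != img2.shape:
        raise ValueError("input images must have identical dimensions")
    mu1, mu2 = fuzzify(img1), fuzzify(img2)
    num = np.zeros(img1.shape, dtype=np.float64)
    den = np.zeros(img1.shape, dtype=np.float64)
    for (i, j), out in RULES.items():
        for a, b in {(i, j), (j, i)}:
            w = np.minimum(mu1[a], mu2[b])   # firing strength, AND as min
            num += w * CENTERS[out]
            den += w
    fused = num / np.maximum(den, 1e-12)     # weighted-average defuzzification
    return np.clip(np.rint(fused), 0, 255).astype(np.uint8)
```

Because every step is a whole-array operation, the rule evaluation runs once per rule rather than once per pixel. Swapping in a different rule table or membership shapes only requires editing `RULES`, `CENTERS`, and `fuzzify`.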

To assess the quality of the fused images, the authors employ eight quantitative metrics:

- Image Quality Index (IQI) – similarity measure ranging from –1 to 1.

- Mutual Information Measure (MIM) – amount of shared information.

- Root Mean Square Error (RMSE) – pixel‑wise deviation from a reference.

- Peak Signal‑to‑Noise Ratio (PSNR) – derived from RMSE.

- Fusion Factor (FF) – combined mutual information of both inputs with the fused image.

- Fusion Symmetry (FS) – degree of symmetry in information contribution from the two inputs.

- Fusion Index (FI) – ratio of FF to FS, intended to capture overall fusion efficiency.

- Entropy – statistical randomness of the fused image.
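As a rough guide to how most of these metrics are computed, the sketch below implements them with NumPy for 8-bit grayscale arrays. It relies on commonly used definitions rather than formulas quoted from the paper, so treat each as an assumption: PSNR as 20·log10(255/RMSE), IQI as the Wang-Bovik universal quality index computed over the whole image (the usual formulation averages it over sliding windows), FF as the sum of the mutual information between each input and the fused image, and FS as |I_AF / (I_AF + I_BF) − 0.5|. FI is left out because its exact definition should be taken from the paper.

```python
import numpy as np

def rmse(ref, fused):
    """Root mean square error between a reference and the fused image."""
    d = ref.astype(np.float64) - fused.astype(np.float64)
    return float(np.sqrt(np.mean(d ** 2)))

def psnr(ref, fused, peak=255.0):
    """Peak signal-to-noise ratio in dB, derived from RMSE."""
    e = rmse(ref, fused)
    return float("inf") if e == 0 else float(20.0 * np.log10(peak / e))

def entropy(img):
    """Shannon entropy (bits) of an 8-bit image's gray-level histogram."""
    hist = np.bincount(img.ravel(), minlength=256).astype(np.float64)
    p = hist / hist.sum()
    p = p[p > 0]
    return float(-np.sum(p * np.log2(p)))

def mutual_information(a, b):
    """Mutual information (bits) from the joint gray-level histogram."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=256,
                                 range=[[0, 256], [0, 256]])
    pab = joint / joint.sum()
    pa = pab.sum(axis=1, keepdims=True)   # marginal of a, shape (256, 1)
    pb = pab.sum(axis=0, keepdims=True)   # marginal of b, shape (1, 256)
    nz = pab > 0
    return float(np.sum(pab[nz] * np.log2(pab[nz] / (pa @ pb)[nz])))

def fusion_factor(a, b, fused):
    """FF = I_AF + I_BF (assumed definition)."""
    return mutual_information(a, fused) + mutual_information(b, fused)

def fusion_symmetry(a, b, fused):
    """FS = |I_AF / (I_AF + I_BF) - 0.5| (assumed definition)."""
    i_af = mutual_information(a, fused)
    ff = i_af + mutual_information(b, fused)
    if ff == 0:
        raise ValueError("fused image shares no information with the inputs")
    return abs(i_af / ff - 0.5)

def iqi(x, y):
    """Global Wang-Bovik quality index; undefined (nan) for constant images."""
    x = x.astype(np.float64).ravel()
    y = y.astype(np.float64).ravel()
    mx, my = x.mean(), y.mean()
    cov = np.mean((x - mx) * (y - my))
    return float(4 * cov * mx * my / ((x.var() + y.var()) * (mx ** 2 + my ** 2)))
```

Note that RMSE, PSNR, and IQI need a reference image to compare against, whereas entropy, mutual information, FF, and FS are computed from the inputs and the fused result alone.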

Three experimental scenarios are presented. The first uses Panchromatic and Multispectral images of Hyderabad, India, captured by the IRS‑1D LISS‑III sensor. The second and third scenarios employ publicly available datasets from imagefusion.org. For each scenario, three fusion methods are applied: (a) classic wavelet transform (WT), (b) weighted‑average DWT with GA‑optimized weights (GA‑DWT), and (c) the proposed fuzzy‑logic method (FL). Table 1 (reproduced in the paper) reports the metric values for each method across the three cases.
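For readers unfamiliar with the transform-domain baselines, the following NumPy sketch shows the general idea of weighted-average wavelet fusion. It is a simplification under explicit assumptions and not the configuration used in the paper: it uses a single-level Haar transform written by hand, requires even image dimensions, and takes one hypothetical weight per subband in `weights`, which is the kind of parameter a genetic algorithm could tune. The wavelet family, decomposition depth, and GA setup of the original experiments are not given in this summary.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def haar2(x):
    """One-level 2-D Haar transform of an even-sized image -> (LL, LH, HL, HH)."""
    x = x.astype(np.float64)
    a = (x[0::2, :] + x[1::2, :]) / SQRT2    # low-pass along rows
    d = (x[0::2, :] - x[1::2, :]) / SQRT2    # high-pass along rows
    ll = (a[:, 0::2] + a[:, 1::2]) / SQRT2
    lh = (a[:, 0::2] - a[:, 1::2]) / SQRT2
    hl = (d[:, 0::2] + d[:, 1::2]) / SQRT2
    hh = (d[:, 0::2] - d[:, 1::2]) / SQRT2
    return ll, lh, hl, hh

def ihaar2(ll, lh, hl, hh):
    """Inverse of haar2: rebuild the image from its four subbands."""
    rows, cols = ll.shape
    a = np.empty((rows, 2 * cols))
    d = np.empty((rows, 2 * cols))
    a[:, 0::2], a[:, 1::2] = (ll + lh) / SQRT2, (ll - lh) / SQRT2
    d[:, 0::2], d[:, 1::2] = (hl + hh) / SQRT2, (hl - hh) / SQRT2
    x = np.empty((2 * rows, 2 * cols))
    x[0::2, :], x[1::2, :] = (a + d) / SQRT2, (a - d) / SQRT2
    return x

def dwt_fuse(img1, img2, weights=(0.5, 0.5, 0.5, 0.5)):
    """Blend each subband as w*img1 + (1-w)*img2, then invert the transform."""
    if img1.shape != img2.shape:
        raise ValueError("input images must have identical dimensions")
    bands1, bands2 = haar2(img1), haar2(img2)
    fused = [w * p + (1.0 - w) * q
             for w, p, q in zip(weights, bands1, bands2)]
    return ihaar2(*fused)
```

With all four weights equal, the result reduces to a plain pixel-wise weighted average because the transform is linear; the subband weights only matter when they differ, for example favoring one sensor in the approximation band and the other in the detail bands.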

The results consistently show that FL outperforms WT and GA‑DWT on most metrics. IQI values for FL are close to 1 (0.987–0.992), indicating near‑perfect similarity to an ideal fused image. MIM scores are higher, suggesting that FL captures more shared information from both sources. PSNR values for FL exceed 38 dB, surpassing the other methods, while RMSE is correspondingly lower. Importantly, FL achieves lower Fusion Symmetry (FS) values and higher Fusion Index (FI), implying a more balanced contribution from both inputs and a more efficient fusion process. Entropy is also higher for FL, reflecting richer texture and information content.

The authors claim three main contributions: (1) introducing a direct pixel‑level fuzzy‑logic fusion framework that avoids transform‑domain artifacts, (2) employing a broader set of quality metrics (including FF, FS, FI) to evaluate fusion performance comprehensively, and (3) demonstrating the scalability of the approach through MATLAB implementation and multiple sensor datasets.

However, the study has notable limitations. The fuzzy membership functions and rule base are manually designed and tuned for the specific datasets; there is no discussion of how these parameters would generalize to other sensor combinations or imaging conditions. The computational complexity is not quantified, leaving open questions about real‑time applicability, especially for high‑resolution remote‑sensing or medical imaging streams. Moreover, the comparison set is limited to two conventional methods; contemporary deep‑learning based fusion techniques (e.g., CNN, GAN, transformer models) are omitted, which makes it difficult to position the proposed method within the current state of the art. Finally, the paper lacks statistical significance testing or cross‑validation, which would strengthen the claim of superiority.

Future work could address these gaps by (a) learning fuzzy membership functions and rules automatically via fuzzy‑neural networks or evolutionary optimization, (b) profiling runtime and memory usage on GPU platforms to assess suitability for real‑time deployment, (c) extending experiments to a wider variety of modalities (medical CT/MRI, hyperspectral, low‑light video) and larger benchmark suites, and (d) benchmarking against modern deep‑learning fusion pipelines to provide a more comprehensive performance landscape. Overall, the paper offers an interesting and well‑documented alternative to transform‑domain fusion, and its quantitative evaluation demonstrates promising results, but further validation and methodological enhancements are required for the approach to be adopted in high‑stakes imaging applications.
