Optimal Folding of Data Flow Graphs based on Finite Projective Geometry using Lattice Embedding

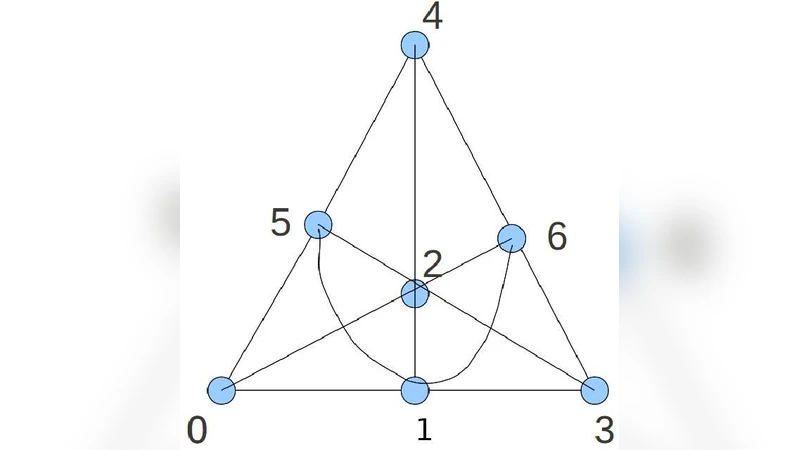

A number of computations, especially in the areas of error-control coding and matrix computations, have underlying data flow graphs that are balanced bipartite graphs derived from finite projective geometry (PG). Many of these applications are under active research. In practice, almost all of them need bipartite graphs with tens of thousands of nodes, where each node represents a parallel computation. Reducing the system/hardware resources required by the design is therefore an important engineering objective for keeping implementation cost down. In this context, we present a scheme to reduce resource utilization when performing computations derived from PG-based graphs. In a fully parallel design based on PG concepts, the number of processing units equals the number of vertices, each performing an atomic computation. To reduce the number of processing units used for implementation, we present an easy way of partitioning the vertex set. Each block of the partition is then assigned to a processing unit. A processing unit performs the computations corresponding to the vertices in its assigned block sequentially, thus creating the effect of folding the overall computation. These blocks have certain symmetry properties that enable us to develop a conflict-free schedule. The scheme achieves the best possible throughput, since there is no overhead of shuffling data across memories when scheduling another computation on the same processing unit. This paper reports two folding schemes, both based on partitioning and on the same lattice embedding approach. We first provide a scheme for a projective space of dimension five, and the corresponding schedules. Both folding schemes have been verified by simulation and hardware prototyping for different applications. We later generalize this scheme to arbitrary projective spaces.

💡 Research Summary

The paper addresses the practical problem of implementing very large bipartite data‑flow graphs that arise from finite projective geometry (PG) constructions. Such graphs are central to many high‑performance applications, notably iterative decoders for LDPC and expander codes, as well as large‑scale matrix computations (LU/Cholesky factorisation, conjugate‑gradient solvers). In a naïve fully‑parallel architecture each vertex of the PG‑derived graph would be mapped to a distinct processing element, leading to tens of thousands of processing units and prohibitive hardware cost.
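To make the kind of graph under discussion concrete, the following minimal sketch builds a point-hyperplane incidence graph of a projective space over a prime field. It is an illustration only, not the authors' construction: the function names `pg_points` and `incidence_graph` are hypothetical, and restricting to prime `p` (so that arithmetic is plain modular arithmetic) is an assumption made to keep the example short.

```python
from itertools import product


def pg_points(dim, p):
    """Return one normalised vector per point of PG(dim, GF(p)), p prime.

    A point is a 1-dimensional subspace of GF(p)^(dim+1); we pick the
    representative whose first non-zero coordinate equals 1.
    """
    points = []
    for v in product(range(p), repeat=dim + 1):
        first = next((x for x in v if x != 0), None)  # None for the zero vector
        if first == 1:
            points.append(v)
    return points


def incidence_graph(dim, p):
    """Bipartite point-hyperplane incidence graph of PG(dim, GF(p)).

    Hyperplanes are represented by their normal vectors, so they are
    indexed by the same normalised vectors as the points. Point x lies
    on hyperplane h exactly when their dot product is 0 modulo p.
    """
    pts = pg_points(dim, p)
    hyps = pg_points(dim, p)
    edges = [
        (i, j)
        for i, x in enumerate(pts)
        for j, h in enumerate(hyps)
        if sum(a * b for a, b in zip(x, h)) % p == 0
    ]
    return pts, hyps, edges


if __name__ == "__main__":
    pts, hyps, edges = incidence_graph(5, 2)
    print(len(pts), len(hyps), len(edges))
```

For a five-dimensional space over GF(2) this gives 63 points and 63 hyperplanes, with each hyperplane containing 31 points. In a fully parallel design, every one of these vertices would need its own processing unit.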

To overcome this, the authors propose a systematic folding technique based on lattice embedding and coset decomposition of the underlying projective space. The vertex set is partitioned into equally sized blocks, each block being a subspace (or a coset) of the original projective space. Because these blocks inherit the symmetry of the projective lattice, a conflict‑free memory‑access schedule can be constructed: every processing unit sequentially executes the operations belonging to its assigned block, while the global schedule guarantees that in each clock cycle all units are active and no two units request the same memory location. Consequently, the throughput of the folded architecture equals that of the fully parallel one, but the number of processing elements is reduced by the factor equal to the block size.
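The notion of a conflict-free folded schedule can be illustrated on a much smaller example. The sketch below does not use the paper's lattice embedding; it swaps in a simpler cyclic model, stated here as an assumption: a projective plane of order 4 whose 21 points and 21 lines are indexed by integers modulo 21, with line `j` containing the points `j + d` for `d` in a perfect difference set. The unit count, the bank assignment (`point % UNITS`), and all names are hypothetical choices made for illustration.

```python
# Toy cyclic model (an assumption for illustration, not the paper's scheme):
# 21 points and 21 lines indexed by Z_21, line j = {(j + d) % 21 : d in D}.
N = 21
D = (3, 6, 7, 12, 14)   # perfect difference set modulo 21
UNITS = 7               # hypothetical number of processing units and memory banks


def is_perfect_difference_set(d, n):
    """True if every non-zero residue mod n arises exactly once as a difference."""
    diffs = sorted((a - b) % n for a in d for b in d if a != b)
    return diffs == list(range(1, n))


def folded_schedule():
    """Yield (step, operand_index, banks) for every clock cycle.

    banks[u] is the memory bank read by unit u in that cycle.
    """
    for step in range(N // UNITS):           # each unit owns N // UNITS lines
        for k, d in enumerate(D):            # one operand read per cycle
            banks = []
            for u in range(UNITS):
                line = u + UNITS * step      # unit u owns lines congruent to u mod UNITS
                point = (line + d) % N       # k-th point on that line
                banks.append(point % UNITS)  # point i is stored in bank i mod UNITS
            yield step, k, banks


def conflict_free(schedule):
    """True if no two units touch the same bank in any cycle."""
    return all(len(set(banks)) == len(banks) for _, _, banks in schedule)


if __name__ == "__main__":
    print(is_perfect_difference_set(D, N), conflict_free(folded_schedule()))
```

In this toy model, 7 units cover the 21 line computations in 3 sequential steps of 5 operand reads each. In every cycle the 7 units read from 7 distinct banks, so no unit stalls and no bank sits idle. The symmetry doing the work here is the cyclic shift; the paper relies on the symmetry of the projective lattice instead.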

Two concrete folding schemes are presented for a five‑dimensional projective space P(5, GF(q)). The first scheme uses point‑hyperplane incidence graphs and partitions the points into q⁴ cosets; the second scheme uses 2‑dimensional subspaces (lines) as the basis of partitioning, yielding q³ blocks. Both schemes are proved mathematically to be conflict‑free and are validated through extensive simulations and FPGA prototypes. The hardware results show more than 30 % reduction in logic resources with zero memory conflicts, while maintaining the same number of cycles as the fully parallel design.

The authors then generalise the method to arbitrary dimension d. By selecting a subspace of dimension (d‑k) and forming its cosets, the graph can be folded into q^{d‑k} blocks. The parameter k controls the trade‑off between the number of processing units and the per‑unit workload: larger k reduces hardware but increases the number of sequential steps per unit, and vice‑versa. The lattice‑embedding framework provides a systematic way to generate the conflict‑free schedule for any such choice.
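The trade-off between hardware and sequential work can be made tangible with a little arithmetic. The sketch below only enumerates unit counts that divide the number of points evenly, assuming equal-size blocks. It does not determine which of these options the lattice-embedding construction actually admits, and the function names are hypothetical.

```python
def pg_point_count(d, q):
    """Number of points of PG(d, GF(q)): (q^(d+1) - 1) / (q - 1)."""
    return (q ** (d + 1) - 1) // (q - 1)


def folding_options(d, q):
    """List (units, vertices_per_unit) pairs with equal-size blocks.

    Assumption: every processing unit gets the same number of vertices,
    so the unit count must divide the total vertex count.
    """
    n = pg_point_count(d, q)
    return [(units, n // units) for units in range(1, n + 1) if n % units == 0]


if __name__ == "__main__":
    for units, per_unit in folding_options(5, 2):
        print(f"{units:3d} units x {per_unit:3d} sequential vertices each")
```

For the five-dimensional space over GF(2), which has 63 points, the candidates range from a single unit processing all 63 vertices in sequence to 63 units processing one vertex each, with 3, 7, 9, and 21 units in between.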

Practical applications are demonstrated. One example is a semi‑parallel decoder for a new class of expander codes intended for use in CD‑ROM/DVD‑R drives; another is an FPGA‑based accelerator for LU/Cholesky factorisation that achieves roughly double the throughput compared with an un‑folded implementation. Both examples confirm the theoretical claims through hardware prototyping.

In summary, the paper contributes a mathematically rigorous, hardware‑friendly folding methodology that (1) drastically reduces the number of processing elements, (2) preserves the maximal throughput of the original parallel graph, (3) eliminates memory access conflicts, and (4) simplifies address generation to simple counters or small lookup tables. These advantages make the technique highly attractive for any large‑scale PG‑based computation, opening avenues for efficient implementations of next‑generation error‑control decoders and high‑performance linear algebra accelerators.
