An Automated Evaluation Metric for Chinese Text Entry

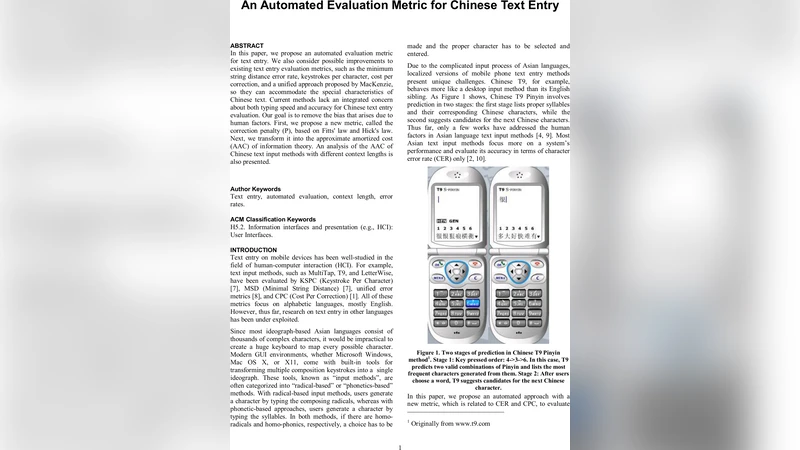

In this paper, we propose an automated evaluation metric for text entry. We also consider possible improvements to existing text entry evaluation metrics, such as the minimum string distance error rate, keystrokes per character, cost per correction, and a unified approach proposed by MacKenzie, so that they can accommodate the special characteristics of Chinese text. Current methods do not jointly account for typing speed and accuracy when evaluating Chinese text entry. Our goal is to remove the bias that arises from human factors. First, we propose a new metric, called the correction penalty (P), based on Fitts’ law and Hick’s law. Next, we transform it into the approximate amortized cost (AAC) of information theory. We also present an analysis of the AAC of Chinese text input methods with different context lengths.

💡 Research Summary

The paper addresses a critical gap in the evaluation of Chinese text entry systems: existing metrics either focus solely on error rates (minimum string distance error rate, MSDER) or on keystroke efficiency (keystrokes per character, KSPC), without adequately accounting for the time and effort required to correct mistakes. While Cost Per Correction (CPC) and MacKenzie’s unified framework incorporate some correction costs, they fall short in modeling the combined motor and cognitive demands that are especially pronounced in Chinese input, where users must navigate candidate lists, select characters, and often rely on context‑based predictions.

To fill this gap, the authors introduce a new metric called the correction penalty (P), derived from two well‑established human‑factors laws. Fitts’ law models the motor time needed to move a cursor or finger to a target (log₂(D/W) component), while Hick’s law captures the cognitive time required to choose among N alternatives (log₂(N) component). The penalty is expressed as:

P = a₁ + b₁·log₂(D/W) + a₂ + b₂·log₂(N),

where a₁, b₁, a₂, and b₂ are empirically determined constants. This formulation quantifies the total time a user spends correcting an error, integrating both the physical movement to the error location and the mental processing needed to select the correct candidate.
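The formula above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' implementation: the function name `correction_penalty` and the default constant values are assumptions chosen only to make the example runnable, since the summary does not report the fitted values of a₁, b₁, a₂, and b₂.

```python
import math


def correction_penalty(distance, width, n_candidates,
                       a1=0.0, b1=0.1, a2=0.0, b2=0.15):
    """Return P = a1 + b1*log2(D/W) + a2 + b2*log2(N).

    The default constants are placeholders (assumed), not values
    taken from the paper.
    """
    if distance <= 0 or width <= 0:
        raise ValueError("distance and width must be positive")
    if n_candidates < 1:
        raise ValueError("n_candidates must be at least 1")
    # Fitts' law term: motor cost of reaching the target.
    motor = a1 + b1 * math.log2(distance / width)
    # Hick's law term: cognitive cost of choosing among N candidates.
    cognitive = a2 + b2 * math.log2(n_candidates)
    return motor + cognitive


# Example: target 8 units away and 1 unit wide, 4 candidates to choose from.
p = correction_penalty(distance=8, width=1, n_candidates=4)
print(f"P = {p:.3f}")
```

With a single candidate (N = 1) the Hick term reduces to a₂, so only the motor component and the intercepts contribute to the penalty.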

The authors then map P onto an information‑theoretic cost measure called the approximate amortized cost (AAC). AAC is defined as:

AAC = (total keystrokes + ΣP) / total output characters.

Unlike KSPC, which counts only raw keystrokes, AAC adds the summed correction penalties, yielding a single figure that reflects both speed and accuracy.
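To make the contrast with KSPC concrete, the sketch below computes both figures from the same hypothetical session. The function names and the sample numbers are assumptions for illustration. The sketch also assumes that each penalty has already been expressed in keystroke-equivalent units so that it can be added to a keystroke count; the summary does not state how that conversion is done.

```python
def kspc(total_keystrokes, total_output_chars):
    """Keystrokes per character: raw keystrokes only."""
    if total_output_chars <= 0:
        raise ValueError("total_output_chars must be positive")
    return total_keystrokes / total_output_chars


def approximate_amortized_cost(total_keystrokes, penalties, total_output_chars):
    """AAC = (total keystrokes + sum of correction penalties) / output characters.

    `penalties` is assumed to be in keystroke-equivalent units.
    """
    if total_output_chars <= 0:
        raise ValueError("total_output_chars must be positive")
    return (total_keystrokes + sum(penalties)) / total_output_chars


# Hypothetical session: 120 keystrokes, 100 output characters, 3 corrections.
penalties = [2.0, 3.5, 1.5]
print(kspc(120, 100))                                   # keystrokes only
print(approximate_amortized_cost(120, penalties, 100))  # keystrokes plus penalties
```

When no corrections occur the penalty sum is zero and AAC coincides with KSPC, which is one way to read AAC as KSPC extended with a correction term.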

The experimental methodology consists of two phases. First, a simulation environment generates input streams for three representative Chinese input methods: Pinyin (phonetic), Cangjie (radical‑based), and Wubi (stroke‑based). The simulation injects errors according to a probabilistic model and records the size of the candidate list generated for each correction. Different context lengths (1‑gram, 2‑gram, 3‑gram) are applied to examine how linguistic context influences candidate set size. Second, the recorded D, W, and N values are fed into the Fitts‑Hick model to compute P for each correction, and AAC is calculated for each method‑context combination.
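The second phase can be pictured as a grouping step over logged correction events. The sketch below is only a guess at the shape of such a pipeline: the record layout, the function names, and the constants are all hypothetical, and penalties are again assumed to be in keystroke-equivalent units.

```python
import math
from collections import defaultdict

# Placeholder Fitts/Hick constants (assumed, not from the paper).
A1, B1, A2, B2 = 0.0, 1.0, 0.0, 1.0


def penalty(d, w, n):
    """Correction penalty P for one correction event."""
    return A1 + B1 * math.log2(d / w) + A2 + B2 * math.log2(n)


def aac_by_combination(corrections, totals):
    """Compute AAC for each (method, context) pair.

    corrections: iterable of (method, context, D, W, N) tuples.
    totals: dict mapping (method, context) to
            (total_keystrokes, total_output_chars).
    """
    penalty_sums = defaultdict(float)
    for method, context, d, w, n in corrections:
        penalty_sums[(method, context)] += penalty(d, w, n)
    return {
        key: (keystrokes + penalty_sums[key]) / chars
        for key, (keystrokes, chars) in totals.items()
    }


# Hypothetical log: two corrections with 1-gram context, one with 3-gram.
corrections = [
    ("pinyin", 1, 8, 1, 4),
    ("pinyin", 1, 4, 1, 2),
    ("pinyin", 3, 2, 1, 2),
]
totals = {("pinyin", 1): (100, 100), ("pinyin", 3): (100, 100)}
for key, value in aac_by_combination(corrections, totals).items():
    print(key, round(value, 3))
```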

Results reveal a clear trend: longer contexts dramatically shrink the average candidate set. For Pinyin, the mean number of candidates drops from 8.2 (1‑gram) to 2.7 (3‑gram). This reduction lowers the Hick’s‑law term, because selection time grows with log₂(N). At the same time, fewer candidates allow more compact UI layouts, which shorten cursor travel distances and thus reduce the Fitts’ component. Consequently, the overall correction penalty falls, and AAC improves: Pinyin’s AAC declines from 1.42 (1‑gram) to 0.97 (3‑gram). In contrast, Cangjie and Wubi start with relatively small candidate sets (≈3–4), so extending context yields only modest AAC gains. This suggests that the benefit of longer context is most pronounced for input methods that rely heavily on candidate selection.
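As a rough check on the size of the Hick's-law effect, the sketch below evaluates log₂(N) at the two reported mean candidate counts for Pinyin. Applying the logarithm to a mean is an approximation (the mean of log₂(N) over individual corrections would generally differ), and the variable names are illustrative.

```python
import math

# Mean candidate counts reported for Pinyin in the summary.
n_1gram = 8.2
n_3gram = 2.7

bits_1gram = math.log2(n_1gram)  # about 3.04
bits_3gram = math.log2(n_3gram)  # about 1.43

# The reduction equals log2(8.2 / 2.7), about 1.60, to be scaled by b2.
reduction = bits_1gram - bits_3gram

print(f"log2(N), 1-gram: {bits_1gram:.2f}")
print(f"log2(N), 3-gram: {bits_3gram:.2f}")
print(f"reduction: {reduction:.2f}")
```

Under this approximation the selection term falls by roughly 1.6 × b₂ per correction, independent of the intercept a₂.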

Beyond the quantitative findings, the paper argues that AAC provides actionable feedback for IME designers. By adjusting context window size, re‑ordering candidate display, or optimizing UI geometry, developers can directly influence the two components of P, thereby lowering the amortized cost experienced by users. The authors also note that AAC can be adapted to other platforms—mobile touchscreens, voice‑driven entry, or wearable devices—by recalibrating the Fitts’ and Hick’s constants to reflect different motor and perceptual characteristics.

Future work outlined includes: (1) validating the simulated parameters with real‑user studies to obtain personalized a and b coefficients; (2) extending the model to touch‑based Fitts’ formulations that account for finger size and screen density; (3) integrating sophisticated language‑model error distributions to generate more realistic error patterns; and (4) exploring real‑time adaptive IMEs that dynamically adjust context length based on the user’s current error rate, thereby continuously optimizing AAC.

In summary, the paper makes three major contributions: it identifies the inadequacy of current Chinese text entry metrics, proposes a theoretically grounded correction penalty that merges motor and cognitive costs, and demonstrates how transforming this penalty into an approximate amortized cost yields a comprehensive, bias‑reduced evaluation framework. This framework not only clarifies the trade‑offs inherent in different Chinese input methods but also offers a practical tool for guiding the design of faster, more accurate, and user‑friendly text entry solutions.