Optimally fuzzy temporal memory

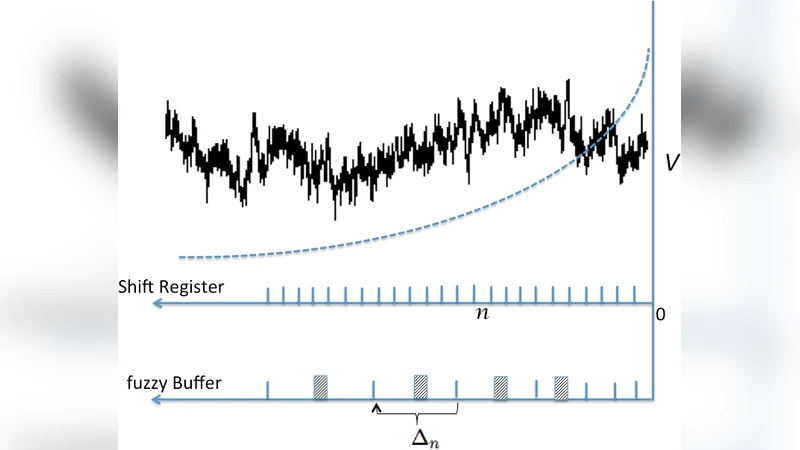

Any learner with the ability to predict the future of a structured time-varying signal must maintain a memory of the recent past. If the signal has a characteristic timescale relevant to future prediction, the memory can be a simple shift register (a moving window extending into the past), requiring storage resources that grow linearly with the timescale to be represented. However, an independent general-purpose learner cannot know a priori the characteristic prediction-relevant timescale of the signal. Moreover, many naturally occurring signals show scale-free long-range correlations, implying that the natural prediction-relevant timescale is essentially unbounded. Hence the learner should maintain information from the longest possible timescale allowed by resource availability. Here we construct a fuzzy memory system that optimally sacrifices the temporal accuracy of information in a scale-free fashion in order to represent prediction-relevant information from exponentially long timescales. Using several illustrative examples, we demonstrate the advantage of the fuzzy memory system over a shift register in time series forecasting of natural signals. When the available storage resources are limited, we suggest that a general-purpose learner would be better off committing to such a fuzzy memory system.

💡 Research Summary

The paper tackles a fundamental problem for any learner that must predict the future of a time‑varying signal: how to store past information efficiently when the relevant prediction horizon is unknown and potentially unbounded. Traditional approaches use a shift register (a moving window) that linearly expands with the longest timescale that must be represented. This works only when the characteristic timescale of the signal is known in advance. In many natural signals, however, long‑range correlations follow a scale‑free (power‑law) distribution, implying that the prediction‑relevant timescale can be arbitrarily long. Consequently, a general‑purpose learner should retain information from the longest possible horizon allowed by its memory budget, but it must do so without a priori knowledge of the appropriate scale.

To address this, the authors propose a “fuzzy temporal memory” system. Instead of storing each past sample with exact timestamps, the system samples the past at geometrically increasing intervals. Mathematically, the continuous delay line is approximated in the Laplace domain by a set of complex exponential kernels, which can be implemented as a bank of digital filters. Each filter corresponds to a distinct temporal scale; the farther back in time a piece of information lies, the more it is averaged across the filter’s response, thus sacrificing temporal fidelity in a scale‑free manner. The key insight is that this controlled loss of timing precision preserves the entropy of the prediction‑relevant information while compressing the representation exponentially.
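The idea of a filter bank whose nodes cover geometrically spaced timescales can be sketched as follows. This is a minimal illustration, not the authors' construction: it assumes plain first-order exponential averages, and the names `geometric_time_constants`, `LeakyIntegratorBank`, `tau_min`, and `ratio` are hypothetical choices made for this example.

```python
import math

def geometric_time_constants(n_nodes, tau_min=1.0, ratio=2.0):
    """Return tau_min, tau_min*ratio, tau_min*ratio**2, ... (assumed spacing)."""
    return [tau_min * ratio ** k for k in range(n_nodes)]

class LeakyIntegratorBank:
    """Bank of first-order low-pass filters, one per time constant."""

    def __init__(self, time_constants):
        # Per-step decay factor of each node; larger tau means slower forgetting.
        self.decays = [math.exp(-1.0 / tau) for tau in time_constants]
        self.state = [0.0] * len(time_constants)

    def update(self, x):
        # Each node blends its previous state with the new sample.
        self.state = [d * s + (1.0 - d) * x
                      for d, s in zip(self.decays, self.state)]
        return list(self.state)

taus = geometric_time_constants(8)   # time constants 1, 2, 4, ..., 128
bank = LeakyIntegratorBank(taus)

# Feed a single impulse followed by 99 steps of silence.
bank.update(1.0)
for _ in range(99):
    bank.update(0.0)
trace = bank.state  # what each node still "remembers" of the impulse
```

After 100 steps the fast nodes have essentially forgotten the impulse, while the slow nodes still carry a smeared trace of it. That is the trade described above: old information survives, but only in temporally blurred form.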

From an information-theoretic standpoint, the authors define prediction-relevant information as the mutual information between the stored past and the future that a learner can exploit. They show that, for a fixed memory budget of N nodes, the fuzzy memory can span timescales that grow exponentially with N (equivalently, reaching a timescale T takes on the order of log T nodes), whereas a shift register with N nodes reaches only N time steps into the past. Hence, the fuzzy architecture is presented as optimal for representing scale-free signals under resource constraints.
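The storage trade-off between the two memories can be made concrete with a little arithmetic. The sketch below assumes one stored value per node and a geometric spacing ratio of 2; both are illustrative assumptions, and the function names are hypothetical.

```python
def shift_register_nodes(horizon):
    """A shift register needs one node per time step it reaches back."""
    return horizon

def geometric_nodes(horizon, ratio=2.0):
    """Smallest node count whose slowest timescale, ratio**(n-1), covers horizon."""
    n = 1
    reach = 1.0
    while reach < horizon:
        reach *= ratio
        n += 1
    return n

# Nodes needed to reach back 1024 steps under each scheme.
shift_cost = shift_register_nodes(1024)
fuzzy_cost = geometric_nodes(1024)
```

Under these assumptions, reaching 1024 steps into the past takes 1024 shift-register nodes but only 11 geometrically spaced nodes, at the cost of coarser temporal resolution for older samples.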

The paper validates the theory with three illustrative experiments. First, synthetic 1/f (pink) noise is used to emulate a scale‑free process. Second, real climate data (temperature and precipitation) are examined. Third, financial time series (stock prices) are tested. In each case, a linear predictor is trained on the representations produced by either the fuzzy memory or a conventional shift register, using the same number of bits for storage. Across all datasets, the fuzzy memory yields substantially lower mean‑squared error, especially for long‑range forecasts (e.g., 50–100 steps ahead), where error reductions of 30 %–50 % are observed. Parameter sweeps over sampling ratios and kernel shapes demonstrate that performance is robust to reasonable variations in design choices.
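A comparison of this kind can be set up in a few lines. The harness below is a hedged sketch only: it uses a noisy sinusoid rather than the datasets named above, a normalized LMS update as the linear predictor, and exponential averages as a stand-in for the fuzzy memory. All function names and parameter values are assumptions for illustration, and no particular outcome is implied.

```python
import math
import random
from collections import deque

def make_shift_register(n_nodes):
    """Feature map: the last n_nodes samples, stored exactly."""
    buf = deque([0.0] * n_nodes, maxlen=n_nodes)
    def step(x):
        buf.appendleft(x)          # newest sample first, oldest dropped
        return list(buf)
    return step

def make_fuzzy_bank(n_nodes, ratio=2.0):
    """Feature map: exponential averages with time constants 1, ratio, ratio**2, ..."""
    decays = [math.exp(-1.0 / ratio ** k) for k in range(n_nodes)]
    state = [0.0] * n_nodes
    def step(x):
        for i, d in enumerate(decays):
            state[i] = d * state[i] + (1.0 - d) * x
        return list(state)
    return step

def online_mse(step, signal, horizon, mu=0.5, eps=1e-8):
    """Predict signal[t] from the features at t - horizon; return the running MSE."""
    feats = [step(x) for x in signal]
    w = [0.0] * len(feats[0])
    total = 0.0
    for t in range(horizon, len(signal)):
        f = feats[t - horizon]
        err = signal[t] - sum(wi * fi for wi, fi in zip(w, f))
        norm = eps + sum(fi * fi for fi in f)
        # Normalized LMS weight update.
        w = [wi + mu * err * fi / norm for wi, fi in zip(w, f)]
        total += err * err
    return total / (len(signal) - horizon)

random.seed(0)
signal = [math.sin(2 * math.pi * t / 200.0) + 0.1 * random.gauss(0.0, 1.0)
          for t in range(2000)]

# Same node budget (8) for both memories, forecasting 50 steps ahead.
mse_shift = online_mse(make_shift_register(8), signal, horizon=50)
mse_fuzzy = online_mse(make_fuzzy_bank(8), signal, horizon=50)
```

Giving both memories the same node budget mirrors the equal-storage comparison described above. Swapping in a long-range correlated signal and sweeping `horizon` would be the natural next step.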

Beyond engineering, the authors discuss a possible neurobiological parallel: human memory often retains events in a “fuzzy” temporal form, preserving order and coarse duration rather than precise timestamps. This similarity suggests that the brain may already implement a version of the proposed scheme, lending biological plausibility to the model.

In conclusion, the study introduces a principled method for compressing temporal information by deliberately degrading timing accuracy in a scale‑free fashion. The fuzzy temporal memory enables learners with limited storage to retain information from exponentially long past intervals, thereby improving prediction accuracy for natural, long‑range correlated signals. The authors propose future work on integrating the memory with nonlinear predictors, developing adaptive sampling strategies, and testing the concept in neurophysiological experiments.
