Distributed Computation of Sparse Cuts

Finding sparse cuts is an important tool in analyzing large-scale distributed networks such as the Internet and Peer-to-Peer networks, as well as large-scale graphs such as the web graph, online social communities, and VLSI circuits. In distributed communication networks, they are useful for topology maintenance and for designing better search and routing algorithms. In this paper, we focus on developing fast distributed algorithms for computing sparse cuts in networks. Given an undirected $n$-node network $G$ with conductance $\phi$, the goal is to find a cut set whose conductance is close to $\phi$. We present two distributed algorithms that find a cut set with sparsity $\tilde O(\sqrt{\phi})$ ($\tilde{O}$ hides $\operatorname{polylog}(n)$ factors). Both our algorithms work in the CONGEST distributed computing model and output a cut of conductance at most $\tilde O(\sqrt{\phi})$ with high probability, in $\tilde O(\frac{1}{b}(\frac{1}{\phi} + n))$ rounds, where $b$ is the balance of the cut of the given conductance. In particular, to find a sparse cut of constant balance, our algorithms take $\tilde O(\frac{1}{\phi} + n)$ rounds. Our algorithms can also be used to output a {\em local} cluster, i.e., a subset of vertices near a given source node whose conductance is within a quadratic factor of the best possible cluster around the specified node. Both of our distributed algorithms can work without knowledge of the optimal $\phi$ value and hence can be used to find approximate conductance values both globally and with respect to a given source node. We also give a lower bound on the time needed for any distributed algorithm to compute any non-trivial sparse cut: any distributed approximation algorithm (for any non-trivial approximation ratio) for computing sparsest cut will take $\tilde \Omega(\sqrt{n} + D)$ rounds, where $D$ is the diameter of the graph.

💡 Research Summary

The paper tackles the fundamental problem of finding sparse cuts—subsets of vertices whose edge boundary is small relative to their volume—in large distributed networks. While conductance (the minimum sparsity over all cuts) is a key indicator of network connectivity, mixing time, and routing efficiency, computing an actual cut that achieves low conductance has remained elusive in the CONGEST model, where each node can only send O(log n) bits per edge per synchronous round.
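To make the quantity concrete, the following minimal sketch computes the conductance of a single cut on a small example. It assumes the usual volume-based definition (boundary edges divided by the smaller side's total degree). The function name `conductance`, the adjacency-dict representation, and the two-triangle example graph are illustrative choices, not taken from the paper.

```python
def conductance(adj, S):
    """Conductance of the cut (S, V minus S) in a simple undirected graph.

    adj maps each vertex to a list of its neighbours (an assumed
    representation chosen for this illustration).
    """
    S = set(S)
    vol_S = sum(len(adj[v]) for v in S)                 # total degree inside S
    vol_total = sum(len(nbrs) for nbrs in adj.values())  # equals 2 * |E|
    boundary = sum(1 for u in S for v in adj[u] if v not in S)
    denom = min(vol_S, vol_total - vol_S)
    if denom == 0:
        raise ValueError("both sides of the cut need positive volume")
    return boundary / denom


# Two triangles joined by the single bridge edge (2, 3).
adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3],
       3: [2, 4, 5], 4: [3, 5], 5: [3, 4]}
phi = conductance(adj, {0, 1, 2})  # one boundary edge over volume 7
```

The graph's conductance is the minimum of this value over all cuts, which is why finding a cut that comes close to it is harder than evaluating any one candidate.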

The authors present two distributed algorithms that achieve a provable approximation guarantee of Õ(√φ) for the conductance of the output cut, where φ denotes the true (optimal) conductance of the graph. Both algorithms run in Õ((1/b)(1/φ + n)) rounds, where b is the balance of the target cut (the smaller side’s fraction of the total vertices). In the common case of a constant‑balance cut, the runtime simplifies to Õ(1/φ + n). Crucially, the algorithms do not require prior knowledge of φ; they discover it adaptively during execution.

Algorithm 1 – SPARSECUT (Random‑Walk Based).

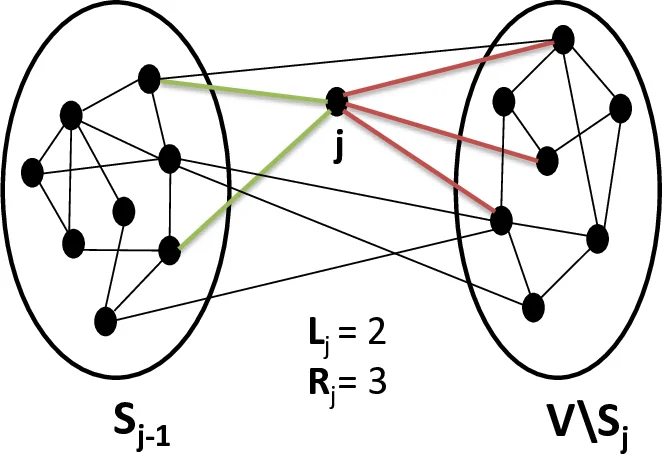

The method builds on the classic Lovász‑Simonovits framework that uses short random walks to expose low‑conductance regions. Each node initiates multiple random walks of length ℓ = O(1/φ). After ℓ steps, nodes estimate the probability pℓ(s, t) that a walk from source s ends at t. By normalizing these probabilities with the node degrees (ρ_t = pℓ(s, t)/deg(t)) and sorting vertices in decreasing ρ order, the algorithm generates a sequence of candidate cuts S_k consisting of the top‑k vertices. A technical lemma shows that the conductance of all n candidates can be evaluated in linear time (O(n) local computation per round), avoiding the naïve O(m) collection of the whole topology. With high probability, at least one candidate S_k has conductance at most Õ(√φ). The total number of communication rounds is bounded by Õ((1/b)(1/φ + n)).
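The sweep over candidate cuts can be illustrated with a centralized simulation. This is a sketch only: it computes the walk distribution exactly instead of estimating it from sampled walks, it runs on one machine rather than in CONGEST rounds, and the function names `walk_distribution` and `sweep_cut` and the example graph are assumptions made for illustration.

```python
def walk_distribution(adj, source, steps):
    """Exact distribution of a simple random walk after `steps` steps."""
    p = {v: 0.0 for v in adj}
    p[source] = 1.0
    for _ in range(steps):
        nxt = {v: 0.0 for v in adj}
        for u, mass in p.items():
            if mass:
                share = mass / len(adj[u])  # split mass evenly over neighbours
                for v in adj[u]:
                    nxt[v] += share
        p = nxt
    return p


def sweep_cut(adj, p):
    """Best prefix of the ordering by p[v] / deg(v), with its conductance.

    Assumes a simple undirected graph (no self-loops or parallel edges).
    """
    order = sorted(adj, key=lambda v: p[v] / len(adj[v]), reverse=True)
    vol_total = sum(len(nbrs) for nbrs in adj.values())
    in_set, vol, boundary = set(), 0, 0
    best_phi, best_k = float("inf"), 0
    for k, v in enumerate(order[:-1], start=1):  # skip the trivial full set
        inside = sum(1 for w in adj[v] if w in in_set)
        # Edges from v into the prefix stop being boundary edges;
        # the remaining edges of v become new boundary edges.
        boundary += len(adj[v]) - 2 * inside
        vol += len(adj[v])
        in_set.add(v)
        phi = boundary / min(vol, vol_total - vol)
        if phi < best_phi:
            best_phi, best_k = phi, k
    return set(order[:best_k]), best_phi


# Two triangles joined by the bridge edge (2, 3); walk starts at node 0.
adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3],
       3: [2, 4, 5], 4: [3, 5], 5: [3, 4]}
cut, phi = sweep_cut(adj, walk_distribution(adj, source=0, steps=3))
```

Because the boundary and volume are updated incrementally as each vertex joins the prefix, every candidate S_k is scored without recomputing its cut from scratch, which mirrors the idea of evaluating all candidates in one pass.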

Algorithm 2 – PageRank‑Based Variant.

The second algorithm replaces plain random walks with a personalized PageRank (PPR) vector. Starting from a source node s, the process repeatedly restarts to s with probability α and follows a random edge otherwise. The stationary distribution p satisfies p = α·e_s + (1‑α)·P·p, where P is the transition matrix. As with the first algorithm, the normalized values ρ_t = p_t/deg(t) are sorted, and candidate cuts are examined using the same linear‑time conductance evaluation. This approach is particularly suited for local clustering: given any vertex s, the algorithm returns a set S containing s whose conductance is within a quadratic factor of the optimal local conductance around s. Runtime and approximation guarantees match those of SPARSECUT.
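The fixed-point equation above can be approximated by plain power iteration. The sketch below is a centralized illustration under stated assumptions: the restart probability `alpha=0.15`, the iteration count, the function name `personalized_pagerank`, and the example graph are all hypothetical choices, and the paper's distributed procedure may compute the vector differently.

```python
def personalized_pagerank(adj, source, alpha=0.15, iters=200):
    """Approximate the solution of p = alpha * e_s + (1 - alpha) * P p.

    P is taken to be the column-stochastic random-walk matrix, so vertex u
    sends a 1/deg(u) share of its mass to each neighbour.
    """
    p = {v: 0.0 for v in adj}
    p[source] = 1.0
    for _ in range(iters):
        nxt = {v: 0.0 for v in adj}
        nxt[source] += alpha                      # restart mass returns to s
        for u, mass in p.items():
            share = (1 - alpha) * mass / len(adj[u])
            for v in adj[u]:
                nxt[v] += share                   # walk mass follows a random edge
        p = nxt
    return p


# Two triangles joined by the bridge edge (2, 3); restarts go to node 0.
adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3],
       3: [2, 4, 5], 4: [3, 5], 5: [3, 4]}
ppr = personalized_pagerank(adj, source=0)
rho = {v: ppr[v] / len(adj[v]) for v in adj}      # degree-normalized scores
```

Each iteration shrinks the error by a factor of (1 − alpha), so a larger restart probability converges faster but keeps more of the mass close to the source.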

Local Clustering Result.

Theorem 1.5 formalizes the above: for any source node s, the algorithm finds a local cluster in Õ(1/φ + n) rounds, with conductance at most O(√φ_local), where φ_local is the best possible conductance of any set containing s.

Lower Bound.

To complement the upper bounds, the authors prove a lower bound (Theorem 1.6): there exists an n‑node graph for which any distributed algorithm that approximates the sparsest cut within any non‑trivial factor must use at least Ω̃(√n + D) rounds, where D is the graph’s diameter. Because D = O(log n / φ) in any connected graph, the D term of the lower bound never exceeds the 1/φ term of the upper bound by more than a logarithmic factor, so the two bounds are consistent. They do not match in general: 1/φ can be much larger than D, and a gap remains between the √n term of the lower bound and the n term of the upper bound.

Context and Contributions.

Previous work on conductance estimation (e.g., mixing‑time estimators, spectral gap approximations) did not yield actual cuts, and algorithms based on SDP or linear programming, while offering stronger approximation ratios, are far too slow for distributed settings. By adapting spectral/random‑walk techniques to the CONGEST model, the paper achieves a practical trade‑off: a modest √φ approximation in near‑linear (in n) rounds, with the added ability to handle unknown φ and to produce both global and local clusters.

Implications and Future Directions.

The results open pathways for practical network management tasks such as topology maintenance, congestion reduction, and fault‑tolerant routing, where identifying bottleneck edges (the cut edges) is essential. Future research could explore dynamic networks where edges or nodes appear/disappear, tighter integration with graph sparsification to reduce the n term, or empirical evaluation on real‑world P2P and wireless mesh topologies. Overall, the paper delivers a theoretically solid and practically relevant solution to distributed sparse‑cut computation.
