Scaling of Geographic Space as a Universal Rule for Map Generalization

Map generalization is a process of producing maps at different levels of detail by retaining essential properties of the underlying geographic space. In this paper, we explore how the map generalization process can be guided by the underlying scaling of geographic space. The scaling of geographic space refers to the fact that in a geographic space small things are far more common than large ones. In the corresponding rank-size distribution, this scaling property is characterized by a heavy tailed distribution such as a power law, lognormal, or exponential function. In essence, any heavy tailed distribution consists of the head of the distribution (with a low percentage of vital or large things) and the tail of the distribution (with a high percentage of trivial or small things). Importantly, the low and high percentages constitute an imbalanced contrast, e.g., 20 versus 80. We suggest that map generalization is to retain the objects in the head and to eliminate or aggregate those in the tail. We applied this selection rule or principle to three generalization experiments, and found that the scaling of geographic space indeed underlies map generalization. We further relate the universal rule to T"opfer’s radical law (or trained cartographers’ decision making in general), and illustrate several advantages of the universal rule. Keywords: Head/tail division rule, head/tail breaks, heavy tailed distributions, power law, and principles of selection

💡 Research Summary

The paper proposes that the ubiquitous scaling property of geographic space—whereby small objects vastly outnumber large ones—constitutes a universal rule for map generalization. This scaling manifests as heavy‑tailed distributions (power‑law, lognormal, or exponential). The authors formalize a “head/tail division rule”: given a set of geographic objects ranked by a chosen attribute (e.g., length, connectivity), the arithmetic mean separates the data into a low‑percentage “head” (large, vital objects) and a high‑percentage “tail” (small, trivial objects). Generalization proceeds by retaining the head and discarding or aggregating the tail; the process is applied recursively until the head no longer exhibits a heavy‑tailed pattern or falls below a predefined minority threshold (e.g., 40 %).

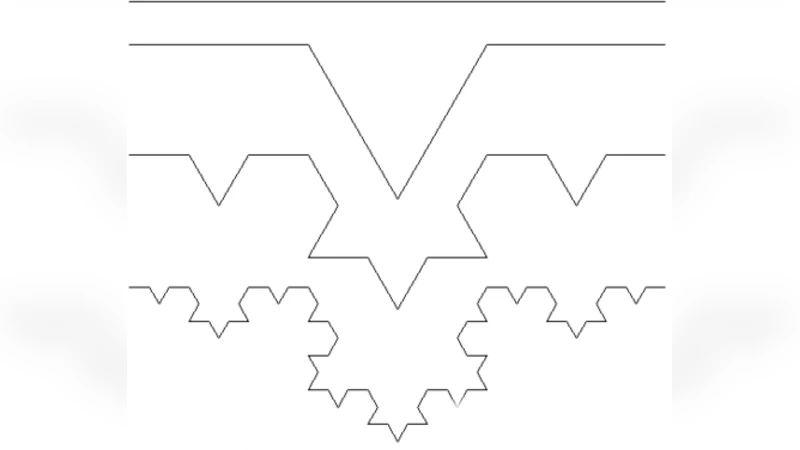

A universal algorithm is outlined: (1) rank objects, (2) test for heavy‑tailed behavior using maximum‑likelihood estimation and Kolmogorov‑Smirnov tests, (3) split at the mean, (4) keep the head, (5) repeat. The rule is rooted in fractal self‑similarity: the head is statistically similar to the whole, so a map at a smaller scale can be represented by the head alone.

Three empirical case studies validate the approach.

- Swedish street network – Using OpenStreetMap data, 166 479 natural streets were derived. Street length follows a lognormal distribution, while street connectivity follows a power‑law (exponent ≈ 3.5). By iteratively selecting streets longer than the mean length (or more connected than the mean degree), eight levels of detail were generated. Each level preserved the original heavy‑tailed distribution, demonstrating that the essential network structure survives successive generalizations.

- British coastline – The Douglas‑Peucker line‑simplification algorithm requires a distance threshold. The authors compute the perpendicular distances of all vertices to their segment, take the arithmetic mean as the threshold, and apply the head/tail rule to decide which vertices to keep. This yields a series of coastline representations at multiple scales without manually tuning the tolerance, illustrating the method’s applicability to graphic generalization.

- British drainage network – Stream segments were ranked by connectivity (number of intersecting streams). Low‑connectivity streams (the tail) were removed, while high‑connectivity streams (the head) were retained, producing successive simplified drainage networks. The connectivity distribution remained a power‑law across levels, confirming that the core hydrological structure is retained.

The authors compare their scaling‑based rule with Töpfer’s radical law, which predicts the number of objects to retain based solely on the square‑root of the scale ratio. While Töpfer’s law is empirical and independent of data distribution, the proposed rule is data‑driven, automatically adapts to the underlying distribution, and can be applied to any attribute or object type (points, lines, polygons). Advantages include objectivity, reproducibility, and the ability to handle diverse datasets without ad‑hoc parameter selection.

Limitations are acknowledged: the method relies on the presence of a clear heavy‑tailed distribution; datasets that are approximately Gaussian may not benefit. Using the mean as a split point can be sensitive to outliers, suggesting the need for preprocessing or alternative central measures (median, quantiles). Future work is suggested to explore multi‑criterion splits, hybrid distributions (e.g., power‑law with exponential cutoff), and integration with GIS workflows.

In sum, the paper demonstrates that the scaling property of geographic space provides a robust, universal principle for map generalization. By systematically applying the head/tail division rule, cartographers can produce multi‑scale maps that preserve the essential spatial structure, offering a theoretically grounded alternative to traditional, experience‑based generalization techniques.

Comments & Academic Discussion

Loading comments...

Leave a Comment