Distributed Low-rank Subspace Segmentation

Vision problems ranging from image clustering to motion segmentation to semi-supervised learning can naturally be framed as subspace segmentation problems, in which one aims to recover multiple low-dimensional subspaces from noisy and corrupted input…

Authors: Ameet Talwalkar, Lester Mackey, Yadong Mu

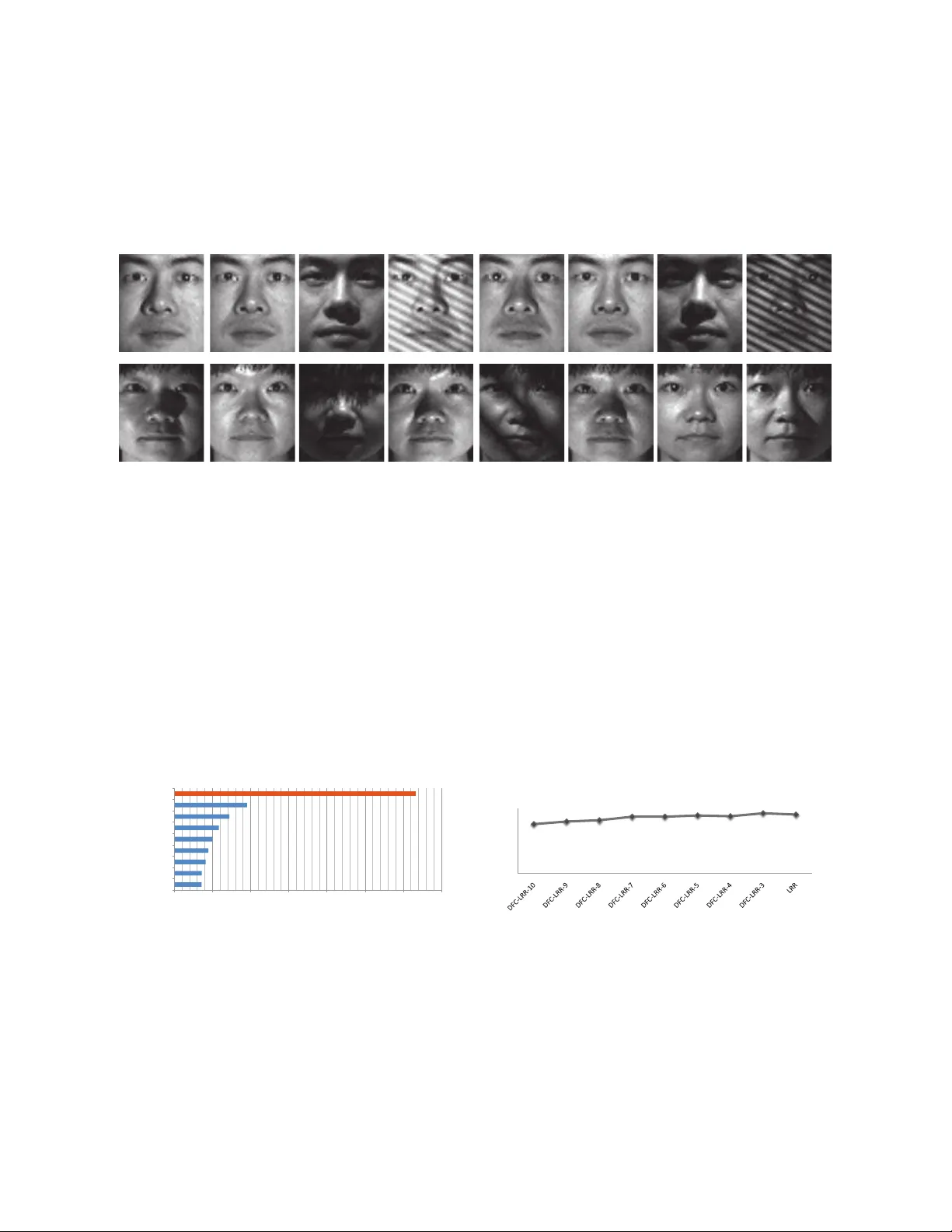

Ameet Talwalkar (a), Lester Mackey (b), Yadong Mu (c), Shih-Fu Chang (c), Michael I. Jordan (a, d)

(a) Department of Electrical Engineering and Computer Science, UC Berkeley
(b) Department of Statistics, Stanford
(c) Department of Electrical Engineering, Columbia
(d) Department of Statistics, UC Berkeley

Abstract

Vision problems ranging from image clustering to motion segmentation to semi-supervised learning can naturally be framed as subspace segmentation problems, in which one aims to recover multiple low-dimensional subspaces from noisy and corrupted input data. Low-Rank Representation (LRR), a convex formulation of the subspace segmentation problem, is provably and empirically accurate on small problems but does not scale to the massive sizes of modern vision datasets. Moreover, past work aimed at scaling up low-rank matrix factorization is not applicable to LRR given its non-decomposable constraints. In this work, we propose a novel divide-and-conquer algorithm for large-scale subspace segmentation that can cope with LRR's non-decomposable constraints and maintains LRR's strong recovery guarantees. This has immediate implications for the scalability of subspace segmentation, which we demonstrate on a benchmark face recognition dataset and in simulations. We then introduce novel applications of LRR-based subspace segmentation to large-scale semi-supervised learning for multimedia event detection, concept detection, and image tagging. In each case, we obtain state-of-the-art results and order-of-magnitude speed ups.

1 Introduction

Visual data, though innately high dimensional, often reside in or lie close to a union of low-dimensional subspaces.
These subspaces might reflect physical constraints on the objects comprising images and video (e.g., faces under varying illumination [2] or trajectories of rigid objects [24]) or naturally occurring variations in production (e.g., digits hand-written by different individuals [12]). Subspace segmentation techniques model these classes of data by recovering bases for the multiple underlying subspaces [10, 7]. Applications include image clustering [7], segmentation of images, video, and motion [30, 6, 26], and affinity graph construction for semi-supervised learning [32].

One promising, convex formulation of the subspace segmentation problem is the low-rank representation (LRR) program of Liu et al. [17, 18]:

$$(\hat{Z}, \hat{S}) = \operatorname*{argmin}_{Z, S} \; \|Z\|_* + \lambda \|S\|_{2,1} \quad \text{subject to} \quad M = MZ + S. \tag{1}$$

Here, $M$ is an input matrix of datapoints drawn from multiple subspaces, $\|\cdot\|_*$ is the nuclear norm, $\|\cdot\|_{2,1}$ is the sum of the column $\ell_2$ norms, and $\lambda$ is a parameter that trades off between these penalties. LRR segments the columns of $M$ into subspaces using the solution $\hat{Z}$, and, along with its extensions (e.g., LatLRR [19] and NNLRS [32]), admits strong guarantees of correctness and strong empirical performance in clustering and graph construction applications. However, the standard algorithms for solving Eq. (1) are unsuitable for large-scale problems, due to their sequential nature and their reliance on the repeated computation of costly truncated SVDs.

Much of the computational burden in solving LRR stems from the nuclear norm penalty, which is known to encourage low-rank solutions, so one might hope to leverage the large body of past work on parallel and distributed matrix factorization [11, 23, 8, 31, 21] to improve the scalability of LRR. Unfortunately, these techniques are tailored to optimization problems with losses and constraints that decouple across the entries of the input matrix.
This decoupling requirement is violated in the LRR problem due to the $M = MZ + S$ constraint of Eq. (1), and this non-decomposable constraint introduces new algorithmic and analytic challenges that do not arise in decomposable matrix factorization problems.

To address these challenges, we develop, analyze, and evaluate a provably accurate divide-and-conquer approach to large-scale subspace segmentation that specifically accounts for the non-decomposable structure of the LRR problem. Our contributions are three-fold:

Algorithm: We introduce a parallel, divide-and-conquer approximation algorithm for LRR that is suitable for large-scale subspace segmentation problems. Scalability is achieved by dividing the original LRR problem into computationally tractable and communication-free subproblems, solving the subproblems in parallel, and combining the results using a technique from randomized matrix approximation. Our algorithm, which we call DFC-LRR, is based on the principles of the Divide-Factor-Combine (DFC) framework [21] for decomposable matrix factorization but can cope with the non-decomposable constraints of LRR.

Analysis: We characterize the segmentation behavior of our new algorithm, showing that DFC-LRR maintains the segmentation guarantees of the original LRR algorithm with high probability, even while enjoying substantial speed-ups over its namesake. Our new analysis features a significant broadening of the original LRR theory to treat the richer class of LRR-type subproblems that arise in DFC-LRR. Moreover, since our ultimate goal is subspace segmentation and not matrix recovery, our theory guarantees correctness under a more substantial reduction of problem complexity than the work of [21] (see Sec. 3.2 for more details).
Applications: We first present results on face clustering and synthetic subspace segmentation to demonstrate that DFC-LRR achieves accuracy comparable to LRR in a fraction of the time. We then propose and validate a novel application of the LRR methodology to large-scale graph-based semi-supervised learning. While LRR has been used to construct affinity graphs for semi-supervised learning in the past [4, 32], prior attempts have failed to scale to the sizes of real-world datasets. Leveraging the favorable computational properties of DFC-LRR, we propose a scalable strategy for constructing such subspace affinity graphs. We apply our methodology to a variety of computer vision tasks (multimedia event detection, concept detection, and image tagging), demonstrating an order of magnitude improvement in speed and accuracy that exceeds the state of the art.

The remainder of the paper is organized as follows. In Section 2 we first review the low-rank representation approach to subspace segmentation and then introduce our novel DFC-LRR algorithm. Next, we present our theoretical analysis of DFC-LRR in Section 3. Section 4 highlights the accuracy and efficiency of DFC-LRR on a variety of computer vision tasks. We present subspace segmentation results on simulated and real-world data in Section 4.1. In Section 4.2 we present our novel application of DFC-LRR to graph-based semi-supervised learning problems, and we conclude in Section 5.

Notation: Given a matrix $M \in \mathbb{R}^{m \times n}$, we define $U_M \Sigma_M V_M^\top$ as the compact singular value decomposition (SVD) of $M$, where $\operatorname{rank}(M) = r$, $\Sigma_M$ is a diagonal matrix of the $r$ non-zero singular values and $U_M \in \mathbb{R}^{m \times r}$ and $V_M \in \mathbb{R}^{n \times r}$ are the associated left and right singular vectors of $M$. We denote the orthogonal projection onto the column space of $M$ as $P_M$.
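As a concrete reading of this notation, the following is a minimal NumPy sketch of the compact SVD and of the projection $P_M$, computed as $U_M U_M^\top$ applied to a matrix. The helper names `compact_svd` and `project_onto_column_space` and the relative rank tolerance are assumptions made for illustration; they do not come from the paper.

```python
import numpy as np

def compact_svd(M, tol=1e-10):
    """Return (U, s, V) with M ~= U @ diag(s) @ V.T, keeping only singular
    values above tol times the largest one (an illustrative cutoff)."""
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    r = int(np.sum(s > tol * s[0]))  # numerical rank
    return U[:, :r], s[:r], Vt[:r, :].T

def project_onto_column_space(M, X):
    """Apply P_M to X, computed as U_M (U_M^T X)."""
    U, _, _ = compact_svd(M)
    return U @ (U.T @ X)

rng = np.random.default_rng(0)
# A 6 x 5 matrix of rank 2 by construction.
M = rng.standard_normal((6, 2)) @ rng.standard_normal((2, 5))
U, s, V = compact_svd(M)
X = rng.standard_normal((6, 3))
PX = project_onto_column_space(M, X)
```

Here `U` has shape (6, 2) and `V` has shape (5, 2), matching $U_M \in \mathbb{R}^{m \times r}$ and $V_M \in \mathbb{R}^{n \times r}$ with $r = 2$, and projecting `M` onto its own column space leaves it unchanged.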
2 Divide-and-Conquer Segmentation

In this section, we review the LRR approach to subspace segmentation and present our novel algorithm, DFC-LRR.

2.1 Subspace Segmentation via LRR

In the robust subspace segmentation problem, we observe a matrix $M = L_0 + S_0 \in \mathbb{R}^{m \times n}$, where the columns of $L_0$ are datapoints drawn from multiple independent subspaces (see footnote 1), and $S_0$ is a column-sparse outlier matrix. Our goal is to identify the subspace associated with each column of $L_0$, despite the potentially gross corruption introduced by $S_0$. An important observation for this task is that the projection matrix $V_{L_0} V_{L_0}^\top$ for the row space of $L_0$, sometimes termed the shape interaction matrix, is block diagonal whenever the columns of $L_0$ lie in multiple independent subspaces [10]. Hence, we can achieve accurate segmentation by first recovering the row space of $L_0$.

Footnote 1: Subspaces are independent if the dimension of their direct sum is the sum of their dimensions.

The LRR approach of [17] seeks to recover the row space of $L_0$ by solving the convex optimization problem presented in Eq. (1). Importantly, the LRR solution comes with a guarantee of correctness: the column space of $\hat{Z}$ is exactly equal to the row space of $L_0$ whenever certain technical conditions are met [18] (see Sec. 3 for more details).

Moreover, as we will show in this work, LRR is also well-suited to the construction of affinity graphs for semi-supervised learning. In this setting, the goal is to define an affinity graph in which nodes correspond to data points and edge weights exist between nodes drawn from the same subspace. LRR can thus be used to recover the block-sparse structure of the graph's affinity matrix, and these affinities can be used for semi-supervised label propagation.
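The block-diagonal property of the shape interaction matrix can be checked numerically on a small clean example. The sketch below is illustrative only: the dimensions, the random seed, and the variable names are assumptions, and the columns are kept grouped by subspace so that the block structure is visible without a permutation.

```python
import numpy as np

rng = np.random.default_rng(0)
m, r, n_per = 20, 2, 5  # ambient dimension, subspace dimension, points per subspace

# Draw points from two independent r-dimensional subspaces of R^m.
blocks = []
for _ in range(2):
    basis, _ = np.linalg.qr(rng.standard_normal((m, r)))  # orthonormal basis
    blocks.append(basis @ rng.standard_normal((r, n_per)))
L0 = np.hstack(blocks)  # columns 0..4 from subspace 1, columns 5..9 from subspace 2

# Shape interaction matrix V V^T built from the compact SVD of L0.
_, s, Vt = np.linalg.svd(L0, full_matrices=False)
rank = int(np.sum(s > 1e-10 * s[0]))
V = Vt[:rank, :].T
shape_interaction = V @ V.T

# Largest magnitude among entries that link points from different subspaces.
off_block = np.abs(shape_interaction[:n_per, n_per:]).max()
```

With these settings `rank` is 4 (the sum of the two subspace dimensions) and `off_block` is zero up to floating-point error, so the affinities across subspaces vanish.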
2.2 Divide-Factor-Combine LRR (DFC-LRR)

We now present our scalable divide-and-conquer algorithm, called DFC-LRR, for LRR-based subspace segmentation. DFC-LRR extends the principles of the DFC framework of [21] to a new non-decomposable problem. The DFC-LRR algorithm is summarized in Algorithm 1, and we next describe each step in further detail.

D step - Divide input matrix into submatrices: DFC-LRR randomly partitions the columns of $M$ into $t$ $l$-column submatrices, $\{C_1, \ldots, C_t\}$. For simplicity, we assume that $t$ divides $n$ evenly.

F step - Factor submatrices in parallel: DFC-LRR solves $t$ subproblems in parallel. The $i$th LRR subproblem is of the form

$$\min_{Z_i, S_i} \; \|Z_i\|_* + \lambda \|S_i\|_{2,1} \quad \text{subject to} \quad C_i = M Z_i + S_i, \tag{2}$$

where the input matrix $M$ is used as a dictionary but only a subset of columns is used as the observations (see footnote 2). A typical LRR algorithm can be easily modified to solve Eq. (2) and will return a low-rank estimate $\hat{Z}_i$ in factored form.

C step - Combine submatrix estimates: DFC-LRR generates a final approximation $\hat{Z}^{\mathrm{proj}}$ to the low-rank LRR solution $\hat{Z}$ by projecting $[\hat{Z}_1, \ldots, \hat{Z}_t]$ onto the column space of $\hat{Z}_1$. This column projection technique is commonly used to produce randomized low-rank matrix factorizations [15] and was also employed by the DFC-PROJ algorithm of [21].

Runtime: As noted in [21], many state-of-the-art solvers for nuclear-norm regularized problems like Eq. (1) have $\Omega(mnk_M)$ per-iteration time complexity due to the rank-$k_M$ truncated SVD required on each iteration. DFC-LRR reduces this per-iteration complexity significantly and requires just $O(mlk_{C_i})$ time for the $i$th subproblem. Performing the subsequent column projection step is relatively cheap computationally, since an LRR solver can return its solution in factored form.
Indeed, if we define $k' \triangleq \max_i k_{C_i}$, then the column projection step of DFC-LRR requires only $O(mk'^2 + lk'^2)$ time.

Algorithm 1 DFC-LRR
  Input: $M$, $t$
  $\{C_i\}_{1 \le i \le t}$ = SAMPLECOLS($M$, $t$)
  do in parallel
    $\hat{Z}_1$ = LRR($C_1$, $M$)
    ...
    $\hat{Z}_t$ = LRR($C_t$, $M$)
  end do
  $\hat{Z}^{\mathrm{proj}}$ = COLPROJ($[\hat{Z}_1, \ldots, \hat{Z}_t]$, $\hat{Z}_1$)

Footnote 2: An alternative formulation involves replacing both instances of $M$ with $C_i$ in Eq. (1). The resulting low-rank estimate $\hat{Z}_i$ would have dimensions $l \times l$, and the C step of DFC-LRR would compute a low-rank approximation on the block-diagonal matrix $\operatorname{diag}(\hat{Z}_1, \hat{Z}_2, \ldots, \hat{Z}_t)$.

3 Theoretical Analysis

Despite the significant reduction in computational complexity, DFC-LRR provably maintains the strong theoretical guarantees of the LRR algorithm. To make this statement precise, we first review the technical conditions for accurate row space recovery required by LRR.

Figure 1: Results on synthetic data (reported results are averages over 10 trials). (a) Phase transition of LRR and DFC-LRR. (b,c) Timing results of LRR and DFC-LRR as functions of $\gamma$ and $n$ respectively. [Panel (a) plots success rate against outlier fraction $\gamma$, panel (b) time in seconds against $\gamma$, and panel (c) time in seconds against the number of data points, each for DFC-LRR-10%, DFC-LRR-25%, and LRR.]

3.1 Conditions for LRR Correctness

The LRR analysis of Liu et al. [18] relies on two key quantities, the rank of the clean data matrix $L_0$ and the coherence [22] of the singular vectors $V_{L_0}$. We combine these properties into a single definition:

Definition 1 ($(\mu, r)$-Coherence).
A matrix $L \in \mathbb{R}^{m \times n}$ is $(\mu, r)$-coherent if $\operatorname{rank}(L) = r$ and

$$\frac{n}{r} \|V_L^\top\|_{2,\infty}^2 \le \mu,$$

where $\|\cdot\|_{2,\infty}$ is the maximum column $\ell_2$ norm (see footnote 3).

Intuitively, when the coherence $\mu$ is small, information is well-distributed across the rows of a matrix, and the row space is easier to recover from outlier corruption. Using these properties, Liu et al. [18] established the following recovery guarantee for LRR.

Theorem 2 ([18]). Suppose that $M = L_0 + S_0 \in \mathbb{R}^{m \times n}$ where $S_0$ is supported on $\gamma n$ columns, $L_0$ is $(\frac{\mu}{1-\gamma}, r)$-coherent, and $L_0$ and $S_0$ have independent column support with $\operatorname{range}(L_0) \cap \operatorname{range}(S_0) = \{0\}$. Let $\hat{Z}$ be a solution returned by LRR. Then there exists a constant $\gamma^*$ (depending on $\mu$ and $r$) for which the column space of $\hat{Z}$ exactly equals the row space of $L_0$ whenever $\lambda = 3/(7\|M\|\sqrt{\gamma^* l})$ and $\gamma \le \gamma^*$.

In other words, LRR can exactly recover the row space of $L_0$ even when a constant fraction $\gamma^*$ of the columns has been corrupted by outliers. As the rank $r$ and coherence $\mu$ shrink, $\gamma^*$ grows, allowing greater outlier tolerance.

3.2 High Probability Subspace Segmentation

Our main theoretical result shows that, with high probability and under the same conditions that guarantee the accuracy of LRR, DFC-LRR also exactly recovers the row space of $L_0$. Recall that in our independent subspace setting accurate row space recovery is tantamount to correct segmentation of the columns of $L_0$. The proof of our result, which generalizes the LRR analysis of [18] to a broader class of optimization problems and adapts the DFC analysis of [21], can be found in the appendix.

Theorem 3. Fix any failure probability $\delta > 0$. Under the conditions of Thm. 2, let $\hat{Z}^{\mathrm{proj}}$ be a solution returned by DFC-LRR.
Then there exists a constant $\gamma^*$ (depending on $\mu$ and $r$) for which the column space of $\hat{Z}^{\mathrm{proj}}$ exactly equals the row space of $L_0$ whenever $\lambda = 3/(7\|M\|\sqrt{\gamma^* l})$ for each DFC-LRR subproblem, $\gamma \le \gamma^*$, and $t = n/l$ for

$$l \ge c\, r \mu \log(4n/\delta) / (\gamma^* - \gamma)^2$$

and $c$ a fixed constant larger than 1.

Thm. 3 establishes that, like LRR, DFC-LRR can tolerate a constant fraction of its data points being corrupted and still recover the correct subspace segmentation of the clean data points with high probability. When the number of datapoints $n$ is large, solving LRR directly may be prohibitive, but DFC-LRR need only solve a collection of small, tractable subproblems. Indeed, Thm. 3 guarantees high probability recovery for DFC-LRR even when the subproblem size $l$ is logarithmic in $n$. The corresponding reduction in computational complexity allows DFC-LRR to scale to large problems with little sacrifice in accuracy.

Notably, this column sampling complexity is better than that established by [21] in the matrix factorization setting: we require $O(r \log n)$ columns sampled, while [21] requires in the worst case $\Omega(n)$ columns for matrix completion and $\Omega((r \log n)^2)$ for robust matrix factorization.

Footnote 3: Although [18] uses the notion of column coherence to analyze LRR, we work with the closely related notion of $(\mu, r)$-coherence for ease of notation in our proofs. Moreover, we note that if a rank-$r$ matrix $L \in \mathbb{R}^{m \times n}$ is supported on $(1 - \gamma) n$ columns then the column coherence of $V_L$ is $\mu$ if and only if $V_L$ is $(\mu/(1-\gamma), r)$-coherent.

4 Experiments

We now explore the empirical performance of DFC-LRR on a variety of simulated and real-world datasets, first for the traditional task of robust subspace segmentation and next for the more complex task of graph-based semi-supervised learning.
Our experiments are designed to show the effectiveness of DFC-LRR both when the theory of Section 3 holds and when it is violated. Our synthetic datasets satisfy the theoretical assumptions of low rank, incoherence, and a small fraction of corrupted columns, while our real-world datasets violate these criteria.

For all of our experiments we use the inexact Augmented Lagrange Multiplier (ALM) algorithm of [17] as our base LRR algorithm. For the subspace segmentation experiments, we set the regularization parameter to the values suggested in previous works [18, 17], while in our semi-supervised learning experiments we set it to $1/\sqrt{\max(m, n)}$ as suggested in prior work (see footnote 4). In all experiments we report parallel running times for DFC-LRR, i.e., the time of the longest running subproblem plus the time required to combine submatrix estimates via column projection. All experiments were implemented in Matlab. The simulation studies were run on an x86-64 architecture using a single 2.60 GHz core and 30 GB of main memory, while the real data experiments were performed on an x86-64 architecture equipped with a 2.67 GHz 12-core CPU and 64 GB of main memory.

4.1 Subspace Segmentation: LRR vs. DFC-LRR

We first aim to verify that DFC-LRR produces accuracy comparable to LRR in significantly less time, both in synthetic and real-world settings. We focus on the standard robust subspace segmentation task of identifying the subspace associated with each input datapoint.

4.1.1 Simulations

To construct our synthetic robust subspace segmentation datasets, we first generate $n_s$ datapoints from each of $k$ independent $r$-dimensional subspaces of $\mathbb{R}^m$, in a manner similar to [18]. For each subspace $i$, we independently select a basis $U_i$ uniformly from all matrices in $\mathbb{R}^{m \times r}$ with orthonormal columns and a matrix $T_i \in \mathbb{R}^{r \times n_s}$ of independent entries each distributed uniformly in $[0, 1]$.
We form the matrix $X_i \in \mathbb{R}^{m \times n_s}$ of samples from subspace $i$ via $X_i = U_i T_i$ and let $X_0 = [X_1 \ldots X_k] \in \mathbb{R}^{m \times k n_s}$. For a given outlier fraction $\gamma$ we next generate an additional $n_o = \frac{\gamma}{1-\gamma} k n_s$ independent outlier samples, denoted by $S \in \mathbb{R}^{m \times n_o}$. Each outlier sample has independent $N(0, \sigma^2)$ entries, where $\sigma$ is the average absolute value of the entries of the $k n_s$ original samples. We create the input matrix $M \in \mathbb{R}^{m \times n}$, where $n = k n_s + n_o$, as a random permutation of the columns of $[X_0 \; S]$.

In our first experiments we fix $k = 3$, $m = 1500$, $r = 5$, and $n_s = 200$, set the regularizer to $\lambda = 0.2$, and vary the fraction of outliers. We measure how frequently LRR and DFC-LRR are able to recover the row space of $X_0$ and identify the outlier columns in $S$, using the same criterion as defined in [18] (see footnote 5). Figure 1(a) shows average performance over 10 trials. We see that DFC-LRR performs quite well, as the gaps in the phase transitions between LRR and DFC-LRR are small when sampling 10% of the columns (i.e., $t = 10$) and are virtually non-existent when sampling 25% of the columns (i.e., $t = 4$).

Figure 1(b) shows corresponding timing results for the accuracy results presented in Figure 1(a). These timing results show substantial speedups in DFC-LRR relative to LRR with a modest tradeoff in accuracy as denoted in Figure 1(a). Note that we only report timing results for values of $\gamma$ for which DFC-LRR was successful in all 10 trials, i.e., for which the success rate equaled 1.0 in Figure 1(a). Moreover, Figure 1(c) shows timing results using the same parameter values, except with a fixed fraction of outliers ($\gamma = 0.1$) and a variable number of samples in each subspace, i.e., $n_s$ ranges from 75 to 1000.
These timing results also show speedups with minimal loss of accuracy, as in all of these timing experiments, LRR and DFC-LRR were successful in all trials using the same criterion defined in [18] and used in our phase transition experiments of Figure 1(a).

Footnote 4: http://perception.csl.illinois.edu/matrix-rank

Footnote 5: Success is determined by whether the oracle constraints of Eq. (8) in the Appendix are satisfied within a tolerance of $10^{-4}$.

Figure 2: Exemplar face images from Extended Yale Database B. Each row shows randomly selected images for a human subject.

Figure 3: Trade-off between computation and segmentation accuracy on face recognition experiments. All results are obtained by averaging across 100 independent runs. (a) Run time of LRR and DFC-LRR with varying number of subproblems. (b) Segmentation accuracy for these same experiments. [Panel (a) plots computing time in seconds and panel (b) segmentation accuracy (0-1) for DFC-LRR-10, DFC-LRR-9, DFC-LRR-8, DFC-LRR-7, DFC-LRR-6, DFC-LRR-5, DFC-LRR-4, DFC-LRR-3, and LRR; the accuracy values shown, in that order, are 0.455, 0.479, 0.491, 0.524, 0.524, 0.535, 0.528, 0.556, 0.544.]

4.1.2 Face Clustering

We next demonstrate the comparable quality and increased performance of DFC-LRR relative to LRR on real data, namely, a subset of Extended Yale Database B (see footnote 6), a standard face benchmarking dataset. Following the experimental setup in [17], 640 frontal face images of 10 human subjects are chosen, each of which is resized to be 48 × 42 pixels and forms a 2016-dimensional feature vector. As noted in previous work [3], a low-dimensional subspace can be effectively used to model face images from one person, and hence face clustering is a natural application of subspace segmentation.
Moreover, as illustrated in Figure 2, a significant portion of the faces in this dataset are “corrupted” by shadows, and hence this collection of images is an ideal benchmark for robust subspace segmentation.

As in [17], we use the feature vector representation of these images to create a 2016 × 640 dictionary matrix, $M$, and run both LRR and DFC-LRR with the parameter $\lambda$ set to 0.15. Next, we use the resulting low-rank coefficient matrix $\hat{Z}$ to compute an affinity matrix $U_{\hat{Z}} U_{\hat{Z}}^\top$, where $U_{\hat{Z}}$ contains the top left singular vectors of $\hat{Z}$. The affinity matrix is used to cluster the data into $k = 10$ clusters (corresponding to the 10 human subjects) via spectral embedding (to obtain a 10D feature representation) followed by $k$-means. Following [17], we compare clustering methods by segmentation accuracy: each of the 10 clusters is assigned a label based on majority vote of the ground truth labels of the points assigned to the cluster, and the segmentation accuracy is then computed by averaging the percentage of correctly classified data over all classes.

Figures 3(a) and 3(b) show the computation time and the segmentation accuracy, respectively, for LRR and for DFC-LRR with varying numbers of subproblems (i.e., values of $t$). On this relatively small data set ($n = 640$ faces), LRR requires over 10 minutes to converge. DFC-LRR demonstrates a roughly linear computational speedup as a function of $t$, comparable accuracies to LRR for smaller values of $t$, and a quite gradual decrease in accuracy for larger $t$.
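The segmentation accuracy used above can be sketched in plain Python. This is one reading of the description (majority-vote labeling of clusters, then per-class accuracy averaged over classes), not the evaluation code of [17]; the function name and the toy data are hypothetical.

```python
from collections import Counter, defaultdict

def segmentation_accuracy(cluster_ids, true_labels):
    """Label each cluster by majority vote of its members' true labels, then
    average the fraction of correctly labeled points over the true classes."""
    members = defaultdict(list)
    for c, y in zip(cluster_ids, true_labels):
        members[c].append(y)
    # Majority-vote label of every cluster.
    cluster_label = {c: Counter(ys).most_common(1)[0][0] for c, ys in members.items()}

    correct, total = Counter(), Counter()
    for c, y in zip(cluster_ids, true_labels):
        total[y] += 1
        if cluster_label[c] == y:
            correct[y] += 1
    # Per-class accuracy, averaged over all classes present in the ground truth.
    return sum(correct[y] / total[y] for y in total) / len(total)

# Toy example: two clusters, two subjects, one mistake per cluster.
cluster_ids = [0, 0, 0, 1, 1, 1]
true_labels = ["a", "a", "b", "b", "b", "a"]
acc = segmentation_accuracy(cluster_ids, true_labels)
```

In the toy example each class has two of its three points assigned to the cluster that carries its label, so `acc` equals 2/3.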
4.2 Graph-based Semi-Supervised Learning

Graph representations, in which samples are vertices and weighted edges express affinity relationships between samples, are crucial in various computer vision tasks. Classical graph construction methods separately calculate the outgoing edges for each sample. This local strategy makes the graph vulnerable to contaminated data or outliers. Recent work in computer vision has illustrated the utility of global graph construction strategies using graph Laplacian [9] or matrix low-rank [32] based regularizers. L1 regularization has also been effectively used to encourage sparse graph construction [5, 13]. Building upon the success of global construction methods and noting the connection between subspace segmentation and graph construction as described in Section 2.1, we present a novel application of the low-rank representation methodology, relying on our DFC-LRR algorithm to scalably yield a sparse, low-rank graph (SLR-graph). We present a variety of results on large-scale semi-supervised learning visual classification tasks and provide a detailed comparison with leading baseline algorithms.

4.2.1 Benchmarking Data

We adopt the following three large-scale benchmarks:

Columbia Consumer Video (CCV) Content Detection (see footnote 7): Compiled to stimulate research on recognizing highly-diverse visual content in unconstrained videos, this dataset consists of 9317 YouTube videos over 20 semantic categories (e.g., baseball, beach, music performance). Three popular audio/visual features (5000-D SIFT, 5000-D STIP, and 4000-D MFCC) are extracted.

MED12 Multimedia Event Detection: The MED12 video corpus consists of ∼150K multimedia videos, with an average duration of 2 minutes, and is used for detecting 20 specific semantic events.
For each event, 130 to 367 videos are provided as positive examples, and the remainder of the videos are “null” videos that do not correspond to any event. In this work, we keep all positive examples and sample 10K null videos, resulting in a dataset of 13,876 videos. We extract six features from each video, first at sampled frames and then accumulated to obtain video-level representations. The features are either visual (1000-D sparse-SIFT, 1000-D dense-SIFT, 1500-D color-SIFT, 5000-D STIP), audio (2000-D MFCC), or semantic features (2659-D CLASSEME [25]).

NUS-WIDE-Lite Image Tagging: NUS-WIDE is among the largest available image tagging benchmarks, consisting of over 269K crawled images from Flickr that are associated with over 5K user-provided tags. Ground-truth images are manually provided for 81 selected concept tags. We generate a lite version by sampling 20K images. For each image, 128-D wavelet texture, 225-D block-wise LAB-based color moments and 500-D bag of visual words are extracted, normalized and finally concatenated to form a single feature representation for the image.

Footnote 6: http://vision.ucsd.edu/~leekc/ExtYaleDatabase

Footnote 7: http://www.ee.columbia.edu/ln/dvmm/CCV/

Figure 4: Trade-off between computation and accuracy for the SLR-graph on the CCV dataset. (a) Wall time of LRR and DFC-LRR with varying numbers of subproblems. (b) mAP scores for these same experiments.

4.2.2 Graph Construction Algorithms

The three graph construction schemes we evaluate are described below.
Note that we exclude other baselines (e.g., NNLRS [32], LLE graph [28], L1-graph [5]) either due to scalability concerns or because prior work has already demonstrated inferior performance relative to the SPG algorithm defined below [32].

kNN-graph: We construct a nearest neighbor graph by connecting (via undirected edges) each vertex to its $k$ nearest neighbors in terms of $\ell_2$ distance in the specified feature space. Exponential weights are associated with edges, i.e., $w_{ij} = \exp(-d_{ij}^2/\sigma^2)$, where $d_{ij}$ is the distance between $x_i$ and $x_j$ and $\sigma$ is an empirically-tuned parameter [27].

SPG: Cheng et al. [5] proposed a noise-resistant L1-graph which encourages sparse vertex connectedness, motivated by the work on sparse representation [29]. Subsequent work, entitled sparse probability graph (SPG) [13], enforced positive graph weights. Following the approach of [32], we implemented a variant of SPG by solving the following optimization problem for each sample:

$$\min_{w_x} \; \|x - D_x w_x\|_2^2 + \alpha \|w_x\|_1, \quad \text{s.t. } w_x \ge 0, \tag{3}$$

where $x$ is a feature representation of a sample and $D_x$ is the basis matrix for $x$ constructed from its $n_k$ nearest neighbors. We use an open-source tool (see footnote 8) to solve this non-negative Lasso problem.

SLR-graph: Our novel graph construction method contains two steps. First, LRR or DFC-LRR is performed on the entire data set to recover the intrinsic low-rank clustering structure. We then treat the resulting low-rank coefficient matrix $Z$ as an affinity matrix, and for sample $x_i$, the $n_k$ samples with largest affinities to $x_i$ are selected to form a basis matrix and used to solve the SPG optimization described by Problem (3). The resulting non-negative coefficients (typically sparse owing to the $\ell_1$ regularization term on $w_x$ in (3)) are used to define the graph.
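The kNN-graph baseline described above is simple enough to sketch in plain Python. The function name `knn_graph`, the dense list-of-lists weight matrix, and the toy points are assumptions chosen for readability; a large-scale implementation would use sparse storage and an approximate neighbor search.

```python
import math

def knn_graph(points, k, sigma):
    """Symmetric weight matrix with w_ij = exp(-d_ij^2 / sigma^2) whenever
    j is among the k nearest neighbors of i, or i among those of j."""
    n = len(points)

    def sqdist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        # Indices of all other points, sorted by squared l2 distance to point i.
        order = sorted((j for j in range(n) if j != i),
                       key=lambda j: sqdist(points[i], points[j]))
        for j in order[:k]:
            w = math.exp(-sqdist(points[i], points[j]) / sigma ** 2)
            W[i][j] = w
            W[j][i] = w  # undirected edge
    return W

points = [(0.0, 0.0), (1.0, 0.0), (5.0, 0.0)]
W = knn_graph(points, k=1, sigma=1.0)
```

With `k=1` the two nearby points are linked with weight exp(-1), the distant point attaches to its nearest neighbor with weight exp(-16), and the first and last points stay unconnected.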
Footnote 8: http://sparselab.stanford.edu

4.2.3 Experimental Design

For each benchmarking dataset, we first construct graphs by treating sample images/videos as vertices and using the three algorithms outlined in Section 4.2.2 to create (sparse) weighted edges between vertices. For fair comparison, we use the same parameter settings, namely $\alpha = 0.05$ and $n_k = 500$ for both SPG and SLR-graph. Moreover, we set $k = 40$ for kNN-graph after tuning over the range $k = 10$ through $k = 60$. We then use a given graph structure to perform semi-supervised label propagation using an efficient label propagation algorithm [27] that enjoys a closed-form solution and often achieves state-of-the-art performance.

Table 1: Mean average precision (mAP) (0-1) scores for various graph construction methods. DFC-LRR-10 is performed for SLR-Graph. The best mAP score for each feature is highlighted in bold.

(a) CCV

| Feature | kNN-Graph | SPG | SLR-Graph |
|---|---|---|---|
| SIFT | .2631 | .3863 | **.3946** |
| STIP | .2011 | .3036 | **.3227** |
| MFCC | .1420 | **.2129** | .2085 |

(b) MED12

| Feature | kNN-Graph | SPG | SLR-Graph |
|---|---|---|---|
| COLOR-SIFT | .0742 | .1202 | **.1432** |
| DENSE-SIFT | .0928 | .1350 | **.1525** |
| SPARSE-SIFT | .0780 | .1258 | **.1464** |
| MFCC | .0962 | **.1371** | **.1371** |
| CLASSEME | .1302 | .1872 | **.2120** |
| STIP | .0620 | **.0835** | .0803 |

(c) NUS-WIDE-Lite

| kNN-Graph | SPG | SLR-Graph |
|---|---|---|
| .1080 | .1003 | **.1179** |

We perform a separate label propagation for each category in our benchmark, i.e., we run a series of 20 binary classification label propagation experiments for CCV/MED12 and 81 experiments for NUS-WIDE-Lite. For each category, we randomly select half of the samples as training points (and use their ground truth labels for label propagation) and use the remaining half as a test set.
We repeat this process 20 times for each category with different random splits. Finally, we compute Mean Average Precision (mAP) based on the results on the test sets across all runs of label propagation.

4.2.4 Experimental Results

We first performed experiments using the CCV benchmark, the smallest of our datasets, to explore the tradeoff between computation and accuracy when using DFC-LRR as part of our proposed SLR-graph. Figure 4(a) presents the time required to run SLR-graph with LRR versus DFC-LRR with three different numbers of subproblems ($t = 5, 10, 15$), while Figure 4(b) presents the corresponding accuracy results. The figures show that DFC-LRR performs comparably to LRR for smaller values of $t$, and performance gradually degrades for larger $t$. Moreover, DFC-LRR is up to two orders of magnitude faster and achieves superlinear speedups relative to LRR.⁹ Given the scalability issues of LRR on this modest-sized dataset, along with the comparable accuracy of DFC-LRR, we ran SLR-graph exclusively with DFC-LRR ($t = 10$) for our two larger datasets.

Table 1 summarizes the results of our semi-supervised learning experiments using the three graph construction techniques defined in Section 4.2.2. The results show that our proposed SLR-graph approach leads to significant performance gains in terms of mAP across all benchmarking datasets for the vast majority of features. These results demonstrate the benefit of enforcing both low-rankedness and sparsity during graph construction. Moreover, conventional low-rank oriented algorithms, e.g., [32, 16], would be computationally infeasible on our benchmarking datasets, thus highlighting the utility of employing DFC's divide-and-conquer approach to generate a scalable algorithm.
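For readers who want to reproduce the evaluation metric, the following sketch computes average precision for one ranked list and averages it over runs. It assumes the common non-interpolated definition of average precision (mean of the precision values at the ranks of the positive test samples); the text above does not specify which variant was used, and the function names are hypothetical.

```python
import numpy as np


def average_precision(scores, labels):
    """Non-interpolated AP: mean precision at the rank of each positive."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=float)
    # Rank test samples by decreasing propagated score.
    order = np.argsort(-scores, kind="stable")
    hits = labels[order]
    if hits.sum() == 0:
        return 0.0  # convention chosen here: no positives gives AP = 0
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return float((precision_at_k * hits).sum() / hits.sum())


def mean_average_precision(runs):
    """Average AP over (scores, labels) pairs, one per category and split."""
    return float(np.mean([average_precision(s, y) for s, y in runs]))
```

In the protocol described above, `runs` would contain one `(scores, labels)` pair per category and per random half/half split, with scores taken from label propagation on the held-out half.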
5 Conclusion

Our primary goal in this work was to introduce a provably accurate algorithm suitable for large-scale low-rank subspace segmentation. While some contemporaneous work [1] also aims at scalable subspace segmentation, this method offers no guarantee of correctness. In contrast, DFC-LRR provably preserves the theoretical recovery guarantees of the LRR program. Moreover, our divide-and-conquer approach achieves empirical accuracy comparable to state-of-the-art methods while obtaining linear to superlinear computational gains, both on standard subspace segmentation tasks and on novel applications to semi-supervised learning. DFC-LRR also lays the groundwork for scaling up LRR derivatives known to offer improved performance, e.g., LatLRR in the setting of standard subspace segmentation and NNLRS in the graph-based semi-supervised learning setting. The same techniques may prove useful in developing scalable approximations to other convex formulations for subspace segmentation, e.g., [20].

⁹ We restricted the maximum number of internal LRR iterations to 500 to ensure that LRR ran to completion in less than two days.

References

[1] A. Adler, M. Elad, and Y. Hel-Or. Probabilistic subspace clustering via sparse representations. IEEE Signal Process. Lett., 20(1):63–66, 2013.
[2] R. Basri and D. Jacobs. Robust face recognition via sparse representation. IEEE Trans. Pattern Anal. Mach. Intell., 25(3):218–233, 2003.
[3] E. J. Candès, X. Li, Y. Ma, and J. Wright. Robust principal component analysis? Journal of the ACM, 58(3):1–37, 2011.
[4] B. Cheng, G. Liu, J. Wang, Z. Huang, and S. Yan. Multi-task low-rank affinity pursuit for image segmentation. In ICCV, 2011.
[5] B. Cheng, J. Yang, S. Yan, Y. Fu, and T. S. Huang. Learning with l1-graph for image analysis. IEEE Transactions on Image Processing, 19(4):858–866, 2010.
[6] J. Costeira and T. Kanade.
A multibody factorization method for independently moving objects. International Journal of Computer Vision, 29(3), 1998.
[7] E. Elhamifar and R. Vidal. Sparse subspace clustering. In CVPR, 2009.
[8] F. Niu, B. Recht, C. Ré, and S. J. Wright. Hogwild!: A lock-free approach to parallelizing stochastic gradient descent. In NIPS, 2011.
[9] S. Gao, I. W. H. Tsang, L. T. Chia, and P. Zhao. Local features are not lonely - Laplacian sparse coding for image classification. In CVPR, 2010.
[10] C. W. Gear. Multibody grouping from motion images. Int. J. Comput. Vision, 29:133–150, August 1998.
[11] R. Gemulla, E. Nijkamp, P. J. Haas, and Y. Sismanis. Large-scale matrix factorization with distributed stochastic gradient descent. In KDD, 2011.
[12] T. Hastie and P. Simard. Metrics and models for handwritten character recognition. Statistical Science, 13(1):54–65, 1998.
[13] R. He, W.-S. Zheng, B.-G. Hu, and X.-W. Kong. Nonnegative sparse coding for discriminative semi-supervised learning. In CVPR, 2011.
[14] W. Hoeffding. Probability inequalities for sums of bounded random variables. Journal of the American Statistical Association, 58(301):13–30, 1963.
[15] S. Kumar, M. Mohri, and A. Talwalkar. On sampling-based approximate spectral decomposition. In ICML, 2009.
[16] Z. Lin, A. Ganesh, J. Wright, L. Wu, M. Chen, and Y. Ma. Fast convex optimization algorithms for exact recovery of a corrupted low-rank matrix. UIUC Technical Report UILU-ENG-09-2214, 2009.
[17] G. Liu, Z. Lin, and Y. Yu. Robust subspace segmentation by low-rank representation. In ICML, 2010.
[18] G. Liu, H. Xu, and S. Yan. Exact subspace segmentation and outlier detection by low-rank representation. arXiv:1109.1646v2 [cs.IT], 2011.
[19] G. Liu and S. Yan. Latent low-rank representation for subspace segmentation and feature extraction. In ICCV, 2011.
[20] G. Liu and S. Yan. Active subspace: Toward scalable low-rank learning.
Neural Computation, 24:3371–3394, 2012.
[21] L. Mackey, A. Talwalkar, and M. I. Jordan. Divide-and-conquer matrix factorization. In NIPS, 2011.
[22] B. Recht. A simpler approach to matrix completion. arXiv:0910.0651v2 [cs.IT], 2009.
[23] B. Recht and C. Ré. Parallel stochastic gradient algorithms for large-scale matrix completion. In Optimization Online, 2011.
[24] C. Tomasi and T. Kanade. Shape and motion from image streams under orthography. International Journal of Computer Vision, 9(2):137–154, 1992.
[25] L. Torresani, M. Szummer, and A. W. Fitzgibbon. Efficient object category recognition using classemes. In ECCV, 2010.
[26] R. Vidal, Y. Ma, and S. Sastry. Generalized principal component analysis (GPCA). IEEE Trans. Pattern Anal. Mach. Intell., 27(12):1–15, 2005.
[27] F. Wang and C. Zhang. Label propagation through linear neighborhoods. In ICML, 2006.
[28] J. Wang, F. Wang, C. Zhang, H. C. Shen, and L. Quan. Linear neighborhood propagation and its applications. IEEE Trans. Pattern Anal. Mach. Intell., 31(9):1600–1615, 2009.
[29] J. Wright, A. Y. Yang, A. Ganesh, S. S. Sastry, and Y. Ma. Robust face recognition via sparse representation. IEEE Trans. Pattern Anal. Mach. Intell., 31(2):210–227, 2009.
[30] A. Yang, J. Wright, Y. Ma, and S. Sastry. Unsupervised segmentation of natural images via lossy data compression. Computer Vision and Image Understanding, 110(2):212–225, 2008.
[31] H.-F. Yu, C.-J. Hsieh, S. Si, and I. Dhillon. Scalable coordinate descent approaches to parallel matrix factorization for recommender systems. In ICDM, 2012.
[32] L. Zhuang, H. Gao, Z. Lin, Y. Ma, X. Zhang, and N. Yu. Non-negative low rank and sparse graph for semi-supervised learning. In CVPR, 2012.

A Proof of Theorem 3

Our proof of Thm.
3 rests upon three key results: a new deterministic recovery guarantee for LRR-type problems that generalizes the guarantee of [18], a probabilistic estimation guarantee for column projection established in [21], and a probabilistic guarantee of [21] showing that a uniformly chosen submatrix of a $(\mu, r)$-coherent matrix is nearly $(\mu, r)$-coherent. These results are presented in Secs. A.1, A.2, and A.3 respectively. The proof of Thm. 3 follows in Sec. A.4.

In what follows, the unadorned norm $\|\cdot\|$ represents the spectral norm of a matrix. We will also make use of a technical condition, introduced by Liu et al. [18], to ensure that a corrupted data matrix is well-behaved when used as a dictionary:

Definition 4 (Relatively Well-Definedness). A matrix $M = L_0 + S_0$ is $\beta$-RWD if

$$\|\Sigma_M^{-1} V_M^\top V_{L_0}\| \le \frac{1}{\beta \|M\|}.$$

A larger value of $\beta$ corresponds to improved recovery properties.

A.1 Analysis of Low-Rank Representation

Thm. 1 of [18] analyzes LRR recovery under the constraint $O = DZ + S$ when the observation matrix $O$ and the dictionary $D$ are both equal to the input matrix $M$. Our next theorem provides a comparable analysis when the observation matrix is a column submatrix of the dictionary.

Theorem 5. Suppose that $M = L_0 + S_0 \in \mathbb{R}^{m \times n}$ is $\beta$-RWD with rank $r$ and that $L_0$ and $S_0$ have independent column support with $\mathrm{range}(L_0) \cap \mathrm{range}(S_0) = \{0\}$. Let $S_{0,C} \in \mathbb{R}^{m \times l}$ be a column submatrix of $S_0$ supported on $\gamma l$ columns, and suppose that $C$, the corresponding column submatrix of $M$, is $(\frac{\mu}{1-\gamma}, r)$-coherent. Define

$$\gamma^* \triangleq \frac{324 \beta^2}{324 \beta^2 + 49 (11 + 4\beta)^2 \mu r},$$

and let $(\hat{Z}, \hat{S})$ be a solution to the problem

$$\min_{Z, S} \; \|Z\|_* + \lambda \|S\|_{2,1} \quad \text{subject to} \quad C = MZ + S \qquad (4)$$

with $\lambda = 3 / (7 \|M\| \sqrt{\gamma^* l})$. If $\gamma \le \gamma^*$, then the column space of $\hat{Z}$ equals the row space of $L_0$.

The proof of Thm. 5 can be found in Sec. B.
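To get a feel for the constants in Thm. 5, the short sketch below evaluates $\gamma^*$ and the prescribed $\lambda$ for given problem parameters. The function name `lrr_thresholds` and its argument names are hypothetical and chosen for illustration only; the formulas are transcribed directly from the theorem statement.

```python
import math


def lrr_thresholds(beta, mu, r, l, norm_M):
    """Return (gamma_star, lam) as defined in Thm. 5.

    beta   : RWD parameter of M
    mu, r  : coherence and rank parameters
    l      : number of columns in the submatrix C
    norm_M : spectral norm of M
    """
    num = 324.0 * beta ** 2
    gamma_star = num / (num + 49.0 * (11.0 + 4.0 * beta) ** 2 * mu * r)
    lam = 3.0 / (7.0 * norm_M * math.sqrt(gamma_star * l))
    return gamma_star, lam
```

For example, with $\beta = 1$ and $\mu r = 1$ the tolerated outlier fraction is $\gamma^* = 324/11349$, about 0.0285, and it shrinks as $\mu r$ grows, which shows how conservative the worst-case guarantee is.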
A.2 Analysis of Column Projection

The following corollary, due to [21], shows that, with high probability, column projection exactly recovers a $(\mu, r)$-coherent matrix by sampling a number of columns proportional to $\mu r \log n$.

Corollary 6 (Column Projection under Incoherence [21, Cor. 6]). Let $L \in \mathbb{R}^{m \times n}$ be $(\mu, r)$-coherent, and let $L_C \in \mathbb{R}^{m \times l}$ be a matrix of $l$ columns of $L$ sampled uniformly without replacement. If $l \ge c r \mu \log(n) \log(1/\delta)$, where $c$ is a fixed positive constant, then

$$L = L^{proj} \triangleq U_{L_C} U_{L_C}^\top L$$

exactly with probability at least $1 - \delta$.

A.3 Conservation of Incoherence

The following lemma of [21] shows that, with high probability, $L_{0,i}$ captures the full rank of $L_0$ and has coherence not much larger than $\mu$.

Lemma 7 (Conservation of Incoherence [21, Lem. 7]). Let $L \in \mathbb{R}^{m \times n}$ be $(\mu, r)$-coherent, and let $L_C \in \mathbb{R}^{m \times l}$ be a matrix of $l$ columns of $L$ sampled uniformly without replacement. If $l \ge c r \mu \log(n) \log(1/\delta) / \epsilon^2$, where $c$ is a fixed constant larger than 1, then $L_C$ is $(\frac{\mu}{1 - \epsilon/2}, r)$-coherent with probability at least $1 - \delta/n$.

A.4 Proof of DFC-LRR Guarantee

Recall that, under Alg. 1, the input matrix $M$ has been partitioned into column submatrices $\{C_1, \ldots, C_t\}$. Let $\{C_{0,1}, \ldots, C_{0,t}\}$ and $\{S_{0,1}, \ldots, S_{0,t}\}$ be the corresponding partitions of $L_0$ and $S_0$, let $s_i \triangleq \gamma_i l$ be the size of the column support of $S_{0,i}$ for each index $i$, and let $(\hat{Z}_i, \hat{S}_i)$ be a solution to the $i$th DFC-LRR subproblem.

For each index $i$, we further define $A_i$ as the event that $C_{0,i}$ is $(4\mu/(1 - \gamma_i), r)$-coherent, $B_i$ as the event that $s_i \le \gamma^* l$, and $G(Z)$ as the event that the column space of the matrix $Z$ is equal to the row space of $L_0$. Under our choice of $\gamma^*$, Thm. 5 implies that $G(\hat{Z}_i)$ holds when $A_i$ and $B_i$ are both realized. Hence, when $A_i$ and $B_i$ hold for all indices $i$, the column space of $\hat{Z} = [\hat{Z}_1, \ldots, \hat{Z}_t]$ precisely equals the row space of $L_0$, and the median rank of $\{\hat{Z}_1, \ldots, \hat{Z}_t\}$ equals $r$.

Applying Cor. 6 with

$$l \ge c r \mu \log^2(4n/\delta) / (\gamma^* - \gamma)^2 \ge c r \mu \log(n) \log(4/\delta)$$

shows that, given $A_i$ and $B_i$ for all indices $i$, $\hat{Z}^{proj}$ equals $\hat{Z}$ with probability at least $1 - \delta/4$. To establish $G(\hat{Z}^{rp})$ with probability at least $1 - \delta$, it therefore remains to show that

$$P\Big(\bigcap_{i=1}^t (A_i \cap B_i)\Big) = 1 - P\Big(\bigcup_{i=1}^t (A_i^c \cup B_i^c)\Big) \qquad (5)$$
$$\ge 1 - \sum_{i=1}^t \big(P(A_i^c) + P(B_i^c)\big) \qquad (6)$$
$$\ge 1 - 3\delta/4. \qquad (7)$$

Because DFC-LRR partitions columns uniformly at random, the variable $s_i$ has a hypergeometric distribution with $\mathbb{E} s_i = \gamma l$ and therefore satisfies Hoeffding's inequality for the hypergeometric distribution [14, Sec. 6]:

$$P(s_i \ge \mathbb{E} s_i + l\tau) \le \exp(-2 l \tau^2).$$

It follows that

$$P(B_i^c) = P(s_i > \gamma^* l) = P(s_i > \mathbb{E} s_i + l(\gamma^* - \gamma)) \le \exp(-2 l (\gamma^* - \gamma)^2) \le \delta/(4t)$$

by our assumption that $l \ge c r \mu \log^2(4n/\delta) / (\gamma^* - \gamma)^2 \ge \log(4t/\delta) / [2(\gamma^* - \gamma)^2]$.

By Lem. 7 and our choice of

$$l \ge c r \mu \log^2(4n/\delta) / (\gamma^* - \gamma)^2 \ge c r \mu \log(n) \log(4/\delta) / (1 - \gamma),$$

each submatrix $C_{0,i}$ is $(2\mu/(1 - \gamma), r)$-coherent with probability at least $1 - \delta/(4n) \ge 1 - \delta/(4t)$. A second application of Hoeffding's inequality for the hypergeometric further implies that

$$P\Big(\frac{2\mu}{1 - \gamma} > \frac{4\mu}{1 - \gamma_i}\Big) = P(s_i < \mathbb{E} s_i - l(1 - \gamma)) \le \exp(-2 l (1 - \gamma)^2) \le \delta/(4t),$$

since $l \ge c r \mu \log(4n/\delta) / (\gamma^* - \gamma)^2 \ge \log(4t/\delta) / [2(1 - \gamma)^2]$. Hence, $P(A_i^c) \le \delta/(2t)$. Combining our results, we find

$$\sum_{i=1}^t \big(P(A_i^c) + P(B_i^c)\big) \le 3\delta/4$$

as desired.

B Proof of Theorem 5

Let $I_0$ be the column support of $S_{0,C}$, and let $I_0^c$ be its set complement in $\{1, \ldots, l\}$. For any matrix $S \in \mathbb{R}^{a \times b}$ and index set $I \subseteq \{1, \ldots, b\}$, we let $\mathcal{P}_I(S)$ be the orthogonal projection of $S$ onto the space of $a \times b$ matrices with column support $I$, so that

$$(\mathcal{P}_I(S))^{(j)} = S^{(j)} \text{ if } j \in I \quad \text{and} \quad (\mathcal{P}_I(S))^{(j)} = 0 \text{ otherwise}.$$

B.1 Oracle Constraints

Our proof of Thm. 5 will parallel Thm. 1 of [18]. We begin by introducing two oracle constraints that would guarantee the desired outcome if satisfied.

Lemma 8. Under the assumptions of Thm. 5, suppose that $C = MZ + S$ for some matrices $(Z, S)$. If $(Z, S)$ additionally satisfy the oracle constraints

$$P_{L_0^\top} Z = Z \quad \text{and} \quad \mathcal{P}_{I_0}(S) = S \qquad (8)$$

then the column space of $Z$ equals the row space of $L_0$.

Proof. By Eq. 8, the row space of $L_0$ contains the column space of $Z$, so the two will be equal if $\mathrm{rank}(L_0) = \mathrm{rank}(Z)$. This equality indeed holds, since

$$C_0 = \mathcal{P}_{I_0^c}(C) = \mathcal{P}_{I_0^c}(MZ + S) = M \mathcal{P}_{I_0^c}(Z),$$

and therefore

$$\mathrm{rank}(L_0) = \mathrm{rank}(C_0) \le \mathrm{rank}(M \mathcal{P}_{I_0^c}(Z)) \le \mathrm{rank}(\mathcal{P}_{I_0^c}(Z)) \le \mathrm{rank}(Z) \le \mathrm{rank}(L_0).$$

Thus, to prove Thm. 5, it suffices to show that any solution to Eq. 4 also satisfies the oracle constraints of Eq. 8.

B.2 Conditions for Optimality

To this end, we derive sufficient conditions for solving Eq. 4 and moreover show that if any solution to Eq. 4 satisfies the oracle constraints of Eq. 8, then all solutions do.

We will require some additional notation. For a matrix $Z \in \mathbb{R}^{n \times l}$ we define $T(Z) \triangleq \{U_Z X + Y V_Z^\top : X \in \mathbb{R}^{r \times l}, Y \in \mathbb{R}^{n \times r}\}$, $\mathcal{P}_{T(Z)}$ as the orthogonal projection onto the set $T(Z)$, and $\mathcal{P}_{T(Z)^\perp}$ as the orthogonal projection onto the orthogonal complement of $T(Z)$. For a matrix $S$ with column support $I$, we define the column normalized version, $\mathcal{B}(S)$, which satisfies

$$\mathcal{P}_{I^c}(\mathcal{B}(S)) = 0 \quad \text{and} \quad \mathcal{B}(S)^{(j)} \triangleq S^{(j)} / \|S^{(j)}\| \quad \forall j \in I.$$

Theorem 9. Under the assumptions of Thm. 5, suppose that $C = MZ + S$ for some matrices $(Z, S)$.
If there exists a matrix $Q$ satisfying

(a) $\mathcal{P}_{T(Z)}(M^\top Q) = U_Z V_Z^\top$
(b) $\|\mathcal{P}_{T(Z)^\perp}(M^\top Q)\| < 1$
(c) $\mathcal{P}_{I_0}(Q) = \lambda \mathcal{B}(S)$
(d) $\|\mathcal{P}_{I_0^c}(Q)\|_{2,\infty} < \lambda$,

then $(Z, S)$ is a solution to Eq. 4. If, in addition, $\mathcal{P}_{I_0}(Z^+ Z) = 0$, and $(Z, S)$ satisfy the oracle constraints of Eq. 8, then all solutions to Eq. 4 satisfy the oracle constraints of Eq. 8.

Proof. The proof of this theorem is identical to that of [18, Thm. 3], which establishes the same result when the observation $C$ is replaced by $M$.

It remains to construct a feasible pair $(Z, S)$ satisfying the oracle constraints and $\mathcal{P}_{I_0}(Z^+ Z) = 0$ and a dual certificate $Q$ satisfying the conditions of Thm. 9.

B.3 Constructing a Dual Certificate

To this end, we consider the oracle problem:

$$\min_{Z, S} \; \|Z\|_* + \lambda \|S\|_{2,1} \qquad (9)$$
$$\text{subject to} \quad C = MZ + S, \quad P_{L_0^\top} Z = Z, \quad \text{and} \quad \mathcal{P}_{I_0}(S) = S.$$

Let $Y$ be the binary matrix that selects the columns of $C$ from $M$. Then $(P_{L_0^\top} Y, S_{0,i})$ is feasible for this problem, and hence an optimal solution $(Z^*, S^*)$ must exist. By explicitly constructing a dual certificate $Q$, we will show that $(Z^*, S^*)$ also solves the LRR subproblem of Eq. 4.

We will need a variety of lemmas paralleling those developed in [18]. Let $\bar{V} \triangleq V_{Z^*} U_{Z^*}^\top V_{L_0}$. The following lemma was established in [18].

Lemma 10 (Lem. 8 of [18]). $\bar{V} \bar{V}^\top = V_{Z^*} V_{Z^*}^\top$. Moreover, for any $A \in \mathbb{R}^{m \times l}$,

$$\mathcal{P}_{T(Z^*)}(A) = P_{L_0^\top} A + A P_{\bar{V}} - P_{L_0^\top} A P_{\bar{V}}.$$

The next lemma parallels Lem. 9 of [18].

Lemma 11. Let $\hat{H} = \mathcal{B}(S^*)$. Then $V_{L_0} \mathcal{P}_{I_0}(\bar{V}^\top) = \lambda P_{L_0^\top} M^\top \hat{H}$.

Proof. The proof is identical to that of Lem. 9 of [18].

Define $G \triangleq \mathcal{P}_{I_0}(\bar{V}^\top) (\mathcal{P}_{I_0}(\bar{V}^\top))^\top$ and $\psi \triangleq \|G\|$. The next lemma parallels Lem. 10 of [18].

Lemma 12. $\psi \le \lambda^2 \|M\|^2 \gamma l$.

Proof. The proof is identical to that of Lem. 10 of [18], save for the size of $I_0$, which is now bounded by $\gamma l$.
Note that under the assumption $\lambda \le 3 / (7 \|M\| \sqrt{\gamma l})$, we have $\psi \le 1/4$. The next lemma was established in [18].

Lemma 13 (Lem. 11 of [18]). If $\psi < 1$, then $\mathcal{P}_{I_0}((Z^*)^+ Z^*) = \mathcal{P}_{I_0}(P_{\bar{V}}) = 0$.

Lem. 12 of [18] is unchanged in our setting. The next lemma parallels Lem. 13 of [18].

Lemma 14. $\|\mathcal{P}_{I_0^c}(\bar{V}^\top)\|_{2,\infty} \le \sqrt{\frac{\mu r}{(1 - \gamma) l}}$.

Proof. By assumption, $C = MZ^* + S^*$, $\mathrm{rank}(C_0) = r$, and $\mathcal{P}_{I_0^c}(C) = C_0 = \mathcal{P}_{I_0^c}(C_0)$. Hence, $C_0 = \mathcal{P}_{I_0^c}(C_0) = M \mathcal{P}_{I_0^c}(Z^*)$, and thus

$$V_{C_0}^\top = \mathcal{P}_{I_0^c}(V_{C_0}^\top) = \Sigma_{C_0}^{-1} U_{C_0}^\top M U_{Z^*} \Sigma_{Z^*} \mathcal{P}_{I_0^c}(V_{Z^*}^\top).$$

This relationship implies that

$$r = \mathrm{rank}(V_{C_0}^\top) \le \mathrm{rank}(\mathcal{P}_{I_0^c}(V_{Z^*}^\top)) \le \mathrm{rank}(V_{Z^*}^\top) = r$$

and therefore that $\mathcal{P}_{I_0^c}(V_{Z^*}^\top)$ is of full row rank. The remainder of the proof is identical to that of Lem. 13 of [18], save for the coherence factor of $(1 - \gamma) l$ in place of $(1 - \gamma) n$.

With these lemmas in hand, we define

$$Q_1 \triangleq \lambda P_{L_0^\top} M^\top \hat{H} = V_{L_0} \mathcal{P}_{I_0}(\bar{V}^\top)$$

$$Q_2 \triangleq \lambda P_{(L_0^\top)^\perp} \mathcal{P}_{I_0^c}\Big(\Big(I + \sum_{i=1}^\infty (P_{\bar{V}} \mathcal{P}_{I_0} P_{\bar{V}})^i\Big) P_{\bar{V}}\Big) M \hat{H} P_{\bar{V}} = \lambda \mathcal{P}_{I_0^c}\Big(\Big(I + \sum_{i=1}^\infty (P_{\bar{V}} \mathcal{P}_{I_0} P_{\bar{V}})^i\Big) P_{\bar{V}}\Big) P_{(L_0^\top)^\perp} M \hat{H} P_{\bar{V}},$$

where the first relation follows from Lem. 11. Our final theorem parallels Thm. 4 of [18].

Theorem 15. Assume $\psi < 1$, and let

$$Q \triangleq (M^+)^\top (V_{L_0} \bar{V}^\top + \lambda M^\top \hat{H} - Q_1 - Q_2).$$

If

$$\frac{\gamma}{1 - \gamma} < \frac{\beta^2 (1 - \psi)^2}{(3 - \psi + \beta)^2 \mu r}, \qquad \frac{(1 - \psi) \sqrt{\frac{\mu r}{1 - \gamma}}}{\|M\| \sqrt{l} \Big(\beta (1 - \psi) - (1 + \beta) \sqrt{\frac{\gamma}{1 - \gamma} \mu r}\Big)} < \lambda, \qquad \text{and} \qquad \lambda < \frac{1 - \psi}{\|M\| \sqrt{\gamma l} (2 - \psi)},$$

then $Q$ satisfies the conditions in Thm. 9.

Proof. The proof of property S3 requires a small modification. Thm. 4 of [18] establishes that $\mathcal{P}_{I_0}(Q) = \lambda P_M \hat{H}$. To conclude that $\mathcal{P}_{I_0}(Q) = \lambda \hat{H}$, we note that $S^*_i = C - MZ^*$ and that the column space of $C$ contains the column space of $M$ by assumption. Hence, $P_M S^*_i = S^*_i$ and therefore $\mathcal{P}_{I_0}(Q) = \lambda P_M \hat{H} = \lambda \hat{H}$.
The proofs of properties S4 and S5 are unchanged except for the dimensionality factor, which changes from $n$ to $l$.

Finally, Lem. 14 of [18] guarantees that the preconditions of Thm. 15 are met under our assumptions on $\lambda$, $\gamma^*$, and $\gamma$.
