Practical Verification of Decision-Making in Agent-Based Autonomous Systems

We present a verification methodology for analysing the decision-making component in agent-based hybrid systems. Traditionally, hybrid automata have been used both to implement and to verify such systems, but modelling, programming and verification techniques based on hybrid automata scale poorly as the complexity of discrete decision-making increases, making them unattractive in situations where complex logical reasoning is required. In the programming of complex systems it has, therefore, become common to move logical decision-making into a separate, discrete component. However, verification techniques have failed to keep pace with this development. We are exploring agent-based logical components and have developed a model checking technique for such components, which can then be composed with a separate analysis of the continuous part of the hybrid system. Among other things, this allows program model checkers to be used to verify the actual implementation of the decision-making in hybrid autonomous systems.

💡 Research Summary

The paper addresses a pressing challenge in modern autonomous systems: how to verify the decision‑making component when the system is built as a hybrid of continuous control and a separate logical agent. Traditional hybrid automaton models embed both continuous dynamics and discrete decision logic in a single formalism, but this approach quickly becomes intractable as the logical reasoning grows in complexity. The authors propose a compositional verification methodology that isolates the rational agent (typically modeled in a BDI framework) from the continuous plant and applies model‑checking techniques directly to the agent’s program.

The methodology consists of five steps. First, the agent’s behavior is implemented and its input/output interface to the environment is formally described. Second, an over‑approximation of all possible perceptual inputs (sensor readings) is constructed; the agent is then model‑checked against a property φ under this coarse abstraction. Third, any environmental hypotheses—expressed as logical statements about how perceptions may arise—are incorporated to strengthen the verification results. Fourth, the process iterates whenever the agent or the environmental assumptions are refined. Finally, for multi‑agent systems, the authors employ assume‑guarantee reasoning: each agent is verified in isolation, and the individual results are combined deductively to infer system‑wide safety and liveness guarantees.
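The second and third steps can be illustrated with a minimal sketch. It is not the authors' tooling: the toy agent, the percept names `obstacle` and `victim`, and both properties are hypothetical, and a plain breadth-first search stands in for a real program model checker. The agent is first explored under every possible combination of percepts, then again under an environmental hypothesis that rules some combinations out.

```python
from collections import deque
from itertools import combinations

# Hypothetical percepts the toy agent can receive from its sensors.
PERCEPTS = ("obstacle", "victim")


def percept_sets(hypothesis=lambda p: True):
    """All subsets of PERCEPTS allowed by the environmental hypothesis.

    With the default hypothesis this is the unconstrained over-approximation.
    """
    subsets = (
        frozenset(c)
        for r in range(len(PERCEPTS) + 1)
        for c in combinations(PERCEPTS, r)
    )
    return [s for s in subsets if hypothesis(s)]


def agent_step(beliefs, percepts):
    """Toy agent: beliefs mirror the latest percepts, then one action is chosen."""
    beliefs = frozenset(percepts)
    if "victim" in beliefs:
        action = "report"
    elif "obstacle" in beliefs:
        action = "stop"
    else:
        action = "move"
    return beliefs, action


def check(prop, hypothesis=lambda p: True):
    """Explore every reachable (beliefs, action) state.

    Returns a state that violates prop, or None if prop holds everywhere.
    """
    inputs = percept_sets(hypothesis)
    init = (frozenset(), "idle")
    seen, queue = {init}, deque([init])
    while queue:
        state = queue.popleft()
        if not prop(state):
            return state
        for p in inputs:
            nxt = agent_step(state[0], p)
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return None


def never_move_into_obstacle(state):
    beliefs, action = state
    return not ("obstacle" in beliefs and action == "move")


def never_report_near_obstacle(state):
    beliefs, action = state
    return not ("obstacle" in beliefs and action == "report")


def exclusive(percepts):
    """Hypothesis: an obstacle and a victim are never perceived together."""
    return not {"obstacle", "victim"} <= percepts


print(check(never_move_into_obstacle))               # holds with no hypothesis
print(check(never_report_near_obstacle))             # counterexample state
print(check(never_report_near_obstacle, exclusive))  # holds under the hypothesis
```

The first property holds even under the coarse abstraction, so it needs no assumption about the environment. The second fails only when both percepts arrive together, which is exactly the situation the hypothesis excludes; the proof of the second property is therefore only as trustworthy as that hypothesis.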

A key technical contribution is the introduction of the operator B_agent, which allows properties to be phrased in terms of the agent’s beliefs rather than the global state. For example, instead of verifying □¬bad (nothing bad ever happens), the method verifies □B_agent¬bad (the agent always believes that nothing bad is happening). This is a weaker claim about the world, since the agent's beliefs may be mistaken, but it lets verification focus on the finite set of internal decisions and sidestep the infinite state space of the physical world. The approach also treats the continuous part as a black box, assuming that separate analysis (e.g., control‑theoretic proofs) provides the necessary guarantees about the outcomes of actions.
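How a belief-based property is read matters, so a small sketch may help. It assumes, as is common in BDI languages but not stated in this summary, that "the agent believes φ" means φ is present in the agent's belief base. The literal names `bad` and `not_bad` and the three-step trace are hypothetical, and the check covers one finite trace only, whereas a model checker would examine every run.

```python
def believes(belief_base, literal):
    """B_agent(literal): assumed here to mean the literal is in the belief base."""
    return literal in belief_base


def always(trace, predicate):
    """The box operator, restricted to a single finite trace of belief bases."""
    return all(predicate(beliefs) for beliefs in trace)


# A hypothetical run in which the agent has no opinion at the second step.
trace = [frozenset({"not_bad"}), frozenset(), frozenset({"not_bad"})]

# Always believes "not bad": fails at the second step.
always_believes_not_bad = always(trace, lambda b: believes(b, "not_bad"))

# Never believes "bad": holds, because "bad" is never in the belief base.
never_believes_bad = always(trace, lambda b: not believes(b, "bad"))

print(always_believes_not_bad, never_believes_bad)
```

The two formulas differ whenever the agent holds no belief either way. Neither says anything about the real world on its own; linking belief to reality is left to the separate analysis of sensors and the continuous plant.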

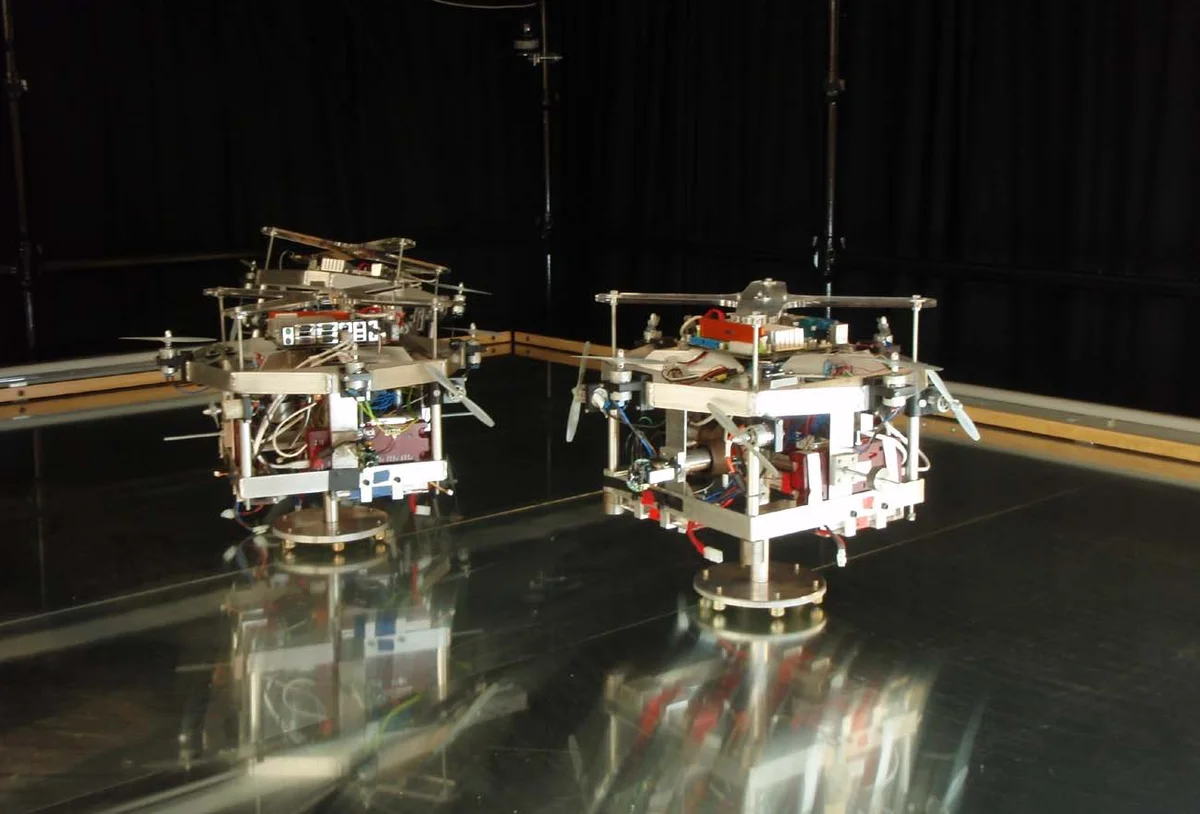

The paper demonstrates the methodology on three diverse case studies. In an urban search‑and‑rescue scenario, a robot’s high‑level planning agent is verified to avoid hazardous zones and to guarantee eventual discovery of victims, regardless of the myriad possible sensor readings. In a low‑Earth‑orbit satellite example, the agent’s mission‑level decisions about orbit maintenance and power management are shown to satisfy safety constraints under varying solar flux and communication delays. In an adaptive cruise‑control case, the agent’s speed‑selection logic is proved to maintain safe following distances for all admissible combinations of lead‑vehicle speed and distance, using an “abstraction engine” to hide the underlying vehicle dynamics. These examples illustrate how the method can handle both single‑agent and multi‑agent contexts, and how environmental hypotheses can be explicitly encoded to tighten the verification.
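The role of an abstraction engine in the cruise-control example can be sketched as a function that turns continuous readings into the discrete percepts the agent reasons over. Everything concrete here is an assumption made for illustration: the percept names, the action names, and the two-second time-gap threshold are not taken from the paper.

```python
def abstract_percepts(gap_m, own_speed_mps, time_gap_s=2.0):
    """Map continuous sensor readings to a discrete percept set.

    The required gap is own speed times a time gap (a hypothetical
    two-second rule); the agent only ever sees the resulting label.
    """
    required_gap_m = own_speed_mps * time_gap_s
    label = "too_close" if gap_m < required_gap_m else "safe_gap"
    return frozenset({label})


def choose_action(percepts):
    """Toy decision logic over discrete percepts only."""
    return "decelerate" if "too_close" in percepts else "hold_speed"


# 10 m behind the lead vehicle at 20 m/s: required gap is 40 m.
print(choose_action(abstract_percepts(10.0, 20.0)))
# 50 m behind at the same speed.
print(choose_action(abstract_percepts(50.0, 20.0)))
```

Because the agent sees only two possible percept sets, its decision logic can be checked exhaustively, while the question of whether `too_close` faithfully reflects the physical gap is left to the analysis of the abstraction and the vehicle dynamics.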

Compared with prior work on hybrid automata verification, this approach reverses the focus: it verifies the discrete reasoning component with a program model checker (e.g., Java PathFinder), which runs on the agent's actual code, while leaving the continuous dynamics to be handled by traditional control analysis. This separation yields several benefits: (1) the decision‑making code can be checked early, catching implementation bugs before integration; (2) the verification scales better because the state space is limited to the agent’s belief‑goal‑intention space; (3) assumptions about the physical world are made explicit, improving traceability for certification.

The authors acknowledge limitations. Exhaustively exploring all perceptual combinations can still lead to state‑space explosion, especially in highly noisy environments. The correctness of the verification hinges on the fidelity of the environmental hypotheses; inaccurate assumptions may render the guarantees meaningless in practice. Moreover, the approach does not provide quantitative guarantees about the continuous plant itself—it relies on separate proofs that the plant behaves as expected when the agent issues commands.

In summary, the paper presents a practical, compositional verification framework that leverages model checking of rational agents to ensure safe decision‑making in hybrid autonomous systems. By treating the agent’s beliefs as the primary verification target and by making environmental assumptions explicit, the methodology bridges the gap between high‑level logical reasoning and low‑level control, offering a scalable path toward certifiable autonomous technologies.
