Accounting for Secondary Uncertainty: Efficient Computation of Portfolio Risk Measures on Multi and Many Core Architectures

Aggregate Risk Analysis is a computationally intensive and a data intensive problem, thereby making the application of high-performance computing techniques interesting. In this paper, the design and implementation of a parallel Aggregate Risk Analysis algorithm on multi-core CPU and many-core GPU platforms are explored. The efficient computation of key risk measures, including Probable Maximum Loss (PML) and the Tail Value-at-Risk (TVaR) in the presence of both primary and secondary uncertainty for a portfolio of property catastrophe insurance treaties is considered. Primary Uncertainty is the the uncertainty associated with whether a catastrophe event occurs or not in a simulated year, while Secondary Uncertainty is the uncertainty in the amount of loss when the event occurs. A number of statistical algorithms are investigated for computing secondary uncertainty. Numerous challenges such as loading large data onto hardware with limited memory and organising it are addressed. The results obtained from experimental studies are encouraging. Consider for example, an aggregate risk analysis involving 800,000 trials, with 1,000 catastrophic events per trial, a million locations, and a complex contract structure taking into account secondary uncertainty. The analysis can be performed in just 41 seconds on a GPU, that is 24x faster than the sequential counterpart on a fast multi-core CPU. The results indicate that GPUs can be used to efficiently accelerate aggregate risk analysis even in the presence of secondary uncertainty.

💡 Research Summary

The paper addresses the computational challenges of performing aggregate risk analysis (ARA) for large reinsurance portfolios when both primary and secondary uncertainties must be modeled. Primary uncertainty refers to the stochastic occurrence of catastrophic events, while secondary uncertainty captures the variability of loss amounts conditional on an event’s occurrence. Traditional ARA implementations typically ignore secondary uncertainty, substituting mean loss values for full loss distributions, which can lead to inaccurate risk metrics such as Probable Maximum Loss (PML) and Tail Value‑at‑Risk (TVaR).

The authors propose a comprehensive algorithm that integrates secondary uncertainty directly into the Monte‑Carlo simulation pipeline. Input data consist of three tables: (1) the Year Event Table (YET) containing pre‑simulated event sequences, timestamps, and a program‑event specific uniform random number z_prog,E; (2) the Extended Event Loss Table (XELT) that stores, for each event, the mean loss µₗ, independent standard deviation σ_I, correlated standard deviation σ_C, maximum expected loss max l, and an event‑specific uniform random number z_E; and (3) the Portfolio definition (PF) which groups programs, layers, and contractual terms (occurrence retention/limit and aggregate retention/limit).

The core of the secondary‑uncertainty computation follows a five‑step statistical transformation: (i) combine σ_I and σ_C into a raw standard deviation σ = σ_I + σ_C; (ii) map the uniform random numbers z_prog,E and z_E to standard normal variates v_prog,E and v_E using the inverse Gaussian CDF; (iii) compute a linear combination LC = v_prog,E·(σ_I/σ) + v_E·(σ_C/σ); (iv) generate a mixed normal variate v = LC·√(σ_I²+σ_C²); and (v) transform v back to a uniform variable z = Φ(v). The resulting uniform variable is then used to sample from a Beta‑Normal mixture that represents the full loss distribution for the event. This procedure is executed for every event in every trial, making it computationally intensive.

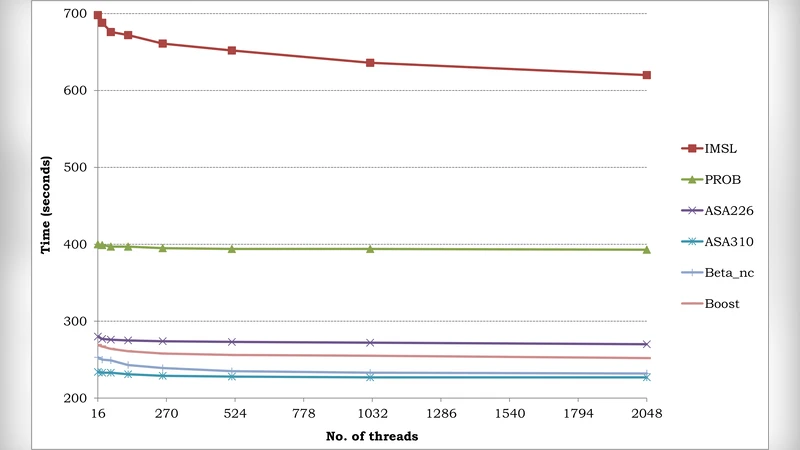

To achieve high performance, the algorithm is parallelized on both multi‑core CPUs and many‑core GPUs. Data structures are flattened into contiguous memory blocks to maximize bandwidth utilization. On the GPU, the authors exploit CUDA’s global and shared memory, assigning each thread to a specific trial‑layer combination while keeping the inner event loop sequential to avoid race conditions. They evaluate several statistical libraries, ultimately selecting cuRAND for GPU‑based normal and beta sampling, and Intel MKL for CPU vectorized math. Load balancing is addressed by ensuring that each thread performs a similar amount of work despite the variable cost of the iterative statistical calculations.

Experimental evaluation uses a realistic scenario: 800 000 trials, 1 000 events per trial, one million exposure locations, and a complex contract structure that includes Per‑Occurrence XL, Catastrophe XL, and Aggregate XL layers. On an NVIDIA Tesla K20X GPU, the full simulation completes in 41 seconds, delivering a 24× speed‑up over a sequential implementation on a high‑end 16‑core Intel Xeon CPU. Memory consumption stays within the GPU’s 12 GB limit, demonstrating that even data‑intensive workloads can be accommodated. The authors also compare risk metrics obtained with and without secondary uncertainty, showing that neglecting loss variability can substantially under‑estimate PML and TVaR.

In conclusion, the paper presents a viable high‑performance framework for ARA that faithfully incorporates secondary uncertainty, enabling near‑real‑time risk assessment for large reinsurance portfolios. The work opens avenues for further scaling via multi‑GPU clusters, dynamic contract updates, and integration with machine‑learning‑based loss‑distribution models.

Comments & Academic Discussion

Loading comments...

Leave a Comment