Likelihood method and Fisher information in construction of physical models

The subjects of the paper are the likelihood method (LM) and the expected Fisher information (FI), considered from the point of view of constructing physical models that originate in the statistical description of phenomena. The master equation case and the structural information principle are derived. Then, a phenomenological description of information transfer is presented. The extreme physical information (EPI) method is reviewed. In passing, a statistical interpretation of the amplitude of the system is given. The formalism developed in this paper could also be applied in quantum information processing and quantum game theory.

💡 Research Summary

The paper presents a unified information‑theoretic framework for constructing physical models, built on two statistical tools: the likelihood method (LM) and the expected Fisher information (FI). It begins by recalling that the likelihood function quantifies how well a set of model parameters explains the observed data, and that maximizing this function (maximum‑likelihood estimation) gives estimators that, under standard regularity conditions, are asymptotically efficient in the presence of measurement noise. By importing this approach into physics, the authors argue that experimental data can be matched to theoretical models in a statistically optimal way; traditional least squares is recovered as the special case of independent Gaussian noise.
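To make the link between maximum likelihood and least squares concrete, here is a minimal Python sketch (not taken from the paper) that maximizes the log‑likelihood of a single location parameter on a grid. The data values, noise scales, and the helper names `gaussian_loglik`, `laplace_loglik` and `argmax_on_grid` are hypothetical. Under Gaussian noise the ML estimate coincides with the least‑squares answer (the sample mean); under Laplace noise it becomes the sample median, which is far less sensitive to the outlier in the data.

```python
import math

# Hypothetical repeated measurements of one quantity (made-up numbers; the last is an outlier)
data = [1.0, 1.2, 0.9, 1.1, 3.0]

def gaussian_loglik(theta, xs, sigma=1.0):
    """Log-likelihood of theta for i.i.d. Gaussian noise with known sigma."""
    return sum(-0.5 * ((x - theta) / sigma) ** 2
               - math.log(sigma * math.sqrt(2 * math.pi)) for x in xs)

def laplace_loglik(theta, xs, b=1.0):
    """Log-likelihood of theta for i.i.d. Laplace noise with known scale b."""
    return sum(-abs(x - theta) / b - math.log(2 * b) for x in xs)

def argmax_on_grid(f, lo, hi, steps=40_000):
    """Crude grid search for the maximizer of a one-parameter function."""
    best_t, best_v = lo, f(lo)
    for k in range(1, steps + 1):
        t = lo + (hi - lo) * k / steps
        v = f(t)
        if v > best_v:
            best_t, best_v = t, v
    return best_t

theta_gauss = argmax_on_grid(lambda t: gaussian_loglik(t, data), 0.0, 4.0)
theta_laplace = argmax_on_grid(lambda t: laplace_loglik(t, data), 0.0, 4.0)
print(f"Gaussian ML estimate: {theta_gauss:.3f} (sample mean, i.e. least squares)")
print(f"Laplace ML estimate:  {theta_laplace:.3f} (sample median)")
```

The grid search only keeps the sketch dependency‑free; a realistic fit would use a numerical optimizer and several parameters.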

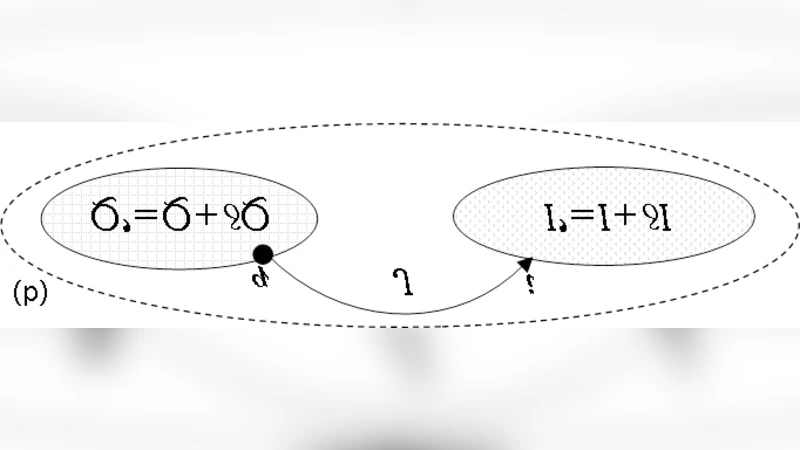

FI is then introduced as a measure of the sensitivity of the probability distribution to changes in the underlying parameters. Through the Cramér‑Rao bound, the inverse of FI sets the lower limit on the variance of any unbiased estimator, thereby defining the ultimate precision achievable in parameter estimation. The authors decompose FI into two conceptual components: structural information, which encodes the intrinsic constraints of the system, and transfer information, which captures the contribution of external interactions or measurements. This decomposition allows a clear separation between the internal “shape” of a physical system and the information that flows across its boundaries.
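The Cramér‑Rao statement can be checked numerically. The sketch below, an illustration rather than the authors' code, evaluates the expected Fisher information I(θ) = E[(∂ ln p/∂θ)²] for a Gaussian location model by direct quadrature and compares it with the exact value 1/σ²; the lower bound on the variance of an unbiased estimator built from n independent samples is then 1/(n I(θ)). The parameter values and the function names are assumptions chosen for illustration.

```python
import math

def gaussian_pdf(x, theta, sigma):
    return math.exp(-0.5 * ((x - theta) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def fisher_information(pdf, theta, lo, hi, n_grid=20_000, h=1e-5):
    """I(theta) = E[(d ln p / d theta)^2], using a midpoint rule in x
    and a central difference in theta for the score."""
    dx = (hi - lo) / n_grid
    total = 0.0
    for k in range(n_grid):
        x = lo + (k + 0.5) * dx
        p = pdf(x, theta)
        score = (math.log(pdf(x, theta + h)) - math.log(pdf(x, theta - h))) / (2 * h)
        total += score * score * p * dx
    return total

sigma, theta, n = 2.0, 0.5, 10        # hypothetical noise level, true value, sample size
info = fisher_information(lambda x, t: gaussian_pdf(x, t, sigma), theta, -20.0, 20.0)
crb = 1.0 / (n * info)                # Cramér-Rao bound on Var(theta_hat) for n i.i.d. draws
print(f"I(theta) = {info:.6f}  (exact 1/sigma^2 = {1 / sigma**2})")
print(f"CRB      = {crb:.6f}  (exact sigma^2/n = {sigma**2 / n})")
```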

A central technical development is the derivation of a master equation that expresses the conservation of probability flow for a density ρ(x,t). By applying a variational principle to FI, the authors obtain a “structural information principle”: the physically realized distribution extremizes the total FI subject to the constraints imposed by the system’s structural information. This principle leads naturally to an extremal functional, the Extreme Physical Information (EPI) functional L = I − J, where I denotes the total FI and J represents the structural information term. Requiring L to be stationary yields Euler‑Lagrange equations that are shown to reproduce familiar physical laws when appropriate statistical models are chosen.
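The paper's master equation concerns a density ρ(x,t); as a simpler stand‑in, the sketch below integrates a generic discrete‑state (Pauli‑type) master equation, dp_i/dt = Σ_j (W_ij p_j − W_ji p_i), and shows that total probability is conserved while the distribution relaxes to a stationary state. The three‑state rate matrix `W` and the helpers `master_rhs` and `evolve` are hypothetical and are not taken from the paper.

```python
# Hypothetical jump rates: W[i][j] is the rate of transitions j -> i (diagonal unused)
W = [
    [0.0, 1.0, 0.5],
    [2.0, 0.0, 1.0],
    [0.5, 1.0, 0.0],
]

def master_rhs(p, W):
    """dp_i/dt = sum_{j != i} (W_ij p_j - W_ji p_i): probability gain minus loss for state i."""
    n = len(p)
    return [sum(W[i][j] * p[j] - W[j][i] * p[i] for j in range(n) if j != i)
            for i in range(n)]

def evolve(p0, W, dt=1e-3, steps=20_000):
    """Explicit Euler integration; adequate for these small, non-stiff rates."""
    p = list(p0)
    for _ in range(steps):
        dp = master_rhs(p, W)
        p = [pi + dt * dpi for pi, dpi in zip(p, dp)]
    return p

p_final = evolve([1.0, 0.0, 0.0], W)
print("final distribution:", [round(v, 4) for v in p_final])
print("total probability: ", sum(p_final))
```

Because every gain term for one state is a loss term for another, the right‑hand sides sum to zero, which is the discrete analogue of a continuity equation for probability.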

The EPI method proceeds in two stages. First, a statistical model is built that relates observable quantities to latent variables; this model may involve complex probability amplitudes ψ(x). Second, the model is constrained by FI‑based variational conditions. Performing the variation yields differential equations identical in form to the Schrödinger equation for non‑relativistic particles, Maxwell’s equations for electromagnetic fields, and even the Einstein field equations for gravitation, which suggests that these fundamental laws can be derived from an information‑extremization principle instead of being postulated directly.
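As a worked illustration of how a stationarity condition on L = I − J can produce a familiar wave equation, consider a one‑dimensional, stationary case with a real amplitude q(x) and p(x) = q²(x). The location Fisher information is then I = 4∫(dq/dx)² dx, because p′²/p = (2qq′)²/q² = 4q′². The structural term J below is an assumption made for this sketch, chosen so that the result is the time‑independent Schrödinger equation; the paper's own J may differ.

```latex
\begin{align}
  I &= 4\int \Big(\frac{dq}{dx}\Big)^2 dx, \qquad
  J = \frac{8m}{\hbar^2}\int \big(E - V(x)\big)\, q(x)^2\, dx \quad \text{(assumed form)},\\
  L &= I - J = \int \mathcal{L}\, dx, \qquad
  \mathcal{L} = 4\,q'^2 - \frac{8m}{\hbar^2}\,\big(E - V\big)\, q^2,\\
  0 &= \frac{\partial \mathcal{L}}{\partial q} - \frac{d}{dx}\frac{\partial \mathcal{L}}{\partial q'}
     = -\frac{16m}{\hbar^2}\,\big(E - V\big)\, q - 8\, q'',\\
  &\Longrightarrow\quad -\frac{\hbar^2}{2m}\, q''(x) + V(x)\, q(x) = E\, q(x).
\end{align}
```

Normalization of q and the boundary conditions then select the allowed energies E; neither is part of this sketch.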

A noteworthy contribution is the statistical interpretation of the system’s amplitude. By treating ψ as a complex probability amplitude whose modulus squared |ψ|² equals the probability density, the authors bridge the formalism with quantum mechanics. This interpretation opens the way to applying FI to quantum information tasks such as state tomography, entanglement quantification, and channel capacity estimation, where the inverse of FI bounds the precision attainable under given experimental constraints.
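The amplitude picture has a simple quantitative side: for a real amplitude q with p = q², the location Fisher information can be written either as ∫ p′²/p dx or as 4∫ q′² dx, since p′²/p = (2qq′)²/q² = 4q′². The sketch below checks this numerically for a Gaussian, where both forms should equal 1/σ². It is an illustration under stated assumptions (one dimension, real amplitude, hypothetical σ and integration window), not code from the paper.

```python
import math

sigma = 1.5   # hypothetical width of the density

def p(x):
    """Gaussian probability density with mean 0 and standard deviation sigma."""
    return math.exp(-0.5 * (x / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))

def q(x):
    """Real amplitude q = sqrt(p), so that q^2 reproduces the density."""
    return math.sqrt(p(x))

def deriv(f, x, h=1e-5):
    """Central finite difference."""
    return (f(x + h) - f(x - h)) / (2 * h)

def integrate(f, lo, hi, n=20_000):
    """Midpoint rule."""
    dx = (hi - lo) / n
    return sum(f(lo + (k + 0.5) * dx) for k in range(n)) * dx

I_density = integrate(lambda x: deriv(p, x) ** 2 / p(x), -15.0, 15.0)
I_amplitude = integrate(lambda x: 4.0 * deriv(q, x) ** 2, -15.0, 15.0)
print(f"from p: {I_density:.6f}, from q: {I_amplitude:.6f}, exact: {1 / sigma**2:.6f}")
```

Writing FI through the amplitude is what lets it be combined with wave‑function‑like objects in variational principles.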

The paper also develops a phenomenological description of information transfer. When information flows into or out of a system, the change in FI is identified as the “information transfer” quantity, which the authors relate to entropy production in non‑equilibrium thermodynamics. This connection provides a quantitative tool for analyzing irreversible processes, linking thermodynamic irreversibility with the degradation of Fisher information.
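One standard, concrete instance of Fisher information degrading during an irreversible process is free diffusion: under ∂p/∂t = D ∂²p/∂x², the location Fisher information I(t) decreases while the Shannon entropy H(t) grows at the rate dH/dt = D·I(t) (de Bruijn's identity). The sketch below checks both statements numerically for a spreading Gaussian. It illustrates a textbook result and is not the paper's phenomenological transfer formula; D, s0 and the helper names are hypothetical.

```python
import math

D, s0 = 0.5, 1.0   # hypothetical diffusion constant and initial variance

def density(x, t):
    """Solution of dp/dt = D d^2p/dx^2 from a Gaussian start: variance grows as s0 + 2 D t."""
    s = s0 + 2 * D * t
    return math.exp(-x * x / (2 * s)) / math.sqrt(2 * math.pi * s)

def integrate(f, lo=-20.0, hi=20.0, n=20_000):
    """Midpoint rule on a window wide enough for the densities used here."""
    dx = (hi - lo) / n
    return sum(f(lo + (k + 0.5) * dx) for k in range(n)) * dx

def fisher(t, h=1e-5):
    """Location Fisher information I(t) = integral of (dp/dx)^2 / p."""
    def integrand(x):
        dp = (density(x + h, t) - density(x - h, t)) / (2 * h)
        return dp * dp / density(x, t)
    return integrate(integrand)

def entropy(t):
    """Differential Shannon entropy H(t) = -integral of p ln p."""
    return integrate(lambda x: -density(x, t) * math.log(density(x, t)))

times = [0.0, 1.0, 2.0, 4.0]
infos = [fisher(t) for t in times]   # should follow 1 / (s0 + 2 D t)
t, dt = 1.0, 1e-3
dH_dt = (entropy(t + dt) - entropy(t - dt)) / (2 * dt)
print("I(t):", [round(v, 4) for v in infos])
print(f"dH/dt = {dH_dt:.6f},  D * I(t) = {D * fisher(t):.6f}")
```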

Finally, the authors point to applications of the framework in quantum information processing and quantum game theory. In quantum computing, FI‑guided estimation of qubit states could help reduce estimation errors, while in quantum games optimal strategies might be characterized as solutions of an FI‑based variational problem, suggesting an information‑theoretic reinterpretation of Nash equilibria in quantum settings.
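A minimal example of FI‑guided qubit estimation: encode an unknown phase θ in the state (|0⟩ + e^{iθ}|1⟩)/√2 and measure in the X basis. The sketch below computes the classical Fisher information of the two outcome probabilities, which equals 1 for 0 < θ < π and thus matches the quantum Fisher information of this pure state, so n repeated shots give a Cramér‑Rao bound of 1/n on the variance of an unbiased phase estimate. The state, the measurement and the shot count are illustrative assumptions, not taken from the paper.

```python
import math

def outcome_probs(theta):
    """Born-rule probabilities for (|0> + e^{i theta}|1>)/sqrt(2) measured in the X basis {|+>, |->}."""
    return [(1 + math.cos(theta)) / 2, (1 - math.cos(theta)) / 2]

def outcome_prob_derivs(theta):
    """Derivatives of the two outcome probabilities with respect to theta."""
    return [-math.sin(theta) / 2, math.sin(theta) / 2]

def classical_fisher(theta):
    """F(theta) = sum_k (dp_k/dtheta)^2 / p_k over outcomes with p_k > 0."""
    return sum(d * d / p
               for p, d in zip(outcome_probs(theta), outcome_prob_derivs(theta))
               if p > 0)

thetas = [0.3, 1.0, 2.0]
fis = [classical_fisher(th) for th in thetas]
n_shots = 1000                        # hypothetical number of repetitions
crb = 1.0 / (n_shots * fis[0])        # Cramér-Rao bound on Var(theta_hat)
print("Fisher information:", [round(f, 6) for f in fis])
print("CRB for 1000 shots:", crb)
```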

In summary, the paper elevates the likelihood method and Fisher information from statistical instruments to foundational principles for physics. By unifying variational calculus, information theory, and statistical inference, it provides a systematic route to derive and understand physical laws, and opens new avenues for research in quantum information science, complex systems, and non‑equilibrium thermodynamics.
