Structured Convex Optimization under Submodular Constraints

A number of discrete and continuous optimization problems in machine learning are related to convex minimization problems under submodular constraints. In this paper, we deal with a submodular function with a directed graph structure, and we show tha…

Authors: Kiyohito Nagano, Yoshinobu Kawahara

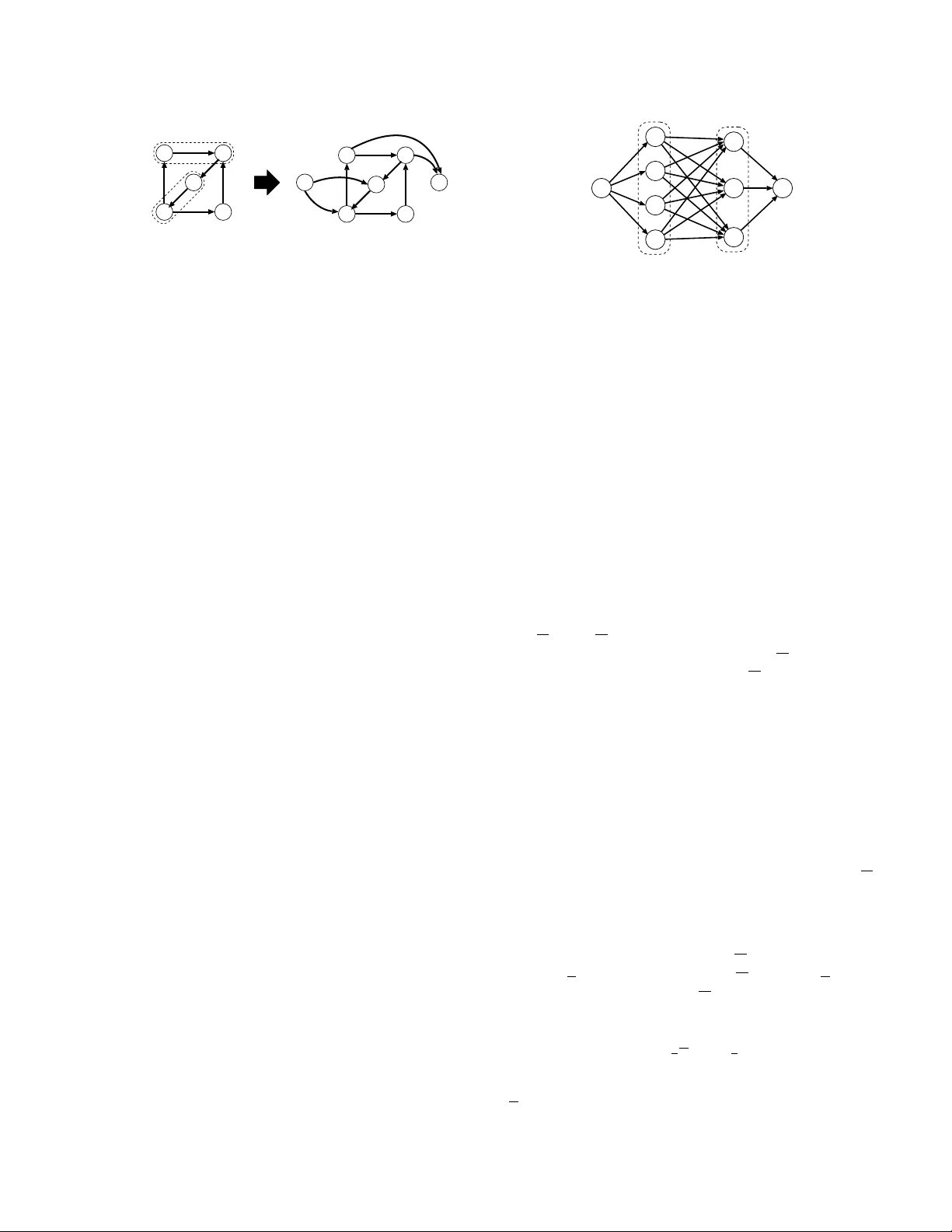

Kiyohito Nagano, Dept. of Complex and Intelligent Systems, Future University Hakodate, k nagano@fun.ac.jp

Yoshinobu Kawahara, The Institute of Scientific and Industrial Research, Osaka University, kawahara@ar.sanken.osaka-u.ac.jp

Abstract

A number of discrete and continuous optimization problems in machine learning are related to convex minimization problems under submodular constraints. In this paper, we deal with a submodular function with a directed graph structure, and we show that a wide range of convex optimization problems under submodular constraints can be solved much more efficiently than by general submodular optimization methods, via a reduction to a maximum flow problem. Furthermore, we give some applications, including sparse optimization methods, in which the proposed methods are effective. Additionally, we evaluate the performance of the proposed method through computational experiments.

1 Introduction

A submodular function is a fundamental tool in discrete optimization, machine learning and other related fields and has been recognized as an interesting subject of research. A submodular function is known to be a discrete counterpart of a convex function (Lovász [17]). In particular, the submodular function minimization problem is an elemental problem, and many combinatorial problems arising in machine learning, such as clustering [25, 24], image segmentation [31] and feature selection [2], can be reduced to this problem.

For example, Narasimhan, Jojic and Bilmes [25] showed that clustering problems with some specific natural criteria, such as the minimum description length, can be solved as the problem of minimizing a symmetric submodular function.
Also, Bach [2] recently showed that many of the known structured-sparsity inducing norms can be interpreted as continuous relaxations, called the Lovász extensions, of submodular functions. Based on this correspondence, proximal operators, which are required for learning with structured regularization, can be computed as minimum-norm-point problems on submodular polyhedra.

Similarly to convex functions, submodular functions can be exactly minimized in polynomial time. The fastest known algorithm of Orlin [27] runs in $O(n^5\,\mathrm{EO} + n^6)$ time, where $n$ is the size of the ground set and EO is the time for function evaluation. On the other hand, the minimum norm point algorithm (Fujishige [9]) is usually much faster in practice [10], although it has worse time complexity. However, the existing algorithms for the general submodular minimization problem, even including the minimum norm point algorithm, do not scale sufficiently to large problems from a practical point of view.

Meanwhile, it is known that submodular function minimization problems can be solved more efficiently when the submodular functions have particular structure. For symmetric submodular functions, Queyranne [29] gave a minimization algorithm that runs in $O(n^3\,\mathrm{EO})$ time. Also recently, Stobbe and Krause [31] introduced a decomposable submodular function and developed the Smoothed Lovász Gradient (SLG) algorithm, which is based on the smoothing technique of Nesterov [26] and the discrete convexity of a submodular function. In addition, Jegelka et al. [14] introduced a generalized graph cut function, which generalizes a large subfamily of submodular functions, and proposed an efficient network flow based minimization algorithm.

In this paper, we consider a separable convex optimization problem over a base polyhedron, which is a discrete structure determined by a submodular function.
Separable convex optimization under submodular constraints is related to various discrete and continuous optimization problems, including network analysis methods [23], sparse learning methods [2], and approximation algorithms for NP-hard combinatorial optimization problems [13]. For a general submodular function, separable convex optimization problems can be solved within the same running time as submodular function minimization [6, 21], that is, $O(n^5\,\mathrm{EO} + n^6)$ time. Thus, such algorithms are impractical when the size of the ground set is large. Even though the minimum norm point algorithm [9] and its weighted version [22] would solve such quadratic minimization problems much faster, they do not have good time complexity bounds and still do not scale to large problems.

We show that if a submodular function has a specific graph structure, the convex optimization problem can be solved efficiently with the aid of a general framework of the decomposition algorithm [9, 22] and network flow algorithms [11, 12, 28]. We develop a parametrized directed graph structure that determines a parametric submodular function minimization problem, and show that the decomposition algorithm can be performed successfully by computing the maximal minimum cuts iteratively. Furthermore, we mention that several machine learning applications can be solved as instances of this convex optimization problem. We remark that the proposed method can deal with a relatively general submodular function and various separable convex objective functions.

The remainder of the paper is organized as follows. In Section 2, we provide the definitions of basic concepts and give a definition of a convex optimization problem under submodular constraints. In Section 3, we give examples of submodular functions that have good graph structures.
In Section 4, we show some optimization problems related to separable convex optimization problems under submodular constraints. In Section 5, we describe a general decomposition algorithm for solving separable convex optimization problems, and in Section 6, we further show that structured convex optimization problems under submodular constraints can be solved efficiently with the aid of network flow algorithms. Finally, we show some empirical results of computational experiments in Section 7, and give concluding remarks in Section 8.

2 Submodular functions and convex optimization problems

We give basic definitions of a submodular function and related concepts (for details on the theory of submodular functions, see [9, 30]). Then, we give the definition of a convex optimization problem under submodular constraints.

2.1 Submodular functions and related polyhedra

Let $V = \{1, \ldots, n\}$ be a given set of $n$ elements, and let $g : 2^V \to \mathbb{R}$ be a real-valued function defined on all subsets of $V$. Such a function $g$ is called a set function with a ground set $V$. The set function $g : 2^V \to \mathbb{R}$ is called submodular if

$$g(S) + g(T) \ge g(S \cup T) + g(S \cap T), \quad \forall S, T \subseteq V. \tag{1}$$

A set function $g$ is called supermodular if $-g$ is submodular. A set function is called modular if it always satisfies (1) with equality. A set function is called nondecreasing if $g(S) \le g(T)$ for any $S, T \subseteq V$ with $S \subseteq T$.

For an $n$-dimensional vector $a \in \mathbb{R}^n$ with components $a_i$, $i \in V$, and a subset $S \subseteq V$, we denote $a(S) = \sum_{i \in S} a_i$. For convenience, we let $a(\emptyset) = 0$. A set function $a : 2^V \to \mathbb{R}$ corresponding to the vector $a$ is a modular function.

Submodular function minimization

A submodular function minimization problem is a fundamental unifying discrete optimization problem.
For a submodular function $g : 2^V \to \mathbb{R}$, the submodular function minimization problem asks for finding a subset $S \subseteq V$ that minimizes $g(S)$. This problem is known to be solvable in polynomial time, and the fastest known polynomial time algorithm [27] runs in $O(n^5\,\mathrm{EO} + n^6)$ time, where EO is the time of one function evaluation of $g$. The algorithms for general submodular function minimization are impractical when $n = |V|$ is large. In addition, the minimum norm point algorithm [9] is known to be usually much faster in practice, although it has worse time complexity.

Let $\operatorname{Arg\,min} g \subseteq 2^V$ denote the family of all minimizers of $g$. That is, $\operatorname{Arg\,min} g = \{S^* \subseteq V : g(S^*) = \min_S g(S)\}$. For $S^*, T^* \in \operatorname{Arg\,min} g$, the submodularity of $g$ implies that $S^* \cup T^*,\ S^* \cap T^* \in \operatorname{Arg\,min} g$. Thus, there exist the (unique) minimal minimizer and the (unique) maximal minimizer of $g$. Many submodular function minimization algorithms can be modified to find the maximal minimizer and/or the minimal minimizer (see, e.g., [21]).

Base polyhedron

For a submodular function $g : 2^V \to \mathbb{R}$ with $g(\emptyset) = 0$, the submodular polyhedron $\mathrm{P}(g) \subseteq \mathbb{R}^n$ and the base polyhedron $\mathrm{B}(g) \subseteq \mathbb{R}^n$ are given by

$$\mathrm{P}(g) = \{x \in \mathbb{R}^n : x(S) \le g(S)\ (\forall S \subseteq V)\},$$

$$\mathrm{B}(g) = \{x \in \mathrm{P}(g) : x(V) = g(V)\}.$$

Figure 1 illustrates examples of the base polyhedra. $\mathrm{B}(g)$ is determined by $2^n - 2$ inequalities and one equality. We see that $\mathrm{B}(g)$ is nonempty and bounded. The base polyhedron $\mathrm{B}(g)$ is included in the nonnegative orthant $\mathbb{R}^n_{\ge 0}$ if and only if $g$ is nondecreasing.

Figure 1: Examples of base polyhedra (panels for $n = 2$ and $n = 3$)

2.2 Convex optimization under submodular constraints

Throughout this paper, we suppose that the set function $f : 2^V \to \mathbb{R}$ is submodular and satisfies $f(\emptyset) = 0$.
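The definitions of Subsection 2.1 (inequality (1) and the base polyhedron $\mathrm{B}(g)$) can be checked by brute force when $n$ is small. The following is a minimal sketch, not part of the paper: the helper names `subsets`, `is_submodular` and `in_base_polyhedron`, and the example function $g(S) = \sqrt{|S|}$, are assumptions chosen only for illustration. The enumeration is exponential in $n$, so it serves as a sanity check rather than an algorithm.

```python
import math
from itertools import combinations


def subsets(ground):
    """Yield every subset of `ground` as a frozenset."""
    items = list(ground)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def is_submodular(g, ground, tol=1e-9):
    """Brute-force check of inequality (1) over all pairs S, T."""
    all_sets = list(subsets(ground))
    return all(
        g(S) + g(T) >= g(S | T) + g(S & T) - tol
        for S in all_sets
        for T in all_sets
    )


def in_base_polyhedron(x, g, ground, tol=1e-9):
    """Check x(S) <= g(S) for every S and x(V) = g(V)."""
    V = frozenset(ground)
    if abs(sum(x[i] for i in V) - g(V)) > tol:
        return False
    return all(sum(x[i] for i in S) <= g(S) + tol for S in subsets(V))


# Hypothetical example: g(S) = sqrt(|S|), a concave function of the cardinality.
ground = [1, 2, 3]
g = lambda S: math.sqrt(len(S))

# Marginal gains along the order 1, 2, 3; such a vector lies in B(g).
vertex = {1: 1.0, 2: math.sqrt(2) - 1.0, 3: math.sqrt(3) - math.sqrt(2)}
# Same total, but x({1, 2}) = 2 exceeds g({1, 2}) = sqrt(2).
outside = {1: 1.0, 2: 1.0, 3: math.sqrt(3) - 2.0}

print(is_submodular(g, ground))
print(in_base_polyhedron(vertex, g, ground))
print(in_base_polyhedron(outside, g, ground))
```

Since this example $g$ is nondecreasing, every point of $\mathrm{B}(g)$ is nonnegative, in line with the remark on the nonnegative orthant.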
Let $w_i : \mathbb{R} \to \mathbb{R}$ be a convex function for each $i \in V$. We consider the separable convex function minimization problem over the base polyhedron:

$$\min_{x \in \mathrm{B}(f)} \sum_{i \in V} w_i(x_i). \tag{2}$$

It is known that a number of optimization problems of this form are equivalent.

Theorem 1 (Nagano and Aihara [22]). Suppose that $f : 2^V \to \mathbb{R}$ is a nondecreasing submodular function with $f(\emptyset) = 0$. Let $b \in \mathbb{R}^n$ be a positive vector, and let $w_0 : \mathbb{R} \to \mathbb{R}$ be a differentiable and strictly convex function. The following problems (1.a) to (1.f) have the same (optimal) solution:

problem (1.a) $\displaystyle \min_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} \frac{x_i^2}{b_i}$;

problem (1.b) $\displaystyle \min_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} \frac{x_i^{p+1}}{b_i^{p}}$ for $p > 0$;

problem (1.c) $\displaystyle \max_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} \frac{x_i^{p+1}}{b_i^{p}}$ for $p < 0$ with $p \ne -1$;

problem (1.d) $\displaystyle \max_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} b_i \ln x_i$;

problem (1.e) $\displaystyle \min_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} \left( x_i \ln \frac{x_i}{b_i} + b_i - x_i \right)$;

problem (1.f) $\displaystyle \min_{x \in \mathrm{B}(f)} \sum_{i=1}^{n} x_i\, w_0\!\left( \frac{b_i}{x_i} \right)$.

In view of Theorem 1, we focus on the case where the objective function is quadratic. For a positive vector $b \in \mathbb{R}^n$, we mainly deal with problem (1.a). By using the following two observations, w.l.o.g., we can assume that the submodular function $f$ is nondecreasing.

Lemma 2. For any $\beta \in \mathbb{R}$, $x^*$ is optimal for $\min \{ \sum_i x_i^2 / b_i : x \in \mathrm{B}(f) \}$ if and only if $x^* + \beta b$ is optimal for $\min \{ \sum_i x_i^2 / b_i : x \in \mathrm{B}(f + \beta b) \}$.

Lemma 3. Set $\beta := \max \left\{ 0,\ \max_{i = 1, \ldots, n} \frac{f(V \setminus \{i\}) - f(V)}{b_i} \right\}$. Then $f + \beta b$ is a nondecreasing submodular function.

Problem (1.a) is known as the lexicographically optimal base problem [8]. If $b$ is the all-one vector, problem (1.a) becomes the minimum norm base problem. For a general submodular function, problem (1.a) can be solved within the same running time as submodular function minimization [6, 21], that is, $O(n^5\,\mathrm{EO} + n^6)$ time, where EO is the time of one function evaluation. Thus, such algorithms are impractical when $n = |V|$ is large.
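Lemma 3 can be illustrated numerically. The sketch below is an illustration under stated assumptions, not the authors' code: the function names and the toy instance (the cut function of a directed path with unit capacities, and the vector `b`) are hypothetical. It computes the shift $\beta$ and confirms by enumeration that $f + \beta b$ is nondecreasing although $f$ itself is not.

```python
from itertools import combinations


def subsets(ground):
    """Yield every subset of `ground` as a frozenset."""
    items = list(ground)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def lemma3_shift(f, b, ground):
    """beta = max{0, max_i (f(V minus {i}) - f(V)) / b_i}, as in Lemma 3."""
    V = frozenset(ground)
    return max(0.0, max((f(V - {i}) - f(V)) / b[i] for i in V))


def is_nondecreasing(g, ground, tol=1e-9):
    """Check g(S) <= g(S + i) for all S and i not in S, which implies monotonicity."""
    V = frozenset(ground)
    return all(g(S) <= g(S | {i}) + tol for S in subsets(V) for i in V - S)


# Hypothetical instance: cut function of the directed path 1 -> 2 -> 3.
edges = [(1, 2), (2, 3)]
ground = [1, 2, 3]
b = {1: 1.0, 2: 2.0, 3: 1.0}


def f(S):
    """Number of unit-capacity edges leaving S."""
    return float(sum(1 for (u, v) in edges if u in S and v not in S))


beta = lemma3_shift(f, b, ground)


def f_shifted(S):
    """The shifted function f + beta * b."""
    return f(S) + beta * sum(b[i] for i in S)


print(beta)
print(is_nondecreasing(f, ground), is_nondecreasing(f_shifted, ground))
```

By Lemma 2, a solution of problem (1.a) for the shifted function yields one for $f$ after subtracting $\beta b$.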
Although the minimum norm point algorithm [9] and its weighted version [22] can solve problem (1.a) much faster, they have worse time complexity and still do not scale to large problems.

In this paper, we point out that if the function $f$ has a good graph structure, problem (1.a) can be solved efficiently with the aid of network flow algorithms. Furthermore, we show a number of applications of the convex optimization problem (1.a).

3 Structured submodular functions and minimization problems

Many basic submodular functions can be represented by using graphs. In such cases, a minimum cut algorithm, which runs much faster in practice, is useful to solve submodular optimization problems.

In this section, we will see some examples of submodular functions with directed graph structures, which are important from the viewpoint of applications.

3.1 Minimizing graph cut functions

In this subsection, we will see that an $s$-$t$ cut function $\kappa_{s\text{-}t}$ and a generalized graph cut function $\gamma$ of [14], both of which are submodular, can be minimized efficiently with the aid of network flow algorithms. In particular, we will see that the maximal minimizer can be computed efficiently in both cases. In the general algorithm described in Section 5, the maximal minimizer of a submodular function has to be computed.

Minimum cut problem

We start with the minimum $s$-$t$ cut problem. Let $\mathcal{G} = (\{s\} \cup \{t\} \cup \mathcal{V}, \mathcal{E})$ be a directed graph, where $s$ is a special source node, $t$ is a special sink node, $\mathcal{V}$ is a set of other nodes, and $\mathcal{E}$ is a set of directed edges. For each $e \in \mathcal{E}$, a nonnegative capacity value $c(e)$ is assigned. An $s$-$t$ cut is an ordered bipartition $(\mathcal{V}_1, \mathcal{V}_2)$ of the node set of $\mathcal{G}$ such that $s \in \mathcal{V}_1$ and $t \in \mathcal{V}_2$. Clearly, any $s$-$t$ cut can be expressed as $(\{s\} \cup S, \{t\} \cup (\mathcal{V} \setminus S))$ for some $S \subseteq \mathcal{V}$.
For an $s$-$t$ cut $(\{s\} \cup S, \{t\} \cup (\mathcal{V} \setminus S))$, its capacity $\kappa_{s\text{-}t}$ is defined by

$$\kappa_{s\text{-}t}(S) = \sum \{ c(e) : e \in \delta^{\mathrm{out}}_{\mathcal{G}}(\{s\} \cup S) \} \tag{3}$$

for each $S \subseteq \mathcal{V}$, where $\delta^{\mathrm{out}}_{\mathcal{G}}(\mathcal{V}')$ is the set of edges leaving a node subset $\mathcal{V}'$ in $\mathcal{G}$. The minimum cut problem asks for finding an $s$-$t$ cut of $\mathcal{G}$ that minimizes the capacity. The set function $\kappa_{s\text{-}t} : 2^{\mathcal{V}} \to \mathbb{R}$, which is called an $s$-$t$ cut function, is known to be submodular. Therefore, the minimum cut problem is a special case of a submodular function minimization problem.

The minimum cut problem is closely related to the maximum flow problem, which is a fundamental problem in combinatorial optimization [1]. It can be solved quite efficiently. For example, it can be solved in $O(|\mathcal{V}||\mathcal{E}| \log(|\mathcal{V}|^2 / |\mathcal{E}|))$ time [12] or $O(|\mathcal{V}||\mathcal{E}|)$ time [28].

As $\kappa_{s\text{-}t}$ is submodular, there exists the maximal minimizer $S^*_{\max}$ of $\kappa_{s\text{-}t}$. The $s$-$t$ cut $(\{s\} \cup S^*_{\max}, \{t\} \cup (\mathcal{V} \setminus S^*_{\max}))$ is called the maximal minimum $s$-$t$ cut. Once a maximum flow is computed, we can obtain the maximal minimum $s$-$t$ cut in additional $O(|\mathcal{V}| + |\mathcal{E}|)$ time (we just need to consider the set of nodes from which the sink $t$ is reachable in the residual network, and its complement [1]). The minimal minimum $s$-$t$ cut can be defined and computed in a similar way.

Lemma 4. The maximal minimizer of the $s$-$t$ cut function $\kappa_{s\text{-}t} : 2^{\mathcal{V}} \to \mathbb{R}$ defined in (3) can be computed in $O(|\mathcal{V}||\mathcal{E}| \log(|\mathcal{V}|^2 / |\mathcal{E}|))$ time, or $O(|\mathcal{V}||\mathcal{E}|)$ time.

Generalized graph cut functions

Next we give a definition of the generalized graph cut function $\gamma : 2^V \to \mathbb{R}$ of Jegelka et al. [14], which generalizes a large subfamily of submodular functions.

Let $\mathcal{G} = (\{s\} \cup \{t\} \cup \mathcal{V}, \mathcal{E})$ be a directed graph with nonnegative edge capacities $c(e) \ge 0$ $(e \in \mathcal{E})$. Suppose that the set $\mathcal{V}$ is partitioned as $\mathcal{V} = V \cup U$, where $V = \{1, \ldots, n\}$ is a set of nodes, each of which may become a source, and $U$ is a set of auxiliary nodes ($U$ can be empty).
Figure 2 illustrates an example of the graph $\mathcal{G} = (\{s\} \cup \{t\} \cup V \cup U, \mathcal{E})$. A generalized graph cut function [14] $\gamma : 2^V \to \mathbb{R}$ is defined by

$$\gamma(S) = \min_{W \subseteq U} \sum \{ c(e) : e \in \delta^{\mathrm{out}}_{\mathcal{G}}(\{s\} \cup S \cup W) \} \tag{4}$$

for each $S \subseteq V$. If $U$ is empty, the function $\gamma$ coincides with the function $\kappa_{s\text{-}t}$ defined in (3). The submodularity of $\gamma$ can be derived from the classical result of Megiddo [20] on network flow problems with multiple terminals (for details, see the appendix of this paper).

Let us consider the minimization of $\gamma : 2^V \to \mathbb{R}$. By the definition of $\gamma$, the value $\gamma^* := \min_{S \subseteq V} \gamma(S)$ is equal to the capacity of a minimum $s$-$t$ cut in $\mathcal{G}$. For any minimum $s$-$t$ cut $(\{s\} \cup \mathcal{P}, \{t\} \cup (V \cup U \setminus \mathcal{P}))$ in $\mathcal{G}$, we have $\gamma(\mathcal{P} \cap V) = \gamma^*$ and thus $\mathcal{P} \cap V$ is a minimizer of $\gamma : 2^V \to \mathbb{R}$. Therefore, a minimizer of $\gamma$ can be computed by solving the minimum $s$-$t$ cut problem on $\mathcal{G} = (\{s\} \cup \{t\} \cup V \cup U, \mathcal{E})$.

Figure 2: A directed graph $\mathcal{G} = (\{s\} \cup \{t\} \cup V \cup U, \mathcal{E})$ that generates a generalized graph cut function $\gamma : 2^V \to \mathbb{R}$

Conversely, let $S^* \subseteq V$ be a minimizer of $\gamma$, and let $W^*$ be a subset $W \subseteq U$ that attains the minimum in the right hand side of (4) with respect to $S = S^*$. Then $S^* \cup W^* \subseteq \mathcal{V}$ minimizes the $s$-$t$ cut function (see [14]). Therefore, given the maximal minimum $s$-$t$ cut $(\{s\} \cup \mathcal{P}^*_{\max}, \{t\} \cup (V \cup U \setminus \mathcal{P}^*_{\max}))$, the subset $\mathcal{P}^*_{\max} \cap V$ is the maximal minimizer of $\gamma$.

Lemma 5. The maximal minimizer of the generalized graph cut function $\gamma : 2^V \to \mathbb{R}$ defined in (4) can be computed in $O(|\mathcal{V}||\mathcal{E}| \log(|\mathcal{V}|^2 / |\mathcal{E}|))$ time, or $O(|\mathcal{V}||\mathcal{E}|)$ time, where $\mathcal{V} = V \cup U$.

3.2 Transformed graph cut functions

We define a transformed graph cut function, and we show that the function can be regarded as an $s$-$t$ cut function defined in Subsection 3.1. In Subsection 4.1, we will see that the convex minimization problem (1.a) under the constraints of this function is related to the densest subgraph problem.
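The maximal minimizer computation behind Lemmas 4 and 5 can be sketched in code. The block below is only an illustration: it uses a plain Edmonds-Karp augmenting-path routine rather than the faster algorithms of [12, 28] cited above, and the function names and the dictionary-based graph encoding are assumptions. After a maximum flow is found, the nodes from which $t$ is still reachable in the residual network form the sink side, and every other node belongs to the source side of the maximal minimum $s$-$t$ cut.

```python
from collections import defaultdict, deque

EPS = 1e-12  # residual capacities below this are treated as zero


def max_flow_residual(cap, s, t):
    """Edmonds-Karp on cap = {(u, v): capacity}; returns (value, residual, adjacency)."""
    res = defaultdict(float)
    adj = defaultdict(set)
    for (u, v), c in cap.items():
        res[(u, v)] += c
        adj[u].add(v)
        adj[v].add(u)  # reverse arc is needed in the residual network
    value = 0.0
    while True:
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:  # BFS for a shortest augmenting path
            u = queue.popleft()
            for v in adj[u]:
                if v not in parent and res[(u, v)] > EPS:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            return value, res, adj
        bottleneck, v = float("inf"), t
        while parent[v] is not None:  # smallest residual capacity on the path
            bottleneck = min(bottleneck, res[(parent[v], v)])
            v = parent[v]
        v = t
        while parent[v] is not None:  # push flow along the path
            u = parent[v]
            res[(u, v)] -= bottleneck
            res[(v, u)] += bottleneck
            v = u
        value += bottleneck


def maximal_min_cut(cap, s, t):
    """Return (min cut capacity, maximal minimizer S) of the s-t cut function."""
    value, res, adj = max_flow_residual(cap, s, t)
    can_reach_t = {t}
    queue = deque([t])
    while queue:  # backward BFS over residual arcs u -> v
        v = queue.popleft()
        for u in adj[v]:
            if u not in can_reach_t and res[(u, v)] > EPS:
                can_reach_t.add(u)
                queue.append(u)
    source_side = (set(adj) | {s, t}) - can_reach_t
    return value, source_side - {s}


# Hypothetical path s -> 1 -> 2 -> t with unit capacities: the empty set, {1}
# and {1, 2} all give cuts of capacity 1, and {1, 2} is the maximal one.
path = {("s", 1): 1.0, (1, 2): 1.0, (2, "t"): 1.0}
value, S_max = maximal_min_cut(path, "s", "t")
print(value, sorted(S_max))  # 1.0 [1, 2]
```

For a generalized graph cut function, the same routine would be run on the graph with node set $V \cup U$, and the returned set intersected with $V$, as stated before Lemma 5.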
Let $G = (V, E)$ be a directed graph with node set $V = \{1, \ldots, n\}$ and edge set $E$. Given nonnegative edge capacities $c(e)$ $(e \in E)$, a cut function $\kappa : 2^V \to \mathbb{R}$ defined by $\kappa(S) = \sum \{ c(e) : e \in \delta^{\mathrm{out}}_{G}(S) \}$ for each $S \subseteq V$ is submodular. Let $a \in \mathbb{R}^n$. Then, a transformed graph cut function $\kappa_a : 2^V \to \mathbb{R}$ defined by $\kappa_a = \kappa + a$ is also submodular.

Let us see that the function $\kappa_a : 2^V \to \mathbb{R}$ can be regarded as an $s$-$t$ cut function on a new graph $G_a$. Define $A^+ = \{ i \in V : a_i > 0 \}$ and $A^- = \{ i \in V : a_i < 0 \}$. By adding new nodes $s$, $t$ and new edges $E^+ \cup E^-$ to $G$, we construct a new directed graph $G_a = (\{s\} \cup \{t\} \cup V, E \cup E^+ \cup E^-)$, where $E^+ = \{ (i, t) : i \in A^+ \}$ and $E^- = \{ (s, i) : i \in A^- \}$. The capacities of the new edges are determined as follows: we set $c(i, t) = a_i\ (\ge 0)$ for each $(i, t) \in E^+$, and set $c(s, i) = -a_i\ (\ge 0)$ for each $(s, i) \in E^-$. Figure 3 shows the construction of $G_a$.

Figure 3: Construction of the directed graph $G_a$

For an $s$-$t$ cut $(\{s\} \cup S, \{t\} \cup (V \setminus S))$ of $G_a$, its capacity is equal to

$$\kappa(S) + a(S \cap A^+) + (-a(A^- \setminus S)) = \kappa(S) + a(S) - a(A^-) = \kappa_a(S) + \mathrm{const}.$$

Thus, $\kappa_a$ can be regarded as an $s$-$t$ cut function on $G_a$.

3.3 Decomposable submodular functions

Decomposable submodular functions (see [31]) are one of the most important special cases of generalized graph cut functions [14]. For more examples of generalized graph cut functions, refer to Jegelka et al. [14].

A decomposable submodular function $\tau : 2^V \to \mathbb{R}$ is a set function that can be represented as a sum of a modular set function and submodular set functions arising from concave functions. As in Stobbe and Krause [31], we will focus on the case where each concave function is a threshold potential.
That is, we consider the following decomposable submodular function $\tau : 2^V \to \mathbb{R}$ defined by

$$\tau(S) = -d(S) + \sum_{j=1}^{k} \min \{ y_j, w^j(S) \} \tag{5}$$

for each $S \subseteq V$, where $d \in \mathbb{R}^n$ is a positive vector, $w^1, \ldots, w^k \in \mathbb{R}^n$ are nonnegative vectors, and $y_1, \ldots, y_k > 0$.

Now we observe that the function $\tau$ defined in (5) can be represented as a generalized graph cut function defined in Subsection 3.1. Consider a directed graph $G_\tau = (\{s\} \cup \{t\} \cup V \cup U, \mathcal{E})$, where $V = \{1, \ldots, n\}$, $U = \{u_1, \ldots, u_k\}$, and $\mathcal{E} = \{ (s, i) : i \in V \} \cup \{ (i, u_j) : i \in V, u_j \in U \} \cup \{ (u_j, t) : u_j \in U \}$. The edge capacities are determined as

$$c(s, i) = d_i,\ \forall i \in V, \qquad c(i, u_j) = w^j_i,\ \forall i \in V,\ \forall u_j \in U, \qquad c(u_j, t) = y_j,\ \forall u_j \in U.$$

Figure 4 illustrates the directed graph $G_\tau$. We can observe that $G_\tau$ generates the decomposable submodular function $\tau$.

Figure 4: Directed graph $G_\tau$ associated with a decomposable submodular function $\tau$ with $n = 4$ and $k = 3$

The function $\tau$ corresponds to the sum of truncated functions described in [14], and the construction of $G_\tau$ is widely used in computer vision [15].

4 Applications

It is known that the convex optimization problem (2) under submodular constraints is related to some discrete and continuous optimization problems. In this section, we show some examples in which the submodular functions have graph structures considered in Section 3.

4.1 Finding dense subgraphs

Let $G = (V, E)$ be an undirected graph with node set $V = \{1, \ldots, n\}$ and undirected edge set $E$. Given nonnegative edge capacities $c(e)$ $(e \in E)$ and an integer $k$, the densest $k$-subgraph problem asks for finding a $k$-subset $S \subseteq V$ that maximizes $\theta(S)$, where $\theta(S)$ is the sum of weights of edges in the subgraph induced by $S$.
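Returning briefly to the graph $G_\tau$ of Subsection 3.3, the observation that $G_\tau$ generates $\tau$ can be checked on a small instance. The sketch below is a hedged illustration with hypothetical data and function names: it evaluates definition (4) on $G_\tau$ by enumerating all $W \subseteq U$ and compares the result with formula (5). Under the edge capacities given above, a cut $\{s\} \cup S \cup W$ has capacity $d(V \setminus S) + \sum_{j : u_j \notin W} w^j(S) + \sum_{j : u_j \in W} y_j$, so the two functions should agree up to the additive constant $d(V)$, which does not affect minimizers.

```python
from itertools import combinations


def subsets(items):
    """Yield every subset of `items` as a frozenset."""
    items = list(items)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)


def tau(S, d, w, y):
    """Decomposable function (5): -d(S) + sum_j min{y_j, w^j(S)}."""
    return -sum(d[i] for i in S) + sum(
        min(y[j], sum(w[j][i] for i in S)) for j in range(len(y))
    )


def gamma(S, d, w, y):
    """Generalized graph cut value (4) on G_tau, by brute force over W."""
    V = range(len(d))
    U = range(len(y))
    best = float("inf")
    for W in subsets(U):
        cut = sum(d[i] for i in V if i not in S)                 # edges (s, i)
        cut += sum(w[j][i] for i in S for j in U if j not in W)  # edges (i, u_j)
        cut += sum(y[j] for j in W)                              # edges (u_j, t)
        best = min(best, cut)
    return best


# Hypothetical instance with n = 3 and k = 2 (nodes are indexed from 0 here).
d = [3, 1, 2]
w = [[1, 0, 2], [2, 2, 0]]  # w[j][i] plays the role of w^j_i
y = [2, 3]

agree = all(gamma(S, d, w, y) == tau(S, d, w, y) + sum(d) for S in subsets(range(3)))
print(agree)
```

Integer data are used so that the comparison is exact.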
The function $\theta : 2^V \to \mathbb{R}$ is a supermodular function with $\theta(\emptyset) = 0$, and the minimum norm base problem

$$\min_{x \in \mathrm{B}(-\theta)} \sum_{i=1}^{n} x_i^2 \tag{6}$$

plays an important role in finding dense subgraphs of $G$ [23].

We show that $-\theta$ is a transformed graph cut function (Subsection 3.2). Let $m \in \mathbb{R}^n$ be a vector defined by $m_i = \sum_{i'} \{ c(\{i, i'\}) : \{i, i'\} \in E \}$ for each $i \in V$, and let $\kappa$ be a cut function of $G$, that is, $\kappa(S) = \sum \{ c(\{i, i'\}) : \{i, i'\} \in E,\ i \in S \text{ and } i' \in V \setminus S \}$ $(S \subseteq V)$. Then we have

$$-\theta(S) = \tfrac{1}{2} \kappa(S) - \tfrac{1}{2} m(S)$$

for each $S \subseteq V$. It is easy to see that the function $\kappa : 2^V \to \mathbb{R}$ can be regarded as a cut function of a directed graph. Thus, $-\theta$ is a transformed graph cut function.

4.2 Proximal methods

Regularized learning is a fundamental formulation for many supervised problems. Let $\{(z_i, y_i)\}_{i=1}^{N}$ be a set of samples, $\beta \in \mathbb{R}^n$ a model parameter vector and $l(z, y; \beta)$ a (differentiable) convex loss. Then, the optimization for regularized learning is represented as

$$\min_{\beta \in \mathbb{R}^n} \sum_{i=1}^{N} l(z_i, y_i; \beta) + \lambda \cdot \Omega(\beta),$$

where $\Omega(\beta)$ is a regularization term and $\lambda$ is the regularization parameter. If $\Omega(\beta)$ is non-differentiable in $\beta$, which is usually true for structured regularization, the proximal method is a popular approach to solve this optimization problem [3]. As is well known, its update procedure at each iteration can be reduced to the calculation of the following problem:

$$\min_{\beta \in \mathbb{R}^n} \tfrac{1}{2} \| \beta - s \|_2^2 + \lambda \cdot \Omega(\beta), \tag{7}$$

where $s \in \mathbb{R}^n$. Recently, Bach [2] showed that many of the popular structured norms can be represented as continuous relaxations, called Lovász extensions, of submodular functions. In this case, Problem (7) can be transformed to

$$\min_{t} \left\{ \sum_{i=1}^{n} t_i^2 : t \in \mathrm{B}(g - \lambda^{-1} s) \right\},$$

where $g$ is a submodular function whose Lovász extension is $\Omega$ ($\beta$ is solved as $\lambda t$).
Note that many popular structured norms can be expressed as the Lovász extensions of generalized graph cut functions, such as cut functions (that correspond to fused-regularization) [4] and coverage functions (that correspond to overlapping group-regularization).

4.3 Minimum ratio problems

For a nonnegative submodular function $g : 2^V \to \mathbb{R}$ with $g(\emptyset) = 0$ and a positive vector $b \in \mathbb{R}^n$, consider the minimum ratio problem which asks for a subset $S \in 2^V \setminus \{\emptyset\}$ minimizing $g(S) / b(S)$. This kind of optimization problem has to be solved iteratively, e.g., in the primal-dual approximation algorithm for a submodular cost covering problem [13].

Suppose that we have the optimal solution $x^*$ to $\min \{ \sum_{i=1}^{n} x_i^2 / b_i : x \in \mathrm{B}(g) \}$. Let $\xi_1 = \min_{i \in V} x^*_i / b_i$ and let $S_1 = \{ i \in V : x^*_i / b_i = \xi_1 \}$. Then the subset $S_1$ is an optimal solution to the minimum ratio problem (see [9]). Therefore, by solving the separable quadratic minimization problem over $\mathrm{B}(g)$, an optimal solution to the minimum ratio problem can be obtained. If the function $g$ has a graph structure, the running time of the approximation algorithm of [13] could be improved.

5 A general framework for separable convex minimization under submodular constraints

In this section, we describe the decomposition algorithm, which is a general framework to solve the separable convex minimization problem under submodular constraints. Before describing the decomposition algorithm, we give a parametric formulation of problem (1.a).

For the validity of the decomposition algorithm described here, refer to, e.g., Fujishige [9], and Nagano and Aihara [22].

5.1 A parametric formulation

Let $f : 2^V \to \mathbb{R}$ be a general nondecreasing submodular function and $b \in \mathbb{R}^n$ a positive vector. Recall that the set function $b$ associated with the vector $b$ is modular. For a parameter $\alpha \ge 0$, define $f_\alpha : 2^V \to \mathbb{R}$ by $f_\alpha = f - \alpha b$, which is submodular.
Let us see how problem (1.a) can be reduced to the parametric submodular minimization problem: minimize $f_\alpha$ for all $\alpha \ge 0$. It is known that there exist $\ell + 1$ subsets,

$$(\emptyset =)\ S_0 \subset S_1 \subset \cdots \subset S_\ell\ (= V),$$

and $\ell + 1$ subintervals of $\mathbb{R}_{\ge 0} = \{ \alpha \in \mathbb{R} : \alpha \ge 0 \}$,

$$R_0 = [0, \alpha_1),\ R_1 = [\alpha_1, \alpha_2),\ \ldots,\ R_j = [\alpha_j, \alpha_{j+1}),\ \ldots,\ R_\ell = [\alpha_\ell, +\infty),$$

such that, for each $j \in \{0, \ldots, \ell\}$, the subset $S_j$ is the unique maximal minimizer of $f_\alpha = f - \alpha b$ for all $\alpha \in R_j$. The vector $x^* \in \mathbb{R}^n$ determined by, for each $i \in V$ with $i \in S_{j+1} \setminus S_j$ ($j \in \{0, \ldots, \ell - 1\}$),

$$x^*_i = \frac{f(S_{j+1}) - f(S_j)}{b(S_{j+1} \setminus S_j)}\, b_i \tag{8}$$

is the unique optimal solution to the quadratic minimization problem (1.a). Equation (8) implies that problem (1.a) can be immediately solved if the collection $\mathcal{S}^* = \{ S_0, S_1, \ldots, S_\ell \}$ is computed.

5.2 The decomposition algorithm

By successively minimizing $f_\alpha = f - \alpha b$ for some appropriately chosen $\alpha \ge 0$, the decomposition algorithm finds the subsets $S_j$ one by one, and finally the chain $S_0 \subset S_1 \subset \cdots \subset S_\ell$ and the optimal solution $x^*$ to problem (1.a) are obtained.

The decomposition algorithm DA is recursive. Suppose that we are given two subsets $S_j, S_{j'} \in \mathcal{S}^*$ with $0 \le j < j' \le n$. The algorithm $\mathrm{DA}(S_j, S_{j'})$ finds the collection $\mathcal{S}^*(S_j, S_{j'}) := \{ S \in \mathcal{S}^* : S_j \subseteq S \subseteq S_{j'} \}$. It can be verified that

$$\alpha_{j+1} \le \frac{f(S_{j'}) - f(S_j)}{b(S_{j'} \setminus S_j)} \le \alpha_{j'}.$$

Therefore, we can decide if ($j + 1 = j'$) or ($j + 1$
