mTim: Rapid and accurate transcript reconstruction from RNA-Seq data

Recent advances in high-throughput cDNA sequencing (RNA-Seq) technology have revolutionized transcriptome studies. A major motivation for RNA-Seq is to map the structure of expressed transcripts at nucleotide resolution. With accurate computational t…

Authors: Georg Zeller, Nico Goernitz, Andre Kahles

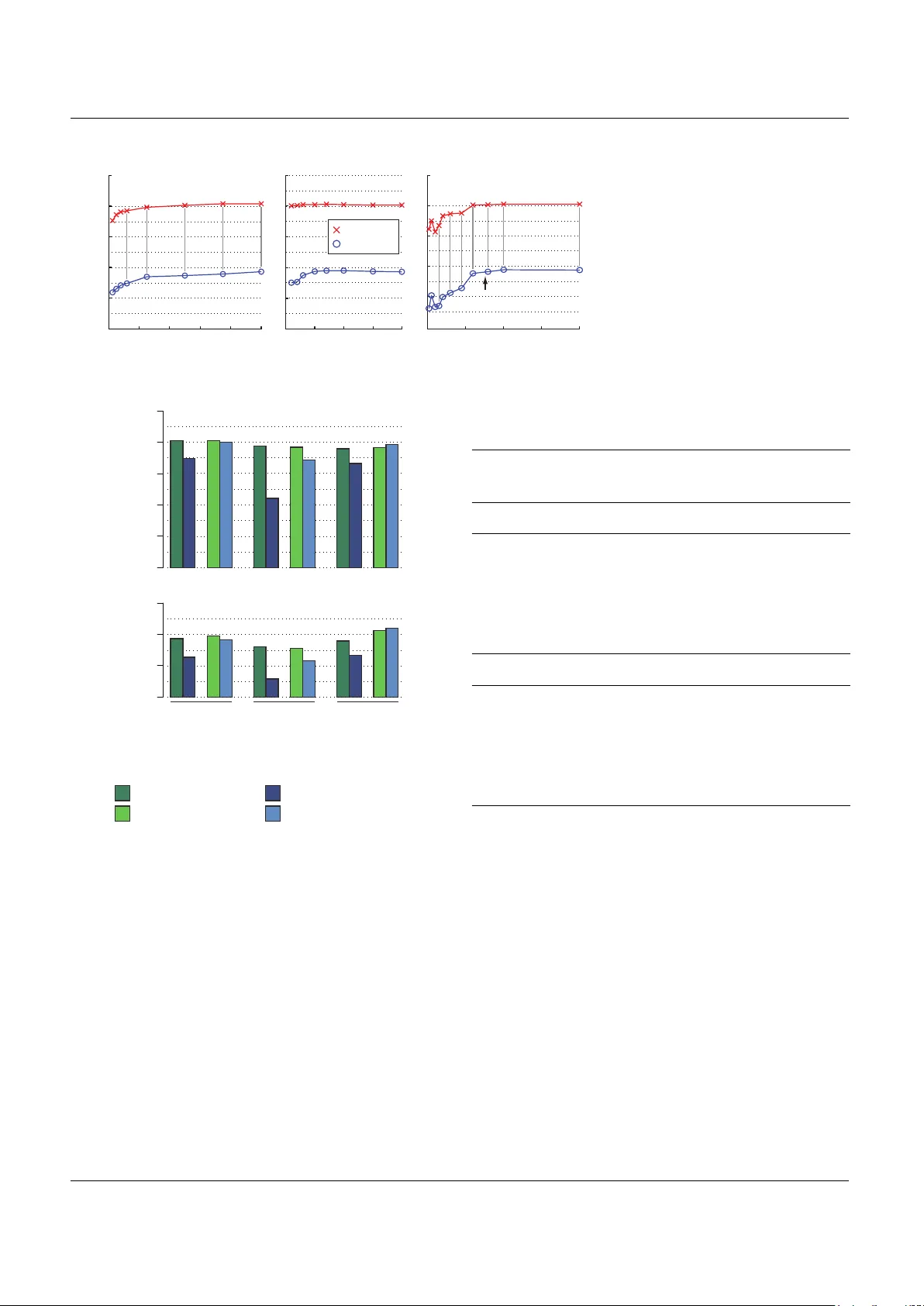

mTim: rapid and accurate transcript reconstruction fr om RNA-Seq data Georg Zeller ∗ , 1 , 2 , 4 , Nico G ¨ or nitz ∗ , 3 , Andr ´ e Kahles 1 , 5 , Jonas Behr 1 , 5 , Pramod Mudrakar ta 1 , S ¨ oren Sonnenb urg 6 , and Gunnar R ¨ atsch 5 ∗ 1 F riedrich Miescher Laborator y , Max Planck Society , 72076 T ¨ ubingen, Germany 2 Depar tment of Molecular Biology , Max Planck Society , 72076 T ¨ ubingen, Germany 3 Machine Learning Group, T echnical University Berlin, 10587 Berlin, Ger many 4 Structural and Computational Biology Unit, EMBL, 69117 Heidelberg, Ger many 5 Computational Biology Center , Sloan-K ettering Institute, NY 10065, USA 6 T omT om, An den T reptow ers 1, 12435 Berlin, Ger many ∗ A uthors contributed equally . ABSTRA CT Motivation: Recent adv ances in high-throughput cDNA sequencing (RNA-Seq) technology hav e rev olutionized transcriptome studies. A major motivation for RNA-Seq is to map the structure of expressed transcripts at nucleotide resolution. With accurate computational tools for tr anscript reconstr uction, this technology ma y also become useful for genome (re-)annotation, which has mostly relied on de nov o gene finding where gene structures are primarily inferred from the genome sequence. Results: We dev eloped a machine-lear ning method, called mTim ( m argin-based t ranscript i nference m ethod) f or transcript reconstruction from RNA-Seq read alignments that is based on discriminatively trained hidden Marko v suppor t vector machines . In addition to features deriv ed from read alignments, it utilizes characteristic genomic sequences, e .g. around splice sites, to improv e transcript predictions. mTim inf erred transcripts that were highly accurate and relatively robust to alignment errors in comparison to those from Cufflinks, a widely used transcript assembly method. A vailability: Source code in Matlab/C is availab le from https:// github.com/nicococo/mTIM . An mTim predictor is also provided as par t of Oqtans, a Galaxy-based RNA-Seq analysis pipeline ( http://oqtans.org/ ). Contact: ratschg@mskcc.org 1 INTRODUCTION High-throughput sequencing technology applied to cellular mRNA (RN A-Seq) has rev olutionized transcriptome studies [19, 17, 35, among many others]. In contrast to microarray platforms, which it has replaced in man y applications, RNA-Seq can not only be used to accurately quantify known transcripts, but also to re veal the precise structure of transcripts at single-nucleotide resolution. RN A-Seq based transcript reconstruction has therefore become a valuable tool for the completion of genome annotations [22, 11, for instance] and further enabled subsequent analyses of dif ferentially expressed genes [2], transcript isoforms [6, 4] and e xons [3], all of which generally rely on correctly inferred transcript in ventories. De ∗ to whom correspondence should be addressed nov o transcript reconstruction is thus a piv otal step in the analysis of RN A-Seq data. There are two conceptually different strategies to approach this problem: one can either assemble transcripts directly from RN A-Seq reads using methodology that originated from genome assembly approaches [13, 23, 25]. Alternatively , the problem can be decomposed into two steps: RNA-Seq reads are first aligned to the genome of origin followed by the actual transcript reconstruction on the basis of these alignments. While the first, assembly-based strategy does not require a high-quality genome sequence and is thus applicable to non-model organisms, it is arguably addressing a more difficult problem than the latter, mapping-based approach. Consequently , transcripts, in particular ones with low expression, may be more accurately reconstructed by methods implementing the mapping-based approach [32, 15, 18] (see also [13, 25] for a comparison). The performance of mapping-based methods howe ver strongly depends on the quality of the RNA-Seq read alignments. Considerable attention has therefore been payed to solve the problem of correctly aligning RN A fragments across splice junctions [7, 31, 16]. Follo wing the mapping-based paradigm, we developed a novel machine learning-based method, which we call mT im: m argin- based t ranscript i nference m ethod. In contrast to algorithmic transcript assembly [32, 15], we formalize the problem as a supervised label sequence learning task and apply state-of-the-art techniques, namely Hidden Markov support vector machines (HM- SVMs) [1, 33, 20, 37]. This way of approaching the problem is similar to recently developed gene finders [26, 14], and mTim is indeed a hybrid method that can utilize both, RN A-Seq read alignments and characteristic features of the genome sequence, e.g. around splice sites [28]. Ho wever , mT im’s emphasis is on inference from aligned RNA-Seq reads, and its model is only augmented by a few genic sequence motif sensors [26], which can moreov er be disabled. W e thus make weak assumptions, if any , about the inferred transcripts: importantly , we do not model protein-coding sequences (CDS) and are thus able to predict noncoding transcripts as well as coding ones with similar expression. 1 G.Zeller et al. 2 METHODS 2.1 T ranscript reconstruction The task of reconstructing the exon-intron structure of expressed genes can be con verted into a label sequence learning problem, where we attempt to label each nucleotide in the genome as either intergenic, exonic or intronic. Our prior knowledge about what constitutes a valid gene structure is incorporated into a state model to restrict the space of possible labelings to valid ones. 2.1.1 The state model used by mT im Starting from a naive state model that would consist of a single state for each of the atomic labels, exonic, intronic, and intergenic, we extended it as follo ws (see Figure 1): first, we devised a strand-specific model. Second, we created expression- dependent submodels. This allows us to maintain sev eral parameter sets, each of which is optimized for transcripts with a certain read support. Due to non-uniform read coverage along transcripts, transitions between expression lev els proved useful in practice. Finally , the simple model was extended by states that mark segment boundaries (e.g. when transitioning from exon to intron), as this facilitates boundary recognition from features such as spliced reads (Fig. 1). 2.1.2 F eatur e derivation and training data The inference of transcript structures is based on sequences of observations or features deriv ed from RN A-Seq read alignments and predicted splice sites. Specifically , we derive the following position-wise features from RNA-Seq alignments: • number of reads aligned at the given position, indicating an e xon. • a gradient of the read coverage; high absolute values correspond to sharp in- or decreases in cov erage typical of the start and end of e xonic regions, respecti vely • number of reads that are spliced over the given position (strand- specific), thus indicating an intronic position. • number of spliced reads supporting a donor splice site at the given position (strand-specific). • number of spliced reads supporting an acceptor splice site at the given position (strand-specific). • number of paired-read alignments for which the insert spanned the giv en position (only used if read pair information is available, strand- specific), an indicator of transcript connectivity . Additionally , we deriv e features from the genome sequence around a given position such as strand-specific donor and acceptor splice site prediction. As a ground truth for guiding the supervised training process, annotated gene models with a portion of the surrounding intergenic region are excised and conv erted into label sequences by assigning one of the above atomic labels to each nucleotide (see color coding in Figure 1). In the presence of alternati ve transcripts, this labeling was based on a single isoform (the one that was best supported by RNA-Seq reads), and additionally a mask of alternativ e transcript regions was generated to a void that learning the correct alternativ es is penalized during training. 2.1.3 T raining and optimization Label sequence learning problems are often addressed using Hidden Markov Models (HMMs) [10]. Howev er , discriminativ e learning algorithms have recently been developed that combine the versatility of HMMs with the advantages of discriminative training, and these include hidden Markov support vector machines (HMSVMs)[1, 33, 20]. Inspired by support vector machines [34, 24, 5], the HMSVM training problem amounts to maximizing the mar gin of separation between the correct and any wrong label sequence. Formally , training an HMSVM in volves learning a function f : X → S ? intergenic intronic exonic S E 2 + I 2 + E 1 + I 1 + intronic exonic plus strand minus strand expression level 1st 2nd ... ... ... ... ... ... ... acc 2 - acc 1 - don 1 - don 2 - acc 2 + acc 1 + don 1 + don 2 + E 2 - I 2 - E 1 - I 1 - p 1 + p 2 + t 1 + t 2 + p 1 - p 2 - t 2 - t 1 - Fig. 1. State model used by mTim. Ov als correspond to states, whereas arrows indicate allowed transitions. The correspondence between states and atomic labels (exon, E , intron, I , and intergenic, S ; see Methods) is color- coded. The first and last nucleotide of introns and transcripts were modelled with particular care: The former are associated with splice site signals at exon-intron junctions (states denoted acc and don ), whereas the latter correspond to transcript start and end (denoted p and t , respectiv ely). The model is strand-specific (superscripts + and - in state labels) and consists of expression-specific submodels (columns and subscripts, see also label at bottom) which allows us to optimize different parameter sets depending on the read support. that yields a label sequence (or simply a path) π ∈ S ? giv en the corresponding sequence of observations x ∈ X (an m × t matrix of m different features), both of length t , where S ? denotes the Kleene closure of the state set (see Figure 1). This is done indirectly via a θ -parametrized discriminant function F θ : X × S ? → R that assigns a real-valued score to a pair of observation and state sequence [1]. Once F is known, f can be obtained as f ( x ) = argmax π ∈S ? F θ ( x , π ) . In our case, F satisfies the Markov property and can hence be efficiently decoded using the V iterbi algorithm [10]. The discriminant function is essentially a linear combination of feature scoring functions g j,k (piece-wise linear transformations of feature values 2 T ranscript inference with mTim into real-valued scores, see [21] for details) and transition scores φ . F θ ( x , π ) = t X p =1 m X j =1 X k ∈S [[ π p = k ]] g j,k ( x j,p ) + φ ( π p − 1 , π p ) where [[.]] denotes the indicator function. The parametrization of the feature scoring functions g j,k , together with the transition scores φ constitutes the parametrization of the model denoted by θ . Let n be the number of training examples ( x ( i ) , π ( i ) ) , i = 1 , . . . , n . Follo wing the discriminative learning paradigm, we want to enforce a large margin of separation between the score of the correct path π ( i ) and any other wrong path π 6 = π ( i ) , i.e., F θ ( x ( i ) , π ( i ) ) − F θ ( x ( i ) , π ) 0 ∀ π 6 = π ( i ) ∀ i = 1 , . . . , n T o achie ve this, we solve the follo wing optimization problem: min θ , ξ ≥ 0 1 n n X i =1 ξ ( i ) + C Ω( θ ) s.t. F θ ( x ( i ) , π ( i ) ) − F θ ( x ( i ) , π ) ≥ ∆( π ( i ) , π ) − ξ ( i ) ∀ π 6 = π ( i ) ∀ i (1) where Ω is a regularization term to restrict model complexity (see [36, 37] for details), whose weight is adjusted through the hyper-parameter C . So- called slack variables ξ ( i ) implement a soft-margin [8] allowing for some prediction errors on the training set. A Bundle Method for Efficient Optimization A common approach to obtain a solution to (1) is to use so-called cutting plane or column generation methods. These accumulate gro wing subsets (working sets) of all possible structures and solve restricted optimization problems [33]. Howe ver , these techniques often con verge slowly . Moreover , the size of the restricted optimization problems grows steadily and solving them becomes more expensi ve in each iteration. Simple gradient descent or second order methods can not be directly applied as alternati ves, because the abo ve objective function is continuous but non-smooth. Our approach is instead based on bundle methods for regularized risk minimization [27, 30, 9]. In order to achiev e fast conver gence, we use a variant of these methods adapted to structured output learning. W e consider the objecti ve function J ( θ ) = Ω p,γ ( θ ) + L ( θ ) , where L ( θ ) := C n X i =1 ` (max ¯ π ∈ Υ {h θ , Ψ( x i , ¯ π ) i + ∆( π i , ¯ π ) } − h θ , Ψ( x i , π i ) i ) . Direct optimization of J is very expensiv e as computing L in volves computing the maximum over the output space. Hence, we propose to optimize an estimate of the empirical loss ˆ L ( θ ) , which can be computed efficiently . W e define the estimated empirical loss ˆ L ( θ ) as ˆ L ( θ ) := C N X i =1 ` max (Ψ , ∆) ∈ Γ i {h θ , Ψ i + ∆ } − h θ , Ψ( x i , π i ) i . Accordingly , we define the estimated objective function as ˆ J ( θ ) = Ω p,γ ( θ ) + ˆ L ( θ ) . It is easy to verify that J ( θ ) ≥ ˆ J ( θ ) . Γ i is a set of pairs (Ψ( x i , π ) , ∆( π i , π )) defined by a suitably chosen, growing subset of Υ , such that ˆ L ( θ ) → L ( θ ) (cf. Algorithm 1). In general, bundle methods are extensions of cutting plane methods that use a prox-function to stabilize the solution of the approximated function. In the framework of regularized risk minimization, a natural prox-function is giv en by the regularizer . W e apply this approach to the objective ˆ J ( θ ) and solve min θ Ω p,γ ( θ ) + max i ∈ I {h a i , θ i + b i } (2) where the set of cutting planes a i , b i lower bound ˆ L . As proposed in [9, 30], we use a set I of limited size. Moreo ver, we calculate an aggregation cutting plane ¯ a , ¯ b that lower bounds the estimated empirical loss ˆ L . T o be able to solve the primal optimization problem in (2) in the dual space as proposed by [9, 30], we adopt an elegant strategy described in [9] to obtain the aggre gated cutting plane ( ¯ a 0 , ¯ b 0 ) using the dual solution α of (2): ¯ a 0 = X i ∈ I α j a i and ¯ b 0 = X i ∈ I α i b i . (3) The following two formulations reach the same minimum when optimized with respect to θ : min θ ∈H Ω p,γ ( θ ) + max i ∈ I h a i , θ i + b i = min θ ∈H { Ω p,γ ( θ ) + h ¯ a 0 , θ i + ¯ b 0 } . This new aggregated plane can be used as an additional cutting plane in the next iteration step. W e therefore have a monotonically increasing lower bound on the estimated empirical loss and can remove previously generated cutting planes without compromising con ver gence (see [9] for details). The algorithm is able to handle any (non-)smooth conv ex loss function ` , since only the subgradient needs to be computed. This can be done efficiently for the hinge-loss, squared hinge-loss, Huber-loss, and logistic-loss. The resulting optimization algorithm is outlined in Algorithm 1. Algorithm 1 Bundle Methods for Structured Output Algorithm 1: S ≥ 1 : size of the b undle set 2: τ > 0 : linesearch trade-of f (cf. [ ? ] for details) 3: θ (1) = θ p 4: k = 1 and ¯ a = 0 , ¯ b = 0 , Γ i = ∅ ∀ i 5: repeat 6: for i = 1 , .., n do 7: π ∗ = argmax π ∈ Υ {h θ ( k ) , Ψ( x i , π ) i + ∆( π i , π ) } 8: MMV true := max π ∈ Υ {h θ , Ψ( x i , π ) i + ∆( π i , π ) } 9: MMV est := max (Ψ , ∆) ∈ Γ i h θ , Ψ i + ∆ 10: if ` ( MMV true ) > ` ( MMV est ) then 11: Γ i = Γ i ∪ (Ψ( x i , π ∗ ) , ∆( π i , π ∗ )) 12: end if 13: Compute a k ∈ ∂ θ ˆ L ( θ ( k ) ) 14: Compute b k = ˆ L ( θ ( k ) ) − h θ ( k ) , a k i 15: Define Z k ( θ ) := max ( k − S ) +

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment