The Geometry of Generalized Binary Search

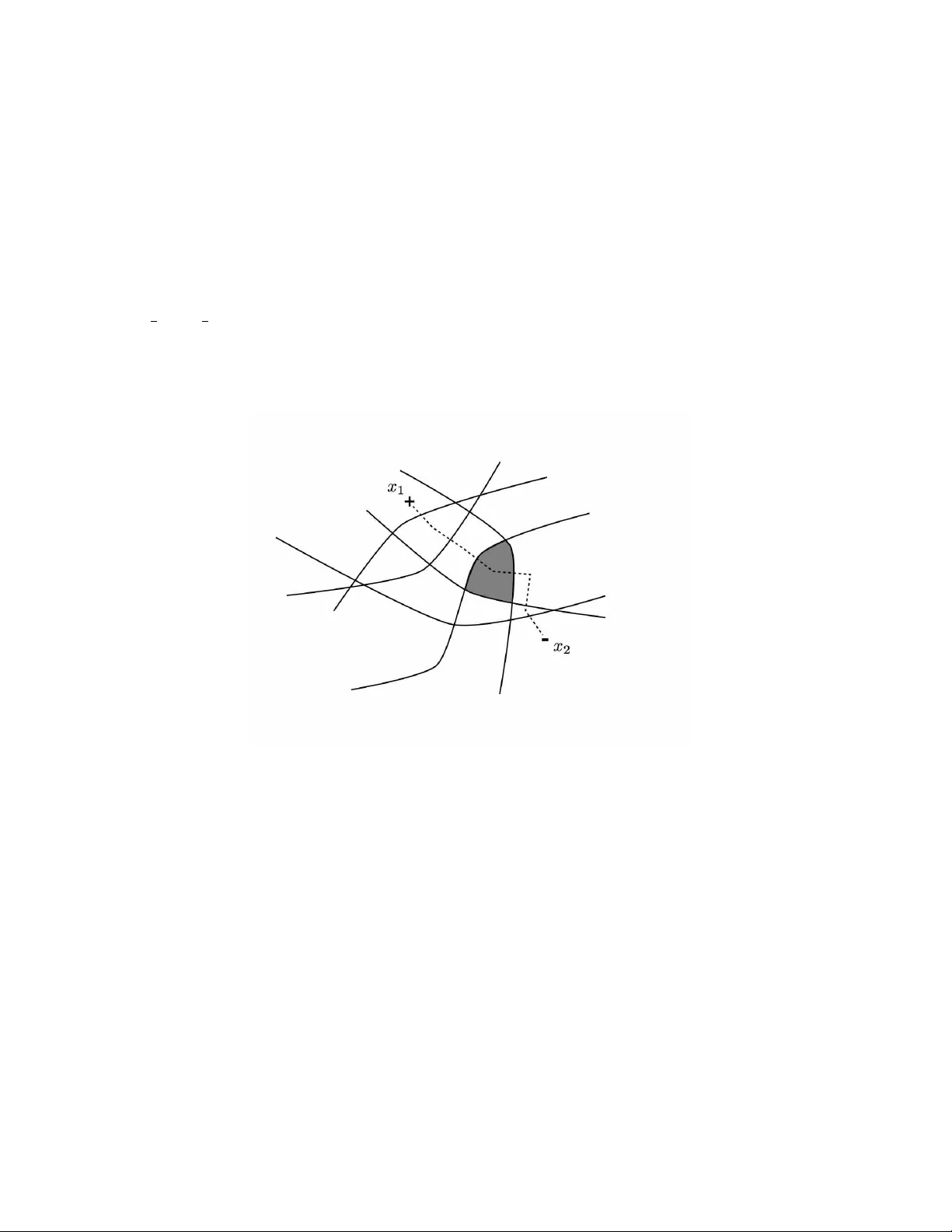

This paper investigates the problem of determining a binary-valued function through a sequence of strategically selected queries. The focus is an algorithm called Generalized Binary Search (GBS). GBS is a well-known greedy algorithm for determining a…

Authors: Robert D. Nowak