Hacking Smart Machines with Smarter Ones: How to Extract Meaningful Data from Machine Learning Classifiers

Machine Learning (ML) algorithms are used to train computers to perform a variety of complex tasks and improve with experience. Computers learn how to recognize patterns, make unintended decisions, or react to a dynamic environment. Certain trained m…

Authors: Giuseppe Ateniese, Giovanni Felici, Luigi V. Mancini

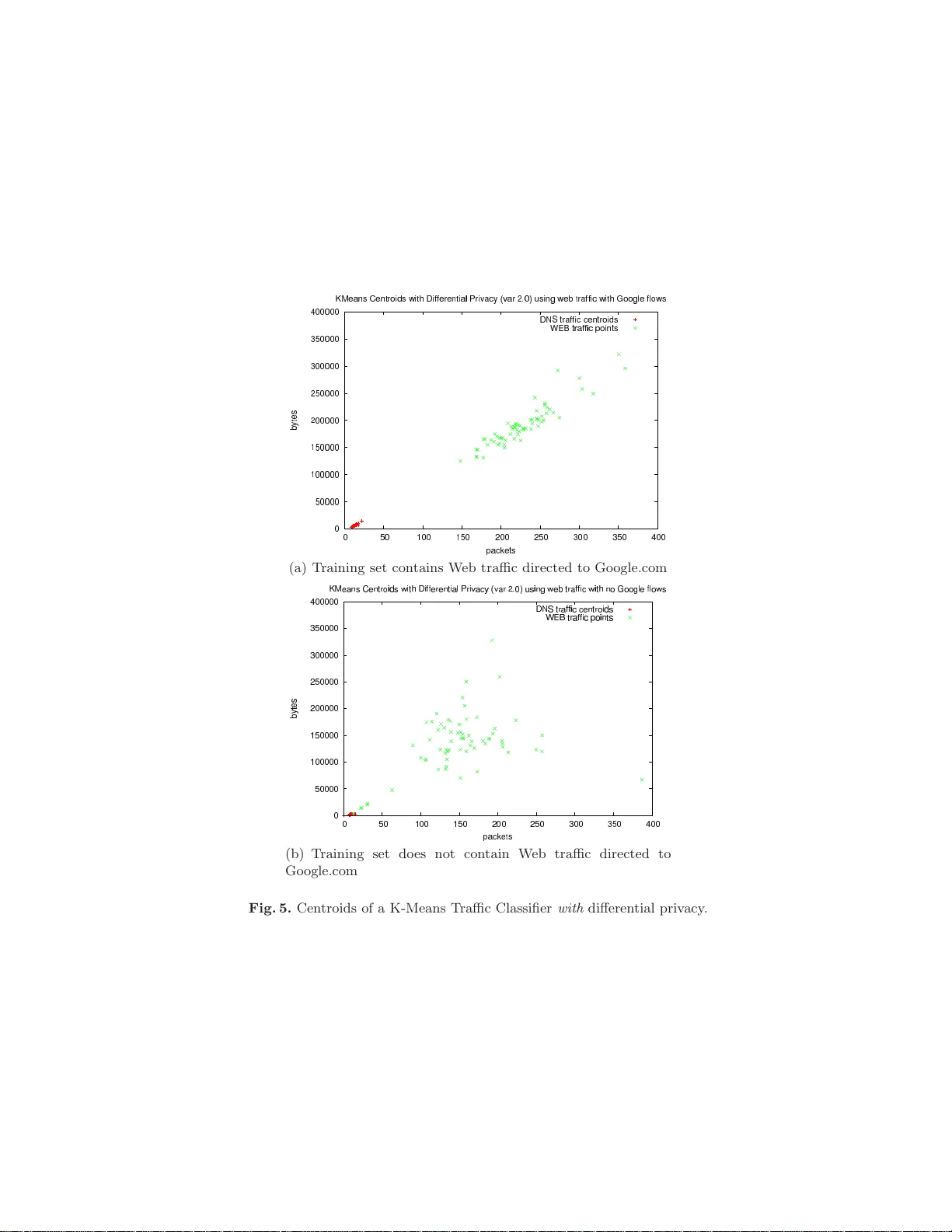

Giuseppe Ateniese¹, Giovanni Felici², Luigi V. Mancini¹, Angelo Spognardi¹, Antonio Villani³, and Domenico Vitali¹

¹ Università di Roma La Sapienza, Dipartimento di Informatica, {ateniese,mancini,spognardi,vitali}@di.uniroma1.it
² Consiglio Nazionale delle Ricerche, Istituto di Analisi dei Sistemi ed Informatica, Roma, giovanni.felici@iasi.cnr.it
³ Università di Roma Tre, Dipartimento di Matematica, villani@mat.uniroma3.it

Abstract. Machine Learning (ML) algorithms are used to train computers to perform a variety of complex tasks and improve with experience. Computers learn how to recognize patterns, make unintended decisions, or react to a dynamic environment. Certain trained machines may be more effective than others because they are based on more suitable ML algorithms or because they were trained through superior training sets. Although ML algorithms are known and publicly released, training sets may not be reasonably ascertainable and, indeed, may be guarded as trade secrets. While much research has been performed about the privacy of the elements of training sets, in this paper we focus our attention on ML classifiers and on the statistical information that can be unconsciously or maliciously revealed from them. We show that it is possible to infer unexpected but useful information from ML classifiers. In particular, we build a novel meta-classifier and train it to hack other classifiers, obtaining meaningful information about their training sets. This kind of information leakage can be exploited, for example, by a vendor to build more effective classifiers or to simply acquire trade secrets from a competitor's apparatus, potentially violating its intellectual property rights.
1 Introduction

Machine learning classifiers are designed to make effective and efficient predictions of "patterns" from large data sets. Many applications have been proposed in the literature (e.g., [27, 54, 49, 23, 25]) and machine learning algorithms pervade several contexts of information technology. ML approaches (such as Support Vector Machines, Clustering, Bayesian networks, Hidden Markov Models, etc.) rely on quite distinct mathematical concepts, but generally they are employed to solve similar problems. A machine learning algorithm consists of two phases: training and classification. During training, the ML algorithm is fed with a training set of samples. In this phase, the relationships and the correlations implied in the training samples are gathered inside the model. Afterwards, the model is used during the classification phase to classify and evaluate new data.

ML classifiers are usually able to manage a large amount of data and to adapt to dynamic environments. Their versatility makes them suitable for several important tasks. For example, classification and regression models are employed to analyze current and historical trends to make predictions in financial markets [24, 33, 8], to study biological problems [54], to support medical diagnosis [30, 42, 57], and to classify network traffic or detect anomalies [22, 28, 39, 12, 49].

One may think that it is safe to release a classifier, whether in hardware or software, since intellectual property laws would prevent anyone from producing a similar apparatus, for example, by copying its code or its design principles. However, releasing a trained classifier may be subject to unexpected information leakages that make it possible to produce a competitive product without violating any intellectual property rights.
Let us consider, for instance, a classifier $C_a$ that is less effective than a classifier $C_b$ produced by a competitor. The ML algorithms used in $C_b$ may be publicly available or be inferred through reverse engineering. For example, commercial software products for speech recognition, such as Nuance Dragon NaturallySpeaking [1], utilize widely studied Hidden Markov Models. These algorithms, along with their optimizations, are well understood and quite standard. Thus, the common assumption is that anyone can easily replicate them. In particular, we could assume that the training set used for $C_b$ is superior, in the sense that it makes $C_b$ more effective than $C_a$ even though both implement essentially the same ML algorithms. What makes $C_b$ better than $C_a$ is the specific knowledge formed during the training phase, inferred from the training set. For instance, a classifier that makes stock market predictions based on a neural network holds its power in the weights at its hidden layer (see Appendix A). But those weights depend exclusively on the training set, hence they are valuable information that must be treasured.

Thus, it is fair to ask: Is it safe to release a profitable ML classifier? Would selling a software/hardware classifier reveal concrete hints about its training set, uncovering the secrets of its effectiveness and jeopardizing the vendor?

We show that a classifier can be hacked and that it is possible to extract from it meaningful information about its training set. This can be accomplished because a typical ML classifier learns by changing its internal structure to absorb the information contained in the training data. In particular, we devise and train a meta-classifier that can successfully detect and classify these changes and deduce valuable information.
However, we could not report on products released by commercial vendors because we did not get legal permission to hack a proprietary product. Nevertheless, we analyzed the same ML algorithms employed by commercial products. For example, we considered the HMM-based speech recognition engine of the open-source package VoxForge, which is similar to the ones employed by commercial products such as Nuance Dragon NaturallySpeaking. We note, in addition, that using open-source software makes our experiments easily reproducible by others.

It is important to observe that we are not interested in privacy leaks, but rather in discovering anything that makes classifiers better than others. In particular, we do not care about protecting the elements of the training set. Consider the following example: a speech recognition software recognizes spoken words better than competing products, even though they all implement the same ML algorithms. The training set is composed of commonly spoken words, thus it does not make sense to talk about privacy protection. However, we show how to build a meta-classifier trained to reveal that, for instance, the majority of training samples came from female voices or from voices of people with marked accents (e.g., Indian, British, American, etc.). Then, we can extrapolate certain hidden attributes which are somehow absorbed by the learning algorithm, thus possibly uncovering the secret sauce that makes the speech recognition software stay ahead of the competition.

Therefore the type of leakage we are interested in is quite different from that considered in privacy-preserving data mining and statistical databases [14] or differential privacy [9, 19]. Indeed, in Section 4, we show that a system providing Differential Privacy is utterly insecure in our model.
Remark: We introduce a novel type of information leakage and show that it is inherent to learning. This is far from obvious and, indeed, quite unexpected: Clearly, all learning algorithms must recognize patterns in their dataset. Thus, classifiers will inherently reveal some information. The open question is whether this information has any meaning. Indeed, classifiers are very opaque objects and make it difficult to infer anything useful at all. What we show here is that it is still possible to extract something meaningful relating to properties of the training set. This is surprising and achievable through a meta-classifier that is specially trained to expose this information. However, we do not attempt to formally define this new type of information leakage nor provide mechanisms to prevent it.

1.1 Contributions

Our results evince realistic issues facing machine learning algorithms. In particular, the main contributions of our work are:

1. We put forward a new type of information leakage that, to the best of our knowledge, has not been considered before. We show that it is unsafe to release trained classifiers since valuable information about the training set can be extracted from them.
2. We propose a way to leverage the above information leakage, devising a general attack strategy that can be used to hack ML classifiers. In particular, we define a model for a meta-classifier that can be trained to extract meaningful data from targeted classifiers.
3. We describe several attacks against existing ML classifiers: we successfully attacked an Internet traffic classifier implemented via Support Vector Machines (SVMs) and a speech recognition software based on Hidden Markov Models (HMMs).
We believe existing classifiers, whether commercial products or prototypes released to the research community, are susceptible to our general attack strategy. We put forward the importance of protecting the training set and the need for novel machine learning techniques that would prevent determined competitors from probing an ML classifier and learning trade secrets from it.

1.2 Organization of this paper

The rest of the paper is organized as follows: Section 2 describes the problem and introduces an attack methodology that makes use of an ML model. Section 3 shows how we successfully applied our proposed methodology to hack trained SVM and HMM classifiers. In Section 4 we analyze the behavior of our attack methodology when the training set is provided through differential privacy. Section 5 contains some related work. Section 6 concludes our work with some remarks.

2 Hacking Machine Learning classifiers

In this paper we are interested in Machine Learning algorithms used for classification purposes, such as Internet traffic classifiers, speech recognition systems, or financial market predictors. Our goal is to hack a trained classifier to obtain information that was implicitly absorbed from the elements the classifier received as input.

Consider for instance the Artificial Neural Networks (ANNs) based on the Multilayer perceptron (please refer to Appendix A for details about this algorithm). Consider a simple neural network that has to learn the identity function over a vector of eight bits, only one of them set to 1 (this example is taken from the popular book of Mitchell [47]). The network has a fixed structure with eight input neurons, three hidden units and eight output neurons. Using the backpropagation algorithm over the eight possible input sequences, the network eventually learns the target function.
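The eight-bit identity network described above can be reproduced in a few lines of plain Python. What follows is a minimal sketch rather than Mitchell's code: the function name, the learning rate, the number of epochs and the weight initialization range are all assumptions chosen for illustration, and how close the hidden activations come to a clean binary code depends on those choices.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def train_identity_network(epochs=1000, lr=0.3, seed=0):
    """Train an 8-3-8 sigmoid network on the eight one-hot inputs.

    Returns (hidden activations per input, initial error, final error).
    All hyperparameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    n_in, n_hid = 8, 3
    # Each weight row carries a trailing bias weight.
    w_hid = [[rng.uniform(-0.1, 0.1) for _ in range(n_in + 1)] for _ in range(n_hid)]
    w_out = [[rng.uniform(-0.1, 0.1) for _ in range(n_hid + 1)] for _ in range(n_in)]
    patterns = [[1.0 if i == j else 0.0 for j in range(n_in)] for i in range(n_in)]

    def forward(x):
        h = [sigmoid(sum(w * v for w, v in zip(ws, x + [1.0]))) for ws in w_hid]
        o = [sigmoid(sum(w * v for w, v in zip(ws, h + [1.0]))) for ws in w_out]
        return h, o

    def total_error():
        return sum((t - o) ** 2 for x in patterns for t, o in zip(x, forward(x)[1]))

    initial_error = total_error()
    for _ in range(epochs):
        for x in patterns:  # the target equals the input (identity function)
            h, o = forward(x)
            delta_o = [ok * (1 - ok) * (t - ok) for ok, t in zip(o, x)]
            delta_h = [hj * (1 - hj) * sum(delta_o[k] * w_out[k][j] for k in range(n_in))
                       for j, hj in enumerate(h)]
            for k in range(n_in):
                for j, v in enumerate(h + [1.0]):
                    w_out[k][j] += lr * delta_o[k] * v
            for j in range(n_hid):
                for i, v in enumerate(x + [1.0]):
                    w_hid[j][i] += lr * delta_h[j] * v
    hidden = [forward(x)[0] for x in patterns]
    return hidden, initial_error, total_error()


if __name__ == "__main__":
    hidden, before, after = train_identity_network()
    for row in hidden:
        print(" ".join(f"{v:.2f}" for v in row))
    print(f"squared error: {before:.2f} -> {after:.2f}")
```

Printing the hidden activations for each of the eight inputs gives the kind of view an adversary has of a disclosed model: the learned representation is visible without any access to the training samples themselves.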
By examining the weights of the three hidden units, it is possible to observe how they actually encode (in binary) eight distinct values, namely all possible sequences over three bits (000, 001, 010, ..., 111). The exact values of the hidden units for one typical run of the backpropagation algorithm are shown in Table 1. Basically, the hidden units of the network were able to capture the essential information from the eight inputs, automatically discovering a way to represent the inputs. Thus, it is possible to extract the (possibly sensitive) cardinality of the training set by just looking at the trained network.

In the following section, we describe a method to extract this type of sensitive information. Namely, we show in Section 3.2 that it is possible to determine if a certain type of network traffic was included in the training set of an Internet classifier trained on Cisco network data flows [53]. Similarly, we hacked a speech recognition system and were able to determine the accent of speakers employed during its training. This case study is reported in Section 3.1.

| Input | Hidden values | Output |
|---|---|---|
| 10000000 | .89 .04 .08 | 10000000 |
| 01000000 | .15 .99 .99 | 01000000 |
| 00100000 | .01 .97 .27 | 00100000 |
| 00010000 | .99 .97 .71 | 00010000 |
| 00001000 | .03 .05 .02 | 00001000 |
| 00000100 | .01 .11 .88 | 00000100 |
| 00000010 | .80 .01 .98 | 00000010 |
| 00000001 | .60 .94 .01 | 00000001 |

Table 1. The weights of the hidden states, taken from Figure 4.7 of [47]

2.1 An attack strategy

In this section we devise a general attack strategy against a trained classifier that can make an attacker able to discover some statistical information about the training set.

We define the training dataset $D$ as a multiset where all the elements are couples of the form $\{(\mathbf{a}, l) \mid \mathbf{a} = \langle a_1, a_2, \dots, a_n \rangle\}$; to simplify, we can assume without loss of generality that $a_i \in \{0,1\}^m$ and $l \in \{0,1\}^{\nu}$. Each training element $\mathbf{a}$ is represented as a vector of $n$ features (the values $a_i$ of the vector) and has an associated classification label $l$. $C$ is a generic machine learning classifier trained on $D$: it could be an Artificial Neural Network (ANN), a Hidden Markov Model (HMM) or a simple Decision Tree (DT).

We assume that $C$ is disclosed after the end of the training phase. This means that in our model the adversary cannot taint $C$ during the learning process. Instead, we assume that the adversary is able to arbitrarily modify the behavior of $C$ during the classification process. In fact, when $C$ is disclosed, it includes the set of instructions for the classification task as well as the model definition; hence, both the data structures and the instruction sequences are completely in the hands of the adversary. The assumption that the adversary has complete access to the classifier is reasonable since it is possible to extract the plain classifier also from a binary executable through, for instance, dynamic analysis techniques [13].

Each classifier $C$ can be encoded in a set of feature vectors that can be used as input to train a meta-classifier $MC$. The set of feature vectors that represents $C$ is denoted by $F_C$. For example, in the case of an SVM, the set $F_C$ would contain the list of all the support vectors of the classifier $C$.

In Figure 1, $C_x$ is the trained classifier that the adversary wants to examine in order to infer some statistical information about the training set $D_x$. Let $P$ be the property that the adversary wants to learn about the undisclosed $D_x$. We write $P \approx D$ to say that the property $P$ is preserved by the dataset $D$.
For instance, in the context of medical diagnosis applications, $P$ could be: the entries of the training set are equally balanced between males and females. To discern whether $P \approx D_x$, the adversary can build a meta-classifier $MC$, that is, a classifier trained over a particular dataset $D_C$ composed of the elements $\mathbf{a} \in F_{C_i}$ labeled with $l \in \{P, \overline{P}\}$. The label is assigned according to the nature of the dataset used to train the classifier $C_i$.

Fig. 1. Attack methodology: the target training set $D_x$ produced $C_x$. Using several training sets $D_1, \dots, D_n$ with or without a specific property, we build $C_1, \dots, C_n$, namely the training set for the meta-classifier $MC$ that will classify $C_x$.

To train $MC$ the adversary has to build the training set first. For this purpose, the adversary generates a vector of specific datasets $\mathcal{D} = (D_1, \dots, D_n)$ in such a way that $\mathcal{D}$ contains a (possibly) balanced amount of instances reflecting $P$ and $\overline{P}$. After this step, the adversary trains the meta-classifier $MC$ as described in Algorithm 1. The algorithm takes as input the created training sets $\mathcal{D}$ and their corresponding labels. It starts with an empty dataset (line 3). Then, it trains a classifier $C_i$ on each created dataset (line 5) and gets the representation of the classifier as a set of feature vectors (line 6). Then, it adds each feature vector to the dataset $D_C$ (line 8). Finally, it trains the meta-classifier using the resulting dataset $D_C$ (line 11).

Next, the adversary uses the meta-classifier $MC$ on $F_{C_x}$ to predict which class $l_x$ the classifier $C_x$ belongs to. This is already a new form of information leakage since the adversary learns whether the original training data $D_x$ preserves $P$ or not. In practice, thanks to our attack, we are able to infer any key statistical property $P$ preserved by the training set by performing a sort of brute-force attack on the set of properties.
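The training and prediction steps described above can be sketched in plain Python. This is only an illustration under strong assumptions: the toy data generator, the nearest-centroid learner standing in for both the base classifiers and the meta-classifier, and every function name are hypothetical and do not come from the paper, which uses SVMs, HMMs and a decision tree.

```python
import random
from statistics import mean

# Hypothetical toy setup: property P shifts the first feature by 3.


def make_dataset(rng, has_property, size=40):
    """Build a labeled toy dataset D_i that does or does not reflect P."""
    shift = 3.0 if has_property else 0.0
    data = []
    for _ in range(size):
        label = rng.randint(0, 1)
        features = (label + shift + rng.gauss(0, 0.1), label + rng.gauss(0, 0.1))
        data.append((features, label))
    return data


def train(dataset):
    """Toy learner: one centroid (mean feature vector) per label."""
    rows_by_label = {}
    for features, label in dataset:
        rows_by_label.setdefault(label, []).append(features)
    return {label: tuple(mean(col) for col in zip(*rows))
            for label, rows in rows_by_label.items()}


def get_feature_vectors(classifier):
    """Encode a trained classifier C as its set of feature vectors F_C."""
    return [classifier[label] for label in sorted(classifier)]


def classify(model, vector):
    """Return the label whose centroid is closest to the vector."""
    return min(model, key=lambda label: sum((x - y) ** 2
                                            for x, y in zip(vector, model[label])))


def train_mc(datasets, labels):
    """Follow the steps of the meta-classifier training procedure."""
    d_c = []                                  # start from an empty dataset
    for d_i, l_i in zip(datasets, labels):
        c_i = train(d_i)                      # train C_i on D_i
        for a in get_feature_vectors(c_i):    # encode C_i as F_{C_i}
            d_c.append((a, l_i))              # add (a, l_i) to D_C
    return train(d_c)                         # train MC on D_C


def predict_property(mc, target_classifier):
    """Classify every feature vector of C_x and take a majority vote."""
    votes = [classify(mc, a) for a in get_feature_vectors(target_classifier)]
    return max(sorted(set(votes)), key=votes.count)


if __name__ == "__main__":
    rng = random.Random(0)
    flags = [True, False] * 5
    mc = train_mc([make_dataset(rng, f) for f in flags],
                  ["P" if f else "not P" for f in flags])
    c_x = train(make_dataset(rng, True))      # the undisclosed D_x reflects P
    print(predict_property(mc, c_x))
```

The majority vote in `predict_property` is one possible way to turn per-vector predictions into a single answer about $C_x$; it is a design choice of this sketch, not something the text prescribes.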
    Algorithm 1: Training of the meta-classifier
    Input:  D: the array of training sets
            l: the array of labels, where each l_i ∈ {P, not P}
    Output: the meta-classifier MC
     1  TrainMC(D, l)
     2  begin
     3      D_C = ∅
     4      foreach D_i ∈ D do
     5          C_i ← train(D_i)
     6          F_{C_i} ← getFeatureVectors(C_i)
     7          foreach a ∈ F_{C_i} do
     8              D_C = D_C ∪ {(a, l_i)}
     9          end
    10      end
    11      MC ← train(D_C)
    12      return MC
    13  end

It is important to remark that with this methodology the adversary extracts external information, NOT in the form of attributes of the dataset $D_x$. These are essentially statistical properties inferred from the relationship among dataset entries. For example, in Section 3.1 we show how to attack a speech recognition classifier by extracting information about the accent of the speakers. This information is not supposed to be captured explicitly by the model, nor is it an attribute of the training set.

To further improve the quality of the classification process, some filters can be applied to the set $D_C$ of models resulting from the training phase. The filters depend on the problem domain and are used to find optimal models for the property $P$ and get rid of less significant entries. In some cases (as in the example in Section 3.2), this step can be simply assimilated into the training phase of the meta-classifier. In other cases, as in the example in Section 3.1, we will discuss a filter realized with the Kullback-Leibler divergence [43].

3 Case studies

In this section we provide two examples of attacks performed according to the methodology introduced in Section 2.1. We probe two complex systems, one of which is largely used by software vendors and research communities. As our first example, we attack a Speech Recognition system realized by Hidden Markov Models; later, we consider a network traffic classifier implemented by Support Vector Machines.
Our experiments are performed using Weka [56]. In each experiment, we use a Decision Tree as the meta-classifier $MC$ (more details on Decision Trees are reported in Appendix B); we always use the C4.5 implementation, namely the J48 module, included within the Weka framework. Clearly, the attack could be replicated using meta-classifiers based on other ML algorithms.

The evaluation of our experiments is performed using standard metrics: (1) recall, that is the true positive rate, and (2) precision, that is the ratio of true positives to the total number of positive predictions of the model. Furthermore, (3) accuracy, namely the rate of correct predictions made by the classifier over the number of instances of the entire dataset, can be easily derived from the confusion matrices in Sections 3.1 and 3.2.

In order to evaluate the effectiveness of our attack strategy, we crafted several classifiers trained on strongly biased training sets. These classifiers would probably obtain very low performance during the classification phase; as such, they would be unlikely to be employed in a commercial product. Moreover, in our experiments, we decided to focus on simple binary properties. Our aims are to provide an attack strategy that could be easily generalized and to demonstrate that it is possible to infer information on the training set by looking at the weights learned by a classifier. Attacking commercial products is only a matter of tuning the generation of the sets $D_1, \dots, D_n$ according to more complex properties.

To evaluate our attack strategy we make two assumptions: (1) the adversary knows which machine learning algorithm is employed by the target; (2) the adversary has complete access to the classifier. We claim that these two assumptions are reasonable.
In fact, the information about what algorithms are employed is not considered sensitive, and sometimes it is advertised by the vendor itself; for instance, the newest version of the NaturallySpeaking engine (version 12 at the time of writing) leverages HMMs and five-grams to perform speech recognition, and this information can be gathered from Nuance's website and patents.

As for the second assumption, note that in many cases vendors need to hand out their classifiers to end users by embedding them within the software executable or apparatuses; as such, an adversary would be able to extract the classifier using, for instance, techniques based on dynamic binary analysis. Performing this type of analysis is orthogonal to our attack methodology and is out of the scope of this work.

It is worth remarking that the structure of the training set (e.g., the list of attributes) is not necessary to perform our attack; indeed, we are interested in the external information about the training data and we do not consider the attribute values.

3.1 Hidden Markov Models

Background

A Markov Model is a stochastic process that can be represented as a finite state machine in which the transition probability depends only on the current state and is independent from any prior (and future) state of the process. A Hidden Markov Model, introduced in [16], is a particular type of Markov Model for modeling sequences that can be characterized by an underlying process generating an observable sequence. Indeed, only the outputs of the states are observed (the actual sequence of the states of the process cannot be directly observed).
One of the most elegant examples to describe HMMs was conceived by Jason Eisner [26]: Suppose that, in the year 2799, a climate scientist is studying the weather in Baltimore, Maryland, for the summer of 2007 by examining a diary, which had recorded how many ice creams were eaten by Jason every day of that summer. Using only this record (the observable sequence), it is possible to estimate with a good approximation the daily temperature (the hidden sequence). HMMs solve the sequential learning problem, that is, a special learning problem where the data domain is sequential by its nature (e.g., the speech recognition problem).

Fig. 2. An example of Hidden Markov Model with three states.

In Figure 2, a simple model $M$ is represented that can be described by:

- a set of hidden states $Q = q_1, q_2, \dots, q_m$
- a transition probability matrix
  $$A = \begin{pmatrix} a_{11} & a_{12} & \dots & a_{1m} \\ a_{21} & a_{22} & \dots & a_{2m} \\ \vdots & \vdots & \ddots & \vdots \end{pmatrix}$$
  where the element $a_{i,j}$ represents the probability of moving from state $i$ to state $j$
- an emission probability matrix $B$ ($m \times n$), where the element $b_{j,k}$ is the probability to produce the observable $o_k$ from the state $j$, that is $b_{j,k} = B_j(k) = P(o_k \mid q_j)$

The HMM model is based on two main assumptions. The first is the Markov assumption, namely that given a sequence $x_1, \dots, x_{i-1}$ of transitions between states, the probability of the next state depends only on the present state:

$$P(x_i = q_j \mid x_1, x_2, \dots, x_{i-1}) = P(x_i = q_j \mid x_{i-1})$$

The second is the output independence assumption, namely that given a sequence $x_1, \dots, x_T$ of transitions between states, where $x_i = q_j$, and the observed sequence $y_1, \dots, y_T$, the emission probability of any observable $o_k$ depends only on the present state and not on any other state or observable:

$$P(y_i = o_k \mid x_1, \dots, x_i, \dots, x_T, y_1, \dots, y_T) = P(o_k \mid q_j)$$

In Figure 2, three states ($q_1$, $q_2$ and $q_3$) are shown, together with the transition probabilities $a_{ij}$ and, for the three states, the emission probabilities ($B_1$, $B_2$, $B_3$ respectively) of the three observables ($o_1$, $o_2$, $o_3$).

The HMM models are well suited to solve three types of problems: likelihood, decoding and learning [38]. Likelihood problems are related to evaluating the probability of observing a given observable sequence $y_1, \dots, y_T$, given a complete HMM model, where both matrices $A$ and $B$ are known. Decoding problems call for the evaluation of the best sequence of hidden states $x_1, \dots, x_T$ that can have produced a given observable sequence $y_1, \dots, y_T$. Learning problems consist of reconstructing the two matrices $A$ and $B$ of an HMM, given the set of states $Q$ and one (or more) observation sequence $Y$. For this task, the Viterbi and the Baum-Welch algorithms are used respectively to train and tune the HMM.

HMM for speech recognition

In this section we describe the attack on the HMM in the specific case of Speech Recognition Engines (SRE). Speech Recognition (SR) is the process of converting a sound recorded through acquisition hardware to a sequence of written words. The applications of SR are manifold: dictation, voice search, hands-free command execution, audio archive searching, etc. The predominant technology used to perform this task is the HMM [37], and many tools are nowadays available ([5, 41]). We exploited our methodology to verify whether the HMM was trained with a biased training set: according to the methodology described in Section 2.1, we are able to detect with high confidence whether the HMM was trained only with people of the same nationality.
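The likelihood and decoding problems from the HMM background can be made concrete with a small sketch. The two-state model below is loosely inspired by the ice-cream example, but every number in it is invented for illustration (these are not Eisner's parameters), and the initial state distribution `START` is an extra assumption, since the text only defines $Q$, $A$ and $B$. The forward recursion computes the likelihood of an observation sequence, and the Viterbi recursion recovers the most probable hidden state sequence.

```python
# Hypothetical toy HMM: hidden weather states, observed ice creams per day.
STATES = ("hot", "cold")
START = {"hot": 0.5, "cold": 0.5}                 # assumed initial distribution
A = {"hot": {"hot": 0.7, "cold": 0.3},            # transition probabilities a_ij
     "cold": {"hot": 0.4, "cold": 0.6}}
B = {"hot": {1: 0.2, 2: 0.4, 3: 0.4},             # emission probabilities b_jk
     "cold": {1: 0.5, 2: 0.4, 3: 0.1}}


def likelihood(observations):
    """Forward algorithm: probability of the observation sequence."""
    alpha = {q: START[q] * B[q][observations[0]] for q in STATES}
    for o in observations[1:]:
        alpha = {q: sum(alpha[p] * A[p][q] for p in STATES) * B[q][o]
                 for q in STATES}
    return sum(alpha.values())


def decode(observations):
    """Viterbi algorithm: most probable sequence of hidden states."""
    delta = {q: START[q] * B[q][observations[0]] for q in STATES}
    backpointers = []
    for o in observations[1:]:
        # For each state q, remember the best predecessor state.
        best_prev = {q: max(STATES, key=lambda p: delta[p] * A[p][q])
                     for q in STATES}
        delta = {q: delta[best_prev[q]] * A[best_prev[q]][q] * B[q][o]
                 for q in STATES}
        backpointers.append(best_prev)
    state = max(STATES, key=lambda q: delta[q])
    path = [state]
    for best_prev in reversed(backpointers):
        state = best_prev[state]
        path.append(state)
    return path[::-1]


if __name__ == "__main__":
    diary = [3, 1]                                # ice creams eaten on two days
    print(likelihood(diary))
    print(decode(diary))
```

Both functions rely on the two assumptions stated in the background: the Markov assumption lets each step depend only on the previous state, and output independence lets each emission be multiplied in separately.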
To recognize speech, SREs require two types of input:

- an Acoustic Model, which is created by taking speech audio files, i.e., the speech corpus, and their transcriptions, and combining them into a statistical representation of the sounds that make up each word;
- and either a Language Model or a Grammar File. Both describe the set of words that the statistical model will be able to classify. However, the first contains the probabilities of sequences of words, while the second contains a set of predefined combinations of words. In the following experiment, this paper uses only the Language Model.

Let us briefly introduce the typical SRE workflow. An unknown speech waveform is captured by the acquisition hardware, and Pulse Code Modulation provides the digital representation of the analog audio signal. This bitstream is then converted into mel-frequency cepstral coefficients (MFCCs), namely a representation of the short-term power spectrum of sounds. The MFCCs are the observables of a Hidden Markov Model that changes state over time and that generates one (or more) observables once it enters a new state.

In this scenario, the states of the HMM are all the possible subphonemes of the language, while the transition matrix contains the probability for each subphoneme to cycle over itself or to move to the next subphoneme. The emission probabilities are the probabilities to observe a certain MFCC from each subphoneme. The only possible transitions between the states of each phoneme are to themselves or to successive states, in a left-to-right fashion; the self-loops make it possible to deal with the variable length of each phoneme with ease. Both transition and emission probabilities are built using the Viterbi algorithm [32] over a large speech corpus.
Since the MFCC files are vectors of real-valued numbers, they are approximated by a multivariate Gaussian distribution (note that the probability of having exactly the same vector would be nearly 0). For any different state (i.e., subphoneme), each dimension of the vector has a certain mean and variance that represent the likelihood of an individual acoustic observation from that state.

For the sake of our experiments, we build the Hidden Markov Models using the Hidden Markov Model Toolkit (HTK) [60]. HTK consists of a set of library modules and tools available in C. The toolkit provides a high level of modularity and is organized through a set of libraries with functions (e.g., HMem for memory management, HSigP for signal processing, ...) and a small core. The MFCC files were gathered from the VoxForge project [2], the most important speech corpus and acoustic model repository for open-source speech recognition engines. Moreover, each speech file released by VoxForge is associated with several categories such as gender, age range, and pronunciation dialect. The aim of our experiment is to extract this information, which is implicitly correlated with the contents, even if it does not appear as an attribute in our dataset.

Attack description

The main objective of this attack is to build a meta-classifier for the following property $P$: the classifier was trained only with people who speak an Indian English dialect. We emphasize that this is external information as introduced in Section 2.1: the speech dialect is NOT explicitly used during the training process, but in practice it influences the output of the classifier.
The first part of the experiment describes the encoding of the HMMs; next, we describe the decision tree of the meta-classifier; finally, we present an improved version of the classifier that uses a filter to improve the classification.

To carry out the attack, we retrieved 11,137 recordings from the VoxForge corpus. In particular, for our experiment, we took only the MFCC files in the English language. Each track comes with a form containing some meta-information (e.g., gender, age, pronunciation dialect). We have partitioned the corpus according to this meta-information; for this experiment, we have considered the partition containing the recordings made with the same pronunciation dialect and similar recording equipment. We preprocessed the corpus with the HTK toolkit in order to minimize the environmental noise. Starting from this partition, we have created $\mathcal{D}$ according to the rule defined in Section 2.1. Then, we have trained each classifier $C_i$ as described in Algorithm 1. After that, we started with the encoding phase, which is described below.

Each classifier $C_i$ is represented in the HTK toolkit by an ASCII file containing an HMM for each phoneme belonging to the English language. Each HMM is composed of: a transition probability matrix $A$ ($n \times n$), which describes the transitions between hidden states, and the two vectors $M = (\mu_1, \mu_2, \dots, \mu_m)$ and $V = (\sigma_1, \sigma_2, \dots, \sigma_m)$ that are respectively the mean and variance of the output probability distribution from a given hidden state (see Section 3.1). In our experiments we took the default HTK values during the training step (i.e., $m = 25$ and $n = 5$). To encode a single HMM we chose to focus only on the output distributions, that is, the couple of vectors $(M, V)$.
The idea is that all these values are initialized in the early steps of the training, according to a mean computed over the entire MFCC dataset. Since all the values are then iteratively refined by the HTK toolkit, we expect them to be correlated in some way with the voices in the training set and, by extension, with the pronunciation dialects. For this reason we set the feature vector $a \in F_C$ as follows:

$$a = (ph, \mu_1, \mu_2, \ldots, \mu_m, \sigma_1, \sigma_2, \ldots, \sigma_m, l_i)$$

where $ph$ is a string value representing a phoneme, $\mu_1, \mu_2, \ldots, \mu_m$ and $\sigma_1, \sigma_2, \ldots, \sigma_m$ are the output probability vectors, and $l_i \in \{\text{Indian}, \text{not Indian}\}$ is the label of the current row. It is important to notice that this encoding gives a row in $D_C$ for each phoneme of the acoustic model. Our training set was composed of 5,420 tuples equally balanced over the two classes considered for this experiment (i.e., 50% of the training data were generated by Indian speakers and the remaining 50% by people speaking with a different accent). The test set was composed of 1,016 instances: 774 of these are labeled not Indian and the remaining 242 are labeled Indian. The training ended up with a very complex meta-classifier: the decision tree was composed of more than 811 nodes with 610 leaves.

| Indian | not Indian | ← classified as |
|---|---|---|
| 220 | 22 | Indian |
| 72 | 702 | not Indian |

Table 2. The confusion matrix of the meta-classifier

| Class | Precision | Recall |
|---|---|---|
| not Indian | 0.97 | 0.91 |
| Indian | 0.75 | 0.91 |

Table 3. The precision and recall summary of the meta-classifier

Table 2 reports the confusion matrix obtained from this experiment (we recall that the confusion matrix shows how correctly a classifier assigned the labels to the elements of the input set). The not Indian classifiers are correctly classified with a precision of 0.97, whereas the Indian classifiers are recognized with a precision of 0.75.
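The figures in Table 3 can be recomputed from Table 2. The following is a minimal sketch, assuming that each row of Table 2 is the actual class and each column the predicted class (this reading is consistent with the test set composition of 242 Indian and 774 not Indian instances); the function and variable names are illustrative only.

```python
# Confusion matrix from Table 2.
# Assumption: rows are the actual class, columns the predicted class.
LABELS = ["Indian", "not Indian"]
CONFUSION = [
    [220, 22],   # actual Indian:     220 predicted Indian, 22 predicted not Indian
    [72, 702],   # actual not Indian:  72 predicted Indian, 702 predicted not Indian
]


def precision_recall(matrix, k):
    """Return (precision, recall) for the class at index k."""
    true_pos = matrix[k][k]
    predicted_k = sum(row[k] for row in matrix)  # column sum: everything predicted as k
    actual_k = sum(matrix[k])                    # row sum: everything that really is k
    return true_pos / predicted_k, true_pos / actual_k


if __name__ == "__main__":
    for k, label in enumerate(LABELS):
        p, r = precision_recall(CONFUSION, k)
        print(f"{label}: precision={p:.3f} recall={r:.3f}")
```

The low precision of the Indian class comes from the 72 not Indian instances that land in the Indian column, which is large relative to the 220 true positives.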
The corresponding recall values are 0.909 for the Indian class and 0.907 for the not Indian class.

One of the most interesting features provided by the C4.5 algorithm is the order in which the attributes appear in the decision tree. In fact, C4.5 puts the most representative attributes at the higher levels of the tree. In our experiment, one of the most representative nodes is $\sigma_2$. The frequencies of each value of $\sigma_2$ in the training data of the meta-classifier are represented in Figure 3.

[Figure 3: histogram; vertical axis: number of occurrences; series: Indian, not Indian]

Fig. 3. The frequency of the values of $\sigma_2$ for all phonemes in the training data of the meta-classifier.

It is easy to notice that the mean values of the two distributions are considerably shifted and can easily be recognized with respect to the class. Our meta-classifier is very effective in catching those differences; hence, as our experiments show, it correctly classifies most of the test set.

To further improve the quality of MC, we applied a filter to the training set $D_C$. Our goal was to extract the phonemes that best differentiate the language dialect. To perform this task, we employed the Kullback-Leibler (KL) divergence between the output probability distributions of the models. The KL divergence is defined as follows:

$$D_{KL}(P \,\|\, Q) = \sum_i P(i) \log \frac{P(i)}{Q(i)} \tag{1}$$

A low $D_{KL}$ value means a high similarity of the two probability distributions, while high divergence values correspond to a lower similarity. This means that the phonemes with the highest divergence are the ones that best discriminate the Indian accent from others.

Since the output probabilities follow a Normal distribution, we used the following equation to compute the KL divergence:

$$D_{KL}(X_i \,\|\, X_j) = \frac{(\mu_i - \mu_j)^2}{2\sigma_j^2} + \frac{1}{2}\left(\frac{\sigma_i^2}{\sigma_j^2} - 1 - \ln\frac{\sigma_i^2}{\sigma_j^2}\right) \tag{2}$$

where $X_i \sim N(\mu_i, \sigma_i)$ and $X_j \sim N(\mu_j, \sigma_j)$. We built 100 different training sets without Indian records, obtaining the relative acoustic models $C = (C_1, C_2, \ldots, C_{100})$. Then, we built the reference learning set containing only Indian records, obtaining the relative acoustic model $C_r$. Next, we compared the distance between the output probability distributions of $C_r$ and every $C_i \in C$, obtaining the summed value of the divergence. Since the same phoneme state has 25 possible output distributions, we computed the mean distance value across all the distributions. Finally, we took the five phonemes with the highest divergence and rebuilt MC using only the entries relative to these phonemes.

| Indian | not Indian | ← classified as |
|---|---|---|
| 169 | 6 | Indian |
| 2 | 137 | not Indian |

Table 4. The confusion matrix of the filtered meta-classifier

| Class | Precision | Recall |
|---|---|---|
| not Indian | 0.98 | 0.96 |
| Indian | 0.95 | 0.98 |

Table 5. The precision and recall summary of the filtered meta-classifier

Table 4 shows the confusion matrix of the filtered classifier. The new results are noticeably improved: the precision for the not Indian class is 0.98, essentially unchanged from the unfiltered classifier, whereas the precision for the Indian class increases to 0.95. (Specifically: recall Indian: 0.986 and recall not Indian: 0.966.) Also, the size of the decision tree has dropped significantly (the resulting decision tree is composed of only 21 nodes with 11 leaves).

3.2 Support Vector Machines

Background. Support Vector Machines (SVM) are supervised learning methods related to statistical learning theory, first introduced by Boser et al. in [17]. SVMs are widely used for classification and regression analysis. In their basic form, SVMs are first trained with sets of input data classified in two classes and are then used to guess the class for each new given input.
This aspect makes SVM a non-probabilistic binary linear classifier. Support Vector classifiers are based on the concept of separating hyperplanes, that is, the hyperplanes in the attribute space that define the decision boundaries between sets of objects belonging to different classes. During the training phase, the SVM receives a set of labeled examples, each of them described by $n$ numerical attributes (features) and thus represented as a point in an $n$-dimensional space.

For the sake of simplicity, we briefly introduce how an SVM works with data represented by two attributes and mapped into two classes. Entry $i$ of the training dataset is represented by a 2-dimensional vector $x_i = \langle x_{i1}, x_{i2} \rangle$ and belongs to one and only one class $y_i$:

$$(y_1, x_1), (y_2, x_2), \ldots, (y_n, x_n), \qquad y_j \in \{-1, 1\} \tag{3}$$

Let us suppose that the training data is linearly separable, namely there exist a vector $w$ and a scalar value $b$ such that:

$$w \cdot x_i + b \geq 1 \ \text{ if } y_i = 1, \qquad w \cdot x_i + b \leq -1 \ \text{ if } y_i = -1 \tag{4}$$

In order to deal with sets that are not linearly separable, the training vectors $x_i$ can be mapped into a higher dimensional space by a function $\phi$; the inner product in that space is computed by the so-called kernel function $K(x_i, x_j) = \phi(x_i)^T \phi(x_j)$. Many kernel functions have been proposed, but the most used are linear, $K(x_i, x_j) = x_i^T x_j$; polynomial, $K(x_i, x_j) = (\gamma x_i^T x_j + r)^d$, $\gamma \geq 0$; radial basis function (RBF), $K(x_i, x_j) = \exp(-\gamma \|x_i - x_j\|^2)$, $\gamma \geq 0$; and sigmoid, $K(x_i, x_j) = \tanh(\gamma x_i^T x_j + r)$.
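The four kernels listed above translate directly into code. The sketch below is only an illustration of the formulas: the function names and the default values of `gamma`, `r`, and `d` are assumptions chosen for the example and are not taken from the experiments.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def linear_kernel(u, v):
    # K(u, v) = u^T v
    return dot(u, v)


def polynomial_kernel(u, v, gamma=1.0, r=0.0, d=3):
    # K(u, v) = (gamma * u^T v + r)^d; default parameter values are illustrative
    return (gamma * dot(u, v) + r) ** d


def rbf_kernel(u, v, gamma=1.0):
    # K(u, v) = exp(-gamma * ||u - v||^2)
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * sq_dist)


def sigmoid_kernel(u, v, gamma=1.0, r=0.0):
    # K(u, v) = tanh(gamma * u^T v + r)
    return math.tanh(gamma * dot(u, v) + r)
```

For instance, `rbf_kernel(x, x)` is 1 for any `x`, and it decays towards 0 as the two points move apart, which is why the RBF kernel behaves like a similarity measure.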
The Support Vector classifier finds the optimal hyperplane that separates the training data with a maximal margin in this higher dimensional space; formally, it solves the system of equations:

$$y_i (w_0 \cdot x + b_0) = 0 \tag{5}$$

It must be pointed out that, thanks to the nature of the training algorithm adopted by SVM, the solution of (5) can be obtained at a reasonable computational cost regardless of the kernel function adopted. Intuitively, a good separation is achieved by the hyperplane that has the largest distance, or margin, between the nearest training data points of different classes: these points are called the support vectors. Roughly speaking, the larger the margin, the lower the generalization error of the classifier.

It is easy to notice how the functional margin points determine the hyperplane of separation. This information is trivially encoded in the attribute values of the training sets. Furthermore, we highlight that SVM can disclose more information when several classifiers trained with different kernel functions are provided. Since a trained SVM is represented by a set of weights and a subset of the training sample, it is not easy to obtain useful information on the characteristics of the complete training set directly from the SVM representation.

SVMs have generated significant research activity that extends beyond the data mining area. Although SVMs were initially introduced to solve pattern recognition problems in an efficient way ([20]), nowadays they are suitable in several contexts. In fact, SVMs are used for intrusion detection and anomaly detection ([39, 34, 22]) or as part of complex systems for similar tasks ([48, 6]). Other authors propose SVM-based systems for privacy-critical tasks, such as cancer diagnosis [30, 7], text categorization [36], or face recognition [4].
SVM for network traffic classification. As shown by the extensive literature on this topic [28, 27, 49, 15], network traffic classification is commonly realized by means of Machine Learning algorithms, like K-Means, HMM, decision trees, and SVM. In order to evaluate the information leakage of SVM classifiers, we set up a simple Network Traffic Classifier able to distinguish between DNS and WEB traffic. In particular, we considered an SVM classifier based on the SMO module (Sequential Minimal Optimization [50]) of the Weka framework.

Our experiment uses a real netflow dataset, gathered by a national tier 2 Autonomous System. NetFlow is a Cisco™ protocol used by network administrators for gathering traffic statistics [53]. NetFlow is used to monitor data at Layers 2-4 of the networking protocol stack and to provide an aggregated view of the network status. In particular, NetFlow efficiently supports many network tasks such as traffic accounting, network billing and planning, as well as Denial of Service monitoring. A netflow-enabled router produces one new record for each newly established connection, collecting selected fields from its IP header. More precisely, a single netflow record is defined as a unidirectional sequence of packets all sharing the following values: source and destination IP addresses, source and destination ports (for UDP or TCP, 0 for other protocols), IP protocol, ingress interface index, and IP Type of Service.

Other valuable information associated with the flow, such as timestamp, duration, number of packets, and transmitted bytes, is also recorded. Then, we consider a single netflow as a record that represents the data exchanged between two hosts only in one direction. We consider a network traffic classifier aimed at correctly distinguishing WEB and DNS traffic.
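To make the notion of a unidirectional flow concrete, the sketch below groups packets by the seven key fields listed above and accumulates the per-flow statistics mentioned in the text. It is a simplified illustration, not the NetFlow export format itself: the field names, the dictionary-based packet representation, and the `aggregate_flows` helper are all hypothetical.

```python
from collections import namedtuple

# Hypothetical field names; the seven key fields follow the definition in the text.
FlowKey = namedtuple(
    "FlowKey",
    "src_ip dst_ip src_port dst_port protocol ingress_if tos",
)


def aggregate_flows(packets):
    """Group packets into unidirectional flows keyed by FlowKey.

    Each packet is a dict holding the FlowKey fields plus 'timestamp' and 'size'.
    Returns a dict mapping FlowKey -> per-flow statistics.
    """
    flows = {}
    for pkt in packets:
        key = FlowKey(*(pkt[name] for name in FlowKey._fields))
        stats = flows.setdefault(
            key,
            {"first": pkt["timestamp"], "last": pkt["timestamp"], "packets": 0, "bytes": 0},
        )
        stats["first"] = min(stats["first"], pkt["timestamp"])
        stats["last"] = max(stats["last"], pkt["timestamp"])
        stats["packets"] += 1
        stats["bytes"] += pkt["size"]
    return flows
```

Because source and destination are part of the key, a request and its reply end up in two different flows, which matches the "only in one direction" property described above. The flow duration is `last - first`.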
The classifier was trained using a balanced set of netflows of WEB and DNS traffic. It is worth noting that the WEB data set includes several traffic patterns. Namely, it contains the flows directed to national newspapers, advertising websites, and the Google search engine website.

During the training phase of the experiment, we used all the fields of the netflow entries, except the source and destination IP addresses of the tracked connections. In the literature there are examples of SVM classifiers for traffic detection [28] able to distinguish a greater variety of network protocols; the methodology used in our experiment is similar, and can be considered appropriate to highlight the statistical information leakage issues that are the target of our research. Notice that the accuracy and the precision of the obtained classifier are optimal, thanks to the simplicity of the training samples: indeed, WEB and DNS connections have well-separated traffic patterns, producing a large margin for classification.

Attack description. In our experiment we investigate whether it is possible to extrapolate the type of traffic that was used during the construction of the SVM model. For example: Can we infer whether Google web traffic was used in the training samples? (As before, Google traffic does NOT appear in the attributes of the training set.) We proceed with our attack by creating several ad-hoc data sets with well-defined statistical properties and use them to build our meta-classifier MC. Namely, we created 70 ad-hoc data sets, selecting 20,000 flows of network traffic, distinct from the original training set. While all 70 classifiers were trained with non-specific DNS traffic, the first half of the classifiers were trained using WEB traffic directed only to the Google search engine (property $P$).
For the remaining 35 classifiers, we used WEB traffic without any netflow directed to the Google search engine (property $\overline{P}$). Each classifier was trained using a polynomial kernel function of degree 3 and was encoded by the list of the support vectors it contains, namely a set of points $(y, x)$ in the $n$-dimensional space ($x = \{x_1, x_2, \ldots, x_n\}$). The training samples of the classifier MC are composed of all the support vectors of the 70 classifiers, labeled according to the property $P$ or $\overline{P}$ used for training:

$$D_C = \bigcup_{C_i} \{ (y, \langle x \rangle, label) \}$$

We evaluate the performance of MC using the cross-validation strategy, a method that divides the data into $k$ mutually exclusive subsets (the "folds") of approximately equal size. With cross-validation, the accuracy estimate is the average accuracy over the $k$ folds.

| Google | not Google | ← classified as |
|---|---|---|
| 2312 | 101 | Google |
| 92 | 2786 | not Google |

Table 6. The confusion matrix of the meta-classifier

| Class | Precision | Recall |
|---|---|---|
| Google | 0.95 | 0.93 |
| not Google | 0.94 | 0.96 |

Table 7. The precision and recall summary of the meta-classifier

Table 6 summarizes the experiment results: with respect to the Google class, we achieve a precision of 0.954 and a recall of 0.932. On the other hand, we correctly classify not Google instances with a precision of 0.943 and a recall of 0.962. As in the example with the HMMs, the experimental results show that we were able to build an effective meta-classifier that infers whether the training set given as input also includes a specific type of traffic.

4 Differential privacy

In this section we show that differential privacy is ineffective against our attack strategy. More specifically, the information leakage we are after sits outside the adversary model considered by differential privacy.
Differential privacy [19, 14, 9] protects against unintentional disclosure of potentially sensitive information related to a single record of a database $D$. In other words, differential privacy maximizes the accuracy of queries from statistical databases and, at the same time, minimizes the ability to identify single records. To protect the privacy of database records, differential privacy opts for basically three approaches:

1. The first is to obfuscate the original database $D$ and transform it into $D'$. This strategy is completely ineffective in our model since $D'$ is the database actually used during training and it is exactly what the adversary in our model is after. That is, our adversary is not interested in $D$, or any of its records, but is rather eager for any information on $D'$, i.e., anything that is the result of the transformations applied by differential privacy.
2. Another approach is to train a classifier and then add noise to the output. This is also ineffective since, in our model, the adversary has complete access to the classifier and could just disable the instruction that adds noise.
3. The third approach is more subtle. It consists of adding noise during training, thus effectively obfuscating the learning process. This approach is still ineffective against our adversary since, intuitively, the final classifier must anyway converge to classify the training set correctly. Thus, the noise must be somehow restrained and its effect can easily be mitigated (see below).

It may be unclear why the third approach above fails to provide any protection against our adversary. Hence, we next performed an experiment showing how to extract sensitive information from a classifier trained within the SuLQ framework, introduced in [9]. The SuLQ authors improved several standard classifiers to provide differential privacy.
The main idea consists of adding a small amount of noise, according to a Normal distribution $N(0, \sigma)$, to any access to the training set. The variance of $N$ regulates the privacy property provided by differential privacy.

Before introducing the experiment, we briefly recall some concepts of K-Means, one of the most popular clustering algorithms.

4.1 K-Means: the clusterization algorithm

Clustering is the task of partitioning unstructured data in such a way that objects with a high level of similarity fall into the same partition. Clustering is a typical example of unsupervised learning, where examples are unlabeled, i.e., they are not pre-classified. The K-Means algorithm [3] is one of the most common methods in this family and it has been used in many applications (e.g., [12, 59, 10, 11]). For example, in [12] the authors developed a real-time traffic classification method, based on K-Means, to identify SSH flows from the statistical behavior of IP traffic parameters, such as length, arrival times, and direction of packets.

In K-Means both the training and classification phases are very intuitive. During the learning process, the algorithm partitions a set of $n$ observations into $k$ clusters. Then, the algorithm selects the centroid (i.e., the barycenter, or geometric midpoint) of every cluster as a representative for that set of objects. More formally, given a set of observations $(x_1, x_2, \ldots, x_n)$, where each observation is a $d$-dimensional real vector, K-Means partitions the $n$ observations into $k$ sets ($k \leq n$) $S = \{S_1, S_2, \ldots, S_k\}$ in order to minimize the within-cluster function:

$$\underset{S}{\operatorname{argmin}} \sum_{i=1}^{k} \sum_{x_j \in S_i} \| x_j - \mu_i \|^2 \tag{6}$$

where $\mu_i$ is the mean of the points in $S_i$.
To classify a given data set of $d$-dimensional elements with respect to $k$ clusters, K-Means runs a learning process that can be summarized by the following steps:

1. Randomly pick $k$ initial cluster centroids;
2. Assign each instance $x$ to the cluster that has a centroid nearest to $x$;
3. Recompute each cluster's centroid based on which elements are contained in it;
4. Repeat Steps 2 and 3 until convergence is achieved.

4.2 Hacking models secured by Differential Privacy

We implemented two variants of a network traffic classifier that makes use of K-Means. We trained both classifiers with the same data set of the SVM experiment of Section 3.2. The first implementation directly uses the Euclidean distance as the metric to revise the centroids in the iterative refinement phase (equation 6). The second version implements a privacy-preserving version of K-Means, providing differential privacy. We implemented the latter within the SuLQ framework, introduced by Blum et al. [9]. We ran the two classifiers on 70 training sets, obtaining 70 distinct centroids. Recall that our objective is to recognize whether there was Google traffic within the traces. With respect to the classifier with no differential privacy, we represent the centroids when there is traffic to Google.com in Figure 4(a), and when there is no traffic to Google.com in Figure 4(b). It is easy to see that the positions of the centroids are quite different, allowing us to easily distinguish between these two cases. Similar results appear when we picture the centroids of the classifier providing differential privacy in Figures 5(a) and 5(b), respectively. Even in this case, an adversary can easily distinguish whether there is Google.com traffic or not.
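The two variants can be sketched in a few lines. The code below is a minimal illustration, not the implementation used in the experiments and not the SuLQ algorithm itself: it runs the four K-Means steps for a fixed number of iterations (an assumption replacing the convergence test) and, when `noise_std > 0`, perturbs the per-cluster sums and counts with Gaussian noise, loosely in the spirit of adding $N(0, \sigma)$ noise to every access to the training set. The noise scale is not calibrated to any privacy budget, and all names and defaults are hypothetical.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, noise_std=0.0, iterations=20, seed=0):
    """Lloyd-style K-Means returning a list of k centroids.

    If noise_std > 0, Gaussian noise is added to the per-cluster sums and
    counts used to recompute each centroid (illustrative only, uncalibrated).
    """
    rng = random.Random(seed)
    dim = len(points[0])
    centroids = [list(p) for p in rng.sample(points, k)]      # step 1
    for _ in range(iterations):                               # step 4 (fixed count)
        sums = [[0.0] * dim for _ in range(k)]
        counts = [0.0] * k
        for p in points:                                      # step 2
            nearest = min(range(k), key=lambda i: sq_dist(p, centroids[i]))
            counts[nearest] += 1
            for d in range(dim):
                sums[nearest][d] += p[d]
        for i in range(k):                                    # step 3
            noisy_count = counts[i] + rng.gauss(0.0, noise_std)
            if noisy_count < 1.0:
                continue  # keep the old centroid for (nearly) empty clusters
            centroids[i] = [
                (sums[i][d] + rng.gauss(0.0, noise_std)) / noisy_count
                for d in range(dim)
            ]
    return centroids
```

With a small `noise_std` the noisy centroids stay close to the noise-free ones, which is the intuition behind the observation above: the noise must remain small enough for the classifier to stay useful, so the centroid positions still reflect the composition of the training set.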
[Figure 4: scatter plots of the K-Means centroids; axis labels not recoverable]
(a) Training set contains Web traffic directed to Google.com
(b) Training set does not contain Web traffic directed to Google.com

Fig. 4. Centroids of the K-Means traffic classifier without differential privacy.

[Figure 5: scatter plots of the K-Means centroids; axis labels not recoverable]
(a) Training set contains Web traffic directed to Google.com
(b) Training set does not contain Web traffic directed to Google.com

Fig. 5. Centroids of a K-Means traffic classifier with differential privacy.

5 Related works

The research area closest to the issues addressed in our paper appears to be Information Disclosure, considered in privacy preserving data mining and statistical databases.
It is worth describing some of these related results, even though we stress that the type of leakage we consider in this paper has not been considered before.

As formalized by Dwork in [19], differential privacy deals with the general problem of privacy preserving analysis of data. More formally, a randomized mechanism $M$ provides $\epsilon$-differential privacy if, for any two databases $D_1$ and $D_2$ which differ by at most one element, and for any $t$:

$$\frac{\Pr[M(D_1) = t]}{\Pr[M(D_2) = t]} \leq e^{\epsilon}$$

In the differential privacy model, a trusted server holds a database with sensitive information. Answers to queries are perturbed by the addition of random noise generated according to a random distribution (usually a Laplace distribution). Two settings are defined: non-interactive, where the trusted server computes and publishes statistics on the original data, and interactive, where the server sits in the middle and directly alters the answers to user queries to guarantee specific privacy properties.

Chaudhuri et al. [40] design a privacy preserving logistic regression algorithm which works in the $\epsilon$-differential privacy model ([31]). The idea is quite simple: the result of the trained classifier is perturbed with a dynamic amount of noise. This approach does not consider the security issues due to the exposure of the model generated during the learning phase of the logistic regression algorithm. Other machine learning algorithms, such as Decision Trees, Artificial Neural Networks, and Clustering, have been re-engineered to provide differential privacy, and several are defined within the SuLQ framework [9].

Privacy Preserving Data Mining (PPDM) [14] is a novel research area aimed at developing techniques that perform data mining primitives while protecting the privacy of individual data records. In [55], Verykios et al. classified PPDM techniques in five classes.
Among them, we mention the Privacy preservation class, which refers to techniques used to preserve privacy for selective modifications of data records. This can be achieved through heuristic values (e.g., selecting the values that minimize the utility loss of the data), cryptographic protocols (e.g., via Secure Multiparty Computation [44]), or reconstruction-based techniques (e.g., strategies aimed at reconstructing the original data distribution using randomized data).

Some previous work exists related to the extraction of information from classifiers. For instance, in [29], the authors show how a Bayesian learning algorithm can be used to learn which words are employed by a classifier to classify messages as spam and ham. Similarly, in [45, 58], the authors describe some statistical attacks against spam filters aimed at understanding message features that are not correctly classified by the filters. Although using learning algorithms against other learning algorithms is not unprecedented ([29, 46]), our approach is different since we uncovered a new class of information leakage that is inherent to the learning process and that has never been discussed before.

6 Conclusions

In this paper we introduced a novel approach to extract meaningful data from machine learning classifiers using a meta-classifier. While previous works investigated privacy concerns of a single database record, our approach focuses on the statistical information strictly correlated to the training samples used during the learning phase. We showed that several ML classifiers suffer from a new class of information leakage that is not captured by privacy-preserving models, such as PPDM or differential privacy. We devised a meta-classifier to successfully distinguish the accent of users involved in defining the corpus of a speech recognition engine.
Furthermore, we attacked an Internet traffic classifier to infer whether a specific traffic pattern was used during training. Our results evince realistic issues facing machine learning algorithms, as we put forward the importance of protecting the training set: the alluring recipe that makes a classifier better than the competition and that should be guarded as a trade secret.

Bibliography

[1] http://www.nuance.com/dragon/index.htm.
[2] http://www.voxforge.org.
[3] Some methods of classification and analysis of multivariate observations, 1967.
[4] Face Recognition by Support Vector Machines, FG '00, Washington, DC, USA, 2000. IEEE Computer Society. ISBN 0-7695-0580-5. URL http://dl.acm.org/citation.cfm?id=795661.796198.
[5] Julius - an open source real-time large vocabulary recognition engine, Aalborg, Denmark, 2001.
[6] Intrusion detection using neural networks and support vector machines, volume 2, 2002.
[7] Morphological Classification of Medical Images using Nonlinear Support Vector Machines, 2004. IEEE.
[8] Application of modified neural network weights' matrices explaining determinants of foreign investment patterns in the emerging markets, MICAI'05, Berlin, Heidelberg, 2005. Springer-Verlag. ISBN 3-540-29896-7, 978-3-540-29896-0. doi: 10.1007/11579427_73. URL http://dx.doi.org/10.1007/11579427_73.
[9] Practical privacy: the SuLQ framework, PODS '05, New York, NY, USA, 2005. ACM. ISBN 1-59593-062-0. doi: 10.1145/1065167.1065184. URL http://doi.acm.org/10.1145/1065167.1065184.
[10] Traffic classification using clustering algorithms, MineNet '06, New York, NY, USA, 2006. ACM. ISBN 1-59593-569-X. doi: http://doi.acm.org/10.1145/1162678.1162679. URL http://doi.acm.org/10.1145/1162678.1162679.
[11] Traffic classification on the fly, volume 36, New York, NY, USA, April 2006. ACM.
doi: http://doi.acm.org/10.1145/1129582.1129589. URL http://doi.acm.org/10.1145/1129582.1129589.
[12] Real Time Identification of SSH Encrypted Application Flows by Using Cluster Analysis Techniques, NETWORKING '09, Berlin, Heidelberg, 2009. Springer-Verlag. ISBN 978-3-642-01398-0. doi: http://dx.doi.org/10.1007/978-3-642-01399-7_15. URL http://dx.doi.org/10.1007/978-3-642-01399-7_15.
[13] Automatic Reverse Engineering of Data Structures from Binary Execution, 2010. The Internet Society.
[14] Rakesh Agrawal and Ramakrishnan Srikant. Privacy-preserving data mining. SIGMOD Rec., 29(2):439–450, May 2000. ISSN 0163-5808. doi: 10.1145/335191.335438. URL http://doi.acm.org/10.1145/335191.335438.
[15] Tom Auld, Andrew W. Moore, and Stephen F. Gull. Bayesian neural networks for internet traffic classification. IEEE Transactions on Neural Networks, 18(1):223–239, 2007.
[16] L. E. Baum and T. Petrie. Statistical inference for probabilistic functions of finite state Markov chains. Annals of Mathematical Statistics, 37:1554–1563, 1966.
[17] Bernhard E. Boser, Isabelle M. Guyon, and Vladimir N. Vapnik. A training algorithm for optimal margin classifiers. In Proceedings of the 5th Annual ACM Workshop on Computational Learning Theory, pages 144–152. ACM Press, 1992.
[18] Leo Breiman, J. H. Friedman, R. A. Olshen, and C. J. Stone. Classification and Regression Trees. Statistics/Probability Series. Wadsworth Publishing Company, Belmont, California, U.S.A., 1984.
[19] Michele Bugliesi, Bart Preneel, Vladimiro Sassone, and Ingo Wegener, editors. Differential Privacy, volume 4052 of Lecture Notes in Computer Science, 2006. Springer. ISBN 3-540-35907-9.
[20] Christopher J. C. Burges. A tutorial on support vector machines for pattern recognition. Data Min. Knowl. Discov., 2(2):121–167, June 1998. ISSN 1384-5810.
doi: 10.1023/A:1009715923555. URL http://dx.doi.org/10.1023/A:1009715923555.
[21] Yves Chauvin and David E. Rumelhart, editors. Backpropagation: theory, architectures, and applications. L. Erlbaum Associates Inc., Hillsdale, NJ, USA, 1995. ISBN 0-8058-1259-8.
[22] Rung Ching Chen, Kai-Fan Cheng, and Chia-Fen Hsieh. Using rough set and support vector machine for network intrusion detection. CoRR, abs/1004.0567, 2010.
[23] Edith Cohen and Carsten Lund. Packet classification in large isps: design and evaluation of decision tree classifiers. SIGMETRICS Perform. Eval. Rev., 33(1):73–84, June 2005. ISSN 0163-5999. doi: 10.1145/1071690.1064222. URL http://doi.acm.org/10.1145/1071690.1064222.
[24] Vasant Dhar. Prediction in financial markets: The case for small disjuncts. ACM Trans. Intell. Syst. Technol., 2(3):19:1–19:22, May 2011. ISSN 2157-6904. doi: 10.1145/1961189.1961191. URL http://doi.acm.org/10.1145/1961189.1961191.
[25] Ruisheng Diao, Kai Sun, Vijay Vittal, Robert J. O'Keefe, Michael R. Richardson, Navin Bhatt, Dwayne Stradford, and Sanjoy K. Sarawgi. Decision Tree-Based Online Voltage Security Assessment Using PMU Measurements. IEEE Transactions on Power Systems, 24(2):832–839, May 2009. ISSN 0885-8950. doi: 10.1109/TPWRS.2009.2016528. URL http://dx.doi.org/10.1109/TPWRS.2009.2016528.
[26] Jason Eisner. An interactive spreadsheet for teaching the forward-backward algorithm. In Proceedings of the ACL-02 Workshop on Effective tools and methodologies for teaching natural language processing and computational linguistics - Volume 1, ETMTNLP '02, pages 10–18, Stroudsburg, PA, USA, 2002. Association for Computational Linguistics. doi: 10.3115/1118108.1118110. URL http://dx.doi.org/10.3115/1118108.1118110.
[27] Jeffrey Erman, Anirban Mahanti, Martin Arlitt, Ira Cohen, and Carey Williamson.
Offline/realtime traffic classification using semi-supervised learning. Perform. Eval., 64:1194–1213, October 2007. ISSN 0166-5316. doi: 10.1016/j.peva.2007.06.014. URL http://dl.acm.org/citation.cfm?id=1284907.1285040.
[28] Alice Este, Francesco Gringoli, and Luca Salgarelli. Support vector machines for tcp traffic classification. Computer Networks, 53(14):2476–2490, 2009. ISSN 1389-1286. doi: 10.1016/j.comnet.2009.05.003. URL http://www.sciencedirect.com/science/article/pii/S1389128609001649.
[29] J. Graham-Cumming. How to beat an adaptive spam filter. In The MIT Spam Conference, 2004.
[30] Isabelle Guyon, Jason Weston, Stephen Barnhill, and Vladimir Vapnik. Gene selection for cancer classification using support vector machines. Mach. Learn., 46(1-3):389–422, March 2002. ISSN 0885-6125. doi: 10.1023/A:1012487302797. URL http://dx.doi.org/10.1023/A:1012487302797.
[31] Shai Halevi and Tal Rabin, editors. Calibrating Noise to Sensitivity in Private Data Analysis, volume 3876 of Lecture Notes in Computer Science, 2006. Springer. ISBN 3-540-32731-2.
[32] J.F. Hayes. The viterbi algorithm applied to digital data transmission. Communications Magazine, IEEE, 40(5):26–32, May 2002. ISSN 0163-6804. doi: 10.1109/MCOM.2002.1006969.
[33] Ypke Hiemstra. Linear regression versus backpropagation networks to predict quarterly stock market excess returns. Comput. Econ., 9(1):67–76, February 1996. ISSN 0927-7099. doi: 10.1007/BF00115692. URL http://dx.doi.org/10.1007/BF00115692.
[34] Wenjie Hu, Yihua Liao, and V. Rao Vemuri. Robust anomaly detection using support vector machines. In Proceedings of the International Conference on Machine Learning. Morgan Kaufmann Publishers Inc.
[35] Anil K. Jain, Jianchang Mao, and K. Mohiuddin. Artificial neural networks: A tutorial. IEEE Computer, 29:31–44, 1996.
[36] Thorsten Joachims.
Text categorization with support vector machines: Learning with many relevant features. In Claire Nédellec and Céline Rouveirol, editors, Machine Learning: ECML-98, volume 1398 of Lecture Notes in Computer Science, pages 137–142. Springer Berlin / Heidelberg, 1998. ISBN 978-3-540-64417-0. URL http://dx.doi.org/10.1007/BFb0026683. 10.1007/BFb0026683.
[37] B. H. Juang and L. R. Rabiner. Hidden markov models for speech recognition. Technometrics, 33(3):251–272, August 1991. ISSN 0040-1706. doi: 10.2307/1268779. URL http://dx.doi.org/10.2307/1268779.
[38] Daniel Jurafsky and James H. Martin. Speech and Language Processing (2nd Edition) (Prentice Hall Series in Artificial Intelligence). Prentice Hall, 2 edition, 2008. ISBN 0131873210. URL http://www.amazon.com/Language-Processing-Prentice-Artificial-Intelligence/dp/013187321
[39] Latifur Khan, Mamoun Awad, and Bhavani Thuraisingham. A new intrusion detection system using support vector machines and hierarchical clustering. The VLDB Journal, 16(4):507–521, October 2007. ISSN 1066-8888. doi: 10.1007/s00778-006-0002-5. URL http://dx.doi.org/10.1007/s00778-006-0002-5.
[40] Daphne Koller, Dale Schuurmans, Yoshua Bengio, and Léon Bottou, editors. Privacy-preserving logistic regression, 2008. Curran Associates, Inc.
[41] K.-F. Lee and H.-W. Hon. Large-vocabulary speaker-independent continuous speech recognition using hmm. In Acoustics, Speech, and Signal Processing, 1988. ICASSP-88., 1988 International Conference on, pages 123–126 vol.1, April 1988. doi: 10.1109/ICASSP.1988.196527.
[42] Ming Li and Zhi-Hua Zhou. Improve Computer-Aided Diagnosis With Machine Learning Techniques Using Undiagnosed Samples. IEEE Transactions on Systems, Man, and Cybernetics - Part A: Systems and Humans, 37(6):1088–1098, November 2007. ISSN 1083-4427.
doi: 10.1109/TSMCA.2007.904745. URL http://dx.doi.org/10.1109/TSMCA.2007.904745.
[43] Jianhua Lin. Divergence measures based on the shannon entropy. IEEE Transactions on Information theory, 37:145–151, 1991.
[44] Yehuda Lindell and Benny Pinkas. Secure multiparty computation for privacy-preserving data mining. IACR Cryptology ePrint Archive, 2008:197, 2008.
[45] D. Lowd and C. Meek. Good word attacks on statistical spam filters. In Proceedings of the 2nd Conference on Email and Anti-Spam, 2005.
[46] Daniel Lowd and Christopher Meek. Adversarial learning. In Proceedings of the eleventh ACM SIGKDD international conference on Knowledge discovery in data mining, KDD '05, pages 641–647, New York, NY, USA, 2005. ACM. ISBN 1-59593-135-X. doi: 10.1145/1081870.1081950. URL http://doi.acm.org/10.1145/1081870.1081950.
[47] T. Mitchell. Machine Learning. McGraw-Hill Education (ISE Editions), 1st edition, October 1997. ISBN 0071154671. URL http://www.amazon.com/exec/obidos/redirect?tag=citeulike07-20&path=ASIN/0071154671.
[48] Snehal A. Mulay, P.R. Devale, and G.V. Garje. Article: intrusion detection system using support vector machine and decision tree. International Journal of Computer Applications, 3(3):40–43, June 2010. Published By Foundation of Computer Science.
[49] T.T.T. Nguyen and G. Armitage. A survey of techniques for internet traffic classification using machine learning. Communications Surveys Tutorials, IEEE, 10(4):56–76, quarter 2008. ISSN 1553-877X. doi: 10.1109/SURV.2008.080406.
[50] J. Platt. Fast training of support vector machines using sequential minimal optimization. In B. Schoelkopf, C. Burges, and A. Smola, editors, Advances in Kernel Methods - Support Vector Learning. MIT Press, 1998. URL http://research.microsoft.com/~jplatt/smo.html.
[51] J. R. Quinlan.
Induction of decision trees. Mach. Learn., 1(1):81–106, March 1986. ISSN 0885-6125. doi: 10.1023/A:1022643204877. URL http://dx.doi.org/10.1023/A:1022643204877.
[52] J. Ross Quinlan. C4.5: programs for machine learning. Morgan Kaufmann Publishers Inc., San Francisco, CA, USA, 1993. ISBN 1-55860-238-0.
[53] Cisco Systems. Cisco Systems NetFlow Services Export Version 9. http://tools.ietf.org/html/rfc3954, 2004.
[54] Adi L Tarca, Vincent J Carey, Xue-wen Chen, Roberto Romero, and Sorin Drăghici. Machine learning and its applications to biology. PLoS Comput Biol, 3(6):e116, 06 2007. doi: 10.1371/journal.pcbi.0030116. URL http://dx.doi.org/10.1371%2Fjournal.pcbi.0030116.
[55] Vassilios S. Verykios, Elisa Bertino, Igor Nai Fovino, Loredana Parasiliti Provenza, Yucel Saygin, and Yannis Theodoridis. State-of-the-art in privacy preserving data mining. SIGMOD Rec., 33(1):50–57, March 2004. ISSN 0163-5808. doi: 10.1145/974121.974131. URL http://doi.acm.org/10.1145/974121.974131.
[56] Weka Machine Learning Project. Weka. URL http://www.cs.waikato.ac.nz/~ml/weka.
[57] M. Wernick, Yongyi Yang, J. Brankov, G. Yourganov, and S. Strother. Machine learning in medical imaging. Signal Processing Magazine, IEEE, 27(4):25–38, July 2010. ISSN 1053-5888. doi: 10.1109/MSP.2010.936730.
[58] Gregory L. Wittel and S. Felix Wu. On attacking statistical spam filters. In Proc. of the Conference on Email and Anti-Spam (CEAS), Mountain View, 2004.
[59] Guowu Xie, M. Iliofotou, R. Keralapura, M. Faloutsos, and A. Nucci. Subflow: Towards practical flow-level traffic classification. In INFOCOM, 2012 Proceedings IEEE, pages 2541–2545, March 2012. doi: 10.1109/INFCOM.2012.6195649.
[60] Steve Young. A review of large-vocabulary continuous-speech. Signal Processing Magazine, IEEE, 13(5):45, Sept. 1996.
ISSN 1053-5888. doi: 10.1109/79.536824.

A Artificial Neural Networks

Artificial Neural Networks (ANNs) are a category of machine learning algorithms able to solve a variety of problems in decision making, optimization, prediction, and control, learning functions from real-valued, discrete-valued, and vector-valued examples. ANNs perform well in problems where the training data is captured by complex sensors, such as cameras or microphones. These algorithms are also resilient to noise in the dataset. Several types of ANN have been proposed [35]. We focus on a particular family of ANNs, the ones based on Multilayer Perceptrons, and the related Backpropagation algorithm [21] used for their training.

The basic unit of an ANN is the Perceptron (or neuron), a unit that takes a vector of real-valued inputs, calculates a linear combination of these inputs, and then outputs 1 if the result is greater than some threshold and -1 otherwise. More formally, a perceptron can be represented as the function

$$o(x_1, \dots, x_n) = \begin{cases} 1 & \text{if } \sum_{i=0}^{n} w_i x_i > 0 \\ -1 & \text{otherwise} \end{cases}$$

where we consider $x_0$ to be always set to 1 to simplify the notation, and we call $net = \sum_{i=0}^{n} w_i x_i$. Observe that $-w_0$ is the threshold that the weighted sum $\sum_{i=1}^{n} w_i x_i$ must exceed for the neuron to output 1. A single perceptron represents a hyperplane decision surface in the $n$-dimensional space of instances. This kind of perceptron can only discriminate between linearly separable instances. To overcome this limitation, the sigmoid function $\sigma$ is used to decide the output value:

$$\sigma(net) = \frac{1}{1 + e^{-net}}$$

An ANN is a multi-layer network of neurons: a first input layer receives the inputs and provides modified inputs to a following layer, which, in turn, processes them and feeds a new layer, and so on. The last layer outputs the result of the ANN.
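The units defined above can be illustrated with a short sketch. This is only an illustration under stated assumptions, not the implementation used in the paper: the function names, the list-of-weight-vectors layout, and the AND-gate weights are all hypothetical. Each weight vector stores $w_0$ first, paired with the constant input $x_0 = 1$.

```python
import math

def perceptron(weights, inputs):
    """Threshold unit: weights[0] is w_0, paired with the constant x_0 = 1."""
    net = weights[0] + sum(w * x for w, x in zip(weights[1:], inputs))
    return 1 if net > 0 else -1

def sigmoid(net):
    """Logistic function 1 / (1 + e^(-net))."""
    return 1.0 / (1.0 + math.exp(-net))

def sigmoid_unit(weights, inputs):
    """Same linear combination as the perceptron, with a sigmoid output."""
    net = weights[0] + sum(w * x for w, x in zip(weights[1:], inputs))
    return sigmoid(net)

def forward(layers, inputs):
    """Feed inputs through successive layers.

    `layers` is a list of layers; each layer is a list of weight vectors,
    one per unit (a hypothetical layout chosen for this sketch).
    """
    activations = list(inputs)
    for layer in layers:
        activations = [sigmoid_unit(w, activations) for w in layer]
    return activations

# Hypothetical weights implementing a logical AND over inputs in {0, 1}.
and_weights = [-1.5, 1.0, 1.0]
print(perceptron(and_weights, [1, 1]))  # 1
print(perceptron(and_weights, [0, 1]))  # -1
```

Here the threshold $-w_0 = 1.5$ is exceeded only when both inputs are 1, which shows the role of $w_0$ in the perceptron definition.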
The neurons that form the internal layers are called the hidden units. The core function of the network resides in the weights of the hidden units in the internal layers, which are set through the backpropagation algorithm. Starting from random weights, the algorithm tunes them using a training set of input-output pairs: the inputs are propagated forward through the network until they become outputs, while the errors (namely, the differences between actual and expected outputs) are back-propagated to correct the weights. The error is reduced iteratively until a minimal and tolerable error is obtained. The backpropagation of the error is inspired by the principle of gradient descent: in a nutshell, the more a weight contributes to the error, the greater its adjustment.

B Classification and Regression Trees

A classification or regression tree (introduced by Breiman et al. [18] in 1984) is a prediction model that maps observations to predictions by means of a decision tree. The observations $L = \{(x_1, y_1), (x_2, y_2), \dots, (x_N, y_N)\}$ constitute the training set and are used to learn a decision tree. Both classification and regression trees deal with the prediction of a response variable $y$ (let $Y$ be the domain of $y$), given the values of a vector of predictor variables $x$ (let $X$ be the domain of $x$). If $y$ is a continuous or discrete variable taking real values (e.g., the size of an object, the number of occurrences of certain events), the problem is called regression; if $Y$ is a finite set of unordered values (e.g., the type of Iris plants), the problem is called classification. The training phase produces a tree structure in which the leaves represent the class labels and the branches represent conjunctions of features that lead to the class labels of their leaves.
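The tree structure just described can be sketched as a nested dictionary. This is a minimal illustration only: the attribute names, values, and labels below are hypothetical and are not taken from the paper's experiments. Internal nodes test one attribute, leaves hold class labels, and each root-to-leaf path is a conjunction of attribute tests.

```python
# Hypothetical toy tree: internal nodes are dicts, leaves are class labels.
tree = {
    "attribute": "outlook",
    "branches": {
        "sunny": {"attribute": "humidity",
                  "branches": {"high": "no", "normal": "yes"}},
        "overcast": "yes",
        "rain": {"attribute": "wind",
                 "branches": {"strong": "no", "weak": "yes"}},
    },
}

def classify(node, instance):
    """Follow the branch matching the instance's value until a leaf is reached."""
    while isinstance(node, dict):
        node = node["branches"][instance[node["attribute"]]]
    return node

def paths(node, prefix=()):
    """Yield (conjunction of attribute tests, class label) for every leaf."""
    if not isinstance(node, dict):
        yield prefix, node
        return
    for value, child in node["branches"].items():
        yield from paths(child, prefix + ((node["attribute"], value),))

for tests, label in paths(tree):
    print(" AND ".join(f"{a}={v}" for a, v in tests), "->", label)
```

Printing the paths makes the conjunctions explicit, one line per leaf.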
Decision trees can be considered as a disjunction of conjunctions of constraints on the attribute values of instances. Each path from the tree root to a leaf corresponds to a conjunction of attribute tests, and the tree itself to a disjunction of these conjunctions [47]. Decision trees work better when the target function has discrete output (for example "yes" or "no") and the data instances are represented by attribute-value pairs. Furthermore, decision trees perform well even when the training dataset contains errors or missing values. These characteristics make decision trees a suitable solution for many classification problems in a great variety of contexts.

The most popular implementation of decision trees is the C4.5 algorithm [52], which is an extended version of the ID3 algorithm [51]. ID3 performs a top-down, greedy search through the space of all possible decision trees. In detail, the ID3 algorithm starts the search by answering the question: which attribute should be used at the root of the tree? Once the root is found, a descendant node of the root is created for each possible value of that attribute, then the same question is asked recursively at each new node, until: (i) every attribute has been considered in the path through the tree, or (ii) the training examples related to a specific leaf all have the same value of the target attribute. The selection of the best attribute at each level of the tree is performed using the concept of information gain, which measures how well a given attribute separates the training examples. Given a collection $S$ of items, for each attribute $A$, the ID3 algorithm evaluates the gain of $A$ with respect to $S$ via the equation:

$$Gain(S, A) = H(S) - \sum_{v \in Values(A)} \frac{|S_v|}{|S|} H(S_v)$$

where $H(S)$ represents the entropy of the entire dataset and $S_v$ is the subset of $S$ for which attribute $A$ has value $v$.
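The gain computation can be sketched in a few lines. The sketch assumes that $H$ is the Shannon entropy of the class labels with base-2 logarithms, which the text does not state explicitly. The function names, the dictionary-based example format, and the toy data are hypothetical.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (base 2, an assumption) of a list of class labels."""
    total = len(labels)
    return -sum((c / total) * math.log2(c / total)
                for c in Counter(labels).values())

def information_gain(examples, attribute, target):
    """Gain(S, A) = H(S) - sum over v of |S_v| / |S| * H(S_v)."""
    gain = entropy([e[target] for e in examples])
    for value in {e[attribute] for e in examples}:
        subset = [e[target] for e in examples if e[attribute] == value]
        gain -= len(subset) / len(examples) * entropy(subset)
    return gain

def best_attribute(examples, attributes, target):
    """Pick the attribute with the highest gain, as ID3 does at each node."""
    return max(attributes, key=lambda a: information_gain(examples, a, target))

# Hypothetical toy data: "windy" separates the classes, "color" does not.
examples = [
    {"windy": "y", "color": "r", "play": "no"},
    {"windy": "y", "color": "b", "play": "no"},
    {"windy": "n", "color": "r", "play": "yes"},
    {"windy": "n", "color": "b", "play": "yes"},
]
print(best_attribute(examples, ["windy", "color"], "play"))  # windy
```

In this toy set, splitting on "windy" leaves two pure subsets, so its gain equals the full entropy of the labels, while "color" yields no gain.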
