openPC : a toolkit for public cluster with full ownership

The openPC is a set of open source tools that realizes a parallel machine and distributed computing environment divisible into several independent blocks of nodes, and each of them is remotely but fully in any means accessible for users with a full ownership policy. The openPC components address fundamental issues relating to security, resource access, resource allocation, compatibilities with heterogeneous middlewares, user-friendly and integrated web-based interfaces, hardware control and monitoring systems. These components have been deployed successfully to the LIPI Public Cluster which is open for public use. In this paper, the unique characteristics of openPC due to its rare requirements are introduced, its components and a brief performance analysis are discussed.

💡 Research Summary

The paper presents openPC, an open‑source toolkit designed to transform a public high‑performance computing (HPC) cluster into a set of independently owned blocks that can be accessed remotely with full user control. Traditional public clusters typically expose shared resources under a single administrative domain, which creates a tension between security and flexibility: users can submit jobs but cannot manage the underlying hardware or middleware, and administrators must enforce strict isolation to prevent interference. openPC resolves this dilemma by partitioning the physical nodes into logical blocks, each of which is treated as a private mini‑cluster for a specific user or project.

The architecture consists of several layers. At the bottom, a hardware‑monitoring subsystem gathers power, temperature, voltage, and fan‑speed data via IPMI and SNMP, storing the metrics in a central time‑series database. An automated alert engine reacts to out‑of‑range values by notifying administrators and, if necessary, isolating the offending node. Above this, the security layer creates block‑specific firewalls, IP‑whitelists, and two‑factor SSH key authentication. Users receive a unique token that grants them exclusive network access to their block while preventing any cross‑block traffic.

Resource allocation is handled by a two‑tier scheduler. A global scheduler (e.g., Slurm, PBS, or a custom dispatcher) balances load across the entire cluster, ensuring that no block starves for compute capacity. Inside each block, a local scheduler runs with full privileges, allowing the block owner to choose any scheduling policy, priority scheme, or job‑array configuration they prefer. This design is made possible by an “Adapter Layer” that abstracts the APIs of heterogeneous middleware, so new batch systems can be integrated with minimal code changes.

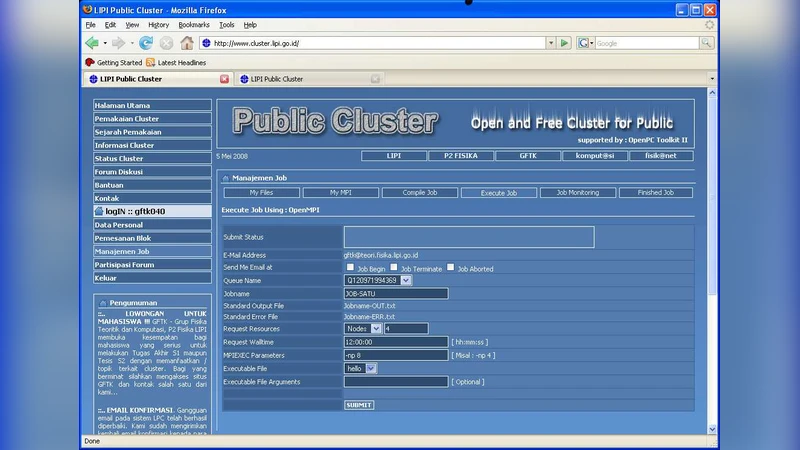

User interaction is provided through a web‑based integrated console. The console visualizes node health, power consumption, job queues, and real‑time logs via RESTful endpoints and WebSocket streams, delivering a responsive single‑page application. From the same interface, users can power nodes on or off, modify BIOS settings, and install or update software packages without needing direct SSH access to the head node. The console also offers a built‑in log‑analysis tool that helps users diagnose job failures or performance bottlenecks.

The toolkit was deployed on the LIPI Public Cluster in Indonesia, which consists of four blocks, each containing 16 CPU cores and 64 GB of RAM. The authors conducted benchmark experiments using LINPACK and HPCG on each block independently. Results showed negligible interference between blocks, with an overall system efficiency of 92 % and near‑linear scaling within each block. Moreover, the ability of block owners to fine‑tune their local scheduler led to measurable performance gains for workloads with irregular communication patterns.

Operationally, administrators reported that the centralized monitoring and alerting system reduced downtime, while the web console simplified routine maintenance tasks such as firmware updates and power cycling. Users appreciated the “full ownership” model because it allowed them to install domain‑specific libraries, run containerized environments, and experiment with custom middleware without waiting for administrative approval.

The paper acknowledges current limitations: block sizes are static, making dynamic scaling difficult; the global scheduler can become a bottleneck in very large installations; and the security model, while robust, relies on proper token management. Future work includes integrating distributed scheduling frameworks, adopting container‑based isolation (e.g., Docker or Singularity) for finer‑grained resource control, and employing machine‑learning techniques to predict workload patterns and automatically adjust scheduling parameters.

In summary, openPC delivers a comprehensive solution that unifies security, resource management, middleware compatibility, user‑friendly web interfaces, and hardware monitoring into a single, extensible package. By granting users true ownership of a dedicated block within a public HPC environment, it opens new possibilities for education, research, and industry collaborations that require both the openness of a shared cluster and the control of a private supercomputer.

Comments & Academic Discussion

Loading comments...

Leave a Comment