Multiclass Semi-Supervised Learning on Graphs using Ginzburg-Landau Functional Minimization

We present a graph-based variational algorithm for classification of high-dimensional data, generalizing the binary diffuse interface model to the case of multiple classes. Motivated by total variation techniques, the method involves minimizing an en…

Authors: Cristina Garcia-Cardona, Arjuna Flenner, Allon G. Percus

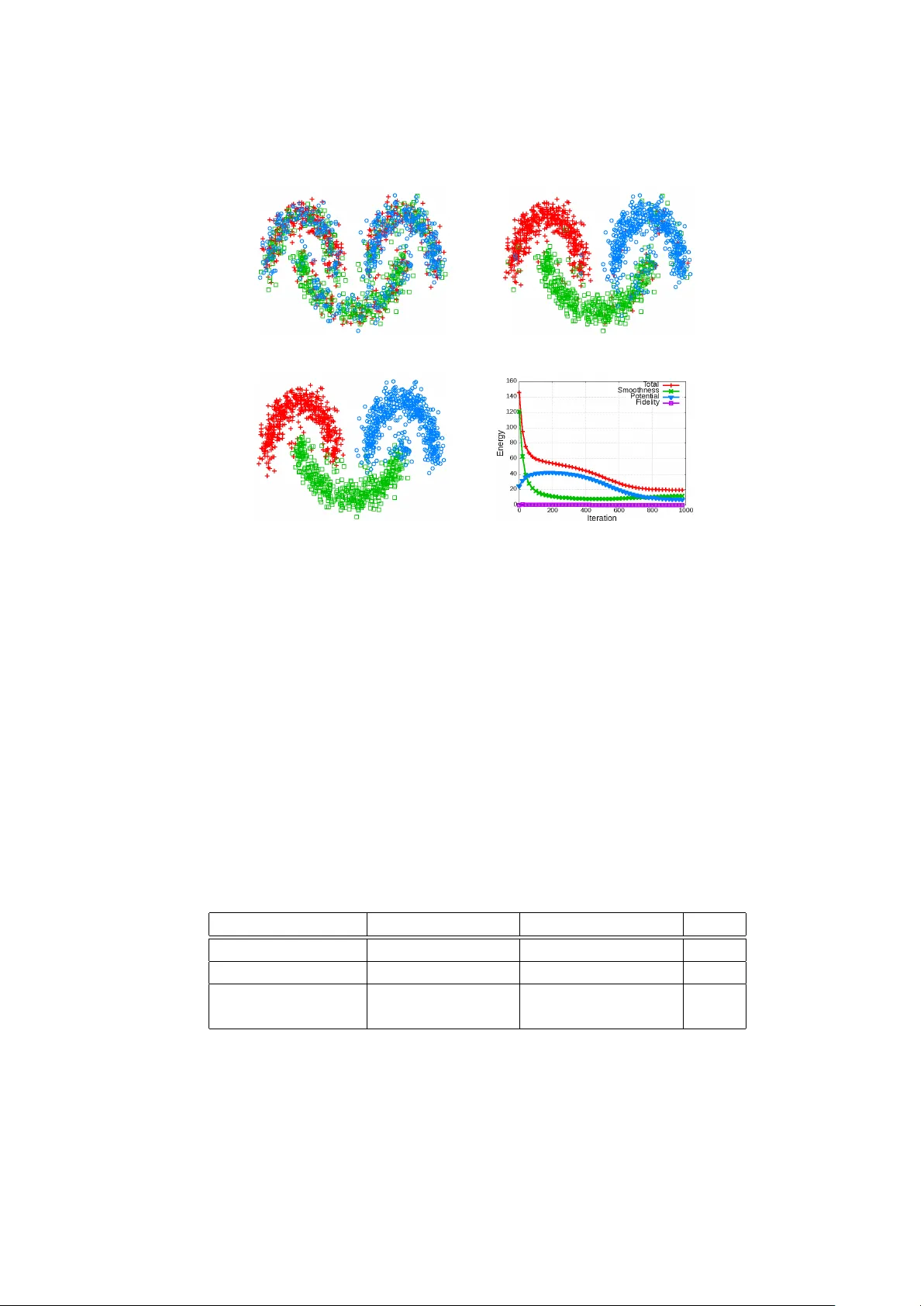

Cristina Garcia-Cardona (1), Arjuna Flenner (2), and Allon G. Percus (1)

(1) Claremont Graduate University, Institute of Mathematical Sciences, Claremont, CA 91711, USA, cristina.cgarcia@gmail.com, allon.percus@cgu.edu
(2) Naval Air Warfare Center, Physics and Computational Sciences, China Lake, CA 93555, USA

Abstract. We present a graph-based variational algorithm for classification of high-dimensional data, generalizing the binary diffuse interface model to the case of multiple classes. Motivated by total variation techniques, the method involves minimizing an energy functional made up of three terms. The first two terms promote a stepwise continuous classification function with sharp transitions between classes, while preserving symmetry among the class labels. The third term is a data fidelity term, allowing us to incorporate prior information into the model in a semi-supervised framework. The performance of the algorithm on synthetic data, as well as on the COIL and MNIST benchmark datasets, is competitive with state-of-the-art graph-based multiclass segmentation methods.

Keywords: diffuse interfaces, learning on graphs, semi-supervised methods

1 Introduction

Many tasks in pattern recognition and machine learning rely on the ability to quantify local similarities in data, and to infer meaningful global structure from such local characteristics [8]. In the classification framework, the desired global structure is a descriptive partition of the data into categories or classes. Many studies have been devoted to the binary classification problems. The multiple-class case, where data are partitioned into more than two clusters, is more challenging. One approach is to treat the problem as a series of binary classification problems [1].
In this paper, we develop an alternative method, involving a multiple-class extension of the diffuse interface model introduced in [4]. The diffuse interface model by Bertozzi and Flenner combines methods for diffusion on graphs with efficient partial differential equation techniques to solve binary segmentation problems. As with other methods inspired by physical phenomena [3,17,21], it requires the minimization of an energy expression, specifically the Ginzburg-Landau (GL) energy functional. The formulation generalizes the GL functional to the case of functions defined on graphs, and its minimization is related to the minimization of weighted graph cuts [4]. In this sense, it parallels other techniques based on inference on graphs via diffusion operators or function estimation [8,7,31,26,28,5,25,15].

Multiclass segmentation methods that cast the problem as a series of binary classification problems use a number of different strategies: (i) deal directly with some binary coding or indicator for the labels [9,28], (ii) build a hierarchy or combination of classifiers based on the one-vs-all approach or on class rankings [14,13] or (iii) apply a recursive partitioning scheme consisting of successively subdividing clusters, until the desired number of classes is reached [25,15]. While there are advantages to these approaches, such as possible robustness to mislabeled data, there can be a considerable number of classifiers to compute, and performance is affected by the number of classes to partition.

In contrast, we propose an extension of the diffuse interface model that obtains a simultaneous segmentation into multiple classes. The multiclass extension is built by modifying the GL energy functional to remove the prejudicial effect that the order of the labelings, given by integer values, has in the smoothing term of the original binary diffuse interface model.
A new term that promotes homogenization in a multiclass setup is introduced. The expression penalizes data points that are located close in the graph but are not assigned to the same class. This penalty is applied independently of how different the integer values are, representing the class labels. In this way, the characteristics of the multiclass classification task are incorporated directly into the energy functional, with a measure of smoothness independent of label order, allowing us to obtain high-quality results. Alternative multiclass methods minimize a Kullback-Leibler divergence function [23] or expressions involving the discrete Laplace operator on graphs [30,28].

This paper is organized as follows. Section 2 reviews the diffuse interface model for binary classification, and describes its application to semi-supervised learning. Section 3 discusses our proposed multiclass extension and the corresponding computational algorithm. Section 4 presents results obtained with our method. Finally, section 5 draws conclusions and delineates future work.

2 Data Segmentation with the Ginzburg-Landau Model

The diffuse interface model [4] is based on a continuous approach, using the Ginzburg-Landau (GL) energy functional to measure the quality of data segmentation. A good segmentation is characterized by a state with small energy. Let $u(\mathbf{x})$ be a scalar field defined over a space of arbitrary dimensionality, and representing the state of the system. The GL energy is written as the functional

$$\mathrm{GL}(u) = \frac{\epsilon}{2} \int |\nabla u|^2 \, d\mathbf{x} + \frac{1}{\epsilon} \int \Phi(u) \, d\mathbf{x}, \tag{1}$$

with $\nabla$ denoting the spatial gradient operator, $\epsilon > 0$ a real constant value, and $\Phi$ a double well potential with minima at $\pm 1$:

$$\Phi(u) = \frac{1}{4} \left( u^2 - 1 \right)^2. \tag{2}$$

Segmentation requires minimizing the GL functional. The norm of the gradient is a smoothing term that penalizes variations in the field $u$.
The potential term, on the other hand, compels $u$ to adopt the discrete labels of $+1$ or $-1$, clustering the state of the system around two classes. Jointly minimizing these two terms pushes the system domain towards homogeneous regions with values close to the minima of the double well potential, making the model appropriate for binary segmentation.

The smoothing term and potential term are in conflict at the interface between the two regions, with the first term favoring a gradual transition, and the second term penalizing deviations from the discrete labels. A compromise between these conflicting goals is established via the constant $\epsilon$. A small value of $\epsilon$ denotes a small length transition and a sharper interface, while a large $\epsilon$ weights the gradient norm more, leading to a slower transition. The result is a diffuse interface between regions, with sharpness regulated by $\epsilon$.

It can be shown that in the limit $\epsilon \to 0$ this function approximates the total variation (TV) formulation in the sense of functional ($\Gamma$) convergence [18], producing piecewise constant solutions but with greater computational efficiency than conventional TV minimization methods. Thus, the diffuse interface model provides a framework to compute piecewise constant functions with diffuse transitions, approaching the ideal of the TV formulation, but with the advantage that the smooth energy functional is more tractable numerically and can be minimized by simple numerical methods such as gradient descent.

The GL energy has been used to approximate the TV norm for image segmentation [4] and image inpainting [3,10]. Furthermore, a calculus on graphs equivalent to TV has been introduced in [12,25].

Application of Diffuse Interface Models to Graphs

An undirected, weighted neighborhood graph is used to represent the local relationships in the data set. This is a common technique to segment classes that are not linearly separable.
In the $N$-neighborhood graph model, each vertex $v_i \in V$ of the graph corresponds to a data point with feature vector $x_i$, while the weight $w_{ij}$ is a measure of similarity between $v_i$ and $v_j$. Moreover, it satisfies the symmetry property $w_{ij} = w_{ji}$. The neighborhood is defined as the set of $N$ closest points in the feature space. Accordingly, edges exist between each vertex and the vertices of its $N$-nearest neighbors. Following the approach of [4], we calculate weights using the local scaling of Zelnik-Manor and Perona [29],

$$w_{ij} = \exp\left( - \frac{\| x_i - x_j \|^2}{\tau(x_i) \, \tau(x_j)} \right). \tag{3}$$

Here, $\tau(x_i) = \| x_i - x_i^M \|$ defines a local value for each $x_i$, where $x_i^M$ is the position of the $M$th closest data point to $x_i$, and $M$ is a global parameter.

It is convenient to express calculations on graphs via the graph Laplacian matrix, denoted by $L$. The procedure we use to build the graph Laplacian is as follows.

1. Compute the similarity matrix $W$ with components $w_{ij}$ defined in (3). As the neighborhood relationship is not symmetric, the resulting matrix $W$ is also not symmetric. Make it a symmetric matrix by connecting vertices $v_i$ and $v_j$ if $v_i$ is among the $N$-nearest neighbors of $v_j$ or if $v_j$ is among the $N$-nearest neighbors of $v_i$ [27].
2. Define $D$ as a diagonal matrix whose $i$th diagonal element represents the degree of the vertex $v_i$, evaluated as

   $$d_i = \sum_j w_{ij}. \tag{4}$$

3. Calculate the graph Laplacian: $L = D - W$.

Generally, the graph Laplacian is normalized to guarantee spectral convergence in the limit of large sample size [27]. The symmetric normalized graph Laplacian $L_s$ is defined as

$$L_s = D^{-1/2} L D^{-1/2} = I - D^{-1/2} W D^{-1/2}. \tag{5}$$

Data segmentation can now be carried out through a graph-based formulation of the GL energy. To implement this task, a fidelity term is added to the functional as initially suggested in [11].
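The graph construction of steps 1 to 3 and the normalization (5) can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the authors' implementation: the function name `build_normalized_laplacian` and the parameters `n_neighbors` (for $N$) and `m_scale` (for $M$) are hypothetical, the dense pairwise-distance computation is only practical for small data sets, and the data points are assumed to be distinct so that every local scale $\tau(x_i)$ is positive.

```python
import numpy as np

def build_normalized_laplacian(X, n_neighbors=3, m_scale=2):
    """Return (W, d, L_s) for the rows of X, following eqs. (3)-(5).

    Hypothetical helper: assumes distinct points and m_scale < len(X).
    """
    n = X.shape[0]
    # Dense pairwise squared Euclidean distances.
    diff = X[:, None, :] - X[None, :, :]
    dist2 = np.sum(diff ** 2, axis=-1)
    dist = np.sqrt(dist2)
    # Neighbors sorted by distance; column 0 is the point itself.
    order = np.argsort(dist, axis=1)
    # Local scale tau(x_i): distance to the M-th closest point.
    tau = dist[np.arange(n), order[:, m_scale]]
    # Locally scaled weights, eq. (3).
    W_full = np.exp(-dist2 / (tau[:, None] * tau[None, :]))
    # Keep an edge if i is among the N nearest neighbors of j or vice versa.
    mask = np.zeros((n, n), dtype=bool)
    rows = np.repeat(np.arange(n), n_neighbors)
    mask[rows, order[:, 1:n_neighbors + 1].ravel()] = True
    mask = mask | mask.T
    W = np.where(mask, W_full, 0.0)
    # Degrees, eq. (4), and symmetric normalized Laplacian, eq. (5).
    d = W.sum(axis=1)
    inv_sqrt_d = 1.0 / np.sqrt(d)
    L_s = np.eye(n) - inv_sqrt_d[:, None] * W * inv_sqrt_d[None, :]
    return W, d, L_s
```

A quick sanity check on the result is that $L_s D^{1/2} \mathbf{1} = 0$, which follows directly from $L = D - W$ and the definition of the degrees.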
The fidelity term enables the specification of a priori information in the system, for example the known labels of certain points in the data set. This kind of setup is called semi-supervised learning (SSL). The discrete GL energy for SSL on graphs can be written as [4]:

$$\mathrm{GL}_{\mathrm{SSL}}(u) = \frac{\epsilon}{2} \langle u, L_s u \rangle + \frac{1}{\epsilon} \sum_{v_i \in V} \Phi(u(v_i)) + \sum_{v_i \in V} \frac{\mu(v_i)}{2} \left( u(v_i) - \hat{u}(v_i) \right)^2 \tag{6}$$

$$= \frac{\epsilon}{4} \sum_{v_i, v_j \in V} w_{ij} \left( \frac{u(v_i)}{\sqrt{d_i}} - \frac{u(v_j)}{\sqrt{d_j}} \right)^2 + \frac{1}{\epsilon} \sum_{v_i \in V} \Phi(u(v_i)) + \sum_{v_i \in V} \frac{\mu(v_i)}{2} \left( u(v_i) - \hat{u}(v_i) \right)^2. \tag{7}$$

In the discrete formulation, $u$ is a vector whose component $u(v_i)$ represents the state of the vertex $v_i$, $\epsilon > 0$ is a real constant characterizing the smoothness of the transition between classes, and $\mu(v_i)$ is a fidelity weight taking value $\mu > 0$ if the label $\hat{u}(v_i)$ (i.e. class) of the data point associated with vertex $v_i$ is known beforehand, or $\mu(v_i) = 0$ if it is not known (semi-supervised).

Minimizing the functional simulates a diffusion process on the graph. The information of the few labels known is propagated through the discrete structure by means of the smoothing term, while the potential term clusters the vertices around the states $\pm 1$ and the fidelity term enforces the known labels. The energy minimization process itself attempts to reduce the interface regions. Note that in the absence of the fidelity term, the process could lead to a trivial steady-state solution of the diffusion equation, with all data points assigned the same label.

The final state $u(v_i)$ of each vertex is obtained by thresholding, and the resulting homogeneous regions with labels of $+1$ and $-1$ constitute the two-class data segmentation.

3 Multiclass Extension

The double-well potential in the diffuse interface model for SSL drives the state of the system towards two definite labels.
Multiple-class segmentation requires a more general potential function $\Phi_M(u)$ that allows clusters around more than two labels. For this purpose, we use the periodic-well potential suggested by Li and Kim [21],

$$\Phi_M(u) = \frac{1}{2} \{u\}^2 \left( \{u\} - 1 \right)^2, \tag{8}$$

where $\{u\}$ denotes the fractional part of $u$,

$$\{u\} = u - \lfloor u \rfloor, \tag{9}$$

and $\lfloor u \rfloor$ is the largest integer not greater than $u$.

This periodic potential well promotes a multiclass solution, but the graph Laplacian term in Equation (6) also requires modification for effective calculations due to the fixed ordering of class labels in the multiple class setting. The graph Laplacian term penalizes large changes in the spatial distribution of the system state more than smaller gradual changes. In a multiclass framework, this implies that the penalty for two spatially contiguous classes with different labels may vary according to the (arbitrary) ordering of the labels.

This phenomenon is shown in Fig. 1. Suppose that the goal is to segment the image into three classes: class 0 composed by the black region, class 1 composed by the gray region and class 2 composed by the white region. It is clear that the horizontal interfaces comprise a jump of size 1 (analogous to a two class segmentation) while the vertical interface implies a jump of size 2. Accordingly, the smoothing term will assign a higher cost to the vertical interface, even though from the point of view of the classification, there is no specific reason for this. In this example, the problem cannot be solved with a different label assignment. There will always be an interface with higher costs than others independent of the integer values used.

Thus, the multiclass approach breaks the symmetry among classes, influencing the diffuse interface evolution in an undesirable manner.
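The label-order effect in the three-region example can be checked by brute force. The sketch below is illustrative only: the helper names `periodic_potential` and `interface_costs` are hypothetical, all three regions are assumed to share an interface as in Fig. 1, and the squared label jump is used as a simple stand-in for the Laplacian smoothing penalty across a sharp interface.

```python
import itertools
import math

def periodic_potential(u):
    """Periodic-well potential of eq. (8): 0.5 * {u}^2 * ({u} - 1)^2."""
    frac = u - math.floor(u)  # fractional part {u}, eq. (9)
    return 0.5 * frac ** 2 * (frac - 1.0) ** 2

def interface_costs(labels):
    """Squared label jump across each pair of regions.

    Hypothetical stand-in for the smoothing penalty at a sharp interface.
    """
    names = sorted(labels)
    return {(a, b): (labels[a] - labels[b]) ** 2
            for a, b in itertools.combinations(names, 2)}

regions = ("black", "gray", "white")
for perm in itertools.permutations(range(3)):
    costs = interface_costs(dict(zip(regions, perm)))
    # The three interfaces never receive equal costs.
    print(perm, sorted(costs.values()))
```

Every one of the six label assignments produces two interfaces of cost 1 and one of cost 4, so no relabeling with the integers 0, 1, 2 removes the asymmetry. The potential itself is symmetric: `periodic_potential` vanishes at every integer and takes the same value at every half-integer.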
Eliminating this label-order dependence requires restoring the symmetry, so that the difference between two classes is always the same, regardless of their labels. This objective is achieved by introducing a new class difference measure.

Fig. 1. Three-class segmentation. Black: class 0. Gray: class 1. White: class 2.

3.1 Generalized Difference Function

The final class labels are determined by thresholding each vertex $u(v_i)$, with the label $y_i$ set to the nearest integer:

$$y_i = \left\lfloor u(v_i) + \frac{1}{2} \right\rfloor. \tag{10}$$

The boundaries between classes then occur at half-integer values corresponding to the unstable equilibrium states of the potential well. Define the function $\hat{r}(x)$ to represent the distance to the nearest half-integer:

$$\hat{r}(x) = \left| \frac{1}{2} - \{x\} \right|. \tag{11}$$

A schematic of $\hat{r}(x)$ is depicted in Fig. 2. The $\hat{r}(x)$ function is used to define a generalized difference function between classes that restores symmetry in the energy functional. Define the generalized difference function $\rho$ as:

$$\rho(u(v_i), u(v_j)) = \begin{cases} \hat{r}(u(v_i)) + \hat{r}(u(v_j)) & y_i \neq y_j \\ \left| \hat{r}(u(v_i)) - \hat{r}(u(v_j)) \right| & y_i = y_j \end{cases} \tag{12}$$

Thus, if the vertices are in different classes, the difference $\hat{r}(x)$ between each state's value and the nearest half-integer is added, whereas if they are in the same class, these differences are subtracted. The function $\rho(x, y)$ corresponds to the tree distance (see Fig. 2). Strictly speaking, $\rho$ is not a metric since it does not satisfy $\rho(x, y) = 0 \Rightarrow x = y$. Nevertheless, the cost of interfaces between classes becomes the same regardless of class labeling when this generalized distance function is implemented.

Fig. 2. Schematic interpretation of generalized difference: $\hat{r}(x)$ measures distance to nearest half-integer, and $\rho$ is a tree distance measure.
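The definitions (10) to (12) translate almost directly into code. The following minimal sketch assumes that the label is obtained as $\lfloor u + 1/2 \rfloor$ and that $\hat{r}$ carries an absolute value, as the descriptions "nearest integer" and "distance to the nearest half-integer" suggest; the function names are illustrative and not taken from the paper.

```python
import math

def frac(u):
    """Fractional part {u} = u - floor(u)."""
    return u - math.floor(u)

def label(u):
    """Class label: the integer nearest to u, eq. (10)."""
    return math.floor(u + 0.5)

def r_hat(u):
    """Distance from u to the nearest half-integer, eq. (11)."""
    return abs(0.5 - frac(u))

def rho(u_i, u_j):
    """Generalized (tree) difference between two states, eq. (12)."""
    if label(u_i) != label(u_j):
        # Different classes: both distances to the class boundary add up.
        return r_hat(u_i) + r_hat(u_j)
    # Same class: only the difference in depth within the class counts.
    return abs(r_hat(u_i) - r_hat(u_j))
```

With these definitions `rho(0.0, 1.0)` and `rho(0.0, 2.0)` both evaluate to 1.0, so the interface cost no longer depends on which integers label the two classes. The states 0.9 and 1.1 both belong to class 1 and lie at the same distance from a class boundary, so their generalized difference is zero up to rounding even though the states differ, which is the reason $\rho$ is not a metric.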
The GL energy functional for SSL, using the new generalized difference function $\rho$ and the periodic potential, is expressed as

$$\mathrm{MGL}_{\mathrm{SSL}}(u) = \frac{\epsilon}{2} \sum_{v_i \in V} \sum_{v_j \in V} \frac{w_{ij}}{\sqrt{d_i d_j}} \left[ \rho(u(v_i), u(v_j)) \right]^2 + \frac{1}{2\epsilon} \sum_{v_i \in V} \{u(v_i)\}^2 \left( \{u(v_i)\} - 1 \right)^2 + \sum_{v_i \in V} \frac{\mu(v_i)}{2} \left( u(v_i) - \hat{u}(v_i) \right)^2. \tag{13}$$

Note that the smoothing term in this functional is composed of an operator that is not just a generalization of the normalized symmetric Laplacian $L_s$. The new smoothing operation, written in terms of the generalized distance function $\rho$, constitutes a non-linear operator that is a symmetrization of a different normalized Laplacian, the random walk Laplacian $L_w = D^{-1} L = I - D^{-1} W$ [27]. The reason is as follows. The Laplacian $L$ satisfies

$$(L u)_i = \sum_j w_{ij} (u_i - u_j)$$

and $L_w$ satisfies

$$(L_w u)_i = \sum_j \frac{w_{ij}}{d_i} (u_i - u_j).$$

Now replace $w_{ij} / d_i$ in the latter expression with the symmetric form $w_{ij} / \sqrt{d_i d_j}$. This is equivalent to constructing a reweighted graph with weights $\hat{w}_{ij}$ given by:

$$\hat{w}_{ij} = \frac{w_{ij}}{\sqrt{d_i d_j}}.$$

The corresponding reweighted Laplacian $\hat{L}$ satisfies:

$$(\hat{L} u)_i = \sum_j \hat{w}_{ij} (u_i - u_j) = \sum_j \frac{w_{ij}}{\sqrt{d_i d_j}} (u_i - u_j), \tag{14}$$

and

$$\langle u, \hat{L} u \rangle = \frac{1}{2} \sum_{i,j} \frac{w_{ij}}{\sqrt{d_i d_j}} (u_i - u_j)^2. \tag{15}$$

While $\hat{L} = \hat{D} - \hat{W}$ is not a standard normalized Laplacian, it does have the desirable properties of stability and consistency with increasing sample size of the data set, and of satisfying the conditions for $\Gamma$-convergence to TV in the $\epsilon \to 0$ limit [2]. It also generalizes to the tree distance more easily than does $L_s$. Replacing the difference $(u_i - u_j)^2$ with the generalized difference $[\rho(u_i, u_j)]^2$ then gives the new smoothing multiclass term of equation (13). Empirically, this new term seems to perform well even though the normalization procedure differs from the binary case.
By implementing the generalized difference function on a tree, the cost of interfaces between classes becomes the same regardless of class labeling.

3.2 Computational Algorithm

The GL energy functional given by (13) may be minimized iteratively, using gradient descent:

$$u_i^{n+1} = u_i^n - dt \left[ \frac{\delta \mathrm{MGL}_{\mathrm{SSL}}}{\delta u_i} \right], \tag{16}$$

where $u_i$ is a shorthand for $u(v_i)$, $dt$ represents the time step and the gradient direction is given by:

$$\frac{\delta \mathrm{MGL}_{\mathrm{SSL}}}{\delta u_i} = \epsilon \hat{R}(u_i^n) + \frac{1}{\epsilon} \Phi'_M(u_i^n) + \mu_i \left( u_i^n - \hat{u}_i \right) \tag{17}$$

$$\hat{R}(u_i^n) = \sum_j \frac{w_{ij}}{\sqrt{d_i d_j}} \left[ \hat{r}(u_i^n) \pm \hat{r}(u_j^n) \right] \hat{r}'(u_i^n) \tag{18}$$

$$\Phi'_M(u_i^n) = 2 \{u_i^n\}^3 - 3 \{u_i^n\}^2 + \{u_i^n\} \tag{19}$$

The gradient of the generalized difference function $\rho$ is not defined at half integer values. Hence, we modify the method using a greedy strategy: after detecting that a vertex changes class, the new class that minimizes the smoothing term is selected, and the fractional part of the state computed by the gradient descent update is preserved. Consequently, the new state of vertex $i$ is the result of gradient descent, but if this causes a change in class, then a new state is determined.

Specifically, let $k$ represent an integer in the range of the problem, i.e. $k \in [0, K - 1]$, where $K$ is the number of classes in the problem. Given the fractional part $\{u\}$ resulting from the gradient descent update, find the integer $k$ that minimizes $\sum_j \frac{w_{ij}}{\sqrt{d_i d_j}} \left[ \rho(k + \{u_i\}, u_j) \right]^2$, the smoothing term in the energy functional, and use $k + \{u_i\}$ as the new vertex state. A summary of the procedure is shown in Algorithm 1.

Algorithm 1 Calculate u

    Require: ε, dt, N_D, n_max, K
    Ensure: out = u^end
    for i = 1 → N_D do
        u_i^0 ← rand((0, K)) − 1/2. If μ_i > 0, u_i^0 ← û_i
    end for
    for n = 1 → n_max do
        for i = 1 → N_D do
            u_i^{n+1} ← u_i^n − dt (ε R̂(u_i^n) + (1/ε) Φ'_M(u_i^n) + μ_i (u_i^n − û_i))
            if Label(u_i^{n+1}) ≠ Label(u_i^n) then
                k̂ = arg min 0 ≤ k
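One sweep of the update (16) to (19), together with the greedy class reassignment described in the prose of Section 3.2, might look as follows in NumPy. This is a hedged sketch under several assumptions that are not confirmed by the source: the function name `mgl_step` is hypothetical, the sweep is synchronous (all neighbors use the states of iteration $n$), the smoothing gradient is weighted by $\epsilon$, the derivative $\hat{r}'$ is taken as $-1$ below a half-integer and $+1$ above it (and $0$ exactly at it), and the reassigned state is written as $k$ plus the signed offset from the nearest integer, which has the same fractional part as the gradient descent result and guarantees that the chosen $k$ is also the resulting label.

```python
import numpy as np

def frac(u):
    return u - np.floor(u)

def label(u):
    return np.floor(u + 0.5).astype(int)

def r_hat(u):
    return np.abs(0.5 - frac(u))

def rho_sq(u_i, u_all):
    """Squared generalized difference between state u_i and each state in u_all."""
    same = label(u_i) == label(u_all)
    r_i, r_all = r_hat(u_i), r_hat(u_all)
    return np.where(same, (r_i - r_all) ** 2, (r_i + r_all) ** 2)

def mgl_step(u, W, d, u_hat, mu, eps, dt, K):
    """One synchronous gradient descent sweep with greedy class reassignment."""
    w_hat = W / np.sqrt(np.outer(d, d))  # reweighted graph w_ij / sqrt(d_i d_j)
    u_new = np.empty_like(u)
    for i in range(len(u)):
        f = frac(u[i])
        dr = np.sign(f - 0.5)  # assumed derivative of r_hat away from half-integers
        # Sign in eq. (18): minus within a class, plus across classes.
        sign = np.where(label(u[i]) == label(u), -1.0, 1.0)
        R = np.sum(w_hat[i] * (r_hat(u[i]) + sign * r_hat(u)) * dr)
        dphi = 2 * f ** 3 - 3 * f ** 2 + f  # eq. (19)
        cand = u[i] - dt * (eps * R + dphi / eps + mu[i] * (u[i] - u_hat[i]))
        if label(cand) != label(u[i]):
            # Greedy step: keep the fractional part, choose the class k in
            # [0, K-1] that minimizes the smoothing term.
            offset = cand - label(cand)  # lies in [-1/2, 1/2)
            costs = [np.sum(w_hat[i] * rho_sq(k + offset, u)) for k in range(K)]
            cand = int(np.argmin(costs)) + offset
        u_new[i] = cand
    return u_new
```

Calling `mgl_step` repeatedly, starting from known labels where $\mu_i > 0$ and random states elsewhere, and reading off `label(u)` at the end, follows the overall structure of the iteration described in this section.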
